Not quite as ear-catching as the American War of Independence slogan ‘No taxation without representation’, maybe, but potentially as world-changing. I started in the IT industry as a storage specialist and gradually acquired knowledge and skills in other data centre and IT disciplines, and what has always struck me, at least until now, is the extraordinary inability and/or unwillingness of the various technology specialists to learn about, let alone understand, what goes on outside their own little world. This extended even to storage hardware professionals, who more often than not proudly proclaimed their ignorance of all things related to storage software. Same technology, two different disciplines, so two largely separate teams.

Of course, there always has been and, I suspect, always will be, the jockeying for position over which part of the overall IT infrastructure is the most important. The network folks point out that it’s all very good having large amounts of data, but if you can’t move them around quickly and efficiently, then servers and storage aren’t much use. For the server folks, the applications are what matter most, and who would need either storage or networks if there weren’t all those applications sat on servers, generating data and traffic? As for storage? Well, create all the information you want, move it around all you want, but if you can’t save it somewhere, what’s the point?! And I guess the security folks would point out that if your servers, networks and storage aren’t secure, we might as well all go home…

Alongside these technology silos, we have parallel industry sector silos – all of which have tended to move at their own pace when it comes to adopting IT as a business enabler. The finance sector, the media industry and the Web companies have led the way when it comes to technology adoption, with the other industry sectors lagging some way, or a very long way, behind.

Convergence has long been talked about, both in terms of the IT functions working together and in terms of the data centre and IT folks at least saying ‘hello’ to each other in the morning. However, the quest for digital transformation seems to have brought the need for this coming together into stark focus. The data centre and IT disciplines really do need to work together, and with the rest of the business, to ensure that technology solutions work right across the enterprise. Meanwhile, the various industry sectors are beginning to understand that, if they want to interact with each other, they’re going to have to consider how best to adopt and implement joint, end-to-end solutions, using common technology platforms.

The poet John Donne famously wrote: “No man is an island entire of itself; every man is a piece of the continent, a part of the main; if a clod be washed away by the sea, Europe is the less.”

I thought I’d leave the bit about Europe in just so some of you can have a chuckle over Brexit (chuckling is better than crying over the unholy mess, whichever side of the debate you sit on); but if we amend the first part of the quote thus:

‘No company is an island entire of itself; every company is a piece of the continent, a part of the main’

hopefully, you’ll get the idea. Cloud, IoT, AI, ML, VR, autonomous vehicles… just as none of these technologies can work to maximum effect in isolation from the others, no single company involved in, say, the transport sector can hope to be as efficient or as successful as possible without interacting with a wider ecosystem of technologies and industries.

Integration, not isolation (unlike Brexit), has to be the way forward.

Meaningful artificial intelligence (AI) deployments are just beginning to take place, according to Gartner, Inc. Gartner’s 2018 CIO Agenda Survey shows that four percent of CIOs have implemented AI, while a further 46 percent have developed plans to do so.

"Despite huge levels of interest in AI technologies, current implementations remain at quite low levels," said Whit Andrews, research vice president and distinguished analyst at Gartner. "However, there is potential for strong growth as CIOs begin piloting AI programs through a combination of buy, build and outsource efforts."

As with most emerging or unfamiliar technologies, early adopters are facing many obstacles to the progress of AI in their organizations. Gartner analysts have identified the following four lessons that have emerged from these early AI projects.

1. Aim Low at First

"Don’t fall into the trap of primarily seeking hard outcomes, such as direct financial gains, with AI projects," said Mr. Andrews. "In general, it’s best to start AI projects with a small scope and aim for 'soft' outcomes, such as process improvements, customer satisfaction or financial benchmarking."

Expect AI projects to produce, at best, lessons that will help with subsequent, larger experiments, pilots and implementations. In some organizations, a financial target will be a requirement to start the project. "In this situation, set the target as low as possible," said Mr. Andrews. "Think of targets in the thousands or tens of thousands of dollars, understand what you’re trying to accomplish on a small scale, and only then pursue more-dramatic benefits."

2. Focus on Augmenting People, Not Replacing Them

Big technological advances have historically often been associated with reductions in staff headcount. While reducing labor costs is attractive to business executives, it is likely to create resistance from those whose jobs appear to be at risk, and organizations that pursue this way of thinking can miss out on real opportunities to use the technology effectively. "We advise our clients that the most transformational benefits of AI in the near term will arise from using it to enable employees to pursue higher-value activities," added Mr. Andrews.

Gartner predicts that by 2020, 20 percent of organizations will dedicate workers to monitoring and guiding neural networks.

"Leave behind notions of vast teams of infinitely duplicable 'smart agents' able to execute tasks just like humans," said Mr. Andrews. "It will be far more productive to engage with workers on the front line. Get them excited and engaged with the idea that AI-powered decision support can enhance and elevate the work they do every day."

3. Plan for Knowledge Transfer

Conversations with Gartner clients reveal that most organizations aren't well prepared for implementing AI. Specifically, they lack internal skills in data science and plan to rely to a high degree on external providers to fill the gap. Fifty-three percent of organizations in the CIO survey rated their own ability to mine and exploit data as "limited", the lowest level.

Gartner predicts that through 2022, 85 percent of AI projects will deliver erroneous outcomes due to bias in data, algorithms or the teams responsible for managing them.

"Data is the fuel for AI, so organizations need to prepare now to store and manage even larger amounts of data for AI initiatives," said Jim Hare, research vice president at Gartner. "Relying mostly on external suppliers for these skills is not an ideal long-term solution. Therefore, ensure that early AI projects help transfer knowledge from external experts to your employees, and build up your organization’s in-house capabilities before moving on to large-scale projects."

4. Choose Transparent AI Solutions

AI projects will often involve software or systems from external service providers. It’s important that some insight into how decisions are reached is built into any service agreement. "Whether an AI system produces the right answer is not the only concern," said Mr. Andrews. "Executives need to understand why it is effective, and offer insights into its reasoning when it’s not."

Although it may not always be possible to explain all the details of an advanced analytical model, such as a deep neural network, it’s important to at least offer some kind of visualization of the potential choices. In fact, in situations where decisions are subject to regulation and auditing, it may be a legal requirement to provide this kind of transparency.
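The article does not prescribe a method for this. As a minimal, hypothetical illustration of what "insight into how decisions are reached" can look like for a simple, inherently transparent model, the sketch below breaks a linear score into per-feature contributions (weight times value) and renders them as a text bar chart. The function names, weights and feature names are invented for illustration; explaining a deep neural network needs dedicated techniques that are not shown here.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Split a linear score into per-feature contributions (hypothetical helper)."""
    contributions = {name: weights[name] * features.get(name, 0.0) for name in weights}
    score = bias + sum(contributions.values())
    # Rank features by how strongly they pushed the score, in either direction.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


def render_bars(ranked, width=20):
    """Render contributions as a plain-text bar chart, scaled to the largest one."""
    biggest = max((abs(v) for _, v in ranked), default=0.0) or 1.0
    lines = []
    for name, value in ranked:
        bar = "#" * round(width * abs(value) / biggest)
        sign = "+" if value >= 0 else "-"
        lines.append(f"{name:>16} {sign}{bar} {value:+.2f}")
    return "\n".join(lines)


# Hypothetical scoring model and applicant, for illustration only.
weights = {"income": 0.4, "debt_ratio": -1.5, "years_customer": 0.2}
applicant = {"income": 3.0, "debt_ratio": 0.6, "years_customer": 5.0}
score, ranked = explain_linear_score(weights, applicant)
print(f"score = {score:.2f}")
print(render_bars(ranked))
```

A linear model is chosen here only because its reasoning can be read off directly; the same "show which inputs drove the outcome" idea is what more complex explanation tools try to approximate.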

Organizations are embracing self-service analytics and business intelligence (BI) to bring these capabilities to business users of all levels. This trend is so pronounced that Gartner, Inc. predicts that by 2019, the analytics output of business users with self-service capabilities will surpass that of professional data scientists.

"The trend of digitalization is driving demand for analytics across all areas of modern business and government," said Carlie J. Idoine, research director at Gartner. "Rapid advancements in artificial intelligence, Internet of Things and SaaS (cloud) analytics and BI platforms are making it easier and more cost-effective than ever before for nonspecialists to perform effective analysis and better inform their decision making."

Gartner's recent survey of more than 3,000 CIOs shows that CIOs ranked analytics and BI as the top differentiating technology for their organizations. It attracts the most new investment and is also considered the most strategic technology area by top-performing CIOs.

As a result, data and analytics leaders are increasingly implementing self-service capabilities to create a data-driven culture throughout their organization. This means that business users can more easily learn to use and benefit from effective analytics and BI tools, driving favorable business outcomes in the process.

"If data and analytics leaders simply provide access to data and tools alone, self-service initiatives often don't work out well," said Ms. Idoine. "This is because the experience and skills of business users vary widely within individual organizations. Therefore, training, support and onboarding processes are needed to help most self-service users produce meaningful output."

The scale of the task of implementing self-service analytics and BI can catch organizations by surprise, especially if they are successful. In large organizations, popular self-service initiatives can very rapidly expand to encompass hundreds or thousands of users. To avoid a descent into chaos, it's crucial to identify the right organizational and process changes before starting the initiative.

Gartner recommends addressing four areas to build a strong foundation for self-service analytics and BI:

Align self-service initiatives with organizational goals and capture anecdotes about measurable, successful use cases

"It's important to confirm the value of a self-service approach to analytics and BI by communicating its impact and linking successes directly to good outcomes for the organization," said Ms. Idoine. "This builds confidence in the approach and justifies continued support for it. It also encourages more business users to get involved and apply best practice to their own areas."

Involve business users with designing, developing and supporting self-service

"Creating and executing a successful self-service initiative means forging and preserving trust between the IT team and business users," said Ms. Idoine. "There's no technical solution to build trust, but a formal process of collaboration from the start of a self-service initiative will go a long way to helping IT and business users understand what each party needs from the other to make self-service a success."

Take a flexible, light approach to data governance

"The success of a self-service initiative will depend hugely on whether the data and analytics governance model is flexible enough to enable and support the free-form analytics explorations of self-service users," said Ms. Idoine. Strict, inflexible frameworks will deter casual users. On the other hand, a lack of proper governance will overwhelm users with irrelevant data, or create serious risks of a breach of regulation. "IT leaders must find the right balance of governance to making self-service successful and scalable," she added.

Equip business users for self-service analytics success by developing an onboarding plan

"Data and analytics leaders must support enthusiastic business self-service users with the right guidance on how to get up and running quickly, as well as how to apply their new tools to their specific business problems," said Ms. Idoine. "A formal onboarding plan will help automate and standardize this process, making it far more scalable as self-service usage spreads throughout the organization."

Worldwide IT spending is projected to total $3.7 trillion in 2018, an increase of 4.5 percent from 2017, according to the latest forecast by Gartner, Inc.

"Global IT spending growth began to turn around in 2017, with continued growth expected over the next few years. However, uncertainty looms as organizations consider the potential impacts of Brexit, currency fluctuations, and a possible global recession," said John-David Lovelock, research vice president at Gartner. "Despite this uncertainty, businesses will continue to invest in IT as they anticipate revenue growth, but their spending patterns will shift. Projects in digital business, blockchain, Internet of Things (IoT), and progression from big data to algorithms to machine learning to artificial intelligence (AI) will continue to be main drivers of growth."

Enterprise software continues to exhibit strong growth, with worldwide software spending projected to grow 9.5 percent in 2018 and a further 8.4 percent in 2019, to total $421 billion (see Table 1). Organizations are expected to increase spending on enterprise application software in 2018, with more of the budget shifting to software as a service (SaaS). The growing availability of SaaS-based solutions is encouraging new adoption and spending across many subcategories, such as financial management systems (FMS), human capital management (HCM) and analytic applications.

Table 1. Worldwide IT Spending Forecast (Billions of U.S. Dollars)

| Segment | 2017 Spending | 2017 Growth (%) | 2018 Spending | 2018 Growth (%) | 2019 Spending | 2019 Growth (%) |
|---|---|---|---|---|---|---|
| Data Center Systems | 178 | 4.4 | 179 | 0.6 | 179 | -0.2 |
| Enterprise Software | 355 | 8.9 | 389 | 9.5 | 421 | 8.4 |
| Devices | 667 | 5.7 | 704 | 5.6 | 710 | 0.9 |
| IT Services | 933 | 4.3 | 985 | 5.5 | 1,030 | 4.6 |
| Communications Services | 1,393 | 1.3 | 1,427 | 2.4 | 1,443 | 1.1 |
| Overall IT | 3,527 | 3.8 | 3,683 | 4.5 | 3,784 | 2.7 |

Source: Gartner (January 2018)
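The Overall IT row is the sum of the five segments, and each growth figure is the year-on-year change in spending. The short sketch below recomputes both from the published numbers. Because Gartner rounds every cell to the nearest billion, the recomputed totals can differ from the reported ones by about $1 billion, and recomputed percentages can differ from the printed ones by a few tenths of a point; the helper names are illustrative only.

```python
# Segment spending in billions of US dollars, copied from Table 1 (Gartner, January 2018).
YEARS = (2017, 2018, 2019)
SPENDING = {
    "Data Center Systems": (178, 179, 179),
    "Enterprise Software": (355, 389, 421),
    "Devices": (667, 704, 710),
    "IT Services": (933, 985, 1030),
    "Communications Services": (1393, 1427, 1443),
}
REPORTED_OVERALL = (3527, 3683, 3784)


def column_totals(spending):
    """Sum each year's column across all segments."""
    return tuple(sum(values[i] for values in spending.values()) for i in range(len(YEARS)))


def growth_pct(previous, current):
    """Year-on-year growth in percent."""
    return (current - previous) / previous * 100


totals = column_totals(SPENDING)
for year, total, reported in zip(YEARS, totals, REPORTED_OVERALL):
    # Small gaps between the two figures come from rounding each cell.
    print(f"{year}: segments sum to {total}, table reports {reported}")

print(f"Overall growth 2017->2018: {growth_pct(3527, 3683):.1f}%")
```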

The devices segment is expected to grow 5.6 percent in 2018. In 2017, the devices segment experienced growth for the first time in two years with an increase of 5.7 percent. End-user spending on mobile phones is expected to increase marginally as average selling prices continue to creep upward even as unit sales are forecast to be lower. PC growth is expected to be flat in 2018 even as continued Windows 10 migration is expected to drive positive growth in the business market in China, Latin America and Eastern Europe. The impact of the iPhone 8 and iPhone X was minimal in 2017, as expected. However, iOS shipments are expected to grow 9.1 percent in 2018.

"Looking at some of the key areas driving spending over the next few years, Gartner forecasts $2.9 trillion in new business value opportunities attributable to AI by 2021, as well as the ability to recover 6.2 billion hours of worker productivity," said Mr. Lovelock. "That business value is attributable to using AI to, for example, drive efficiency gains, create insights that personalize the customer experience, entice engagement and commerce, and aid in expanding revenue-generating opportunities as part of new business models driven by the insights from data."

"Capturing the potential business value will require spending, especially when seeking the more near-term cost savings. Spending on AI for customer experience and revenue generation will likely benefit from AI being a force multiplier — the cost to implement will be exceeded by the positive network effects and resulting increase in revenue," said Mr. Lovelock.

Interest and investment in blockchain as an emerging technology are accelerating as firms seek secure, sequential, and immutable solutions to improve business processes, enable new services, and reduce service costs. Given the technology's current level of maturity, the hype surrounding potential applications, and the need for specialized skills, the majority of blockchain spending will be in the services market, covering both business and technology services. A new forecast from International Data Corporation (IDC) shows worldwide spending on blockchain services growing from $1.8 billion in 2018 to $8.1 billion in 2021, achieving a compound annual growth rate (CAGR) of 80%.
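The compound annual growth rates quoted in this and the following forecasts follow the standard formula CAGR = (end / start)^(1 / years) − 1. The releases do not state the base year each rate is measured from, and it may cover a longer window than the years named, so the sketch below is illustrated with round hypothetical numbers rather than by trying to reproduce IDC's figures.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25% a year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1.0 + rate) ** years


# Hypothetical example: spending doubles from 100 to 200 over four years.
rate = cagr(100.0, 200.0, 4)
print(f"CAGR: {rate:.1%}")  # doubling in four years is roughly 18.9% a year
```

The two functions are inverses of each other, which is a quick way to sanity-check a quoted rate: compounding the start value at the stated CAGR over the stated window should land close to the stated end value.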

IDC defines blockchain as a digital, distributed ledger of transactions or records. The ledger, which stores the information or data, exists across multiple participants in a peer-to-peer network. There is no single, central repository that stores the ledger. Distributed ledger technology (DLT) allows new transactions to be added to an existing chain of transactions using a secure, digital or cryptographic signature. To develop, build, deploy, and maintain these distributed ledgers and smart contracts, enterprises are turning to professional services firms, systems integrators, and application developers.
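To make the "chain of transactions" idea concrete, here is a minimal, single-machine sketch in which each block stores the SHA-256 hash of the block before it, so altering any earlier record breaks every later link. It is an illustration under heavy assumptions: the `Block` and `Ledger` names are hypothetical, and it deliberately omits the digital signatures, peer-to-peer replication and consensus that a real distributed ledger relies on.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Block:
    index: int
    records: list
    prev_hash: str
    block_hash: str = field(init=False)

    def __post_init__(self):
        self.block_hash = self.compute_hash()

    def compute_hash(self) -> str:
        # Hash a canonical JSON encoding of the block's contents and its link.
        payload = json.dumps(
            {"index": self.index, "records": self.records, "prev_hash": self.prev_hash},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class Ledger:
    """Append-only chain of blocks (hypothetical; no signatures or consensus)."""

    def __init__(self):
        self.chain = [Block(0, [], "0" * 64)]  # genesis block

    def append(self, records: list) -> Block:
        prev = self.chain[-1]
        block = Block(prev.index + 1, records, prev.block_hash)
        self.chain.append(block)
        return block

    def is_valid(self) -> bool:
        # Every block must match its own hash and point at its predecessor's hash.
        if any(b.block_hash != b.compute_hash() for b in self.chain):
            return False
        return all(cur.prev_hash == prev.block_hash for prev, cur in zip(self.chain, self.chain[1:]))


ledger = Ledger()
ledger.append([{"from": "A", "to": "B", "amount": 10}])
ledger.append([{"from": "B", "to": "C", "amount": 4}])
print(ledger.is_valid())
```

Changing, say, the amount in the first transaction after the fact makes `is_valid()` return `False`, which is the tamper-evidence property the "immutable" claim in the forecast rests on.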

"IDC believes the short-term blockchain services opportunity is small but strategically important, as developers, vendors, and their customers work out the standards and protocols and promote blockchain capabilities as a 'trust and scale' alternative to traditional database, ETL, and OLTP applications," said Michael Versace, research director, Digital Strategy Consulting at IDC. "Over the long-term horizon, blockchain services, including business consulting, IT consulting, custom development, and managed services, have the potential to become foundational to a new generation of enterprise IT infrastructure, resulting in a growing demand for consultants and developers and hundreds of billions of dollars of market size for the service company of the future."

As blockchain begins to find its way into corporate strategies and business processes, a variety of business and IT consulting, development, platform, outsourcing, and educational services will be needed. These fall into three market segments:

Project-oriented services consist of business consulting services that define enterprise strategy and readiness, identify high-value applications and profit pools, and define metrics for value creation using blockchain technologies; IT consulting services that advise on platform selection, data and system architecture, application performance, capacity and business continuity planning; system integration for the planning, design, implementation and management of blockchain solutions; and custom application development to design, build and test new blockchain applications.

Outsourced services consist of business process outsourcing for the execution of key business activities, business processes, or entire business functions by an external (third party) services provider or outsourcer; and other outsourced services such as hosted application management, blockchain infrastructure outsourcing, and hosted infrastructure services.

Support services consist of support and training services, including hardware and software deployment and support services, content development, and training processes to support enterprise, partner, or end-user adoption of blockchain networks and technologies.

"IDC expects to see dramatic growth in the blockchain developer marketplace over the next several years," noted Versace. "By 2021, the number of consultants and developers in blockchain services will have grown tenfold from current estimates."

A new update to the International Data Corporation (IDC) Worldwide Semiannual Robotics and Drones Spending Guide forecasts that worldwide spending on robotics and drone solutions will total $103.1 billion in 2018, an increase of 22.1% over 2017. By 2021, IDC expects this spending to more than double to $218.4 billion, with a compound annual growth rate (CAGR) of 25.4%.

Robotics spending will reach $94 billion in 2018 and will account for more than 90% of all spending throughout the 2017-2021 forecast. Industrial robotic solutions will account for the largest share of robotics spending (more than 70%), followed by service robots and consumer robots. Discrete and process manufacturing will be the leading industries for robotics spending at more than $60 billion combined in 2018. The resource and healthcare industries will also make significant investments in robotics solutions this year. The retail and wholesale industries will see the fastest robotics spending growth over the forecast with CAGRs of 46.3% and 41.2%, respectively.

"Industrial robots are becoming more intelligent, human-friendly and easier to work with," said Dr. Jing Bing Zhang, research director, Robotics. "This has accelerated their rapid expansion in the manufacturing industry beyond automotive, especially in high-tech manufacturing that requires light-weight robots with higher precision, flexibility, mobility and collaborative capability. Vendors who are not able to meet such demands will see their market position quickly eroded."

"Growth in the service robotics market is being driven by a collision of robotic technology maturity, market readiness, and related technology maturity," said John Santagate, research director, Service Robotics. "Robots did not reach this point overnight; it has been a decades-long effort to bring robots to the point they are today. Over time, innovators have been building upon existing technology and layering new and emerging technology onto robotic devices. We have reached a point now where the mechanics of robots are mature and the addition of artificial intelligence, advanced vision systems, cloud applications, Internet of Things, and continued mechanical innovation has enabled safe, collaborative robots that are working with people rather than replacing people."

"While robotics has its roots in the manufacturing sector, we continue to see increasing acceptance and adoption of robots in several other industries, such as resources and transportation," said Jessica Goepfert, program director, Customer Insights & Analysis. "Organizations in these areas are attracted to the promise of greater efficiency and productivity. But they are also turning to robotics to address other concerns such as skills shortages, workplace safety, and keeping up with the accelerating pace of business."

Worldwide drone spending will be $9 billion in 2018 and is expected to grow faster than the overall market, with a five-year CAGR of 29.8%. Enterprise drone solutions will account for more than half of all drone spending throughout the forecast period, with the balance coming from consumer drone solutions. Enterprise drones will increase their share of overall spending, with a five-year CAGR of 36.6%. The utilities and construction industries will see the largest drone spending in 2018 ($912 million and $824 million, respectively), followed by the process and discrete manufacturing industries. The fastest growth in drone spending will come from the education (74.1% CAGR) and state/local government (70.5% CAGR) industries.

"Drones have become an indispensable tool, especially in industries such as oil and gas, agriculture, and telecommunications. In many instances, drones have helped reduce their employees' exposure to dangerous tasks such as cell tower or electrical grid inspection. Farmers have also utilized drones to help monitor their land for irrigation deficiencies. While there is a growing number of consumer drone enthusiasts, we expect that drones will soon become part of the connected-home providing home security, monitoring children at play, or delivering groceries," said William Stofega, program director, Mobile Device Technology and Trends.

"Drones have become a forefront solution for many tasks and applications that were once deemed too dangerous, dirty, dull, or dear. Technological advancements such as improved sensors, enhancements in collision avoidance systems, or innovations related to full automation or intelligent piloting, have propelled new interest and acknowledgement from many industries that drones are here to stay. Groundbreaking improvements in the technology has piqued interest by industries that operate in open or outdoor space, such as utilities, where inspection-related applications such as transformer substation inspection or power line, foliage, and telephone line inspection are key drivers of the industry. As policies change and governments work with vendors and end users to formulate regulation allowances, new opportunities and expanded use cases will come to light as their benefits are realized across all industries," said Stacey Soohoo, research manager, Customer Insights and Analysis.

China will be the largest geographic market for robotics, delivering more than 30% of all robotics spending throughout the forecast, followed by the rest of Asia/Pacific (excluding China and Japan), the United States, and Japan. The United States will be the largest geographic market for drone spending at $4.3 billion in 2018, followed by Western Europe, China, and the rest of Asia/Pacific (excluding China and Japan). However, exceptionally strong spending growth in China (55.5% CAGR) and Asia/Pacific (excluding China and Japan) (62.0% CAGR) will move these two markets ahead of Western Europe by 2021.

The European Managed Services & Hosting Summit 2018 is a management-level event designed to help channel organisations identify opportunities arising from the increasing demand for managed and hosted services and to develop and strengthen partnerships.

Previous articles here have reflected on the changes that the managed services model brings customers: their ability to change their buying model to a revenue-based one, often to do more with fewer resources, and then to adopt new working tools such as analytics, which just weren’t available at the right price before. The impact on the IT industry supplying those customers has been profound as well, requiring a real rethink of sales processes built around a continuous relationship with the customer, not just a “sell-and-forget” approach to big-ticket items.

Obviously, the IT channel is attracted by the prospect of more sales from working in managed services, with the world market predicted to grow at a compound annual growth rate of 12.5% to 2019. But the move demands such a fundamental change in their structures that some consider it a step too far, even under pressure from customers seeking the benefits that managed services can bring them. Those partners may find themselves rapidly left behind as the new model becomes the standard in most industries.

This, coupled with the ease of entry into the market for cloud-based solutions suppliers, means that the IT channel is having to face a whole new competitive threat. A business “born in the cloud” has an obvious advantage when trying to sell cloud services to a customer, compared with a traditional reseller – the cloud-based channel “eats its own dog-food”, to adopt a rather unwholesome phrase imported across the Atlantic.

So, in establishing the agenda for the European Managed Services and Hosting Summit in Amsterdam in May this year, the organisers are thinking beyond the obvious GDPR issues which will inevitably be in the headlines as its deadline comes round, and even the ever-popular M&A discussions of company value, to bring out a flavour of the sales-engagement process in managed services. We are asking our leading speakers to examine the business processes of the best managed services companies, to try to identify what makes them tick - and tick ever faster and with wider portfolios.

How is the sales process managed? How are the salespeople rewarded in the revenue model? How do they maintain that ongoing relationship with the customer in a cost-effective way? How do they ensure that the salesforce is motivated and retained in the longer term, while keeping them up to date with the latest information on the market, the technologies, and customers’ issues?

None of this is easy, and many managed service providers, integrators, traditional resellers and even the new and fast-growing “born-in-the-cloud” supplier companies still have many questions to put to the experts. MSHS Europe is the perfect event at which to do this, with many leading suppliers on hand as well as industry experts.

The MSHS event offers multiple ways to get those answers: from plenary-style presentations from experts in the field to demonstrations; from more detailed technical pitches to wide-ranging round-table discussions with questions from the floor. There is no excuse not to come away from this with questions answered, or at least a more refined view on which questions actually matter.

One of the most valuable parts of the day, previous attendees have said, is the ability to discuss issues with others in similar situations, and we are all hoping to learn from direct experience, especially in the complex world of sales and sales management.

In summary, the European Managed Services & Hosting Summit 2018 is a management-level event designed to help channel organisations identify opportunities arising from the increasing demand for managed and hosted services and to develop and strengthen partnerships.

Security and risk management leaders must take a pragmatic and risk-based approach to the ongoing threats posed by an entirely new class of vulnerabilities, according to Gartner, Inc. "Spectre" and "Meltdown" are the code names given to different strains of a new class of attacks that exploit an underlying design implementation found in the majority of computer chips manufactured over the last 20 years.

Security researchers revealed three major variants of attacks in January 2018. The first two are referred to as Spectre, the third as Meltdown, and all three variants involve speculative execution of code to read what should have been protected memory and the use of subsequent side-channel-based attacks to infer the memory contents.

"Not all processors and software are vulnerable to the three variants in the same way, and the risk will vary based on the system's exposure to running unknown and untrusted code," said Neil MacDonald, vice president, distinguished analyst and Gartner fellow emeritus. "The risk is real, but with a clear and pragmatic risk-based remediation plan, security and risk management leaders can provide business leaders with confidence that the marginal risk to the enterprise is manageable and is being addressed."

Gartner has identified seven steps security leaders can take to mitigate risk:

"Ultimately, the complete elimination of the exploitable implementation will require new hardware not yet available and not expected for 12 to 24 months. This is why the inventory of systems will serve as a critical roadmap for future mitigation efforts," said Mr. MacDonald. "To lessen the risk of future attacks against vulnerabilities of all types, we have long advocated the use of application control and whitelisting on servers. If you haven't done so already, now is the time to apply a default deny mindset to server workload protection — whether those workloads are physical, virtual, public cloud or container-based. This should become a standard practice and a priority for all security and risk management leaders in 2018."
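The "default deny" mindset Mr. MacDonald describes can be reduced to one rule: code runs only if it has been explicitly approved, and everything else is blocked. The sketch below shows that decision logic using SHA-256 hashes of executable contents as the approval key. The `AllowlistPolicy` class is hypothetical; real application control products enforce this at the operating system or hypervisor level and use richer identifiers (publisher signatures, paths, versions), none of which is modelled here.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Fingerprint an executable by the SHA-256 hash of its contents."""
    return hashlib.sha256(data).hexdigest()


class AllowlistPolicy:
    """Hypothetical default-deny policy: anything not explicitly approved is blocked."""

    def __init__(self, approved_hashes=None):
        self.approved = set(approved_hashes or ())

    def approve(self, binary: bytes) -> None:
        self.approved.add(sha256_of(binary))

    def may_execute(self, binary: bytes) -> bool:
        # No deny list is consulted: unknown code is refused by default.
        return sha256_of(binary) in self.approved


policy = AllowlistPolicy()
trusted_tool = b"\x7fELF...known-good build"    # placeholder bytes for illustration
unknown_payload = b"\x7fELF...never reviewed"   # placeholder bytes for illustration
policy.approve(trusted_tool)
print(policy.may_execute(trusted_tool), policy.may_execute(unknown_payload))
```

Keying on a content hash means that even a one-byte change to an approved binary turns it back into unknown, and therefore denied, code, which is the property that limits an attacker's ability to run untrusted code on a server in the first place.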

Angel Business Communications have announced the categories and entry criteria for the 2018 Datacentre Solutions Awards (DCS Awards).

The DCS Awards are designed to reward the product designers, manufacturers, suppliers and providers operating in the data centre arena and are updated each year to reflect this fast-moving industry. The Awards recognise the achievements of the vendors and their business partners alike and this year encompass a wider range of project, facilities and information technology award categories as well as Individual and Innovation categories, designed to address all the main areas of the datacentre market in Europe.

The DCS Awards categories provide a comprehensive range of options for organisations in the IT industry to participate. You are encouraged to make your nominations as soon as possible in the categories where you think you have achieved something outstanding, or where you have a product that stands out from the rest, to be in with a chance of winning one of the coveted crystal trophies.

This year’s DCS Awards continue to focus on the technologies that are the foundation of a traditional data centre, but we’ve also added a new section which focuses on Innovation with particular reference to some of the new and emerging trends and technologies that are changing the face of the data centre industry – automation, open source, the hybrid world and digitalisation. We hope that at least one of these new categories will be relevant to all companies operating in the data centre space.

The editorial staff at Angel Business Communications will validate entries and announce the final short list to be forwarded for voting by the readership of the Digitalisation World stable of publications during April and May. The winners will be announced at a gala evening on 24th May at London’s Grange St Paul’s Hotel.

The 2018 DCS Awards feature 26 categories across five groups. The Project and Product categories are open to end-user implementations and services, and to products and solutions that were available (i.e. shipping in Europe) before 31st December 2017. Company nominees must have been present in the EMEA market prior to 1st June 2017. Individuals must have been employed in the EMEA region prior to 31st December 2017, and Innovation Award nominees must have been introduced between 1st January and 31st December 2017.

Nomination is free of charge, and each entry can include up to two supporting documents to enhance the submission. The deadline for entries is 9th March 2018.

Please visit www.dcsawards.com for rules and entry criteria for each of the following categories:

DCS Project Awards

Data Centre Energy Efficiency Project of the Year

New Design/Build Data Centre Project of the Year

Data Centre Automation and/or Management Project of the Year

Data Centre Consolidation/Upgrade/Refresh Project of the Year

Data Centre Hybrid Infrastructure Project of the Year

DCS Product Awards

Data Centre Power Product of the Year

Data Centre PDU Product of the Year

Data Centre Cooling Product of the Year

Data Centre Facilities Automation and Management Product of the Year

Data Centre Safety, Security & Fire Suppression Product of the Year

Data Centre Physical Connectivity Product of the Year

Data Centre ICT Storage Product of the Year

Data Centre ICT Security Product of the Year

Data Centre ICT Management Product of the Year

Data Centre ICT Networking Product of the Year

DCS Company Awards

Data Centre Hosting/co-location Supplier of the Year

Data Centre Cloud Vendor of the Year

Data Centre Facilities Vendor of the Year

Data Centre ICT Systems Vendor of the Year

Excellence in Data Centre Services Award

DCS Innovation Awards

Data Centre Automation Innovation of the Year

Data Centre IT Digitalisation Innovation of the Year

Hybrid Data Centre Innovation of the Year

Open Source Innovation of the Year

DCS Individual Awards

Data Centre Manager of the Year

Data Centre Engineer of the Year

Protecting digital data is a fundamental and mandatory responsibility for all organizations, so organizations need to understand the basic principles and concepts of data protection, especially in the current era of massive data breaches. To meet that need, the Storage Networking Industry Association (SNIA) has developed a technical whitepaper that gives the industry a vendor-neutral overview of the relevant best current practices for data protection at the storage level.

By Thomas Rivera, CISSP, Chair of SNIA Data Protection and Capacity Optimization (DPCO).

Data protection is traditionally viewed as the execution of backup operations that are assured of providing data recovery if the original data (production data) is lost. In fact, data protection encompasses much more than backup and recovery techniques: it also deals with data corruption and data loss, data accessibility and availability, and compliance with retention and privacy rules and regulations.

There are many factors to consider when it comes to data protection at the storage level. The main areas fall into three data protection “drivers”. These are data corruption and data loss, accessibility and availability, and compliance. Protected data must meet intended uses for all three drivers. Preventing data corruption and data loss ensures that the data is what the organization expects it to be when the data needs to be used. Accessibility and availability relate to the data being made available in a timely manner for intended uses. Compliance ensures that the data usage meets all associated legal and regulatory requirements.

Data corruption and data loss

Data must be protected both logically (to prevent data corruption from hacking or other external threats) and physically (in the case of data loss or the irreversible failure of a storage device). Physical prevention of data loss from hardware failure on a random-access storage system can use techniques such as RAID or erasure coding.
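The single-parity idea behind RAID 5, and the simplest case of erasure coding, can be illustrated with a minimal sketch. It assumes equal-sized blocks and one parity block per stripe; real RAID implementations rotate parity across drives, and erasure codes such as Reed-Solomon can survive more than one failure. The function names are hypothetical.

```python
from functools import reduce

def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length byte blocks together, position by position."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

def make_parity(data_blocks: list[bytes]) -> bytes:
    # The parity block is the XOR of every data block in the stripe.
    return xor_blocks(data_blocks)

def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    # XORing the survivors with the parity cancels them out (x ^ x == 0),
    # leaving exactly the contents of the lost block.
    return xor_blocks(surviving + [parity])

stripe = [b"DATA", b"MORE", b"BITS"]   # three data "drives"
parity = make_parity(stripe)           # stored on a fourth drive
rebuilt = rebuild_missing([stripe[0], stripe[2]], parity)  # drive 2 has failed
print(rebuilt)  # b'MORE'
```

The parity costs one extra block per stripe, yet any single lost block can be recomputed from the rest.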

Backup and recovery are two of the traditional cornerstones of data protection, for both physical and logical reasons. Backup is the process of making a copy of the data at a point in time, and recovery is the ability to restore data for its intended application use according to the organizational Service Level Agreements (SLAs). One approach on the storage system itself is the use of snapshots, which may serve as the basis for the data that is copied to a backup target storage system. Other approaches include continuous data protection (CDP) or using a public or private cloud as a backup service.
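One common way storage systems implement snapshots is copy-on-write: taking the snapshot copies no data, and a block's original contents are preserved only when that block is first overwritten. The sketch below is a toy in-memory model under that assumption; the `Volume` class and its methods are hypothetical, and many arrays use redirect-on-write or other schemes instead.

```python
class Volume:
    """Hypothetical toy block volume with copy-on-write snapshots."""

    def __init__(self, num_blocks: int) -> None:
        self.blocks: list[bytes] = [b""] * num_blocks
        # Each snapshot maps block index -> contents saved before first overwrite.
        self.snapshots: list[dict[int, bytes]] = []

    def take_snapshot(self) -> int:
        # Cheap: nothing is copied at snapshot time.
        self.snapshots.append({})
        return len(self.snapshots) - 1

    def write(self, index: int, data: bytes) -> None:
        # Before a block is overwritten, save its current contents into
        # every snapshot that has not yet preserved that block.
        for saved in self.snapshots:
            if index not in saved:
                saved[index] = self.blocks[index]
        self.blocks[index] = data

    def read_snapshot(self, snap_id: int, index: int) -> bytes:
        # Preserved blocks come from the snapshot; untouched ones from the live volume.
        return self.snapshots[snap_id].get(index, self.blocks[index])

vol = Volume(4)
vol.write(0, b"v1")
snap = vol.take_snapshot()
vol.write(0, b"v2")
print(vol.read_snapshot(snap, 0), vol.blocks[0])  # b'v1' b'v2'
```

A backup job can then read the snapshot's consistent point-in-time view while applications keep writing to the live volume.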

Cloud backup refers to backing up data to a remote storage-as-a-service offering (public, private or hybrid cloud). A cloud backup service is not a pre-defined, fixed solution and must be considered in the overall context of a business's data protection or disaster recovery strategy. Cloud-based backup appeals to many businesses because it offers a low-cost way to protect business data off-site, but there are many different considerations to be aware of when planning such an implementation.

Moving the business data into the cloud is the easy part. Getting it back when you really need it is when things can get challenging. For this reason, it is important to understand all the imperatives before embracing public cloud-based data backup as part of the data protection and disaster recovery strategy.

When considering backup to a cloud provider, it is imperative to define the business requirements. These may include business demands, SLAs and Quality of Service (QoS) levels for the backup data, along with the skills required to deploy the cloud-based backup technology. It is also important to pay careful attention to the design of the network that will connect the cloud service provider to your data center: what network currently exists, what security it offers, and whether it provides enough bandwidth and redundancy with acceptable latency.
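Bandwidth matters most on the way back. A back-of-the-envelope estimate of how long a full restore over the link would take is sketched below; the 80% link efficiency is an assumed allowance for protocol overhead and contention, not a measured figure, and real restores may be faster (for example, when the provider ships disks) or slower (throttling or egress limits).

```python
def transfer_hours(data_tb: float, link_mbps: float, efficiency: float = 0.8) -> float:
    """Rough hours needed to move data_tb terabytes over a link_mbps link.

    efficiency is an assumed fraction of the nominal bandwidth actually
    achieved after protocol overhead and contention.
    """
    bits = data_tb * 1e12 * 8                 # decimal terabytes to bits
    usable_bits_per_s = link_mbps * 1e6 * efficiency
    return bits / usable_bits_per_s / 3600    # seconds to hours

# Restoring 10 TB over a 1 Gbit/s link at 80% efficiency:
print(round(transfer_hours(10, 1000), 1))  # 27.8
```

The same restore over a 100 Mbit/s link takes ten times as long, which is why the restore path, rather than the upload, tends to drive the network design.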

Replication and mirroring are also used to make copies of data. Replication refers to point in time copies whereas mirroring provides for continuous writing of data to two or more targets. Replication may be used for both physical and logical data protection while mirroring is a physical data protection approach.
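The difference between the two can be sketched in a few lines, with in-memory dictionaries standing in for storage targets: the mirror applies every write to all targets as it happens, while replication takes a copy of the source's state at a chosen point in time. Both the `MirroredStore` class and the `replicate` function are hypothetical illustrations, not a product API.

```python
import copy

class MirroredStore:
    """Mirroring: every write is applied to all targets as it happens."""

    def __init__(self, num_targets: int = 2) -> None:
        self.targets: list[dict[str, str]] = [{} for _ in range(num_targets)]

    def write(self, key: str, value: str) -> None:
        for target in self.targets:
            target[key] = value

    def delete(self, key: str) -> None:
        for target in self.targets:
            target.pop(key, None)

def replicate(source: dict[str, str]) -> dict[str, str]:
    """Replication: a point-in-time copy of the source's current state."""
    return copy.deepcopy(source)

mirror = MirroredStore()
mirror.write("invoice-42", "paid")
replica = replicate(mirror.targets[0])   # point-in-time copy
mirror.delete("invoice-42")              # a mistaken (logical) deletion
print([t.get("invoice-42") for t in mirror.targets])  # [None, None]
print(replica.get("invoice-42"))                      # paid
```

The mistaken deletion reaches every mirrored copy at once, so mirroring guards against a failed device but not against logical errors, whereas the earlier replica still holds the record.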

An archive is an official set of more or less fixed data that is managed separately from more active production data. Copies still have to be made for data protection purposes, but because the data changes so little, more active measures, such as standard backup or mirroring, are not necessary.

Accessibility and Availability

For accessibility and availability, Business Continuity Management (BCM) includes the processes and procedures for ensuring ongoing business operations. One key aspect of BCM is Disaster Recovery (DR), which involves the coordinated process of restoring systems, data, and the infrastructure required to support ongoing business operations after a disaster occurs. But a BCM plan also includes technology, people, and business processes for recovery.

As part of accessibility and availability, basic infrastructure redundancies need to be provided, including UPS systems to keep equipment powered during a power outage, and extra network and power connections.

Compliance

Compliance involves applying specific technologies to secure data so that it meets the relevant rules and regulations, typically those related to data retention, authenticity, immutability, confidentiality, accountability and traceability, as well as the more general problem of data breaches. There are a number of technologies that relate to compliance, including:

The two sides of data protection

Data protection is an important component of any Information Technology (IT) system, and the methods used for data protection and how they are configured have important inter-relationships with other aspects of the data center. By its nature, data protection has two sides: the backup or replication side and the restore or recovery side.

The backup side of data protection is the process or processes performed on a regular, or even continuous, basis to create one or more copies of an organization’s primary data at a particular point in time. Backup processes may well differ from one type or subset of data to another, and they must be chosen with care to minimize the impact on the availability of primary data to all applications and users that need it. The backup must also provide for recovery of data in the way prescribed by the organization’s Service Level Agreements (SLAs) with regard to each set of data. Thus, traditional daily backups (copies of data to different media) may be used for some subsets of data, while a real-time mirroring process may be needed for other, highly critical data sets in order to facilitate faster restores.

Successful recovery operations are the result of having put appropriate backup processes in place, and recovery of lost or corrupted data is vital to an organization’s health. A recovery operation may be required just to replace a file that a user accidentally deleted or a corrupted set of data (operational recovery), or to replace a major portion of a data center or an entire data center, in case of a disaster such as a multiple device failure, a virus, denial of service attack, or the destruction of a data center by a fire or flood (disaster recovery).

There are two important objectives for data recovery that in turn determine how data needs to be backed up: the Recovery Point Objective (RPO), the maximum acceptable amount of data loss, measured as the time back to the most recent usable copy, and the Recovery Time Objective (RTO), the maximum acceptable time to restore the data and resume operations. RTO and RPO are important factors in deciding what backup or replication strategy the business needs to use, and they need to be a part of any organization’s SLAs with regard to data protection.
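As a worked illustration, a candidate scheme can be checked against both objectives. In the worst case, a failure just before the next recovery point loses a whole interval of data, so the interval between recovery points must not exceed the RPO, and the estimated restore time must fit within the RTO. The `Scheme` class, the scheme names and all the figures below are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Scheme:
    name: str
    interval_h: float       # time between successive recovery points
    restore_time_h: float   # estimated time to restore and resume service

def meets_objectives(scheme: Scheme, rpo_h: float, rto_h: float) -> bool:
    # Worst case, a failure just before the next recovery point loses a
    # whole interval of data, so the interval must not exceed the RPO.
    return scheme.interval_h <= rpo_h and scheme.restore_time_h <= rto_h

schemes = [
    Scheme("daily backup", interval_h=24, restore_time_h=12),
    Scheme("hourly snapshots with replication", interval_h=1, restore_time_h=2),
]
# A data set with a 4-hour RPO and a 4-hour RTO:
print([s.name for s in schemes if meets_objectives(s, rpo_h=4, rto_h=4)])
# ['hourly snapshots with replication']
```

Running the same check per data set makes it explicit why different subsets of data can end up with different backup methods.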

Data protection and digital archives

Although a digital archive represents another set of copies of primary data like those intended for backup or disaster recovery (DR), an archive is more immutable in nature, with changes either not allowed, or strictly controlled by a journaling process. Also, archives themselves require data protection; they are not intended to be used for data protection.

Archives may be divided into two types based on their intended longevity, with those intended to last more than ten years considered long-term archives. Long-term archives typically require different methods for storage, security and management.
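The properties described above (content that cannot be changed once archived, permitted changes recorded in a journal, and the ten-year threshold for a long-term archive) can be modelled in a small sketch. The `ArchiveStore` class, its methods and the way retention is expressed are all hypothetical; real systems enforce immutability in the storage layer, for example with WORM media or object locks.

```python
from datetime import datetime, timezone

LONG_TERM_YEARS = 10  # threshold from the text: more than ten years is long-term

class ArchiveStore:
    """Hypothetical write-once archive: no overwrites; changes are journaled."""

    def __init__(self, retention_years: int) -> None:
        self.retention_years = retention_years
        self.objects: dict[str, bytes] = {}
        self.journal: list[tuple[str, str, str]] = []  # (timestamp, action, key)

    @property
    def is_long_term(self) -> bool:
        return self.retention_years > LONG_TERM_YEARS

    def _log(self, action: str, key: str) -> None:
        self.journal.append((datetime.now(timezone.utc).isoformat(), action, key))

    def ingest(self, key: str, data: bytes) -> None:
        if key in self.objects:
            raise PermissionError(f"{key!r} is immutable once archived")
        self.objects[key] = data
        self._log("ingest", key)

    def dispose(self, key: str, approved_by: str) -> None:
        # The only permitted change: a controlled, recorded disposal.
        del self.objects[key]
        self._log(f"dispose (approved by {approved_by})", key)

archive = ArchiveStore(retention_years=25)
archive.ingest("contract-2017-001", b"signed contract")
print(archive.is_long_term)  # True
try:
    archive.ingest("contract-2017-001", b"altered contract")
except PermissionError as err:
    print(err)  # 'contract-2017-001' is immutable once archived
```

Note that the archive is still a single copy here; as the text says, it needs its own data protection.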

About the DPCO

For more information about the relevant best current practices for Data Protection, please feel free to download the complete technical white paper at: https://www.snia.org/education/whitepapers

The DPCO was created to foster the growth and success of the market for data protection and capacity optimization technologies.

For more information about the work of the DPCO, visit: http://www.snia.org/dpco

The role of the IT manager has changed dramatically over the last few years, as has the role of IT. No longer seen as a necessary evil, IT has shifted from being a mere function of business to being an enabler of growth.

By Gavin Russell, CEO, Wavex.

While the provision and maintenance of the technology infrastructure still plays a part, as does managing third-party suppliers, vendors, staff and operational requirements, IT managers, and IT as a whole, have become a lot more strategic. This means that IT managers have a lot more to consider, and more to manage as a result.

This is evident in the drive, in many organisations, to align the IT strategy with the business strategy. The benefit? The business has certain goals to achieve, and by connecting the two, IT can be mapped out to help achieve those goals. IT becomes an enabler rather than a distraction. In the past, IT was seen merely as a function, with the IT manager seen as someone who keeps things running, identifies problems and worries about getting the right level of investment approved.

If an IT manager is expected to help drive strategic business goals – how do they find the time? The short answer: by using a range of increasingly sophisticated tools to improve the efficiency of the function.

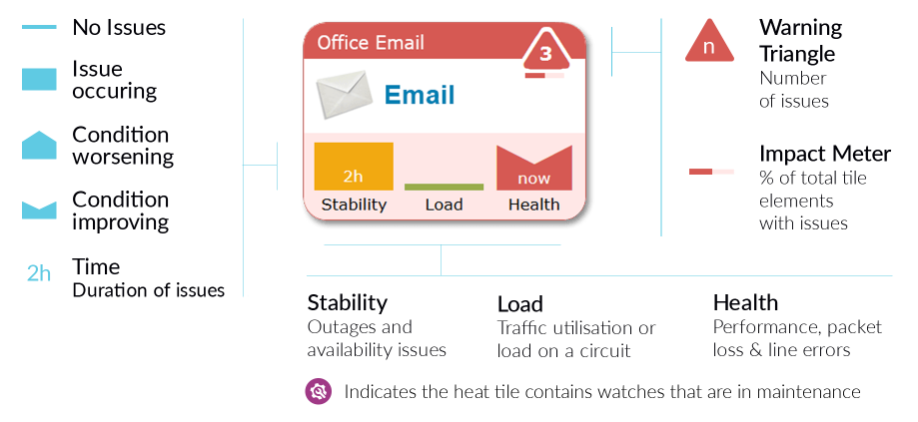

Consider monitoring: a great deal of it needs to take place to ensure that the business, its network and its assets keep working.

With the solutions currently on the market, proactive monitoring becomes much easier, whether during a standard workday (9-5) or outside office hours. These platforms proactively monitor operations and give IT managers visibility over the entire system through a web portal, dashboard or app. An early warning of a possible infrastructure or network problem can stop it escalating into a full-blown critical incident that requires substantial manpower to resolve.
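At its simplest, the early warning comes from alerting at a warning threshold well before a critical one is reached. A minimal sketch, with hypothetical metric names and threshold values that would in practice be tuned per system (commercial platforms add trend analysis, escalation and notification on top):

```python
from collections.abc import Iterable

# Hypothetical thresholds: (warning, critical) per metric, in percent.
THRESHOLDS = {
    "disk_used_pct": (80.0, 95.0),
    "cpu_pct": (85.0, 98.0),
}

def classify(metric: str, value: float) -> str:
    warning, critical = THRESHOLDS[metric]
    if value >= critical:
        return "critical"
    if value >= warning:
        return "warning"
    return "ok"

def early_warnings(samples: Iterable[tuple[str, str, float]]) -> list[str]:
    """Return alerts for (host, metric, value) samples at warning level or worse."""
    alerts = []
    for host, metric, value in samples:
        level = classify(metric, value)
        if level != "ok":
            alerts.append(f"{level.upper()}: {host} {metric}={value}")
    return alerts

print(early_warnings([("srv1", "disk_used_pct", 83.0), ("srv2", "cpu_pct", 40.0)]))
# ['WARNING: srv1 disk_used_pct=83.0']
```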

Another area that can drain resources and attention is shadow IT. This has been a challenge for organisations for the last few years, driven largely by the use of personal devices on the company network. Shadow IT in the traditional sense is still a concern, since un-vetted devices on a company network present a significant vulnerability, but there is also a more subtle, almost covert side to the issue: software. Often, PCs and laptops are bought with a number of software programs pre-installed, such as Adobe Acrobat Reader. If the software is used often and is part of the organisational workflow, IT will upgrade and patch it as standard.

However, if the software isn’t used, it may be forgotten about. In that case it won’t receive the regular updates and patches needed to keep it secure. As these applications age, the number of ways hackers can exploit them increases, and they can pose a significant cyber security risk. The solution? The IT manager and team need to be aware of the software installed on every single end point. This is where a vulnerability monitoring solution can add value, by continually checking what software is installed across the network and its end points and comparing that with a database of known vulnerabilities.
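The comparison such a tool performs can be sketched as a join between a software inventory and a list of known-vulnerable versions. The inventory, the product name, the advisory identifier and the simple dotted-version comparison below are all invented for illustration; real tools draw on vulnerability databases such as the NVD and handle far messier version schemes.

```python
def parse_version(text: str) -> tuple[int, ...]:
    # Assumes simple dotted numeric versions such as "11.0.10".
    return tuple(int(part) for part in text.split("."))

# Hypothetical advisories: product -> (first fixed version, advisory id)
ADVISORIES = {
    "pdf-reader": ("11.0.23", "EXAMPLE-2018-0001"),
}

def find_vulnerable(inventory: dict[str, dict[str, str]]) -> list[str]:
    """inventory maps endpoint -> {product: installed version}."""
    findings = []
    for endpoint, software in inventory.items():
        for product, version in software.items():
            if product in ADVISORIES:
                fixed_in, advisory = ADVISORIES[product]
                if parse_version(version) < parse_version(fixed_in):
                    findings.append(f"{endpoint}: {product} {version} ({advisory})")
    return sorted(findings)

inventory = {
    "laptop-07": {"pdf-reader": "11.0.10"},  # pre-installed, never patched
    "laptop-12": {"pdf-reader": "11.0.23"},
}
print(find_vulnerable(inventory))
# ['laptop-07: pdf-reader 11.0.10 (EXAMPLE-2018-0001)']
```

Because the check works from what is installed rather than what is used, forgotten pre-installed software shows up just like everyday applications.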

Essentially, IT managers are battling on multiple fronts, so automated, proactive monitoring tools can add tremendous value, freeing up time to focus on strategic initiatives.

IT and the IT manager are integral to business success. But with the changing role of IT and the growing cyber threat landscape, it’s critical that IT is recognised as a strategic partner throughout the business. By aligning the IT strategy with the wider business strategy, the IT manager has the opportunity to ensure the board understands the strategic value that IT can deliver. Taking that further, with the right tools and monitoring platforms, IT managers can monitor their infrastructure, both operationally and in terms of cyber security, and deliver that value back to the organisation.

When looking at the virtualization landscape in its broader sense I can’t help seeing that the real problem is agility or, rather, the lack of it.

By Pravin Mirchandani, CMO, OneAccess Networks.

Talk of robotic process automation (RPA) is all the rage, but what can it really do for your business?

By Scott Dodds, CEO at Ultima.

First, let’s be clear about what we mean by RPA. Put simply, it is the use of software ‘robots’ to automate business processes, for example, in back-office functions or your other core business processes. By automating time-consuming, repetitive tasks organisations stand to improve their productivity and gain competitive advantage.

But RPA provides much more than this. Software robots can also improve the accuracy and compliance of data by removing human error, which in turn improves the security of data and information. This is a bonus for companies worried about meeting the requirements of the forthcoming GDPR, which comes into force in May.

Software robots consistently carry out prescriptive, logic-based functions to automate end-to-end processes or parts of processes, without the need to modify underlying systems. They speed up back-office tasks in areas such as procurement, finance, IT, human resources and business processes.

Recent industry research found that more than half (57 per cent) of the UK’s SMEs fear that big businesses’ use of RPA will help drive them out of business in the next five years. The good news is that this needn’t be the case. RPA offerings are now available to SMEs at affordable prices as part of Software-as-a-Service offerings. The technology is relatively simple to implement and requires little or no infrastructure and application re-architecture.

SME and IT solutions provider Ultima is using RPA across several of its own business processes and is seeing dramatic changes in productivity. Its CEO, Scott Dodds, estimates that the company’s overall productivity has increased by a factor of two, allowing its staff to focus on growing the business and delivering improved customer service.

One of the most exciting ways Ultima is using RPA is to automate some of its forecasting and planning tasks within the business. Software robots collate real-time sales and marketing information and process all the information they collect during the day, to produce detailed forecasts and business intelligence for the next morning. To collate this information and analyse it would have taken approximately eight to ten hours per day of staff time. As a result, the business has improved business intelligence to plan with, and staff have more time to spend on customer service and strategic thinking.

Within most organisations IT support teams spend too much time undertaking manual administrative tasks, such as backups, running diagnostics or system checks and managing patch processes. Using software robots frees these people to concentrate on higher priority tasks, or to work on business improvement and change projects, without the need to increase headcount.

Ultima is using software robots, which it calls ‘Virtual Workers’, to process the tickets that come into its IT managed services desk. Because software robots are available 24/7, 365 days a year, the company can respond to customer needs faster and more accurately, as the robots leave no room for human error. Where staff once had between six and ten touch points for logging and dealing with each ticket, they now need only two. This reduction in the number of times a member of support staff has to handle each ticket has led to enormous productivity gains.

RPA is also proving its worth across human resources. End-to-end business processes, such as a starters-and-leavers workflow, can span multiple teams or extend to third-party providers, partners and customers. In many cases, these process ‘islands’ are linked by inefficient hand-offs, which slow down processing and can result in errors.

At Ultima, when people join or leave the firm, there are many routine and mundane tasks that HR staff used to spend hours completing, for example ordering new equipment and setting up joiners on IT, HR and financial systems. With RPA, all of this happens automatically once a few details about the joiner are entered into the main system. Likewise, when someone leaves, their equipment is automatically recalled and they are logged off all appropriate systems. Ultima has also automated some of its invoice posting activities and is currently looking at other processes it can automate to create real savings.
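A starters-and-leavers workflow of this kind is essentially a fixed sequence of steps triggered by one record. The sketch below is a hypothetical illustration, not Ultima's implementation: the step functions stand in for calls to IT, HR and finance systems, which an RPA product would drive through those systems' user interfaces or APIs.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Person:
    name: str
    role: str
    log: list[str] = field(default_factory=list)

# Hypothetical steps; in practice each would drive a real IT, HR or finance system.
def order_equipment(p: Person) -> None:
    p.log.append(f"equipment ordered for {p.role}")

def create_accounts(p: Person) -> None:
    p.log.append("accounts created in IT, HR and finance systems")

def disable_accounts(p: Person) -> None:
    p.log.append("accounts disabled in IT, HR and finance systems")

def recall_equipment(p: Person) -> None:
    p.log.append("equipment recall requested")

WORKFLOWS: dict[str, list[Callable[[Person], None]]] = {
    "joiner": [order_equipment, create_accounts],
    "leaver": [disable_accounts, recall_equipment],
}

def run_workflow(kind: str, person: Person) -> list[str]:
    """Run every step for the given event once the basic details are entered."""
    for step in WORKFLOWS[kind]:
        step(person)
    return person.log

print(run_workflow("joiner", Person("New Starter", "analyst")))
```

Keeping the steps in one table makes the hand-offs between teams explicit, and adding a step for a new system is a one-line change.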

Within any corporate function, there are a host of common, cyclical activities which are candidates for being automated. RPA can be implemented to handle everything from accounts payable, accrual bookings and credit checks, to salary processing, tax reporting and auditing, improving standardisation and speed of execution.

Contact centres and service desks tend to use several different systems and applications, and often undertake a high volume of low complexity, repetitive tasks such as fulfilling service requests. Service agents often have to navigate multiple applications while simultaneously managing the call with the customer. Where customers make contact via email or messaging systems, agents are required to translate this information from those systems while executing the required actions.

Software robots can improve the experience by streamlining processes and enabling customers to leverage self-service portals for common requests. By simplifying the service agent process with the automation of tasks, and the introduction of Natural Language Processing (NLP) for extracting key information from emails and messaging chats, agents can focus on providing the best experience for customers, in the quickest time.

On a positive note, One Poll research of SME senior executives, undertaken by Ultima, found that two-thirds of businesses want to use robotic process automation, with sixty-five per cent of companies reporting that they either plan to automate, or already automate, repetitive, time-consuming tasks. The financial services sector leads the charge: more than 80 per cent of companies there either plan to or already automate at least some business processes.

For SMEs now is the time to sit down and think about which internal processes could be automated to create efficiencies in your business. Ultima has only automated five key processes, but the returns have been dramatic. Most businesses will have several processes they can automate; some businesses will have a myriad of processes that can be automated. Working out which ones to automate should be done on a clear ROI basis and by looking at where mundane tasks are hampering staff’s ability to work on more important tasks.
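Ranking candidates on a clear ROI basis can be as simple as comparing the value of annual staff time saved with the annual cost of automating. All process names, hours, costs and the hourly rate below are hypothetical, and a fuller analysis would include licence, build and maintenance costs over several years.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    process: str
    hours_saved_per_year: float
    annual_cost: float  # licences plus upkeep, same currency as the hourly rate

def roi(c: Candidate, hourly_rate: float) -> float:
    """One-year return: (benefit - cost) / cost."""
    benefit = c.hours_saved_per_year * hourly_rate
    return (benefit - c.annual_cost) / c.annual_cost

def rank(candidates: list[Candidate], hourly_rate: float) -> list[tuple[str, float]]:
    scored = [(c.process, round(roi(c, hourly_rate), 2)) for c in candidates]
    return sorted(scored, key=lambda item: item[1], reverse=True)

print(rank([
    Candidate("invoice posting", hours_saved_per_year=500, annual_cost=10_000),
    Candidate("daily forecasting", hours_saved_per_year=2_000, annual_cost=20_000),
], hourly_rate=30))
# [('daily forecasting', 2.0), ('invoice posting', 0.5)]
```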

One thing that Ultima is keen to point out is that it is not using RPA to help reduce staff numbers. Rather, the firm sees it as an opportunity to free up staff time and allow them to focus on more strategic work. McKinsey’s research has shown that employees welcomed the technology because they hated the boring tasks that the machines now do, and it relieved them of the rising pressure of work.

Scott Dodds, CEO, Ultima comments, “We call our software robots ‘Virtual Workers’ as they are there to work alongside humans to do the work they don’t need, or want, to do. They have allowed our employees to spend more time on strategic and creative projects that will give us competitive advantage, and has already improved our productivity by a factor of two.”

The research backs this theory up. It found 77% of respondents want to use RPA to automate mundane, transactional tasks, and 56% said freeing up staff time to focus on more strategic work was a key driver for using RPA.

Now is the time for SMEs to embrace RPA to increase productivity and remain competitive. RPA, and the digital transformation it brings by automating tasks and procedures so that specialist teams can focus on higher-value work, is exciting. It means that smaller businesses will be able to deliver tasks at a scale and speed previously imaginable only for larger enterprises.

To the outside observer, the world of cybercrime might seem cryptic at best, and completely unknowable at worst. However, the important thing to remember is that no matter how dark or murky it may appear, cybercriminals have their own set of goals and objectives, just like you.

By Angel Grant, Director, Identity, Fraud and Risk Intelligence at RSA Security.

Just because understanding the enemy is hard doesn’t mean it’s impossible or unnecessary. Amassing this kind of knowledge is an essential step towards business-driven security, which requires organisations to stop thinking of security as just a technology problem and to link security incidents with business context. Corporations that try to get to know their opponents, and are willing to put themselves in their shoes, will always have a leg up when it comes to defeating them. In fact, with 2018 fast approaching, knowing what your enemies want and the lengths they’ll go to get it might be the only way to protect what matters most to your business.

Don’t stop there. Good cybersecurity is a continual process. Even after launch, it is important to keep revisiting the question. Cybercriminals are nothing if not adaptable, and the rock-solid defences you put in place one day might be a piece of cake to crack the next.

A little dose of paranoia can also be extremely healthy. It’s best to assume the worst: that you are already compromised. Think of all the ways you might be vulnerable, and act to find out more about who might be hunting you and how. For example, if your customers encounter phishing attempts, is it easy for them to report incidents to you (e.g., through a dedicated email address) so that you can track the nature and characteristics of this type of fraud?

You should also ask why the hacker is trying to get at you. If your ‘crown jewels’ were to be sold on the dark web, what kinds of agents would be behind that? Who is collecting data on them, and how can you tap into that surveillance to gain insight that can help your business?

By doing this, you’ll be better able to stop fraud before it happens, reducing the risk to your organisation of cyber attacks, identity theft, and account takeover.

It’s not enough to only check people when you let them in. From there, you need to keep an eye on visitors once they’re inside. To do that, you need to know how a “normal” person behaves. For example, a visitor to your house might take a seat and make conversation. If they leap to their feet and start checking out your valuables, you’ll want to keep a closer eye on them.

Continuous web session analysis recognises that cybercriminals don’t behave like other site visitors; they move faster, navigate differently, and often leave more than one device trail behind. To spot these differences, you need a reliable baseline to measure against. Build one by consistently identifying and tracking the interactions that occur across the entire customer web session, from login and browsing to the completion of a transaction. You’ll be amazed at how quickly you discover differences between how a genuine customer and a cybercriminal interact with your web and mobile services.
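A very simple version of the baseline idea compares one feature of a session with the statistics of known-genuine sessions, for example flagging a session whose average time between page requests lies many standard deviations from normal. The feature, the three-standard-deviation limit and the sample values are assumptions for illustration; production systems combine many signals and typically use statistical or machine-learning models.

```python
from statistics import mean, stdev

def build_baseline(genuine_gaps: list[float]) -> tuple[float, float]:
    """Mean and standard deviation of seconds between page requests (genuine users)."""
    return mean(genuine_gaps), stdev(genuine_gaps)

def is_anomalous(session_gaps: list[float], baseline: tuple[float, float],
                 z_limit: float = 3.0) -> bool:
    mu, sigma = baseline
    z = (mean(session_gaps) - mu) / sigma
    # Flags sessions that are unusually fast (typical of scripts) or unusually slow.
    return abs(z) > z_limit

baseline = build_baseline([4.0, 5.0, 6.0, 5.0, 5.0])
print(is_anomalous([0.5, 0.4, 0.6], baseline))  # True: far faster than people browse
print(is_anomalous([5.5, 4.5], baseline))       # False
```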

To this end, data is one of the most powerful tools in your quest to profile your enemy. Optimise the security investments you’ve already made by correlating data from your various anti-fraud tools to get a more complete picture of normal and anomalous behaviour. Advanced data analytics technologies mean you can do this without compromising your customers' experience or data privacy.

It’s not enough to just understand your opponent if you don’t know how to take the necessary steps to protect against them. Now that you’ve gotten to know your enemy, you’ll want to capitalise on the understanding that you’ve built up and take the fight to them. Here are five tips for doing exactly that.

1. Understand and avoid business logic abuse

Fraud does not only occur at the transaction level. It can occur the moment a user hits your web page. Many precursors to fraud, such as DDoS attacks, web scraping, and HTML/script injection, occur at the pre-login stage and can indicate a high potential for business logic abuse: the hijacking of normal application flows for illegitimate purposes.

Business logic abuse is not easily identified by traditional security software, so it’s essential to prevent these attacks from occurring in the first place. A good example of business logic abuse in the ecommerce world is coupon stacking, where discount codes are combined in ways the business never intended. Combining good coding practices with a solid understanding of your application flows and transaction processes is essential to prevent cybercriminals from abusing your website to commit fraud.
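Preventing this kind of abuse usually means enforcing the business rule on the server rather than trusting what the browser sends. A minimal sketch for coupon stacking, assuming a hypothetical catalogue in which each code is either stackable or exclusive and each code can be redeemed once per customer:

```python
# Hypothetical coupon catalogue: code -> (percent off, stackable?)
COUPONS = {"WELCOME10": (10, False), "SPRING15": (15, False), "FREEWRAP": (0, True)}

def validate_coupons(codes: list[str], customer: str,
                     redeemed: set[tuple[str, str]]) -> list[str]:
    """Enforce coupon rules server-side; raise ValueError on abuse."""
    unique = set(codes)
    if len(unique) != len(codes):
        raise ValueError("same code applied more than once")
    unknown = unique - set(COUPONS)
    if unknown:
        raise ValueError(f"unknown codes: {sorted(unknown)}")
    exclusive = [code for code in codes if not COUPONS[code][1]]
    if len(exclusive) > 1:
        raise ValueError("only one non-stackable coupon per order")
    for code in codes:
        if (customer, code) in redeemed:
            raise ValueError(f"{code} already redeemed by this customer")
    return codes

print(validate_coupons(["WELCOME10", "FREEWRAP"], "cust-1", redeemed=set()))
try:
    validate_coupons(["WELCOME10", "SPRING15"], "cust-1", redeemed=set())
except ValueError as err:
    print(err)  # only one non-stackable coupon per order
```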

2. Put your omni goggles on

When building your omnichannel strategy, you’ll be thinking first and foremost about the new business models you can achieve. But you also need to think about omnichannel fraud management from the outset, especially in connecting with partners and aggregators.

If there's one thing we can be certain of, it's that cross-channel attacks will grow. This is why visibility into device reputation (e.g., has this particular mobile, tablet or computer previously been used to commit fraud) and user behaviour across channels is critical. Now is the time to invest in centralised fraud management that can leverage input from all anti-fraud tools used across your channels.

3. Use the buddy system

Visibility isn’t just about what’s going on in your own organisation—it also means understanding fraud activity within the context of global, cross-industry threats. For example, fraud intelligence feeds can tell an organisation if an IP address or account has been involved in confirmed fraud, or if a shipping or email address has been used by a known reshipping mule.

Collective intelligence is a powerful tool—sharing helps everyone improve their fraud detection and means we win as one.
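Consulting shared intelligence can be as simple as checking each transaction's attributes against sets of indicators already linked to confirmed fraud, including device identifiers for the device-reputation check described under the previous tip. The feed contents and field names below are invented (using reserved documentation addresses); real feeds come from vendors or industry sharing groups, each with its own format and API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Transaction:
    ip: str
    account: str
    email: str
    shipping_address: str
    device_id: str

# Hypothetical indicator sets built from one or more intelligence feeds.
FEED = {
    "ip": {"203.0.113.7"},
    "account": {"acct-4471"},
    "email": {"mule@example.net"},
    "shipping_address": {"12 Example Street, Anytown"},
    "device_id": {"dev-9f2c"},
}

def intel_hits(tx: Transaction) -> list[str]:
    """Return the attributes of tx that match a known-fraud indicator."""
    return [attr for attr, known in FEED.items() if getattr(tx, attr) in known]

tx = Transaction("203.0.113.7", "acct-1001", "shopper@example.com",
                 "1 Sample Road, Othertown", "dev-9f2c")
print(intel_hits(tx))  # ['ip', 'device_id']
```

Any hit would typically feed a risk score rather than trigger an automatic block.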

4. Recognise the value of a whole-organisation approach to security

Change the internal conversation. Rather than looking at cybersecurity and fraud management as an overhead, think instead of the positive contribution they make to your bottom line by reducing fraud losses, improving the customer experience to drive revenue, and protecting your business reputation.

Once you do that, it’s much easier to get buy-in from the whole organisation and establish close co‑operation between different departments (after all, what looks like benign activity to one group may be a significant problem when viewed holistically). Combining the information security team’s technical knowledge with the fraud team’s view of criminal behaviour, for example, could bring valuable insights.

5. Step it up… carefully

Effective fraud management is a balancing act between minimising losses and reducing customer friction. Be clear in establishing your organisation's tolerance to risk, then tailor your interventions to your threshold.

Whether your risk tolerance is high or low, you can always work on improving the customer experience with consumer-optimised authentication methods, such as fingerprint or voice recognition or in-app one-time passwords, based on your user populations, segmentations, and regulatory requirements.
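Tailoring interventions to a stated risk tolerance can be expressed as two thresholds on a risk score: allow quietly below the first, ask for step-up authentication between them, and block above the second. The 0 to 100 score scale, the threshold values and the action names are assumptions for illustration.

```python
def decide(risk_score: float, step_up_at: float, block_at: float) -> str:
    """Map a 0-100 risk score to an action under a given risk tolerance."""
    if not 0 <= step_up_at <= block_at <= 100:
        raise ValueError("thresholds must satisfy 0 <= step_up_at <= block_at <= 100")
    if risk_score >= block_at:
        return "block"
    if risk_score >= step_up_at:
        return "step-up authentication"  # e.g. fingerprint or in-app one-time password
    return "allow"

# Hypothetical settings: a low-tolerance organisation challenges earlier.
LOW_TOLERANCE = {"step_up_at": 30, "block_at": 70}
HIGH_TOLERANCE = {"step_up_at": 60, "block_at": 90}

print(decide(45, **LOW_TOLERANCE))   # step-up authentication
print(decide(45, **HIGH_TOLERANCE))  # allow
```

Moving the thresholds is how the same scoring engine serves both a cautious and a friction-averse business.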

As 2018 begins, cybercrime might seem like an unstoppable force, but that doesn’t have to be the case. The bad guys might be getting smarter, but businesses that take it upon themselves to learn about the mounting threats they face, and act appropriately, will be in a great position to reap the rewards.

International manufacturers and industrial organisations have been investing more heavily in digital programmes and initiatives to help accelerate the era of IT-optimised smart manufacturing in the age of Industry 4.0. This includes automating data and processes and adopting the technologies that support them, and digital transformation goes hand in hand with all of this.

By John Newton, CTO and Founder, Alfresco.

According to a recent PTC report, manufacturing will be the biggest IoT platform by 2021[1], reaching $438 million as the Industrial Internet of Things (IIoT) increases efficiency and decreases downtime. A separate study by Accenture[2] says that the IIoT could help reduce machinery breakdowns by 70% and overall maintenance costs by 30%.

However, many companies are still struggling to deal with the flow of information across the extended enterprise. Manufacturers’ first-generation processes and systems, built on 30-year-old technologies for 30-year-old computing environments, can’t meet new demands. As a result, businesses risk being left behind, because they need to manage and analyse exponentially increasing amounts of data, images and documents immediately. They also have to deal with unsanctioned applications or ad hoc workarounds that leave content insecure, out of date or non-compliant, which introduces a risk no company can afford.

This results in intense pressure for manufacturers to improve the way they manage product and engineering information. Here are the five biggest forces intensifying manufacturers’ interest in new technologies and digital transformation:

1. Mobile and Social: The rise of mobile and social technologies has changed not only where we work, but how we work. Manufacturing organisations are under tremendous pressure to support connected, tech-savvy employees whose expectations have been shaped by consumer web services. Staff expect to find and share business and product-related information as easily as they can browse and buy a book online, and this leads to unsanctioned use of non-business tools. They also expect their company systems to support remote and collaborative working styles, allowing them to get their work done independent of location, network, and device. Their expectations for ease of access intensify the pressure on IT organisations to modernise their content and process management strategies.

2. Evolving Customer Expectations: With customers able to stay connected 24/7, the pressure to deliver for them on their chosen platforms and around the clock is huge. To meet these new expectations, manufacturers face disruptions across the product lifecycle. Not only must they innovate faster, but they must also create products that are software-enabled and connected (e.g. cars and even tractors). These next-generation products require collaboration amongst diverse engineering teams that haven’t previously worked together. These product lifecycle management changes must be supported with new forms of information that can be readily shared inside and outside the enterprise. But orchestrating this across divisions, geographies, expertise and roles in the product lifecycle is a more complicated challenge than ever before.

3. Value of the Extended Enterprise: Exponential growth in connected activity and information flow is reshaping the modern manufacturing firm. Today, businesses are often a web of companies, contractors, suppliers, resellers, employees and customers. The ability to share content and processes effectively across the extended enterprise is a must. Manufacturing firms are extending their value chains by using external collaborators for product design, development, marketing and so on, in particular to optimise processes and increase transparency. Partner and supplier input is also crucial, and facilitating all of this collaboration will lead to the ultimate extended enterprise.

4. Big Data, the IoT and the era of context: IDC predicts that the market for big data technology and services will grow at a compound annual growth rate (CAGR) of 23 percent through 2019[3]. A staggering 90 percent of that data will be unstructured information such as e-mails, documents and video, which adds to the content management challenge. Furthermore, as these pressures converge with new IoT capabilities, previously offline products become always-on sources of data. The digitised manufacturer now has a slew of new sources of product information that must be managed, updated, analysed and somehow combined with the information produced internally.
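To put the growth rate in perspective: a CAGR of $r$ means the market is multiplied by $(1 + r)$ each year, so over $n$ years it grows by a factor of $(1 + r)^n$. The text gives only the end year, so the window length here is an assumption. Taking $r = 0.23$ and a four-year window:

$$
\frac{V_{\text{end}}}{V_{\text{start}}} = (1 + r)^{n} = 1.23^{4} \approx 2.29
$$

In other words, at that rate the market would more than double over four years.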

5. Hybrid Cloud IT Infrastructure: Manufacturers aren’t known for going overboard investing in advanced IT, yet leading-edge cloud computing technology seems tailor-made for them, and many are moving business content to this platform. IDC and Forrester predict a major shift to hybrid enterprise content management (ECM). This next-generation approach involves storing content both on-premises and in the cloud, with the two seamlessly connected. Hybrid ECM meets IT’s need for control and compliance while freeing business users and external collaborators to be more productive.

The digital economy favours those who consistently demonstrate agility and do things differently. The flexible, digitised manufacturer will invest in technologies to create value across the extended enterprise and, in the process, deliver on the real business of innovation, revenue growth and customer satisfaction. Manufacturers should look at how digital transformation initiatives can deliver real impact across the value chain, including employees, partners and suppliers, enabling better collaboration and knowledge sharing. The end of the year is the perfect time to look ahead at how they can innovate with new business model approaches and leverage the latest technology.

Containers are considered one of the most innovative technologies among developers because they streamline the development process and make it as agile as possible. As a result, there are many arguments in favour of using container-based hosting for testing environments.

By Alexander Vierschrodt, Head of Commercial Product Management Server, 1&1 Internet SE.

Containers bundle an application with its dependencies and run in isolation from the rest of the host system, without needing additional virtual machines. This means the application in a container can be copied and transferred easily from the development environment to the testing environment. Developers can therefore continue working on their projects while the tests run, without being affected by them. This evolved process creates more flexibility, since testing and production can run simultaneously, enabling continuous delivery.

The new status quo

In Europe, container technology has evolved from something rare and exotic to a popular phenomenon, with large corporations increasingly wanting to optimise their IT infrastructure. Just a few years ago, outside of the developer community, container technology was only popular in certain circles. The technology has since been adopted by many companies who have realised that with the help of containers, IT processes, especially development projects, can be made more efficient and implemented more quickly.

Kubernetes has established itself as the leading platform for automating the provisioning, scaling and management of containerised applications, and has become the industry standard. With Kubernetes, the open source community has created a great tool for setting up and managing a wide variety of containers. In addition, many new products and services relating to container technologies are emerging. Gradually, a container ecosystem is growing that will eventually connect open source and commercial applications symbiotically. This makes it increasingly easy for companies to use containers and to find a set of tools that suits their individual needs. At the same time, users are generally becoming braver and more open to trying new things, and consequently more and more companies have set out to implement container technology. This is an important step. Only companies that implement containers across the entire application lifecycle can realise their full potential and take advantage of highly scalable, ultra-flexible IT infrastructure.
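As an illustration of what "managing containers with Kubernetes" looks like in practice, here is a minimal sketch that builds a Kubernetes `Deployment` manifest as a Python dictionary and serialises it to JSON. Kubernetes accepts JSON as well as YAML. The field layout follows the standard `apps/v1` Deployment schema. The application name, image reference and replica count are hypothetical placeholders.

```python
import json
from typing import Any, Dict


def deployment_manifest(name: str, image: str, replicas: int) -> Dict[str, Any]:
    """Build a minimal apps/v1 Deployment running `replicas` copies of `image`."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template's labels.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


# Hypothetical test application and registry.
manifest = deployment_manifest("test-app", "registry.example.com/test-app:1.0", 3)
print(json.dumps(manifest, indent=2))
```

Saved to a file such as `deployment.json`, the output could be applied with `kubectl apply -f deployment.json`. Kubernetes then keeps three copies of the container running and replaces any that fail.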

Software testing: plan-build-run vs continuous delivery

Businesses have become increasingly agile across the board, especially in their IT departments. IT professionals are constantly searching for new technologies to make their projects flexible and testable, and this is where container technology comes into its own. The classic plan-build-run model has been obsolete for years, as it is too rigid for IT projects that must be developed quickly and flexibly. Its strict sequence of planning, construction and operation leaves no room for continuous review of the project. As a result, errors are usually identified and remedied only in the test phase, shortly before the final phase. In the worst case, the entire development process must be run again. An agile, flexible process with fast implementation is therefore impossible using this model.

Continuous delivery, on the other hand, relies on consistently reviewing the individual subprojects. Iterations and optimisations take place continuously during the ongoing development process. The cornerstone of continuous delivery is that the code or project can be delivered and used in production at any point in time. Unlike the plan-build-run concept, continuous delivery has no fixed project phases during which integration, testing or completion take place. Iterative sprints happen exactly when the project needs them. This helps ensure there are no unpleasant surprises that could delay or prevent a project from going live.
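The principle that a project should be "deliverable at any point in time" can be sketched in a few lines of Python. This is a hypothetical model, not any particular CI/CD tool. `DeliveryPipeline`, the stage names and the version strings are illustrative assumptions. Every change runs through the same automated stages. Only a version that passes all of them replaces `last_deployable`, so a failing change never takes away the version that can be released or rolled back to.

```python
from typing import Callable, List, Optional, Tuple

Stage = Callable[[str], bool]  # takes a version, returns True if it passes


class DeliveryPipeline:
    """Runs every change through the same stages; tracks the last good version."""

    def __init__(self, stages: List[Tuple[str, Stage]]) -> None:
        self.stages = stages
        self.last_deployable: Optional[str] = None

    def submit(self, version: str) -> Optional[str]:
        """Return the name of the first failing stage, or None if all pass."""
        for name, check in self.stages:
            if not check(version):
                return name  # stop early; last_deployable stays unchanged
        self.last_deployable = version
        return None


# Hypothetical stages: real ones would build images and run test suites.
pipeline = DeliveryPipeline([
    ("build", lambda v: True),
    ("unit_tests", lambda v: not v.endswith("-broken")),
    ("integration_tests", lambda v: True),
])
print(pipeline.submit("1.0.1"))         # None
print(pipeline.submit("1.0.2-broken"))  # unit_tests
print(pipeline.last_deployable)         # 1.0.1
```

Because the check runs on every change rather than in a single test phase at the end, the broken version is caught immediately and the previous good version remains ready to ship.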

The benefits of dynamic continuous delivery are significant compared to the classic plan-build-run process, which makes it clear why it is the more advanced and promising method today. Like the process itself, the benefits merge smoothly into an ongoing cycle. First of all, it is less risky: releases can be continuously developed and implemented without expensive preparation. Roll-backs are also easy and straightforward, which supports a quicker time to market. Testing and improvement phases in particular are usually very time-consuming, but with continuous delivery they run alongside the actual development process. The result is an uninterrupted quality control and verification process, which allows you to react quickly and flexibly without the headaches common with the classic plan-build-run model. Errors are also detected earlier. The consistent review of subprojects ensures a higher level of quality in the end, and there is no time pressure in the final phases of development, as the transition to a production system often follows directly.

Use of containers in testing: agile and reliable

Conventional test environments have the disadvantage of being very resource-intensive. Each of the virtual machines (VMs) they use requires its own operating system, computing power and storage space, both for the VM itself and for the stored data. Each VM also carries its own security risks and must be patched separately. Containers, on the other hand, share these resources through the so-called container host, which provides the operating system kernel, storage and other necessary resources. All the containers on a host draw on it, which makes them faster to use because no container needs to boot its own operating system. In addition, container processes are isolated from one another, which reduces their exposure to security risks.

If you transfer a test project into a container, the project benefits from a faster development time. The container is not only available more quickly, but also independent of the actual environment, since it draws its resources from the container host. Security is also less of a concern than in conventional development environments. Because all the resources needed are provided centrally by the container host, it takes much less effort to maintain and update security settings. In this way, security gaps can be closed faster and more effectively, or do not arise in the first place.

One further advantage is that the environment in every container in a cluster is identical and can be made to match the actual production environment during testing. This makes the transition to production seamless and free of problems.
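Keeping test containers identical to production is easier to enforce if the two environment specifications are compared before a build is promoted. The sketch below assumes, hypothetically, that each environment is described as a flat dictionary of settings such as the image tag and environment variables. It reports every setting that differs, including settings present on only one side.

```python
from typing import Dict, Optional, Tuple

Drift = Dict[str, Tuple[Optional[str], Optional[str]]]


def environment_drift(test_env: Dict[str, str], prod_env: Dict[str, str]) -> Drift:
    """Return {setting: (test_value, prod_value)} for every setting that differs."""
    keys = sorted(set(test_env) | set(prod_env))
    return {
        key: (test_env.get(key), prod_env.get(key))
        for key in keys
        if test_env.get(key) != prod_env.get(key)
    }


# Hypothetical specifications for the same application.
test = {"image": "app:1.4", "LOG_LEVEL": "debug", "DB_POOL": "10"}
prod = {"image": "app:1.4", "LOG_LEVEL": "info", "DB_POOL": "10", "FEATURE_X": "on"}
print(environment_drift(test, prod))
# {'FEATURE_X': (None, 'on'), 'LOG_LEVEL': ('debug', 'info')}
```

An empty result means the test environment mirrors production for every tracked setting. A non-empty one shows exactly what would change on promotion.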

Conclusion

Container technologies are not only beneficial for software testing: development projects of all kinds benefit from this technology because it saves resources while also being stable, flexible and agile. Furthermore, in comparison with conventional development environments, they are more cost-effective.

In order to use containers efficiently, many companies will need to adjust their processes. An IT strategy is the most basic requirement for successfully implementing containers. However, to achieve agility across the entire development process, the right corporate structure must be in place as well as the appropriate IT architecture. If both are present, nothing stands in the way of success.

Berendsen, a world-leading textile and laundry provider, is using Highlight’s network and application monitoring service to deliver visibility into its managed network service supplied by Gamma.

The Highlight service is an integrated part of Gamma’s managed voice and data network service. It gives both Berendsen and Gamma a shared, consolidated view of the behaviour of the network that serves Berendsen’s 152 circuits, covering 52 laundries and an array of on-premise customer sites across the UK. Highlight’s graphical view provides insight into what might need upgrading, where misuse is happening and which issues need to be fixed.