I recently attended a local sporting venue and, when I tried to buy some refreshments, was told that the card machine wasn’t working, owing to poor wireless connectivity. I was invited to join the very long queue at the only cash machine, pay for the privilege of accessing some money and then return to the bar to pay for the drinks. Not a great experience, and one that would almost certainly put me off returning.

One of my sons works at this venue and he tells me that they have looked at upgrading the wireless network, but the cost is deemed so prohibitive that they carry on as is – with an unreliable network, and some dissatisfied customers.

This, in miniature, is the choice facing almost every business across the globe right now. For sure, all would love to have the very latest IT and offer the best possible customer service, but there’s a significant, often unaffordable, price tag to make this happen. So, many companies either make do, or carry out partial upgrades.

Most importantly, every business needs to carry out what is, in essence, a risk assessment before making any IT investment. What are the consequences to the business of doing nothing, and what are the consequences to the business of doing everything? At the one extreme – doing nothing – there might be some loss of customers, but if the business has something of a monopoly (as is the case with this local sporting venue), does this really matter? At the other extreme, spending large sums of money to offer the very best possible customer environment could just bankrupt a business.

There are no easy answers to the IT investment dilemma, but by carrying out a thorough risk analysis, it’s possible to arrive at what level of spending makes the most sense to the business.
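As a rough illustration of the kind of comparison described above, the sketch below adds the upfront cost of each investment option to the revenue it might be expected to lose through dissatisfied customers over one year. Every name and figure in it (the options, their costs, the loss rates and the annual revenue) is a hypothetical assumption chosen for illustration, not data from the venue.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    upfront_cost: float        # one-off spend on the upgrade (hypothetical)
    customer_loss_rate: float  # share of annual revenue expected to be lost


def total_cost(option: Option, annual_revenue: float) -> float:
    """Upfront spend plus the revenue expected to be lost over one year."""
    return option.upfront_cost + option.customer_loss_rate * annual_revenue


def cheapest(options: list[Option], annual_revenue: float) -> Option:
    """Return the option with the lowest combined one-year cost."""
    return min(options, key=lambda o: total_cost(o, annual_revenue))


# Hypothetical figures, for illustration only.
ANNUAL_REVENUE = 1_000_000.0
OPTIONS = [
    Option("do nothing", 0.0, 0.10),
    Option("partial upgrade", 40_000.0, 0.03),
    Option("full upgrade", 250_000.0, 0.01),
]

if __name__ == "__main__":
    for opt in OPTIONS:
        print(f"{opt.name}: {total_cost(opt, ANNUAL_REVENUE):,.0f}")
    print("lowest cost:", cheapest(OPTIONS, ANNUAL_REVENUE).name)
```

With these made-up numbers the partial upgrade comes out cheapest, but a business with a near-monopoly would plug in a much lower loss rate for doing nothing, and the answer could change accordingly.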

Back to my sporting venue, and about an hour or so after I’d purchased the bottles of wine, the waitress who had served me came over and apologised that she’d overcharged me and gave me back the ‘extra’ money. Human nature being what it is, I was not annoyed that I’d been overcharged, rather surprised and delighted that someone had been honest enough to rectify the mistake, rather than pocket the money. So, maybe I will be returning to this venue after all!

Less than one third (31%) of data specialists, including data analysts, data scientists and data quality managers, are fully confident in their ability to deliver trusted data at speed throughout their organization, reveals a new global survey from Talend.

In the Talend-commissioned survey conducted by Opinion Matters, 763 data professionals (executives and operational data workers) around the globe were queried to understand confidence levels in their organizations' ability to deal with two significant simultaneous challenges: 1) capturing, processing, and democratizing data at speed; and 2) ensuring the reliability and integrity of the information in the data streams shared by the organization.

Trust Perception Gap between Management and Operations

According to the survey, there is a significant gap in perceptions between senior IT management and mid-ranking data professionals (operational data workers), with the former substantially more confident in their organizations' abilities.

"The different levels of confidence displayed by people at a management level and operational data workers are not surprising, but it is definitely worrying," said Ciaran Dynes, Senior VP of Products at Talend. "What we see today is that organizations are struggling to deliver trusted data when they need to deliver it and they are also struggling to gain credibility internally, in the market and with customers. Organizations need to build a bridge between IT and data workers - responsible for delivering at speed - and the people in charge of building and safeguarding trust, something which is often led at an executive level. Although generating trust may come from the top, the ability to deliver trusted data at speed requires the commitment of every data specialist within an organization as well as cultural alignment. This usually relies on the work of data champions, who have the skills to lead cultural change in data handling and processes as well."

Major findings from the survey, highlighting three significant gaps - trust, speed and execution - include:

Excellence of speed and integrity control: The Leaders and the others

Digital transformation is often about speed: accelerating time to market, driving business insights or actions in real time, or delivering personalized customer experiences. When organizations succeed in combining speed with integrity, they can deliver intelligent and trusted data in everything they do. However, despite the importance of ensuring speed and trust in data, a mere 11% of respondents consider that their businesses have reached excellence in both speed and integrity.

A significant difference between management and operational workers

Overall, people close to data (data workers) are less confident in their organizations' abilities to trust in their own data, with only 31% showing high levels of confidence. By contrast, 46% of respondents at a management level are confident in the ability of their organizations to deliver trusted data at speed.

For regulatory compliance, one of the key criteria to evaluate trust, 52% of respondents at a management level claim to be very optimistic when it comes to having achieved compliance with data regulations, while the rate falls to 39% among the operational data workers - who may be in charge of making the practical changes to deliver compliance.

Data quality confidence remains low

The survey shows that only 38% of respondents believe their organizations excel in controlling data quality. Less than one in three (29%) operational data workers are confident their companies’ data is always accurate and up-to-date.

360-degree real-time data integration is still a challenge

Having access to real-time or at least timely data accelerates changes and helps organizations to make faster, more reliable strategic business decisions, which lead to better outcomes.

According to the survey, only 34% of operational data workers believe in their organizations' capability to succeed in a 360-degree real-time data integration process whereas respondents at a management level again feel more confident (46%) in this regard.

"We’ve entered the era of the information economy, where data has become the most critical asset for every single organization," continues Dynes. "Data-driven strategies are imperative for success in any industry. To support business objectives such as revenue growth, profitability, and customer satisfaction, organizations require trusted data which can be delivered when it is needed and relied upon to drive critical business insights. Trust in data has to be paramount because without trusted data there can be no confidence in business decisions, and at that point stakeholder and customer trust will quickly evaporate too."

Two in three organizations plan to deploy Artificial Intelligence to bolster their defense as soon as 2020.

Businesses are increasing the pace of investment in AI systems to defend against the next generation of cyberattacks, a new study from the Capgemini Research Institute has found. More than two thirds (69%) of organizations acknowledge that they will not be able to respond to critical threats without AI. With the number of end-user devices, networks, and user interfaces growing as a result of advances in the cloud, IoT, 5G and conversational interface technologies, organizations face an urgent need to continually ramp up and improve their cybersecurity.

The “Reinventing Cybersecurity with Artificial Intelligence: the new frontier in digital security” study surveyed 850 senior IT executives from IT information security, cybersecurity and IT operations across 10 countries and seven business sectors, and conducted in-depth interviews with industry experts, cybersecurity startups and academics.

Key findings include:

AI-enabled cybersecurity is now an imperative: Over half (56%) of executives say their cybersecurity analysts are overwhelmed by the vast array of data points they need to monitor to detect and prevent intrusion. In addition, the type of cyberattacks that require immediate intervention, or that cannot be remediated quickly enough by cyber analysts, have notably increased, including:

· cyberattacks affecting time-sensitive applications (42% saying they had gone up, by an average of 16%).

· automated, machine-speed attacks that mutate at a pace that cannot be neutralized through traditional response systems (43% reported an increase, by an average of 15%).

Facing these new threats, a clear majority of companies (69%) believe they will not be able to respond to cyberattacks without the use of AI, while 61% say they need AI to identify critical threats. One in five executives experienced a cybersecurity breach in 2018, 20% of which cost their organization over $50m.

Executives are accelerating AI investment in cybersecurity: A clear majority of executives accept that AI is fundamental to the future of cybersecurity:

· 64% said it lowers the cost of detecting breaches and responding to them – by an average of 12%.

· 74% said it enables a faster response time: reducing time taken to detect threats, remedy breaches and implement patches by 12%.

· 69% also said AI improves the accuracy of detecting breaches, and 60% said it increases the efficiency of cybersecurity analysts, reducing the time they spend analyzing false positives and improving productivity.

Accordingly, almost half (48%) said that budgets for AI in cybersecurity will increase in FY2020 by nearly a third (29%). In terms of deployment, 73% are testing use cases for AI in cybersecurity. Only one in five organizations used AI pre-2019 but adoption is poised to skyrocket: almost two out of three (63%) organizations plan to deploy AI by 2020 to bolster their defenses.

“AI offers huge opportunities for cybersecurity,” says Oliver Scherer, CISO of Europe’s leading consumer electronics retailer, MediaMarktSaturn Retail Group. “This is because you move from detection, manual reaction and remediation towards an automated remediation, which organizations would like to achieve in the next three or five years.”

However, there are significant barriers to implementing AI at scale: The number-one challenge for implementing AI for cybersecurity is a lack of understanding of how to scale use cases from proof of concept to full-scale deployment. 69% of those surveyed admitted that they struggled in this area.

Geert van der Linden, Cybersecurity Business Lead at Capgemini Group, says: “Organizations are facing an unparalleled volume and complexity of cyber threats and have woken up to the importance of AI as the first line of defense. As cybersecurity analysts are overwhelmed, close to a quarter of them declaring they are not able to successfully investigate all identified incidents, it is critical for organizations to increase investment and focus on the business benefits that AI can bring in terms of bolstering their cybersecurity.”

Additionally, half of surveyed organizations cited integration challenges with their current infrastructure, data systems, and application landscapes. Although the majority of executives say they know what they want to achieve from AI in cybersecurity, just over half (54%) have identified the data sets required to operationalize AI algorithms.

Anne-Laure Thieullent, AI and Analytics Group Offer Leader at Capgemini, concludes: “Organizations must first look to address the underlying implementation challenges that are preventing AI from reaching its full potential for cybersecurity. This means creating a roadmap to address key barriers and focusing on use cases that can be scaled most easily and deliver the best return. Only by taking these steps can organizations equip themselves for the rapidly evolving threat of cyberattacks. By doing so, they will save themselves money, and reduce the likelihood of a devastating data breach.”

Fujitsu has released its Fujitsu Future Insights Global Digital Transformation Survey Report 2019, which highlights the results of its survey conducted among 900 CxOs and decision-makers at large and mid-sized companies spread across different industries in 9 countries.

With this survey Fujitsu aims to understand the state of their digital transformation journeys with regard to AI and other advanced technologies, and to clarify how business leaders around the world perceive the concept of "trust", an increasingly urgent theme in recent years. The third iteration of this survey also revealed the six success factors in digital transformation initiatives and the importance of organizational abilities such as leadership. The survey additionally takes an in-depth look at trust toward online data and decisions made by AI or a person.

Fujitsu will ultimately draw on these findings to accelerate its work with customers in advancing their digital transformation initiatives to achieve greater trust in business and society.

Background

We live today in a world that is more connected, more globally integrated, and faster paced than ever before. While the benefits delivered by digital technology seem obvious and ubiquitous, issues surrounding the trustworthiness of personal data control and decisions made by AI remain a growing concern.

In light of these persistent challenges, Fujitsu embarked on the third iteration of its Global Digital Transformation Survey, first carried out in 2017, revealing the status of digital transformation initiatives and clarifying how global business leaders perceive "trust", which represent important themes in driving business success in recent years.

Summary of Survey Findings

1. Status of Digital Transformation

87% of companies surveyed have already begun their digital transformation journey. Companies in financial services and transportation were found to be the most advanced in their initiatives. About half of companies in these industries delivered positive outcomes.

Fujitsu's previous survey revealed that six organizational capabilities are required to deliver positive outcomes in digital transformation projects: Leadership, Ecosystem, Empowered people, A Culture of Agility, Value from Data, and Business Integration. Analysis of this year's survey also reveals that successful companies possess these organizational capabilities, which we refer to as "digital muscles."

2. Trust in Online Data

72% of respondents were worried that organizations may exploit personal data without their permission. In some cases, however, respondents found it acceptable to provide personal data. These include cases in which the company receiving the personal data can be trusted and where the personal data provided can be used to enhance products and services.

3. Decisions Made by AI or by a Person

Respondents remain uncertain whether they place more trust in decisions made by AI or by a person. Our survey shows that respondents tend to trust decisions made by AI more in situations where the human impact is less significant. Moreover, 63% of respondents said that they would trust decisions made by AI if the AI shows substantial reasons for reaching the decisions, and 66% indicated that they would trust a company that published a code of ethics governing the use of AI.

4. Empowering People to Drive Successful Digital Transformation

Companies in which management places an emphasis on long-term perspectives, practices empathic leadership by sharing its messages and passion with employees, and empowers its staff tend to achieve greater success in their journeys toward digital transformation.

A greater understanding and acceptance of the value of Augmented Reality (AR) and Virtual Reality (VR) solutions across enterprise verticals are driving continued investment and growth. In addition, advancements in hardware and software systems and increasing capabilities are creating robust, objective value across numerous use cases. According to ABI Research, a global tech market advisory firm, this growth trend will continue as more ROI data points become available, and enterprise AR/VR customers continue to scale their efforts. Case studies highlight immense ROI for AR/VR integrations, with training time reduction up to 50% and general efficiency increases showing similar results.

“Successful case studies have proven that businesses can see immediate and notable ROI with use cases such as remote expertise and AR training,” says Eleftheria Kouri, Research Analyst at ABI Research. For example, traveling costs or training courses that require instructors and external facilities dramatically increase expenses. AR and VR solutions have proven to minimize travel and, at the same time, allow multiple trials and repeats during or after formal training at zero cost, without consuming extra resources or materials or requiring supervision. Emerging technologies also have a direct impact on other crucial metrics, such as significantly reducing employee accidents while increasing employee engagement and satisfaction.

Companies such as Portico and PixoVR have proven that leveraging VR solutions reduces training time significantly. Porsche decreased service resolution time by 40% when utilizing AR guidance and remote assistance for maintenance, and Boeing managed to reduce production time by 35% with AR compared to traditional documentation and training methods. There are numerous successful examples in the market that prove the advantages that emerging solutions bring in terms of employee efficiency, improved production and quality, customer service, and machine lifecycle. Overall Equipment Effectiveness (OEE) can benefit dramatically from AR/VR, especially with reduced service resolution rate, error/defect rate, and total unplanned downtime.

“A deeper examination of AR/VR applications, integration with other disruptive technologies such as IoT or AI, and increasing successful implementations in the market continuously prove the value of AR/VR solutions in the enterprise,” concludes Kouri. “Advancements and more competitive prices in hardware and software will naturally happen, and more long-term implementations are necessary for minimizing uncertainty, for identifying and addressing potential challenges such as security or change management, and for determining best practices that drive successful and stable digital transformation.”

Apptio has released a report highlighting how the changing economics of IT and the push for digital transformation are disrupting C-suite dynamics and positioning the CIO as the leader most effective at driving change.

The survey, Disruption in the C-suite: How the digital transformation imperative is changing CxO dynamics and technology strategy, conducted in partnership with Financial Times (FT) Focus, the independent thought leadership arm of the Financial Times, found that, with digital transformation taking place in almost every company in every industry, agility, advocacy and data are the new IT currency.

“Digital is completely transforming the IT operating model, and that means CIOs and CFOs need to work collaboratively,” said Sunny Gupta, Apptio CEO. “These business leaders need to accelerate new delivery models such as hybrid and cloud, optimise growth investments to fund innovation, and boost financial agility so that IT finances can be managed and adjusted in real-time based on the highest needs of the business.”

The survey finds that the pursuit of digital transformation has led to a greater spirit of collaboration in the C-suite, and greater trust in IT across the business. Yet this report also reveals a blurring of responsibilities, tensions between IT and finance, and a critical role for the CIO in reshaping the organisation for sustained growth.

“Technology leaders are more emboldened to drive organisational change: their priorities are shifting as they take a more agile approach to IT strategy,” said Sean Kearns, Editorial Director for FT Focus. “Customer expectation, and businesses’ corresponding sense of urgency, is changing the dynamics of the C-suite.”

Strategy at speed

More than half (56%) of organisations that are embracing digital transformation say they are adopting an agile, flexible strategic approach that constantly evolves based on continual learning from the business and customers. And while technology leaders seek to drive growth through innovation, they expect to maintain almost the same proportion of effort enabling business model change over the next three years, as they balance the need for growth with the need to operate existing IT systems.

The new C-suite

More than two-thirds (68%) of global respondents agree that digital transformation has strengthened collaboration among C-suite leaders when it comes to developing new products and services. Yet 47% of business leaders say digital transformation blurs the lines of roles and responsibilities. Stronger collaboration doesn’t mean that all leaders are necessarily aligned on business priorities or technology strategy. CIOs and CFOs are seen as the least aligned, with only 23% of UK respondents saying the two functions are in deep alignment, compared to a global average of 30%. These new dynamics are creating tension – especially between finance and the IT leadership.

The power of persuasion

This dynamic creates an enormous opportunity for CIOs to drive change. Survey findings highlight that CIOs are now considered the C-suite leader most effective at delivering change based on customer insight, even more than the CMO or CEO. But in order to take advantage of this opportunity to be the change-driver in their organisation, CIOs need to communicate effectively with the rest of the business and influence all stakeholders. Seventy-one percent of finance leaders say that the IT function needs to develop greater influencing skills in order to deliver the change their business requires. IT leaders need to develop those communication skills within their teams and ensure that they are equipped with the right blend of technical, business and influencing skills.

Decisions and where to make them

Companies are unsettled about how important technology decisions are made and evaluated. The cloud is crucial to meeting digital aspirations, but concerns over governance pose challenges for adoption and migration, causing only 30% of leaders to feel confident in IT’s ability to govern cloud across the business. Agile delivers value in accelerating adoption of new technology and enabling digital transformation, but greater clarity is needed on tracking performance. Less than one fifth of companies (16%) have a clearly defined framework to map success across the business, and UK leaders are among the most likely to adopt agile without this framework (24%).

Leading with data

Real-time data gives IT leaders the power to assess their investments in new technology and make better decisions. According to 51% of leaders in our survey, the IT function is taking a more proactive stance on data leadership across the business compared with other functions, and they say that this approach is paying off. Of those who say that the IT function is taking a more proactive stance, 58% say that this approach is very effective in helping to meet growth targets.

Continuing unprecedented demand for new datacentres, fears around the shortage of skilled professionals, concerns about the future disruption of 5G, and the limited impact of Brexit are some of the key findings from the latest industry survey from Business Critical Solutions (BCS).

The Summer Report, now in its 10th year, is undertaken by independent research house IX Consulting, who capture the views of over 300 senior datacentre professionals across Europe, including owners, operators, developers, consultants and end users. It is commissioned by BCS, the specialist services provider to the digital infrastructure industry.

The report highlights the rising demand for datacentres, with almost two thirds of users exceeding 80% of their capacity today, 70% having increased capacity in the last six months and almost 60% planning to increase capacity next year. This demand is currently being driven by cloud computing, with over three quarters of respondents identifying 5G and Artificial Intelligence (AI) as disruptors for the future. With industry predictions that edge computing will have 10 times the impact of cloud computing in the future, half of respondents believe it will be the biggest driver of new datacentres. However, the survey found that the market remains confident that supply can be maintained, with over 90% of developers stating they have expanded their datacentre portfolio in the last six months.

With regard to supply, there are concerns that a shortage of sufficiently qualified professionals at the design and build stages will cause a bottleneck, with 64% of datacentre users and experts believing there is a lack of skilled design resource in the UK. AI and Machine Learning may help to mitigate these issues, with nearly two thirds of respondents confident that datacentres will utilise these to simplify operations and drive efficiency.

The political uncertainty around Brexit continues to impact the sector, with 78% of respondents believing that it will create an increase in demand for UK-based datacentres. However, the overall feeling was that the fundamentals underpinning the demand for datacentre space, such as the continued proliferation of technology-led services, outweigh these concerns and the European datacentre market will overcome any difficulties that occur.

Commenting on the report, James Hart, CEO at BCS, said: “As always this report makes for fascinating reading and I was encouraged by the overwhelming positive sentiment to forecast growth and the limited impact of Brexit. The fact that half of our respondents believe that edge computing will be the biggest driver of new datacentres tallies with our own convictions. We believe that the edge of the network will continue to be at the epicentre of innovation in the datacentre space and we are seeing a strong increase in the number of clients coming to us for help with the development of their edge strategy and rollouts.”

RiskIQ has released its annual “Evil Internet Minute” report today. The company tapped proprietary global intelligence and third-party research to analyse the volume of malicious activity on the internet, revealing that cybercriminals cost the global economy £2.3 million every minute last year, a total of £1.2 trillion.
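The per-minute and annual figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below is only a sanity check, and it assumes a 365-day year; the function and constant names are illustrative and do not come from the RiskIQ report.

```python
MINUTES_PER_YEAR = 60 * 24 * 365  # 525,600 minutes, assuming a non-leap year


def annual_total(per_minute: float) -> float:
    """Scale a per-minute figure up to a full (non-leap) year."""
    return per_minute * MINUTES_PER_YEAR


if __name__ == "__main__":
    loss_per_minute = 2_300_000  # pounds per minute, as quoted above
    total = annual_total(loss_per_minute)
    print(f"£{total / 1e12:.2f} trillion per year")  # prints "£1.21 trillion per year"
```

The result, roughly £1.21 trillion, is consistent with the rounded £1.2 trillion total quoted in the report.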

The data shows that in a single internet minute, £2,300,000 is lost to cybercrime. Top companies pay £20 per minute due to security breaches. Additional malicious activity includes:

"As the scale of the internet continues to proliferate, so does the threat landscape," said Lou Manousos, CEO of RiskIQ. "By compiling the vast numbers associated with cybercrime in the past year, we made the research more accessible by framing it in the context of an 'internet minute.' We are entering our third year defining the sheer scale of attacks that take place across the internet using the latest third-party research and our own global threat intelligence so that businesses can better understand what they're up against on the open web."

Tactics range from malvertising to phishing to supply chain attacks that target e-commerce, like the Magecart hacks that have increased by 20 percent in the last year. The motives of cybercriminals include monetary gain, large-scale reputational damage, political motivations, and espionage.

“Without greater awareness and an increased effort to implement necessary security controls, there will be more attacks using an ever-expanding range of technologies and strategies,” Manousos said. “With the recent explosion of web and browser-based threats, organisations should look to what can happen in a matter of minutes and evaluate their current security strategy. Businesses must realise that they are vulnerable beyond the firewall, all the way across the open internet.”

Every day, there are countless headlines in the world’s media documenting the damage of cyber attacks. It’s almost always the financial and reputational damage inflicted upon organisations that’s focused upon, rather than the human cost of these attacks.

In order to find out more about this, Barracuda Networks carried out a study of 660 IT security professionals in organisations ranging from 100 to more than 5,000 employees across the globe. Of those, almost 20% of responses (124) came from EMEA.

The results make worrying reading for businesses, suggesting that employee productivity is under considerable threat from email security attacks. At a time when organisations are looking to maximise budgets and resources, we discovered that 40% of IT professionals in EMEA consider email security attacks to have a negative impact on employee productivity.

The human element

According to the survey, EMEA IT teams receive more suspicious emails than the global average, with 7% receiving over 50 per day and a third (32%) receiving between six and 50 per day.

Although 44% of respondents agreed that very few (less than 10%) of the suspicious emails reported turn out to be fraudulent, the time taken to identify and respond to email reports on this scale is taking its toll on IT teams’ productivity. So much so that the vast majority (81%) admitted spending over 30 minutes investigating and remediating each email attack, while 47% spend over an hour per attack.

It’s clear email attack management has created a significant overhead for EMEA organisations. Without the correct automated incident response tools in place to alleviate the stress and complexity of email attacks, the manual investigation and resolution time can only be expected to rise.

A rising concern outside of the workplace

Outside of the office, these attacks are also affecting the well-being of IT professionals at home. Over a third (38%) blame email attacks for increased stress at work, with senior IT leaders most likely to suffer this impact.

The same number (38%) admit to worrying about email attacks outside of work hours with 16% having to cancel personal plans due to email attacks. Additional stress comes from the potential reputation damage that comes from successful attacks, which 32% admit is a concern.

This stress is also reinforced by respondents’ lack of faith in their organisation’s security. Over half (52%) of EMEA respondents claim that their organisation’s security is unlikely to have improved in the last year, compared to the global average of 63%. The global results also identified EMEA as the region most likely to fall victim to spear phishing attacks, with 48% of EMEA organisations falling victim to spear phishing in the past twelve months.

The results found that the impact of spear phishing attacks on the reputation of organisations in EMEA is much higher compared to other regions; 39% of EMEA respondents reported damage to their reputation over the past year, compared to the global average of 27%.

Combine this with the fact that EMEA respondents believe their investment is lagging behind the rest of the world when it comes to dedicated spear phishing and automated incident response and we begin to see the worrying spot EMEA IT teams find themselves in.

But why is this the case, especially since IT professionals know that successful security requires a combination of innovative technology and effective training?

A lagging region

Firstly, EMEA budgets are increasing at a much slower rate than the rest of the world. This could be attributed to the pattern of spending on email security, which shows that over half (54%) of EMEA organisations have not changed their spending over the past year, versus a global average of 45%, while only 39% have increased their spending (compared to 48% worldwide).

In addition to this, most organisations are also lacking correct security awareness training, with almost a quarter (23%) of EMEA respondents admitting that they have never received email attack training, compared to the global average of 17%. Less than a quarter (21%) of EMEA respondents had received sufficient email security training in the past three months. For context, in the US this number was almost double, at 39%.

Across the board EMEA IT security teams are lagging behind their global peers when it comes to email security, which is having a direct impact on the productivity and stress of their employees. Be it the right tools, the right training or more, it’s clear EMEA organisations have far to go to bridge the gap and turn their employees into an effective line of defence as part of a wider holistic email protection strategy.

Link11, a leader in cloud-based anti-DDoS protection, has published its DDoS statistics for Q2 2019. The data shows that the quarter saw a massive 97% year-on-year increase in average attack bandwidth, up from 3.3Gbps in Q2 2018 to 6.6Gbps in Q2 2019.

These attacks are easily capable of overloading many companies’ broadband connections. There are several DDoS-for-hire services offering attacks between 10 and 100 Gbps for a modest fee. Currently, one DDoS provider is offering free DDoS attacks of up to 200 Mbps bandwidth for a duration of five minutes.

The maximum attack volumes seen by Link11 between April and June 2019 also increased by 25% year-on-year, to 195Gbps from 156Gbps in Q2 2018. In addition, 19 more high-volume attacks with bandwidths over 100 Gbps were registered in Q2 2019.
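The year-on-year figures quoted above follow from a simple percentage-change calculation. The sketch below is illustrative only and uses the rounded bandwidth values given in the text; the function and variable names are assumptions made for this example. With the rounded averages (3.3 and 6.6 Gbps) the result comes out at roughly 100%, so the published 97% presumably reflects unrounded underlying data.

```python
def yoy_change_pct(previous: float, current: float) -> float:
    """Return the year-on-year change from previous to current, in percent."""
    return (current - previous) / previous * 100.0


# Rounded figures quoted in the text, in Gbps (Q2 2018 -> Q2 2019).
avg_q2_2018, avg_q2_2019 = 3.3, 6.6
max_q2_2018, max_q2_2019 = 156.0, 195.0

avg_growth = yoy_change_pct(avg_q2_2018, avg_q2_2019)
max_growth = yoy_change_pct(max_q2_2018, max_q2_2019)

print(f"Average attack bandwidth: {avg_growth:.0f}% increase")
print(f"Maximum attack volume:    {max_growth:.0f}% increase")
```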

Rolf Gierhard, Vice President Marketing at Link11 said: "Too many companies still have the wrong idea when it comes to the threat posed by DDoS attacks. Our data shows that the gap between attack volumes, and the capability of corporate IT infrastructures to withstand them, is widening from quarter to quarter. Given the scale of the threat that organizations are facing, and the fact that the attacks are deliberately aimed at causing maximum disruption, it’s clear that businesses need to deploy advanced techniques to protect themselves against DDoS exploits."

Increasing complexity of attacks

Multi-vector attacks posed an additional threat in Q2 2019, with a significant increase in complex attack patterns. The proportion of multi-vector attacks grew from 45% in Q2 2018 to 63% in the second quarter of 2019. Attackers most frequently combined three vectors (47%), followed by two vectors (35%) and four vectors (15%). The maximum number of attack vectors seen was seven.

Further findings from Link11’s Q2 DDoS statistics include:

IBM Security has published the results of its annual study examining the financial impact of data breaches on organizations.

According to the report, the cost of a data breach has risen 12% over the past 5 years and now stands at $3.92 million on average. These rising expenses are representative of the multiyear financial impact of breaches, increased regulation and the complex process of resolving criminal attacks.

The financial consequences of a data breach can be particularly acute for small and midsize businesses. In the study, companies with fewer than 500 employees suffered losses of more than $2.5 million on average – a potentially crippling amount for small businesses, which typically earn $50 million or less in annual revenue.

For the first time this year, the report also examined the longtail financial impact of a data breach, finding that the effects of a data breach are felt for years. While an average of 67% of data breach costs were realized within the first year after a breach, 22% accrued in the second year and another 11% accumulated more than two years after a breach. The longtail costs were higher in the second and third years for organizations in highly-regulated environments, such as healthcare, financial services, energy and pharmaceuticals.
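To give a feel for what the longtail split means in money terms, the sketch below applies the three percentages to the report's $3.92 million average. This is an illustration only: the report does not publish these per-period dollar amounts, and the names used here are assumptions for the example.

```python
AVERAGE_BREACH_COST_USD_M = 3.92  # average total cost quoted in the report

# Share of total breach cost by period after the breach, as quoted in the text.
COST_SHARE_BY_PERIOD = {
    "first year": 0.67,
    "second year": 0.22,
    "after two years": 0.11,
}


def split_cost(total: float, shares: dict) -> dict:
    """Allocate a total cost across periods according to the given shares."""
    return {period: round(total * share, 2) for period, share in shares.items()}


longtail = split_cost(AVERAGE_BREACH_COST_USD_M, COST_SHARE_BY_PERIOD)

for period, cost in longtail.items():
    print(f"{period:<16} ${cost:.2f} million")
```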

"Cybercrime represents big money for cybercriminals, and unfortunately that equates to significant losses for businesses," said Wendi Whitmore, Global Lead for IBM X-Force Incident Response and Intelligence Services. "With organizations facing the loss or theft of over 11.7 billion records in the past 3 years alone, companies need to be aware of the full financial impact that a data breach can have on their bottom line –and focus on how they can reduce these costs."

Sponsored by IBM Security and conducted by the Ponemon Institute, the annual Cost of a Data Breach Report is based on in-depth interviews with more than 500 companies around the world that suffered a breach over the past year. The analysis takes into account hundreds of cost factors, ranging from legal, regulatory and technical activities to loss of brand equity, customers, and employee productivity. Some of the top findings from this year's report include:

Malicious Breaches Pose a Growing Threat; Accidental Breaches Still Common

The study found that data breaches which originated from a malicious cyberattack were not only the most common root cause of a breach, but also the most expensive.

Malicious data breaches cost companies in the study $4.45 million on average – over $1 million more than those originating from accidental causes such as system glitches and human error. These breaches are a growing threat, as the percentage of malicious or criminal attacks as the root cause of data breaches in the report crept up from 42% to 51% over the past six years of the study (a 21% increase).

That said, inadvertent breaches from human error and system glitches were still the cause for nearly half (49%) of the data breaches in the report, costing companies $3.50 and $3.24 million respectively. These breaches from human and machine error represent an opportunity for improvement, which can be addressed through security awareness training for staff, technology investments, and testing services to identify accidental breaches early on. One particular area of concern is the misconfiguration of cloud servers, which contributed to the exposure of 990 million records in 2018, representing 43% of all lost records for the year according to the IBM X-Force Threat Intelligence Index.

Breach Response Remains Biggest Cost Saver

For the past 14 years, the Ponemon Institute has examined factors that increase or reduce the cost of a breach and has found that the speed and efficiency at which a company responds to a breach has a significant impact on the overall cost.

This year's report found that the average lifecycle of a breach was 279 days with companies taking 206 days to first identify a breach after it occurs and an additional 73 days to contain the breach. However, companies in the study who were able to detect and contain a breach in less than 200 days spent $1.2 million less on the total cost of a breach.

A focus on incident response can help reduce the time it takes companies to respond, and the study found that these measures also had a direct correlation with overall costs. Having an incident response team in place and extensive testing of incident response plans were two of the top three greatest cost saving factors examined in the study. Companies that had both of these measures in place had $1.23 million less total costs for a data breach on average than those that had neither measure in place ($3.51 million vs. $4.74 million).

Additional factors impacting the cost of a breach for companies in the study included:

Regional and Industry Trends

The study also examined the cost of data breaches in different industries and regions, finding that data breaches in the U.S. are vastly more expensive – costing $8.19 million, or more than double the average for worldwide companies in the study. Costs for data breaches in the U.S. increased by 130% over the past 14 years of the study; up from $3.54 million in the 2006 study.

Additionally, organizations in the Middle East reported the highest average number of breached records, with nearly 40,000 breached records per incident (compared to a global average of around 25,500).

For the 9th year in a row, healthcare organizations in the study had the highest costs associated with data breaches. The average cost of a breach in the healthcare industry was nearly $6.5 million – over 60% higher than the cross-industry average.

According to a new global survey from CyberArk, 50 percent of organizations believe attackers can infiltrate their networks each time they try. As organizations increase investments in automation and agility, a general lack of awareness about the existence of privileged credentials – across DevOps, robotic process automation (RPA) and in the cloud – is compounding risk.

According to the CyberArk Global Advanced Threat Landscape 2019 Report, less than half of organizations have a privileged access security strategy in place for DevOps, IoT, RPA and other technologies that are foundational to digital initiatives. This creates a perfect opportunity for attackers to exploit legitimate privileged access to move laterally across a network to conduct reconnaissance and progress their mission.

Preventing this lateral movement is a key reason why organizations are mapping security investments against key mitigation points along the cyber kill chain, with 28 percent of total planned security spend in the next two years to focus on stopping privilege escalation and lateral movement.

Proactive investments to reduce risk are critical given what this year’s survey respondents cite as their top threats:

Security Barriers to Digital Transformation and the Privilege Priority

The survey found that while organizations view privileged access security as a core component of an effective cybersecurity program, this understanding has not yet translated to action for protecting foundational digital transformation technologies.

“Organizations are showing increasing understanding of the importance of mitigation along the cyber kill chain and why preventing credential creep and lateral movement is critical to security,” said Adam Bosnian, executive vice president, global business development, CyberArk. “But this awareness must extend to consistently implementing proactive cybersecurity strategies across all modern infrastructure and applications, specifically reducing privilege-related risk in order to recognize tangible business value from digital transformation initiatives.”

Global Compliance Readiness

According to the survey, a surprising 41 percent of organizations would be willing to pay fines for non-compliance with major regulations, but would not change security policies even after experiencing a successful cyber attack. On the heels of more than $300M in General Data Protection Regulation (GDPR) fines being levied on global organizations for data breaches, this mindset is not sustainable.

The survey also examined the impact of major regulations around the world:

DataStax has published results from an IT Architecture Modernization Trends survey, showing that 99% of IT execs report challenges with architecture modernization and 98% report challenges with their corporate data architectures (data silos). Vendor lock-in (95%) was of particular concern among respondents.

The survey, conducted in conjunction with Dimensional Research and DataStax, takes the pulse of IT architecture modernization trends by investigating current experiences with and plans to reduce complexity and cost around architecture modernization. Respondents included more than 300 executives who work for companies of more than 5,000 employees.

The resulting report provided a number of key insights, including:

The report dives into all the key findings above, showing exactly how all respondents answered the questions and detailing the key drivers and challenges behind their architecture modernization efforts.

“What this report makes clear is that data is certainly the hardest part of architecture modernization,” said DataStax SVP and Chief Product Officer Robin Schumacher. “While the cloud makes so many things around architectures much easier, it also creates additional data-related challenges. DataStax helps enterprises face those challenges so that architecture modernization goes from a daunting task to one that makes it easier for them to out-innovate their competition.”

Organizations are concerned about their ability to keep up with a rapidly changing business landscape, driven in part by concerns about their own organizations’ lagging and misconceived digitalization strategies, according to Gartner, Inc.’s latest Emerging Risks Monitor Report.

In the second quarter of 2019, Gartner surveyed 133 senior executives across industries and geographies, and the results showed that “pace of change” had emerged as the top emerging risk in the 2Q19 Emerging Risk Monitor survey (see Table 1). Last quarter’s top emerging risk, “accelerating privacy regulation,” has now become an established risk after ranking on four previous emerging risk reports.

Closely linked to the concern around pace of change are two operational risks, including “lagging digitalization” and “digitalization misconceptions,” which Gartner experts said may be partly driving the top concern around pace of change and related threats from business model disruption.

“Among the top five emerging risks in the quarter’s survey, the linkages are clear,” said Matt Shinkman, managing vice president and risk practice leader in the Gartner audit and risk practice. “Organizations are concerned with the pace of business change and vulnerability to disruption. Part of the reason they may feel this risk so acutely is related concerns around their own operations, including digitalization strategies and an inadequate talent pipeline.”

Table 1. Top Five Risks by Overall Risk Score: 3Q18-2Q19

| Rank | 3Q18 | 4Q18 | 1Q19 | 2Q19 |
| --- | --- | --- | --- | --- |
| 1 | Accelerating Privacy Regulation | Talent Shortage | Accelerating Privacy Regulation | Pace of Change |
| 2 | Cloud Computing | Accelerating Privacy Regulation | Pace of Change | Lagging Digitalization |
| 3 | Talent Shortage | Pace of Change | Talent Shortage | Talent Shortage |
| 4 | Cyber Security Disclosure | Lagging Digitalization | Lagging Digitalization | Digitalization Misconceptions |
| 5 | Artificial Intelligence (AI)/Robotics Skill Gap | Digitalization Misconceptions | Digitalization Misconceptions | Data Localization |

Source: Gartner (July 2019)

Seventy-one percent of respondents indicated that pace of change was a key risk facing their organizations. The concern was consistent across industries and particularly strong in healthcare, insurance and industrials, where 70% or more of executives named pace of change as a top emerging risk.

The concern around pace of change is driven by fears of being disrupted by nimbler competitors and a lack of clear avenues for growth. This risk can materialize through a rise in the number of new, disruptive competitors, a failure of the brand proposition to meet client needs or demands and executives not responding to macro trends and changing consumer needs.

Risk leaders have a role to play by inserting themselves early in the strategic planning process and working across functions, collaborating with strategy and finance teams to encourage positive risk taking, such as transformative changes to the business.

“Although the pace of business change is the top concern among organizations, we see a lack of tangible action among many organizations to address it,” said Mr. Shinkman. “Twenty-four percent of organizations report no action to address the impact of the pace of change, while only 28% are elevating this risk to the board.”

Digitalization Concerns Increase Vulnerabilities

Other emerging risks that may be contributing to executives’ concerns around pace of change are related to digitalization:

Worldwide IaaS Public Cloud Services market grew by over 30 percent in 2018

The worldwide infrastructure as a service (IaaS) market grew 31.3% in 2018 to total $32.4 billion, up from $24.7 billion in 2017, according to Gartner, Inc. Amazon was once again the No. 1 vendor in the IaaS market in 2018, followed by Microsoft, Alibaba, Google and IBM.

"Despite strong growth across the board, the cloud market’s consolidation favors the large and dominant providers, with smaller and niche providers losing share,” said Sid Nag, research vice president at Gartner. “This is an indication that scalability matters when it comes to the public cloud IaaS business. Only those providers who invest capital expenditure in building out data centers at scale across multiple regions will succeed and continue to capture market share. Offering rich feature functionality across the cloud technology stack will be the ticket to success, as well.”

In 2018, the top five IaaS providers accounted for nearly 77% of the global IaaS market, up from less than 73% in 2017. Market consolidation will continue through 2019, driven by the high rate of growth for the top providers, which experienced aggregate growth of 39% from 2017 to 2018 compared with the more modest growth of 11% for all other providers during the same period. “Consolidation will occur as organizations and developers look for standardized, broadly supported platforms for developing and hosting cloud applications,” said Mr. Nag.

Amazon continued to lead the worldwide IaaS market with an estimated $15.5 billion of revenue in 2018, up 27% from 2017 (see Table 1). The largest of the IaaS providers, Amazon accounts for nearly half of the total IaaS market. It continues to aggressively expand into new IT markets via new services, as well as acquisitions, growing its core cloud business.

Table 1.

Worldwide IaaS Public Cloud Services Market Share, 2017-2018 (Millions of U.S. Dollars)

| Vendor | 2018 Revenue | 2018 Market Share (%) | 2017 Revenue | 2017 Market Share (%) | 2018-2017 Growth (%) |
| --- | --- | --- | --- | --- | --- |
| Amazon | 15,495 | 47.8 | 12,221 | 49.4 | 26.8 |
| Microsoft | 5,038 | 15.5 | 3,130 | 12.7 | 60.9 |
| Alibaba | 2,499 | 7.7 | 1,298 | 5.3 | 92.6 |
| Google | 1,314 | 4.0 | 820 | 3.3 | 60.2 |
| IBM | 577 | 1.8 | 463 | 1.9 | 24.7 |
| Others | 7,519 | 23.2 | 6,768 | 27.4 | 11.1 |
| Total | 32,441 | 100.0 | 24,699 | 100.0 | 31.3 |

Source: Gartner (July 2019)
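The market share and consolidation figures quoted in this section can be reproduced from the revenue columns of the table. The sketch below is illustrative rather than Gartner's own method; the names are assumptions for this example, the fourth-ranked vendor is taken to be Google as stated in the text, and because the published revenues are rounded, results may differ from the table by a tenth of a point.

```python
# Revenue in millions of U.S. dollars, copied from the table: (2018, 2017).
REVENUE = {
    "Amazon": (15_495, 12_221),
    "Microsoft": (5_038, 3_130),
    "Alibaba": (2_499, 1_298),
    "Google": (1_314, 820),
    "IBM": (577, 463),
    "Others": (7_519, 6_768),
}
TOTAL_2018, TOTAL_2017 = 32_441, 24_699  # published totals


def share_pct(revenue: float, total: float) -> float:
    """Market share of one vendor, in percent of the total."""
    return revenue / total * 100.0


def growth_pct(current: float, previous: float) -> float:
    """Growth from previous to current, in percent."""
    return (current - previous) / previous * 100.0


# Aggregate the five named vendors (everything except "Others").
top5_2018 = sum(r18 for name, (r18, _) in REVENUE.items() if name != "Others")
top5_2017 = sum(r17 for name, (_, r17) in REVENUE.items() if name != "Others")

top5_share_2018 = share_pct(top5_2018, TOTAL_2018)
top5_share_2017 = share_pct(top5_2017, TOTAL_2017)
top5_growth = growth_pct(top5_2018, top5_2017)

for name, (r18, r17) in REVENUE.items():
    print(f"{name:<10} share {share_pct(r18, TOTAL_2018):5.1f}%  "
          f"growth {growth_pct(r18, r17):5.1f}%")
print(f"Top five share: {top5_share_2017:.1f}% (2017) -> {top5_share_2018:.1f}% (2018)")
print(f"Top five aggregate growth: {top5_growth:.0f}%")
```

The top-five share rises from under 73% to nearly 77%, and the aggregate growth of the five named vendors comes out at about 39%, against roughly 11% for all other providers.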

Microsoft secured the No. 2 position in the IaaS market with revenue surpassing $5 billion in 2018, up from $3.1 billion in 2017. Microsoft delivers its IaaS capabilities through its innovative and open Microsoft Azure offering, which continues to solidify its position as a leading IaaS provider.

The dominant IaaS provider in China, Alibaba Cloud, experienced the strongest growth among the leading vendors, growing 92.6% in 2018. The company has built an ecosystem consisting of managed service providers (MSPs) and independent software vendors (ISVs). Its success last year was driven by aggressive R&D investment in its portfolio of offerings, especially compared with its hyperscale provider counterparts. Alibaba has the financial capability to continue this trend and invest in global expansion.

Google came in at the No. 4 spot, growing 60.2% in revenue from 2017. “Google’s cloud offering is something to keep an eye on with its new leadership focus on customers and shift toward becoming a more enterprise-geared offering,” said Mr. Nag.

“As the cloud business continues to gather momentum and hyperscale cloud providers consolidate the market, product managers at cloud MSPs must look at other ways to differentiate, such as focusing on vertical industries and getting certified in the hyperscale cloud provider partner programs in order to drive revenue,” said Mr. Nag.

Wireless technology plays a key role in today’s communications, and new forms of it will become central to emerging technologies including robots, drones, self-driving vehicles and new medical devices over the next five years. Gartner, Inc. has identified the top 10 wireless technology trends for enterprise architecture (EA) and technology innovation leaders.

“Business and IT leaders need to be aware of these technologies and trends now,” said Nick Jones, distinguished research vice president at Gartner. “Many areas of wireless innovation will involve immature technologies, such as 5G and millimeter wave, and may require skills that organizations currently don’t possess. EA and technology innovation leaders seeking to drive innovation and technology transformation should identify and pilot innovative and emerging wireless technologies to determine their potential and create an adoption roadmap.”

The top 10 wireless technology trends are:

1. Wi-Fi

Wi-Fi has been around a long time and will remain the primary high-performance networking technology for homes and offices through 2024. Beyond simple communications, Wi-Fi will find new roles — for example, in radar systems or as a component in two-factor authentication systems.

2. 5G Cellular

5G cellular systems are starting to be deployed in 2019 and 2020. The complete rollout will take five to eight years. In some cases, the technology may supplement Wi-Fi, as it is more cost-effective for high-speed data networking in large sites, such as ports, airports and factories. “5G is still immature, and initially, most network operators will focus on selling high-speed broadband. However, the 5G standard is evolving and future iterations will improve 5G in areas such as the Internet of Things (IoT) and low-latency applications,” Mr. Jones added.

3. Vehicle-to-Everything (V2X) Wireless

Both conventional and self-driving cars will need to communicate with each other, as well as with road infrastructure. This will be enabled by V2X wireless systems. In addition to exchanging information and status data, V2X can provide a multitude of other services, such as safety capabilities, navigation support and infotainment.

“V2X will eventually become a legal requirement for all new vehicles. But even before this happens, we expect to see some vehicles incorporating the necessary protocols,” said Mr. Jones. “However, those V2X systems that use cellular will need a 5G network to achieve their full potential.”

4. Long-Range Wireless Power

First-generation wireless power systems have not delivered the revolutionary user experience that manufacturers had hoped for. In terms of the user experience, the need to place devices on a specific charger point is only slightly better than charging via cable. However, several new technologies can charge devices at ranges of up to one meter or over a table or desk surface.

“Long-range wireless power could eventually eliminate power cables from desktop devices such as laptops, monitors and even kitchen appliances. This will allow for completely new designs of work and living spaces,” Mr. Jones said.

5. Low-Power Wide-Area (LPWA) Networks

LPWA networks provide low-bandwidth connectivity for IoT applications in a power-efficient way to support things that need a long battery life. They typically cover very large areas, such as cities or even entire countries. Current LPWA technologies include Narrowband IoT (NB-IoT), Long Term Evolution for Machines (LTE-M), LoRa and Sigfox. The modules are relatively inexpensive, so IoT manufacturers can use them to enable small, low-cost, battery-powered devices such as sensors and trackers.

6. Wireless Sensing

The absorption and reflection of wireless signals can be used for sensing purposes. Wireless sensing technology can be used, for example, as an indoor radar system for robots and drones. Virtual assistants can also use radar tracking to improve their performance when multiple people are speaking in the same room.

“Sensor data is the fuel of the IoT. Accordingly, new sensor technologies enable innovative types of applications and services,” Mr. Jones said. “Systems including wireless sensing will be integrated in a multitude of use cases, ranging from medical diagnostics to object recognition and smart home interaction.”

7. Enhanced Wireless Location Tracking

A key trend in the wireless domain is for wireless communication systems to sense the locations of devices connected to them. High-precision tracking to around one-meter accuracy will be enabled by the forthcoming IEEE 802.11az standard and is intended to be a feature of future 5G standards.

“Location is a key data point needed in various business areas, such as consumer marketing, supply chain and the IoT. For example, high-precision location tracking is essential for applications involving indoor robots and drones,” said Mr. Jones.

8. Millimeter Wave Wireless

Millimeter wave wireless technology operates at frequencies in the range of 30 to 300 gigahertz, with wavelengths in the range of 1 to 10 millimeters. The technology can be used by wireless systems such as Wi-Fi and 5G for short-range, high-bandwidth communications (for example, 4K and 8K video streaming).
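The frequency and wavelength ranges quoted above are linked by the free-space relationship wavelength = c / frequency. The short sketch below checks the two endpoints; the function name is an assumption for this example, and the 60 GHz row is an extra illustrative value that does not come from the Gartner text.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter


def wavelength_mm(frequency_ghz: float) -> float:
    """Free-space wavelength in millimeters for a frequency in gigahertz."""
    frequency_hz = frequency_ghz * 1e9
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz * 1e3


# 30 and 300 GHz are the band edges from the text; 60 GHz is illustrative.
for f_ghz in (30, 60, 300):
    print(f"{f_ghz:>3} GHz -> {wavelength_mm(f_ghz):.2f} mm")
```

The band edges come out at just under 10 mm and just under 1 mm, which is where the "millimeter wave" name comes from.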

9. Backscatter Networking

Backscatter networking technology can send data with very low power consumption. This feature makes it ideal for small networked devices. It will be particularly important in applications where an area is already saturated with wireless signals and there is a need for relatively simple IoT devices, such as sensors in smart homes and offices.

10. Software-Defined Radio (SDR)

SDR shifts the majority of the signal processing in a radio system away from chips and into software. This enables the radio to support more frequencies and protocols. The technology has been available for many years, but has never taken off as it is more expensive than dedicated chips. However, Gartner expects SDR to grow in popularity as new protocols emerge. As older protocols are rarely retired, SDR will enable a device to support legacy protocols, with new protocols simply being enabled via software upgrade.

AI projects set to double

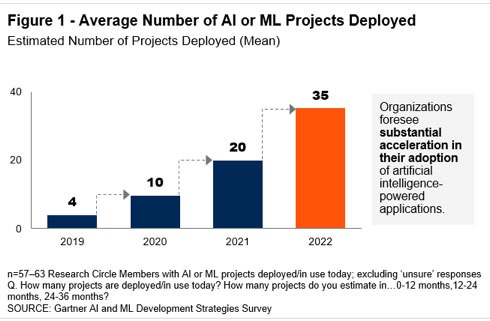

Organizations that are working with artificial intelligence (AI) or machine learning (ML) have, on average, four AI/ML projects in place, according to a recent survey by Gartner, Inc. Of all respondents, 59% said that they have AI deployed today.

The Gartner “AI and ML Development Strategies” study was conducted via an online survey in December 2018 with 106 Gartner Research Circle Members – a Gartner-managed panel composed of IT and IT/business professionals. Participants were required to be knowledgeable about the business and technology aspects of ML or AI either currently deployed or in planning at their organizations.

“We see a substantial acceleration in AI adoption this year,” said Jim Hare, research vice president at Gartner. “The rising number of AI projects means that organizations may need to reorganize internally to make sure that AI projects are properly staffed and funded. It is a best practice to establish an AI Center of Excellence to distribute skills, obtain funding, set priorities and share best practices in the best possible way.”

Today, the average number of AI projects in place is four, but respondents expect to add six more projects in the next 12 months, and another 15 within the next three years (see Figure 1). This means that in 2022, those organizations expect to have an average of 35 AI or ML projects in place.

Source: Gartner (July 2019)

Customer Experience (CX) and Task Automation Are Key Motivators

Forty percent of organizations named CX as their top motivator to use AI technology. While technologies such as chat bots or virtual personal assistants can be used to serve external clients, most organizations (56%) today use AI internally to support decision making and give recommendations to employees. “It is less about replacing human workers and more about augmenting and enabling them to make better decisions faster,” Mr. Hare said.

Automating tasks is the second most important project type — named by 20% of respondents as their top motivator. Examples of automation include tasks such as invoicing and contract validation in finance or automated screening and robotic interviews in HR.

The top challenges to adopting AI for respondents were a lack of skills (56%), understanding AI use cases (42%), and concerns with data scope or quality (34%). “Finding the right staff skills is a major concern whenever advanced technologies are involved,” said Mr. Hare. “Skill gaps can be addressed using service providers, partnering with universities, and establishing training programs for existing employees. However, establishing a solid data management foundation is not something that you can improvise. Reliable data quality is critical for delivering accurate insights, building trust and reducing bias. Data readiness must be a top concern for all AI projects.”

Measuring the Success of AI Projects

The survey showed that many organizations use efficiency as a target success measurement when they seek to measure a project’s merit. “Using efficiency targets as a way of showing value is more prevalent in organizations who say they are conservative or mainstream in their adoption profiles. Companies who say they’re aggressive in adoption strategies were much more likely instead to say they were seeking improvements in customer engagement,” said Whit Andrews, distinguished vice president, analyst at Gartner.

Worldwide IT spending is projected to total $3.74 trillion in 2019, an increase of 0.6% from 2018, according to the latest forecast by Gartner, Inc. This is slightly down from the previous quarter’s forecast of 1.1% growth.

“Despite uncertainty fueled by recession rumors, Brexit, trade wars and tariffs, we expect IT spending to remain flat in 2019,” said John-David Lovelock, research vice president at Gartner. “While there is great variation in growth rates at the country level, virtually all countries tracked by Gartner will see growth in 2019. Despite the ongoing tariff war, North America IT spending is forecast to grow 3.7% in 2019 and IT spending in China is expected to grow 2.8%.”

“Although an economic downturn is not the likely scenario for either 2019 or 2020, the risk is currently high enough to warrant preparation and planning. Technology general managers and product managers should plan out product mix and operational models that will optimally position product portfolios in a downturn should one occur,” said Mr. Lovelock.

The enterprise software market will experience the strongest growth in 2019, reaching $457 billion, up 9% from $419 billion in 2018 (see Table 1). CIOs are continuing to rebalance their technology portfolios, shifting investments from on-premises to off-premises capabilities.

Table 1. Worldwide IT Spending Forecast (Billions of U.S. Dollars)

| Segment | 2018 Spending | 2018 Growth (%) | 2019 Spending | 2019 Growth (%) | 2020 Spending | 2020 Growth (%) |
| --- | --- | --- | --- | --- | --- | --- |
| Data Center Systems | 210 | 15.7 | 203 | -3.5 | 208 | 2.8 |
| Enterprise Software | 419 | 13.5 | 457 | 9.0 | 507 | 10.9 |
| Devices | 712 | 5.9 | 682 | -4.3 | 688 | 0.8 |
| IT Services | 993 | 6.7 | 1,031 | 3.8 | 1,088 | 5.5 |
| Communications Services | 1,380 | -0.1 | 1,365 | -1.0 | 1,386 | 1.5 |
| Overall IT | 3,716 | 5.1 | 3,740 | 0.6 | 3,878 | 3.7 |

Source: Gartner (July 2019)

As cloud becomes increasingly mainstream over the next few years, it will influence ever-greater portions of enterprise IT decisions, in particular system infrastructure. Prior to 2018, more of the cloud opportunity had been in application software and business process outsourcing. Over this forecast period it will expand to cover additional application software segments, including office suites, content services and collaboration services. “Spending in old technology segments, like data center, will only continue to be dropped,” said Mr. Lovelock.

Globally, consumer spending as a percentage of total spend is dropping every year in every region due to saturation and commoditization, especially with PCs, laptops and tablets. Cloud applications allow these devices to have an extended life, with less powerful equipment needed to run new software. This is why the devices market will experience the strongest decline in 2019, down 4.3% to $682 billion.

“There are hardly any ‘new’ buyers in the devices market, meaning that the market is now being driven by replacements and upgrades,” said Mr. Lovelock. “Add in their extended lifetimes along with the introduction of smart home technologies and IoT, and consumer technology spending only continues to drop.”

A recent International Data Corporation (IDC) survey of global organizations that are already using artificial intelligence (AI) solutions found only 25% have developed an enterprise-wide AI strategy. At the same time, half the organizations surveyed see AI as a priority and two thirds are emphasizing an "AI First" culture.

"Organizations that embrace AI will drive better customer engagements and have accelerated rates of innovation, higher competitiveness, higher margins, and productive employees. Organizations worldwide must evaluate their vision and transform their people, processes, technology, and data readiness to unleash the power of AI and thrive in the digital era," said Ritu Jyoti, program vice president, Artificial Intelligence Strategies.

The primary drivers behind these organizations' AI initiatives were to improve productivity, business agility, and customer satisfaction via automation. Faster time to market with new products and services was another leading reason for implementing AI. The cost of AI solutions, a lack of skilled personnel, and bias in the data were identified as the primary factors holding back the implementation of AI technology in these organizations.

Other key findings from the survey include:

"For many organizations, the rapid rise of digital transformation has pushed AI to the top of the corporate agenda. However, as AI accelerates toward the mainstream, organizations will need to have an effective AI strategy aligned with business goals and innovative business models to thrive in the digital era," noted Jyoti.

Spending on customer experience (CX) was reported at $97 billion in 2018 and is expected to increase to $128 billion by 2022, growing at a healthy 7% five-year CAGR, according to the International Data Corporation (IDC) Worldwide Semiannual Customer Experience Spending Guide. The European industries spending the most on CX in 2019 will be banking, retail, and discrete manufacturing. Together, these verticals will absorb 33% of the European CX spend this year. Retail will also have the fastest growing spend on CX through 2022, outgrowing banking by 2021.
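
The compound annual growth rate (CAGR) quoted above can be recovered from the two endpoint figures. This is a small Python sketch under stated assumptions: the helper `implied_cagr` is hypothetical, and it treats 2018 to 2022 as four compounding intervals, which is one common reading of a five-year (2018-2022 inclusive) forecast window.

```python
def implied_cagr(start: float, end: float, periods: int) -> float:
    """Return the constant annual growth rate that turns start into end."""
    return (end / start) ** (1.0 / periods) - 1.0


# CX spending endpoints from the text, in billions of U.S. dollars.
cx_2018 = 97.0
cx_2022 = 128.0

# Assumption: four compounding intervals between 2018 and 2022.
cx_cagr = implied_cagr(cx_2018, cx_2022, 2022 - 2018)
print(f"Implied CX CAGR: {cx_cagr:.1%}")
```

Under that assumption the implied rate comes out at a little over 7%, in line with the reported figure.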

Customer care and support, digital marketing, and order fulfillment are the use cases with the highest spending in CX today and will continue to be a strong investment area through 2022. Investing in CX represents a clear opportunity for industries to differentiate, implementing these use cases to mold a public brand perception around the customer, improving websites, social media interactions, and product and service promotions. Looking at long-term opportunities, omni-channel content will be the fastest growing CX use case by 2022. European companies are focusing on this space to build organizational experience delivery competency, leveraging investments in content and experience design to lower the cost of supporting new channels and ensure brand consistency. Omni-channel content reflects the core foundation of the future of CX through the optimization of content across channels at every point in the customer journey, creating a non-linear experience around the user.

Emerging technologies (such as AI, IoT, and AR/VR) and hyper-micro personalization are fueling investments in CX together with rising customer expectations, intensified competition, ever-changing customer behaviors, and stronger demand for personalization. The innovations in CX are about centering the experience of a product or service around the user, approaching each customer as an individual in real time and moving away from segment-based approaches to customer engagement.

"Customer Experience is the top business priority for European companies in 2019," said Andrea Minonne, senior research analyst, IDC Customer Insight & Analysis in Europe. "Businesses are moving from traditional ways of reaching out to customers and are embracing more digitized and personalized approaches to delivering empathy where the focus is on constantly learning from customers. As a customer-facing industry, retail spend on CX is moving fast as retailers have fully understood how important it is to embed CX in their business strategy."

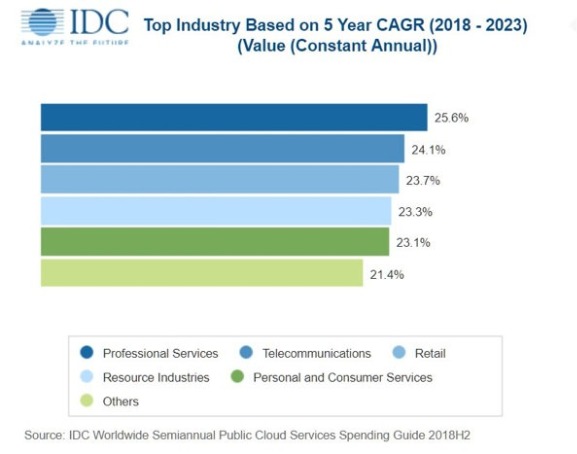

Worldwide spending on public cloud services and infrastructure will more than double over the 2019-2023 forecast period, according to the latest update to the International Data Corporation (IDC) Worldwide Semiannual Public Cloud Services Spending Guide. With a five-year compound annual growth rate (CAGR) of 22.3%, public cloud spending will grow from $229 billion in 2019 to nearly $500 billion in 2023.

"Adoption of public (shared) cloud services continues to grow rapidly as enterprises, especially in professional services, telecommunications, and retail, continue to shift from traditional application software to software as a service (SaaS) and from traditional infrastructure to infrastructure as a service (IaaS) to empower customer experience and operational-led digital transformation (DX) initiatives," said Eileen Smith, program director, Customer Insights and Analysis.

Software as a Service (SaaS) will be the largest category of cloud computing, capturing more than half of all public cloud spending throughout the forecast. SaaS spending, which comprises applications and system infrastructure software (SIS), will be dominated by applications purchases. The leading SaaS applications will be customer relationship management (CRM) and enterprise resource management (ERM). SIS spending will be led by purchases of security software and system and service management software.

Infrastructure as a Service (IaaS) will be the second largest category of public cloud spending throughout the forecast, followed by Platform as a Service (PaaS). IaaS spending, comprised of servers and storage devices, will also be the fastest growing category of cloud spending with a five-year CAGR of 32.0%. PaaS spending will grow nearly as fast (29.9% CAGR) led by purchases of data management software, application platforms, and integration and orchestration middleware.

Three industries – professional services, discrete manufacturing, and banking – will account for more than one third of all public cloud services spending throughout the forecast. While SaaS will be the leading category of investment for all industries, IaaS will see its share of spending increase significantly for industries that are building data and compute intensive services. For example, IaaS spending will represent more than 40% of public cloud services spending by the professional services industry in 2023 compared to less than 30% for most other industries. Professional services will also see the fastest growth in public cloud spending with a five-year CAGR of 25.6%.

On a geographic basis, the United States will be the largest public cloud services market, accounting for more than half the worldwide total through 2023. Western Europe will be the second largest market with nearly 20% of the worldwide total. China will experience the fastest growth in public cloud services spending over the five-year forecast period with a 49.1% CAGR. Latin America will also deliver strong public cloud spending growth with a 38.3% CAGR.

Very large businesses (more than 1000 employees) will account for more than half of all public cloud spending throughout the forecast, while medium-size businesses (100-499 employees) will deliver around 16% of the worldwide total. Small businesses (10-99 employees) will trail large businesses (500-999 employees) by a few percentage points while the spending share from small offices (1-9 employees) will be in the low single digits. All the company size categories except for very large businesses will experience spending growth greater than the overall market.

SD-WAN market to reach $5.25 billion in 2023

International Data Corporation (IDC) has published two new research reports on the fast-growing Software Defined Wide Area Network (SD-WAN) infrastructure market. This important segment of the enterprise networking market will grow at a 30.8% compound annual growth rate (CAGR) from 2018 to 2023 to reach $5.25 billion, according to IDC's SD-WAN Infrastructure Forecast. The IDC Market Shares report includes 2017 and 2018 revenues by vendor for SD-WAN infrastructure.

"SD-WAN continues to be one of the fastest-growing segments of the network infrastructure market, driven by a variety of factors. First, traditional enterprise WANs are increasingly not meeting the needs of today's modern digital businesses, especially as it relates to supporting SaaS apps and multi- and hybrid-cloud usage. Second, enterprises are interested in easier management of multiple connection types across their WAN to improve application performance and end-user experience," said Rohit Mehra, vice president, Network Infrastructure. "Combined with the rapid embrace of SD-WAN by leading communications service providers globally, these trends continue to drive deployments of SD-WAN, providing enterprises with dynamic management of hybrid WAN connections and the ability to guarantee high levels of quality of service on a per-application basis."

The SD-WAN infrastructure market continues to be highly competitive with sales increasing 64.9% in 2018 to $1.37 billion. Incumbent networking vendors have leveraged their technological strengths and installed bases in routing and WAN optimization sales to lead the market, while numerous start-ups remain active. IDC finds that Cisco holds the largest share of the SD-WAN infrastructure market, fueled by its extensive routing portfolio that is used in SD-WAN deployments, as well as its Meraki portfolio and its SD-WAN management platform powered by technology it acquired from Viptela in August 2017. VMware, with its SD-WAN service powered by VeloCloud (which VMware acquired in December 2017), holds the second largest market share in the SD-WAN infrastructure market, followed by Silver Peak, Nokia-Nuage, and Riverbed.
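
The 2023 forecast follows from the 2018 sales figure and the stated growth rate. A minimal Python sketch, assuming steady compounding from 2018 to 2023 (the helper `project` is illustrative, not IDC's forecasting model):

```python
def project(base: float, cagr: float, years: int) -> float:
    """Compound a base value forward at a constant annual growth rate."""
    return base * (1.0 + cagr) ** years


# Figures from the text: 2018 sales in billions of U.S. dollars and the CAGR.
sdwan_2018 = 1.37
sdwan_cagr = 0.308

# Five compounding intervals take 2018 sales to the 2023 forecast year.
sdwan_2023 = project(sdwan_2018, sdwan_cagr, 2023 - 2018)
print(f"Projected 2023 SD-WAN market: ${sdwan_2023:.2f} billion")
```

Compounding $1.37 billion at 30.8% for five years gives a value within rounding distance of the $5.25 billion forecast.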

(This issue of Digitalisation World includes a major focus on Software-Defined Networking.)

The value of Europe's managed services contracts in the second quarter exceeded €2.7bn (£2.4bn) for the second quarter running, indicating a potential return to pre-2015 spending levels, according to the latest state-of-the-industry report from US-based researcher Information Services Group.

The research is based on the EMEA ISG Index, which measures commercial outsourcing contracts with annual contract value (ACV) of €4m or more. It shows the region's combined first-half ACV, including both managed services and as-a-service contracts, was up 12%, to €8.9bn.

Managed services, with two straight quarters exceeding €2.7bn in ACV, reached €5.7bn in the first half, up 10% against the softer 2018 period. Within managed services, IT outsourcing (ITO) rose 12%, to €4.7bn, while business process outsourcing (BPO) was up 1%, to €1.0bn. The second quarter was the third quarter in the last five that managed services ACV exceeded €2.7bn.

With continuing strong demand for cloud-based solutions, as-a-service ACV climbed 17%, to €3.2bn, in the half. Infrastructure-as-a-Service (IaaS) surged 19%, to €2.4bn, while Software-as-a-Service (SaaS) rose 9%, to €846m.

Managed services ACV, meanwhile, dropped 1% in the second quarter, to €2.9bn, but the number of contract awards reached 204, up 10% over the prior year. This may show that the managed services market is reaching further into the SMB sector, with consequently smaller deals.

Steve Hall, partner and president of ISG, said: "For the past couple of quarters, we've been bracing for the impact of some of the macro risks that could affect the global economy — Brexit, tariffs and trade wars. But the talk about overall market growth has turned surprisingly positive. There continue to be recession concerns in European markets, especially the UK and Germany, but overall technology spend remains robust. With the Brexit deadline pushed farther out once again, uncertainty has become the new normal for many businesses in the UK, and companies seem to be making strategic adjustments."

Globally, managed services ACV was down 3% in the second quarter against a very strong 2018 period. In uncertain times, managed services may be seen as offering an element of control, with the ability to scale both up and down.

ISG finds UK companies are continuing to exercise caution in their managed services investments, instead focusing spending on new technologies that will increase their agility and efficiency.

"Technology spending remains robust, and technology providers remain positive about tech spend in the near term," said Hall. "We are projecting 22% year-on-year revenue growth for the remainder of 2019 in the global as-a-service market. This takes into account a slightly more optimistic view of the SaaS segment and factors in some uncertainty in IaaS. In the managed services market, we also have an optimistic perspective and are raising our growth forecast to 3.5% through to the end of the year."

The Managed Services & Hosting Summits are firmly established as the leading managed services events for the channel. Now in its ninth year, the London Managed Services & Hosting Summit 2019 on September 19 aims to provide insights into how managed services continue to grow and change as customer demands push suppliers into a strategic advisory role, and as the pressures for compliance and resilience affect the business model at a time of limited resources. Managed service providers, other channels and their suppliers can evolve new business models and relationships, but are looking for advice and support as well as strategic business guidance.

The Managed Services & Hosting Summits feature conference session presentations by major industry speakers and a range of sessions exploring both technical and sales/business issues.