‘CIOs and other IT leaders need to be prepared to embrace the hybrid IT as the best platform for modern IT departments, but on a level that suits their own business, while finding the best approach to monitoring and managing its usage.’

Edge computing is the critical enabler of cloud-based applications and is the key to providing the fast processing speeds necessary to take advantage of internet of things (IoT) applications. There’s no denying the need for the cloud, but those businesses who restrict themselves to its capabilities could be wasting their investment, not to mention enduring detrimental inefficiencies.

Hybrid ultimately introduces complexity, and complexity impacts people, process and technology in different but equally detrimental ways.

The ability to collect and analyse data at the edge fundamentally changes the way IoT can be leveraged – and provides the opportunity for IoT deployments to, finally, realise their business goals.

Edge computing design requires several new considerations. These include: low computing footprint, low power footprint, AI workloads, reliable and predictable networking, distributed compute and storage.

Moving data and applications to the edges of a network narrows the distance between users and data, resulting in improved speed, reliability and efficiency. This allows data centres to generate, process and analyse large volumes of data in a fast, stable and consistent manner, while businesses are able to maintain operational run-time effectively.

The quotes above, taken from some of the contributions to our major edge/Hybrid IT focus in this issue of Digitalisation World, provide a neat cross-section of the reasons why, and the ways in which, traditional data centre and IT infrastructure is having to adapt and co-exist with newer technologies and topologies. As I’ve written before, ‘hybrid’ is the single most important adjective in the technology world right now, whether it’s hybrid data centre infrastructure – in-house, colo, hosting/cloud; centralised, regional and local/edge facilities; hybrid IT infrastructure – in-house, colo, cloud and managed services; or hybrid clouds – private, public, with multi-cloud being the latest trend.

The driving force behind the hybrid world, and the emergence of the edge/edge computing, is the ongoing quest to optimise provider/customer interaction, by providing the best possible service, wherever, whenever and however the customer demands. And here we have another hybrid world, where there’s a gradual blurring of the lines between the world of work and leisure (I would say downtime, but that might be confusing!). Increasingly, user experiences and service as delivered into our homes are being sought after in the work place. If we can download and start using an application at home in a matter of seconds or minutes, why should we have to wait days, weeks or even months to be able to access a new application at work?

Dynamic, scalable, flexible, always available and real time are the catchwords that are becoming the everyday currency of the digital world. And the edge, much talked about and now being implemented, is an important part of the infrastructure change required to deliver optimised performance levels to the customer. It’s not the be-all and end-all, but just one of the newest tools in the Hybrid IT box.

Hopefully, the content of this issue of Digitalisation World will give readers more understanding of how the IT world is evolving and, crucially, how it can make a difference to any business.

Happy reading!

Successful businesses will take advantage of a new set of technologies but prioritize trust, responsibility, privacy and security.

The enterprise is entering a new “post-digital” era, where success will be based on an organization’s ability to master a set of new technologies that can deliver personalized realities and experiences for customers, employees and business partners, according to Accenture Technology Vision 2019, the annual report from Accenture that predicts key technology trends that will redefine businesses over the next three years.

According to this year’s report, “The Post-Digital Era is Upon Us – Are You Ready for What’s Next?,” the enterprise is at a turning point. Digital technologies enable companies to understand their customers with a new depth of granularity; give them more channels with which to reach those consumers; and enable them to expand ecosystems with new potential partners. But digital is no longer a differentiating advantage ― it’s now the price of admission.

In fact, nearly four in five (79 percent) of the more than 6,600 business and IT executives worldwide that Accenture surveyed for the report believe that digital technologies (specifically social, mobile, analytics and cloud) have moved beyond adoption silos to become part of the core technology foundation for their organization.

“A post-digital world doesn’t mean that digital is over,” said Paul Daugherty, Accenture’s chief technology & innovation officer. “On the contrary ― we’re posing a new question: As all organizations develop their digital competency, what will set YOU apart? In this era, simply doing digital isn’t enough. Our Technology Vision highlights the ways in which organizations must use powerful new technologies to innovate in their business models and personalize experiences for their customers. At the same time, leaders must recognize that human values, such as trust and responsibility, are not just buzzwords but critical enablers of their success.”

The Technology Vision identifies five emerging technology trends that companies must address if they are to succeed in today’s rapidly evolving landscape:

·DARQ Power: Understanding the DNA of DARQ. The technologies of distributed ledgers, artificial intelligence, extended reality and quantum computing (DARQ) are catalysts for change, offering extraordinary new capabilities and enabling businesses to reimagine entire industries. When asked to rank which of these will have the greatest impact on their organization over the next three years, 41 percent of executives ranked AI number one, more than twice the number of any other DARQ technology.

·Get to Know Me: Unlock unique consumers and unique opportunities. Technology-driven interactions are creating an expanding technology identity for every consumer. This living foundation of knowledge will be key to understanding the next generation of consumers and for delivering rich, individualized, experience-based relationships. More than four in five executives (83 percent) said that digital demographics give their organizations a new way to identify market opportunities for unmet customer needs.

·Human+ Worker: Change your workplace or hinder your workforce. As workforces become “human+” — with each individual worker empowered by their skillsets and knowledge plus a new, growing set of capabilities made possible through technology — companies must support a new way of working in the post-digital age. More than two-thirds (71 percent) of executives believe that their employees are more digitally mature than their organization, resulting in a workforce “waiting” for the organization to catch up.

·Secure Us to Secure Me: Enterprises are not victims, they’re vectors. While ecosystem-driven business depends on interconnectedness, those connections increase companies’ exposures to risks. Leading businesses recognize that security must play a key role in their efforts as they collaborate with entire ecosystems to deliver best-in-class products, services and experiences. Only 29 percent of executives said they know their ecosystem partners are working diligently to be compliant and resilient with regard to security.

·MyMarkets: Meet consumers at the speed of now. Technology is creating a world of intensely customized and on-demand experiences, and companies must reinvent their organizations to find and capture those opportunities. That means viewing each opportunity as if it’s an individual market—a momentary market. Six in seven executives (85 percent) said that the integration of customization and real-time delivery is the next big wave of competitive advantage.

According to the report, innovation for organizations in the post-digital era involves figuring out how to shape the world around people and pick the right time to offer their products and services. They’re taking their first steps in a world that tailors itself to fit every moment — where products, services and even people’s surroundings are customized and where businesses cater to the individual in every aspect of their lives and jobs, shaping their realities.

One company taking individualization and customization to a new level is Zozotown, Japan’s biggest e-commerce company. Its skintight spandex Zozosuits pair with the Zozotown app to take customers’ exact measurements; custom-tailored pieces from the company’s in-house clothing line arrive in as few as 10 days. And it’s not just in the fashion industry where technology is enabling customization previously not possible. U.S. retailer Sam’s Club developed an app that uses machine learning and data about customers’ past purchases to auto-fill their shopping lists; the company plans to add a navigation feature to show optimized routes through the store to each item on that list.

The report notes that companies still completing their digital transformations are looking for a specific edge, whether it’s innovative service, higher efficiency or more personalization. But post-digital companies are out to surpass the competition by combining these forces to change the way the market itself works — from one market to many custom markets — on-demand and in the moment, just as Chinese e-retail platform JD.com is doing with its “Toplife” platform. The service helps third parties sell through JD by setting up customized stores, providing access to its supply chain with cutting-edge robotics and drone delivery. In partnership with Walmart, a physical store in Shenzhen will offer more than 8,000 products available in person or delivered from the store in under 30 minutes. By offering unprecedented customization and speed, JD is empowering other companies while creating a new market for itself.

MuleSoft has published the findings of the 2019 Connectivity Benchmark Report on the state of IT. Based on a global survey of IT leaders, the report reveals that while 97 percent of organizations are currently undertaking or planning to undertake digital transformation initiatives, integration challenges are hindering efforts for 84 percent of organizations. Close to half of all respondents (43 percent) reported more than 1,000 applications are being used across their business, but only 29 percent are currently integrated together, trapping valuable data in silos.

The survey of 650 respondents also reveals that IT is struggling to keep up with business demands, as 64 percent of respondents indicated they were unable to deliver all projects last year. In addition, project volumes are only expected to grow, with respondents predicting on average a 32 percent increase this year. If digital transformation initiatives aren’t successfully completed, nine out of ten organizations believe business revenue will be negatively impacted.

Among the key results of the survey:

The IT delivery gap is widening as new technologies enter the scene

The role of IT is changing from a tactical function to a business catalyst. However, the business’ growing need for IT support is reflected in the increasing number of projects IT is expected to deliver. In addition, with a growing investment in new technologies, organizations are seeing integration challenges hinder digital transformation initiatives.

IT’s new role as a business catalyst

IT’s expanding role is driven by a greater need for support across lines of business. As companies race to digitally transform, what was once an IT-specific need for integration has now expanded to business units across the organization.

Preparing for the future one API at a time

For IT to become a business enabler, organizations are increasingly turning to API strategies to support reuse and self-service. By creating reusable assets, IT enables the business to increase overall delivery speed and capacity.

“Today, businesses are competing on speed and agility as they race to meet customer expectations. As a result, every organization is undergoing digital transformation in order to offer a completely connected customer experience. It has put integration in the spotlight as a top-level business priority,” said Greg Schott, CEO, MuleSoft. “With the IT landscape only growing more complex, organizations can build their application networks one API at a time, providing businesses with an agile foundation for success in the digital era.”

“As organizations across all industries digitize their business models, the ability to connect and reuse technology assets becomes a critical capability,” said Steve Stone, technology advisor and former CIO of several Fortune 500 brands including Lowe’s and L Brands. “Reusable APIs serve as building blocks in the application network, enabling new business models and simplifying the expansion of a connected partner ecosystem. MuleSoft’s Connectivity Benchmark Report demonstrates the importance of adopting a comprehensive API strategy in driving desired business outcomes.”

Two-thirds of organisations bypass IT when buying new technologies for digital transformation, based on an EIU report sponsored by BMC Software.

According to a new report released by The Economist Intelligence Unit (EIU), organisations and their IT teams are not in sync when pursuing their digital transformation strategies. The report, ‘From gatekeeper to enabler: the role of IT when digital transformation is the norm’, sponsored by BMC Software, shows a prime example of this disconnect. Two-thirds of private and public-sector organisations in a survey (66%) say they buy new systems and solutions without involving IT teams, a situation that flies in the face of IT’s traditional role as a gatekeeper of new technologies.

The findings are based on a survey of senior executives and administrators in Asia-Pacific, Europe, Latin America and North America. Reasons for the shortfall in collaboration with IT departments on digital transformation initiatives include:

·misalignment in objectives, with non-IT teams prioritising revenue growth and reducing costs, in contrast to IT teams that typically prioritise integration within existing systems and overall security; and

·time pressures, as demonstrated by the finding that 37% of respondents cite the excessive length of the procurement process as the reason for not consulting IT teams on the purchase of new technologies.

Nonetheless, despite many companies saying they bypass IT when purchasing new technology, 43% of respondents say their IT teams are still accountable if something goes wrong with a digital transformation initiative. This can be risky if IT teams have not evaluated the technologies in the first place.

This apparent lack of collaboration appears counterintuitive, given the generally positive view of respondents towards the benefits of co-ordination between IT and non-IT teams. Notably, organisations in which IT and non-IT teams collaborate regularly are significantly more confident about overcoming digital transformation challenges. Eighty-nine percent of collaborators say they are confident about overcoming obstacles compared with 55% of non-collaborators.

Another hindrance to seeing the results of digital transformation can be time itself. Among organisations that have had their initiatives in place for only one or two years, just 42% strongly agree that their organisation is realising the benefits of digital transformation. This is much lower than the 63% of respondents whose initiatives have been in place for three or more years.

Kevin Plumberg, editor of the report, says: “Digital transformation is not a one-off, unique journey that some organisations are experimenting with. It has become the norm, and companies where IT teams are working closely with the business rather than in silos are better positioned to manage the challenges that inevitably arise.”

Chief Information Officers (CIOs) increasingly see IT moving from a ‘cost center’ to a ‘trust center’ – even as the challenges they face abound, according to the 2019 CIO Survey from Grant Thornton LLP in partnership with the Technology Business Management (TBM) Council.

CIOs who participated in the survey reported that “creating and driving an IT strategy that aligns with overall business/agency objectives” is one of their top priorities – second only to “ensuring that IT systems comply with security and regulatory requirements.”

This focus on strategy is also evident in how CIOs see the institutional role of their IT teams. Of those surveyed:

·Eighty-one percent believe “IT drives innovation or modernization programs.”

·Seventy-five percent think “IT has a voice in business/agency strategy and strategic initiatives.”

·Sixty-six percent of CIOs say their performance should be measured based on “successful execution against strategy and plans.”

“The days of the CIO serving strictly as an IT operator are over,” said LaVerne H. Council, national managing principal for Enterprise Technology Strategy and Innovation at Grant Thornton. “CIOs see themselves as trusted business partners, but the road ahead is not an easy one. CIOs should articulate the value of IT spend in the same terms measured by their business partners.”

Demonstrating value will only grow harder in the face of the challenges that CIOs identified in the survey. Chief among these is “conflicting priorities among stakeholders” – followed by “stakeholders’ resistance to change;” “recruiting and retaining talent;” “aligning IT with business goals;” and “articulating the value of IT spend.”

The road to becoming a trusted business partner

The clearest path forward for CIOs to become trusted business partners is to demonstrate that they can control costs and communicate IT value in a way that resonates with the business. Through technology business management (TBM), for example, leaders can help their C-suite peers understand how IT brings value to their organization.

“With TBM, CIOs and their teams use a data-driven financial framework to evaluate investment decisions using a common language that aligns IT spend to business value,” said Todd Tucker, vice president and general manager of the TBM Council. “With this information, organizations can enable prioritization, optimize business costs and accelerate decision-making. In fact, 74 percent of survey respondents identified ‘the ability to shift spending to innovation or growth’ as the most important benefit of TBM.”

Shifting priorities

Finally, CIOs are shifting their priorities to meet emerging needs and address critical gaps, most notably:

·Eighty-five percent are investing in automation software deployments over the next two years.

·Eighty-three percent have increased spending on cybersecurity.

·Only 30 percent are currently using data to “move from information to insight.”

·They believe the top two barriers to defending against cybersecurity threats are “retaining top-tier talent” and “the increasing sophistication of threats.”

·They think artificial intelligence will be the most impactful area of IT over the next three to five years.

Grant Thornton and the TBM Council conducted the survey in fall 2018, based on responses from IT leaders in both commercial businesses and the public sector.

74% of businesses that use IoT say that non-adopters will have fallen behind rivals within five years.

Vodafone has published the findings of its latest IoT Barometer. Surveying 1,758 businesses worldwide, the Barometer finds that more than a third (34%) of businesses now use IoT, that 70% of these adopters have moved beyond pilot stage and 95% of adopters are seeing the benefits of investment in this technology as it moves into the mainstream.

While use cases for IoT are varied, ranging from medical exoskeletons to connected tyres, the research has found that IoT impacts businesses regardless of size and sector. Sixty per cent of businesses that use IoT agree that it has either completely disrupted their industry or will do so in the next five years. Eighty-four per cent of adopters report growing confidence in IoT, with 83% enlarging the scale of deployments to take advantage of full benefits.

The report also grades businesses in IoT usage by assessing strategy, integration and implementation of IoT deployments. Globally the report found that 53% of adopters fall into the top two levels out of five. Regionally, the Americas is the most advanced, with 67% of adopters falling into the top two levels, compared to 51% in APAC and 46% in Europe. This suggests that businesses in the Americas are progressing faster than those in other markets, moving from individual projects to coordinated, strategic programmes.

The most advanced companies also saw the greatest return on investment in IoT. Eighty-seven per cent of those in the top level reported significant returns or benefits from IoT, compared to just 17% in the “beginner’s” level. These benefits breed increasing reliance on IoT. Seventy-six per cent of adopters say IoT is mission-critical. Some are even finding it hard to imagine business without it — 8% of adopters say their “entire business depends on IoT”.

Stefano Gastaut, CEO IoT, Vodafone Business, commented: “IoT is central to business success in an increasingly digitised world, with 72% of adopters saying digital transformation is impossible without it. The good news is that IoT platforms make the technology easier to deploy for businesses of all sizes and NB-IoT and 5G will improve services and potential. In this climate, companies need to be considering not if but how they will implement IoT, and they must also be fully committed to the technology to realise the strongest benefit.”

Looking to the future, new technology will continue to power the performance of IoT. Over half (52%) of adopters plan to use 5G, which promises to support higher volumes of data, increase reliability and offer near-zero latency. When 5G is combined with mobile edge computing, which will process application traffic closer to the network edge, users can expect better performance, less risk and faster data speeds.

Commenting on the results, Michele Mackenzie, Principal Analyst at Analysys Mason, said: “The Barometer makes it clear that businesses are increasing their investment into IoT as they gain confidence and begin to develop more advanced solutions. In the short term, users of IoT will continue to access reduced costs and improved efficiency, but increasingly ambitious projects will offer the opportunity to change business models. For example, in cities, heavy users of roads could pay more, encouraging the use of different modes of transport with knock-on benefits to public health and the environment.”

Progress has published the results of its 2018 Data Connectivity Survey. In the fifth annual survey, more than 1,400 business and IT professionals in various roles across industries and geographies shared their insights on the latest trends within the rapidly changing enterprise data market. The findings revealed five data-related areas of primary importance for organisations as they migrate to the cloud: data integration, real-time hybrid connectivity, data security, standards-based technology and open analytics.

Significant findings from the survey include:

·Data integration has become the #1 challenge, with nearly 50% of respondents pinpointing ever-increasing disparate data sources as a major pain point.

·44% of respondents are worried about integrating cloud data with on-premises data, making real-time hybrid connectivity critical.

·Increased data security vulnerabilities, penalties and regulations are creating new challenges and opportunities for data integration. More than 65% of survey respondents said they must comply with one or more standards.

·Standards-based access is growing in popularity as the number of data sources continues to grow at a rapid pace. Over 50% of survey respondents are currently using ODBC and/or REST.

·REST APIs have become the standard framework for application integration. An impressive 65% of respondents are opting for REST/web APIs for databases.

·The concept of open analytics is on the rise as organizations seek to query cloud applications with their favorite analytics tool or programming language. On average, organizations are using 2.5 different BI reporting tools, underscoring the need for universal BI connectors to support a variety of needs.

·Relational databases are still critical for many enterprises, as SQL Server (55%), MySQL (40%) and Oracle (37%) are still in active use for most of the businesses surveyed.

“Enterprises are looking to release the power of their data for competitive advantage, while upholding the highest standards for data security,” said John Ainsworth, SVP, Core Products, Progress. “Disparate data sources, migration to the cloud, self-service BI demands, and increasing government regulations create challenges for every organization. Progress DataDirect helps customers to overcome these challenges and create the next generation of powerful data solutions and business applications.”

The ready availability of hacking tools, the wildfire spread of malware and the proliferation of cryptomining have seen social media-enabled cybercrimes grow more than 300-fold.

Bromium has published the findings of an independent academic study into cybercriminals’ increasingly aggressive exploitation of social media platforms. The report details the range of techniques utilized by cybercriminals to exploit trust and enable rapid infection across social media. It also details the range of services being offered in plain sight on social networks, including: hacking tools and services, botnets for hire, facilitated digital currency scams and more.

The findings come from ‘Social Media Platforms and the Cybercrime Economy’, an extensive six-month academic study sponsored by Bromium and undertaken by Dr. Mike McGuire, Senior Lecturer in Criminology at the University of Surrey. The study is the next chapter of ‘Into the Web of Profit’ and examines the role of social media platforms in the cybercrime economy. Key insights include:

“Social media platforms have become near ubiquitous, and most corporate employees access social media sites at work, which exposes significant risk of attack to businesses, local governments as well as individuals,” commented Gregory Webb, CEO of Bromium. “Hackers are using social media as a Trojan horse, targeting employees to gain a convenient backdoor to the enterprise’s high value assets. Understanding this is the first step to protecting against it, but businesses must resist knee jerk reactions to ban social media use – which often has a legitimate business function – altogether.

“Instead, organizations can reduce the impact of social media-enabled attacks by adopting layered defenses that utilize application isolation and containment,” concludes Webb. “This way, social media pages with embedded but often undetected malicious exploits are isolated within separate micro-virtual machines, rendering malware infections harmless. Users can click links and access untrusted social-media sites without risk of infection.”

Cryptomining and digital currency scams

Since 2017 there has been a 400 to 600 percent increase in the amount of cryptomining malware being detected globally, the vast majority of which has been found on social media platforms. Of the top 20 global websites that host cryptomining software, 11 are social media platforms like Twitter and Facebook. Apps, adverts and links have been the primary delivery mechanism for cryptomining software on social platforms, with the majority of malware detected by this research mining Monero (80 percent) and Bitcoin (10 percent), earning $250m per year for cybercriminals.

“Facebook Messenger has been instrumental in spreading cryptomining strains like Digmine,” said Dr. Mike McGuire, Senior Lecturer in Criminology at the University of Surrey. “Another example we found was on YouTube, where users who clicked on adverts were unwittingly enabling cryptomining malware to execute on their devices, consuming more than 80 percent of their CPU to mine Monero. For businesses, this type of malware can be very costly, with the increased performance demands draining IT resources, network infections and accelerating the deterioration of critical assets.”

In addition, social platforms have become increasingly important to the business of digital currency scams involving fraudulent crypto-currency investments. “One trend on social media has been the hijacking of trustworthy verified accounts,” continued Dr. McGuire. “In one case, hackers took over the Twitter account for UK retailer Matalan and changed it to resemble Elon Musk’s profile. Tweets were then sent out asking for a small bitcoin donation with the promise of a reward. Safe to say, nobody who donated got anything in return.”

Social media at the center of chain exploitation and malware attacks

The report found crimeware tools and services widely available on social media platforms. Up to 40 percent of inspected social media sites had a form of hacking service offering hackers for hire, hacking tutorials and tools to help hack websites. Social media platforms also enable an underground economy for the trading of stolen data, such as credit card details, earning cybercriminals $630m per year.

“Social platforms and dark web equivalents are becoming blurred, with tools, data and services being offered openly or acting as a marketing entry-point for more extensive shopping facilities on the dark web,” said Dr. McGuire. “One account on Facebook offers the opportunity to trade or learn about exploits and advertises on Twitter to attract buyers. We also found evidence of botnet hire on YouTube, Facebook, Instagram and Twitter, with prices ranging from $10 a month for a full-service package with tutorials and tech support to $25 for a no-frills lifetime subscription – cheaper than Amazon Prime. For the enterprise, this raises a very real concern that the ready availability of cybercrime tools and services make it much easier for hackers to launch cyberattacks.”

Social media platforms have become a major source of malware distribution. The research found that up to 40 percent of malware infections on social media come from malvertising, and at least 30 percent come from plug-ins and apps, many of which lure users in by offering additional functionality or deals. Once the user clicks, the malware executes – allowing hackers to steal data, install keyloggers, deliver ransomware, persist and hide for future attacks and so on. The spread of malware is facilitated by large user bases and the fact that many social media sites share user profiles across platforms, enabling “chain exploitation”, whereby malware can spread across multiple social media sites from one account.

“While adverts on Facebook or Instagram may look like they’re promoting Ray-Ban sunglasses or Nike shoes, they’re often more sinister and deliver malware once clicked,” explained Dr. McGuire. “Cybercriminals have been quick to see how the social nature of such platforms can be used to spread malware. They embed malware into posts or friends’ updates and use photo tag notifications to persuade users to open infected attachments.”

Social media enabling traditional crime

Social media platforms are also hosting a thriving criminal ecosystem for more traditional crime. They serve as a recruitment center for money mules used for laundering, with posts or adverts offering opportunities to earn large amounts of money in a short time. “As we saw in the previous report, platform criminality extends beyond cybercrime, with traditional crime also being enabled by platforms,” said Dr. McGuire. “These platforms have brought money laundering to the kind of individuals not typically associated with this crime – young millennials and generation Z. Data from UK banks suggests there might be as many as 8,500 money mule accounts in the UK owned by individuals under the age of 21, and most of this recruitment is conducted via social media.”

The illegal sale of prescription drugs is netting criminals $1.9B per year. The report also found a large amount of drugs like cannabis, GHB and even fentanyl being sold on Twitter, Facebook, Instagram and Snapchat. Social media is enabling a wide variety of financial and online romance fraud. “Around 0.2 percent of social media posts examined for this report involved financial fraud, helping to generate $290m in revenue per year,” concluded Dr. McGuire. “Criminals have been quick to understand how to exploit social media to facilitate more traditional crime, whether it’s a vehicle to sell something or research potential victims – for instance, online dating scams generate $138m per year and often rely on using social media pages to trick people.”

Cybersecurity revenues in 2018 were $160.2 billion and will jump by an enormous $11.2 billion during 2019, as the focus moves to adherence to GDPR and similar legislation. Growth slows to around $9.8 billion per annum after this but then spikes once again in 2023/4 as AI-based cybersecurity escalates, reaching $223.7 billion.
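
The arithmetic behind these figures can be laid out in a short Python sketch. The quoted numbers come from the forecast itself, but two things are assumptions made here for illustration: that the "around $9.8 billion per annum" phase covers 2020 to 2022, and that the $223.7 billion total refers to 2024. The function names are hypothetical.

```python
# Figures quoted in the forecast (USD billions).
REVENUE_2018 = 160.2
JUMP_2019 = 11.2
STEADY_ANNUAL_GROWTH = 9.8
REVENUE_END = 223.7

# Assumption: the steady-growth phase covers 2020-2022 and the
# final figure refers to 2024. The article does not spell this out.
STEADY_YEARS = (2020, 2021, 2022)
END_YEAR = 2024


def implied_trajectory():
    """Return a {year: revenue} mapping implied by the quoted figures."""
    revenue = {2018: REVENUE_2018, 2019: REVENUE_2018 + JUMP_2019}
    for year in STEADY_YEARS:
        revenue[year] = revenue[year - 1] + STEADY_ANNUAL_GROWTH
    revenue[END_YEAR] = REVENUE_END
    # Round to one decimal place to match the precision of the source figures.
    return {year: round(value, 1) for year, value in revenue.items()}


def implied_spike_growth():
    """Growth left over for the 2023/4 spike once the steady phase ends."""
    trajectory = implied_trajectory()
    return round(REVENUE_END - trajectory[STEADY_YEARS[-1]], 1)


if __name__ == "__main__":
    for year, value in sorted(implied_trajectory().items()):
        print(year, value)
    print("Growth left for 2023/4:", implied_spike_growth())
```

Under these assumptions the steady phase ends 2022 at roughly $200.8 billion, leaving about $22.9 billion of growth for the 2023/4 spike.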

This means cybersecurity spending will rise faster than total IT budgets over the next five years. The European Union’s GDPR (General Data Protection Regulation) has set the agenda for legislation over data privacy and protection worldwide and that is generating a spike in spending on security measures that ensure compliance. This will continue to ripple around the world between 2019 and 2021.

Later in our forecast period an arms race will develop around AI and machine learning as major cybercriminal gangs and rogue nation states adopt these to launch increasingly sophisticated cyberattacks, pushing spending on countermeasures.

These predictions are made in Riot’s new report “Privacy and state espionage tightens focus on security - Cybersecurity spending forecast to 2024” released today. This is a global forecast by geography and industry vertical, which also highlights differences in cybersecurity strategy and spending between major regions and sub-sectors.

North America is expected to continue to spend the most on security (27%), but both Europe (22%) and China (20%) are rapidly accelerating their spend, with the rest of Asia following closely behind on 16%. North America is expected to lead in almost every market, with the exceptions of Industrial and Automotive, where China leads, but only by a tiny margin.

Because the US has been driving the eHealth revolution, it has invested sooner than other countries in associated security and that shows up clearly in the 2018 regional breakdown. That gap will remain over the forecast period but narrow as other regions catch up on eHealth.

By contrast, in automotive China is emerging as a big spender on cybersecurity, driven by huge investment coupled with a strategy of focusing on safety in autonomous driving, contrasting with the country’s cavalier approach to consumer privacy. Automotive also stands out for having by far the fastest growth in spending on cybersecurity among the vertical sectors covered in the report.

The Riot report describes how cybersecurity threats will evolve over the next five years as monitoring and surveillance based on machine learning algorithms and other AI techniques become widely deployed. But this will have the effect of increasing rather than reducing demand for skilled cybersecurity personnel, because the developing arms race will require experts in what will effectively be a new form of war game.

Cybercriminals will not only attempt to cover their tracks to evade detection by surveillance systems, but will also attack these defenses directly, as is already happening to forensic watermarking systems in TV services.

Seventy-five percent of organisations have expressed concerns about bot traffic (bots and scrapers) stealing company information, despite the same number already deploying a bot traffic manager solution, according to new research from the Neustar International Security Council (NISC).

These fears, which centre on the theft of digital content, inventory, pricing and other proprietary information, come at a time when the level of concern among cybersecurity experts has almost doubled year-on-year.

The mounting concerns are evidenced in the Cyber Benchmark Index, which is a reflection of the current international cybersecurity landscape. At the start of this year, the index hit the highest ever rating (19.4) since NISC began mapping threat levels in May 2017. During the same period in 2018, the cyber benchmark index only reached 10.5.

Aside from bot traffic, security professionals perceived DDoS attacks to be the highest threat to their enterprise, with over half of respondents (52 percent) admitting to being on the receiving end of an attack. This was followed by system compromise, ransomware and financial theft.

However, despite DDoS ranking as the greatest overall danger to businesses, generalised phishing attacks were seen to be the fastest-growing concern. When considering where these threats might come from, security professionals viewed the world at large to be the biggest worry – a 60 percent rise from the previous reporting period.

“Fears around bot traffic and bot-powered DDoS attacks are extremely valid but by no means new,” said Rodney Joffe, Head of the NISC and Neustar Senior Vice President and Fellow. “However, with the rapid rise of the Internet of Things – whether that be across smart cities, banking or a nation’s critical infrastructure – the ability for bots to cause havoc at a global level has increased significantly. Without the appropriate detection, data scrubbing and mitigation tools in place, IoT devices have the potential to become part of a malicious botnet, whereby hackers essentially weaponise these devices to launch more powerful DDoS attacks. Worryingly, as more and more devices continue to connect to the Internet, these types of attack pose an increased risk to not only the defences of an enterprise, but also to a whole nation.”

“Unfortunately, bot traffic makes up a large proportion of the Internet,” continued Joffe. “So it is key that organisations make sure incoming data is scrubbed in real-time, while also identifying patterns of good and bad traffic to help with filtering. While it is encouraging to see that more organisations are implementing bot traffic manager solutions, it is imperative that businesses employ a holistic protection strategy across every layer for the best level of protection. Implementing a Web Application Firewall (WAF) is crucial for preventing bot-based volumetric attacks, as well as threats that target the application layer.”
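
One very simple way to separate patterns of good and bad traffic is to count requests per client inside a sliding time window. The Python sketch below illustrates that idea only: it is not the NISC's or Neustar's method, nor a substitute for a WAF or scrubbing service, and the class name, thresholds and client identifiers are hypothetical.

```python
from collections import defaultdict, deque


class SlidingWindowFilter:
    """Flags clients whose request rate exceeds a threshold (illustrative heuristic)."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client id -> recent request timestamps

    def allow(self, client_id, timestamp):
        """Record a request and return True if it stays within the rate limit."""
        recent = self._history[client_id]
        # Drop timestamps that have fallen out of the window.
        while recent and timestamp - recent[0] >= self.window_seconds:
            recent.popleft()
        # Every request is recorded, including ones that end up being flagged.
        recent.append(timestamp)
        return len(recent) <= self.max_requests


if __name__ == "__main__":
    traffic_filter = SlidingWindowFilter(max_requests=3, window_seconds=10.0)
    for t in (0.0, 1.0, 2.0, 3.0, 12.0):
        print(t, traffic_filter.allow("client-a", t))
```

In this example the fourth request inside ten seconds is flagged, while the request at 12 seconds passes again because the earlier burst has aged out of the window. Real bot management combines many more signals than request rate.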

Cloud Industry Forum and Ingram Micro Cloud find SMEs are moving towards next generation technologies, though skills shortages and security concerns are hindering progress.

Although UK SMEs are increasingly convinced about the opportunities presented by next generation technologies, such as AI, IoT and blockchain, many are struggling to incorporate them into their technology roadmaps. This is the key finding from a new research report by the Cloud Industry Forum (CIF) and Ingram Micro Cloud, who warn that the channel must do more to help drive digital transformation within the SME community.

The research, which was conducted by Vanson Bourne, sought to understand how far along UK-based SMEs are on their digital transformation journeys and the challenges that are confronting them along the way. It found that around four in ten respondents had already invested in AI (39%), blockchain (46%) or IoT (43%) to some extent and that a similar proportion thought these technologies would be critical or very important for their organisations over the next five years.

However, despite this enthusiasm, the vast majority identified barriers to their organisation’s digital transformation efforts. Just 29% of SMEs have a formulated digital transformation strategy in place, indicating a lack of clear guidance and leadership, 45% lacked digital transformation skills, and almost two thirds (64%) struggled with security.

For CIF and Ingram Micro Cloud, these findings highlight the opportunity for channel partners who must accelerate their own transformation plans if they are to capitalise on the growing demand for next generation technologies.

Commenting on the findings, Scott Murphy, Cloud and Advanced Solutions Director at Ingram Micro, said: “The findings from the research indicate that SMEs are increasingly aware of the need to embrace a modern workplace and that digital transformation is at the forefront of their business strategies. With this in mind, SMEs are leveraging cloud infrastructure to explore a range of next generation technologies, and are, in fact, further along that road than large enterprises. However, it’s clear that many SMEs don’t have the skills, guidance, and support to safely transform their businesses, and, as such, it’s critical that the channel can step up to the mark and better support their transformation efforts.”

Alex Hilton, CEO of CIF, added: “SMEs are clearly aware of the need for digital transformation and are enthusiastic about the opportunities new digital technologies can deliver. But equally clear is that they’re not getting enough assistance from the channel to see it through. Given that many channel partners are still at the very early stages of their own transformations, and still learning about how they can incorporate next generation technologies into their portfolios, this is to be expected. However, I’d hope that these research findings will spur the reseller community to accelerate their own transformation plans and seize the opportunities on offer.”

Angel Business Communications is seeking nominations for the 2019 Datacentre Solutions Awards (DCS Awards).

The DCS Awards are designed to reward the product designers, manufacturers, suppliers and providers operating in the data centre arena and are updated each year to reflect this fast-moving industry. The Awards recognise the achievements of the vendors and their business partners alike and this year encompass a wider range of project, facilities and information technology award categories, together with two Individual categories, and are designed to address all the main areas of the datacentre market in Europe.

The DCS Awards team is delighted to announce Kohler Uninterruptible Power as the Headline Sponsor for this year’s event. Previously known as Uninterruptible Power Supplies Ltd (UPSL), a subsidiary of Kohler Co, and the exclusive supplier of PowerWAVE UPS, generator and emergency lighting products, UPSL is changing its name to Kohler Uninterruptible Power (KUP), effective March 4th, 2019.

UPSL’s name change is designed to ensure the company’s name reflects the true breadth of the business’ current offer, which now extends to UPS systems, generators, emergency lighting inverters, and class-leading 24/7 service, as well as highlighting its membership of Kohler Co. This is especially timely as next year Kohler will celebrate 100 years of supplying products for power generation and protection. Kohler Uninterruptible Power Ltd prides itself on delivering industry-leading power protection solutions and services.

Our Headline Sponsor, Kohler, is joined by Entertainment Sponsor Starline and Riello UPS as a category sponsor.

The 2019 DCS Awards feature 26 categories across four groups. The Project Awards categories are open to end use implementations and services that have been available before 31st December 2018. The Innovation Awards categories are open to products and solutions that have been available and shipping in EMEA between 1st January and 31st December 2018. The Company nominees must have been present in the EMEA market prior to 1st June 2018. Individuals must have been employed in the EMEA region prior to 31st December 2018.

The editorial panel at Angel Business Communications will validate entries and announce the final short list to be forwarded for voting by the readership of the Digitalisation World stable of publications during April. The winners will be announced at a gala evening on 16th May at London’s Grange St Paul’s Hotel.

Nomination is free of charge and each entry can include up to four supporting documents to enhance the submission. The deadline for entries is 8th March 2019.

Please visit www.dcsawards.com for rules and entry criteria for each of the following categories:

DCS PROJECT AWARDS

Data Centre Energy Efficiency Project of the Year

New Design/Build Data Centre Project of the Year

Data Centre Consolidation/Upgrade/Refresh Project of the Year

Cloud Project of the Year

Managed Services Project of the Year

GDPR Compliance Project of the Year

DCS INNOVATION AWARDS

Data Centre Facilities Innovation Awards

Data Centre Power Innovation of the Year

Data Centre PDU Innovation of the Year

Data Centre Cooling Innovation of the Year

Data Centre Intelligent Automation and Management Innovation of the Year

Data Centre Safety, Security & Fire Suppression Innovation of the Year

Data Centre Physical Connectivity Innovation of the Year

Data Centre ICT Innovation Awards

Data Centre ICT Storage Product of the Year

Data Centre ICT Security Product of the Year

Data Centre ICT Management Product of the Year

Data Centre ICT Networking Product of the Year

Data Centre ICT Automation Innovation of the Year

Open Source Innovation of the Year

Data Centre Managed Services Innovation of the Year

DCS Company Awards

Data Centre Hosting/co-location Supplier of the Year

Data Centre Cloud Vendor of the Year

Data Centre Facilities Vendor of the Year

Data Centre ICT Systems Vendor of the Year

Excellence in Data Centre Services Award

DCS Individual Awards

Data Centre Manager of the Year

Data Centre Engineer of the Year

Nomination Deadline: 8th March 2019

www.dcsawards.com

Augmented analytics, continuous intelligence and explainable artificial intelligence (AI) are among the top trends in data and analytics technology that have significant disruptive potential over the next three to five years, according to Gartner, Inc.

Speaking at the recent Gartner Data & Analytics Summit in Sydney, Rita Sallam, research vice president at Gartner, said data and analytics leaders must examine the potential business impact of these trends and adjust business models and operations accordingly, or risk losing competitive advantage to those who do.

“The story of data and analytics keeps evolving, from supporting internal decision making to continuous intelligence, information products and appointing chief data officers,” she said. “It’s critical to gain a deeper understanding of the technology trends fueling that evolving story and prioritize them based on business value.”

According to Donald Feinberg, vice president and distinguished analyst at Gartner, the very challenge created by digital disruption — too much data — has also created an unprecedented opportunity. The vast amount of data, together with increasingly powerful processing capabilities enabled by the cloud, means it is now possible to train and execute algorithms at the large scale necessary to finally realize the full potential of AI.

“The size, complexity, distributed nature of data, speed of action and the continuous intelligence required by digital business means that rigid and centralized architectures and tools break down,” Mr. Feinberg said. “The continued survival of any business will depend upon an agile, data-centric architecture that responds to the constant rate of change.”

Gartner recommends that data and analytics leaders talk with senior business leaders about their critical business priorities and explore how the following top trends can enable them.

Trend No. 1: Augmented Analytics

Augmented analytics is the next wave of disruption in the data and analytics market. It uses machine learning (ML) and AI techniques to transform how analytics content is developed, consumed and shared.

By 2020, augmented analytics will be a dominant driver of new purchases of analytics and BI, as well as data science and ML platforms, and of embedded analytics. Data and analytics leaders should plan to adopt augmented analytics as platform capabilities mature.

Trend No. 2: Augmented Data Management

Augmented data management leverages ML capabilities and AI engines to make enterprise information management categories, including data quality, metadata management, master data management, data integration and database management systems (DBMSs), self-configuring and self-tuning. It automates many manual tasks and allows less technically skilled users to be more autonomous when using data. It also allows highly skilled technical resources to focus on higher value tasks.

Augmented data management converts metadata from being used for audit, lineage and reporting only, to powering dynamic systems. Metadata is changing from passive to active and is becoming the primary driver for all AI/ML.

Through to the end of 2022, data management manual tasks will be reduced by 45 percent through the addition of ML and automated service-level management.

Trend No. 3: Continuous Intelligence

By 2022, more than half of major new business systems will incorporate continuous intelligence that uses real-time context data to improve decisions.

Continuous intelligence is a design pattern in which real-time analytics are integrated within a business operation, processing current and historical data to prescribe actions in response to events. It provides decision automation or decision support. Continuous intelligence leverages multiple technologies such as augmented analytics, event stream processing, optimization, business rule management and ML.
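
A minimal Python sketch of this pattern, under stated assumptions, might look like the following. Each incoming event is compared with a baseline built from historical values and an action is prescribed in response. The class name, the three-standard-deviation rule and the action labels are illustrative choices made here, not part of Gartner's definition.

```python
from statistics import mean, pstdev


class ContinuousMonitor:
    """Compares each new event with historical data and prescribes an action."""

    def __init__(self, history, threshold=3.0):
        # Assumption: at least one historical value is available up front.
        self.history = list(history)
        self.threshold = threshold  # allowed deviation, in standard deviations

    def on_event(self, value):
        """Process one real-time event and return the prescribed action."""
        baseline = mean(self.history)
        spread = pstdev(self.history)
        deviates = spread > 0 and abs(value - baseline) > self.threshold * spread
        action = "investigate" if deviates else "continue"
        # The current event becomes part of the history for later decisions.
        self.history.append(value)
        return action


if __name__ == "__main__":
    monitor = ContinuousMonitor([10, 11, 9, 10, 10])
    print(monitor.on_event(10.5))  # close to the baseline
    print(monitor.on_event(20))    # far outside the baseline
```

The point of the sketch is the shape of the loop: analysis sits inside the operational flow, and every event both triggers a decision and updates the historical context for the next one.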

“Continuous intelligence represents a major change in the job of the data and analytics team,” said Ms. Sallam. “It’s a grand challenge — and a grand opportunity — for analytics and BI (business intelligence) teams to help businesses make smarter real-time decisions in 2019. It could be seen as the ultimate in operational BI.”

Trend No. 4: Explainable AI

AI models are increasingly deployed to augment and replace human decision making. However, in some scenarios, businesses must justify how these models arrive at their decisions. To build trust with users and stakeholders, application leaders must make these models more interpretable and explainable.

Unfortunately, most of these advanced AI models are complex black boxes that are not able to explain why they reached a specific recommendation or a decision. Explainable AI in data science and ML platforms, for example, auto-generates an explanation of models in terms of accuracy, attributes, model statistics and features in natural language.
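
To give a rough sense of what a natural-language explanation can look like, the Python sketch below uses a deliberately simple linear scoring model, where each feature's contribution is just its weight multiplied by its value. The function name, feature names and weights are all hypothetical, and this is not how any particular data science or ML platform generates its explanations.

```python
def explain_prediction(weights, bias, features):
    """Score a record with a linear model and describe each feature's effect."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    # List the most influential features first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    parts = [
        f"{name} {'raised' if amount >= 0 else 'lowered'} the score by {abs(amount):.2f}"
        for name, amount in ranked
    ]
    return score, f"Score {score:.2f}: " + "; ".join(parts) + "."


if __name__ == "__main__":
    weights = {"late_payments": -0.5, "income": 0.25}
    score, text = explain_prediction(
        weights, bias=1.0, features={"late_payments": 2, "income": 8}
    )
    print(text)
```

Complex black-box models need dedicated explanation techniques to approximate this kind of per-feature breakdown, which is where the tooling described above comes in.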

Trend No. 5: Graph

Graph analytics is a set of analytic techniques that allows for the exploration of relationships between entities of interest such as organizations, people and transactions.

The application of graph processing and graph DBMSs will grow at 100 percent annually through 2022 to continuously accelerate data preparation and enable more complex and adaptive data science.

Graph data stores can efficiently model, explore and query data with complex interrelationships across data silos, but the need for specialized skills has limited their adoption to date, according to Gartner.

Graph analytics will grow in the next few years due to the need to ask complex questions across complex data, which is not always practical or even possible at scale using SQL queries.
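
As an illustration of the kind of relationship question involved, the Python sketch below finds the shortest chain of connections between two entities using a breadth-first search over a small in-memory graph. The entity names and the function name are hypothetical, and a production system would use a graph DBMS rather than plain dictionaries.

```python
from collections import deque


def shortest_connection(edges, start, goal):
    """Return the shortest path of entities linking start to goal, or None."""
    graph = {}
    for a, b in edges:
        # Relationships are treated as undirected for this example.
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    if start not in graph or goal not in graph:
        return None

    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbour in sorted(graph[node]):  # sorted for repeatable results
            if neighbour not in visited:
                visited.add(neighbour)
                queue.append(path + [neighbour])
    return None


if __name__ == "__main__":
    edges = [
        ("Alice", "Acme Ltd"),
        ("Acme Ltd", "Txn-1001"),
        ("Txn-1001", "Bob"),
        ("Bob", "Beta Corp"),
    ]
    print(shortest_connection(edges, "Alice", "Bob"))
```

Expressing the same "how are these two entities connected?" question in SQL typically needs a recursive query or a chain of self-joins whose depth is not known in advance.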

Trend No. 6: Data Fabric

Data fabric enables frictionless access and sharing of data in a distributed data environment. It enables a single and consistent data management framework, which allows seamless data access and processing by design across otherwise siloed storage.

Through 2022, bespoke data fabric designs will be deployed primarily as a static infrastructure, forcing organizations into a new wave of cost to completely re-design for more dynamic data mesh approaches.

Trend No. 7: NLP/ Conversational Analytics

By 2020, 50 percent of analytical queries will be generated via search, natural language processing (NLP) or voice, or will be automatically generated. The need to analyze complex combinations of data and to make analytics accessible to everyone in the organization will drive broader adoption, allowing analytics tools to be as easy as a search interface or a conversation with a virtual assistant.

Trend No. 8: Commercial AI and ML

Gartner predicts that by 2022, 75 percent of new end-user solutions leveraging AI and ML techniques will be built with commercial solutions rather than open source platforms.

Commercial vendors have now built connectors into the Open Source ecosystem and they provide the enterprise features necessary to scale and democratize AI and ML, such as project & model management, reuse, transparency, data lineage, and platform cohesiveness and integration that Open Source technologies lack.

Trend No. 9: Blockchain

The core value proposition of blockchain, and distributed ledger technologies, is providing decentralized trust across a network of untrusted participants. The potential ramifications for analytics use cases are significant, especially those leveraging participant relationships and interactions.

However, it will be several years before four or five major blockchain technologies become dominant. Until that happens, technology end users will be forced to integrate with the blockchain technologies and standards dictated by their dominant customers or networks. This includes integration with your existing data and analytics infrastructure. The costs of integration may outweigh any potential benefit. Blockchains are a data source, not a database, and will not replace existing data management technologies.

Trend No. 10: Persistent Memory Servers

New persistent-memory technologies will help reduce costs and complexity of adopting in-memory computing (IMC)-enabled architectures. Persistent memory represents a new memory tier between DRAM and NAND flash memory that can provide cost-effective mass memory for high-performance workloads. It has the potential to improve application performance, availability, boot times, clustering methods and security practices, while keeping costs under control. It will also help organizations reduce the complexity of their application and data architectures by decreasing the need for data duplication.

“The amount of data is growing quickly and the urgency of transforming data into value in real-time is growing at an equally rapid pace,” Mr. Feinberg said. “New server workloads are demanding not just faster CPU performance, but massive memory and faster storage.”

Nearly 50 percent of PaaS offerings are Cloud-only

Nearly half of today’s platform as a service (PaaS) offerings are cloud-only, according to Gartner, Inc. Currently, there are more than 360 vendors across 21 market segments, delivering more than 550 PaaS offerings. Forty-eight percent of these offerings are cloud-only. Not a single vendor has a foothold across all 21 segments, and 90 percent of them only operate within a single PaaS market segment.

“Business and technology leaders are shifting to strategic investment in cloud computing,” said Yefim Natis, research vice president and distinguished analyst at Gartner. “Cloud computing is one of the key disruptive forces in IT markets that is gaining mainstream trust.”

“Although many organizations anticipate a long-term retention of on-premises computing, the vendors of nearly half of the cloud platform offerings bet on the prevailing growth of cloud deployments and chose the more modern and more efficient cloud-only delivery of their capabilities,” said Mr. Natis. Enterprise IT spending for cloud-based offerings will surpass spending for noncloud IT offerings by 2022, according to Gartner.

The total PaaS market revenue is forecast to reach $20 billion in 2019, and to exceed $34 billion in 2022, according to the latest forecast from Gartner. In this shift to cloud, database and application platform services represent the largest market segments, with blockchain, digital experience, serverless and artificial intelligence/machine learning (AI/ML) platform services as the newest.
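
The growth rate implied by these two revenue figures can be worked out with the standard compound annual growth rate formula. The short Python sketch below does so. The function name is hypothetical, and because the 2022 figure is quoted as "exceeding" $34 billion, the result is best read as a lower bound.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate needed to move from start_value to end_value."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # $20 billion in 2019 growing to $34 billion in 2022, i.e. over three years.
    rate = implied_cagr(20, 34, years=3)
    print(f"Implied growth: {rate:.1%} per year")
```

On these figures the PaaS market would need to grow by a little over 19 percent a year.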

Thirteen percent of organizations implementing Internet of Things (IoT) projects already use digital twins, while 62 percent are either in the process of establishing digital twin use or plan to do so, according to a recent IoT implementation survey by Gartner, Inc.

Gartner defines a digital twin as a software design pattern that represents a physical object with the objective of understanding the asset’s state, responding to changes, improving business operations and adding value.

“The results — especially when compared with past surveys — show that digital twins are slowly entering mainstream use,” said Benoit Lheureux, research vice president at Gartner. “We predicted that by 2022, over two-thirds of companies that have implemented IoT will have deployed at least one digital twin in production. We might actually reach that number within a year.”

While only 13 percent of respondents claim to already use digital twins, 62 percent are either in the process of establishing the technology or plan to do so in the next year. This rapid growth in adoption is due to extensive marketing and education by technology vendors, but also because digital twins are delivering business value and have become part of enterprise IoT and digital strategies.

“We see digital twin adoption in all kinds of organizations. However, manufacturers of IoT-connected products are the most progressive, as the opportunity to differentiate their product and establish new service and revenue streams is a clear business driver,” Mr. Lheureux added.

Digital Twins Serve Many Masters

A key factor for enterprises implementing IoT is that their digital twins serve different constituencies inside and outside the enterprise. Fifty-four percent of respondents reported that while most of their digital twins serve only one constituency, sometimes their digital twins served multiple; nearly a third stated that either most or all their digital twins served multiple constituencies. For example, the constituencies of a connected car digital twin can include the manufacturer, a customer service provider and the insurance company, each with a need for different IoT data.

When respondents were asked for examples of digital twin constituencies, replies varied widely, ranging from internal IoT data consumers, such as employees or security, through commercial partners to technology providers. “These findings show that digital twins serve a wide range of business objectives,” said Mr. Lheureux. “Designers of digital twins should keep in mind that they will probably need to accommodate multiple data consumers and provide appropriate data access points.”

Digital Twins Are Often Integrated With Each Other

When an organization has multiple digital twins deployed, it might make sense to integrate them. For example, in a power plant with IoT-connected industrial valves, pumps and generators, there is a role for digital twins for each piece of equipment, as well as a composite digital twin, which aggregates IoT data across the equipment to analyze overall operations.
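
The power plant example can be sketched in a few lines of Python. Each piece of equipment gets its own twin holding the latest IoT readings, and a composite twin aggregates a metric across them. The class names, asset identifiers and the `temperature_c` metric are hypothetical, and real digital twin platforms are considerably richer than this.

```python
from dataclasses import dataclass, field


@dataclass
class DigitalTwin:
    """Holds the latest known state of one physical asset."""
    asset_id: str
    state: dict = field(default_factory=dict)

    def update(self, reading):
        """Merge a new IoT reading into the twin's state."""
        self.state.update(reading)


@dataclass
class CompositeTwin:
    """Aggregates IoT data across several equipment-level twins."""
    twins: list

    def summary(self, metric):
        """Return count, max and mean of a metric across twins that report it."""
        values = [twin.state[metric] for twin in self.twins if metric in twin.state]
        if not values:
            return None
        return {
            "count": len(values),
            "max": max(values),
            "mean": sum(values) / len(values),
        }


if __name__ == "__main__":
    valve = DigitalTwin("valve-1")
    pump = DigitalTwin("pump-1")
    generator = DigitalTwin("generator-1")
    valve.update({"temperature_c": 40.0})
    pump.update({"temperature_c": 60.0})
    generator.update({"temperature_c": 80.0})

    plant = CompositeTwin([valve, pump, generator])
    print(plant.summary("temperature_c"))
```

Keeping the equipment-level twins independent and layering the composite on top means each twin can still serve its own data consumers while the composite answers plant-wide questions.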

Despite this setup being very complex, 61 percent of companies that have implemented digital twins have already integrated at least one pair of digital twins with each other, and even more — 74 percent of organizations that have not yet integrated digital twins — will do so in the next five years. However, this result also means that 39 percent of respondents have not yet integrated any digital twins; of those, 26 percent still do not plan to do so in five years.

“What we see here is that digital twins are increasingly deployed in conjunction with other digital twins for related assets or equipment,” said Mr. Lheureux. “However, true integration is still relatively complicated and requires high-order integration and information management skills. The ability to integrate digital twins with each other will be a differentiating factor in the future, as physical assets and equipment evolve.”

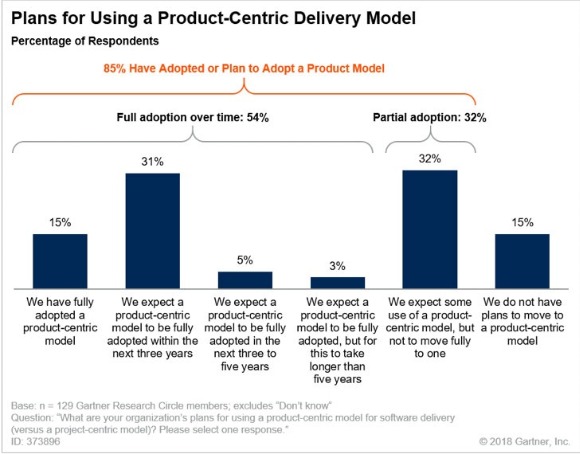

Organisations favour a product-centric application delivery model

Eighty-five percent of organizations have adopted, or plan to adopt, a product-centric application delivery model, according to a survey by Gartner, Inc. Although full adoption is rare, overall, survey respondents used the product-centric model for 40 percent of their work in 2018. Gartner predicts that this figure will reach 80 percent by 2022.

“The increase in how quickly and broadly organizations are adopting the product-centric application model doesn’t arise randomly. It goes hand-in-hand with the adoption of agile development methodologies and DevOps,” said Bill Swanton, distinguished research vice president at Gartner. “In addition, an increasing number of applications that IT teams develop are used by external parties, such as clients or partners, and require the increased customer focus that characterizes the product-centric model.”

The survey found that over half (54 percent) of respondents expect to fully adopt the product-centric application model over time, while roughly one-third (32 percent) plan partial adoption (see Figure 1). Managing everything as a product is unlikely to be justified, as some IT activities, such as initial implementation of a large software package, may well be better managed as projects.

Figure 1: Plans for Adopting a Product-Centric Application Delivery Model

Source: Gartner (February 2019)

Mr. Swanton added: “Business leaders are generally unhappy with the speed at which they get application improvements and how they work. Given that no IT organization gets anywhere near enough funding to do everything everyone wants when they want it, product-centric approaches allow faster delivery of the most important capabilities needed. They also force the business to prioritize the work, and to reprioritize it as requirements are better understood or the market changes.”

Speed to Market and Digital Business Motivate a Product-Centric Application Approach

Thirty-two percent of the survey respondents identified a need to deliver more quickly as their main driver of adoption of a product-centric application approach. They said that speed to market was the main driver of their transformation process.

Digital business came second (31 percent of respondents). When organizations start a digital transformation journey, they often find that traditional project methods are not suitable for the uncertainties of a transformative business model. They discover a need to adopt agile methods and to treat the results as products, since they will be used by external customers.

The shift from a project-centric to a product-centric application approach does not come without challenges, however. Concerns about project-based funding and the culture clash between “the business” and “IT” were the top challenges for 55 percent of the respondents.

The Rise of the Product Manager Changes the Role of Application Leader

Forty-six percent of the respondents said their organization had already appointed a product manager, while 15 percent plan to introduce this role by the end of 2018. Ten percent have no plans to introduce this role.

According to the majority of respondents, product managers report, or will report, to the IT organization or project management office. At the same time, respondents said they expect the role of application leader to change. For 43 percent of respondents the role will reside in the IT organization, while for 32 percent it will migrate into business teams where the application leader will lead a product line or be a group or single product manager.

“As organizations gain more experience with product-centric delivery models, we expect product and technical leadership to separate from administrative line management. This will have an impact on the prospects of holders of the application leader role, who will need to choose between product management, engineering team management and administrative people management,” Mr. Swanton said.

Information and communications technology (ICT) spending by service providers will reach $426 billion by 2022, representing average growth of 6% per year, as the ongoing shift from on-premise IT management to the "as-a-service" model continues to gather pace in many regions around the world. Cloud and digital service providers will represent the strongest market opportunities for ICT vendors with overall growth of 9% over the forecast period ($105 billion in annual spend by 2022), but communications service providers will still represent the largest share of service provider spending ($254 billion in 2022). Colocation and managed services providers will increase spending by an average of 7% per year, reaching $67 billion in annual ICT spend by 2022. The ICT spending forecast was published in the first Worldwide Black Book Service Provider Edition from International Data Corporation (IDC).

Service providers already account for 44% of worldwide spending on core infrastructure technologies (server and storage hardware, enterprise network equipment, and storage/network software). The pace of investments by cloud infrastructure providers is expected to slow in the next few years as supply/demand and supply chains normalize, but service providers will continue to account for a growing share of overall spending as the ICT market shifts to the "as-a-service" model and new digital ecosystems emerge in which digital service providers will play a key role.

"The shift from end-users to service providers in core infrastructure technology markets is a profound shift with deep implications for tech vendors," said Stephen Minton, vice president in IDC's Customer Insights & Analysis group. "Cloud infrastructure providers, in particular, have led a surge of spending on servers, storage, and system infrastructure software in the past few years. While this will slow over the long term, we expect new digital service providers to pick up the slack in terms of overall ICT spending as new digital ecosystems are created to serve an increasingly digital global economy. For IT vendors, this has major implications in terms of a major shift of spending from end-users to middlemen."

Last year saw a major spending cycle by cloud and digital providers, who increased their share of worldwide spending on core infrastructure technologies from 24% to 28% (from $39 billion to $51 billion). Spending on core infrastructure by cloud and digital service providers will increase at an average rate of 10% per year over the next five years, compared to growth of 3% by commercial end-users.

This shift has been particularly rapid in the United States, where cloud and digital providers already account for 43% of core infrastructure spending and will make up 47% by 2022, with an average growth rate of 8.5% compared to meagre growth of 1% by commercial end-users. Cloud and digital providers account for a smaller share in China (around 25% in 2018) but will increase spending on core infrastructure by 22% over the forecast period. In Western Europe, cloud and digital providers make up only 12% of core infrastructure spend and this will barely change over the forecast period despite growth of around 6% per year on average. This is largely because a major capital spending cycle in 2018 will be followed by much weaker increases over the next few years as service providers in Western Europe take stock and balance their capital spending needs with end-user demand for services in a more scalable manner amidst a cautious economic outlook.

"There is significant variation by region in service provider spending and growth," said Minton. "For example, cloud and digital providers still represent a relatively small share of spending in the Europe, Middle East and Africa (EMEA) region, where they will account for just 11% of core infrastructure spending by 2022; or Canada, where they will make up just 9%. In the U.S., by contrast, cloud and digital providers will represent almost half of all spending on core infrastructure technology by the same year as a result of the phenomenal growth of cloud providers in the U.S. market, which are serving aggressive early adopters of cloud infrastructure and software as a service."

"The implications for ICT vendors are profound," said Minton. "Not only are their traditional customers moving away to a new model for ICT resource procurement, but they are faced with an increasing share of revenue concentrated in a relatively small number of customers. Of course, many ICT vendors are chasing the service provider trend by transforming their own business models accordingly, positioning themselves as service providers with offerings in the cloud space in particular. However, for many of these firms, revenue from these new business opportunities still represents a relatively small share of overall sales, leading to a bumpy transition. Meanwhile, we expect new digital service providers to add significant disruption to the overall market in the next ten years as the global economy shifts into an 'as-a-service' model for B2C transactions in particular."

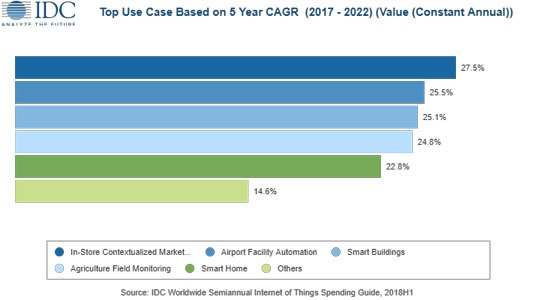

IoT spending to grow by 20 percent

Revenues for the European Internet of Things (IoT) market are forecast to increase by 19.8% year on year to reach $171 billion in 2019, according to the latest update to the Worldwide Semiannual Internet of Things Spending Guide from International Data Corporation (IDC). Total spending on IoT solutions in Europe will maintain a double-digit annual growth rate throughout the 2017–2022 period and is expected to surpass $241 billion in 2022. The forecast is based on IDC's research into the expanding IoT technology market, which addresses business investment opportunities and use case implementations across a spectrum of industries.

While the appetite for IoT solutions is evident across the entire region, Western Europe will account for the lion's share of the market. Germany will be the European IoT champion in 2019, with spending exceeding $35 billion. Adoption of IoT technology in other European countries will also soar, with France and the U.K. each spending over $25 billion, followed by Italy with $19 billion. Central and Eastern Europe (CEE) will account for 7% of the total European IoT revenues in 2019.

"We’re still just scratching the surface of how powerful IoT solutions can be when combined with the massive scale of IoT endpoints, world-class connectivity and advanced technology," said Milan Kalal, program manager at IDC. "That said, organizations across industries are gradually experiencing that driving business outcomes with IoT requires not only new technologies and expertise in areas like edge infrastructure, wired and wireless networking, security, and edge-to-cloud architectures, but also viable use cases that deliver short-term results and help drive a strategic IoT innovation road map."

The industries that are forecast to spend the most on IoT solutions in 2019 are discrete manufacturing ($20 billion), utilities ($19 billion), retail ($16 billion), and transportation ($15 billion). IoT spending among manufacturers will be largely focused on solutions that support manufacturing operations and production asset management. In the utilities sector, IoT spending will be dominated by smart grids for electricity, gas, and water. Omni-channel operations will be the single largest use case within the retail sector. In transportation, two-thirds of IoT spending will go into freight monitoring and logistics solutions. The industries that will see the fastest annual growth rates throughout the 2017-2022 period are retail (18.5%), healthcare (17.9%), and state/local government (17.1%).

All that said, the true leader for IoT spending in 2019 will be the consumer segment, with revenues exceeding $32 billion. The largest consumer use cases will be related to the smart home, personal wellness, and connected vehicles. Within smart home, home automation and smart appliances will both experience strong spending growth over the forecast period and will help make consumer the fastest-growing industry segment overall with a five-year CAGR of 20.0%.

Forecasting spending growth by use case over the 2017-2022 period provides a picture of where other industries will be making their IoT investments. These include in-store contextualized marketing (retail), airport facility automation (transportation), smart buildings (professional services), agriculture field monitoring (resource industries), and smart home (consumer).

"We are now experiencing a dichotomy scenario across European IoT adopters: While a few advanced users are leveraging IoT technologies in full swing, there is still a large portion of users struggling to prove and replicate initial pilots and proof of concepts," says Andrea Siviero, a research manager at IDC.

Hardware will be the largest technology category in 2019, with revenue of $66 billion led by module and sensor purchases. IoT services will be close behind at $60 billion, going toward traditional IT and installation services as well as non-traditional device and operational services. IoT software spending will total $35 billion in 2019 and will see the fastest growth over the five-year forecast period with a CAGR of 18.9%. IoT connectivity spending will total $10 billion in 2019.

Storage capacity to double by 2023

In its inaugural Global StorageSphere forecast, International Data Corporation (IDC) estimates that the installed base of storage capacity worldwide will more than double over the 2018-2023 forecast period, growing to 11.7 zettabytes (ZB) in 2023.

IDC's Global StorageSphere measures the size of the worldwide installed base of storage capacity across six types of storage media, and how much of the storage capacity is utilized or available each year. The installed base of storage capacity is a cumulative metric: it is equivalent to the amount of new storage capacity deployed over the span of several years minus the systems or storage devices that either fail or are retired or decommissioned each year. The Global StorageSphere is a product of IDC's Global DataSphere program, which measures how much new data is created and/or replicated each year.
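The cumulative definition above can be illustrated with a minimal sketch. The function name and every figure below are hypothetical and chosen only to show the arithmetic; they are not IDC data or IDC's methodology.

```python
def installed_base(starting_base_zb, deployed_zb, retired_zb):
    """Roll an installed base forward year by year.

    Each year's base = previous base + new capacity deployed
                       - capacity that failed or was retired.
    All values are in zettabytes (ZB) and purely illustrative.
    """
    base = starting_base_zb
    history = []
    for added, removed in zip(deployed_zb, retired_zb):
        base = base + added - removed
        history.append(base)
    return history


# Hypothetical inputs: a 5.0 ZB starting base, two years of
# deployments and two years of retirements/failures.
history = installed_base(5.0, [1.5, 1.8], [0.3, 0.4])
for year, base in enumerate(history, start=1):
    print(f"Year {year}: {base:.1f} ZB installed")
```

The point of the sketch is that the installed base grows more slowly than the capacity shipped in any one year, because retirements and failures are subtracted each year.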

"The Global StorageSphere is large and diverse, encompassing many different storage technologies, and growing rapidly," said John Rydning, research vice president at IDC. "Within the Global StorageSphere, enterprises, especially cloud providers, are increasingly being relied upon to store and manage the world's repository of data."

Key findings from the Global StorageSphere forecast include the following:

Can data centres handle sporadic faults?

Asks Tony Lock, Director of Engagement and Distinguished Analyst, Freeform Dynamics Ltd.

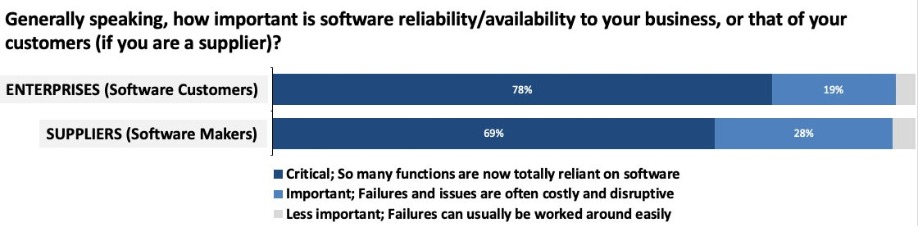

Data centres have been providing services to users for decades, and the expectation has always been that system failures and interruptions would be kept to a minimum and, perhaps more importantly, that should something go wrong it would be fixed as quickly as possible.

The problem is that the popular idea of what constitutes an acceptable minimum number of interruptions has fallen so low that many IT services are now expected simply to always work, without fail. And, goodness knows, if something does go wrong, the expectation is that it will be fixed within minutes, if not seconds. This has made software reliability a critical issue for both the vast majority of enterprise users and the technical staff whose job it is to keep everything running smoothly (Figure 1).

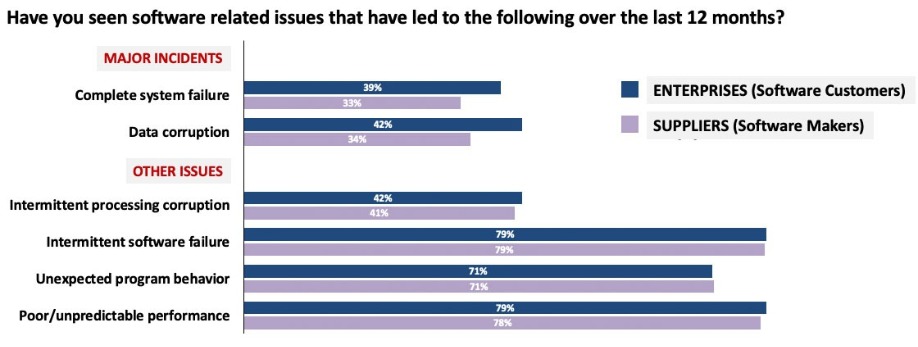

A [recent report by Freeform Dynamics](https://freeformdynamics.com/software-delivery/optimizing-software-supplier-customer-relationship/) highlights the fact that despite considerable effort and expense, and the development of new architectures and operational processes, IT services still suffer interruptions. This is true across organisations of all sizes and in all verticals, and it applies to the producers of software as well as to the users of commercial applications (Figure 2).

Major incidents and intermittent problems abound

Over a third of respondents reported major incidents such as complete system failure and data corruption. But while major systems failures can have a huge impact, DR plans and procedures often exist to deal with them, with the expectation that key resources will be mobilised immediately. In some ways more challenging are issues that crop up and then disappear. If you’re lucky, such intermittent problems are simply caused by bugs in sections of the application software that only run in unusual circumstances; these are relatively easy to track down, if not always to remediate.

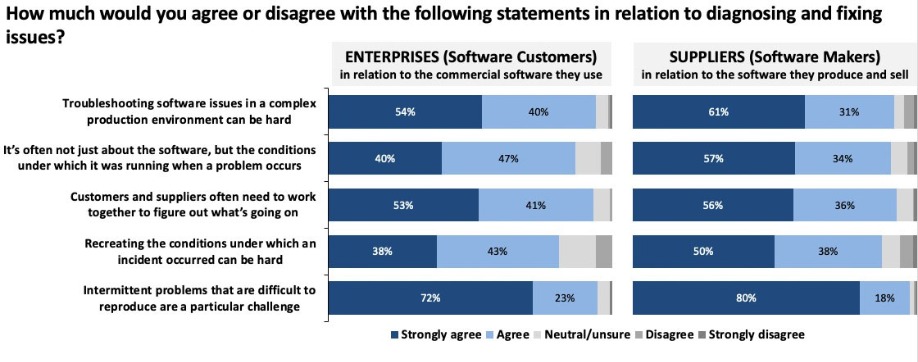

More problematic are failures that occur only when the application code executes under an unusual combination of conditions in the overall systems environment, potentially including factors from the operating system, middleware stacks, virtualisation platforms or even the precise nature of the physical environment. And such unusual combinations are more likely to arise as systems become more dynamic in how resources are allocated at execution time.

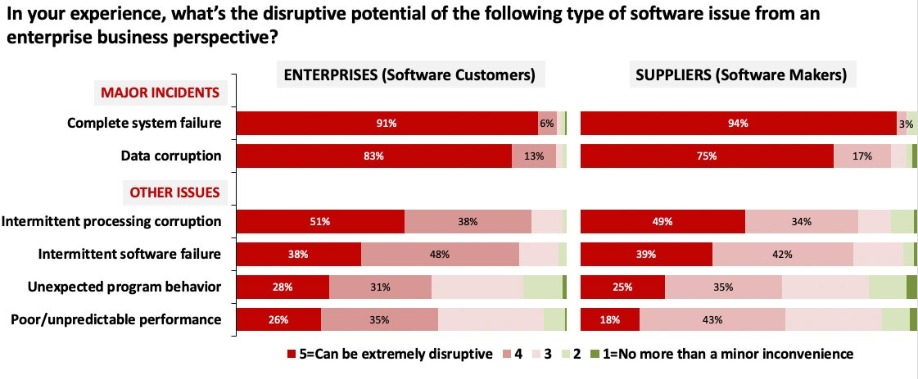

Indeed, more respondents stated they had experienced sporadic problems, such as intermittent software failure, intermittent processing corruption, unexpected program behaviour or unpredictable performance, than reported major systems outages. And when it comes to the impact of these software issues, the survey paints a disturbing picture (Figure 3).