By Philip Alsop

Maybe not exactly what those waiting at Gatwick the other week, to take to the skies for their holidays, could be heard shouting, but, nevertheless, a refreshing reminder that, no matter what the level of automation and artificial intelligence, good old human error will never be eliminated. Ah, but surely if it had been a gang of robots working away at the airport, they would have been programmed to recognise what cables to cut through (ideally, none!) and what to leave alone?! Maybe, but that pre-supposes that the person or persons doing the programming would be capable of imagining every single work-related scenario and coming up with the appropriate response for the robots to make.

Okay, so we might end up in a world where robots programme robots, but even then, there will always be fresh, unthought-of situations that are outside the knowledge and experience of both humans and robots. In which case, would you rather trust automation, however intelligent, humans able to think outside the box, or a mixture of the two, working in tandem?

Not sure there’s an easy answer to that question. However, for what it’s worth, my money is on (yet another) hybrid world. A world where robots lead and humans follow/support, sometimes; and where humans lead and robots follow at others. AI, ML, IoT, RPA, VR and more are here to stay, but they just might not eliminate the need for humans any time soon. After all, Gatwick had to rely on humans, plus pens, plus whiteboards, to solve its IT failure!

Lack of digital skill sets is the greatest barrier to transformation.

Infosys has released global research, The New Champions of Digital Disruption: Incumbent Organizations, which reveals that under a quarter of organizations surveyed understand that commitment to digital is at the heart of true transformation. It is these organizations that are reaping rewards from digital disruption. According to the research, more than half of all respondents rank a focus on digital skill sets as the most important factor in successful transformation, followed by senior leadership commitment and change management, implying the need for a conducive organizational culture.

Visionaries, Watchers and Explorers

The research identifies three clusters of respondents based on the business objectives behind their digital transformation initiatives.

True transformation begins from the core

While Watchers and Explorers are primarily focusing on emerging technologies like Artificial Intelligence (AI), Blockchain and 3D printing for digital transformation initiatives, Visionaries are not only looking at emerging technologies, but are also focusing strongly on core areas such as mainframe and ERP modernization.

Visionaries believe that true transformation comes from the core and without this in the background, digital technologies will not perform to their potential. The study reflects that their commitment to modernizing from the core will yield benefits, such as improved productivity and efficiencies.

Agility in championing digital disruption

Visionaries watch and explore futuristic trends which currently escape the notice of the other two cohorts. They boast greater clarity on the opportunities and threats of digital disruption than Explorers and Watchers, as well as a greater ability to execute on them.

Visionaries look further into the future. They attach a higher rating to the impact of market drivers such as Emerging Technologies (86 percent Visionaries vs 63 percent Explorers, 50 percent Watchers) and Changing Ecosystems (63 percent vs 39 percent, 31 percent) – enabling them to be agile and disruptive.

Lack of digital skill set – greatest barrier

When ranking barriers on the path to digitization, building digital skill sets was found to be the most prevalent (54 percent) challenge for organizations, highlighting the lack of digital skill set available.

Transforming from a low-risk organization to one that rewards experimentation (43 percent) and lack of change management (43 percent) were the second and third greatest barriers, showcasing the turbulence and resistance to change associated with digital transformation.

The importance of establishing an ecosystem

Building in-house capabilities was on the list of 76 percent of Visionaries, who were keen on acquiring digital native firms to quickly gain the digital skills that 71 percent of the Visionaries believed were lacking in-house. This showcases the increasing trend towards acquisitions and the development of a sustainable ecosystem. By comparison, the proportion of Explorers and Watchers looking at the acquisition and ecosystem options was negligible.

Pravin Rao, Chief Operating Officer, Infosys, said, “Navigating the digital disruption requires companies to drive a holistic approach to transformation and foster a digital culture that brings together leadership commitment and a renewed approach to skill building. Infosys with its long standing partnerships with global corporations is focused on accelerating their digital transformation journey from their core systems while building new capability to drive competitive advantage.”

Overcoming barriers to digital transformation

Enterprises are relying on their transformation partners to help them scale barriers. Preparing the workforce for digital transformation and developing strong capability in managing large organizational change have emerged as the top strategies to overcome these barriers. This is especially critical to Visionaries, who are aiming to transform business culture.

Jul 16th, 2018


A new survey into digital effectiveness has revealed that ‘Product Thinking’ is helping organisations become more digitally effective.

The survey, carried out by digital agency Code Computerlove, a forerunner of product thinking as an approach, found some clear differences in the attitudes, behaviours and methodologies of top-performing businesses versus the mainstream when it comes to their digital products.

Key findings included:

Top-performing businesses are more likely to be looking to ‘increase customer lifetime value’ than their mainstream counterparts (68% vs. 47%). For successful businesses, the numbers reporting a ‘focus on outcomes in delivery’ (75%) and a ‘focus on long-term goals versus short-term targets’ (59%) were almost double those of underperforming businesses reporting the same behaviour. 82% of high performers agree strongly with the statement “Focusing on user needs leads to better business outcomes”.

The research also found that 60% of top performers are satisfied with their ability to deliver digital products on time and on budget. Conversely, only 19% of the mainstream felt the same.

50% of top performing companies (vs. 38% mainstream) are currently aiming to speed up their performance and 69% adopt agile processes in development.

Commenting on the survey, Tony Foggett, CEO of Code Computerlove, said: “Product Thinking has emerged as a way in which organisations are navigating the need to deliver increased consumer centricity and agility using larger more complex, and business critical, technology platforms.

“It’s a way of achieving digital effectiveness as part of a digital transformation process, but it’s not just a question of adopting new digital tools and platforms, it’s the ways of thinking and organising teams around these tools and across an organisation as a whole.

“Taken together the aims and approaches of the top performing businesses that completed the survey reflect ‘product thinking’ - a mindset based on continually building value for the customer through an agile, non-siloed, digitally-savvy culture with clearly defined business goals.”

In its simplest form, Product Thinking is a drive for business effectiveness delivered by maximising value from digital touchpoints. It enables organisations to prioritise their efforts more effectively and immediately correlate investment with commercial return.

Foggett added: “It was interesting to see from the survey that top performing businesses had a stronger focus on value. 68 per cent can attribute return on investment to specific digital projects; three-quarters focus on outcomes in development and are happy to change requirements if needed (during the development process) and over half focus more on long-term goals rather than just short-term targets.”

Foggett concluded: “We’re undoubtedly in a period of change and the pace of this change means companies cannot afford to develop products and projects in the traditional ways. The structures and ways of thinking that dominated businesses in the late 20th century are not suited to the demands of the 21st.

“Our report has shown that businesses that have moved on from ‘big bang’ releases, and are taking a more long term, agile and customer-value approach – akin to Product Thinking, believe that this approach is paying dividends.”

Nearly 90 percent of organizations investing in AI, very few succeeding.

A survey commissioned by Databricks, the leader in unified analytics founded by the original creators of Apache Spark™, has revealed that only one in three AI projects is succeeding and, perhaps more importantly, that it is taking businesses more than six months to go from concept to production. Organizations are hindered at multiple stages of the process when bringing AI projects to production. According to 95 percent of European respondents, collaboration between data engineering and data science teams is a challenging issue. Nearly all respondents cite data-related challenges when moving AI projects to production. IT executives point to unified analytics as a solution for these challenges, with 90 percent of respondents saying the approach of unifying data science and data engineering across the machine learning lifecycle will conquer the AI dilemma.

The research, commissioned by Databricks through CIO/IDG Research Services, shows that more than 93 percent of organizations surveyed are investing in technology to help with data prep and data exploration/modeling, including data processing, data streaming, machine learning and/or deep learning tools. As a result, organizations are using an average of seven different tools for data prep and modeling. Based on these results, it is not a surprise that European organizations cited technology as their most common obstacle when moving AI projects to production.

Additional results of the survey speak to the complexity and organizational confusion being created as companies pursue AI initiatives. The average share of AI projects considered completely successful within enterprises is around 39 percent according to those surveyed in Europe, compared to an average of 35 percent of projects in the US. In addition, 98 percent of those surveyed believe that preparing and aggregating large datasets in a timely fashion is a major challenge; 96 percent found data exploration and iterative model training challenging; and 90 percent cited deploying models to production quickly and reliably as a significant challenge.

David Wyatt, Vice President and General Manager EMEA, Databricks, commented on the survey’s results: “Getting AI right is challenging, and one of the biggest hurdles to success is how teams collaborate around data. The research shows how difficult and time consuming it can be to turn raw data into valuable insights for the business. With unified analytics, enterprises can bring together their people, processes and technology to deliver results faster – not only does this make projects more efficient, it increases the chances that these projects can succeed in meeting their objectives over time.”

So, what will help these organizations conquer the AI dilemma? The surveyed executives said they need end-to-end solutions that combine data processing with machine learning capabilities. These streamlined solutions would simplify workflows, improve efficiency and ultimately accelerate business value.

In fact, nearly 80 percent of executives surveyed said they highly valued the notion of a unified analytics platform. Unified analytics makes AI more achievable for enterprise organizations by unifying data processing and AI technologies. Unified analytics solutions provide collaboration capabilities for data scientists and data engineers to work effectively across the entire AI development-to-production lifecycle. With more than 90 percent of large companies facing data-related challenges and increasing complexity driven by an explosion of machine learning tools, the need for platforms and processes that can remove technology and organizational silos is more pronounced than ever. Unified analytics provides an ideal approach for companies facing modern AI implementation barriers.

Databricks accelerates innovation by unifying data science, engineering, and business. Through a fully managed, cloud-based service built by the original creators of Apache Spark, the Databricks Unified Analytics Platform lowers the barrier for enterprises to innovate with AI and accelerates their innovation.

Databricks commissioned IDG Research to conduct analysis of the AI, machine learning and deep learning landscape in large enterprises. The survey looked to sample the experiences of those working in specific senior data engineering and data science roles at companies with more than 1,000 employees. The audience of 200 people was split equally between the United States and Western Europe.

SnapLogic has released “The 2018 Data Value Report,” a new study that reveals enterprises expect to generate a 547% return on their data investments, increasing revenue by an average $5.2 million as a result of using data more effectively. However, businesses have only scratched the surface in realizing data’s potential: On average, organizations are using only half (51%) the data they collect or generate, and data drives less than half (48%) of decisions.

The new study polled 500 enterprise IT decision-makers (ITDMs) to examine their data priorities, investment plans, and what’s holding them back from maximizing value. Conducted by independent research firm Vanson Bourne, the survey reveals that enterprises plan to invest an average $1.7 million in operationalizing data over the next five years – more than double what they are spending today. Yet enterprises are far from achieving their data-driven ambitions. Despite the clear business case for data investments, enterprises struggle to reap the rewards, held back by manual work, outdated technology, and lack of trust in data quality.

The new report provides a benchmark as enterprises develop strategies for bringing data-driven decision-making to all parts of their organizations. Key findings include:

“There’s a saying that every business must be a software business, but what they should really focus on is becoming a data company,” said Gaurav Dhillon, CEO at SnapLogic. “Businesses understand that dedicating time, money, and talent to data will lead to long-term revenue gains, yet in reality most enterprises are still far from generating significant value and ROI. Legacy systems, tedious manual labor, and the sheer volume of information are preventing organizations from maximizing their data-driven potential. The enterprises that act now to spread data literacy throughout their business will be the ones to thrive.”

A recent article by Steve Gillaspy of Intel outlined many of the challenges faced by those responsible for designing, operating, and sustaining the IT and physical support infrastructure found in today's data centers. This paper targets four of the five macro trends discussed by Gillaspy, how they influence the decision making processes of data center managers, and the role that power infrastructure plays in mitigating the effects of the following trends.

Jul 18th, 2018


Despite 95 percent of CIOs expecting cyberthreats to increase over the next three years, only 65 percent of their organizations currently have a cybersecurity expert, according to a survey from Gartner, Inc. The survey also reveals that skills challenges continue to plague organizations that undergo digitalization, with digital security staffing shortages considered a top inhibitor to innovation.

Gartner's 2018 CIO Agenda Survey gathered data from 3,160 CIO respondents in 98 countries and across major industries, representing approximately $13 trillion in revenue/public sector budgets and $277 billion in IT spending.

The survey indicates that cybersecurity remains a source of deep concern for organizations. Many cybercriminals not only operate in ways that organizations struggle to anticipate, but also demonstrate a readiness to adapt to changing environments, according to Rob McMillan, research director at Gartner.

"In a twisted way, many cybercriminals are digital pioneers, finding ways to leverage big data and web-scale techniques to stage attacks and steal data," said Mr. McMillan. "CIOs can't protect their organizations from everything, so they need to create a sustainable set of controls that balances their need to protect their business with their need to run it."

Thirty-five percent of survey respondents indicate that their organization has already invested in and deployed some aspect of digital security, while an additional 36 percent are actively experimenting or planning to implement in the short term. Gartner predicts that 60 percent of security budgets will be in support of detection and response capabilities by 2020.

"Taking a risk-based approach is imperative to set a target level of cybersecurity readiness," Mr. McMillan said. "Raising budgets alone doesn't create an improved risk posture. Security investments must be prioritized by business outcomes to ensure the right amount is spent on the right things."

Business growth introduces new attack vectors

According to the survey, many CIOs consider growth and market share as the top-ranked business priority for 2018. Growth often means more diverse supplier networks; different ways of working, funding models and patterns of technology investing; as well as different products, services and channels to support.

"The bad news is that cybersecurity threats will affect more enterprises in more diverse ways that are difficult to anticipate," Mr. McMillan said. "While the expectation of a more dangerous environment is hardly news to the informed CIO, these growth factors will introduce new attack vectors and new risks that they're not accustomed to addressing."

Continue to build bench strength

The survey revealed that 93 percent of CIOs at top-performing organizations say that digital business has enabled them to lead IT organizations that are adaptable and open to change. To the benefit of many security practices, this cultural openness broadens the organization's attitude toward new recruitment and training avenues.

"Cybersecurity is faced with a well-documented skills shortage, which is considered a top inhibitor to innovation," Mr. McMillan said. "Finding talented, driven people to handle the organization's cybersecurity responsibilities is an endless function."

According to Gartner, while most organizations have a role dedicated to cybersecurity expertise, and therefore appreciate its needs, the cybersecurity skills shortage continues. Gartner recommends that chief information security officers (CISOs) continue to build bench strength through innovative approaches to developing the security team's capabilities.

Jul 24th, 2018


Global report finds 73% of consumers would move to a competitor if a website is slow to load.

Over 80% of consumers are more frustrated by consistently slow websites than those that are temporarily down. That’s according to a global online study from Eggplant, the customer experience optimization specialists.

Eggplant polled a combined total of 3,200 UK and US adults on attitudes to website speed and performance, and found that a business with a slow or underperforming website is likely to lose 73% of its customers to a competitor.

While outages are a problem for businesses around the world, the survey reveals that a slow website is much more damaging than one that is temporarily down. To stay competitive and retain customers, retailers must focus on website speed alongside website availability.

UK findings

81% of Brits find a slow website more frustrating to use than one that is down or not working. In fact, 7 in 10 (70%) of UK adults rate website speed as important when it comes to online activity. Of this 81%, a third (33%) said website speed was very important, while only 17% said speed was not important at all.

The online research also found that three quarters (75%) of Brits would be likely to use a competitor website if the one they were using was slow. This is especially important for brands that compete entirely on price, such as ticket, hotel and travel sites.

When it comes to UK consumers, site speed is so important that almost 3 in 5 (60%) feel much more negative to a brand if its site is consistently slow to load. This is in contrast to less than a quarter (23%) who feel the same way if a site is down or not working. However, nearly half (49%) of consumers feel slightly negative towards a brand if its website is not working.

US Findings

Across the board, US consumers have the same sentiment to website speed vs. downtime. Only slightly down on the UK, 79% of Americans find a slow running website more frustrating to use than one that is down or not working. In fact, 41% of American adults rate website speed as very important when it comes to online activity. Like the UK, only a tiny proportion (1%) said that website speed was not important at all.

Americans would be fractionally less likely than those in the UK to move to a competitor if a site was slow, with 69% stating they would move (compared to 75% in the UK). Alongside this, 24% of US consumers stated they would eat less than half a donut before giving up on a website and moving to another.

When it comes to American consumers, site speed is so important that well over half (59%) feel much more negative to a brand if its site is consistently slow to load. This is in contrast to less than a quarter (23%) who feel the same way if a site was temporarily down or not working.

Responsive, fast websites are crucial to business success worldwide; without them, organizations risk losing customers to competitors. When it comes to preparing for the peak retail period, website speed is even more important than availability, and businesses need to be ready.

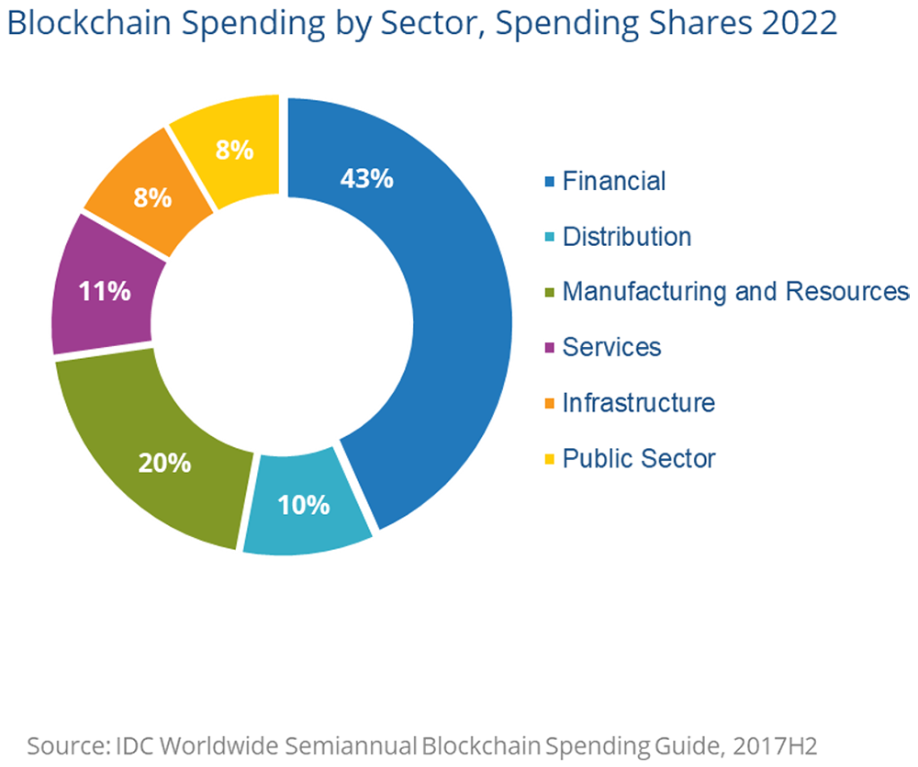

According to IDC's new Worldwide Semiannual Blockchain Spending Guide, Europe will be the second-largest investor in blockchain technologies. With a compound annual growth rate (CAGR) of 80.2% for 2017–2022, Europe will increase its spending from around $400 million in 2018 to $3.5 billion in 2022, helping it to close the gap with the U.S., the biggest blockchain investor.
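As a rough cross-check on the figures above, a compound annual growth rate can be recomputed from a start value, an end value and a number of years. The Python sketch below is illustrative only: the function names `cagr` and `implied_start` are hypothetical, the dollar inputs are the rounded figures quoted in the text, and IDC's underlying 2017 base is not given here, so the implied value is an estimate rather than a reported number.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.80 means 80%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def implied_start(end_value: float, rate: float, years: float) -> float:
    """Start value implied by an end value, a growth rate and a period."""
    return end_value / (1.0 + rate) ** years


# Rounded figures quoted in the article (US dollars); not IDC's raw data.
SPEND_2018 = 400e6
SPEND_2022 = 3.5e9
QUOTED_CAGR = 0.802  # stated for 2017-2022, i.e. five annual steps

if __name__ == "__main__":
    # Growth rate implied by the two quoted dollar figures (four annual steps).
    print(f"Growth rate 2018-2022: {cagr(SPEND_2018, SPEND_2022, 4):.1%}")
    # 2017 base implied by the quoted CAGR and the 2022 figure.
    base_2017 = implied_start(SPEND_2022, QUOTED_CAGR, 5)
    print(f"Implied 2017 spend: ${base_2017 / 1e6:.0f}M")
```

Because the quoted rate covers 2017 to 2022 while the dollar figures start in 2018, and both are rounded, the two calculations should be expected to land close to the published numbers rather than match them exactly.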

2017 was a significant year for blockchain in Europe, with companies asking themselves how blockchain solutions can help simplify, improve, and secure their businesses. A recent IDC survey across Europe, however, revealed there is still some way to go in terms of understanding blockchain applicability and usefulness, especially among smaller European companies.

"The European market is less flexible than other regions, and is also more fragmented in terms of business size," said Carla La Croce, senior research analyst, Customer Insights and Analysis, IDC. "Nevertheless, as IDC has already highlighted, 2018 is still the year of blockchain, and European companies are showing increasing interest, supported by growing investments. Companies recognize the importance of the technology and are starting to explore how it can be deployed in their business, going beyond pilots and identifying the best use cases."

According to Mohamed Hefny, systems and infrastructure solutions program manager at IDC CEMA, "Blockchain offers a huge opportunity for start-ups and in the emerging markets of the region where government support and advanced skills offer a fertile ground for things to really happen. The technology is about rapid progress and agility — and the tech giants' size and legacy are not an advantage here."

The largest and fastest-growing industry for blockchain is the financial sector, with projected spending of $173 million this year (accounting for 42% of the total). Insurance and banking are also expected to grow above the average. Other fast-growing markets are supply-chain-related segments such as manufacturing and retail, at 82.7% and 82.5% CAGR respectively. Though the biggest industries are traditionally more inclined to invest in blockchain, sectors such as utilities, professional services, and government are also expected to see strong growth. These sectors will use blockchain for transactions or to track goods and assets, with supply chain quality and provenance control among the key uses of blockchain across all regions. By 2022, IDC believes the top use cases will be trade finance and post-trade/transaction settlements, identity management, regulatory compliance, cross-border payments and settlements, and asset/goods management.

Growth will be driven by IT services, with the highest share devoted to project services and IT consulting. Services will account for more than two-thirds of growth in 2022, slightly increasing over time at the expense of software and hardware, with the latter representing only a very small share of the total. Software technologies will account for slightly less than a third in 2018, and this will decrease to a quarter in 2022. Software spending growth will be driven by security software.

IDC's Worldwide Semiannual Blockchain Spending Guide quantifies the emerging blockchain market by providing spending data for 10 technologies across 19 industries and 16 use cases in nine geographic regions. IDC defines blockchain as a digital, distributed ledger of transactions or records. The ledger, which stores the information or data, exists across multiple participants in a peer-to-peer network. There is no single, central repository that stores the ledger. Distributed ledger technology (DLT) allows new transactions to be added to an existing chain of transactions using a secure, digital or cryptographic signature. Spending associated with various cryptocurrencies that utilize blockchain and distributed ledger technology, such as Bitcoin, is not included in the spending guide. Unlike any other research in the industry, the comprehensive spending guide was designed to help IT decision makers clearly understand the industry-specific scope and direction of blockchain spending today and over the next five years.

As organizations continue to embrace digital transformation, they are finding that digital business is not as simple as buying the latest technology — it requires significant changes to culture and systems. A recent Gartner, Inc. survey found that only a small number of organizations have been able to successfully scale their digital initiatives beyond the experimentation and piloting stages.

"The reality is that digital business demands different skills, working practices, organizational models and even cultures," said Marcus Blosch, research vice president at Gartner. "To change an organization designed for a structured, ordered, process-oriented world to one designed for ecosystems, adaptation, learning and experimentation is hard. Some organizations will navigate that change, and others that can't change will become outdated and be replaced."

Gartner has identified six barriers that CIOs must overcome to transform their organization into a digital business.

Barrier No. 1: A Change-Resisting Culture

Digital innovation can be successful only in a culture of collaboration. People have to be able to work across boundaries and explore new ideas. In reality, most organizations are stuck in a culture of change-resistant silos and hierarchies.

"Culture is organizational 'dark matter' — you can't see it, but its effects are obvious," said Mr. Blosch. "The challenge is that many organizations have developed a culture of hierarchy and clear boundaries between areas of responsibilities. Digital innovation requires the opposite: collaborative cross-functional and self-directed teams that are not afraid of uncertain outcomes."

CIOs aiming to establish a digital culture should start small: Define a digital mindset, assemble a digital innovation team, and shield it from the rest of the organization to let the new culture develop. Connections between the digital innovation and core teams can then be used to scale new ideas and spread the culture.

Barrier No. 2: Limited Sharing and Collaboration

The lack of willingness to share and collaborate is a challenge not only at the ecosystem level but also inside the organization. Issues of ownership and control of processes, information and systems make people reluctant to share their knowledge. Digital innovation with its collaborative cross-functional teams is often very different from what employees are used to with regards to functions and hierarchies — resistance is inevitable.

"It's not necessary to have everyone on board in the early stages. Try to find areas where interests overlap, and create a starting point. Build a first version, test the idea and use the success story to gain the momentum needed for the next step," said Mr. Blosch.

Barrier No. 3: The Business Isn't Ready

Many business leaders are caught up in the hype around digital business. But when the CIO or CDO wants to start the transformation process, it turns out that the business doesn't have the skills or resources needed.

"CIOs should address the digital readiness of the organization to get an understanding of both business and IT readiness," Mr. Blosch advised. "Then, focus on the early adopters with the willingness and openness to change and leverage digital. But keep in mind that digital may just not be relevant to certain parts of the organization."

Barrier No. 4: The Talent Gap

Most organizations follow a traditional pattern — organized into functions such as IT, sales and supply chain and largely focused on operations. Change can be slow in this kind of environment.

Digital innovation requires an organization to adopt a different approach. People, processes and technology blend to create new business models and services. Employees need new skills focused on innovation, change and creativity along with the new technologies themselves, such as artificial intelligence (AI) and the Internet of Things (IoT).

"There are two approaches to breach the talent gap — upskill and bimodal," said Mr. Blosch. "In smaller or more innovative organizations, it is possible to redefine individuals' roles to include more skills and competencies needed to support digital. In other organizations, using a bimodal approach makes sense by creating a separate group to handle innovation with the requisite skill set."

Barrier No. 5: The Current Practices Don't Support the Talent

Having the right talent is essential, and having the right practices lets the talent work effectively. Highly structured and slow traditional processes don't work for digital. There are no tried and tested models to implement, so every organization has to find the practices that suit it best.

"Some organizations may shift to a product management-based approach for digital innovations because it allows for multiple iterations. Operational innovations can follow the usual approaches until the digital team is skilled and experienced enough to extend its reach and share the learned practices with the organization," Mr. Blosch explained.

Barrier No. 6: Change Isn't Easy

It's often technically challenging and expensive to make digital work. Developing platforms, changing the organizational structure, creating an ecosystem of partners — all of this costs time, resources and money.

Over the long term, enterprises should build the organizational capabilities that make change simpler and faster. To do that, they should develop a platform-based strategy that supports continuous change and design principles and then innovate on top of that platform, allowing new services to draw from the platform and its core services.

Gartner clients can find more information in the research note "Six Barriers to Becoming a Digital Business, and What You Can Do About Them." More information on digital business can be found in the Gartner Special Report “The Resilience Premium of Digital Business.” This collection of research focuses on how committing to resilience will equip a digital business with the mindset, resources and planning to recover from inevitable disruptions.

How do you know your digital transformation project is - or is not - going to plan? asks Nick Keen, Project Manager at Cloud Technology Solutions.

According to a number of surveys, 9 out of 10 digital transformation projects fail in some way, with over half being quite serious failures. At Cloud Technology Solutions, we know our projects are going to plan if we have understood and observed the following:

Purpose and Customer Needs

It’s crucial to identify what it is the customer wants to achieve through digital transformation and why, what’s important to them and what their success criteria are. Understanding this and knowing that your product/service is a good fit for the business already puts the project in a good starting position for success.

Business Case and Statements of Work

Both the Business Case and Statement of Work are essential for securing buy-in from senior management and all those involved with the project. Both need to be clear and concise and must be in line with business strategy and priorities. Clearly defining what it is we are doing, and what we are not doing, ensures a robust project kick off with the best chance of everything going to plan.

Success and Leadership

If the reason for the project is well founded, it is simpler to define the success criteria, which will be closely linked to the purpose. It’s also important to look at success throughout the implementation itself, such as user and business readiness and satisfaction, engagement and training. We know a project is going to plan if this success is measured throughout the project lifecycle, maintaining leadership, support and accountability.

On-time, On-budget, On-schedule

If we understand the business purpose and needs, and we have a strong business case, a detailed set of objectives and good leadership, we know the project will start off well. The project then needs to run in line with the schedule, remain on budget and stay within the agreed timescales.

How do you spot the warning signs early enough to avoid disaster?

Communication

Some of our projects are huge, with some taking nearly a year from start to finish. To that end, it is vital that we identify issues early to avoid failure in our delivery.

Lack of communication is one of the biggest problems in most projects and it is important to ensure that communication with all stakeholders is clear, regular and accurate. If members of the project aren’t talking, there’s an increased chance of miscommunication or mismatch of expectations.

Project Interest

The projects we deliver, such as Google G Suite implementation, represent big cultural changes for any business. There needs, therefore, to be a high level of interest from all stakeholders to ensure the change management element is driven forward and is successful. If there’s a lack of interest and management support at the start of the project, this will likely feed through to all staff and undermine “buy in”.

Velocity

There needs to be a number of deliverables at frequent intervals, especially on the bigger projects that last six months or longer. This not only makes tracking the project easier but drives a feeling of success too. This approach goes hand in hand with our resource planning and timeline where each week we will have deliverables against the bigger plan. A classic warning sign of a project in trouble is when things just aren’t moving!

Poorly defined objectives

All members of the project team must understand why they are doing what they’re doing and what they are hoping to achieve. If this isn’t clear from the beginning, the project will be at risk. Objectives should always be clear, concise and detailed to avoid failure or mismatch of expectations.

How do you save the project - and maybe even your job?

This depends on the problem you have to solve...

Scope Creep

Sometimes projects grow beyond original goals and need to be constantly re-assessed. If this happens, it’s important to firstly figure out what you need to do to bring it back into scope, and if you can’t, ensure a change control is in place and that everyone is onboard. Look at where previous similar projects failed and look at the lessons learnt.

At CTS, our approach is to collaborate with the customer. Scope change is not inevitable, but it happens regularly enough that both the supplier and customer will need to adapt to it once it is recognised, understanding the impact that it has on the project.

Budget

This is something that needs to be regularly monitored. Budgets generally only change due to changes in scope or the original assumptions. If budgets change there may have been scope change and that needs to be agreed with the customer to ensure there is mutual understanding of the impact that this has on the project. The earlier this is detected and raised with stakeholders, and even your manager, the better.

Behind Schedule

Assess why the project is behind. Perhaps project members were off sick or on unplanned leave, or a complicated task took longer to complete than expected. It’s important to devise a plan that doesn’t involve having to assign additional resource to the problem. Prioritise the needs and scale back on objectives. Working harder and faster also introduces mistakes, potentially putting the project further behind. This can be avoided by working out how to be flexible when working towards key milestones. Good project management software is useful for alerts and scheduling and for helping you keep the project on track.

Communication (again!)

Changes in scope, timescales, budget and outcomes must be understood by all participants in the project. Keeping clear, open, non-adversarial communications flowing has to be the primary focus of the project manager. In our experience, our customers can tolerate changes to project timelines, budgets and outcomes if the causes are reasonable. What they cannot tolerate is poor communication.

Are the risks in IT projects the same as they always were (or even worse)?

Our projects have changed. Technology is no longer an “outcome” of a project. The technology no longer fails. The outcomes now are more focused on greater efficiencies and savings. These can only be achieved by staff in our customer organisations, who need to change the way in which they interact with technology.

For instance, in two recent projects, we implemented the exact same technology in two different organisations. One organisation has reported a 50 per cent saving in staff travel costs as a result of adopting the collaboration tools. The other organisation has made negligible savings as the organisational change was not supported internally to the same extent.

The risks to our projects now are greater than ever before as most of our success criteria are based on wholesale organisational change, not simply the delivery of some tech.

With the growth of new technologies - AI, robotics, machine learning - will successful IT implementation become even harder to achieve in the future?

Emerging tech like AI, robotics and machine learning are advancing very quickly and I don’t think there’s been much attention to the impact on the digital implementation projects that we see today.

The idea behind these new technologies is that they can improve the speed, quality, and cost of a project or process and maybe even replace human resource in certain areas in the future. That represents a significant challenge to staff, who could see such tech development as a threat. Success of these types of projects requires the impacted staff to recognise and embrace the changes. The power of AI and machine learning can only be harnessed if staff recognise that their role is changing and therefore start to use the data and output from the technology to inform organisational and strategic changes.

The Internet of Things (IoT) is having a huge impact, with experts anticipating a significant increase in adoption of the technology, particularly across the enterprise. Growth Enabler predicts that the global IoT market is set to grow to $457.29 billion by 2020 driven in part by the acceptance, adoption and business applicability of IoT across numerous sectors.

By Jason Kay, Chief Commercial Officer, IMS Evolve.

According to research from Vodafone, the number of IoT adopters across all industries has already more than doubled since 2013, equating to 29 per cent of organisations globally and demonstrating how compelling the case for IoT is to businesses. However, the results from Cisco’s IoT deployment survey show how decision makers must place greater emphasis on business objectives when implementing an IoT project; 60 per cent of IoT initiatives stall at the Proof of Concept (PoC) stage and only 26 per cent of organisations have had an IoT project that they believed to be a complete success.

The conclusions of the Cisco survey may appear stark for some. IoT technology was developed with the aim of improving business efficiency and increasing value for adopters. However, according to the research, it appears the full potential of IoT is so far not being truly realised. If businesses do not achieve full value from their IoT deployments, then the growth rate of IoT could be at risk, and as a result businesses could potentially become hesitant to adopt it.

In reality, IoT has the potential to truly revolutionise technology and provide businesses with invaluable benefits, including increased efficiency and greater insight. However, it is vital that organisations and the technology vendors they collaborate with, take a considered approach to guarantee the future success of their project – and this requires a particular mindset.

In many cases, the goal of an IoT implementation is dictated by the technology. But just because the technology can provide a solution for a business, it doesn’t necessarily mean it’s the right one. As such, a shift in mindset is required across the IoT industry to prioritise the essential question when considering an IoT project: ‘why?’

Rather than putting the technology first and asking the question, ‘what technology is available and what problems can it solve?’ stakeholders should prioritise the business issues and ask, ‘what problems do we have, and how can technology help?’. Organisations constantly face challenges on multiple fronts, and collaboration across multiple teams and functions within the business is required to truly determine which solution implemented in which area will contribute the greatest effect on core purpose, and provide the biggest reward.

Consider rip-and-replace IoT solutions as an example. There is an opportunity for industries such as food retail to achieve substantial benefits and efficiencies from implementing IoT technology. However, a rip-and-replace approach to extracting the data from their estate would not be feasible, as the industry operates in a fast-moving environment with low-margin consumer goods and a high cost of infrastructure.

Suspending operations or shutting stores for re-fit risks impeding customer experience, loyalty and brand reputation, and diminishing the potential business value of the solution. As revealed by the Cisco research, the value from IoT must be generated quickly, with as little disruption to the business as possible, or the project will quickly stall. By leveraging the existing infrastructure and deploying an IoT layer across the existing environment, organisations can unlock the data that is inherent within it and achieve ROI and tangible value within a matter of months, even weeks. Rapidly releasing value from IoT is essential in adopting an outcomes-led strategy and accelerating the shift into digitisation with low capital investment and high return on investment.

With full business-wide collaboration and an outcomes-led approach, businesses can partner with vendors that can rapidly deploy sustainable, scalable and – above all else – valuable IoT projects. It is only through organisations deploying established and repeatable solutions that deliver results far beyond proof of concept, that IoT will be able to fulfill its true potential.

Managed services providers (MSPs) must see the wider picture in order to provide the strategic vision that resonates with customers. That strategic partner status is a vital part of the relationship but needs nurturing as part of that all-encompassing vision.

It is clear from research by Gartner and others that MSPs globally are facing a multi-faceted struggle to grow their businesses. On the one hand, there is competition from the public cloud players such as AWS, Google and Microsoft who set pricing levels and have reach but are unable to customise or tailor their offerings to specific verticals. The bulk of MSPs are much smaller, and specialist in technologies covered and markets, but need scale to build their profitability and have limited resources.

There is a clear move to consolidate among larger players, with Gartner saying that, when compared to 2017, the entry criteria have become much harder and more stringent. The focus has squarely shifted to hyperscale infrastructure providers, it says, and this has resulted in it dropping more than 14 vendors from its top players list. According to Gartner, there are no more visionaries and challengers left in the market; only a handful of leaders and niche players driving the momentum.

At the other end of the market, among smaller players, the pace of competition has stepped up and they are feeling a major pressure to differentiate, either on skills, markets covered, geographical coverage or in customer relations.

This is a common feature of the MSP market on a global scale. The answer is always to build and then demonstrate expertise and understanding in the marketplace. As Gartner's research director Mark Paine told April’s European Managed Services Summit: “The key to a successful and differentiated business is to give customers what they want by helping them (the customer) buy”.

One way to win more customers is by showing them their place in the future, according to Jim Bowes, CEO and founder of digital agency Manifesto, and Robert Belgrave, chief executive of digital agency hosting specialist Wirehive. The two experts will be covering the marketing aspects at the UK Managed Services & Hosting Summit in London on September 29th. Agenda here: http://www.mshsummit.com/agenda.php

They draw on a wider experience, arguing that the managed services and hosting industry isn’t the only one having to undergo rapid adjustments due to technological advances and changing customer expectations. Customers are experiencing the same disorientation, and need help figuring out how their IT infrastructure needs to evolve over the next five to ten years. Which means it’s time to ditch the old marketing models built on email lists and dry whitepapers. It’s time to get agile, personalised and creative, they will say.

A key part of the event will also be hearing from the experiences of MSPs themselves and looking at established winning ideas. MSPs already confirmed will relate their stories on business-building including how they use managed security services, how MSPs can position security without terrifying the customer, and how they work with customers to keep their lights on.

Now in its eighth year, the UK Managed Services & Hosting Summit event will bring leading hardware and software vendors, hosting providers, telecommunications companies, mobile operators and web services providers involved in managed services and hosting together with Managed Service Providers (MSPs) and resellers, integrators and service providers migrating to, or developing their own managed services portfolio and sales of hosted solutions.

It is a management-level event designed to help channel organisations identify opportunities arising from the increasing demand for managed and hosted services and to develop and strengthen partnerships aimed at supporting sales. Building on the success of previous managed services and hosting events, the summit will feature a high-level conference programme exploring the impact of new business models and the changing role of information technology within modern businesses.

You can find further information at: www.mshsummit.com

Artificial Intelligence has lately become one of the most fashionable of technical buzzwords. Historically, though, it has often been used by researchers in the field as an aspirational term to reference technology that doesn’t quite work yet. Behind the cynicism, though, is some technology that really does work and really can do useful tasks. That technology is machine learning, which can be viewed as just a new way to program computers to perform tasks that we don’t quite understand (yet).

By Ted Dunning, Chief Application Architect, MapR.

One interesting way to look at machine learning is to view it as writing a program based on data rather than explicit coding. This is revolutionary compared to standard programming and can be incredibly powerful when applied to problems where we have lots of the right kind of data and where we don’t entirely understand how to solve a problem explicitly. Through the process of machine learning, we can mathematically solve for a program that can do something that we can’t code.

Let’s take a real-world example of the power of data. One of our customers, a financial service provider, was hit by a major denial of service attack but the security team had archived headers on all web requests for some time by the time of the attack. Having large volumes of detailed data allowed that security team to find a systematic difference between the data flooding in from the attackers and data coming from ordinary users. That difference could never have been noted without the archived data. This was an example where having lots of data turned what would have been an intractable problem into one where an important solution could be inferred from that data.
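To make the idea concrete, here is a minimal sketch of how archived request headers might be compared to find a systematic difference between two populations of traffic. It is not the method the security team used; the header names, the sample records and the `min_gap` threshold are all hypothetical, chosen only for illustration.

```python
from collections import Counter


def header_value_rates(requests, header):
    """Return the share of requests carrying each value of one header."""
    counts = Counter(req.get(header, "<missing>") for req in requests)
    total = sum(counts.values())
    return {value: n / total for value, n in counts.items()}


def distinguishing_values(normal, suspect, header, min_gap=0.5):
    """List values of a header that are far more common in suspect traffic."""
    normal_rates = header_value_rates(normal, header)
    suspect_rates = header_value_rates(suspect, header)
    return sorted(
        value
        for value, rate in suspect_rates.items()
        if rate - normal_rates.get(value, 0.0) >= min_gap
    )


# Hypothetical archived headers (illustrative data, not from the incident).
normal_traffic = [
    {"User-Agent": "browser-a", "Accept-Language": "en-GB"},
    {"User-Agent": "browser-b", "Accept-Language": "en-US"},
    {"User-Agent": "browser-a", "Accept-Language": "fr-FR"},
    {"User-Agent": "browser-c", "Accept-Language": "en-GB"},
]
attack_traffic = [
    {"User-Agent": "browser-a"},
    {"User-Agent": "browser-a"},
    {"User-Agent": "browser-b"},
    {"User-Agent": "browser-a"},
]

flagged = distinguishing_values(normal_traffic, attack_traffic, "Accept-Language")
```

In this toy data the attackers never send an `Accept-Language` header, so the missing value is flagged, while `User-Agent` alone does not separate the two groups. The point is the same as in the story: without the archive of ordinary traffic there would be no baseline to compare against.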

This all sounds great, but there are some really major gotchas. First of all, the learning itself, the part of the process that everybody is so breathless about, is actually only a small part of the problem. The biggest issue is to find a suitable candidate for solving with machine learning. The second biggest issue is the logistics surrounding the deployment and management of models.

Finding a way that machine learning can contribute to a business is both much harder and much easier than it looks. It is much harder because many tasks don’t have clear enough definitions or good enough opportunities to collect the data needed to train a model. It is also much harder because the resulting model not only has to do what the old business process used to do (with acceptable accuracy and such), but it also has to do it in such a way as to improve the business outcomes by a large enough amount to make it worthwhile to build the requisite model and maintain it. For instance, if you have a lemonade stand that brings in $2 each weekend during the summer, adding a machine learning model that improves sales by 20% isn’t going to be enough to pay for a data scientist. That is unless the data scientist is your little sister who will work for a cut of the lemonade. That said, most reasonably large businesses have lots of places where machine learning can make a big difference … it is just that the best opportunities won’t be where you look first.
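The lemonade stand arithmetic can be written down in a few lines. This is only a back-of-the-envelope sketch: the $2 per weekend and the 20% improvement come from the example above, while the number of summer weekends and the cost of a data scientist are assumptions made up for illustration.

```python
def annual_model_uplift(revenue_per_period, periods_per_year, uplift_fraction):
    """Extra revenue per year that a model adds on top of the baseline."""
    return revenue_per_period * periods_per_year * uplift_fraction


def is_worthwhile(uplift, annual_cost):
    """A model only pays for itself if the uplift exceeds what it costs."""
    return uplift > annual_cost


SUMMER_WEEKENDS = 13             # assumption: roughly three months of summer
DATA_SCIENTIST_COST = 100_000.0  # assumption: illustrative annual cost in dollars

uplift = annual_model_uplift(2.0, SUMMER_WEEKENDS, 0.20)
lemonade_ok = is_worthwhile(uplift, DATA_SCIENTIST_COST)

# The same 20% improvement on a hypothetical $5m-a-year business line.
large_uplift = annual_model_uplift(5_000_000.0, 1, 0.20)
large_ok = is_worthwhile(large_uplift, DATA_SCIENTIST_COST)
```

Under these assumptions the stand gains only a few dollars a year, nowhere near the cost of the work, whereas the same percentage improvement on a large revenue line clears the bar comfortably. The useful habit is to run this calculation before any modelling starts.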

Dealing with the logistics of deploying and maintaining models is the other major under-recognised challenge of machine learning. Once you know a good niche in your business for a model, you can expect 90% or more of the effort of getting that model into production to be devoted to the logistics of handling data and deploying the model. The biggest reason for this is that a model isn’t really like an ordinary program. Because a model is learned from data to solve a problem that you probably don’t entirely understand (or else you would have just written a program to solve the problem), you probably also don’t know how to test the model except by comparing the results it produces in production. Essentially, you will know a better model when you see one but you can’t say ahead of time which model it will be.

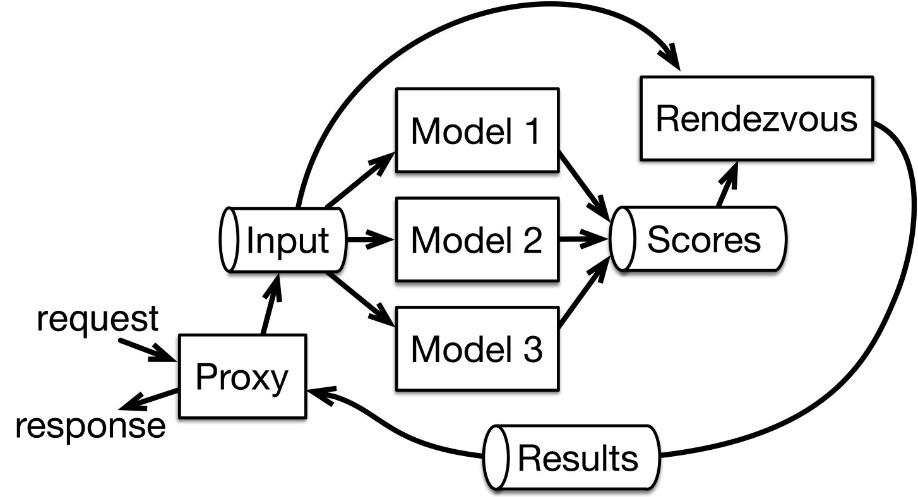

The result of this situation is that, except in the simplest of model applications, it is common to deploy multiple versions of a model. One model, the “champion”, is considered the best performing model. Others, the “challengers”, are either previous champions or are newer models that we think might be good enough to unseat the current champion. It is also common to keep an older champion around as a canary so that we can compare the outputs of different models over a long period of time to get an idea of trends and changes.
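A minimal sketch of the champion and challenger idea follows. It is not the rendezvous architecture, only an illustration of the bookkeeping involved: every model sees every request, only the champion's answer is returned, and all outputs are logged so the models can be compared later. The class name, the method names and the toy models are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[float], float]


class ChampionChallenger:
    """Run every model on each request, but answer with the champion only."""

    def __init__(self, champion: str, models: Dict[str, Model]):
        if champion not in models:
            raise ValueError(f"unknown champion: {champion}")
        self.champion = champion
        self.models = models
        self.log: List[Tuple[float, Dict[str, float]]] = []

    def predict(self, x: float) -> float:
        # Record what every model said so they can be compared afterwards.
        outputs = {name: model(x) for name, model in self.models.items()}
        self.log.append((x, outputs))
        return outputs[self.champion]

    def mean_abs_error(self, actuals: List[float]) -> Dict[str, float]:
        """Score each model against outcomes observed after the fact."""
        if len(actuals) != len(self.log):
            raise ValueError("need exactly one actual value per logged request")
        totals = {name: 0.0 for name in self.models}
        for (_, outputs), actual in zip(self.log, actuals):
            for name, value in outputs.items():
                totals[name] += abs(value - actual)
        return {name: total / len(actuals) for name, total in totals.items()}

    def promote(self, name: str) -> None:
        """Make a challenger the new champion without stopping the service."""
        if name not in self.models:
            raise ValueError(f"unknown model: {name}")
        self.champion = name


# Hypothetical toy models: a current champion, a challenger and an old canary.
router = ChampionChallenger(
    champion="v1",
    models={
        "v1": lambda x: 2 * x,
        "v2": lambda x: 2 * x + 1,
        "v0": lambda x: x,
    },
)
first = router.predict(1.0)
second = router.predict(2.0)
errors = router.mean_abs_error([3.0, 5.0])  # outcomes observed later
best = min(errors, key=errors.get)
router.promote(best)
```

The design choice worth noting is that comparison happens on production traffic rather than on a test suite, which matches the point above that a better model is usually recognised only once it has been seen in action. Keeping the old model in the pool as a canary costs little and gives a stable reference when behaviour drifts.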

Maintaining all of these model versions can be challenging. One approach to this is detailed in the latest book that Ellen Friedman and I have written entitled Machine Learning Logistics. In this book we describe the rendezvous architecture that makes it relatively painless to manage, compare and monitor all of these models, deploying new models and retiring old ones all while maintaining zero downtime.

About the author

Ted Dunning is Chief Application Architect at MapR and has years of experience with machine learning and other big data solutions across a range of sectors. Ted was the chief architect behind the MusicMatch (now Yahoo Music) and Veoh recommendation systems. He built fraud detection systems for ID Analytics (later purchased by LifeLock) and he has 24 patents issued to date plus a dozen pending. Ted has a PhD in computing science from the University of Sheffield and is active with open source projects as committer, PMC member, mentor and currently serving as a board member for the Apache Software Foundation. When he’s not doing data science, he plays guitar and mandolin. He also bought the beer at the first Hadoop user group meeting.

As consumers, we live in a digital ecosystem almost without even realising it. We interact with brand touchpoints many times a day, and in each of these our brand perception is shifting and evolving. More than this, rather than buying products from companies, we buy into their brand. Customers for any product or service are now expecting the personalised touch, and a tailor-made customer experience to be delivered through their screens.

By Ian Matthews, Data Evangelist at NGDATA.

The explosion of big data has therefore presented businesses with the opportunity to rethink and reshape their brand attitude and tone. However, it has also posed a more pragmatic challenge of how to maximise the potential hidden in the huge data sets they have been building over years. This is where customer analytics comes into play. Analytics can turn the massive amounts of data you already have on your customers into insights. Keep in mind that this is something different than traditional analytics or business intelligence. Whereas traditional analytics look into the past to predict trends, customer analytics look for what a customer will do as an individual.

The ability to leverage and maximise the value of this treasure trove is now more mission critical than ever. Building relationships with customers now involves more frequent, but far shorter, interactions. Yet it is on these that brands rely to build the lifeblood of any company – brand loyalty. Customers have more access points than ever before to their service provider. They can interact via a company’s website or app, through phone calls, emails, texts, and via social media and chat. These kinds of interactions take less time than an in-person visit, but they happen much more frequently. They are also more superficial than in-person interactions, making it even more important to have each one count.

Companies need to take advantage of all of these touch points and interaction moments to provide real value. The only way to do this is to be relevant in ALL areas of messaging, timing, and to ensure these are relevant to the customers’ context.

But how does one become relevant? The first step is understanding who your customers are.

Let’s put it in Context

Customer data analytics are powerful. Companies today have access to layers upon layers of information about their customers, from location and age, to spending habits, to attrition tendencies, to product and communications preferences, to behavioural intelligence.

Consider a recent report from IDC which predicts that data creation will reach a total of 163 zettabytes by the year 2025, a ten-fold increase in worldwide data. Companies have so much data at their fingertips, but unless that information is channelled and used effectively, it’s useless.

Contextual relevance is the most important factor in extracting value from data. Understanding human behaviour, but not being able to apply it to present-day context, such as where someone is or what their actions were in the last hour, will only allow a company to better understand what has happened in the past. There is little value in living in the past, when a business’s value is counting on what happens in the now. This is especially true when considering a customer’s experience. If the content ignores a customer’s context, the brand’s marketing efforts will be futile, the needs of their customers will go unmet and customer relationships will diminish significantly.

Real-time reactions

So how does a company use their data to deliver relevance? The answer boils down to having actionable insights available at your fingertips. In order to leverage knowledge on the customer context, companies must move to a process which combines long-term historical insights with up-to-the-minute processing of real-time behavioural data. Companies must then use the enormous amount of existing user data to constantly create connected user experiences; as compared to those created by technology companies that are dependent on web traffic activity and information alone. Productively utilising customer data allows a company to determine what a customer is most interested in, and to create a personalised experience where content, products and/or services are presented to customers before they even realise their needs. As customers’ expectations of their favourite brands increase, they have more of an affinity for those that offer more pertinent information, instruction and added convenience to their lives.

Companies also need to let marketers truly manage the conversation with the customer, both in- and outbound. Companies can do this by using their data to deliver the most relevant, timely and contextually-aware actions that match the needs of each and every individual customer. When a company or brand can execute on the insights gleaned, they’ll become transformative in the way they approach marketing.

Taking the customer journey full circle

By leveraging the context found in data, brands can provide customers with an optimised experience that is more relevant and consistent across all channels. Personalised service can be adapted to their present needs and interests, and tied to the complete customer context – their location, most recent purchases, complaints, etc.

For a company, the value of processing customer data with analytics software can be about optimum marketing results, with more precise targeting, more connected experiences and increased campaign efficiency. This gives companies the ability to acquire the right customers, provide them with excellent service and products, and be more focused on which customers to retain, at what cost. The value of customer data is beneficial for both the customer and the service provider as they truly begin to operationalise insights on customer data and behaviour.

But crucially, data in itself isn’t smart – it takes a level of processing to develop the insights that drive businesses forward. Companies can only utilise data to its full potential by harnessing and harmonising the assets at their disposal, unifying multiple entry formats, systems, and processes. Only once this has been achieved can the power of AI-powered programmes and platforms drive actionable insights to boost customer engagement. Creating end-to-end systems such as these mimics the customer journey in itself, turning dumb data into true connection between business and customer.

So, how is your business building a marketing segment of one that will secure a plethora of loyal customers for the future?

Despite the rapid rise of public cloud platforms and the various benefits they offer to enterprises, private infrastructure still remains an essential component of many IT strategies.

By Mark Baker, Field Product Manager at Canonical.

This is an idea that many enterprises have traditionally been hesitant to embrace, primarily due to the costs involved. However, recent research has shown that private infrastructure doesn’t have to require the vast investment it once did and can now actually be just as cost-efficient as the public cloud.

What’s more, running private data centres and clouds alongside public platforms enables companies to keep using the infrastructure that they have been investing in for years and which is customised to meet their specific needs. It can also provide reassurances around security and data protection, both of which are key considerations for every organisation in the era of GDPR and stringent compliance requirements.

Adopting a multi-cloud approach that uses a combination of public and private platforms means businesses can run workloads where they are best suited. What’s more, they can be much more flexible in responding to capacity needs and maximise the return on their cloud investment.

As a result, we’re now seeing more and more enterprises turn to multi-cloud strategies, giving them the flexibility and agility required to operate in today’s digital world.

However, such an approach only works if businesses are able to run a cost-efficient data centre themselves, which is not something that all enterprises are able to say.

Dirty data centres

The use of private infrastructure is nothing new. Many organisations have been running their own data centre for years, particularly in industries such as financial services that have strict regulatory requirements around the storage and use of sensitive customer data.

However, a closer look at the inner workings of these data centres often reveals a huge amount of over-expense, accompanied by a tangled mess of machines and wires that very few people properly understand or know what to do with.

This is not unusual when a data centre has been operational for several years. We all know how cluttered computer wires can get in our personal lives and this issue is amplified exponentially when talking about a data centre containing hundreds, thousands or even hundreds of thousands of servers. After all, taking the time to regularly tidy everything up is a luxury few businesses can afford, especially if it requires some system downtime.

Internal knowledge gaps can also be a problem. There may only be a handful of people within the business who are fully aware of the data centre’s inner workings, which could present some serious issues if these people leave the business or aren’t on-hand to respond to an emergency.

Finally, businesses with dirty data centres are unlikely to be getting the best return on their investment. Infrastructure inefficiencies can add significant expense to data centre operations and internal processes, thereby impacting employee productivity and, ultimately, the bottom line.

The result is that many businesses are being tempted into ditching their private infrastructure in favour of public cloud platforms. That way, they can hand off the time-consuming and expensive maintenance jobs to someone else, leaving them to focus on growing their business. But is this really the best way forward?

Spring cleaning

So, data centres can get messy; that’s something no organisation can avoid. For some, this creates the feeling that the public cloud is the only real option and that their own data centres are not worth the hassle.

However, thinking this way would be a mistake. Rather than neglecting them, businesses should be focusing on cleaning up their private infrastructures and re-crafting a leaner, cleaner data centre.

Not only does this have the potential to significantly improve any enterprise’s return on investment, it can also bring private cloud economics back in line with the perceived cost efficiencies of using public cloud providers.

This is where automation plays a key role. Through automation, enterprises can simplify processes and eliminate time-consuming manual operations. The more day-to-day tasks can be automated, the more businesses can remove the administrative burden that has traditionally hampered many data centre operations.

This would free up IT teams to focus on making improvements and bringing value to the business, rather than having to spend time fighting fires and getting bogged down in the nitty-gritty of data centre management.

Other technologies such as machine learning or artificial intelligence can also be incorporated. This can provide insight into operational efficiencies, as well as enabling businesses to optimise their data centre’s performance and save money in the process.

Another option for businesses is to partner with providers and use their expertise to run certain parts of the data centre, which can go a long way towards streamlining internal operations.

Ultimately, cloud economics simply don’t point towards private data centres disappearing any time soon. Moving exclusively to the public cloud would be about as sensible as a business selling all its buildings and only renting a property whenever it needs somewhere new.

Whatever enterprises may think about their cloud infrastructure, it doesn’t make financial sense for private data centres to go away; it makes sense to clean them up.

That way, businesses can reap the rewards that come from running an efficient data centre and maximise the return on their cloud investment.

It is astonishing when we take a moment to ponder the prominence and influence of technology in our daily lives. Not only do we rely on it for everyday services, information, and connection, but we expect technology to deliver these experiences instantly. It’s become perfectly commonplace to “uber” a ride and watch in real time as the driver approaches, stream content on demand, or receive notification that someone is checking out your dating profile at the exact moment you’re checking out theirs.

By Priya Balakrishnan, senior director of product marketing at Redis Labs.

As ubiquitous as technology is today, the full impact of digital disruption has yet to be felt. Some industries—gaming, media, retail, commerce to name a few—are further along as a whole in their digital transformation journeys, while others—banking, government, healthcare, insurance—are a few steps behind.

But regardless of industry and distance travelled thus far, it’s become clear that providing innovative, responsive, real-time digital experiences requires a fundamental shift in application development and delivery. This shift has arrived in the form of microservices, an architectural approach that structures an application as a distributed collection of loosely coupled services.

In a microservices architecture, services are fine-grained and can be updated or scaled independently of one another, allowing enterprises to accelerate delivery, more efficiently reuse code, and achieve much higher levels of fault tolerance, to name a few of the benefits realized with the modular nature of microservices.

As organizations are re-platforming and readying their back-end systems for accelerated digital transformation, these benefits have clearly proved compelling. So much so, in fact, that a recent survey of development professionals shows that 86% expect microservices to be the default architecture within five years, with 60% already having microservices in pilot or production.1

But as is so often the case, new technologies that solve problems in one area introduce new problems in others. And microservices are no exception. In this article, I’d like to delve into some of the unique challenges—and strategies to overcome these challenges—that a distributed microservices architecture presents.

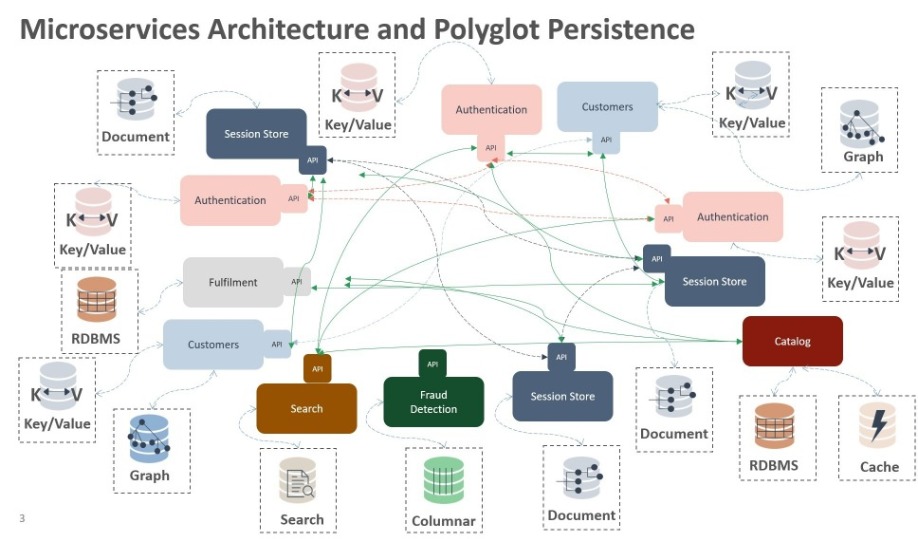

Challenge #1: The High Operational Cost of Polyglot Persistence

In a microservices architecture, instead of employing one single database across the application, each service can decide its own storage. The flexibility of being able to choose the most efficient data storage method for the task at hand (e.g. a key-value store for authentication, a graph database for fraud detection, a session store for customer tracking, and so on) is one of the big draws of microservices.

But the fragmented data management environment that results from running a myriad of different data storage products, known as polyglot persistence, dramatically increases the complexity of both operations and development. It gives rise to the need for subject matter experts with specialized skill sets for each of these data storage tools. For example, the developer must master new languages and APIs. Similarly, the database administrator must learn new backup and recovery utilities; research new optimization techniques; and plan for different layers of high availability, performance, and data durability.

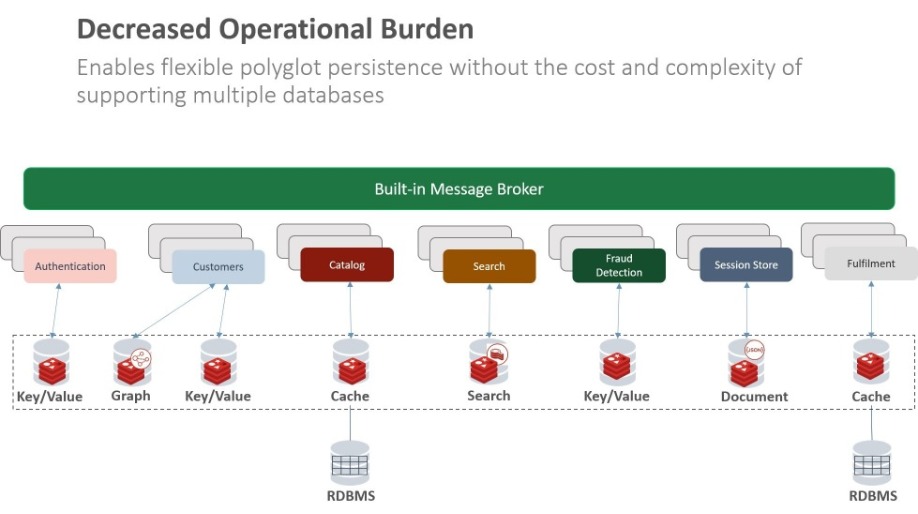

Overcoming the high operational cost of polyglot persistence

An evolving trend in databases is the emergence of multi-model databases, which can store and process structurally different data (i.e. data with distinct models) on a single database platform. A multi-model database not only serves a variety of use cases such as cache, session store, message broker and high-speed transactions, it can also act as a document store, key-value store, graph database, search engine, etc.

The extreme extensibility and flexibility of a multi-model database effectively removes the operational and development hurdles associated with managing numerous disparate data structures. Polyglot persistence—and its tremendous benefits—are still achieved, but at the much smaller operational cost of a single, elegant database platform.

Challenge #2: Sharing Persistent Data In A Distributed Architecture

When you correctly decompose a system into microservices, each microservice is deployed independently and in parallel with the other microservices. Transactions are spread across multiple services and the only way for these services to communicate with each other is through their published interfaces. It’s precisely this modular design that allows one microservice to be updated with little risk of compromising others, and, therefore, allows for accelerated delivery and improved stability.

However, the nature of this modular design means that it’s now the application, not the database layer, that has to do the heavy lifting when it comes to sharing and synchronizing data. And, while not ideal, if two or more microservices need to share persistent data from the same database, careful and complicated coordination is required to ensure that each service sees a consistent view of the data and that low latency is preserved as the right snapshot of the data is communicated.

Effective methods of sharing persistent data from the same database

The best way to accomplish data transfer among microservices is through a message broker. Message brokers employ a publish-subscribe model for asynchronous communication between microservices. The publisher publishes that the state of the data has changed (along with the changes themselves) and the subscribers, which are in constant listening mode, update their internal states in response. This communications model is easy to use, memory efficient, and very effective at maintaining data consistency.
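To make the publish-subscribe flow concrete, here is a minimal in-memory sketch. It is not any particular broker’s API: the `InMemoryBroker` class, the `order.placed` topic, and the two services are hypothetical names chosen for illustration, and delivery here is synchronous, whereas a real broker would deliver messages asynchronously over the network.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class InMemoryBroker:
    """Toy stand-in for a message broker (hypothetical, for illustration only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> int:
        """Deliver a copy of the message to every subscriber; return how many received it."""
        handlers = list(self._subscribers[topic])
        for handler in handlers:
            handler(dict(message))  # copy so one handler cannot alter another's view
        return len(handlers)


class InventoryService:
    """Keeps its own stock levels and updates them when an order event arrives."""

    def __init__(self, broker: InMemoryBroker) -> None:
        self.stock: Dict[str, int] = {}
        broker.subscribe("order.placed", self.on_order_placed)

    def on_order_placed(self, event: dict) -> None:
        sku = event["sku"]
        self.stock[sku] = self.stock.get(sku, 0) - event["quantity"]


class BillingService:
    """Keeps its own invoice list, built from the same order events."""

    def __init__(self, broker: InMemoryBroker) -> None:
        self.invoices: List[tuple] = []
        broker.subscribe("order.placed", self.on_order_placed)

    def on_order_placed(self, event: dict) -> None:
        self.invoices.append((event["order_id"], event["quantity"] * event["unit_price"]))


def demo():
    broker = InMemoryBroker()
    inventory = InventoryService(broker)
    inventory.stock["widget"] = 10
    billing = BillingService(broker)
    # The order service publishes the state change; it knows nothing about the subscribers.
    delivered = broker.publish(
        "order.placed",
        {"order_id": 1, "sku": "widget", "quantity": 3, "unit_price": 5},
    )
    return delivered, inventory.stock, billing.invoices
```

The publisher never calls the inventory or billing services directly, so either can be redeployed or replaced without touching the service that places orders.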

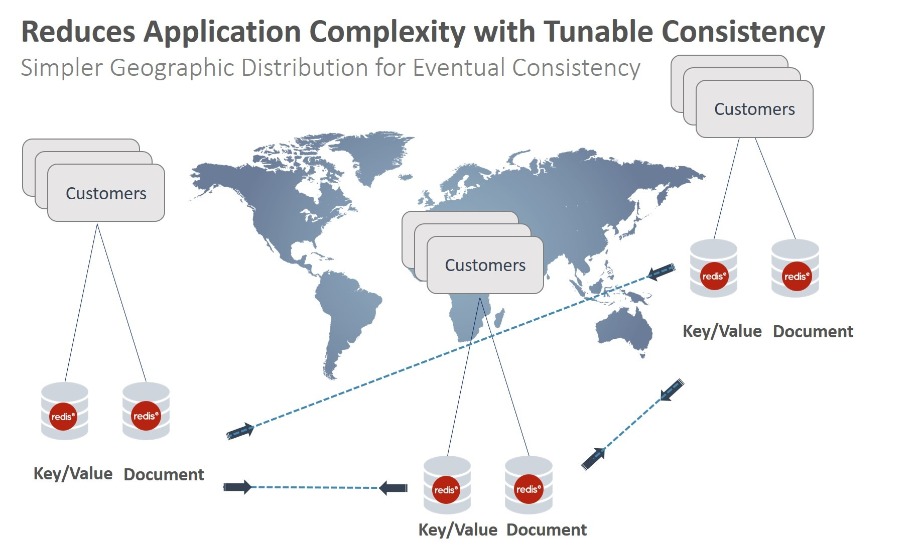

Alternatively, if you have multiple instances of the same microservice, each with its own database in distributed data centers across geographies, then the instances should share a consistent view of the data and converge to the same final state.

Solutions in existing database platforms range from strict consistency with limited availability and throughput, such as the two-phase commit protocol (2PC) for distributed writes, to inadequate conflict resolution mechanisms that rely entirely on clocks, such as last-writer-wins (LWW). In a distributed microservices architecture, careful consideration must be given to how updates are propagated across services, and how to manage consistent views when data appears in multiple places without strong consistency.
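A small sketch may help show why clock-based last-writer-wins can be inadequate. The `Versioned` record, the replica names, and the timestamps below are all hypothetical; the point is only that when two replicas update the same value concurrently, LWW keeps one write and silently discards the other.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Versioned:
    value: int
    timestamp: float  # wall-clock time of the write, as reported by the writer
    replica: str      # used only to break timestamp ties deterministically


def lww_merge(a: Versioned, b: Versioned) -> Versioned:
    """Last-writer-wins: keep the write with the later timestamp."""
    return max(a, b, key=lambda w: (w.timestamp, w.replica))


def demo() -> int:
    # Both replicas start from a shared value of 10 and update it concurrently.
    us = Versioned(value=10 + 5, timestamp=100.0, replica="us-east")
    eu = Versioned(value=10 + 3, timestamp=100.2, replica="eu-west")
    merged = lww_merge(us, eu)
    # The merged value is 13: the +5 applied in us-east is lost,
    # although an application counting events would expect 18.
    return merged.value
```

Both replicas do end up agreeing, but on a value that reflects only one of the two updates, and which one survives depends on clocks that may not be synchronised.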

Strong eventual consistency guarantees that, for a given set of updates, all replicas of the data will eventually converge to the same consistent state without the application having to implement a reconciliation mechanism. This design allows applications to work with multi-region, multi-master database deployments as if they were local. This approach is especially useful for long-living business operations.

Causal consistency avoids the coordination overhead of strong consistency while delivering many of its characteristics, by ensuring that all replicas of the data see causally related updates in the same order in which they were initiated.

Such tunable consistency is most efficiently achieved through active-active replication based on CRDT (conflict-free replicated data type) technology. In CRDT-based active-active replication, all database instances are available for read and write operations and are bidirectionally replicated. These databases handle data conflict resolution themselves, relieving application developers of the need to tackle consistency issues. Regardless of where the microservice resides, the application gets local latency even across geographically distributed workloads. A microservices architecture emphasizes transaction-less coordination between services, with explicit acceptance of eventual and causal consistency, and is therefore an ideal candidate for implementation under active-active database architectures.
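As an illustration of how a CRDT converges without a reconciliation step, here is a sketch of one of the simplest such types, a grow-only counter (G-Counter). It is a textbook example rather than the implementation used by any particular database, and the replica names are hypothetical.

```python
class GCounter:
    """Grow-only counter CRDT: each replica increments only its own slot."""

    def __init__(self, replica_id: str) -> None:
        self.replica_id = replica_id
        self.counts = {}  # replica id -> total increments seen from that replica

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a grow-only counter cannot be decremented")
        self.counts[self.replica_id] = self.counts.get(self.replica_id, 0) + amount

    def merge(self, other: "GCounter") -> None:
        """Take the per-replica maximum; this is commutative, associative and idempotent."""
        for replica_id, count in other.counts.items():
            self.counts[replica_id] = max(self.counts.get(replica_id, 0), count)

    def value(self) -> int:
        return sum(self.counts.values())


def demo():
    us, eu = GCounter("us-east"), GCounter("eu-west")
    # Both replicas accept writes locally, with no coordination.
    us.increment(5)
    eu.increment(3)
    # Bidirectional replication: each side merges the other's state.
    us.merge(eu)
    eu.merge(us)
    us.merge(eu)  # merging the same state again changes nothing
    return us.value(), eu.value()
```

After the exchange both replicas report 8, so neither concurrent update is lost, and the order or number of merges does not affect the result.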

Challenge #3: Managing container sprawl and data ephemerality

Microservices typically run in containerized environments. The portability offered by containers enables effortless relocation or replication of a microservice across heterogeneous platforms.

While a container in isolation can be quite easy to implement, applications can consist of hundreds of containers spread across dozens of physical nodes and interdependent containerized services. Managing these large sprawls of containers creates tremendous operational complexity.

Furthermore, containers, by their very nature, aren’t built to persist the data inside them; the state of the data is externalized. Some data is temporary in nature while other data must be retained and needs to be very durable. Persistence in the age of microservices means persistence of data and event streams. This is critical because, unlike the containers that process it, much of this data is not ephemeral.

Running stateful services with container orchestration

In order to efficiently deploy and manage containers and microservices at scale, many organizations are turning to container orchestration tools. Kubernetes, an open source platform for managing containerized applications, has become the de facto standard for container orchestration. It includes many primitives that are critical to scaling your database with ease, ensuring high availability, and managing scheduling and load balancing as necessary.

With Kubernetes playing such a vital role in the deployment of microservices architectures, you’ll want to ensure that your database is able to take full advantage of the Kubernetes framework to not only preserve the decrease in deployment complexity that the orchestration tool brings, but to also ensure that important data remains persistent and durable in the very dynamic and fast-moving environment of containers.
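One Kubernetes primitive aimed at exactly this problem is the StatefulSet, which gives each replica a stable identity and its own persistent volume claim. The sketch below builds a minimal StatefulSet manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts as well as YAML. The name `orders-db`, the image `example/database:1.0`, the mount path, and the storage size are placeholders, not recommendations, and a production manifest would need considerably more (resource limits, probes, a storage class, and so on).

```python
import json


def build_statefulset(name: str, image: str, replicas: int, storage: str,
                      mount_path: str = "/data") -> dict:
    """Return a minimal apps/v1 StatefulSet manifest (illustrative values only)."""
    labels = {"app": name}
    volume_name = f"{name}-data"
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            # Name of a headless Service that must be created separately.
            "serviceName": name,
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        # The container sees the persistent volume at mount_path.
                        "volumeMounts": [{"name": volume_name, "mountPath": mount_path}],
                    }],
                },
            },
            # One PersistentVolumeClaim is created per replica and outlives pod restarts.
            "volumeClaimTemplates": [{
                "metadata": {"name": volume_name},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "resources": {"requests": {"storage": storage}},
                },
            }],
        },
    }


if __name__ == "__main__":
    manifest = build_statefulset("orders-db", "example/database:1.0", 3, "10Gi")
    print(json.dumps(manifest, indent=2))
```

The key design point is the `volumeClaimTemplates` section: the data lives in a volume tied to the replica’s identity rather than in the container, so rescheduling a pod does not discard its state.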

Challenge #4: Avoiding Performance and Scale Problems in Distributed Environments

A microservices architecture implies a distributed system. Where you previously had a simple method call in a monolithic application, you now have many remote procedure calls (RPCs) with which to contend. As a result, performance and scale problems are more likely to arise from issues such as network latency, fault tolerance, message serialization, unreliable networks, and varying loads within application tiers.

Shared-Nothing, Linearly Scalable Architecture

If the right database(s) are chosen for the environment, the microservices architecture lends itself quite well to massive data processing. A database with a shared-nothing architecture inherently supports the high-throughput and low-latency requirements of large-scale data processing. Scale-out architecture, multi-model support, high performance, and reduced TCO should all be key considerations when designing a distributed database architecture. Linearly scaling databases that do not compromise performance reduce your chances of hitting the scalability limits and performance problems that could arise as a result of a distributed architecture.
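The idea behind shared-nothing scaling can be sketched with simple hash partitioning: every key is owned by exactly one shard, so shards never need to coordinate on a read or write and capacity grows by adding shards. The `ShardedStore` class and the key format below are hypothetical, and each shard is just a Python dictionary standing in for an independent node.

```python
import hashlib
from typing import Any, Optional


def shard_for(key: str, shard_count: int) -> int:
    """Map a key to a shard index using a stable hash (the same on every machine)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


class ShardedStore:
    """Each shard owns a disjoint slice of the keyspace and shares no state with the others."""

    def __init__(self, shard_count: int) -> None:
        if shard_count < 1:
            raise ValueError("at least one shard is required")
        self.shards = [dict() for _ in range(shard_count)]

    def put(self, key: str, value: Any) -> None:
        self.shards[shard_for(key, len(self.shards))][key] = value

    def get(self, key: str) -> Optional[Any]:
        return self.shards[shard_for(key, len(self.shards))].get(key)


if __name__ == "__main__":
    store = ShardedStore(shard_count=4)
    for i in range(1000):
        store.put(f"user:{i}", i)
    print([len(shard) for shard in store.shards])  # keys per shard
```

Plain modulo hashing is used here only for brevity. Changing the shard count would remap most keys, which is why real systems typically use fixed hash slots or consistent hashing instead.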

Your Database Makes a Difference