Dealing with change is one of the hardest things we face in life, whether at work or at home. For most of us, the temptation to carry on doing what we’ve always done usually wins out over the potential benefits of making adjustments. The hassle and expense of replacing what we know and love with something that just might be better seems a step too far. New ideas and technology need to offer major cost and/or lifestyle benefits before we ‘take the plunge’, and we each have a different idea of the risks and rewards involved. Furthermore, there are some aspects of our home and work life where we may well be prepared to investigate the new, but there are other areas where we’ll take a great deal of persuading.

As a rule, the biggest obstacle to change is our familiarity and comfort with the status quo. We may not be quite so blinkered as to say ‘I’ve always done it this way…’ but we may well be thinking that ‘if it ain’t broke, don’t fix it’. Colleagues, friends and family might cajole us into changing old habits, but the conflict that needs to be resolved is that, while we know our own lifestyle best, we might not know the new solutions well. In other words, bringing in a consultant (or life coach!) to try and make us change may not be very helpful: they know all about the latest ideas and gadgets, but they do not understand how our lives work or how the office functions.

However, there are times when, deep down, we know that we need to change. The businesses that kept faith with canals as their preferred method of transport lost out to those who embraced the combustion engine, and companies that might still rely on paper and pen for correspondence are unlikely to survive in the age of digital communications.

And I think we’ve reached one of those significant milestones right now. Love it or hate it, the digital age is here to stay (at least until we run out of power or cables or places to store all our data!), so it’s time to recognise that, at the very least, the old ways of doing business need to be enhanced by the new and, in most cases, be replaced by the new.

In order to achieve this successfully, we need to take time to understand what’s out there and how it can be applied to our businesses – there’s very little point in paying a third party vast sums of money to put together a digital transformation project unless you have pockets deep enough for this third party to spend long enough inside your business to understand exactly how it works.

So, there are no short cuts to digital success, just the fortitude and resilience required to embark on the digital transformation journey for oneself. That means embracing the opportunity rather than distancing oneself from it so that, when obstacles are encountered (which they will be), you don’t seek to sidestep the blame but acknowledge the problems and work out a solution before continuing on the digital journey. Best of all, if you’ve planned properly, you might actually manage to avoid too many howlers along the way.

As many organizations want to support mobile, team-oriented and nonroutine ways of work, an increasing number of them are looking for assistance in adopting digital workplace technology. A Gartner, Inc. survey* concluded that only 7 percent to 18 percent of organizations possess the digital dexterity to adopt new ways of work (NWOW) solutions, such as virtual collaboration and mobile work.

An organization with high digital dexterity has employees who have the cognitive ability and social practice to leverage and manipulate media, information and technology in unique and highly innovative ways.

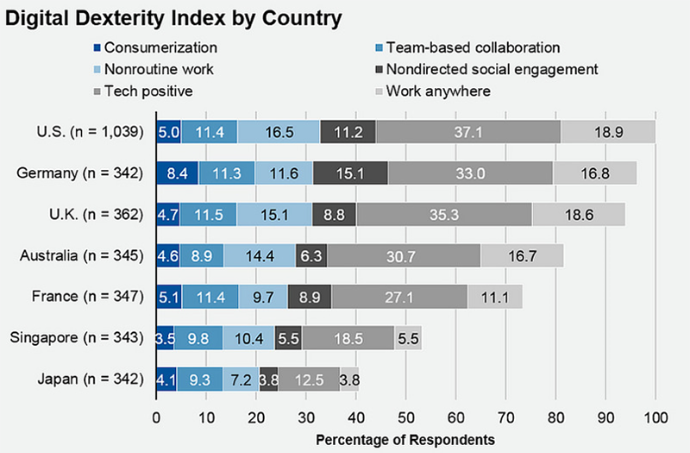

By country, organizations exhibiting the highest digital dexterity were those in the U.S. (18.2 percent of respondents), followed by those in Germany (17.6 percent) and then the U.K. (17.1 percent). "Solutions targeting new ways of work are tapping into a high-growth area, but finding the right organizations ready to exploit these technologies is challenging," said Craig Roth, research vice president at Gartner.

In parallel, the survey found that workers in the U.S., Germany and U.K. have, on average, higher digital dexterity than those in France, Singapore and Japan (see Figure 1).

Workers in the top three countries were much more open to working from anywhere, in a nonoffice fashion. They had a desire to use consumer (or consumerlike) software and websites at work. Some of the difference in workers' digital dexterity is driven by cultural factors, as shown by large differences between countries. For example, population density impacts the ability to work outside the office, and countries with more adherence to organizational hierarchy had decreased affinity for social media tools that drive social engagement.

Figure 1. Openness to Digital Dexterity by Country - Source: Gartner (June 2018)

Older Workers Are Second-Most Likely Adopters of NWOW

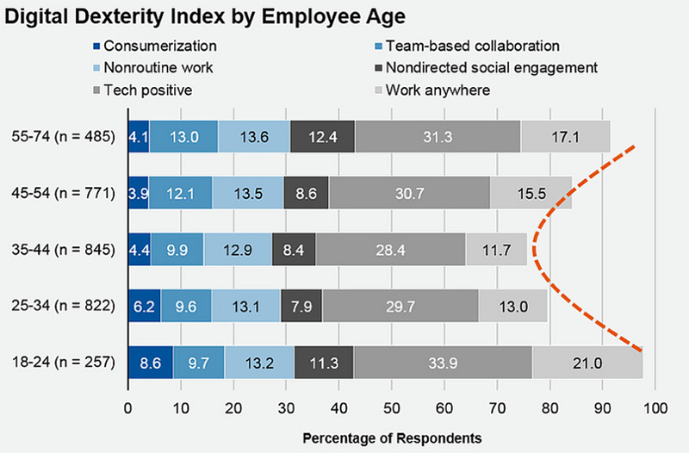

As expected, the youngest workers are the most inclined of all age groups to adopt digital-workplace-driven products and services (see Figure 2). They have a positive view of tech in the workplace and a strong affinity for working in nonoffice environments. Nevertheless, they reported the lowest levels of agreement with the statement that work is best accomplished in teams.

Figure 2. Digital Dexterity Likelihood by Employee Age - Source: Gartner (June 2018)

The survey also showed that the oldest workers are the second most likely adopters of NWOW. Those aged 55 to 74 have the highest opinion of teamwork, have progressed to a position where there is little routine work, and have the most favorable view of all age groups of internal social networking technology.

Workers aged 35 to 44 were at the low point of the adoption dip, potentially feeling fatigued with the routines of life as middle age approaches. They were most likely to report that their jobs are routine, have the dimmest view of how technology can help their work, and are the least interested in mobile work.

Larger organizations on average had higher digital dexterity than smaller ones. "Embracing dynamic work styles, devices, work locations and team structures can transform a business and its relationship to its staff. But digital dexterity doesn't come cheap," said Mr. Roth. "It takes investment in workplace design, mobile devices and software, and larger organizations find it easier to make this investment."

As many IT workers develop greater technology skills and apply them to advance their careers, many digital workers in non-IT departments believe their CIO is out of touch with their technology needs. A Gartner, Inc. survey found that less than 50 percent of workers (both IT and non-IT) believe their CIOs are aware of digital technology problems that affect them.

The survey further revealed that a larger share of European workers (58 percent) than U.S. workers (41 percent) said their CIO is aware of the technical challenges they face.

"Non-IT workers aren't likely to use the IT help desk as their first source of assistance, and are less likely to believe in the value of their IT organization," said Whit Andrews, vice president and distinguished analyst at Gartner. "Only one in five non-IT workers would ask their IT department to supply best practices for employing technology."

The survey also revealed that millennials were less likely to approach IT support teams through conventional means. About 53 percent of surveyed millennials outside the IT department said that one of their first three ways to solve a problem with digital technology would be to look for an answer on the internet.

Non-IT workers were overall more likely than IT workers to express dissatisfaction with the technologies supplied for their work, including their devices. Only 41 percent of non-IT workers felt very or completely satisfied with their work devices, compared to 59 percent of surveyed IT workers.

"Many IT departments will be more successful if they are able to provide what workers say they need, and provide inspiration so they can increase the workforce's digital dexterity," Mr. Andrews added.

IT Workers Feel More Confident

IT workers feel more confident than non-IT workers at using digital technology. The survey found that 32 percent of IT workers characterized themselves as experts in the digital technologies they use in the workplace. Just 7 percent of non-IT workers felt the same. "While we expect IT people to feel more confident with digital technologies, these findings highlight how hard it is to help non-IT workers feel as digitally dexterous as IT workers do," said Mr. Andrews.

Sixty-seven percent of non-IT workers feel that their organization does not take advantage of their digital skills. "Organizations seeking to mature and expand their digital workplaces will find that expanding digital dexterity will accelerate this across the organization," added Mr. Andrews.

Digital Technology Satisfies 72 Percent of Digital Workers

About three in four digital workers either somewhat agree (48 percent) or strongly agree (24 percent) that the digital technology their organization provides enables them to accomplish their work.

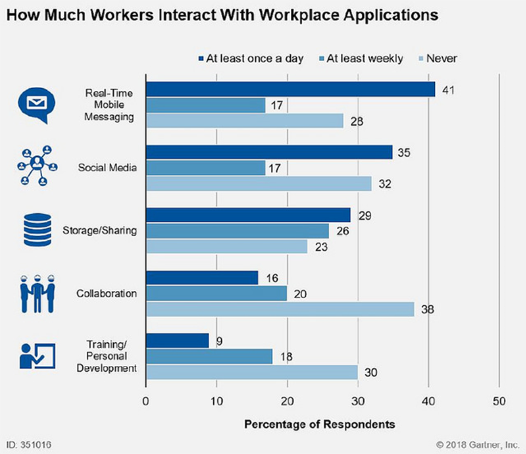

The most common types of workplace application used by survey respondents were real-time messaging (58 percent), sharing tools (55 percent), and workplace social media (52 percent — see Figure 1).

Figure 1. The Shape of Workers’ Days - Source: Gartner (June 2018)

However, significant distinctions exist in the workplace. "Millennial digital workers are more inclined than older age groups are to use workplace applications and devices that are not provided by their organization, whether they are tolerated or not," said Mr. Andrews. "They also have stronger opinions about the collaboration tools they select for themselves. They are more likely to indicate they should be allowed to use whatever social media they prefer for work purposes."

In addition, relative to the total workforce, a larger proportion of millennials consider the applications they use in their personal lives to be more useful than those they are given at work. "Our survey found that 26 percent of workers between the ages of 18 and 24 use unapproved applications to collaborate with other workers, compared with just 10 percent of those aged between 55 and 74," Mr. Andrews said.

A significant shift toward digital business models that harness technology trends such as cloud computing, Internet of Things (IoT), analytics and artificial intelligence (AI) is boosting worldwide spending on application infrastructure and middleware (AIM). Gartner, Inc. numbers show that AIM market revenue reached $28.5 billion in 2017, an increase of 12.1 percent from 2016 (see Table 1).
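As a rough check on the figures above, the 2016 base can be backed out from the 2017 revenue and the reported growth rate. The snippet below is a minimal sketch in Python; the function name `prior_period_value` is illustrative and not part of any Gartner methodology, and the result is only as precise as the rounded inputs quoted in the text.

```python
def prior_period_value(current: float, growth_pct: float) -> float:
    """Back out the previous period's value from the current value
    and the percentage growth between the two periods."""
    return current / (1 + growth_pct / 100)


# Figures quoted in the text (billions of dollars, percent growth).
aim_2017 = 28.5
growth_2016_to_2017 = 12.1

# Implied 2016 revenue; an estimate derived from rounded inputs,
# not a figure published in the source.
aim_2016 = prior_period_value(aim_2017, growth_2016_to_2017)

print(f"Implied 2016 AIM revenue: ${aim_2016:.1f} billion")
print(f"Absolute increase: ${aim_2017 - aim_2016:.1f} billion")
```

On these rounded inputs, the implied 2016 AIM revenue comes out at roughly $25.4 billion.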

The wider technology trends driving the AIM market are commonly accepted: migration to cloud platforms and services, ever-increasing demand for near-real-time data and analytics, a shift toward an API economy, rapid proliferation of IoT endpoints, and deployment of AI.

"A new approach to application infrastructure is the foundation organizations build their digital initiatives upon, and therefore robust demand in the AIM market is testament to the occurrence of digitalization," said Fabrizio Biscotti, research vice president at Gartner. "The more companies move toward digital business models, the greater the need for modern application infrastructure to connect data, software, users and hardware in ways that deliver new digital services or products."

Table 1. AIM Software Market Share by Revenue, Worldwide, 2016 and 2017 (Millions of Dollars) - Source: Gartner (June 2018)

Gartner forecasts that the AIM market will grow even faster in 2018, after which spending growth will slow each year, reaching around 5 percent in 2022. Moreover, momentum in the AIM market is shifting from market incumbents to challengers.

Licensed, on-premises application integration suite offerings that make up larger segments served by market incumbents such as IBM and Oracle achieved single-digit growth in 2016 and 2017. Gartner expects this growth to continue through 2022. "We can generally describe the products in this slow-growing segment as serving legacy applications," said Mr. Biscotti.

Small challenger segments — built predominantly around cloud and open-source-based application integration (iPaaS) offerings — will continue to enjoy double-digit growth.

"In iPaaS we find the groundwork being laid for a digital future, as the products in this segment generally are lighter, more agile IT infrastructure suited for the rapidly evolving use cases around digital business," said Bindi Bhullar, research director at Gartner. "The result is that well-funded, pure-play iPaaS providers, open-source integration tool providers and low-cost integration tools are challenging the dominant position of traditional vendors."

The iPaaS segment is still a small part of the overall market, topping $1 billion in revenue for the first time in 2017 after growing over 60 percent in 2016 and 72 percent in 2017. This makes iPaaS one of the fastest-growing software segments.

"The iPaaS market is also starting to consolidate, most notably with Salesforce's recent acquisition of MuleSoft," said Mr. Bhullar. "There is still a lot of room for further consolidation, with more than half the AIM market held by vendors outside the top five. This "others" segment is enjoying double-digit growth, which is likely to encourage acquisitions from big players losing market share to challengers."

Mr. Biscotti added that the most successful challengers in the AIM market will be those that position their products as complementary to — rather than replacements for — the existing legacy software infrastructure that is common in most large organizations.

"While new agile challengers may seem better fits for those pursuing digital initiatives, the underlying reality is that legacy middleware and software integration platforms will persist," said Mr. Biscotti. "Pure-play cloud integration is a niche requirement today — most buyers have more extensive requirements as they pursue hybrid integration models. The long-term market composition is likely to consist of a broad spectrum, from generalist comprehensive integration suites to more-specialized fit-to-purpose offerings."

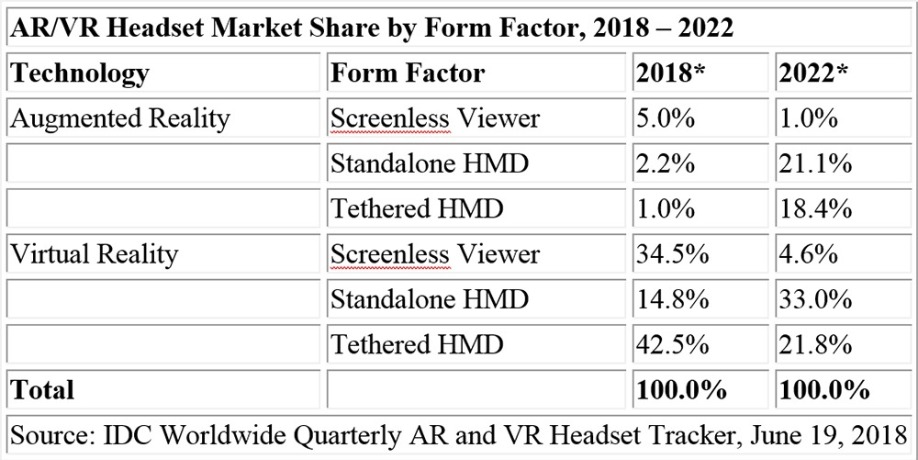

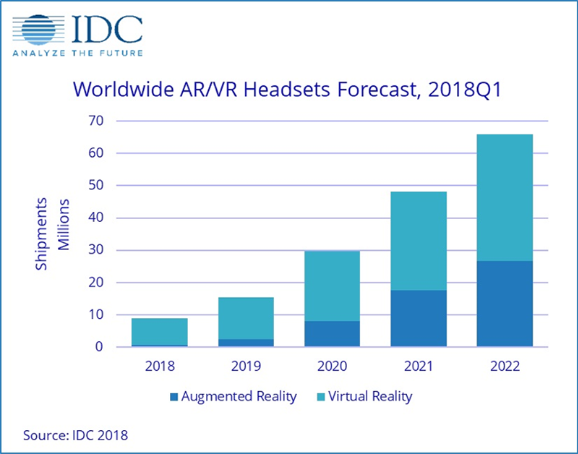

Worldwide shipments of augmented reality (AR) and virtual reality (VR) headsets were down 30.5% year over year, totaling 1.2 million units in the first quarter of 2018 (1Q18), according to the International Data Corporation (IDC) Worldwide Quarterly Augmented and Virtual Reality Headset Tracker. Much of the decline occurred due to the unbundling of screenless VR headsets during the quarter. For much of 2017, vendors bundled these headsets free with the purchase of a high-end smartphone, but that practice largely came to an end by the start of 2018. Despite a poor start to 2018, IDC anticipates the overall market will return to growth over the remainder of the year as more vendors target the commercial AR and VR markets and low-cost standalone VR headsets such as the Oculus Go make their way into stores. IDC forecasts the overall AR and VR headset market to grow to 8.9 million units in 2018, up 6% from the prior year. That growth will continue throughout the forecast period, reaching 65.9 million units by 2022.

"On the VR front, devices such as the Oculus Go seem promising not because Facebook has solved all the issues surrounding VR, but rather because they are helping to set customer expectations for VR headsets in the future," said Jitesh Ubrani senior research analyst for IDC Mobile Device Trackers. “Looking ahead, consumers can expect easier-to-use devices at lower price points. Combine that with a growing lineup of content from game makers, Hollywood studios, and even vocational training institutions, and we see a brighter future for the adoption of virtual reality."

When it comes to augmented reality headsets, many consumers have already had a taste of the technology through screenless viewers such as the Star Wars: Jedi Challenges product from Lenovo. IDC anticipates these types of headsets will lead the market in shipment volumes in the near term. However, non-smartphone-based AR headsets should begin to see greater market availability by 2019 as commercial uptake continues to rise and existing brands launch next-generation products. IDC predicts triple-digit growth in this space between 2019 and 2021.

"Momentum around augmented reality continues to grow as more companies enter the space and begin the work necessary to create the software and services that will drive AR hardware," said Tom Mainelli, program vice president, Devices and Augmented and Virtual Reality at IDC. "Industry watchers are eager to see new headsets ship from the likes of Magic Leap, Microsoft, and others. But for those devices to fulfill their promise we need developers creating the next-generation of applications that will drive new experiences on both the consumer and commercial sides of the market."

Category Highlights

Many consumers' first experience with an augmented reality headset will be in the form of a screenless viewer. While large movie properties such as Star Wars helped move significant volumes of these headsets during the past holiday season, uptake for the remainder of the year is likely to slow as the headsets have limited functionality beyond their core applications. In the latter years of the forecast, IDC expects such products to decline in relevance, although they are likely to remain in the market, often sold as toys. Meanwhile, standalone AR head-mounted displays (HMDs) should grow to reach 194,000 units in 2018 and will experience a compound annual growth rate (CAGR) of 190.9% over the five-year forecast. More advanced headsets such as Microsoft's HoloLens and Magic Leap's One will help drive adoption in the commercial and consumer markets. Finally, tethered headsets will grow with a five-year CAGR of 241.8%. This last category will be the eventual home of lower-cost headsets based on Apple's ARKit and Google's ARCore that tether to smartphones or tablets.

IDC forecasts virtual reality headsets to grow from 8.1 million in 2018 to 39.2 million by the end of 2022, representing a five-year CAGR of 48.1%. While many think of VR as a consumer technology, IDC believes the commercial market to be equally important and predicts it will grow from 24% of VR headset shipments in 2018 to 44.6% by 2022. From a platform perspective, the market has been dominated by Oculus largely due to the initial volumes around Samsung's Gear VR. This will likely continue in the near term as the Go brings VR to more consumers. However, the Oculus platform is likely to face pressure from both HTC's Vive platform and Microsoft's Windows Mixed Reality platform. The latter should see strong opportunities in the commercial market as brands such as HP, Dell, and Lenovo bring their years of experience catering to enterprise buyers to the market.
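The compound annual growth rate quoted above can be sanity-checked from the two endpoint forecasts. The sketch below assumes the standard CAGR formula and treats 2018 to 2022 as four compounding periods; that period count is an assumption, since the text does not say how IDC counts the years of its five-year forecast. The function name `cagr` is illustrative.

```python
def cagr(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    if start <= 0 or periods <= 0:
        raise ValueError("start and periods must be positive")
    return (end / start) ** (1 / periods) - 1


# Endpoint forecasts quoted in the text (millions of VR headsets).
vr_2018 = 8.1
vr_2022 = 39.2

# Assumption: four compounding periods between 2018 and 2022.
vr_growth = cagr(vr_2018, vr_2022, periods=4)

# Commercial share of shipments quoted in the text, applied to the totals.
commercial_2018 = vr_2018 * 0.24
commercial_2022 = vr_2022 * 0.446

print(f"Implied VR headset CAGR: {vr_growth:.1%}")
print(f"Implied commercial units, 2018: {commercial_2018:.2f} million")
print(f"Implied commercial units, 2022: {commercial_2022:.2f} million")
```

With four compounding periods the result lands within a few tenths of a percentage point of the 48.1% quoted, a gap consistent with rounding in the published unit figures. The commercial unit counts are derived here from the quoted shares and are not figures stated in the source.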

* Note: Market share figures for 2018 and 2022 are forecast projections.

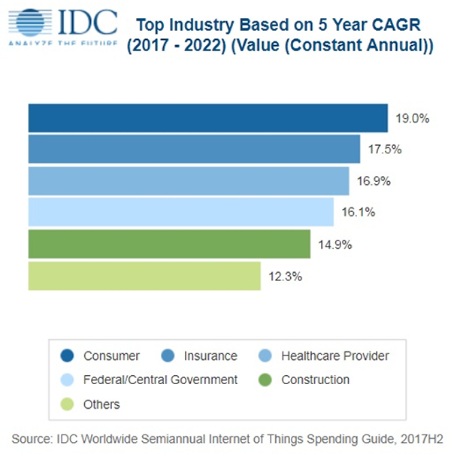

International Data Corporation (IDC) has published the latest Worldwide Semiannual Internet of Things Spending Guide (version 2H17). The Spending Guide forecasts Internet of Things (IoT) spending will experience a compound annual growth rate (CAGR) of 13.6% over the 2017-2022 forecast period and reach $1.2 trillion in 2022. The forecast is based on the latest research in the burgeoning IoT technology market, which offers business investment opportunities across a spectrum of industries, illustrated through use case implementations.
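To get a feel for what a 13.6% CAGR implies, the 2022 figure can be discounted back to an approximate 2017 starting point. This is a minimal sketch under stated assumptions: that 2017 is the base year, that growth compounds over five annual periods, and that the rounded $1.2 trillion endpoint is good enough for a rough estimate. The function name `implied_start_value` is illustrative and the output is not an IDC figure.

```python
def implied_start_value(end_value: float, annual_rate: float, periods: int) -> float:
    """Discount an end value back through `periods` years of compound growth."""
    return end_value / (1 + annual_rate) ** periods


# Figures quoted in the text.
iot_2022 = 1.2        # trillions of dollars
iot_cagr = 0.136      # 13.6% per year

# Assumption: five compounding periods, 2017 through 2022.
iot_2017 = implied_start_value(iot_2022, iot_cagr, periods=5)

print(f"Implied 2017 IoT spending: ${iot_2017:.2f} trillion")
print(f"Overall growth multiple 2017-2022: {iot_2022 / iot_2017:.2f}x")
```

Under these assumptions the implied 2017 base is roughly $0.63 trillion, meaning spending would nearly double over the forecast period.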

As the diverse IoT market reaches broad-based critical mass, innovative offerings in analytics software, cloud technologies, and business and IT services have expanded rapidly. "The IoT market is at a turning point – projects are moving from proof of concept into commercial deployments," said Carrie MacGillivray, group vice president, Internet of Things and Mobility. "Organizations are looking to extend their investment as they scale their projects, driving spending for the hardware, software, services, and connectivity required to enable IoT solutions."

The intersection of multiple technology domains is one key to successfully understanding and developing a supply-side product and market development strategy. The IDC IoT Spending Guide is an industry-defining market intelligence tool that details end-user adoption and spending across multiple segmentations. "The latest IoT Spending Guide release fully aligns to IDC's Industry Taxonomy. We now forecast all 20 standard IDC Industries," said Marcus Torchia, research director, Customer Insights & Analysis. "As a result, we are proactively mapping IoT use cases that have segmentations in shared domains, such as in Smart Cities and Digital Transformation investment areas. As a part of these improvements, IoT supports spending forecasts for 100 use cases."

Forecast highlights show that the consumer sector will lead IoT spending growth with a worldwide CAGR of 19%, followed closely by the insurance and healthcare provider industries. From a total spending perspective, discrete manufacturing and transportation will each exceed $150 billion in spending in 2022, making these the two largest industries for IoT spending. From an enterprise use case perspective, vehicle-to-vehicle (V2V) and vehicle-to-infrastructure (V2I) solutions will experience the fastest spending growth (29% CAGR) over the forecast period, followed by traffic management and connected vehicle security.

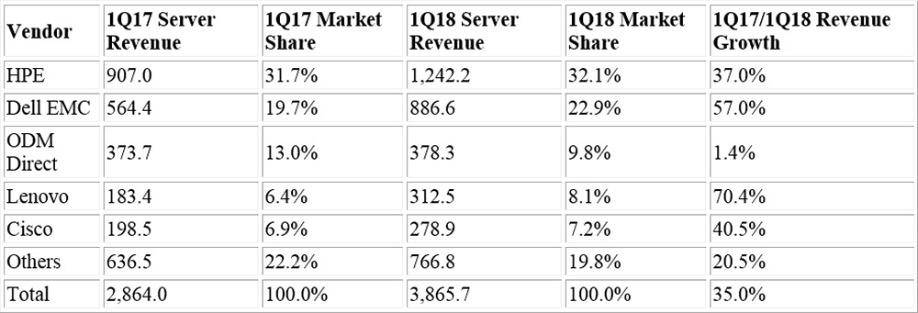

International Data Corporation's (IDC) EMEA Server Tracker shows that in the first quarter of 2018, the EMEA server market reported a year-on-year (YoY) increase in vendor revenues of 35.0% to $3.9 billion and a YoY increase of 2.0% in units shipped to 542,000. In euros, 1Q18 EMEA server revenues increased by 17.0% YoY to €3.1 billion. Exchange rate fluctuations impacted the strong dollar revenues recorded over the quarter, due to a higher euro/dollar value compared with 1Q17. The top 5 vendors in the region and their quarterly revenues are displayed in the table below.

Top 5 EMEA Vendor Revenues ($M)

Source: IDC Quarterly Server Tracker, 1Q18

At a product level, standard rack optimized, as the largest revenue generator, grew 45.2% YoY, pushed by large deals in the U.K. and the Netherlands. Standard multinode shipments grew at a significant 251.4% YoY rate, driven largely by the U.K., Germany and the Netherlands. Another standout contributor to quarterly growth in EMEA was custom multinode units, which grew 107.3% YoY in terms of revenues. Higher average selling prices in the high-specification custom servers drove the improved performance in this product segment. Custom rack optimized was the only server segment to decline over the quarter, due to a continuing transition to custom multinode units providing superior performance specifications.

"The first quarter of 2018 saw the average selling prices (ASPs) of the top 5 x86 server vendors in western Europe increase by an average of 32% YoY. Central to this increase were fluctuations in exchange rates, but also increased attach rates, inclusion of Intel's new Skylake processors and the continued pressure felt by the demand for memory components," said Eckhardt Fischer, senior research analyst, IDC Western Europe.

In comparison to OEM vendors, original design manufacturer (ODM) growth rates were significantly lower over 1Q18. ODM growth of just 1.4% YoY was held back primarily by a drop in ODM hyperscale datacenter deals in Ireland, Finland, and Russia. ODM growth is expected to accelerate in late 2018 and 2019 with new cycles of datacenter launches for Apple, AWS, and Google.

Regional Highlights

Segmenting market performance at a Western European level, the U.K. and the Netherlands were standout performers in 1Q18. With 65.3% growth to $733.6 million, the former overtook Germany as the region's largest market, driven by strong growth for all the top five vendors. Discounting major hyperscale datacenter investments, the U.K.'s rapid growth may be attributed to a subsiding of the Brexit fears that had paused datacenter investment in the U.K. In the Netherlands, growth of 120.2% to $322.0 million was primarily the result of continued hyperscale datacenter investments in the country. The Swiss server market was also a notable performer in the quarter, increasing 64.4% on the back of substantial IBM Large System growth. Finland was the only Western European country to experience a decline in revenue over the quarter, due to a lack of significant ODM Custom multinode and rack deals.

"Central and Eastern Europe, the Middle East, and Africa (CEMA) server revenue continued on its upward path in the first quarter of year 2018, increasing by 28.5% year-over-year to $688.36 million, despite the decline in terms of units," said Jiri Helebrand, research manager, IDC CEMA. "Ongoing product refresh cycle and maintained positive economic momentum were the main drivers for the strongest revenue growth recorded in the last ten years. Central and Eastern Europe (CEE) subregion grew by 24.3% year-over-year with revenue of $302.28 million led by strong demand for servers in the Czech Republic and Hungary, which observed demand from the public sector.

"The Middle East and Africa (MEA) subregion grew by 31.9% year-over-year to $386.09 million in 1Q18, and similar to CEE saw a decline in terms of units as both large businesses and the public sector consolidate their infrastructure and opt for richer configurations. Saudi Arabia and Turkey were the top performers, with the latter benefiting from an HPC deal in the public sector."

95% of global business decision makers face challenges when it comes to achieving a more successful digital strategy, including budget constraints, lack of visibility to manage the digital experience and legacy infrastructure.

The global survey, which includes responses from 1,000 business decision makers at companies with $500 million or more in revenue across nine countries, also found that while digital services and applications are critical to future business success, 80% of respondents reported that critical digital services and applications are failing at least a few times a month.

“This survey underscores the tremendous opportunity that maximizing digital performance can have on the user experience and bottom line, while simultaneously highlighting the real challenges companies face today,” said Subbu Iyer, CMO, Riverbed Technology. “The findings reinforce that forward-thinking companies are well positioned to lead their industries in the race towards digital transformation by prioritizing investments in modernizing their networks and tools to measure and manage the digital experience for their customers and employees. Those who hesitate to embrace digital strategies and processes will quickly fall by the wayside, and those who drive digital performance will see significant business outcomes.”

Awareness is High, Need is Immediate

The need for companies to provide a successful digital experience for customers, partners and employees is well recognized, and it continues to grow in importance. Some 91% of global business decision makers agree that providing a successful digital experience is even more critical to the company’s bottom line than it was just three years ago.

Likewise, 99% of global business decision makers believe their company would benefit from improving the performance of their company’s digital services and applications. They see this happening primarily through:

Hurdles to Implementing a Digital Strategy are Real

However, it is widely recognized that inadequately performing systems today are a key limitation to a successful digital strategy. In fact, of the 95% of global business decision makers who said they face significant challenges when it comes to achieving a more successful digital strategy, most cited multiple challenges including:

Accelerating technology cycles are impacting the workplace with unprecedented speed. By Matt Cain, vice president and distinguished analyst at Gartner.

Application leaders and business executives haven’t traditionally spent much time contemplating how work will change in years to come. That’s largely because the IT organisation has focused on operational excellence and because, over the past three decades, the pace of change in the workforce has been relatively slow and predictable.

Circumstances have changed. The IT charter is expanding to include a larger focus on individuals, teams and overall business performance, and accelerating technology cycles are rapidly increasing the pace of change in work patterns.

Digital business models and platforms are fundamentally restructuring how business is conducted. Cloud services are increasing the speed of technological change at a rate unthinkable in the days of on-premises deployment. At the same time, the nature of work is being transformed with new business patterns, such as the gig economy and flatter organisation models, while artificial intelligence (AI) is set to transform how work is carried out.

Application leaders need to anticipate the future of work to understand what IT skills are needed to support change, and ensure that technology aligns with future work patterns.

Below are three overarching future work trends expected in developed nations between 2022 and 2026, along with some of their key impacts.

Worker digital dexterity will become critical

No one knows exactly how this change will impact business, but one thing is certain — the digital dexterity of the workforce is the most effective mechanism to ensure that it can keep pace with and exploit vast amounts of change. Digital change will manifest itself in a number of ways, including:

AI will prevail

Converting rich input patterns into data that can be readily processed by conventional software is at the heart of today’s AI hype. AI will have a profound impact on how work is assigned, completed and evaluated. And although AI will drive a number of workplace trends in the coming years, workers are experiencing the impact of robobosses and smart workplaces right now.

The gig economy will thrive

Businesses will increasingly learn and borrow from freelance management and gig economy platforms, which dynamically match short-term work requirements directly with workers who possess the relevant knowledge, experience, skills, competencies and availability. This will mean moving away from traditional structures to more fluid arrangements.

In my experience there are a number of common blind spots associated with vendor risk management (VRM), or ‘third party risk management’ as it is sometimes called. In this article I will share with the readers what I see as six top misconceptions surrounding VRM and suggest strategies for businesses to overcome or avoid some of these pitfalls. By Tom Turner, CEO and President, BitSight.

1. Only the highest value business relationships have the most inherent risk

Today we see many high profile data breaches hitting the headlines. That’s because businesses are more connected than ever before, and organisations are having to deal with increasing numbers of third parties. Often, there will be a direct relationship where data is exchanged. However, we’re seeing more indirect relationships where a third party may not be deemed critical to the organisation's service or product, yet they still have the potential to introduce risk. Take the Netflix ‘Orange Is The New Black’ leak in April last year from Larson Studios. This was a post-production company that was probably thought to be a distant vendor in the supply chain, yet when they were hacked it had a massive impact on the core business.

Likewise, many businesses are using the same third party, which is often unavoidable. For some products and services, there's only one dominant player in the market to choose from if you need to outsource. This situation can result in massive downstream effects if there's a data breach, compromise, or service disruption. For example, the NotPetya malware hit companies in Ukraine particularly hard and spread to multinationals such as the shipping giant Maersk. This happened because a Ukrainian accounting software platform was compromised, and the ransomware spread to its customer base.

Breaches and outages aren't just resulting from typical third parties anymore. They're also stemming from more distant vendors. While these organisations may not have access to your network, you may rely on their technology or services which could cause considerable risk downstream.

2. Your most trusted form of assurance is a diligence questionnaire

VRM programmes have traditionally focused on setting contractual obligations for vendors. Risk managers would periodically check on whether vendors were meeting certain obligations and move on to the next item on their “to do” list. For a long time, the only way to manage risk was to use questionnaires, audits, and penetration tests. This has changed, and businesses are now actively ‘hunting’ for risk. They are consuming multiple data feeds about operational, financial, and cyber security risk. In doing so, many organisations have taken a more collaborative approach with vendors, rather than a combative one. The notion that VRM is a game of strong arming between risk and legal departments is changing. Organisations and their vendors are having more constructive dialogues.

3. VRM is not a Board level issue

According to Gartner, 80% of security risk management leaders are being asked to present to senior executives on the state of their security and risk programme and 75% of Fortune 500 companies are now expected to treat VRM as a board level initiative to mitigate brand and reputation risk. Boards are beginning to request updates more than once a year and this has led to the emergence of security committees.

The challenge for risk managers is how best to contextualise the company's level of risk. This is where objective, quantitative measurement can really help. For example, being able to say that the aggregate level of cyber risk posed by vendors has dropped 20 percentage points is a lot more insightful than saying, "We've mandated that all of our vendors implement multifactor authentication.” It’s important to learn how to speak the right language to the Board.
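As a rough illustration of the kind of quantitative statement described above, the following Python sketch computes a criticality-weighted aggregate risk figure for two reporting periods and the change between them in percentage points. The vendor names, the weights and the 0-100 scoring scale are all hypothetical assumptions made for the example; they are not taken from any particular ratings platform.

```python
from dataclasses import dataclass


@dataclass
class VendorRisk:
    name: str           # hypothetical vendor label
    criticality: float  # assumed weight: how much the business depends on the vendor
    risk_pct: float     # assumed risk score on a 0-100 scale (higher = riskier)


def aggregate_risk(vendors):
    """Criticality-weighted average risk across all vendors, in percent."""
    total_weight = sum(v.criticality for v in vendors)
    if total_weight == 0:
        raise ValueError("at least one vendor must have non-zero criticality")
    return sum(v.criticality * v.risk_pct for v in vendors) / total_weight


# Illustrative figures only
last_year = [
    VendorRisk("payroll-provider", 3.0, 70.0),
    VendorRisk("post-production-studio", 1.0, 50.0),
]
this_year = [
    VendorRisk("payroll-provider", 3.0, 45.0),
    VendorRisk("post-production-studio", 1.0, 45.0),
]

change = aggregate_risk(this_year) - aggregate_risk(last_year)
print(f"Aggregate vendor risk changed by {change:+.1f} percentage points")
```

A single trend figure of this kind is easier for a Board to act on than a list of individual controls, although the weighting scheme itself would need to be agreed with the business.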

4. Regulations and VRM programmes are two different issues

The impact of regulation very much depends on the industry sector, but if you are subject to any regulation at all, then it needs to be included in your VRM programme. Regulations that encompass all industries, such as the General Data Protection Regulation (GDPR), which comes into force on 25th May this year, will need to be part of the risk management programme of every single organisation. Article 32 states that organisations that collect personal data must have rigorous due diligence processes to ensure that appropriate controls are in place before sharing data with vendors.

5. VRM can be handled manually with existing resources

Relying solely on subjective point-in-time questionnaires can leave a lot of risk unidentified or unaddressed. Many companies now understand that a continuous, objective view is needed. Also, you can’t simply throw people at this problem. There are too many vendors connected to the enterprise and not enough risk professionals in the world to manage them. Companies need to automate processes wherever possible to manage this risk. There’s going to be a huge breakthrough when businesses across all sectors recognise the importance of automation and reserve human intervention for when urgent action is required.

6. Engaging with vendors and the supply chain to correct risk is difficult and confrontational

Companies have different approaches for engaging with vendors and some have more influence than others. However, we are learning that presenting data and accessing a common platform provides significant benefits.

Giving non-customers free access to a security ratings platform via a trusted partner will allow third party vendors to investigate potential network issues and allow access to remedial resources. This is a good example of how engagement with vendors can be driven by objective data. It also offers vendors a benefit in return for their engagement and reduces some of the confrontation that can accompany risk assessment.

With economies of scale at play, there are potentially long-term benefits too. With many organisations using the same vendors to rectify issues, we can reach a wider audience and the whole digital economy is better off.

From Artificial Intelligence (AI) and machine learning to IoT and blockchain, the pace of technology change is both exciting and daunting, especially for those tasked with enticing new talent to the industry. Companies have claimed for years that computer science and software engineering degrees do not deliver ‘work ready’ employees; with acceleration in technology innovation, what are the options for the developers of the future? In fact, as Alexis Shirtliff, Technical Director, DCSL Software, explains, there is no need for developers to focus on any one specific toolset or technology area; instead they need versatility, communication skills and an ability to see the big picture – all underpinned by a strong foundation in IT process and methodology.

AI or IoT or Blockchain

When a high street bank can be brought to its knees by a mis-managed IT development, the fundamental importance of technology to every business becomes painfully clear. Alongside extraordinary innovation, there is now far greater understanding of technology from businesses, as well as ever higher expectations regarding quality and app-influenced user experience.

How does this shift affect the requirements for the developers of the future? Should they be fine tuning AI expertise? Unlocking the mysteries of blockchain or understanding the opportunities of IoT? Or is this focus on bleeding edge technologies missing the point?

These three technology areas demonstrate perfectly the new mindset requirements of the developer of the future. IoT is becoming business as usual; soon it will be hard to consider a device that isn’t connected in some way. As a result, technology maturity means devices can be connected to any data source, network or cloud infrastructure with pretty much any language. There is no one specific IoT tool set or skill set; developers simply need to understand the concepts and visualise the opportunities.

AI development is increasingly based on a ‘toolset as a service’ model; a developer can plug in to a growing raft of amazing tools from IBM, Microsoft, Google et al, that enable AI style functionality. Want to embed facial recognition to support a specific development? There is no need for old fashioned coding, simply choose the right toolset and get started. The skill is, again, in picturing the possible and determining the best tool set for the job.

Blockchain, on the other hand, is less likely to stand the test of time. The role of distributed ledgers may evolve, but there appears to be little value in any future developer heading down that rabbit hole right now. As, when and if the technology gains mainstream acceptance, without doubt tools as a service will appear that enable developers to leverage blockchain as required.

Depth and Breadth

This new accessibility provides developers with unprecedented opportunities to rapidly embrace innovative technologies. There is far less risk of being side-lined as a result of a dated skill set. Indeed, developers now have an extraordinary range and diversity of tool sets to support amazingly innovative solutions. Rather than specialising in any one technology, or language, developers require a different approach. It is a new mindset, rather than new technology expertise per se, that is required; an ability to be versatile and to embrace skills across the entire technology stack, from back end database integration to front end user experience.

How does this affect the way developers of the future are attracted by technology at school, prepared at university and then enticed into the industry? While there are concerns that teachers lack the technology skills and confidence required to inspire the next generation, that shouldn’t really be the constraint. How many youngsters are intuitively gaining amazing technical skills through their daily use of Minecraft, for example? The key is to understand how best to build on this interest and confidence in a way that relates to the next stage of education without forcing children down a technology specific cul de sac.

The fact is that the developers of the future will need a raft of soft skills that perhaps were not in the traditional remit – including great communication, as well as an ability to visualise possible outcomes and solutions. The skills to rapidly understand customer expectations and create a compelling response, to collaborate with a development team that could be scattered across the world, and to recognise which tools to apply to a specific development are now incredibly valuable. And that does not always mean the latest or most exciting: while developers will always want a chance to use the newest and shiniest toolkit on the block, being able to recognise when not to reinvent the wheel is also an essential skill.

Underpinning all of this, therefore, must be the fundamental discipline of good, well structured IT development. The ability to follow proven methodologies such as agile is absolutely critical in an era of incredibly high customer expectation and a demand for continuous – even daily – feedback and project update. And it is this foundation in development best practice, the ability to follow a process, that will stand the developers of the future in great stead, irrespective of technology change or innovation.

Conclusion

Technology education has always created conflict – should an individual opt for a vendor led course to attain a specific skill set, or opt for a broader, more generic education? What should companies expect from recent graduates and how much additional investment is required to make individuals work ready? While the rise in AI and blockchain, machine learning and IoT may appear to suggest that young developers need to push the boundaries, the fact is that it has never been easier to access and embrace new and innovative technologies. What is required from the developer of the future is the right mind set; the ability to balance innovation with proven solutions, the skills to communicate with clients and colleagues and the vision to create technology solutions that truly enable, not disable, a business.

It was made clear in the presentation from Gartner at April’s European Managed Services Summit that customers were finding it hard to differentiate individual managed services providers and their services. Research director Mark Paine from Gartner told a packed event that while MSP Services were growing at over 35%, it might not last, and certainly the pace of competition was not going to let up. Even so, at the latest estimate, the global managed services market is expected to grow from €150bn last year to €250bn by 2022.

“The key to a successful and differentiated business is to give customers what they want by helping the customer buy,” he told the Amsterdam audience.

In a business where 65% of the buying process is over before the buyer contacts the seller, because of all the information gathered beforehand, the MSP is even less in charge of what happens than in the old VAR model. Without differentiation, the customer is more likely to buy from their usual sources, or at the least, ignore an MSP who does not offer anything different, he says.

But the rewards are waiting for those MSPs who can prove what problems they solve and what makes them special, particularly when the MSP can show how the deal will work and how customers get value, he says. Research shows that product success and aggressive selling carry no weight with the customer, compared to laying out a vision for the customer’s own growth and success.

So a major part of the message at the Amsterdam event, and at the forthcoming London summit on 19 September, will be on marketing issues, with the aim of getting MSPs up to speed on the current best techniques in building sales pipelines, leveraging available marketing resources, and best practice in using social media.

Among the issues challenging MSP marketing teams is the fact that buying teams are large and extended, containing a variety of influencers, with decision-making spread throughout the organisation. Getting a consistent message through in this environment is a problem which MSPs themselves will be discussing at the London event, which will have speakers who have successfully promoted their message at the highest levels.

One secret about which more will be revealed is that MSPs need to align their businesses with their customers, so that a win for either is a win for the other. Being able to demonstrate this is a good deal-closer. Being able to lay out a convincing vision to improve the customer’s business and to offer this as a unique and critical perspective will win business, says Gartner.

Now in its eighth year, the UK Managed Services & Hosting Summit event will bring leading hardware and software vendors, hosting providers, telecommunications companies, mobile operators and web services providers involved in managed services and hosting together with Managed Service Providers (MSPs) and resellers, integrators and service providers migrating to, or developing their own managed services portfolio and sales of hosted solutions.

It is a management-level event designed to help channel organisations identify opportunities arising from the increasing demand for managed and hosted services and to develop and strengthen partnerships aimed at supporting sales. Building on the success of previous managed services and hosting events, the summit will feature a high-level conference programme exploring the impact of new business models and the changing role of information technology within modern businesses.

The UK Managed Services and Hosting Summit 2018 on 19 September 2018 will offer a unique snapshot of this fast-moving industry. MSPs, resellers and integrators wishing to attend the convention and vendors, distributors or service providers interested in sponsorship opportunities can find further information at: www.mshsummit.com

More and more businesses are appreciating the benefits that the cloud delivers in terms of scalability, flexibility, efficiency and cost. Big enterprises, for example, are now migrating mission-critical SAP workloads to Microsoft Azure, and with the growth in Azure revenue exceeding 90% for every one of the last four quarters, the faith placed in it by Microsoft CEO and cloud advocate, Satya Nadella, is paying off. By Matt Hilbert, Technical Writer, Redgate Software.

Where does this leave the database? The good news is that change is afoot for the SQL Server database world as well. The recent public preview of Azure SQL Database Managed Instance marks a significant step-change in Microsoft’s managed database service. It’s important because it elevates the simplified database-scoped programming model used in the first two flavours of Azure SQL Database, Single and Elastic Pool, to the instance level.

Managed Instance shifts the balance to the cloud by providing near 100% feature compatibility with on-premises SQL Server, yet also offering the benefits of a cloud service like built-in high availability, automated patching, dynamic scalability, and backup management with point-in-time recovery.

Organisations can therefore migrate existing on-premises SQL Server workloads to the cloud and retain the features they’re accustomed to, while also gaining many of the manageability benefits of a PaaS offering.

The promise is that an on-premises SQL Server database can be migrated by simply backing it up and restoring it to an Azure SQL Database Managed Instance. It will look and behave just as it did before, with security guaranteed because it will be isolated from other customer instances and assigned a private IP address.

Those are the headlines, but behind the friendly phrases like ‘lift and shift’ and ‘frictionless migration’ which are now being used, there are three questions that need to be answered before making the decision to migrate to Managed Instance when it moves from public preview to general availability:

1. Is your database compatible with Managed Instance?

It’s the talk about near 100% compatibility that’s the issue here. The words in large print are about supporting compatibility back to SQL Server 2008, and allowing for direct migration of database versions starting with SQL Server 2005.

The small print is slightly different because, while the on-premises and Azure versions of SQL Server are built on the same engine, there remain some variations in the features and capabilities that are supported.

Fortunately, Microsoft’s Data Migration Assistant provides a simple way to identify compatibility issues. While it doesn’t yet support Managed Instance as a migration destination, it can still be used to perform a SQL Server migration assessment. Any breaking changes, differences in behaviour, and deprecated features will be shown, together with details of the affected objects, along with any migration blockers that have been identified.

This is the perfect starting point for a migration plan because, before any real time or effort is spent weighing up the long term pros and cons of moving to Azure, the real impact on the database itself can be assessed.

2. What’s the cost of migrating to Managed Instance?

As anyone who has embraced the cloud knows, there are two immediate areas where savings can be gained by migrating from on-premises.

Firstly, it moves the CapEx cost of hardware that will need to be maintained and upgraded regularly to the OpEx cost of a service model, which helps balance the books and will please Financial Directors.

Secondly, and of particular interest to fast-growing startups or companies with fluctuating server-side demand, the CPU or storage can be increased or decreased in seconds via the Azure portal using an online slider.

That’s the upside. The direct cost savings, however, are not as large as might be expected. Given the availability, scalability, and backup advantages of PaaS, Microsoft has priced Managed Instance accordingly, and the current monthly costs are:

(These prices are for the East US region, as provided by Microsoft at the time of writing this article, June 2018)

Two things to add. There’s a 40% saving under Microsoft’s Azure Hybrid Benefit for SQL Server program which lets companies use their existing SQL Server licenses with Software Assurance to reduce the standard rates. So the 8-core pricing would fall to ~$444.39 per month.

And Microsoft itself adds the following caveat: “Managed Instance is in public preview. Prices reflect preview rates, which are typically 50% of general availability rates”.
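To make the arithmetic behind these two caveats explicit, here is a small Python sketch. It treats the 40% Azure Hybrid Benefit as a flat reduction, as the text above does, and assumes the preview rate is exactly 50% of the eventual general availability rate, which Microsoft only describes as typical. The function names are hypothetical, and the standard 8-core rate is back-calculated from the ~$444.39 figure rather than taken from a published price list.

```python
def apply_hybrid_benefit(standard_rate, saving=0.40):
    """Monthly rate after a flat percentage saving (assumed 40%)."""
    return round(standard_rate * (1 - saving), 2)


def implied_standard_rate(discounted_rate, saving=0.40):
    """Back-calculate the standard rate from a discounted one."""
    return round(discounted_rate / (1 - saving), 2)


def projected_ga_rate(preview_rate, preview_fraction=0.50):
    """Estimate the general availability rate if preview is a fixed fraction of it."""
    return round(preview_rate / preview_fraction, 2)


discounted_8_core = 444.39  # figure quoted in the article (preview, with Hybrid Benefit)
standard_8_core = implied_standard_rate(discounted_8_core)
ga_estimate = projected_ga_rate(discounted_8_core)

print(f"Implied standard preview rate: ${standard_8_core:.2f} per month")
print(f"Rough GA estimate with Hybrid Benefit: ${ga_estimate:.2f} per month")
```

Under these assumptions the discounted rate could roughly double at general availability, which is why the preview figures should be treated as a floor rather than a budget.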

So look at the costs carefully before making a firm commitment, and also find out what the additional costs for I/O requests and backups are, once Managed Instance moves to general availability.

3. Do your third party tools support Managed Instance?

Many SQL Server developers and Database Administrators use third party tools to help in areas like writing and sharing code, implementing version control, provisioning database copies for development and testing, and monitoring. Some go further and use the tools to integrate and align database development with application development, reducing errors and speeding up deployments.

Microsoft actively encourages it too, because having a rich ecosystem of third party vendors to enhance, ease and extend the capability of SQL Server increases its appeal.

The need for those tools exists whether databases are on-premises or migrated to Managed Instance. So if you have favourite tools, the last item on the checklist is to ensure they support Azure.

This is particularly true for mixed estates, where you might want to migrate servers with fluctuating demand to the cloud so that additional capacity can be added only when required, while keeping other databases on-premises to maximise the investment in the current infrastructure. Here, you’ll want to work in the same way as before, wherever your data is.

So if your database truly is compatible, if the costings work for you, and if you can take your favoured tools with you, the question isn’t whether you should migrate your SQL database to the cloud through Managed Instance, it’s why you haven’t done it already.

The Ocado founders were way ahead of their time when, back in 2000, they started the UK’s only ‘pure play’ online grocery operator. Today Ocado is the world’s largest dedicated online grocery supermarket, exceeding £1 billion in annual sales. Developing the innovative software and systems that power the online retail business is Ocado Technology, a division of software developers, engineers, researchers, and scientists spread across five offices: Hatfield (UK), Wroclaw and Krakow (Poland), Sofia (Bulgaria), and Barcelona (Spain).

Ocado delivers happiness when its drivers trudge through the snow delivering Christmas orders; indeed, this going-the-extra-mile attitude is what keeps its 600,000 customers coming back for more. But when you consider the Ocado motto ‘shopping made easy’, there is nothing easy about delivering on that promise. In a mind-bogglingly complex process, a typical Ocado warehouse packs and ships more than one million items each day, supported through a technology platform built in house. Additionally, the Ocado family now also includes Fetch (a specialist online pet store) and Sizzle (homeware), both firmly based on the technology platform built by Ocado Technology. E-commerce sites such as Morrisons and Fabled, a beauty destination site in partnership with Marie Claire, are more recent success stories for Ocado Technology.

In parallel, Ocado Technology is also developing the Ocado Smart Platform (OSP), an end-to-end e-commerce solution designed to put other brick-and-mortar retailers around the world online. From its user-friendly mobile apps and webshop to highly efficient automated warehouse technology, OSP offers superior customer experience and a highly efficient end-to-end supply chain solution.

Ocado now offers OSP as a managed service capability to partners internationally, enabling them to build sustainable, scalable, and profitable online grocery businesses in their own markets.

Cloud move demands increased visibility

In 2014 Ocado found itself in a transitional period. Demands on its business were increasing and Ocado felt that a move to a cloud infrastructure would give the company more flexibility and scalability. Amazon Web Services (AWS) was chosen as the target environment.

Peter Thomas, head of E-Commerce OSP at Ocado Technology, shares his insight: ‘One of the challenges was that the dashboards we had developed to monitor the various sites and the platform behind it were not compatible with AWS and needed to be replaced. I had used New Relic technology before and wondered if that might be our answer.’

New Relic’s Digital Intelligence Platform was introduced to support the cloud migration, including integrating well over 100 microservice applications into the OSP platform. New Relic acted very much as a ‘security blanket,’ as Thomas termed it: ‘New Relic APM provided the extra visibility into our systems and platforms, which we needed. It gave us confidence that our migration was going as expected, and, if not, we were in a very good position to address any issues early. We learned lots about cloud architecture, and it was great having New Relic there to reassure us nothing was quietly going wrong behind the scenes.’

New Relic became more pervasive within Ocado and is now often used to pinpoint the root cause of any performance issues. A notable example was when one of the OSP backend systems showed a gradual performance degradation that was in danger of impacting customer orders. Customer satisfaction is vitally important for Ocado, and a lot of effort was spent on finding out what was wrong. Confusingly, different systems that were seemingly unconnected were affected. It wasn’t until an engineer used New Relic Insights to represent the problem that it became clear that although the database systems were all different, they were hosted on the same physical machine, which was the root cause of the problem.

‘New Relic made it easy for us to visualise and track this issue in real time’, says Thomas. ‘It helped resolve this particular problem a lot faster than we could otherwise have done.’

Better collaboration for a better customer experience

Ocado Technology works with a globally distributed team of 1,000 developers, all working on different parts of the estate simultaneously. New Relic is used to provide a central overview and a common communication tool. Thomas loves that the features to enable this effective team collaboration come straight out of the box with New Relic: ‘We’ve tried other products which needed a lot of configuration. As soon as you start customising the solution with so many teams, it becomes very difficult to manage and maintain. New Relic did an excellent job at giving us some very sensible defaults which we could easily build on.’

It wasn’t just the internal collaboration that made New Relic a success within Ocado; the New Relic team itself took an active interest in how Ocado used the toolset. A centre of excellence was introduced, consisting of New Relic and Ocado experts. As a team, they discuss the New Relic road map and what other features could prove useful. Having that deep insight into how OSP operates has enabled this team to really add value to Ocado.

New Relic visualisation supports development effort

When Ocado rebuilt the frontend of some of its non-food sites to become more customer-responsive, New Relic Browser proved invaluable to the experience. ‘The general trend we’re seeing is that code is moving from the backend to the frontend’, says Thomas. ‘Most other solutions don’t seem to take this into account, but New Relic Browser enables us to see what’s actually happening within our customers’ browsers. It gives us confidence that we are building solutions which work for our customers in the real world, and that if they don’t, we’ll know about it straightaway. You can’t get this kind of insight just from looking at your server logs.’

The combined data from New Relic APM and Browser has been used to highlight performance issues, and identify where problems originate so they can be addressed.

New Relic Insights, meanwhile, delivered solid ROI in one of Ocado’s warehouses. These highly automated customer fulfilment centres pack and ship more than one million items a day from a stock of over 50,000 different grocery items. A part of the warehouse suffered a broken sensor that was reporting an exception, even when this wasn’t accurate. The systems are event-driven, so without counting the frequency of these exceptions Ocado had no way of identifying this issue. Through the power of New Relic Insights, alerts, and visualisation, the issue was quickly identified and passed over to engineering for fixing. The storage capacity that was lost due to the error is worth £35,000 per year in real labour costs. It showed Ocado the potential for making incremental gains through the New Relic Digital Intelligence Platform. Overall, improving automation and monitoring capabilities with New Relic has realised savings of £100,000 per year.
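The idea of counting exception frequency in an event-driven system can be sketched in a few lines of Python. This is not how New Relic Insights works internally and not Ocado's implementation; the event fields, the sensor names and the "five times the median" threshold are hypothetical choices made purely to illustrate the technique.

```python
from collections import Counter


def noisy_sources(events, threshold_ratio=5.0):
    """Return sources whose exception count exceeds threshold_ratio times the median count."""
    counts = Counter(e["source"] for e in events if e["type"] == "exception")
    if not counts:
        return {}
    ordered = sorted(counts.values())
    median = ordered[len(ordered) // 2]  # upper median when the number of sources is even
    return {src: n for src, n in counts.items() if n > threshold_ratio * median}


# Illustrative event stream: one sensor reports far more exceptions than its peers
events = (
    [{"source": "sensor-A", "type": "exception"}] * 2
    + [{"source": "sensor-B", "type": "exception"}] * 3
    + [{"source": "sensor-C", "type": "exception"}] * 40
    + [{"source": "sensor-A", "type": "item_stored"}] * 100
)

print(noisy_sources(events))
```

Each individual exception looks unremarkable; it is only the aggregated count per source that makes the faulty sensor stand out.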

Thomas concludes: ‘The world is changing fast and the relentless growth of online shopping is powered by improved technology, faster broadband, and better mobile devices. With the New Relic Digital Intelligence Platform, we feel prepared to take on these challenges and deliver on our motto “shopping made easy” to provide the superior customer experience we are best known for.’

Progressive business leaders understand the need to establish operational strategies that integrate mobile device analytics, so that data-driven decisions can be made to improve performance, security and cost control.

Setting free your mobile data

Essentially, any solution that addresses mobile network analysis seeks to collect and organise real-time data on mobile deployments; this will typically include things such as performance, security, connectivity and behaviour. What makes this useful for any business is that the data collected allows device policies and workflows to be tuned, increasing employee productivity, compliance and ROI.

Another way to address an organisation’s unique needs is to build customised alerts, queries and dashboards. In this way you could, for example, track data usage and performance issues in real-time and be notified of changes in data usage patterns which could be symptoms of a data surplus or perhaps, a security breach.
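A customised alert of this kind might look something like the Python sketch below, which flags days on which a device's data usage deviates sharply from its own recent baseline. The seven-day window, the z-score threshold and the usage figures are assumptions chosen for illustration, not settings from any specific analytics product.

```python
from statistics import mean, stdev


def usage_alerts(daily_mb, window=7, z_threshold=3.0):
    """Flag days whose usage deviates strongly from the preceding window of days."""
    alerts = []
    for i in range(window, len(daily_mb)):
        baseline = daily_mb[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: no meaningful deviation score
        z = (daily_mb[i] - mu) / sigma
        if abs(z) >= z_threshold:
            alerts.append((i, daily_mb[i], round(z, 1)))
    return alerts


# Illustrative daily usage in MB for one device; day 8 is a sudden spike
usage = [100, 104, 98, 101, 99, 103, 97, 102, 480, 100]

for day, mb, z in usage_alerts(usage):
    print(f"Day {day}: {mb} MB (z-score {z}) - investigate")
```

A spike like this could be an innocent change in working pattern or a sign of a compromised device, so the alert is a prompt for investigation rather than a verdict.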

Organisations can begin to make data-driven decisions around mobile deployments using mobile device analytics. This can be done in a number of different ways, including:

Why is a mobile data strategy important?

The competition for bandwidth between applications and mobile endpoints is currently multiplying exponentially.

Organisations need to analyse real-time performance and proactively manage employee workflows and behaviour in order to maximise the return on their mobile investments - this is in the face of the high cost per gigabyte of wireless data combined with premium-priced mobile devices.

As large organisations continue to devote significant portions of their annual IT budgets to mobile initiatives (hardware, applications, services or network infrastructure), the ability to analyse how these mobile assets perform in real time will be fundamental. Mobility needs to be optimised as we see operational intelligence markets converging. Mobility in general is outgrowing the need to be simply managed.

Solutions that provide real-time mobile device analytics allow business leaders crucial visibility into their large technology investments. In turn, this insight can lead to a positive impact to the bottom line - an important consideration for any organisation.

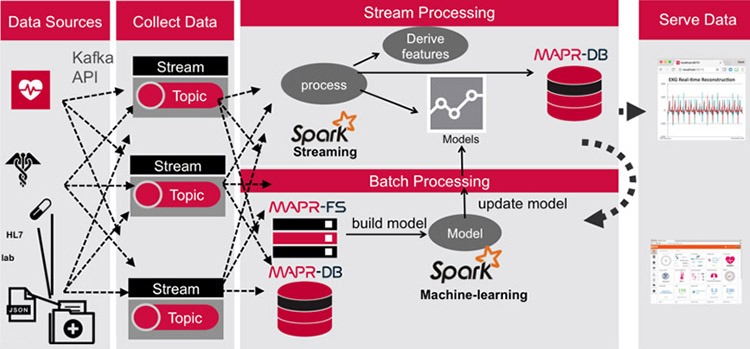

From automobile manufacturers to oil and gas companies, businesses across the globe are placing big bets on “industrial IoT” to derive real business value from outcomes like predicting equipment failures, avoiding accidents, improving diagnostics, and more. Unlike consumer IoT where the volume of data generated by each device is typically low, industrial IoT sources create a significant amount of data. Unfortunately, many of these deployments are hamstrung by technical constraints and other limitations that prevent businesses from maximising their IoT investment returns.

Many of the use cases require the collection of sensor data from edge devices that is sent over a network connection to a centralised application for analysis before an action is carried out, often back at the edge. This classic input, process and output methodology is well understood, but any IoT environment can be a data management challenge because of the huge volumes of data that are created and the latencies inherent in having global distribution. However, the case is not cut and dried and, in some instances, processing data close to the source is a better option.
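One way to picture processing close to the source is an edge device that reduces each batch of raw readings to a compact summary and forwards only that summary, plus any out-of-range values, to the central application. The Python sketch below is a simplified illustration under that assumption; the function name, the field names and the temperature limit are hypothetical.

```python
def summarise_at_edge(readings, limit):
    """Reduce a batch of raw sensor readings to a small summary before upload."""
    if not readings:
        raise ValueError("cannot summarise an empty batch")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": sum(readings) / len(readings),
        # Only out-of-range readings are forwarded in full
        "breaches": [r for r in readings if r > limit],
    }


# Illustrative batch of temperature readings with one value above the limit
batch = [70.1, 70.4, 69.8, 95.2, 70.0]
summary = summarise_at_edge(batch, limit=90.0)
print(summary)
```

The trade-off is that the central application no longer sees every raw value, so this approach suits cases where bandwidth or latency matters more than full-fidelity history.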

Bigger IoT data

The challenges of aggregating data from consumer-oriented devices, like wearable technologies and smart thermostats, are well understood. For those types of devices, the volume of data is due to the large number of devices, and each individual device doesn’t necessarily create much data. However, there is a new set of challenges for IoT devices that generate megabytes or gigabytes of data per second. For example, real-time analysis of audio, video and other rich sensor data involves incoming streams that could overwhelm traditional data storage architectures. Vehicles, medical devices, and oil rigs are perfect examples of data sources that need a much more powerful architecture than consumer-oriented devices do. And as these IoT data streams reach the centralised clouds for processing, it will increasingly be Artificial Intelligence and Machine Learning that help to find insights and generate the subsequent actions.

Healthcare example

However, talking in the abstract when it comes to IoT is difficult as each use case will have different drivers and requirements. Instead, let’s look at a few concrete examples as a proxy for the types of challenges that are involved. The early detection and treatment of chronic diseases, such as heart disease, can save lives and reduce the cost of healthcare. Two of the biggest issues are coordination of care and preventing hospital admissions for people with chronic conditions. Several trials are using cheaper sensors that can monitor patients’ vital signs and send this data along with Electrocardiogram (ECG) readings over cellular networks as a regular stream to applications in the cloud. These diagnostic and monitoring applications analyse each patient’s vitals and ECG readings while considering historical data from medical records. The flow of data into the system includes real-time streams, historical data, patient data and benchmark data created by aggregating huge volumes of previous scans from other patients.

In this example, like many others within the IoT landscape, the clinicians require a workflow that collects data, aggregates it, and learns across a whole population of devices to understand events and situations. In this scenario, the detection of an anomaly such as over-medication or warning signs of an imminent cardiac event may require more intelligence at the edge so that devices can react to those events very quickly. The researchers have built a platform that uses common elements to process both stream and batch data within a common data fabric that can help handle all the data in the same way, control access to the data, and apply intelligence in a high-performance and scalable fashion.
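To make the idea of intelligence at the edge more concrete, here is a minimal sketch of how a monitoring device might flag an unusual reading locally rather than waiting for a round trip to the cloud. It is an illustration only, not the researchers’ platform: the class name `EdgeAnomalyDetector`, the window size, the threshold and the sample heart-rate values are all assumptions, and a real clinical system would need far more rigorous methods.

```python
from collections import deque
from statistics import mean, stdev


class EdgeAnomalyDetector:
    """Hypothetical detector: flags readings far from a rolling baseline."""

    def __init__(self, window=30, threshold=3.0):
        # Keep only the most recent `window` normal readings on the device.
        self.readings = deque(maxlen=window)
        # Number of standard deviations that counts as anomalous (assumed).
        self.threshold = threshold

    def check(self, value):
        """Return True if `value` is anomalous relative to recent readings."""
        anomalous = False
        # Wait for a few readings before judging, so the baseline is stable.
        if len(self.readings) >= 5:
            baseline = mean(self.readings)
            spread = stdev(self.readings)
            # If every recent reading is identical (spread of 0), nothing
            # is flagged; a known limitation of this simple approach.
            if spread > 0 and abs(value - baseline) > self.threshold * spread:
                anomalous = True
        # Anomalies are kept out of the baseline so they cannot distort it.
        if not anomalous:
            self.readings.append(value)
        return anomalous


detector = EdgeAnomalyDetector()
heart_rates = [72, 74, 71, 73, 75, 72, 74, 140, 73]  # illustrative values
flags = [detector.check(bpm) for bpm in heart_rates]
print(flags)
```

In this sketch only the flagged reading would need an immediate local reaction; the full stream could still be sent on to the central platform for the population-wide learning described above.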

More at the edge

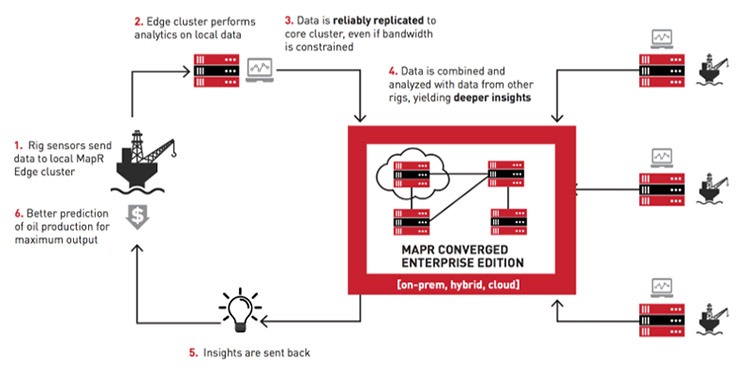

However, in some use cases, sending all the data back to the cloud for analysis is impractical or inefficient. Increasingly, the industrial IoT requires computing power to be available close to the data sources. Take, for example, oil production. With crude oil prices at historic lows, oil and gas companies are actively looking for ways to cut production costs and streamline their operations.

These companies are investing heavily in technology, including IoT, to improve their bottom line. For example, sensors attached to various parts of oil rigs are being used to better predict oil production and to position drills for maximum output. Yet seismic data and oil rig sensors generate large quantities of data, with some estimating close to 1.5 TB (from 19 million readings) per day. Oil rigs are often in remote locations with limited bandwidth, preventing global aggregation and analysis of the data. An example of this includes the work carried out by Royal Dutch Shell that realised a $1 million return on an $87,000 investment in digital technology to monitor oilfields in some of Nigeria’s toughest terrain.
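A quick back-of-the-envelope calculation shows why those volumes are a problem for a remote site. The sketch below uses only the figures quoted above, and assumes decimal units (1 TB = 10^12 bytes) and data spread evenly across the day; the 10 Mbit/s link speed is a purely hypothetical figure chosen for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_mbit_per_s(bytes_per_day):
    """Average link speed needed to move a day's data within the day."""
    return bytes_per_day * 8 / SECONDS_PER_DAY / 1e6


daily_bytes = 1.5e12           # 1.5 TB per day, decimal units assumed
readings_per_day = 19_000_000  # 19 million readings per day

required_mbit = sustained_mbit_per_s(daily_bytes)
avg_reading_kb = daily_bytes / readings_per_day / 1e3


def fraction_uploadable(link_mbit_per_s):
    """Share of the daily volume that a given link could carry."""
    return min(1.0, link_mbit_per_s / required_mbit)


# Return multiple for the Shell example: $1 million on $87,000.
return_multiple = 1_000_000 / 87_000

print(f"Sustained bandwidth needed: {required_mbit:.1f} Mbit/s")
print(f"Average size per reading: {avg_reading_kb:.1f} KB")
print(f"Share a 10 Mbit/s link could carry: {fraction_uploadable(10):.1%}")
print(f"Return multiple: {return_multiple:.1f}x")
```

On these assumptions, a rig would need a sustained link of roughly 139 Mbit/s just to keep up, which is why filtering and analysing data at the edge becomes attractive.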

Privacy concerns

The choice to process at the edge or bring into the core should also be tempered by privacy or compliance requirements—such as those driven by data residency regulations that might dictate that some data needs to stay at the IoT edge and not be copied to other locations for further processing. Enforcement of these policies is problematic, since there is often no good way of differentiating between data that needs to stay put and data that can be moved around. In other cases, data can and should be processed at a more central cluster with bigger compute resources, where deeper analytics can be performed on data coming in from many different edge devices. Consider this situation in the context of telemedicine, for example. While the output of medical devices at one edge can be used to achieve some basic diagnosis, more accurate diagnoses can be achieved by analysing output from many such medical devices—potentially spread throughout the world. So, it would be important to aggregate and analyse this output in a central, more powerful cluster and return the results immediately back to the medical centre.

Convergence and fabrics

Healthcare researchers and petrochemical firms are just two groups that are examining new ways to build next-gen apps. At the heart of these projects are several common technologies, from cloud-scale data stores to powerful databases and integrated persistent streaming, which create new possibilities for enterprise developers looking to architect, develop and deploy applications that were impossible until now. The combination of these elements is often called a converged data platform and is starting to be adopted across a wider range of IoT use cases. These platforms provide benefits including the creation of a high-IOPS, low-latency data fabric for high-performance computing apps. Another advantage is in real-time analytics scenarios where a data fabric can simultaneously ingest, store, analyse, process, and decide, without making copies of data. As IoT data moves from the edge to the cloud and back again, organisations will need to forget the monolithic architectures of the past and consider convergence as the starting point to deliver the scale needed for innovative new use cases.

Businesses are demanding more from their IT departments — more innovation, more change, greater agility and speed — and a programme of migration to the cloud is the enabler.

Yet upscaling to this extent requires a change in mindset, and not only for the IT function. Mass migration will have an impact on the whole organisation. So if your IT department doesn’t want to be left behind in the race to digital transformation, then make sure you take these five critical business groups with you on your journey.

1. Finance

Migration to the cloud will have a big impact on finance. This isn’t just in relation to spending on IT, but also to the financial model that is used across the wider business and the CapEx to OpEx implications that come as a result of a ‘consume as you go’ approach.

CFOs will have the option to attribute any consumption in the cloud directly to a line of business, rather than putting ‘IT services’ against an overhead line. Now the infrastructure can be related directly to the business application, giving much more visibility into the costs of running that product or department.
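As a rough sketch of how that attribution could work in practice, the example below totals cloud usage records by the line of business they are tagged with. The record layout, the `business_line` tag name and the figures are assumptions made for illustration; they do not reflect any particular provider’s billing format.

```python
from collections import defaultdict


def costs_by_business_line(usage_records, tag_key="business_line"):
    """Sum usage costs per line of business, based on resource tags."""
    totals = defaultdict(float)
    for record in usage_records:
        # Untagged resources fall into an 'unallocated' bucket so that
        # finance can see what still sits in the general overhead line.
        owner = record.get("tags", {}).get(tag_key, "unallocated")
        totals[owner] += record["cost"]
    return dict(totals)


# Hypothetical monthly usage records.
usage = [
    {"service": "compute", "cost": 1200.0, "tags": {"business_line": "retail"}},
    {"service": "storage", "cost": 300.0, "tags": {"business_line": "retail"}},
    {"service": "database", "cost": 450.5, "tags": {"business_line": "claims"}},
    {"service": "compute", "cost": 80.0, "tags": {}},
]

report = costs_by_business_line(usage)
print(report)
```

The size of the ‘unallocated’ bucket is a useful measure in its own right, since it shows how much spend cannot yet be tied back to a product or department.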

What’s new for Chief Financial Officers? It’s a much less predictable purchase process that must take into account the value of the investment to the business as a whole.

2. Procurement

The cloud presents procurement with the challenge of completely re-thinking an established way of working. Used to dealing in supply chains, procurement buys at a massive scale, with discounts front of mind. Infrastructure is no different, with everything typically bought upfront for the next five (or more) years.

But the rules of engagement for procurement change for a cloud migration. The focus is on having the flexibility to buy only what is needed, when it’s needed, under a master agreement. The key is to help procurement understand that ambiguity is a good thing during the initial cost-setting phase: the risk is much smaller than with physical infrastructure because you’re optimising and fine-tuning continuously.

The procurement team is invaluable to you as there will likely be current licensing in place for legacy applications where renegotiation will be required. Work alongside your procurement team and allow them to play to their strengths of negotiation and generating value.

What’s new for Chief Procurement Officers? With the cloud, you’re not bulk buying at a discount; the real savings come from re-architecting how you work with public cloud providers.

3. Risk and compliance

Under a cloud model, business continuity and disaster recovery (DR) change massively. You move into a world where DR is configured as exact copies via scripts and sits idle, costing a minimal amount until your organisation needs that capacity.

It also changes the business dynamic on security and removes a big compliance issue. Hyperscale providers are highly certified and invest heavily in security in a way that few organisations could with their own infrastructure. Risk mitigation is built into the cloud offering, with continuous refreshing of patches and underlying hardware, high-level encryption, with auditing carried out at source. The job for the CIO is to focus on how the building blocks of a mass migration to the cloud are put together so that any configuration does not leave security holes.

What’s new for Compliance? The cloud means you need to move from a ‘test’ to a ‘trust’ mindset, which can require a huge cultural shift.

4. Corporate governance

The shift from CapEx to OpEx that comes with the cloud is one that needs to be controlled. For CapEx outlays, controls are seldom required after purchase, but for cloud-based services costs can fluctuate and there’s a risk these will nudge up over time if they are not monitored or continuously optimised.

It’s a risk that needs to be managed at a corporate level, as it’s difficult to forecast accurately without the right governance. Outcomes need to be monitored against the original intent and optimised as you go.
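One simple way to picture this kind of monitoring is a check that flags any month in which cloud spend drifts beyond an agreed tolerance over budget. The sketch below is illustrative only: the function name `flag_overruns`, the 10 per cent tolerance and the spend figures are assumptions, not a recommended policy.

```python
def flag_overruns(monthly_spend, monthly_budget, tolerance=0.10):
    """Return the months where spend exceeds budget by more than tolerance."""
    limit = monthly_budget * (1 + tolerance)
    return [month for month, spend in monthly_spend.items() if spend > limit]


# Hypothetical spend that nudges up over time against a 10,000 budget.
spend = {"Jan": 9800, "Feb": 10400, "Mar": 11200, "Apr": 12100}

overruns = flag_overruns(spend, monthly_budget=10000)
print(overruns)
```

A check like this does not replace governance, but it gives the corporate governance team an early signal that consumption needs to be reviewed or optimised.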

Your public cloud consumption is governed by a master agreement and legal should ensure this is adequate upfront. However, if additional internal governance rules to use cloud are too strict and there is an approval route for every element of a project, this will inhibit the ability for the IT team to innovate quickly. IT needs to work with corporate governance teams to strike the right balance; delegating authority and monitoring effects to balance control with innovation.

What’s new for corporate governance officers? Ensuring compliance with cloud procurement framework requirements (such as G-Cloud for public sector organisations) and building flexibility so you can balance control with innovation.

5. HR

Under a cloud mass migration, the traditional roles within IT will change. Thanks to automation, some roles, such as testing, will reduce and there’s a requirement for far greater commercial awareness. Everything you ‘scale up’ suddenly has a cost attached to it and technicians can lose sight of this.

People will need to reskill to understand the services in the cloud and how to manipulate them. For many, this reskilling creates the opportunity to grow into a new role. Yet it is culturally that mass migration will force a significant change, especially in organisations that are particularly bureaucratic. Working with HR will be vital to manage such a cultural shift for established teams, communicating what this means for the department, promoting the opportunities it offers and ensuring a supportive and enjoyable working environment.

What’s new for the Chief People Officer? The provision of training will be key, not just in cloud architecture but also in management skills. While it is tempting to play down the changes required via internal communications, get advice from your cloud or integration partners on how upskilling has worked in other companies. If they’re worth their salt, they should be able to provide the training.

As with anything important, success does not happen in a vacuum; efficient collaboration is vital and moving your business en masse to the cloud is no different. My advice to CIOs is to be mindful of the priorities and pressures of your peers in other functions.

The cloud gives the CIO the opportunity to create a new operating model for their part of the business and beyond. It requires an agile way of working from those who may be new to the concept. Not everyone will feel empowered to embrace it so the sooner you can educate them on what’s required from their teams, the sooner you can all begin heading in the same direction, in the same way.

The results of his experiment betrayed a fundamentally broken system. The authors were only successful in 39 of their 100 attempts. In other words, 61 per cent of the published studies they tested could not be replicated. To the research community, these results didn’t come as much of a surprise.

Perhaps the most serious – and certainly the most infamous – case of falsified research was the MMR vaccine controversy: a study in The Lancet linking the combined measles, mumps, and rubella (MMR) vaccine to colitis and autism spectrum disorders. While the paper was eventually retracted with the revelation of multiple undeclared conflicts of interest as well as manipulated evidence, the damage had already been done; its quackery had been widely reported and had already reached the minds of the general public. A small but noteworthy subsection of society continues to cling to it as truth.

Admittedly, this is an extreme example, but it demonstrates a valuable lesson: the scientific ecosystem is extraordinarily slow to redress errors. Flawed literature can and does slip through the editorial and peer review process. By the time bad papers are disproved, they’ve already metastasised into new research, causing irreparable damage. The world’s ten most ‘popular’ retracted papers have been cited on over 7,500 occasions, and that’s a conservative estimate. Research, including bad research, spreads like wildfire.

The phenomenon is partly inherent to science itself. The scientific ecosystem is fragile – built painstakingly upon the results of older science. It’s a wholly a posteriori network. Citations proliferate. That level of interdependence is eminently useful, but also makes it vulnerable. People can’t cross check each and every hypothesis that leads to their own hypothesis because of the time-pressure to publish.

But science’s inter-reliant makeup is also an opportunity. It’s a system ripe for disruption - highly suitable for a technology that has only been around for about a decade: blockchain.