So, it’s finally here. GDPR has, no doubt, turned your life upside down. In the run-up to the 25 May deadline, I received a steadily growing stream of GDPR emails, ending in a manic frenzy on 24 and 25 May, as organisations of all shapes and sizes told me what the regulation meant for them. Their conclusions ranged from never, ever contacting me again unless I asked them really nicely, to carrying on much as before but, if I was concerned, inviting me to click on a link and read their updated data compliance/security policy. If I learnt one thing during the whole GDPR process, it was that no one really seemed to know what they should or should not be doing!

On the understanding that GDPR was not designed to prevent companies doing business with each other, which clearly requires some form of communication – frequently electronic – it seems safe to say that the companies that confidently informed me they could no longer contact me unless I gave them permission were being over-cautious. Or has GDPR killed the idea of sales for good?! Are there offices full of sales folk who know they need to contact potential customers in order to make sales, but are afraid to do so in case those customers object? I really hope not.

The most sensible emails I received were ones along the lines of: ‘We’re pretty sure that, as we’ve been contacting you over the past x years, there’s a legitimate business relationship that exists between us. This being the case, we’re going to carry on contacting you, but if you don’t want us to do so, just let us know and we’ll remove you from our database. Oh, and if you want to read our updated data privacy policy, you can do so here…’

Rightly or wrongly, I’m guessing that the companies who have, quite literally, binned all the contacts who did not reply to their email requests to opt in to their databases might just regret it. I suspect their time would have been better spent concentrating on other aspects of GDPR – most notably, their data breach notification policy (even if it has a marvellous, vaguely worded get-out clause) and how to deal with an individual’s request to view their personal record. Data breach management could just become an industry in itself, while processing requests to view personal records (now free of charge) could prove annoyingly time-consuming, especially if several requests need to be handled at the same time.

I’ll leave you to guess my views on whether GDPR has been well thought out and/or well executed, and hope that, if you work in the storage networking or wider ICT industry, you are happy that SNS still arrives in your inbox!

The Storage Networking Industry Association (SNIA), the Distributed Management Task Force (DMTF), and the NVM Express Inc. organizations have formed a new alliance to coordinate standards for managing solid state drive (SSD) storage devices.

This alliance brings together multiple organizations and their standards to address the scale-out management of SSDs, with the aim of improving the interoperable management of information technologies and enabling a holistic management experience.

The alliance's collaborative work will include the following standards:

In related news, the Storage Networking Industry Association (SNIA) and the OpenFabrics Alliance (OFA) have formed an alliance to collaborate on activities that advance remote access to persistent memory and the adoption of remote persistent memory as a mainstream technology. Remote access is important because modern applications span multiple systems, and this collaboration will bring the benefit of persistent memory to these applications.

Initial objectives for the collaboration between the SNIA Non-Volatile Memory (NVM) Programming Technical Work Group (TWG) and the OFA OpenFabrics Interfaces Working Group are to describe a series of usage models for remote persistent memory (e.g. resilience, disaggregation and shared information), and to define the application programming interfaces (APIs) needed to support those usage models.

Polish Credit Office, the largest credit bureau in CEE, commits to implementing Billon blockchain for storage and secure access to sensitive customer information.

Billon, the technology company that civilised blockchain, and the Polish Credit Office (Biuro Informacji Kredytowej - BIK), the largest credit bureau in Central and Eastern Europe, will implement blockchain for storage and secure access to sensitive customer information. Billon's blockchain technology will benefit the bureau through superior security, integrity and immutability of data. The fully GDPR-compliant solution guarantees total visibility, trackable history and full data integrity for any client-facing document, including banking records, loan agreements, insurance claims, telephone bills and terms & conditions.

BIK, owned by the largest banks in Poland including Pekao, ING, mBank, Santander and Citi, tracks nearly 140 million credit histories of over 1 million businesses and 24 million people. "Our cooperation with Billon is long-term. We believe that blockchain technology will transform client communications in the financial sector. Our solution will soon be expanded to include electronic delivery with active confirmation and remote signing of online agreements. It is also important that the solution meets legal requirements of a durable medium of information, as well as the EU GDPR requirements," said Mariusz Cholewa, President of BIK.

BIK and Billon developed the solution to serve as a durable medium of information, as defined by EU regulations and directives such as MiFID II and the IDD. Eight Polish banks participated in trials of the solution, which established that Billon's scalable blockchain architecture could publish over 150 million documents every month. This would be more than sufficient for even the largest institutions to move to paperless customer service.

The solution has been approved following extensive consultation with the Polish Office of Competition (UOKiK) and Data Protection Regulator (GIODO), making it one of the world's first Regtech-compliant blockchain solutions, and the only one with on-chain data storage and a mechanism enabling "the right to erase personal data". Currently, the only major alternatives are hardware-based archive solutions such as legacy WORM drives. Compared with these, Billon's solution offers a 30% saving in TCO, with minimal upfront costs.

"Our partnership is the start of a true revolution in information management. It is now possible to move away from the constraints of closed central databases to a democratic blockchain-based Internet where every user will be able to control their identity," explained Andrzej Horoszczak, CEO of Billon. "This solution provides the world's first GDPR-compliant blockchain platform that streamlines customer service processes and implements customer rights such as the "right to be forgotten". We're fixing the problem of consumer data control, creating a level playing field between individuals and corporations. The benefits could affect more than the financial sector, and we anticipate it will soon be adopted by industries such as telecommunications, insurance and utilities. Our cooperation is only the first step to introducing mass blockchain technology use for trusted document management."

With cloud computing firmly entrenched in current IT operations, organisations are turning their focus to optimised architectures and using the cloud as an enabler for new and emerging technologies, according to a new report from CompTIA, the world’s leading technology association.

CompTIA’s survey of more than 500 U.S. businesses finds that 91 percent of firms are using cloud computing in some form. Three-quarters of businesses have between one and five years of experience with cloud solutions. Six in 10 companies have more than 40 percent of their IT architecture in the cloud.

“At the same time that the percentage of cloud-based IT architecture is approaching critical mass, we’re seeing rising interest in cutting-edge trends that are largely driven by cloud computing,” said Seth Robinson, CompTIA’s senior director for technology analysis.

For example, 81 percent of companies say that the cloud has enhanced their efforts around automation.

“First and foremost, cloud computing allows users to widen the scope of technology possibilities, whether it’s accelerating existing plans or experimenting with new uses,” Robinson explained. “By engaging with cloud providers, they gain access to powerful new tools without having to make a full investment or build in-house skills.”

Adoption Momentum Accelerates

In earlier CompTIA research, organisations’ loose definitions or misunderstandings of what constituted cloud computing made it appear that adoption had slowed or stalled. The new report signals that many firms have come to a better understanding about what constitutes a real cloud offering and that cloud adoption momentum has picked up.

The clear majority of companies – 83 percent – have performed some type of secondary cloud migration. Most of these migrations involved a move of either infrastructure or applications to a second cloud provider, done to take advantage of better offerings and features (44 percent of migrating firms), better security (41 percent), lower costs (37 percent), or more open standards (35 percent).

Half the companies surveyed for the CompTIA report say they rely on a mix of cloud vendors and third parties for their cloud services. About 40 percent of firms primarily work directly with cloud vendors.

The Evolving IT Function

The role of internal IT staff was a major question mark during the early years of cloud adoption, with fears that those jobs would shrink in importance or even disappear.

“In most instances, the internal IT function has transformed to handle more strategic work as routine tasks are offloaded to cloud providers,” Robinson said. “But specific details of the internal IT role in a cloud-centric environment are still being determined.”

The most common changes that have occurred within internal IT operations are the creation of new policies (51 percent of companies surveyed) or the updating of existing procedures (50 percent) to account for the cloud. Security tops the list of policies that have been created or modified, with 71 percent of companies saying they have focused on new security practices as their cloud use has increased.

Security considerations lead the discussion about cloud skill-building within internal IT teams. New or improved cloud security skills were cited as a need by 69 percent of companies. Other entries on the skills-building list include app-specific knowledge (59 percent), virtualisation (53 percent), optimisation (52 percent), and performance analytics (52 percent).

Only 41% of privileged accounts are assigned to permanent employees of the business with the majority being made up of contractors, third-party vendors and resellers – indicating IT has less visibility of privileged account access.

Nearly half (44%) of data breaches in the last year involved privileged identity, according to a research report from Balabit, a One Identity business and a leading provider of Privileged Access Management and Log Management solutions. The report, titled IT Out of Control, also revealed that only two out of five (41%) of these privileged accounts are assigned to permanent employees, with the majority being made up of contractors, vendors and third parties. This is a problem that is getting worse, with 71% of businesses saying the number of privileged accounts in their network grew last year, and 70% expecting the number of accounts to grow even more this year.

The IT Out of Control eGuide is part of the Unknown Network Survey, which was conducted in the UK, France, Germany and the US. The survey reveals the attitudes of 400 IT and security professionals towards IT security, their experience of security breaches, their understanding of how and when breaches occur, and how they are trying to combat hackers and privileged account misuse.

When privileged accounts are misused in a data breach, it is often because a malicious insider has abused their access or a criminal hacker has hijacked the account through social engineering. Identifying the culprits afterwards can then be a near-impossible task. It should come as no surprise that IT teams have low confidence in their visibility of what is going on in their networks: only 48% believe they can account for all permanent staff’s privileged access and the data they have access to, and only 44% believe they can do the same for third-party vendors.

This has led to 58% of respondents saying their company must take security threats related to privileged accounts more seriously. Worryingly, 67% of respondents say it’s quite possible that former employees retain credentials and can access their old organisation’s network.

This highlights the urgent need for the board to recognise the risks of privileged account misuse. More privileged accounts have led to increased risks for organisations. Simultaneously, it has become increasingly difficult for IT managers to keep track of who is accessing what data files and applications. As a result, ensuring that trust is validated and verified has become an overwhelming undertaking. In the same way that trusted employees can turn on a business, so can a vetted outsider.

‘Privileged Identity Theft is a widespread technique in some of the largest data breaches and cyber-attacks. A wide range of organisations have fallen victim to sophisticated, well-resourced cyber criminals, but often these attacks are easy to carry out, through the use of social engineering techniques such as a simple phishing email,’ said Csaba Krasznay, Security Evangelist, Balabit. ‘Measures exist to mitigate the risks of the attack. Relatively straightforward process improvements combined with the correct technologies such as session management and account analytics can help detect compromised privileged accounts and stop attackers before they are able to inflict damage on organisations.’

Solutions such as privileged access management (PAM) can help. Unlike traditional security systems, which see IT managers relying on manual methods of privileged user management, PAM provides replicable processes to track and manage privileged credentials.
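The "track and manage" side of PAM can be pictured as a credential inventory that is regularly checked against current staff records, which also speaks to the concern that former employees may retain access. The Python below is a minimal, hypothetical sketch: the `PrivilegedAccount` record, the `flag_accounts` function and the 90-day rotation window are illustrative assumptions, not features of Balabit's or any other PAM product.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Iterable, List, Set, Tuple


@dataclass
class PrivilegedAccount:
    """Hypothetical inventory entry for one privileged credential."""
    name: str           # account identifier, e.g. "db-admin"
    owner: str          # person accountable for the credential
    last_rotated: date  # when the password/key was last changed


def flag_accounts(
    accounts: Iterable[PrivilegedAccount],
    active_staff: Set[str],
    today: date,
    max_age_days: int = 90,  # assumed rotation policy, not taken from the report
) -> List[Tuple[str, str]]:
    """Return (account, reason) pairs that need attention."""
    findings = []
    for acct in accounts:
        # Orphaned credential: the owner is no longer on the active staff list,
        # e.g. a former employee or a contractor whose engagement has ended.
        if acct.owner not in active_staff:
            findings.append((acct.name, "owner no longer active"))
        # Stale credential: not rotated within the policy window.
        if today - acct.last_rotated > timedelta(days=max_age_days):
            findings.append((acct.name, "overdue for rotation"))
    return findings


inventory = [
    PrivilegedAccount("db-admin", "alice", date(2018, 5, 1)),
    PrivilegedAccount("fw-root", "bob", date(2018, 1, 2)),
]
print(flag_accounts(inventory, active_staff={"alice"}, today=date(2018, 6, 1)))
# [('fw-root', 'owner no longer active'), ('fw-root', 'overdue for rotation')]
```

In this example `bob` has left and his credential was last rotated 150 days earlier, so `fw-root` is flagged on both counts, while `db-admin` passes.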

When it comes to an effective security strategy, there are three pillars of defence that need to be taken into account. The first line of defence should be Password Management tools which protect privileged credentials. The second should be Privileged Session Management, which continuously monitors privileged accounts to identify anomalous activity. The third pillar should then be Privileged Account Analytics, a continuous verification of users, based on behaviour. Security teams can then identify whether a privileged account has been hijacked or if a trusted insider has turned malicious.
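Privileged Account Analytics compares what an account is doing now with how it normally behaves. As a deliberately simplified, hypothetical illustration, the Python sketch below scores a single feature (hour of login) against the account's own history using a z-score. Real analytics tools combine many more signals; the `is_anomalous_login` name and the 3.0 threshold are assumptions made for this example.

```python
from statistics import mean, pstdev
from typing import Sequence


def is_anomalous_login(history_hours: Sequence[int], login_hour: int,
                       threshold: float = 3.0) -> bool:
    """Flag a login whose hour of day is far from this account's usual pattern.

    Uses a z-score against the account's own history. Hours are treated as
    plain numbers, so a shift across midnight (23:00 to 01:00) is not handled.
    """
    if len(history_hours) < 2:
        return False  # too little history to form a baseline
    mu = mean(history_hours)
    sigma = pstdev(history_hours)
    if sigma == 0:
        return login_hour != mu  # any deviation from a perfectly regular pattern
    return abs(login_hour - mu) / sigma > threshold


usual = [9, 9, 10, 10, 9, 10, 11, 9]  # an admin who normally logs in mid-morning
print(is_anomalous_login(usual, 10))  # False
print(is_anomalous_login(usual, 3))   # True
```

A flag like this would not prove the account has been hijacked; in the three-pillar model it is the trigger for a security team to look more closely at the session.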

Nowadays, cyber breaches are coming from all directions. Businesses must be able to protect themselves from threats at home as well as those from the unknown corners of the internet. But with the proliferation of third-party partners, contract workers, remote working and BYOD policies, protecting an organisation is now a borderless challenge.

New analysis finds that businesses with over 6,000 records face risk of economic loss without cyber defences, but that the likelihood of a data breach varies between industries.

New analysis from NCC Group has found that businesses with over 6,000 data records face a higher risk of economic loss without adequate cyber security defences in place.

The cyber security and risk mitigation expert looked into the average cost of cyber security across multiple sectors in one year, including staff, hardware and software, against the average UK cost of a single data breach, which is £120 per record, according to The Ponemon Institute. It found a theoretical cut-off point at which the cost of a single breach exceeded this cyber security cost, which occurred where businesses held between 5,000 and 6,000 records.
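The comparison comes down to simple arithmetic: a single breach costs roughly the number of records multiplied by £120, and the cut-off is the record count at which that figure overtakes a year's security spend. The article does not state the security-cost figure NCC Group used, but working backwards, a cut-off between 5,000 and 6,000 records implies an annual spend of roughly £600,000 to £720,000. The Python sketch below shows the calculation; the function names and the £650,000 budget are hypothetical, and the model deliberately ignores every cost except the per-record figure.

```python
COST_PER_RECORD_GBP = 120  # Ponemon average UK cost per breached record, as cited above


def breach_cost(records: int, cost_per_record: int = COST_PER_RECORD_GBP) -> int:
    """Estimated cost of a single breach exposing `records` records."""
    return records * cost_per_record


def break_even_records(annual_security_cost: int,
                       cost_per_record: int = COST_PER_RECORD_GBP) -> int:
    """Smallest record count at which one breach costs more than a year's security spend."""
    return annual_security_cost // cost_per_record + 1


# The reported cut-off (5,000 to 6,000 records) implies an annual spend of roughly:
print(breach_cost(5_000), breach_cost(6_000))  # 600000 720000
# With a hypothetical £650,000 annual security budget:
print(break_even_records(650_000))             # 5417
```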

It also found that the higher the turnover of a business, the higher the average cost of a data breach, with the average loss rising from £1.5m for companies with a turnover of £5m to £9.9m, to £10m for those with a turnover of over £50m.

However, the analysis also found that the likelihood and cost of a data breach varied between sectors: 61% of local government organisations, 10% of central government organisations and 18% of utilities companies reported a breach between Q1 2016 and Q1 2017. The healthcare sector faced the highest breach cost per record, at £267 per record on average, while businesses in the marketing sector had the lowest chance of a breach, with only 1 in 25,000 UK businesses reporting one during the same period.

Commenting on these findings, Nick Dunn, managing security consultant at NCC Group, said: “Of course, implementing robust cyber security measures is vital for businesses of every size and in every industry, particularly with GDPR coming into force next month which is likely to raise breach costs to higher levels than before.

“This analysis demonstrates that cyber resilience when it comes to the security of sensitive data needs to be a priority for all businesses, and it is important to note that this analysis only takes into account the impact of one data breach. Even though one breach alone can cause a lot of damage, organisations should also have solid procedures and cyber incident response plans in case they face repeated attacks.

“With the amount of sensitive data held by organisations only increasing in size, it is crucial for all businesses to ensure that they have considered every possibility and taken tangible steps towards enhancing their security posture.”

Harvard University’s Faculty of Arts and Sciences Research Computing (FASRC) has deployed DDN’s GRIDScaler® GS7KX® parallel file system appliance with 1PB of storage. The installation has sped the collection of images detailing synaptic connectivity in the brain’s cerebral cortex.

Researchers at Harvard’s Conte Center and the Center for Brain Science conduct pioneering behavioral and neurological studies to better understand the origins of neurological and psychiatric disorders, such as Alzheimer’s, anxiety, autism, depression, Parkinson’s disease and schizophrenia. Thousands of users, comprising university researchers and others affiliated with external organizations, generate an order of magnitude more data than any other research group, including gene sequencing researchers.

The use of powerful scientific instruments, including the ZEISS MultiSEM 505 electron microscope, placed an inordinate strain on the university’s legacy NAS storage. The NAS could not accommodate stringent demands for simultaneous data reads/writes, which created synchronization delays and calibration problems with the mission-critical microscopes. Moreover, constraints on storage availability caused resource contention among thousands of servers performing computational analysis. To alleviate these bottlenecks, FASRC deployed the GS7KX to achieve the ideal balance of parallel performance and optimized availability.

“DDN’s scale-out, parallel architecture delivers the performance we need to keep stride with the rapid pace of scientific research and discovery at Harvard,” said Scott Yockel, Ph.D., director of research computing at Harvard’s FAS Division of Science. “The storage just runs as it’s supposed to, so there’s no contention for resources and no complaints from our users, which empowers us to focus on the research.”

At Harvard’s Lichtman Lab, electron microscopy is used to capture large volumes of mouse neocortex images at nanometer resolution, generating up to 3TB of data per hour at speeds of up to 6GBps. High-resolution images are generated from the ZEISS microscope’s 61 cameras and collected on eight PCs connected to the GS7KX via the GRIDScaler native Windows client. DDN’s increased storage speed and parallel processing streamline the collection, compression and preprocessing of more than 16,000 1GB files during a typical five-hour lab run.
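To put those figures in context, the Python snippet below converts them into average ingest rates, assuming decimal units (1TB = 1,000GB) and treating the quoted volumes as exact. Both averages come out below 1GBps, so the 6GBps figure describes bursts of roughly seven times the hourly average, plausibly the kind of uneven, simultaneous write load the legacy NAS struggled with.

```python
SECONDS_PER_HOUR = 3600


def average_rate_gb_per_s(total_gb: float, hours: float) -> float:
    """Average ingest rate implied by a data volume over a time window."""
    return total_gb / (hours * SECONDS_PER_HOUR)


hourly = average_rate_gb_per_s(3_000, 1)  # "up to 3TB of data per hour"
run = average_rate_gb_per_s(16_000, 5)    # 16,000 x 1GB files over a five-hour run
peak_to_average = 6 / hourly              # quoted peak of 6GBps vs the hourly average
print(f"{hourly:.2f} GB/s, {run:.2f} GB/s, peak ~{peak_to_average:.1f}x average")
# 0.83 GB/s, 0.89 GB/s, peak ~7.2x average
```

Note that 16,000 1GB files in five hours works out at about 3.2TB per hour, slightly above the quoted 3TB, so both figures are best read as approximate.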

“Harvard’s brain exploration is poised to revolutionize the entire field of neuroscience, which is why it’s so critical for DDN Storage to ensure the highest levels of scalability and reliability,” said Paul Bloch, DDN president and co-founder. “The GS7KX has been engineered to deliver high-speed data ingest from the most sophisticated instrumentation while supporting computational processing and large-scale data analysis to speed the rate of scientific discoveries.”

College strengthens commitment to Tintri platform for enhanced performance, simplified management, VM-level visibility and control.

Syddansk Erhvervsskole is the largest technical college system in Denmark, with around 5,300 students and more than 870 employees. Erhvervsskolernes IT Samarbejde (ESIS) is an IT department for the vocational college system in Denmark. It is part of an IT cooperation between eight of Denmark’s technical colleges, providing back office support to local IT staff and supporting all network-related configurations. ESIS hosts all servers and routes their internet through its main data centre in Odense, Denmark.

ESIS found its Dell EqualLogic storage systems were struggling to deliver the required performance as it virtualised more and more servers in its data centre using VMware. They also required too much maintenance.

After deciding not to continue investing in EqualLogic, ESIS considered a number of options, including Nexenta, Oracle, EMC and NetApp, but opted for Tintri because, in its assessment, it was the only solution designed specifically for virtual environments. ESIS ran a successful proof of concept with two boxes so it could replicate all the data off-site while testing the production environment. The Tintri systems surpassed all its expectations.

Solution

When it came to installing the Tintri appliance, it was incredibly easy to get up and running. ESIS booted it up, gave it an IP address, connected to the VMware hosts and started migrating everything over. The entire environment was up and running in 30 minutes.

After three years with Tintri, ESIS had to choose between extending the four-year maintenance deal on its existing appliance for an additional year, or buying a new all-flash Tintri box. “The choice was easy since we had already been looking at going all-flash for more performance and space,” says Sebastian Kim Morsony, IT architect and System Consultant at ESIS. In addition, the all-flash system supports deduplication.

The EC6000 series delivers all-flash performance for up to 7,500 virtualised applications in two rack units. Its storage file system, built specifically for virtualised and cloud workloads, controls each application automatically and helps match capacity to business needs one drive at a time. It gives ESIS complete visibility of latency across every application in the data centre. ESIS is still using its previous Tintri solution but plans to migrate all workloads to the all-flash system in the near future.

“It’s the perfect fit,” Morsony says. “It has the same management as the previous system and the same way of doing things. Everybody not using Tintri is doing storage the wrong way.”

Results

Performance and capacity

Before moving to Tintri, the college’s users were frustrated with poor application performance. “Everything was lagging and exhibiting very high latency,” says Morsony. Tintri delivered a significant increase in performance and capacity. “It has performed perfectly and surpassed the Dell EqualLogic platform by many lengths,” he reveals.

Ease of deployment and management

Under the old system it took hours to spin up and tear down applications. With Tintri, it takes seconds. In addition, the whole team can manage Tintri and use it for quick cloning and recovery services, compared to a limited number of staff who could do those tasks with Dell EqualLogic storage. Anyone in the data centre is able to manage their own storage footprint. According to Morsony, Tintri requires almost no management.

Better visibility

Tintri provides complete visibility of latency in every application in the data centre and the ability to see across the infrastructure in real-time. It has simplified troubleshooting across the host, the network and storage.

Reduced footprint and power

By moving from the Dell environment to Tintri, Syddansk Erhvervsskole replaced five Dell EqualLogic boxes with a single Tintri box, achieving a five-to-one reduction in data centre footprint and power costs.

This year’s DCS Awards – as ever, designed to reward the product designers, manufacturers, suppliers and providers operating in the data centre arena – took place at the now-familiar, prestigious Grange St Paul’s Hotel in the City of London. Host Paul Trowbridge coordinated a spectacular evening of excellent food, a prize draw (with champagne and a team of four at the DCA Golf Day up for grabs), excellent comedian Angela Barnes, music courtesy of Ruby & the Rhythms and, of course, the reason almost 300 data centre industry professionals attended – the awards themselves. Here we salute the winners.

The next Data Centre Transformation event, organised by Angel Business Communications in association with DataCentre Solutions, the Data Centre Alliance, The University of Leeds and RISE SICS North, takes place on 3 July 2018 at the University of Manchester.

The programme is nearly finalised (full details via the website link at the end of the article), with some top-class speakers and chairpersons lined up to deliver what is probably 2018’s best opportunity to get up to speed with what’s heading to a data centre near you in the very near future!

For the 2018 event, we’re taking our title literally, so the focus is on each of the three strands of our title: DATA, CENTRE and TRANSFORMATION.

This expanded and innovative conference programme recognises that data centres do not exist in splendid isolation, but are the foundation of today’s dynamic, digital world. Agility, mobility, scalability, reliability and accessibility are the key drivers for the enterprise as it seeks to ensure the ultimate customer experience. Data centres have a vital role to play in ensuring that the applications and support services organisations use to connect with their customers work seamlessly, wherever and whenever they are accessed. And that’s why our 2018 Data Centre Transformation Manchester will focus on the constantly changing demands being made on the data centre in this new, digital age, concentrating on how the data centre is evolving to meet these challenges.

We’re delighted to announce that GCHQ have confirmed that they will be providing a keynote speaker on their specialist subject – security! Has IT security ever been so topical? What a great opportunity to hear leading cybersecurity experts give their thoughts on the issues surrounding cybersecurity in and around the data centre.

We’re equally delighted to reveal that key personnel from Equinix, including MD Russell Poole, will be delivering the Hybrid Data Centre keynote address. If GCHQ knows about cybersecurity, it’s fair to say that Equinix are no strangers to the data centre ecosystem, where the hybrid approach is gaining traction in so many different ways.

Completing the keynote line-up will be John Laban, European Representative of the Open Compute Project Foundation.

Alongside the keynote presentations, the one-day DCT event will include:

A DATA strand that features two workshops - one on Digital Business, chaired by Prof Ian Bitterlin of Critical Facilities and one on Digital Skills, chaired by Steve Bowes Phipps of PTS Consulting.

Digital transformation is the driving force in the business world right now, and the impact that this is having on the IT function and, crucially, the data centre infrastructure of organisations is something that is, perhaps, not as yet fully understood. No doubt this is in part due to the lack of digital skills available in the workplace right now – a problem which, unless addressed urgently, will only continue to grow. As for security, hardly a day goes by without news headlines focusing on the latest, high profile data breach at some public or private organisation. Digital business offers many benefits, but it also introduces further potential security issues that need to be addressed. The Digital Business, Digital Skills and Security sessions at DCT will discuss the many issues that need to be addressed, and, hopefully, come up with some helpful solutions.

The CENTRE strand features two workshops: one on Energy, chaired by James Kirkwood of Ekkosense, and one on Hybrid DC, chaired by Mark Seymour of Future Facilities.

Energy supply and cost remain a major part of the data centre management piece, and this track will look at the technology innovations that are impacting on the supply and use of energy within the data centre. Fewer and fewer organisations rely purely on in-house data centre real estate; most now make use of some kind of colo and/or managed services offerings. Further, the idea of one or a handful of centralised data centres is now being challenged by the emergence of edge computing. So, in-house and third party data centre facilities, combined with a mixture of centralised, regional and very local sites, make for a very new and challenging data centre landscape. As for connectivity – feeds and speeds remain critical for many business applications, and it’s good to know what’s around the corner in this fast moving world of networks, telecoms and the like.

The TRANSFORMATION strand features workshops on Automation (AI/IoT), chaired by Vanessa Moffat of Agile Momentum, and on The Connected World, together with a keynote on Open Compute from John Laban, the European representative of the Open Compute Project Foundation.

Automation in all its various guises is becoming an increasingly important part of the digital business world. In terms of the data centre, the challenges are twofold. How can these automation technologies best be used to improve the design, day to day running, overall management and maintenance of data centre facilities? And how will data centres need to evolve to cope with the increasingly large volumes of applications, data and new-style IT equipment that provide the foundations for this real-time, automated world? Flexibility, agility, security, reliability, resilience, speeds and feeds – they’ve never been so important!

Delegates select two 70-minute workshops to attend and take part in an interactive discussion led by an Industry Chair and featuring panellists - specialists and protagonists - in the subject. The workshops will ensure that delegates not only earn valuable CPD accreditation points but also have an open forum to speak with their peers, academics and leading vendors and suppliers.

There is also a Technical track where our sponsors will present 15-minute technical sessions on a range of subjects. Keynote presentations in each of the themes, together with plenty of networking time to catch up with old friends and make new contacts, make this a must-do day in the DC event calendar. Visit the website for more information on this dynamic academic and industry collaborative information exchange.

The headlines these days are full of reports of data breaches, privacy scandals and cybercrime around the world. In the face of all these issues, it’s easy to feel overwhelmed. Organizations need to find ways to appropriately plan, design, document, and implement systems to keep their (and their customers’) data secure while it’s stored as well as when it’s transferred to other systems.

By Eric Hibbard, Chairman of the SNIA Storage Security Technical Work Group.

The ISO/IEC 27040:2015 international standard for storage security provides detailed technical guidance on how organizations can take a “consistent approach to the planning, design, documentation and implementation of data storage security.”

The Storage Networking Industry Association (SNIA) is an association of producers and consumers of computer data storage networking products, a non-profit global group dedicated to developing standards and education programs to advance storage and information technologies. SNIA recognizes that the ISO/IEC 27040 standard, while thorough on some aspects of storage, doesn’t adequately address specific elements in the area of data protection, including data preservation, data authenticity, archival security and data disposition.

The organization has just released its own white paper to complement, extend, and build upon the ISO/IEC 27040 standard, while also suggesting best practices in the above areas. This is one of a series of whitepapers prepared by the SNIA Security Technical Working Group (TWG) to provide an introduction and overview of important topics in ISO/IEC 27040:2015, Information technology – Security techniques – Storage security. While not intended to replace this standard, these whitepapers will provide additional explanations and guidance beyond that found in the actual standard.

Standards to protect your data

According to SNIA, data protection involves three facets: storage, privacy, and information assurance/security. Clarifying any ambiguity as to what “data protection” means is paramount to any organization’s plans to protect that data. As defined by SNIA, data protection is the “assurance that data is not corrupted, is accessible for authorized purposes only, and is in compliance with applicable requirements.” In other words, organizations must make sure that their data is usable, can only be used by authorized people and systems, and is usable for its intended purpose. Data must also be available when it’s needed.

Data must be stored, and clear decisions must be made about who needs access to the data, where that data resides, what types of devices and data exist in the system, how data is recovered during disasters or regular operations, and what best practice technologies should be in place.

Protection Guidance from SNIA

Businesses need to take reasonable due care when dealing with personal and organizational data. Failure to understand the possible risks associated with the care and security of such information can lead to legal and social consequences. Additional care must be taken if data breaches occur; mishandling the notification of such events can be catastrophic for a business. Because storage ecosystems are integral to a company’s information and communication technologies, protections are necessary and the risks can be high. Leaders at such companies therefore need to be sure they are aware of, and implementing, as many as possible of the best practices and guidance given by both ISO/IEC and SNIA.

Data should be treated confidentially, and not made available to anyone without the right authorization. While this is typically achieved via encryption, SNIA notes that authentication processes, authorization and access controls, and specific data classifications must be assigned and committed to organizational policy. There must also be proof of these controls as well as audit logging. “Maintaining data confidentiality,” note the white paper’s authors, “is one of the most important aspects of ensuring protection of personal data.”
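The combination of data classification, authorization and audit logging described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not SNIA’s method: the four classification levels, the `Principal` and `AuditLog` types, and the rule that a principal may read anything at or below its clearance are all hypothetical. Encryption and real authentication are out of scope; the `authenticated` flag simply stands in for the outcome of an authentication step.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical classification scheme, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass
class Principal:
    name: str
    authenticated: bool  # stands in for the outcome of a real authentication step
    clearance: str       # highest classification this principal may read


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, who: str, obj: str, allowed: bool) -> None:
        # Log every decision, allowed or denied, so the control can be evidenced later.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "principal": who,
            "object": obj,
            "allowed": allowed,
        })


def can_read(p: Principal, obj_name: str, obj_class: str, log: AuditLog) -> bool:
    """Allow access only to authenticated principals cleared for the object's class."""
    allowed = p.authenticated and LEVELS[p.clearance] >= LEVELS[obj_class]
    log.record(p.name, obj_name, allowed)
    return allowed


log = AuditLog()
alice = Principal("alice", authenticated=True, clearance="confidential")
print(can_read(alice, "handbook.pdf", "internal", log))   # True
print(can_read(alice, "payroll.csv", "restricted", log))  # False
```

Logging denials as well as grants is the design choice that matters here: it is what provides the “proof of these controls” the white paper asks for.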

Preservation, Retention, and Archiving

Preserving the electronic record of business transactions can help inform current and future management decisions, satisfy customers, show regulatory compliance and protect against litigation. Companies need to have a records management policy that defines what records are and how they will be managed. Not everything needs to be a “record,” of course, but businesses should have a regular set of retained records as well as associated metadata.

SNIA recommends that these records be retained during the ordinary course of business. The length of the retention period should be noted in written policy, and determined based on legal and statutory considerations. Once a record has been retained for its defined period, it should then be disposed of properly. The sheer volume of US records retention requirements can make it difficult to design a storage ecosystem.
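A written retention policy ultimately reduces to a lookup from record type to retention period, plus a date calculation. The sketch below shows that idea; the record types and periods in `RETENTION_YEARS` are purely hypothetical placeholders, since real values must come from legal and statutory review.

```python
from datetime import date

# Hypothetical retention schedule in years; real periods must come from legal
# and statutory review and be recorded in written policy.
RETENTION_YEARS = {"invoice": 7, "hr_record": 6, "email": 2}


def add_years(d: date, years: int) -> date:
    """Add whole years, mapping 29 February to 28 February in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def disposal_due(record_type: str, created: date) -> date:
    """First date on which a record of this type may be considered for disposal."""
    return add_years(created, RETENTION_YEARS[record_type])


def is_due_for_disposal(record_type: str, created: date, today: date) -> bool:
    return today >= disposal_due(record_type, created)


print(disposal_due("invoice", date(2018, 5, 25)))  # 2025-05-25
```

Keeping the schedule as data rather than scattering periods through code makes it easier to show that the system matches the written policy.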

Data archives, whether long-term or short-term, must ensure the integrity, immutability, authenticity, and confidentiality of the information contained within. Electronic document-based information should be accessible on the system that originally created it, the system currently used to access it, or the system that will be used to store it in the future. It should also be intelligible, identifiable (using organization and classification systems), retrievable, understandable (to both computing systems and humans), and authentic.

Data Authenticity

Business and personal data is often replicated, migrated and archived. At times, archives are used to verify the integrity of various copies of the data. In this situation, the SNIA recommends that any guarantees be implemented in a way that ensures data integrity or authenticity even after the records have left the control of the organization that created them.
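One common way to let copies be checked independently is to record a cryptographic digest (a fixity value) when a record is created and to compare every replica against it later. The sketch below uses SHA-256 from Python’s standard `hashlib`; it illustrates the general technique and is not a procedure taken from the SNIA paper. A bare digest only proves that a copy matches the recorded value, so for authenticity outside the originating organization the digest itself would also need protecting (for example by digitally signing it), which is not shown here.

```python
import hashlib


def sha256_digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream a large file through SHA-256 without loading it all into memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_copies(reference_digest: str, copies: dict) -> dict:
    """Map each copy's name to True if its content still matches the recorded digest."""
    return {name: sha256_digest(blob) == reference_digest for name, blob in copies.items()}


original = b"Quarterly report, final version"
fixity = sha256_digest(original)  # stored alongside the archived record
print(verify_copies(fixity, {"site_a": original, "site_b": original + b" (edited)"}))
# {'site_a': True, 'site_b': False}
```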

Best Practices to Monitor, Audit, and Report

According to industry experts in SNIA’s Storage Security TWG, the latest ISO/IEC 27040 guidance for audit logging systems is relevant and common sense. However, when privacy is added as a consideration for audit logging, organizations may encounter further issues. Without a specific design for privacy, general logging strategies can record every access to and update of data, which might include personal information. The European Union requires that if such data is retained, it must be anonymized and tagged with the purpose for collecting it. It must also be tagged with the specific amount of time it will remain in the system before it is expunged. Individuals can demand to know what information an organization holds on them, and can demand that it be removed. When businesses design their monitoring and auditing systems, the SNIA recommends taking this issue into account.
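A privacy-aware audit log along these lines can be sketched as follows: each entry carries a pseudonymized subject, the purpose for collection and an expiry date, and helpers support purging, access requests and erasure requests. Note the swapped-in technique: keyed hashing (HMAC-SHA-256) is pseudonymization rather than true anonymization, because anyone holding the key can re-link entries to a person. It is used here because it still lets access and erasure requests be honoured. The key, field names and helper functions are all hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical secret, held outside the log store (e.g. in a key manager).
# Without it, log readers cannot re-identify users by hashing guessed IDs.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"


@dataclass
class LogEntry:
    subject: str       # pseudonymized user identifier, never the raw ID
    action: str
    purpose: str       # why the data was collected
    expires: datetime  # when the entry must be expunged


def pseudonymize(user_id: str) -> str:
    return hmac.new(PSEUDONYM_KEY, user_id.encode(), hashlib.sha256).hexdigest()


def make_entry(user_id: str, action: str, purpose: str, now: datetime, retain_days: int) -> LogEntry:
    return LogEntry(pseudonymize(user_id), action, purpose, now + timedelta(days=retain_days))


def purge_expired(entries: list, now: datetime) -> list:
    return [e for e in entries if e.expires > now]


def entries_for_subject(entries: list, user_id: str) -> list:
    """Answer an access request by recomputing the subject's pseudonym."""
    p = pseudonymize(user_id)
    return [e for e in entries if e.subject == p]


def erase_subject(entries: list, user_id: str) -> list:
    """Honour an erasure request by dropping every entry for that subject."""
    p = pseudonymize(user_id)
    return [e for e in entries if e.subject != p]


now = datetime(2018, 5, 25)
log = [make_entry("alice", "read", "billing", now, 30),
       make_entry("bob", "update", "support", now, 365)]
print(len(purge_expired(log, now + timedelta(days=31))))  # 1
```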

What To Do With Old Data

All data has a life cycle. Records must be either destroyed or archived, with the latter perhaps a mere reprieve from the former. As records become unneeded for historical or statutory purposes, companies need to decide what to do with them. The SNIA Storage Security TWG recommends that records be confirmed as unusable or no longer needed for operational, legal, governmental, or compliance reasons before being destroyed. The working group also recognizes that digital records can be subject to retrieval even after they have been rendered inaccessible (by regular means) from electronic media. Sanitization (and proof of sanitization) may also be an important step in a company’s process as it disposes of unneeded records and data.
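The disposition rule above (destroy only once a record is no longer needed, then keep proof of sanitization) can be sketched as a simple gate. Everything here is hypothetical: the `Record` and `SanitizationCertificate` types, the reason labels, and the caller-supplied `sanitize` function, which stands in for whatever media-appropriate erase-and-verify process an organization uses (guidelines such as NIST SP 800-88 describe methods by media type).

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Callable, Optional, Tuple


@dataclass
class Record:
    record_id: str
    retention_ends: date
    # Reasons the record is still needed, e.g. {"legal"}; hypothetical labels.
    still_needed_for: set = field(default_factory=set)


@dataclass
class SanitizationCertificate:
    record_id: str
    method: str
    verified: bool
    performed_on: date


def may_destroy(rec: Record, today: date) -> bool:
    """Eligible only after retention ends and no operational, legal,
    governmental or compliance need remains."""
    return today >= rec.retention_ends and not rec.still_needed_for


def dispose(rec: Record, today: date,
            sanitize: Callable[[str], Tuple[str, bool]]) -> Optional[SanitizationCertificate]:
    """Run the caller-supplied sanitizer and return proof, or None if not eligible."""
    if not may_destroy(rec, today):
        return None
    method, verified = sanitize(rec.record_id)
    return SanitizationCertificate(rec.record_id, method, verified, today)


def demo_sanitizer(record_id: str) -> Tuple[str, bool]:
    # Placeholder: a real sanitizer would erase the media and verify the result.
    return "cryptographic erase", True


today = date(2018, 6, 1)
print(dispose(Record("r2", date(2017, 1, 1), {"legal"}), today, demo_sanitizer))  # None
```

Returning a certificate rather than a bare success flag keeps the “proof of sanitization” alongside the act itself.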

SNIA Is Here To Help

While the ISO/IEC 27040 standard around data storage security is useful and thorough, SNIA recognizes that additional guidance may be needed by organizations concerned with data protection and privacy. To raise awareness of data protection, this SNIA Storage Security whitepaper highlights the relevant data protection guidance from ISO/IEC 27040 and then builds upon it, covering topics that include data classification, retention and preservation, data authenticity, and data disposition. As part of this expanded material, SNIA provides guidance and considerations that augment the existing storage security standard. This is but one of many benefits of belonging to and supporting such an association.

Data security is an integral part of any business endeavor, and SNIA’s members and guidance make it easier for organizations to consider and implement as many best practices as possible. The SNIA TWG built closely on the ISO standard to show further alignment with industry and global standards.

For more information about the work of SNIA’s storage security group, visit: www.snia.org/security. The complete Storage Security: Data Protection whitepaper is available to download.

A quiet revolution is taking place in networking speeds for servers and storage, one that is converting 1Gb, 10Gb and 40Gb connections to 25Gb, 50Gb and 100Gb connections in order to support faster servers, faster storage, and more data. The switch to these new speeds is changing not just networking equipment but also network deployment models.

By John Kim, Chair SNIA Ethernet Storage Forum

The Need for Speed

Several technology changes are collectively driving the need for faster networking speeds.

· Faster Servers – The first driver of faster network speeds is the spread of faster servers. Modern x86 servers can push more than 100Gb/s of throughput, if their entire workload is focused on network I/O. While very few servers reach that level, it’s increasingly common for one server to need more than the throughput that a 10GbE or 8Gb FC port can deliver. The recent robust competition between Intel, AMD, Open Power, and various ARM CPU vendors means server power will keep increasing rapidly.

· Denser compute – As IT departments switch from virtualization to containers, the lower overhead of containers lets them pack more compute instances on each server than using virtual machines. This requires more storage performance and network throughput.

· Faster Storage – Everyone knows flash has been replacing disk, but in addition to the continuation of this trend, the flash drives now have faster NVMe interfaces, which deliver higher throughput and lower latency than SAS or SATA SSDs.

· Bigger Data – The amount of data and size of individual files and databases is growing rapidly due to increased capture of video, higher-resolution video (4K and 8K video have roughly four and 16 times as many pixels, respectively, as regular 1080p high-definition video, and so take up considerably more space and bandwidth), smart phones, social media, and the Internet of Things.

· Distributed Compute – Various applications distribute compute and storage across a cluster of servers. Big data (Hadoop and NoSQL databases), machine learning, scale-out storage, and hyperconverged infrastructure all do this and require high levels of “east-west” network traffic between the compute or storage nodes.
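Uncompressed frame size scales with pixel count, so the effect of higher resolutions mentioned under Bigger Data can be checked directly. This sketch assumes the consumer UHD definitions of 4K and 8K (3840×2160 and 7680×4320) and counts raw pixels only; compressed bit rates depend on codec and content, so real storage and bandwidth ratios will differ.

```python
# Pixel dimensions of common 16:9 formats (consumer UHD definitions assumed).
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),
    "8K UHD": (7680, 4320),
}


def pixel_ratio(fmt: str, base: str = "1080p") -> float:
    """How many times more pixels per frame `fmt` has than `base`."""
    w, h = RESOLUTIONS[fmt]
    bw, bh = RESOLUTIONS[base]
    return (w * h) / (bw * bh)


for fmt in RESOLUTIONS:
    print(f"{fmt}: {pixel_ratio(fmt):g}x the pixels of 1080p")
```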

Networking Vendors Deliver

Any technology revolution requires both supply and demand. If customers want faster speeds but network vendors don’t offer them, or if the prices are too high, then implementation will only be in niches and adoption will be slow. Fortunately, networking vendors are delivering 25/100GbE networking solutions to market quickly. There is an abundance of 25/100GbE NICs (network interface cards) and switches available today.

At least four switch silicon vendors (Broadcom, Cavium, Cisco, and Mellanox) sell 25/100GbE products, leading to a multitude of 25/100GbE switches from Cisco, Dell/EMC, HPE, Huawei, Lenovo, Mellanox, Quanta, Supermicro and others, including “whitebox” switches from original design manufacturers (ODMs). Network adapter silicon and cards in these speeds are widely available from Broadcom, Cavium, Chelsio, Intel, Mellanox, Solarflare and others, with these cards being sold through many server original equipment manufacturers (OEMs), storage OEMs, resellers and systems integrators.

Competition and increasing volumes have already driven price premiums down so that 25GbE NICs cost only 20-30% more than 10GbE NICs, and 100GbE switches cost only 50% more per port than 40GbE switches. Customers enjoy 2.5 times more bandwidth than before, along with lower latency, at only slightly higher prices, and the trends show 25/100GbE network equipment pricing will move closer and closer to 10/40GbE pricing over time. As a bonus, existing fiber optic cabling designed for 10GbE can be used for 25GbE, and cabling designed for 40GbE can be reused for 100GbE, simply by installing new transceivers or modules at the ends of the cables.
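The price premiums above translate into cost per unit of bandwidth with one division. This sketch simply reuses the premiums quoted in the text; it ignores optics, cabling and switch-side costs.

```python
def relative_cost_per_gbps(new_gbps: float, old_gbps: float, premium: float) -> float:
    """Cost per Gb/s of a new port relative to an old one (1.0 means the same).

    premium is the extra price of the new port, e.g. 0.25 for 25% more.
    """
    return (1 + premium) / (new_gbps / old_gbps)


# Price premiums quoted in the text above.
for label, new, old, premium in [
    ("25GbE NIC vs 10GbE, +20%", 25, 10, 0.20),
    ("25GbE NIC vs 10GbE, +30%", 25, 10, 0.30),
    ("100GbE port vs 40GbE, +50%", 100, 40, 0.50),
]:
    print(f"{label}: {relative_cost_per_gbps(new, old, premium):.2f}x the cost per Gb/s")
```

On those figures, each Gb/s of 25GbE costs roughly half as much as 10GbE (0.48x to 0.52x), and each Gb/s of 100GbE about 0.60x as much as 40GbE.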

Because servers are typically deployed for 2-4 years and switches for 3-5 years, it makes financial sense to “future-proof” networks by ensuring all new Ethernet adapters, switches and cables can support 25GbE (or 50GbE) endpoints and 100GbE links between switches.

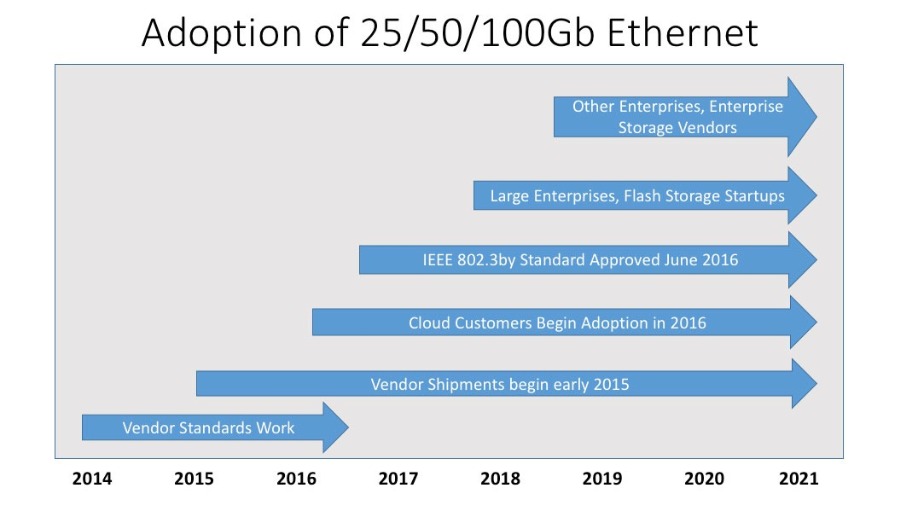

Cloud Goes First, Technical Computing Second

The customers first to adopt these faster speeds have been hyperscalers (such as Facebook, Google, Microsoft or Amazon) and other large cloud service providers (SPs). They need the increased bandwidth and can easily change their server and network designs to take advantage of faster speeds and new features in the network. They are relentlessly driven to improve performance and efficiency, since pricing for cloud Infrastructure, Platforms, Software, and Storage-as-a-Service (IaaS, PaaS, SaaS, and StaaS) is constantly declining.

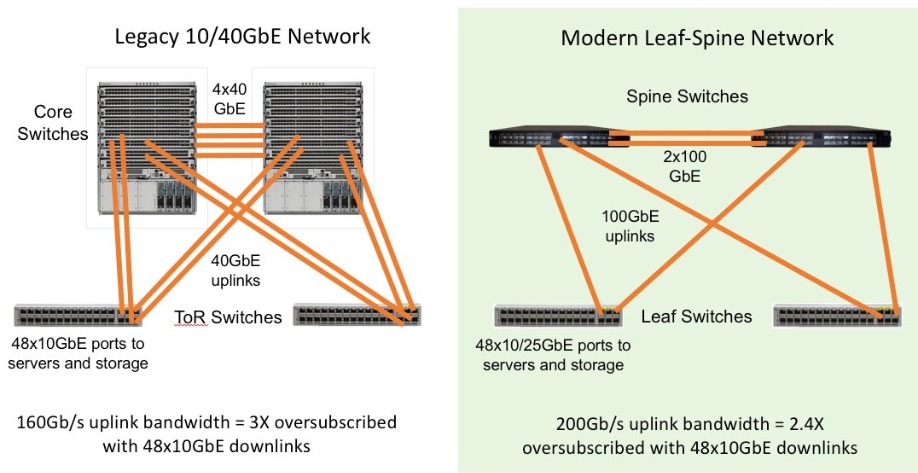

With large-scale networks, the uplink speed between switches is a critical factor in maximizing efficiency and lowering costs. With 40GbE uplinks, each “leaf” or top-of-rack (ToR) switch might require 4-10 uplinks (160Gb/s to 400Gb/s) to the spine switches, which then require 4-10 uplinks to core routers. By using 100GbE, the number of uplinks per switching layer can be reduced by up to 60%. For example, two or four 100GbE links would provide at least as much bandwidth as four or ten 40GbE links, respectively. The reduction in links allows for more servers per rack and reduces the floor space, power, and heat footprint of the network.

Figure 1: 100GbE uplinks allow for more bandwidth using fewer uplinks, simplifying the design of large networks.
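The uplink counts above come from a ceiling division of the required bandwidth by the link speed. This sketch uses the 160-400Gb/s range from the text and ignores redundancy and oversubscription policies, which in practice may add links.

```python
import math


def uplinks_needed(required_gbps: float, link_gbps: float) -> int:
    """Smallest number of links whose combined speed meets the requirement."""
    return math.ceil(required_gbps / link_gbps)


# Leaf-to-spine bandwidth targets taken from the 160-400Gb/s range in the text.
for required in (160, 400):
    n40 = uplinks_needed(required, 40)
    n100 = uplinks_needed(required, 100)
    print(f"{required} Gb/s: {n40} x 40GbE or {n100} x 100GbE "
          f"({1 - n100 / n40:.0%} fewer links)")
```

The saving depends on the target: halving from four links to two at 160Gb/s, and 60% (ten links down to four) at 400Gb/s.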

Because large cloud SPs deploy tens or hundreds of thousands of servers and hundreds of switches each year, they replace or install new racks, rows or even complete data centers all at once, using newer and faster networking to support newer and faster servers and storage. The largest SPs have already moved new deployments to use 25 or 50GbE on the servers and storage, with 100GbE uplinks between switches and across the data center. Some high-end all-flash arrays with NVMe SSDs (sometimes with NVMe over Fabrics as the storage networking protocol) are also running 100GbE. Due to the desire for network uplink efficiency explained above, many of the largest cloud vendors are already demanding 200GbE and 400GbE connections between switches. (200 and 400GbE switches and cables are expected to arrive towards the end of 2018.)

Technical computing is also driving the network need for speed. This includes fields such as oil and gas exploration; genomic research and new drug discovery; computer chip design; weather forecasting and climate modeling; automotive and aerospace design; artificial intelligence training and inferencing; and the editing/production of high-resolution video. While cloud deployments range from thousands to tens of thousands of servers at a time, technical computing clusters range from as few as 8 up to several thousand servers. Many of these deployments have traditionally used non-Ethernet interconnects such as InfiniBand or Intel Omni-Path Architecture for design, research and simulation workloads, or Fibre Channel and the Serial Digital Interface (SDI) for video editing, but as they require faster speeds, many of these workloads are moving to 25Gb, 40Gb, 50Gb and 100Gb Ethernet.

Enterprises and Storage Vendors Follow Soon After

Enterprises often lag behind the cloud in adopting new IT paradigms, so it’s not surprising that they are moving more slowly to adopt 25GbE and 100GbE. The networking vendor sales teams are showing up with shiny new 25/100GbE adapters and switches, just as many enterprises are finishing their upgrades to 10GbE client connections and 40GbE uplinks. But while most enterprise networking today is still at 10GbE and 40GbE in the datacenter (1GbE to the desktop and 1/2.5/5GbE for wireless), it’s clear that the largest enterprises are also starting to deploy 25GbE to the server and 100GbE uplinks to aggregation switches or to the core routers.

Enterprise storage vendors have also lagged but are rapidly moving towards faster speeds. Today about half of them support 40GbE and/or 32G Fibre Channel (FC), while the other half are still shipping 10GbE and 8/16G FC ports. By mid-2019 it’s likely all of them will support 40GbE and 32G FC, while the more innovative storage vendors will also be shipping 25GbE, with 100GbE support for the faster all-flash arrays.

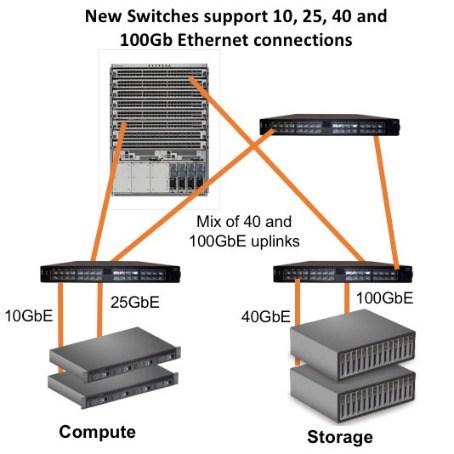

To simplify enterprise deployments, nearly all 25GbE adapters and switch ports are backwards compatible with 10GbE, while 50GbE and 100GbE adapters and switch ports are backwards compatible with 40GbE. (32/128G FC equipment is also backwards compatible with 8/16G FC.) Modern Ethernet switches can usually support a mix of 10Gb, 25Gb, 40Gb, and 100Gb speeds on the same switch. Customers are starting to roll out 25/100GbE equipment for all new network infrastructure knowing it can support legacy servers, storage, and uplinks with 10/40GbE speeds as well as new servers, storage and uplinks with 25/100GbE speeds.

Figure 3: New Ethernet switches are backwards-compatible, supporting both 10/40GbE connections to older infrastructure as well as 25/100GbE connections to newer servers, storage and switches.
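Backwards compatibility can be pictured as each end of a link advertising the speeds it supports, with the link running at the fastest speed both share. This is a deliberately simplified model with hypothetical capability sets; in practice the result also depends on transceivers, cabling and sometimes manual port configuration.

```python
def link_speed(port_speeds: set, device_speeds: set) -> int:
    """Highest speed (Gb/s) both ends support; simplified model of link bring-up."""
    common = port_speeds & device_speeds
    if not common:
        raise ValueError("no common speed, so the link cannot come up")
    return max(common)


# Hypothetical capability sets for a new switch's ports.
PORT_25G = {10, 25}
PORT_100G = {40, 100}

print(link_speed(PORT_25G, {10}))       # legacy 10GbE server: 10
print(link_speed(PORT_25G, {10, 25}))   # new 25GbE server: 25
print(link_speed(PORT_100G, {40}))      # legacy 40GbE uplink: 40
```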

Where Were You When the Revolution Happened?

The 25/100GbE Revolution is well underway. It’s already happening (and nearly done in some cases) amongst the cloud service providers, underway for technical computing, and in the late planning stages for enterprises and enterprise storage vendors. Due to the speed advantages, backwards compatibility, and near-cost-parity of 25/100GbE over 10/40GbE, it makes financial sense for customers and vendors to choose 25GbE to the server and 100GbE for switch uplinks and some flash storage. Designing networks that can support these higher speeds helps create a datacenter infrastructure that is future-proof and ready for faster servers, faster storage, and new applications such as AI, Big Data, and hyperconverged infrastructure. Customers, vendors and system integrators should all consider the advantages of a 25/100GbE network infrastructure for new build-outs and network upgrades.

About the SNIA Ethernet Storage Forum

The SNIA Ethernet Storage Forum (ESF) is committed to providing vendor-neutral education on the advantages and adoption of Ethernet storage networking technologies. Learn more at http://www.snia.org/esf.

The rate of innovation taking place today is enough to make any business feel permanently caught in a hamster wheel, trying to keep up with the latest trends to stay relevant. From small businesses to large enterprises, introducing anything new into a company can be a painful process. But if done right, it’s highly rewarding.

By Ian Stone, CEO of Vuealta.

The problem is, the hamster wheel means that businesses feel constantly under pressure to move on to the next “big thing”. There is a tendency for them to implement new technology and then quickly move on to the next project. Often, they’re then left feeling disappointed with the impact of that new piece of technology, because just winding it up and expecting it to deliver isn’t enough. Businesses need to ensure that there is a dedicated team focusing on getting the most out of what is probably quite a significant investment. They need to consider the wider implications and success factors, such as people and processes.

Businesses need to look at how the technology will impact and benefit everyone, not just the IT team. They also need to consider how it will integrate with the workflows and procedures currently in place. Time is a luxury for today’s businesses, so doing all of that whilst continuing with day-to-day jobs can be difficult. That is why working with a partner who can manage a project from start to finish and ensure that it delivers can be a game changer.

A new technology implementation is like a cycle race. Racing cyclists are decked out head to toe in the latest gear, accompanied by a highly technologically-advanced, custom-built bicycle built for precision and speed. The cyclists themselves are passionate and highly trained, knowledgeable about the course and the competition. They work together to be the best that they can be and to win the race. But without the rider, the bike is just a bike.

It’s the same in business. A company must be in the best shape possible, adapting processes and training people where needed to ensure that they can use new technology to its full advantage. Without that, the technology will sit there, having only the most basic impact or even no impact at all. For many businesses today, what they lack is time and skills – neither of which are easy to come by within existing internal teams.

In the pro peloton, the rider is supported by a group of team-mates and a huge support team, working tirelessly throughout the races to help achieve the best result possible. Building a long-term working relationship with a partner who has the skills and time to help the business through the entire journey of a new technology implementation is highly rewarding. By providing ongoing support, the partner can help the business start small, delivering immediate benefits, and then over time expand the reach and impact of the investment to benefit other areas of the business. The partner is there to help figure out where to start and to run pilots that reveal any issues or unexpected benefits, supporting everything from deciding what the design and build should look like through to regular health-checks that ensure everything is working well.

It’s hard enough wading through all the technology solutions out there to find the best one, let alone achieving the promised benefits of the technology once you’ve invested in it. Businesses need to surround themselves with the best technology implementation partner possible to ensure that they’re reaping the rewards from their technology investments. As we know, in today’s digital age, technology can be the game changer for businesses looking to come out on top and win the race.

When it comes to IoT data, one thing is certain: analysing it continues to accelerate as companies get better results by including sensor-based / IoT device data in their decision-making process - and there is no slowing down in sight. We see this in particular on the IT side - as IT spending increases, so will the investment in IoT. Organisations will build on hardware and connectivity layers supporting IoT as well as the services and analytics software to integrate IT, security, transactional and IoT data.

By Erick Dean, Product Director, IoT, Splunk.

And this makes sense - for organisations looking to expand their existing data footprint, IoT is the logical next step. Companies that are successfully integrating their IT, operations and transactional data are now looking to ingest and correlate IoT data into existing infrastructure.

The risk is real

On the security side, IoT brings a tangible risk. As we continue to fill our daily lives with more ‘connected things’, we drive new levels of innovation and, at the same time, open ourselves up to a security minefield. In 2018, security for IoT is under heavy scrutiny. Cyber security risk is increasing exponentially as people, processes and businesses continue to connect every part of our daily lives and our economy. Each ‘connected thing’ opens new doors into personal intelligence, corporate intelligence and public safety. Through these doors we open ourselves up, as individuals and organisations, to new weaknesses hackers could exploit. We are looking into a future where attacks can be orchestrated not just from public networks, but from private devices such as a smartphone or a smart home. So, while the IoT revolution is exciting, consumers and businesses should be thinking about the tradeoffs. This will be particularly relevant to businesses where a breach will lead to a potentially fatal loss of consumer trust. Gartner predicts that by 2020, more than 25 percent of identified attacks in enterprises will involve IoT, although IoT will account for less than 10 percent of IT security budgets. This gives businesses something to think about.

Industry adoption first?

Machine learning and artificial intelligence represent a tremendous opportunity for IoT. The increasing commoditisation and scale of sensor devices will drive a new wave of smart industries and have significant impacts on existing ones. Being able to predict when machinery will need to be repaired, self-optimising production, and demand response are only a few application examples.

With existing network infrastructure likely to be used for ‘connected things’, the investment spend on analytics technology will be higher as companies find new ways to make sense of the vast amounts of smart device-generated data. Industrial asset management, fleet management in transportation, inventory management and government security are the current hottest areas for IoT growth.

SNS talks to Mark Young, VP systems engineering and field CTO for EMEA at Tintri, examining the impact of flash storage to date, the role flash storage has to play in some of the emerging technologies, such as IoT and AI, and predicting an increasingly important role for flash storage into the future.

1. What has flash storage achieved to date, and what more can we expect from it, in terms of market size and share?

Flash solves a long-standing problem of worst-case performance with hard-disk drives. Hard disks struggle with random reads, but flash buries most of that challenge in hardware. To solve the problem more completely, the right software is required.

That said, there is every indication that all-flash is coming to dominate the data centre.

2. How likely (and when) is the enterprise all-flash environment?

We are already seeing the emergence of all-flash enterprise environments and this will steadily increase as organisations are increasingly dependent on the performance of their applications.

3. Flash is a crucial part of hyperconverged offerings – what are the pros and cons of using flash as part of such a solution, as opposed to having ‘stand-alone’ flash storage?

Flash plays a critical role in hyperconverged infrastructure (HCI), but there’s a key difference with stand-alone all-flash storage. Stand-alone flash uses 28-40 cores per controller. HCI limits the CPU available to storage, typically to 8 CPUs. That means a lot of the potential of flash is wasted in an HCI architecture. And with HCI, every I/O operation flows through the scheduler 4 times, which is a possible bottleneck. Organisations that are prioritising predictable performance should be wary about HCI.

4. How has hyperconverged storage been received to date?

The promise of HCI is that by bringing compute, network, and storage together in a fully-tested, controlled environment, infrastructure administrators can be freed from the challenges of integrating point solutions and can scale more readily.

The reality can be quite different. HCI can be difficult to manage beyond a few nodes. For example, when one node fails, its storage has to be redistributed across all the other nodes. If those nodes cannot handle the extra storage, they also fail. As for scale, it is generally recommended that HCI nodes remain balanced. As a result, when you need more compute, you often have to buy a node that includes both compute and storage. This over-provisioning is commonly referred to as the ‘HCI tax’. For small footprints, the simplicity benefits of HCI outweigh these cons. For larger enterprises, HCI will rarely be the right solution.
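The ‘HCI tax’ described above can be made concrete: if nodes scale compute and storage together, the node count is set by whichever resource runs out first, and the surplus of the other is bought but unused. The node configuration and workload figures below are hypothetical, chosen only to illustrate the effect.

```python
import math
from dataclasses import dataclass


@dataclass
class NodeSpec:
    cores: int
    storage_tb: float


def nodes_required(need_cores: int, need_tb: float, node: NodeSpec) -> int:
    """Balanced HCI nodes scale compute and storage together, so the larger need wins."""
    return max(math.ceil(need_cores / node.cores), math.ceil(need_tb / node.storage_tb))


def stranded_storage_tb(need_cores: int, need_tb: float, node: NodeSpec) -> float:
    """Storage bought but not needed: the over-provisioning called the 'HCI tax'."""
    return nodes_required(need_cores, need_tb, node) * node.storage_tb - need_tb


node = NodeSpec(cores=32, storage_tb=20.0)   # hypothetical node configuration
print(nodes_required(320, 60, node))        # 10
print(stranded_storage_tb(320, 60, node))   # 140.0
```

In this compute-heavy example, ten nodes are needed for the cores, but only three nodes’ worth of storage is used.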

5. What role does flash storage have to play in the emerging IoT space?

As IoT emerges, there is a clear need for greater application performance. Flash technology can solve this by providing organisations with the ability to automate mundane management processes and speed up application development. Organisations need to maximise application performance in order to promote the development of IoT.

6. Similarly, how does flash storage contribute to the developing AI market?

As the use cases for AI and Machine Learning (ML) grow, so does the volume of data which is being generated. Flash storage will be the backbone that aids in the growth and development of AI. AI technology that has the capacity to store and utilise data at speed will be able to learn and push forward.

7. What about Flash storage’s role at the edge?

The movement to edge computing is based on its increased performance and speed. This is what flash technology is fundamentally designed to offer. We will see more on-premises solutions designed to suit edge computing needs and flash can play an integral part of this. Legacy storage still requires manual intervention but with flash solutions, organisations can ensure processes are automated and streamlined while simultaneously enhancing performance and speed.

8. Moving on to the actual technology, what further developments can we expect to see around core technology?

As IT continues to innovate, we will see the evolution of self-driving data centres. This is where flash technology will grow and develop, as traditional legacy storage systems are ill-equipped and require a significant amount of manual intervention and management.

As part of this, flash technology will evolve to offer guaranteed, real-time predictable performance without IT intervention. This will allow IT admins to concentrate on more critical tasks that add value to the company rather than just keeping the engine running.

9. What further developments can we expect to see in performance and capacity?

As applications grow, seamless continuous delivery and integration become vital. Organisations must adapt to the digital era by delivering new software and services faster and more efficiently. As a result, we will see flash storage continue to offer increased speed and performance.

Flash will also develop in terms of scalability, providing higher capacity while easing bottlenecks. Fundamentally, flash technology will become easier to scale out, removing the need to cobble together different storage elements.

10. What further developments can we expect to see in flash storage prices/value?

One of the biggest inhibitors to the adoption of flash storage has been its cost. In the early days of flash, accessing its 10x advantages in performance, power and density meant an associated 10x increment in cost/GB. This effectively relegated flash to very high-end applications and only where absolutely required, such as supercomputing or complex algorithm or video processing. In a very short span of time, however, flash has seen a dramatic 80% cost reduction and its advantages have accelerated in terms of performance, power and density. This innovation has pushed flash deeper into traditional enterprises and increased its adoption rate.
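A quick calculation shows what an 80% cost reduction does to a 10x cost/GB premium. The answer does not say how hard-disk prices moved over the same period, so this sketch treats that as an explicit, hypothetical parameter: with disk prices held steady the premium falls to about 2x, while a simultaneous fall in disk prices leaves a wider gap.

```python
def flash_premium_after(old_premium: float, flash_drop: float, hdd_drop: float = 0.0) -> float:
    """Flash cost/GB as a multiple of HDD cost/GB after both prices fall.

    flash_drop and hdd_drop are fractional reductions, e.g. 0.8 for 80%.
    """
    return old_premium * (1 - flash_drop) / (1 - hdd_drop)


print(round(flash_premium_after(10, 0.80), 2))                # 2.0 if HDD prices held steady
print(round(flash_premium_after(10, 0.80, hdd_drop=0.5), 2))  # 4.0 if HDD prices halved too
```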

11. In summary, how will the flash storage landscape vary between early 2018 and, say, the end of 2020?

With the decreasing price of flash coupled with its accelerated performance, power and speed we will see the continued adoption throughout the next few years. However, it is likely that the role of flash will change from sitting in enterprise data centres to moving towards the edge, helping AI and IoT to grow across the market.

We will see flash continue to offer greater performance, agility and automation helping to streamline business processes and free up IT admins from mundane management tasks.

As cloud solutions are becoming increasingly popular options for data storage and security, IT leaders need to consider which solutions are most suitable for their organisation. This, however, entails more than just choosing the best provider – it also requires an understanding of cloud technologies and the combinations that would be most appropriate, depending on individual workloads and requirements.

In order to do this, businesses should consider a multi-cloud option. Multi-cloud solutions allow for different types of cloud – public, private and hybrid options – to be adopted alongside each other, providing the benefits of each. In this article, five industry experts offer their thoughts on the reasons to implement multi-cloud, and what to look for when choosing a solution.

“The multi-cloud offers great benefits for organisations that can derive the greatest value from each platform. At the same time, it also poses new challenges to eliminate barriers for data and cloud storage management,” explains Michael Tso, CEO of Cloudian. “To maximise the benefits and overcome the challenges, organisations need a scale-out storage solution that bridges both on-premises and cloud environments, letting users store and manage data in both private and public clouds around the globe as a single, unified storage pool for files and objects. That way, users can store, protect and search data from one management screen, no matter where the data physically resides.”

Mat Clothier, CEO, CTO and Founder, Cloudhouse, discusses how multi-cloud relies on application compatibility. “Not all applications are cloud-ready, and migration from legacy operating systems can be challenging. Because these applications are often written specifically for the system they run on, and are unsupported by the likes of Azure, AWS and Citrix Cloud, IT teams are left with the headache of working out how to get from A to B. And the problem doesn’t end there – once you’ve made it off-premises, your applications still might not be compatible across clouds; the free movement of workloads, as per their individual requirements, is where the real value lies. Whereas previously this situation often called for a complete re-write of non-cloud-native apps, IT teams can now save time, money and effort through the use of compatibility containers that provide ‘lift and shift’ portability to, from and even between multiple clouds.”

“Most organisations already employ different cloud ecosystems, depending on the use case,” claims Mark Young, VP Systems Engineering & Field CTO EMEA, Tintri, “but using different platforms at the same time doesn’t constitute a successful multi-cloud strategy. A good strategy enables IT teams to achieve full ‘cloud-flexibility’ and get the best possible solution for every scenario. Some of those scenarios are best served running on a local cloud architecture, like VDI, DevOps or databases. In those instances, it is critical to ensure they are underpinned by a powerful and modern cloud storage architecture that is fast, easy to manage, integrates well with hypervisors and is ready for automation. By covering this key part of the multi-cloud strategy, businesses will be able to reap the full benefits of the private cloud element of their multi-cloud strategy.”

James Henigan, Cloud Services Director, Six Degrees, argues that sourcing a range of solutions from a single supplier can reduce headaches. “For me, ‘multi-cloud’ is just another variation of the ‘hybrid cloud’ model – it can include on-premises, private and public cloud locations with, in some cases, businesses making use of multiple external providers in each of these buckets. The challenge with this approach, however, can be variances in supplier and vendor management as well as inconsistencies in operational and commercial processes.

“Organisations can of course reduce the headache of working in multiple cloud environments by investing in a range of solutions from one ‘umbrella’ supplier, such as a managed service provider (MSP). An MSP can offer a variety of expert services for on-premises IT, private cloud and public cloud from all of the major suppliers, while facilitating consistent billing and support processes.

“We have seen an explosion of multi-cloud procurement from early adopters who bought theoretical best-of-breed services from different suppliers, only to find challenges with managing them. Customer feedback is consequently suggesting that organisations want multi-cloud and best-of-breed, but from a single supplier, which is exactly what an MSP can provide.”

Gijsbert Janssen van Doorn, Tech Evangelist, Zerto, encourages IT teams to consider the future: “Indeed, organisations today want the freedom to move to, from and between any combination of clouds, including the big guys like Azure, AWS and IBM Cloud, as well as the hundreds of smaller local cloud service providers. However, using software and tools that are not purpose-built for multi-cloud scenarios can result in an incredibly frustrating time suck, completely negating the benefits multi-cloud can offer. To adopt a true multi-cloud strategy effectively, organisations need to approach the process, not by trying to force legacy tools to work in their new environments, but by adopting new solutions that are built with the future in mind – a future where the majority of businesses are leveraging more than one cloud platform to move workloads freely as they see fit for their business, while also fully protecting them.”

It is clear that multi-cloud can, if integrated effectively, help organisations to store their data efficiently and securely. However, as these experts have discussed, IT decision-makers need to take the time to identify the solution that best fits their organisation.

Companies are increasingly focused on unlocking the potential of their businesses through digital transformation and omnichannel marketing initiatives.

By Abe Kleinfeld, President and CEO of GridGain Systems.

These efforts might be powered by web-scale applications, mobile applications, social media, in-store systems, or IoT use cases. For example, retailers are deploying recommendation engines for massive customer bases. Enterprises with high-value assets are deploying IoT sensors to measure and increase asset utilization while reducing operational costs. And financial services firms are enabling 24/7, real-time, omnichannel experiences through services available via on-premises, web and mobile device touch points.

All these initiatives have two critical requirements in common: the need for real-time performance and the ability to scale out to handle terabyte- or even petabyte-scale data sets for their new or existing applications. How can businesses that are already struggling with the performance of their existing applications cost-effectively meet these new data-intensive challenges? By implementing a hybrid transactional/analytical processing (HTAP) strategy powered by an in-memory computing platform.

HTAP

HTAP is the ability to process database transactions while at the same time performing real-time analytics on the operational data set. In the early days of computing, performing analytics on operational data could significantly degrade system performance. This led to the development of separate online transactional processing (OLTP) and online analytical processing (OLAP) systems. The separation required transactional data in the OLTP database to be extracted, transformed and loaded (ETL) into the OLAP database prior to analysis. While this architecture addressed the original challenges, it simply can’t deliver the real-time analysis of data that today’s web-scale applications and omnichannel marketing initiatives require.
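To make the cost of the OLTP/OLAP split concrete, here is a minimal sketch in which Python's built-in `sqlite3` module stands in for both databases. The table names, columns and the `run_etl` batch job are hypothetical illustrations, not part of any product described here. The point is the lag: analysts only see what the last ETL run loaded.

```python
import sqlite3

# Two separate stores: one for transactions (OLTP), one for analysis (OLAP).
# Table and column names are hypothetical.
oltp = sqlite3.connect(":memory:")
olap = sqlite3.connect(":memory:")
oltp.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount_pence INTEGER)")
olap.execute("CREATE TABLE sales_by_region (region TEXT PRIMARY KEY, total_gbp REAL)")


def record_order(region: str, amount_pence: int) -> None:
    """OLTP side: each order is a short write transaction."""
    with oltp:  # the connection context manager commits on success
        oltp.execute("INSERT INTO orders (region, amount_pence) VALUES (?, ?)", (region, amount_pence))


def run_etl() -> None:
    """Extract from OLTP, transform (aggregate, pence to pounds), load into OLAP."""
    rows = oltp.execute("SELECT region, SUM(amount_pence) FROM orders GROUP BY region").fetchall()
    with olap:
        olap.execute("DELETE FROM sales_by_region")
        olap.executemany("INSERT INTO sales_by_region VALUES (?, ?)", [(r, total / 100) for r, total in rows])


def report() -> dict:
    """OLAP side: queries see only what the most recent ETL run loaded."""
    return dict(olap.execute("SELECT region, total_gbp FROM sales_by_region"))


record_order("north", 1500)
record_order("south", 2500)
run_etl()
record_order("north", 1000)  # arrives after the batch has run
print(report())  # {'north': 15.0, 'south': 25.0}: the later order is not yet visible
```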

The goal of implementing an HTAP architecture is to eliminate separate OLTP and OLAP databases and enable real-time analytics on the operational data set. Until recently, however, HTAP was an extremely costly proposition that required proprietary hardware. As a result, it was reserved for only the most high-value applications, and many businesses have been slow to move forward with their digital transformation and omnichannel initiatives because they struggle to justify a complex and costly rip-and-replace effort.
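By contrast, the HTAP idea is that analytical queries run directly against the live operational data set. The sketch below illustrates only that principle: a single in-memory SQLite database (a stand-in chosen for brevity, not an HTAP platform) handles both the order writes and the aggregate query, so a new transaction is visible to analytics immediately, with no ETL step. The schema and function names are hypothetical.

```python
import sqlite3

# One operational data set serves both transactions and analytics.
# SQLite is used only to illustrate the idea; it is not an HTAP platform.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount_pence INTEGER)")


def record_order(region: str, amount_pence: int) -> None:
    """Transactional path: commit each order as its own transaction."""
    with db:
        db.execute("INSERT INTO orders (region, amount_pence) VALUES (?, ?)", (region, amount_pence))


def sales_by_region() -> dict:
    """Analytical path: aggregate directly over the live transactional table."""
    rows = db.execute("SELECT region, SUM(amount_pence) / 100.0 FROM orders GROUP BY region")
    return dict(rows)


record_order("north", 1500)
record_order("south", 2500)
record_order("north", 1000)
print(sales_by_region())  # {'north': 25.0, 'south': 25.0}
```

At real scale the hard part is keeping the analytical queries from slowing the transactional path, which is where the distributed in-memory approach described below comes in.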

In-Memory Computing Platforms: The Alternative to Rip-and-Replace

In-memory computing platforms offer an alternative to rip-and-replace. Like HTAP, in-memory computing technologies were too expensive for all but the most high-value applications until recently. Today, however, the cost of memory has fallen sharply, giving rise to a variety of mature in-memory technologies, including in-memory data grids, in-memory databases, and in-memory computing platforms that combine the two with streaming analytics in a single solution. The latest open source in-memory computing platforms make the technology even more flexible and cost-effective, while delivering greater innovation.

Today’s in-memory computing platforms are deployed on a server cluster, and they can utilize the RAM and CPU power of all the servers in the cluster. The in-memory computing platform is inserted between the application and data layers of an existing application and loads the disk-based data from an RDBMS, NoSQL or Hadoop database into RAM. Processing then avoids the latency of disk reads and writes. Whether deployed in a public or private cloud environment, on-premises, or in a hybrid environment, nodes can be added to or removed from the in-memory computing platform cluster on demand, providing cost-effective, flexible scaling. Some in-memory computing platforms include support for ANSI-99 SQL and ACID transactions, advanced security, streaming analytics, machine learning, and Spark, Cassandra and Hadoop acceleration.
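The layering and scale-out described above can be sketched in a few dozen lines. This is a hypothetical toy, not the API of GridGain, Apache Ignite or any other product: `InMemoryGrid`, its methods and the dictionary standing in for the disk-based store are all illustrative assumptions. Keys are assigned to nodes with rendezvous (highest-random-weight) hashing, one common partitioning technique; a first read loads a record from the backing store into the owning node's RAM, and adding a node moves only the keys that the new node now owns.

```python
import hashlib


def _weight(node: str, key: str) -> int:
    """Deterministic pseudo-random weight for a (node, key) pair."""
    digest = hashlib.sha256(f"{node}:{key}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


class InMemoryGrid:
    """Hypothetical in-memory layer between an application and a disk-based store."""

    def __init__(self, nodes, backing_store):
        self.backing_store = backing_store      # stands in for the RDBMS/NoSQL/Hadoop layer
        self.memory = {n: {} for n in nodes}    # one RAM partition per cluster node
        self.disk_reads = 0

    def owner(self, key):
        # Rendezvous hashing: the node with the highest weight for this key owns it.
        return max(self.memory, key=lambda n: _weight(n, key))

    def get(self, key):
        partition = self.memory[self.owner(key)]
        if key not in partition:                # read-through on first access
            self.disk_reads += 1
            partition[key] = self.backing_store[key]
        return partition[key]

    def add_node(self, node):
        """Scale out: only keys whose owner becomes the new node are moved."""
        self.memory[node] = {}
        for existing, part in self.memory.items():
            if existing == node:
                continue
            for key in [k for k in part if self.owner(k) == node]:
                self.memory[node][key] = part.pop(key)


store = {f"account:{i}": i * 10 for i in range(100)}
grid = InMemoryGrid(["node-a", "node-b"], store)
for i in range(100):
    grid.get(f"account:{i}")
grid.add_node("node-c")
for i in range(100):
    grid.get(f"account:{i}")
print(grid.disk_reads)  # 100: rebalancing moved data between nodes' RAM, so no re-reads from disk
```

Rendezvous hashing was chosen here because adding a node never reassigns a key between two existing nodes, which keeps rebalancing traffic small; real platforms make their own choices about partitioning, replication and failover.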

GridGain, an enterprise-grade in-memory computing platform based on the open source Apache Ignite project, provides the speed, scalability and high availability that today’s demanding HTAP architectures require. GridGain Systems, the company which develops and markets the GridGain in-memory computing platform, offers bundled support and software license subscriptions, as well as professional services, to help organizations deploying GridGain in mission-critical production environments.

With an in-memory computing platform, HTAP now becomes a feasible strategy and cost-effective solution for a variety of data-intensive use cases. For example, Wellington Management has more than $1 trillion in client assets under management and offers a broad range of investment approaches. The firm’s investment book of record (IBOR) is the single source of truth for investor positions, exposure, valuations and performance. This means all real-time trading transactions, all related account activity, third-party data such as market quotes, and all related back-office activity flow through the IBOR in real time. The IBOR is also a key source of analytics that support performance analysis, risk assessments, regulatory compliance and more.

Faced with the challenge of upgrading its systems to handle the extreme volume of transactions and the intensive analytics required today while still controlling costs, Wellington deployed an in-memory computing platform from GridGain. The in-memory computing platform powers the Wellington HTAP architecture, offers unlimited horizontal scalability, and supports ANSI-99 SQL queries. In various tests, the new platform performed at least 10 times faster than the company’s legacy Oracle database. Moving forward, Wellington plans to control costs by using the “persistent store” feature of the GridGain platform to maintain older data only on SSDs. Keeping only recent data in RAM will save money on hardware costs without degrading the ability to satisfy aggressive SLAs.
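The “recent data in RAM, older data on SSD” idea can be illustrated with a small two-tier store. This is a hypothetical sketch of age-based tiering in general, not a description of how GridGain’s persistent store works internally: `TieredStore`, its capacity parameter and the dictionary standing in for the SSD tier are all assumptions. The newest records stay in a bounded RAM tier; once it is full, the oldest record is demoted to the SSD tier, and reads check RAM first.

```python
from collections import OrderedDict


class TieredStore:
    """Hypothetical two-tier store: the newest `ram_capacity` records stay in RAM,
    older ones are demoted to an SSD tier (a plain dict stands in for it here)."""

    def __init__(self, ram_capacity: int):
        self.ram_capacity = ram_capacity
        self.ram = OrderedDict()   # insertion order approximates record age
        self.ssd = {}

    def put(self, key, value):
        # A fresh write makes the record "recent" again, wherever it lived before.
        self.ssd.pop(key, None)
        self.ram.pop(key, None)
        self.ram[key] = value
        while len(self.ram) > self.ram_capacity:
            old_key, old_value = self.ram.popitem(last=False)  # evict the oldest first
            self.ssd[old_key] = old_value

    def get(self, key):
        """Return (value, tier) so the example can show where a read was served from."""
        if key in self.ram:
            return self.ram[key], "ram"
        return self.ssd[key], "ssd"


store = TieredStore(ram_capacity=3)
for day in range(1, 6):
    store.put(f"trade-{day}", {"day": day})
print(list(store.ram))       # ['trade-3', 'trade-4', 'trade-5']
print(store.get("trade-1"))  # ({'day': 1}, 'ssd')
```

The trade-off the article points to is visible here: RAM is sized for the hot, recent working set, while older records remain queryable at SSD speed rather than being dropped.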

To deliver on the promise of their digital transformation and omnichannel marketing initiatives, companies should immediately begin investigating how in-memory computing can power an HTAP strategy. They will find it is now possible to cost-effectively achieve real-time performance and the scalability to handle terabyte- or even petabyte-scale data sets for their web-scale and IoT applications.

Like that clip in nearly any sci-fi movie when the starship goes into hyperspace, it feels like the future is accelerating toward us in a blur. Yet IT leaders are being asked to prepare for change that may be unforeseeable from the CIO’s helm.

By Paul Mercina, Head of Innovation, Park Place Technologies.

The good news is that established and emerging solutions can help usher in technologies we haven’t yet envisioned. Many of them fall into the fast-gelling field of the software-defined data centre. It’s a concept gaining traction in the market, but the varying maturity levels of its components, from virtual storage to software-defined power, make adoption complex.

Expect the Unexpected

“Future proofing” was once synonymous with long-range planning—essentially, life-cycle management that enables data centre facilities and hardware investments to deliver full value before redevelopment or replacement. The definition has steadily evolved to connote a flexible, resilient architecture capable of supporting accelerated business-driven digital transformation.

Of course, product-specific choices—such as whether to go with up-and-comer Nutanix or the more established EMC for a given appliance—will remain. Those building or retrofitting data centre facilities must carefully consider cabling, power and cooling with a 6- to 15-year horizon in mind.

But even the most rigorous cost-benefit analyses, needs projections and product evaluations won’t by themselves produce a future-proof IT infrastructure. There are simply too many unknowns beyond mere capacity forecasts. Perhaps Benn Konsynski of Emory University’s Business School put it best in an MIT Sloan Management Review paper:

“The future is best seen with a running start…. Ten years ago, we would not have predicted some of the revolutions in social [media] or analytics by looking at these technologies as they existed at the time…. New capabilities make new solutions possible, and needed solutions stimulate demand for new capabilities.”

If the IT architect or data centre team can’t know in detail what the next revolution will look like—be it in machine learning, artificial intelligence or a field existing only in the most esoteric research—how can a data centre infrastructure conceived today support it down the road?

Enter SDDC

The software-defined data centre (SDDC), in which infrastructure is virtual and delivered via pooled resources “as a service,” has promised to deliver the sought-after agility that businesses require. It offers many advantages in this regard. Specifically, it does the following: