Working across a range of business titles, it’s quite easy to forget that, while some industry sectors are fully on board with the digital world, plenty of others are only just beginning to discover what is not just possible but will shortly be compulsory if many companies are not to struggle as disruptors change the rules. Obviously, everyone in the IT space understands Cloud, IoT, AI, ML, AR and VR, DevOps, containers, 5G and the like.

However, when stepping from our Digitalisation World (DW) magazine and website back to the world of data centres, I’m only too aware that this industry sector, outside of the household name hyperscalers, has a little bit of catching up to do in two areas.

Firstly, the potential of many of these new ideas and technologies to help transform the data centre environment is fairly spectacular. As yet, there doesn’t seem to be any data centre colo or hosting organisation that has leveraged ‘digitalisation’ to put itself head and shoulders above the pack – and maybe it isn’t necessary right now as there’s still a very high demand for new data centre space, almost whatever the quality. However, at some stage, the data centre providers will have to start using the new technologies to distinguish themselves and it will be interesting to watch this race to the top.

Secondly, while no one quite knows how, when or where, there’s no doubt that IoT, VR and AR, AI and various other digital ideas will have a massive impact on the demands being placed on data centre infrastructure. The sheer quantity and size of the data sets that will need to be housed, accessed, stored, retrieved and archived is going to go mad. There’s going to need to be much more granularity around data centre infrastructure quality of service. In crude terms, right now we have fast, medium-pace and slow data. In the matured digital world, we’re going to have many more levels of service, depending on the importance of the data at any particular moment in time.

Up until now, I think it’s fair to say that data centres have ‘bumbled’ along, evolving gradually as new ideas come along and make new demands on the infrastructure, but not at any great rate of change. Looking ahead, I can’t help feeling that we might just need to witness something of a data centre revolution if digital potential is to become reality.

Fasten your seatbelts and enjoy the (autonomous?) ride!

New Cognizant report finds 60% of senior IT executives claiming there are more cyber threats in their organisation than they can currently control.

A new report by Cognizant’s Center for the Future of Work, Securing the Digital Future, reveals that, in the pursuit of digital transformation, organisations have overlooked one critical factor that could put all their transformation efforts - and even share prices - into jeopardy: cybersecurity.

The research, which surveyed over 1,000 senior IT executives in 18 countries, found that only 9% of organisations have made cybersecurity a board-level priority. This is despite respondents acknowledging that digital is opening their businesses to more cybersecurity vulnerabilities than ever, with 60% of respondents saying there are more emerging cyber threats than they can currently control.

The report found that cybersecurity vulnerabilities stem from a range of sources, including not only technology itself, but also the design and execution of business processes, and employees within the organisation. Respondents believe that migrating data to the cloud (74%), social media (66%) and careless employees (64%) pose the highest risk to businesses in the next 12 months, stating that they need to be addressed now to bolster their organisation’s security.

Rather worryingly though, over 60% of respondents believe they have inadequate resources (namely access to cybersecurity talent due to staffing budget issues) to address gaps in the business’s cyber defences. As a result of this shortage, unsurprisingly, almost a third (31%) also admit they only refresh their cybersecurity strategies on an annual basis, potentially leaving glaring gaps in their cyber defences.

Combined with fast-changing threats, this talent and budget shortage has many organisations looking to technologies, particularly artificial intelligence (AI)-driven automation, to improve their cybersecurity outlook. However, while technology can close the gap, it cannot solve the security shortfall alone.

Future-proofing digital operations

The study identified four critical elements that organisations can follow to bolster their cybersecurity strategies, allowing them to future-proof digital operations:

Euan Davis, European Lead for Cognizant’s Center for the Future of Work said: “While not a silver bullet, the introduction of AI tools into cybersecurity platforms will spur organisations to rethink how they approach cybersecurity and reduce the burden left by talent shortages. Cybersecurity needs to be an ongoing endeavour however, and failure to adapt processes and systems on a regular basis will leave an organisation open to further attacks.

“Leadership must take the initiative when it comes to ensuring this is embedded into the business’s DNA, or else face losing customers, reputation and revenue. Ultimately, any company that hopes to do business in the digital economy must make cyber defences a key part of their business strategy.”

Nearly half of employees (45%) have accidentally shared emails containing bank details, personal information, confidential text or an attachment with unintended recipients.

New research by data security company, Clearswift, has shown that 45% of employees have mistakenly shared emails containing key data with unintended recipients, including personal information (15%), bank details (9%), attachments (13%) and other confidential text (8%).

The research, which surveyed 600 senior business decision makers and 1,200 employees across the UK, US, Germany and Australia, also found that employees regularly receive these unintentional emails, as well as being guilty of sending them, highlighting an inbound and outbound opportunity for data leakages. 27% of employees claim to have received emails containing personal information in error from people outside of their company, with 26% also admitting to receiving attachments in error and 12% saying they had wrongly received personal bank details.

“With GDPR, the new tenet of shared responsibility makes the problem of receiving and sharing unauthorised information a serious issue. Email communication is a real pitfall for organisations trying to comply with the regulation”, said Dr Guy Bunker SVP products at Clearswift.

“Stray bank details and ‘hidden’ information in attachments, spreadsheets or reports can create a serious data loss risk. The occasional email going awry may seem innocuous, but when multiplied by the amount of employees within a business, the risk becomes more severe and could lead to a firm falling foul of the new GDPR penalties; up to 4% of global turnover, or even those in place already, such as The Payment Card Industry Data Security Standard. If contravened this can lead to a firm having the ability to process data removed, which could see some businesses grind to a halt.”

The research also found that upon receiving a misplaced email, 31% of employees said that they would read the email, with 12% even admitting they would scroll through to read the entire email chain. 45% of employees did say that they would alert the sender to their mistake, giving them the opportunity to take some action. However, a lowly 27% said they would delete the email from their inboxes and deleted items, leaving an element of uncertainty.

Fewer than half (45%) of employees were familiar with the agreed process or course of action to take upon receiving an email from someone in another company where they were not the intended recipient, and 22% admitted there was no formal process in place whatsoever in their organisation for such situations.

Bunker added, “To offset the inevitable risk associated with email communications, companies need a clear strategy, which encompasses people, processes and technology.”

“Instilling the values of being a ‘good data citizen’ can engender a sense of data consciousness in the workplace, ensuring that employees are aware of responsible disclosure, and with whom this responsibility sits upon receiving an email in error. However, a formally agreed process or course of action is also a must. There is not a silver bullet and technology can once again offer assurances to help mitigate risks. Adaptive Data Loss Prevention (DLP) technologies can automate the detection and protection of critical information contained in emails and attachments, removing only the information which breaks policy and leaving the rest to continue on to its destination.”

A study of two data centres found that utilising higher temperatures resulted in energy savings of between 41% and 64%, whilst driving significant improvements in PUE.

Energy efficiency is an issue that concerns all who are involved with the design and operation of data centres. The cooling function in general, and the operation of water chillers in particular, are large consumers of power and as such, require focused efforts to improve overall energy efficiency.

Water chillers account for between 60 and 85% of overall cooling-system energy consumption. Consequently, data centres are designed, where possible, to keep usage of chillers to a minimum and to maximise the amount of available “free cooling”, in which less power-hungry systems such as air coolers and cooling towers can keep the temperature of the IT space at a satisfactory level.

One approach to reducing water chiller energy consumption is to design the cooling system so that a higher chilled water (CHW) outlet temperature from the chillers can be tolerated while maintaining sufficient cooling. In this way, chillers consume less energy by not having to work as hard, and the number of free cooling hours can be increased.

As with any complex system, attention needs to be paid to all parts of the infrastructure, as changes in one area can have direct implications for another. A new White Paper from Schneider Electric, the global specialist in energy management and automation, examines the effect on overall cooling system efficiency by operating at higher chilled water temperatures.

White Paper #227, “How Higher Chilled Water Temperature Can Improve Data Center Cooling System Efficiency”, outlines the various strategies and techniques that can be deployed to permit satisfactory cooling at higher temperatures, whilst discussing the trade-offs that must be considered at each stage, comparing the overall effect of such strategies on two data centres operating in vastly different climates.

Among the trade-offs discussed were the need to install more air-handling units inside the IT space to offset the higher water-coolant temperatures, in addition to the need for redesigned equipment such as coils, to provide adequate cooling when the chilled water (CHW) temperature exceeds 20°C. The paper also advises the addition of adiabatic, or evaporative, cooling to further improve heat rejection efficiency. Each approach requires an additional capital investment, but results in lower long-term operating expenses due to the improved energy efficiency.

White Paper 227 details two real-world examples in differing climates; the first is in a temperate region (Frankfurt, Germany) and the second in a tropical monsoon climate (Miami, Florida). In each case, data was collected to assess the energy savings that were accrued by deploying higher CHW temperatures at various increments, whilst comparing the effect of deploying additional adiabatic cooling.

The study found that an increased capital expenditure of 13% in both cases resulted in energy savings of between 41% and 64%, with improvements in TCO of between 12% and 16% over a three-year period.

Another inherent benefit of reducing the amount of energy expended on cooling is the improvement in a data centre’s PUE (Power Usage Effectiveness) rating. As this is calculated by dividing the total amount of power consumed by a data centre by the power consumed by its IT equipment alone, any reduction in energy expended on cooling will naturally reduce the PUE figure.

The Schneider Electric study found that PUE for the two data centres examined was reduced by 14% in the case of Miami and 16% in the case of Frankfurt.
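To make the PUE arithmetic described above concrete, here is a minimal sketch in Python. The load figures are purely hypothetical and are not taken from the Schneider Electric White Paper; the function name `pue` and its parameters are illustrative assumptions. It simply applies the definition given above (total facility power divided by IT power) before and after a cut in cooling power.

```python
def pue(it_kw: float, cooling_kw: float, other_kw: float = 0.0) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + cooling_kw + other_kw) / it_kw


# Hypothetical loads, chosen only for illustration (not the study's data).
IT_KW = 1000.0       # IT equipment
COOLING_KW = 500.0   # chillers, air handlers and so on
OTHER_KW = 100.0     # lighting, power distribution losses and so on

baseline = pue(IT_KW, COOLING_KW, OTHER_KW)

# Assume cooling energy falls by 41%, the low end of the savings range quoted above.
improved = pue(IT_KW, COOLING_KW * (1 - 0.41), OTHER_KW)

print(f"Baseline PUE: {baseline:.3f}")
print(f"Improved PUE: {improved:.3f}")
print(f"PUE reduction: {(baseline - improved) / baseline:.1%}")
```

Because the IT load sits in the denominator and is unchanged, every kilowatt saved on cooling lowers the numerator directly, which is why cooling savings translate so readily into a better PUE figure.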

76% of organisations across Europe have increased IT and technology budgets this year.

Toshiba reveals that IT and technology budgets within European businesses will increase this year for more than three quarters (76%) of organisations. This rise in IT spend is directly linked to the number of remote workers within businesses, with those companies with higher numbers of remote workers indicating greater increases in their investment in new solutions and technologies.

The study of more than 1,000 senior IT decision makers from medium and large organisations, which was conducted in partnership with Walnut Unlimited, demonstrated that priorities for this increased investment are focused on data security (62%), cloud-based solutions (58%) and improving productivity (54%). When compared to similar IT decision maker research conducted by Toshiba back in 2016, data security has increased in terms of importance (54% in 2016), as has investment in cloud-based solutions, with 58% of organisations considering it a top priority today compared to 52% in 2016.

While all country markets surveyed (UK, France, Germany, Spain and Benelux) saw an increase in IT spend, Spain demonstrated the most significant change, with 86% of organisations indicating an increased IT and technology budget for the next twelve months. Similarly, businesses in the transport and logistics sector were the most likely to have an increased budget (89%), while only 52% of government and public sector organisations noted that there would be a larger spend on IT and technology.

Security concerns and evolving working patterns

Offering employees flexibility in their working patterns continues to be of paramount importance to organisations across Europe. The study reveals that 68% of respondents said they had at least a tenth of their employees work primarily while travelling or in no fixed location.

This increase in flexible working is a clear driver behind the top three investment priorities being data security, cloud-based solutions and improving productivity. When asked about priorities for improving productivity for this increasingly mobile workforce, almost half (47%) of respondents indicated that better employee training was critical, with 43% of respondents stating that more innovative use of digital tools was a priority.

Technology to support remote and frontline workers

To help ensure worker productivity, regardless of where employees are working from, there is a distinct shift in the solutions IT decision makers are rolling out across their organisations. At present, 61% of respondents indicated that they provide laptops for their remote teams and 55% offer business-provided smartphones. However, when asked what devices will be used most over the next three years, smartphones caught up with laptops (both at 38%) and businesses also indicated an appetite for newer technologies such as mobile edge computing devices (10%) and thin/zero client solutions (9%).

Larger enterprises (500+ employees) are set to lead the way when it comes to rolling out wearables in the workplace, with 24% predicting that a smart glasses solution will be rolled out for employees within the next 12 months. This is compared to just 16% of respondents from organisations with 100-499 employees. 82% overall predict that smart glasses will be used within their business in the next three years.

The drivers behind the uptake of enterprise smart glasses use include the arrival of 5G, as referenced by 40% of respondents. Furthermore, 59% of those working in the manufacturing sector stated the hands-free functionality as a key benefit of rolling out smart glasses to employees.

Maki Yamashita, Vice President, B2B PC, Toshiba Europe comments, “While the technologies available to employees are constantly evolving, it’s really interesting to see that the key challenges that IT decision makers are looking to address have remained relatively constant when compared to opinions in 2016. Organisations are continuing to balance how best to achieve the perfect blend of unhindered mobile productivity, while being protected by a robustly secure IT infrastructure. New solutions coming into the enterprise are helping to achieve this, but IT teams need to focus on the varying challenges and benefits for their individual sectors when determining how best to make these solutions work for their business.”

“Industry is precisely at that tipping point where the physical environment of computing gives way to the virtual world of cloud and its associated enabling technologies”, Apay Obang-Oyway, Director of Cloud & Software, UK&I at Ingram Micro comments.

He continued: “This is not just in bold initiatives here, and ingenious transitions there, not just from pioneers within certain verticals, and visionary disruptors – but for every organisation, everywhere, in every industry”.

Research that Ingram Micro conducted in mid-2017 showed that 71% of organisations believed they would be embarking on a conversion of being digitally transformed within the organisations’ DNA by 2018. According to Apay, underpinning this entire revolution is the cloud, and it is without doubt the single most transformative element in this radical rethinking of the way we work, live and play every day. “I suggest that cloud is no longer a journey – a view that implies new departures and sometimes extended good-byes to all that went before – it’s where we are, where we have arrived,” he commented.

“We have reached our destination. We can consider ourselves in a place where agility, scalability and speed are no longer qualities we discuss with awe, expectation, and anticipation. They are just part of the fabric of what we are, the infrastructure of what we do, the platform from which we spring off and up into achievements that not many years ago were no more than dreams and aspirations,” Apay added.

He believes that humankind will look back on 2017 in particular as a year that “brought such acceleration to change, that we’ll very soon be looking back on it like one of those epoch-defining moments where we all remember exactly what we were doing when they happened.”

Apay continued: “There are four key elements of the Fourth Industrial Revolution that fuse together to ignite infinite possibilities we can view as a cohesive change in how we do things. I for one do not believe that the word ‘revolution’ is in any way an overclaim for this period we’re embarking upon. The exciting and fundamental factor in this revolution is being driven by the channel, and Ingram Micro continues to play an essential role in enabling partners to realise these opportunities.”

According to Apay, the four elements are:

The Internet of Things

There is an overwhelming amount of data being produced by a world that has over 8.3 billion connected devices, and the average UK household has 8.3 connected devices.

We can do two things with the resultant data: we can ignore it, or just store it to satisfy regulators, or we can interact with it to get smarter at what we do, how we do it, and how well we deliver on our customers’ expectations and needs. Also, none of this can be done without artificial intelligence.

Artificial Intelligence (AI)

Many organisations still have little understanding of AI, and those that do have inherent fears about it, relating primarily to the security issues of AI-dependent networks. Yet AI is critical to the new world of the fourth revolution, especially in making sense of the vast volumes of data any organisation can take advantage of if it has the right tools to make sense of it securely.

These are ‘legacy fears’. They date back to before we knew how robust and secure the cloud can be. Such fears fail to embrace solutions which leverage the “shared security model” as well as understanding the value of Infrastructure as a Service and Platform as a Service.

Automation

Far from reducing the demand for skilled labour, it will increase it. Automation is not about the invasion of the droids, the assumption of human characteristics by robots who we get to know and love.

It’s about making tedious processes faster and removing the human element to drive cost-effectiveness and to serve customers better. It’s a tool to embrace, not an entity to distrust.

Cloud Native verticals

We’ve been accustomed to seeing agile start-ups disrupting all types of businesses, while bigger players contend with legacy investments. An essential step in any digital transformation strategy has always been to migrate to the cloud or to deploy hybrid strategies, moving partly to the cloud and retaining critical operations down here in the physical sphere. Once again this is legacy thinking. There is value in “Cloud First” strategies in defining and empowering successful organisations.

The role of the myriad constituents of the channel is to augment Cloud, AI, IoT and BigData to create and accelerate organisational value in this fourth industrial revolution. These themes and content will be further explored at this year’s Ingram Micro UK Cloud Summit.

A recent article by Steve Gillaspy of Intel outlined many of the challenges faced by those responsible for designing, operating, and sustaining the IT and physical support infrastructure found in today's data centers. This paper targets four of the five macro trends discussed by Gillaspy, how they influence the decision making processes of data center managers, and the role that power infrastructure plays in mitigating the effects of the following trends.

Outpost24 survey reveals security professionals have least confidence in the security of the cloud infrastructure and most confidence in their owned infrastructure and data centres

LONDON, U.K. - May 10, 2018 – Outpost24, a leading provider of Vulnerability Management solutions for commercial and government organisations, today announced the results of a survey of 155 IT professionals, which revealed that 42 percent ignore critical security issues when they don’t know how to fix them (16 percent) or don’t have the time to address them (26 percent).

The survey, which was carried out at the RSA Conference in April 2018, also asked respondents what area of their IT estate they consider to be the least secure. This revealed 25 percent are most concerned about their cloud infrastructure and applications, 23 percent are most concerned about their IoT devices, 20 percent said their mobile devices, 15 percent said their web applications, while 13 percent were most concerned about their data assets, databases and shares. Owned infrastructure and data centres seem to cause the least concern, with only five percent saying they were least secure.

Additionally, when survey respondents were asked how quickly their company remediates known vulnerabilities, 16 percent stated they review their security at a set time every month and seven percent said they do it every quarter. However, a worrying five percent said they only carry out assessments and apply fixes once or twice a year. Only 47 percent of organisations patch known vulnerabilities as soon as they are discovered.

“The trend lines have already been drawn, and we can see from the survey results that they are not improving,” said Bob Egner, VP at Outpost24. “Our survey results suggest that businesses are adding technology as a key element of their strategy but not preparing their security teams with the skills and resources to keep up. It’s vital that organisations have full awareness of all assets that the business relies on, and that they are constantly tuning for the lowest possible level of cyber security exposure.”

Respondents were also asked if security testing is conducted on their enterprise systems, which revealed that seven percent fail to conduct any security testing whatsoever. Reassuringly, however, 79 percent of respondents said they do carry out testing. Respondents were also asked if their organisation had hired the services of penetration testers and 68 percent revealed they had. The study also found that of those organisations that had hired penetration testers, 46 percent had uncovered critical issues that could have put their business at risk.

Egner added: “Outsourcing services like penetration testing can be an excellent way to get a holistic overview of the cyber security exposure across an organisation’s assets as well as expose threats within systems that may well have gone unnoticed. To maximize the value of testing investment, remediation action should be taken as close to the time of testing as possible. With the proliferation of connected technologies, the knowledge and resource gap continue to be key challenges. Security staff can easily become overwhelmed and lose focus on the remediation that can be most impactful to the business.”

Take a New Look at 3-Phase Power Distribution.

Alternating phase outlets alternate the phased power on a per-outlet basis instead of a per-branch basis. This allows for shorter cords, quicker installation and easier load balancing for 3-phase rack PDUs. Shorter cords mean less mass, making them less likely to come unplugged during transport of the assembled rack.
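The load-balancing benefit described above can be illustrated with a small Python sketch. Everything here is a simplifying assumption rather than any vendor's specification: a hypothetical 24-outlet PDU, three phase labels (`L1`, `L2`, `L3`), equal 200 W devices, and equipment plugged into the first six outlets only. The sketch compares a per-branch layout, where outlets are grouped in contiguous banks per phase, with an alternating layout, where the phase changes from one outlet to the next.

```python
PHASES = ("L1", "L2", "L3")  # illustrative phase labels


def per_branch_layout(n_outlets, phases=PHASES):
    """Contiguous banks: the first block of outlets on L1, the next on L2, etc."""
    bank = max(1, -(-n_outlets // len(phases)))  # ceiling division
    return [phases[i // bank] for i in range(n_outlets)]


def alternating_layout(n_outlets, phases=PHASES):
    """Phase changes on every outlet: L1, L2, L3, L1, L2, L3, ..."""
    return [phases[i % len(phases)] for i in range(n_outlets)]


def phase_loads(layout, loads_w, phases=PHASES):
    """Sum the load (in watts) on each phase; unused outlets carry 0 W."""
    totals = {p: 0 for p in phases}
    for phase, load in zip(layout, loads_w):
        totals[phase] += load
    return totals


# Hypothetical rack: six 200 W devices plugged into the six nearest outlets.
N_OUTLETS = 24
loads = [200] * 6 + [0] * (N_OUTLETS - 6)

branch_totals = phase_loads(per_branch_layout(N_OUTLETS), loads)
alternating_totals = phase_loads(alternating_layout(N_OUTLETS), loads)

print("Per-branch: ", branch_totals)
print("Alternating:", alternating_totals)
```

Under these assumptions, using the nearest outlets loads a single phase in the per-branch layout but spreads the same devices evenly in the alternating layout, which is why each device can use a short cord to an adjacent outlet without unbalancing the phases.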

This year’s DCS Awards – as ever, designed to reward the product designers, manufacturers, suppliers and providers operating in the data centre arena – took place at the, by now, familiar, prestigious Grange St Paul’s Hotel in the City of London. Host Paul Trowbridge coordinated a spectacular evening of excellent food, a prize draw (with champagne and a team of four at the DCA Golf Day up for grabs), excellent comedian Angela Barnes, music courtesy of Ruby & the Rhythms and, of course, the reason almost 300 data centre industry professionals attended – the awards themselves. Here we salute the winners.

The next Data Centre Transformation event, organised by Angel Business Communications in association with DataCentre Solutions, the Data Centre Alliance, The University of Leeds and RISE SICS North, takes place on 3 July 2018 at the University of Manchester.

The programme is nearly finalised (full details via the website link at the end of the article), with some top-class speakers and chairpersons lined up to deliver what is probably 2018’s best opportunity to get up to speed with what’s heading to a data centre near you in the very near future!

For the 2018 event, we’re taking our title literally, so the focus is on each of the three strands of our title: DATA, CENTRE and TRANSFORMATION.

This expanded and innovative conference programme recognises that data centres do not exist in splendid isolation, but are the foundation of today’s dynamic, digital world. Agility, mobility, scalability, reliability and accessibility are the key drivers for the enterprise as it seeks to ensure the ultimate customer experience. Data centres have a vital role to play in ensuring that the applications and support organisations can connect to their customers seamlessly – wherever and whenever they are being accessed. And that’s why our 2018 Data Centre Transformation Manchester will focus on the constantly changing demands being made on the data centre in this new, digital age, concentrating on how the data centre is evolving to meet these challenges.

We’re delighted to announce that GCHQ have confirmed that they will be providing a keynote speaker on their specialist subject – security! Has IT security ever been so topical? What a great opportunity to hear leading cybersecurity experts give their thoughts on the issues surrounding cybersecurity in and around the data centre.

We’re equally delighted to reveal that key personnel from Equinix, including MD Russell Poole, will be delivering the Hybrid Data Centre keynote address. If GCHQ knows about cybersecurity, it’s fair to say that Equinix are no strangers to the data centre ecosystem, where the hybrid approach is gaining traction in so many different ways.

Completing the keynote line-up will be John Laban, European Representative of the Open Compute Project Foundation.

Alongside the keynote presentations, the one-day DCT event will include:

A DATA strand that features two workshops - one on Digital Business, chaired by Prof Ian Bitterlin of Critical Facilities and one on Digital Skills, chaired by Steve Bowes Phipps of PTS Consulting.

Digital transformation is the driving force in the business world right now, and the impact that this is having on the IT function and, crucially, the data centre infrastructure of organisations is something that is, perhaps, not as yet fully understood. No doubt this is in part due to the lack of digital skills available in the workplace right now – a problem which, unless addressed, urgently, will only continue to grow. As for security, hardly a day goes by without news headlines focusing on the latest, high profile data breach at some public or private organisation. Digital business offers many benefits, but it also introduces further potential security issues that need to be addressed. The Digital Business, Digital Skills and Security sessions at DCT will discuss the many issues that need to be addressed, and, hopefully, come up with some helpful solutions.

The CENTRES track features two workshops - one on Energy, chaired by James Kirkwood of Ekkosense, and one on Hybrid DC, chaired by Mark Seymour of Future Facilities.

Energy supply and cost remains a major part of the data centre management piece, and this track will look at the technology innovations that are impacting on the supply and use of energy within the data centre. Fewer and fewer organisations have a pure-play in-house data centre real estate; most now make use of some kind of colo and/or managed services offerings. Further, the idea of one or a handful of centralised data centres is now being challenged by the emergence of edge computing. So, in-house and third party data centre facilities, combined with a mixture of centralised, regional and very local sites, makes for a very new and challenging data centre landscape. As for connectivity – feeds and speeds remain critical for many business applications, and it’s good to know what’s around the corner in this fast moving world of networks, telecoms and the like.

The TRANSFORMATION strand features workshops on Automation (AI/IoT), chaired by Vanessa Moffat of Agile Momentum and The Connected World, together with a keynote on Open Compute from John Laban, the European representative of the Open Compute Project Foundation.

Automation in all its various guises is becoming an increasingly important part of the digital business world. In terms of the data centre, the challenges are twofold. How can these automation technologies best be used to improve the design, day to day running, overall management and maintenance of data centre facilities? And how will data centres need to evolve to cope with the increasingly large volumes of applications, data and new-style IT equipment that provide the foundations for this real-time, automated world? Flexibility, agility, security, reliability, resilience, speeds and feeds – they’ve never been so important!

Delegates select two 70-minute workshops to attend and take part in an interactive discussion led by an Industry Chair and featuring panellists - specialists and protagonists - in the subject. The workshops will ensure that delegates not only earn valuable CPD accreditation points but also have an open forum to speak with their peers, academics and leading vendors and suppliers.

There is also a Technical track where our sponsors will present 15 minute technical sessions on a range of subjects. Keynote presentations in each of the themes together with plenty of networking time to catch up with old friends and make new contacts make this a must-do day in the DC event calendar. Visit the website for more information on this dynamic academic and industry collaborative information exchange.

This month’s journal theme is centred on Energy Efficiency and I would like to take the opportunity to thank all members who contributed to this edition.

By Steve Hone, CEO & Founder The DCA

For the past 36 months the DCA, together with seven other DCA strategic partners, has been working on a joint Horizon 2020 project called EURECA. This represents the second of three projects the data centre sector has secured research funding for as a direct result of DCA involvement. Applying for these projects takes literally hundreds of combined hours and the team was up against some very stiff competition - only 1 in 50 bids receive funding! This is why, as a trade association, we are so proud when these efforts pay off to the benefit of the whole data centre sector.

The project call came from the Horizon 2020 innovation and research programme dealing with the adoption of energy efficient best practice and market uptake of energy efficient products and services within Europe’s Public-Sector organisations. Although originally focused on the public sector, the services developed are of equal value to the private sector, which shares the same challenges and is equally looking for best practice guidance and independent support for its IT transformation projects; support which EURECA can provide. For more information visit the website www.dca-global.org/research.

“Member support and strong collaboration with Strategic/Academic Partners were very much key to the success of this project with all energy saving targets and KPIs being met”; so I would like to take the opportunity to send my personal thanks to Mark Acton from CBRE, John Booth from Carbon3IT, Dr Frank Verhaegen from Certios, Mark Andre Wolf from Maki Consulting, Dr Jon Summers, Zaak, Esther and Julie from Green IT Amsterdam and, last but by no means least, Prof Rabih Bashroush and his team at the University of East London as project coordinators. This was a great team effort and a clear demonstration of the value a trade association such as the DCA can bring to the table and the benefits of working together, sharing knowledge and promoting best practice. As Anton Chekhov said, “knowledge is of no value unless you put it into practice”.

By the time you read this foreword we will be well into May, so if you have missed out on some of the great conferences in the first half of the year there are still plenty more coming up that you should consider attending. Datacloud Europe takes place in Monaco on 12-14th June, so hopefully you will still have time to register. This is followed in July by the DCA Trade Association’s own annual workshop-based conference, DCT 2018 (Data Centre Transformation), hosted in conjunction with Data Centre Solutions.

For the first time, DCT2018 will be hosted at Manchester University on the 3rd July as well as Surrey University on the 5th July. This is to give DCA members and delegates every opportunity to attend and benefit from the educational workshop and networking sessions taking place, irrespective of where they are based.

A full list of all the events the DCA hosts, sponsors, endorses or promotes can be found on the DCA website www.dca-global.org/events. We can then all enjoy a short summer recess to take a breath and recharge for what will be a busy end to the year, with events in Ireland, the Nordics, Africa, Singapore, Frankfurt, Paris and London all supported by the data centre trade association. We look forward to seeing you at an event near you!

The DCA

W: www.dca-global.org

T: 0845 873 4587

E: info@dca-global.org

By Janne Paananen, technology manager at Eaton EMEA

Over the past decade, data centres have become one of the biggest culprits when it comes to electricity consumption. So much so that just last year, the world’s data centres used 416.2 terawatt hours of electricity – which is higher than the UK’s total energy consumption. This leap in power usage has been a direct result of the consistently evolving technological landscape, especially as both businesses and consumers demand greater connectivity and technologies such as the Internet of Things (IoT) continue to expand.

It is estimated that by 2025, data centres could be using 20 per cent of all available electricity in the world. Furthermore, if current projections are true, it’s likely that the world’s data centres’ energy consumption will triple over the next ten years. This increasing demand for energy will result in major environmental issues unless companies begin powering their data centres in a more sustainable way. If not, experts believe that by 2040, the ICT industry will be responsible for more than 14 per cent of global carbon emissions.

While it’s unnerving that data centres have the ability to impact the environment in such a major way, it’s clear that the industry has taken notice and is shouldering the responsibility of reducing its combined carbon footprint as well as decreasing overall energy usage. According to a recent survey by Data Center Knowledge, 70 per cent of users consider sustainability issues when selecting data centre providers. Beyond that, about one third of those who take sustainability into consideration believe it’s very important that their data centre providers power their facilities with renewable energy.

With that in mind, many data centre providers have already taken various steps to reduce their energy usage while prioritising efficiency. Google is a great example of this. The tech giant has recently pledged that between 2020 and 2025, all of its operations will be powered by renewable energy. And they’re not the only FAMGA members making big promises – both Facebook and Apple have made similar statements in recent months.

It’s reassuring to see that so many companies – especially major players in the industry – are making changes to become more sustainable. This self-awareness and push for alternate energy sources is working. The demand for green energy is changing our energy mix – just last year, 24 per cent of global electricity demand was produced through renewables such as wind, solar and hydro-power. Unfortunately, there’s still a catch – renewable energy generation can be intermittent.

Managing intermittency

At first, intermittency can sound like a major concern. As the energy market moves away from traditional fuel-based energy towards renewable energy, production will become more volatile, making it harder to predict – and therefore manage – electrical supply.

As data centres rely on a stable and reliable supply of energy, this instability is not ideal.

To diminish the risk, data centres can help energy providers maintain power quality by balancing consumption with power generation. On a global scale, providers within the energy sector need to work with organisations to respond quickly to grid-level power demands and keep frequencies within manageable boundaries to avoid grid-wide power outages.

Moving away from risk management and towards an extra revenue stream, data centres could be given a financial incentive to manage their demands on the grid, and even act as a power supplier. For example, data centres could make money from their existing investment in an uninterruptible power supply (UPS) by helping energy providers balance sustainable power demands by offering capacity back to the grid – without compromising critical loads. The UPS, which uses stored power in the event of a power failure, can be used to regulate demand from the grid, as well as for up and down stream charging, essentially to discharge the battery back to the grid.

This is becoming known as UPS-as-a-Reserve (UPSaaR). By putting data centres in control of their energy distribution and giving them the power to select how much capacity – and at what price – to offer back to the grid, they’re able to make a significant return on investment. This would be a big step towards the more sustainable management and use of energy, as well as putting money into the pockets of data centre providers, demonstrating a better ROI for their services.
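The idea of offering capacity back to the grid "without compromising critical loads" can be illustrated with a rough calculation. The sketch below is not a description of any vendor's UPSaaR implementation: the function name, the reserve rule (keep enough stored energy to carry the critical load for a chosen autonomy time) and all figures are assumptions made purely for illustration.

```python
def offerable_energy_kwh(battery_kwh: float,
                         critical_load_kw: float,
                         reserve_minutes: float) -> float:
    """Energy that could be offered to the grid while keeping a reserve.

    Hypothetical rule: always retain enough stored energy to carry the
    critical load for `reserve_minutes`; only the surplus is offered.
    """
    reserved_kwh = critical_load_kw * reserve_minutes / 60.0
    return max(0.0, battery_kwh - reserved_kwh)


# Illustrative figures only: 500 kWh of battery, an 800 kW critical load
# and a 15 minute autonomy reserve leave a surplus to offer.
surplus = offerable_energy_kwh(500.0, 800.0, 15.0)
print(f"Offerable energy: {surplus:.0f} kWh")
```

In practice the operator would also decide the price at which this surplus is offered, and the reserve rule would depend on the site's own risk appetite and battery characteristics.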

Data centres have the potential to support the global transition to a low carbon economy. By working together, data centres and energy providers can balance consumption – creating a well-established and thought out approach to energy generation and a greener future.

A multisectorial approach to energy efficiency

By Vasiliki Georgiadou, Project Manager, Green IT Amsterdam

The continuous infusion of IT services in our daily lives, with the emergence of the Internet of Things (IoT), distributed data centres and the cloudification of legacy computer systems, brings data centres to the front lines. Data centres are often, and accurately, perceived as critical infrastructures of our times, with concerns regarding their energy consumption dominating public discussions. In part, such concerns are indeed valid. Their energy consumption in the EU is predicted to reach 104 TWh in 2020, after all. And energy is a precious commodity.

As such, to ensure sustained availability, reliability and security of Europe’s critical infrastructures, data centres continuously reinforce their investments towards energy efficient business innovation. However, with the highly efficient and ever-evolving cooling technologies available along with IT consolidation and virtualization techniques, PUE focused energy reduction and efficiency solutions no longer offer high returns.
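The diminishing returns of PUE-focused work can be seen from the definition of PUE itself (total facility energy divided by IT equipment energy). The short sketch below uses illustrative PUE values, not figures from this article, to compare the share of total energy saved by two improvements at a constant IT load.

```python
def saving_fraction(pue_before: float, pue_after: float) -> float:
    """Fraction of total facility energy saved by a PUE improvement,
    assuming the IT load itself stays constant."""
    if pue_before < 1.0 or pue_after < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return (pue_before - pue_after) / pue_before


# Illustrative values only: an older site versus an already efficient one.
print(f"PUE 2.0 -> 1.5 saves {saving_fraction(2.0, 1.5):.1%} of total energy")
print(f"PUE 1.2 -> 1.1 saves {saving_fraction(1.2, 1.1):.1%} of total energy")
```

Once a facility is already close to a PUE of 1.0, each further step removes a much smaller share of the total, which is why attention turns to what happens to the energy after it has been used.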

For sure the usefulness of a data centre resides in the data processed, stored and transferred within and outside its boundaries. And although difficult at times to measure uniformly among data centres, the industry has made leaps and bounds on handling their core business effectively.

Nevertheless, a fundamental viewpoint, so far overlooked in the mainstream discussions, must be considered: at the end of the day, a data centre is nothing else but a system where electricity comes in and heat comes out. Heat that in most cases is being just rejected to the surrounding environment, wasted. But looking at the energy flows within a data centre a new series of solutions can emerge.

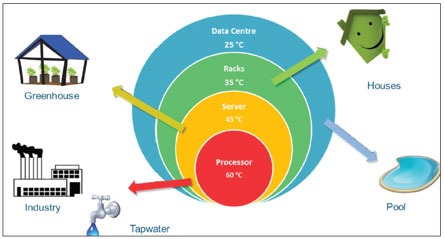

A data centre can optimize both its design and operations to deliver heat to local heating (and cooling) networks. Recover, redistribute and reuse their residual heat for building space heating (residential and non-residential such as hospitals, hotels, greenhouses and pools), service hot water and industrial processes.

Depending on the cooling technology in use, the data centre may harvest heat at the desirable temperature level. In any case, a heat pump may be in place to raise, as necessary, the temperature of the low-grade heat generated by the data centre before its delivery to the heat grid. The data centre may also be able to adjust its server room temperature set points to increase the amount of thermal energy generated. A data centre may capitalize on the use of heat storage, such as a thermal energy storage system, to store heat during the summer and deliver it to the heat grid during the winter in addition to the direct heat normally supplied, thus increasing its heat capacity.

Source: Datacentres connected to an intelligent energy network, M. Arts, Z. Ning (Royal Haskoning DHV), TTVL Magazine, 2016 DATACENTRES. (Publication in Dutch)

The main barrier in these scenarios is actually raised by local policies, operations and infrastructures that may or may not be in place to enable recovery, redistribution and reuse of residual heat.

So, it also falls on the shoulders of the local communities and area developers. There are examples where indeed all stakeholders work together to ensure data centres can integrate their own operations to the needs and wants of other sectors, linking their commons (B2B). Such an example is the Green Datacenter Campus in the Amsterdam Metropolitan Area, with the Schiphol Area Development Company (SADC), a Green IT Amsterdam participant, orchestrating the efforts [https://www.sadc.nl/en/locations/green-datacenter-campus/]. Other communities are also offering similar solutions each of them leveraging on their unique geographical, technological and business characteristics. For example, at Stockholm Data Parks [https://stockholmdataparks.com/], a data centre is by default offered the opportunity to plug into the city’s district heating network (B2C).

Heat services are not, however, the only answer. Data centres actually have the potential to offer a diverse portfolio of energy related services by exploiting their IT operations and power and cooling infrastructure to also participate in emerging electricity, heat and energy flexibility markets. Following this line of thought, the next generation of data centres should, by design, utilize resources effectively, while ensuring seamless integration with their smart city ecosystem: smart grids and heating networks. In this context, once again, simply focusing on energy reduction and efficiency practices applied only within the boundaries of one’s data centre is no longer an option for those with the ambition to own and operate the Green Data Centres of the future. Silos must be broken down for data centres to reach their full potential, capitalizing on their unique position as overlaying multiple networks: IT, electricity and heat.

Such is the frame of reference for the EU H2020 CATALYST project [http://project-catalyst.eu/] that aspires for data centres to become flexible energy hubs, which can sustain investments in renewable energy sources and energy efficiency. Leveraging on results of past projects, CATALYST will adapt, scale up, deploy and validate an innovative technological and business framework that enables data centres to offer a range of mutualized energy services to both electricity and heat grids, while simultaneously increasing their own resiliency to energy supply.

Mutual energy services will consist of energy flexibility, security and optimized management tailored to data centre operators, targeting the management of the available non-grid renewable (PV, local storage, heat pumps) and non-renewable (backup generators) energy assets as well as the IT assets (via cloud-based geo-spatio-temporal IT virtualization). Such energy services will be provided by data centres through appropriate open, standardized energy flexibility marketplaces, based for example on market models as defined by the Universal Smart Energy Framework (USEF) [https://www.usef.energy/]. These marketplaces may be instantiated either as mono-carrier energy marketplaces (electricity vs heat marketplace) cleared sequentially, or as multi-energy marketplaces. Along this innovative value chain, new stakeholders will be willing to provide such energy services to data centres, like ESCOs, energy suppliers, aggregators, IT and cloud solution and technology providers. Cross-energy carrier synergies between electricity and heat can also be exploited and managed, using the flexibility potential of one energy carrier to offer energy services to another.

In this way, the CATALYST vision introduces a “Marketplace as a Service” (MaaS) instantiated in three emerging and innovative data centre revenue streams and markets: a) IT workload b) Electricity & Heat and c) Energy Flexibility.

To reach this vision, however, it is imperative that the data centre and energy sectors are brought closer together and start talking the same language. The newly launched Green Data Centre Stakeholder Group, established by the CATALYST consortium, aims to do just that. Data centres taking up a pivotal role in the energy transition will bring opportunities for energy efficient data centres not only to reduce their operating costs and improve their performance and efficient use of resources, but also to create new revenue streams through waste energy reuse and energy flexibility service offerings.

Green IT Amsterdam is a non-profit organization that supports the wider Amsterdam region in realizing its energy transition goals. Our mission is to scout, test and showcase innovative IT solutions for increasing energy efficiency and decreasing carbon emissions. We share knowledge, expertise and ambitions, for achieving these sustainability targets with our public and private Green IT Leaders. Follow us on twitter @GreenItAms; visit our website http://www.greenitamsterdam.nl/.

By Colin Dean, Managing Director, Socomec U.K. Limited

The resilience of a Data Centre - the ability to remain operational even when there has been a power outage, hardware failure or other unforeseen disruption – is fundamental when it comes to maximizing energy efficiency and uptime.

The remit of the Data Centre Manager is to balance the upward trend in energy costs, stringent legislative requirements and environmental policy, alongside the proliferation of big data – all whilst generating efficiency gains and minimizing costly downtime. Whether associated with productivity losses, revenue losses, longer term customer attrition, system recovery costs or the longer term impact of reputational damage, the total cost of downtime can be crippling – and is simply not an option in today’s hard working electrical infrastructures.

Cause and effect

When it comes to voltage quality problems and associated downtime, every situation is unique. A typical server system can experience more than 125 events each month – 88% of which are attributed to surges and transient events. Black-outs caused by accidental events, short-circuits, switching on of heavy loads and overloads – as well as weather events – can all negatively impact trading and revenues, resulting in the loss of data and hardware damage or disk crashes.

Impurities will also take their toll – the culprit of data corruption and wear to electronic parts - sometimes the cause of irreparable component failure. Attributed to a range of factors including spikes, lightning, surges, harmonics and noise, the resilience of Data Centres against both impurities and black-outs is brought into sharp relief when the total potential cost of downtime is considered.

But how achievable is the desired 99.999% availability? How can Data Centres – of all shapes and sizes – mitigate against threats to their resilience and achieve a continuous and high quality power supply?
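To put the 99.999% figure into perspective, an availability percentage can be converted into an annual downtime budget. The sketch below is simple arithmetic assuming a 365-day year; the function name is illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Maximum downtime per year that still meets the availability target."""
    return (1.0 - availability_percent / 100.0) * MINUTES_PER_YEAR


for target in (99.9, 99.99, 99.999):
    minutes = downtime_minutes_per_year(target)
    print(f"{target}% availability allows about {minutes:.1f} minutes of downtime a year")
```

Five Nines therefore leaves little more than five minutes a year for every outage, fault and repair combined.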

Can you be sure of 99.999% availability?

Configurable redundancy, no single point of failure, devices designed for superior robustness, anomaly detection, rapid repair time and maintenance based on hot-swap modules … the delivery of a reliable, safe, high quality power supply requires an optimized combination of vital factors, all of which are key to improving resilience.

Furthermore, it is increasingly important to consider the complete economic model when specifying equipment and system upgrades, treating investment as a strategic asset rather than a short-term cost burden.

Resilience through modularity

The flexibility of a modular architecture enables an organization to adapt – rapidly – to ever changing requirements.

Rightsizing through modularity in design enables the power protection capacity to be added - when it’s needed - to meet actual or existing demand, instead of total upfront deployment. This approach means that capacity wasted is minimized in the case of variance between projected future loads and actual future loads.
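A rough comparison shows why adding capacity in modules wastes less than deploying everything up front. The sketch below is illustrative only: the 50 kW module size, the loads and the N+1 redundancy rule are assumptions, not the specification of any particular product.

```python
import math


def modules_needed(load_kw: float, module_kw: float, redundant_modules: int = 1) -> int:
    """Modules required to carry the load, plus redundant spares (N+1 by default)."""
    return math.ceil(load_kw / module_kw) + redundant_modules


def load_fraction(load_kw: float, installed_kw: float) -> float:
    """Share of the installed capacity actually in use."""
    return load_kw / installed_kw


actual_load_kw = 120.0      # today's load (assumed)
projected_load_kw = 400.0   # load the site was originally sized for (assumed)

# Upfront deployment: two full-size units in a 1+1 redundant pair.
fixed_installed_kw = 2 * projected_load_kw

# Modular deployment: hypothetical 50 kW modules, N+1, sized to today's load.
module_kw = 50.0
modular_installed_kw = modules_needed(actual_load_kw, module_kw) * module_kw

print(f"Fixed 1+1:   {load_fraction(actual_load_kw, fixed_installed_kw):.0%} of installed capacity in use")
print(f"Modular N+1: {load_fraction(actual_load_kw, modular_installed_kw):.0%} of installed capacity in use")
```

Further modules can then be added as the actual load grows, so the gap between installed and used capacity stays small even if the original projection proves wrong.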

Furthermore, whilst redundancy provides an attractive MTBF, the rapid repair times associated with a modular configuration can reduce MTTR to a level that enables Six Nines to be achieved – 99.9999% availability. By working directly with a manufacturer, with intricate knowledge of a system, it is possible to identify and replace a defective module in under 30 minutes.
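The relationship between MTBF, MTTR and availability can be made concrete with the standard steady-state formula, availability = MTBF / (MTBF + MTTR). The sketch below rearranges it to show what MTBF a system would need to reach Six Nines for a given repair time; the 4 hour comparison figure is an assumption chosen only to contrast with the 30 minute module swap mentioned above.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability from mean time between failures and mean time to repair."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def required_mtbf_hours(target_availability: float, mttr_hours: float) -> float:
    """MTBF needed to hit the target availability for a given MTTR."""
    return mttr_hours * target_availability / (1.0 - target_availability)


SIX_NINES = 0.999999

# 0.5 h is the 30 minute module swap; 4 h is an assumed conventional repair time.
for mttr in (0.5, 4.0):
    mtbf = required_mtbf_hours(SIX_NINES, mttr)
    print(f"MTTR {mttr} h -> MTBF of roughly {mtbf:,.0f} h needed for Six Nines")
```

Because the required MTBF scales in direct proportion to the MTTR, cutting the repair time by a factor of eight cuts the reliability needed from the hardware by the same factor.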

Global power management expert, Socomec, is driving a stream of innovation in this field – specifically engineered to guarantee the performance of the new electrical ecosystem. One such example is the Socomec Modulys GP2 - a 3-phase modular UPS system specifically engineered with full flexibility and fewer parts in order to simplify and optimise every step of the integration process – from sizing to installation – de-risking the entire project.

The Modulys GP2 RM version is designed for existing 19” rack integration across multiple applications. Easy to integrate and install whilst simple to manage and maintain, it provides maximum availability and power protection in a compact design – leaving space for other rack-mounted devices.

A scalable concept – for larger data centre applications

The most comprehensive UPS ranges available today include products that have been developed specifically for large scale data centres.

When performance has been verified by independent, external bodies, users can be assured that the product has been tested in real and varied data centre working conditions. Designed to provide complete and scalable systems that are easy – and safe – to extend, it’s important to look for online double conversion load protection – this means that when systems are extended or maintenance is being carried out, the intervention is safe for both operators as well as the load.

Agility, accuracy, availability

Availability – and therefore resilience – can also be optimised through a proactive approach to monitoring and therefore expediting remedial action where required, reducing the MTTR.

The status of key operating parameters can be tracked in real time, delivering a greater degree of agility and accuracy – both virtual and physical anomalies can be addressed rapidly, in turn achieving maximum uptime and reduced operating expenditure.

Fully digital, multi-circuit plug and play measurement concepts, with a common display for multi-circuit systems, can provide accurate and effective metering, measurement and monitoring of electrical power quality. Infinitely scalable, the latest systems are capable of monitoring thousands of connection points from 20A to 6300A – and will offer an accuracy of class 0.5 to IEC61557-12 from 2% to 120% of the current sensor primary rating.

Equipped for the smart facilities of today – and tomorrow – these latest product developments are connecting the world of energy with the digital revolution to help reduce installation costs and improve performance levels, securing power and making energy management simple across critical applications.

Smart and connected – the future of power monitoring has been reinvented

The digital industrial revolution is creating a new breed of electrical ecosystem. The drive towards common digital architecture is maximising the potential value of the Internet of Things - the result is unsurpassed efficiency including the benefits of more automated, centralised systems.

For smart and connected energy management, it is now possible to more precisely monitor protective devices - remotely and in real time - across the entire electrical installation – without any wiring or additional equipment.

Socomec’s Diris A40 and Diris Digiware metering and monitoring solutions guarantee the availability and safety of the electrical installation, whilst also monitoring performance, checking power quality and managing loads.

With the simplest possible integration, the Diris A40 is easy to fit within new and existing installations. Assisted configuration and error detection cuts the commissioning time by half whilst also guaranteeing the accuracy of the measurements. Furthermore, the connection to the Cloud means that data can be automatically exported for remote processing.

With three additional new technologies available with both the Diris A40 and Diris Digiware systems, unsurpassed levels of accuracy can now be achieved.

Track the status of protective devices without additional wiring

When a protective device trips it means that a process or a system has been unexpectedly shut down. This can rapidly escalate into a crisis if the load is critical to life safety or economically essential.

Monitoring the status of a protective device is traditionally done using the auxiliary contact of the circuit breaker or a fuse blown indication system. These signals are then wired back to a PLC outstation adding more hardware and manufacturing time.

Status change immediately detected

Socomec’s VirtualMonitor technology – with iTR retrofit current sensors and Digiware S Monobloc current module - is able to detect that a protective device has been opened and will alert the site team over the associated meter’s communication bus. The status change is detected immediately and an alarm can be generated and shared.

The system can even differentiate between a trip due to a fault and a manual opening or tripping of the protective device, so that the site team knows if they need to investigate further.

The Digiware S brings this technology down to the final circuit (MCB level) where it was previously impossible to monitor an auxiliary contact. VirtualMonitor marks a major step forward in metering, removing additional hardware and wiring whilst retaining or enhancing visibility of the electrical installation.

Energy quality and resilience

By fitting permanent power quality monitoring systems, it is possible to check the reliability, efficiency and safety of an organisation’s electrical system. Data is collected and analysed in order to diagnose problems, identify deterioration in performance and highlight areas of risk – as well as locating the causes of electrical disturbances.

The latest network analyser equipment will ensure that the electrical system runs continuously and at optimized rates. By measuring electrical parameters and status, analyzing the quality of energy according to class A IEC 61000-4-30, and measuring differential current – whilst also providing GPS synchronization – downtime and associated production losses are minimized, efficiency is improved and running and maintenance costs are optimized.

Power hungry cooling systems

All Data Centre facilities have dynamic environments, making it difficult to manage thermal airflow. The challenge is to match the cooling delivered to a facility with the heat generated by the current IT load – all of which needs to be monitored.

Automatic transfer switches can enhance power availability and simplify the electrical architecture, ensuring standby and alternate power availability. Deployed in the most challenging applications around the world, they have been specifically engineered to be virtually maintenance-free.

By ensuring that the switching system is fully certified to BS EN 60947-6-1, and choosing a manufacturer-built solution, these fully programmable switches can be integrated into the data centre management system via serial communication. When fitted with a maintenance bypass, they can be commissioned, tested and inspected with no down-time for the mechanical loads they typically serve.

Often mistakenly overlooked in favour of circuit breakers, fuses provide a compact protection solution for low current (~32A) loads fed from a busbar or PDU with a high prospective short circuit current. As energy efficiency is improved by reducing the distance and impedance between transformer and final load, prospective short circuits can approach 100kA.

For very large switchboards, there is the added security of short circuit and heat rise testing by Tesla lab (12,000 A AC three phase and 6,000 A AC three phase and DC) – an independent laboratory specialising in testing of LV components, switchgear and switchgear assemblies.

High performance switching

One example, Socomec’s ATyS d H, is a remote three-phase transfer switch with 3 and 4 poles and integrated dual power supply – and has been engineered for low voltage high power applications that demand high performance and rapid, reliable switching.

The open transition transfer is performed on-load, in line with IEC 60947-6-1 and GB 14048-11 standards (Class PC).

Offering high short circuit current ratings of 143kA Icm (making) and 65kA for 0.1 second Icw (withstand), the performance in terms of load switching capacity is AC33iB (6xIn cos φ 0.5) without derating.

Safe on load transfer: I-0-II

The ATyS d H includes two mechanically interlocked switches to ensure fast switching whilst providing a neutral (Off - 0) position. This ensures that the main and alternative power supplies do not overlap. The 0 position can also be used for safe maintenance of the installation, providing isolation between both sources and the load – a vitally important factor in this specific application.

Automatic (ATSE) or remotely operated (RTSE) controls

The ATyS d H is an RTSE that can be easily used in conjunction with an ATS controller – C20/30 or C40, depending on the application - in order to operate as an ATSE.

Business continuity - guaranteed

By working with the original manufacturer, it is possible to access a superior understanding of changeover technology, the product itself and its software – as well as inspection and testing safety procedures and the integration of equipment within a customer’s unique working environment.

With the benefit of hindsight, preventive maintenance would be top of all of our agendas when it comes to Data Centre resilience. A comprehensive preventive maintenance programme will optimise operating efficiency. Mechanical, electrical and battery inspections are carried out along with environmental checks. Equipment cleaning and dust removal is undertaken, and electronics testing programmes and software updates are completed.

Backed by a detailed maintenance report, a regular maintenance programme makes it possible to increase both resilience and reliability.

To learn how you can benefit from an integrated approach to energy efficiency within Data Centres, contact Socomec’s team info.uk@socomec.com www.socomec.co.uk 01285 86 33 00

By Chris Cutler, Corporate Account Manager and Data Centre Efficiency Specialist at Riello UPS Ltd

It was long-standing publication The Economist that said it best: “data is to this century what oil was to the last one – a driver of growth and change”. The relentless rise of Industry 4.0 and the ‘Internet of Things’ have led to an explosion of interconnectivity. By 2025 it’s predicted that the average person will interact with connected devices around 4,800 times a day – that’s once every 18 seconds!

And just as there was a price to pay for our reliance on oil to fuel previous industrial revolutions, there’ll be consequences too for society’s dependency on data to drive future growth and technological advances. According to the Global e-Sustainability Initiative (GeSI), datacentres already consume over 3% of the world’s total electricity and generate 2% of our planet’s CO2 emissions – the equivalent of the entire global aviation industry.

All these extra sensor-fitted devices, apps, and gadgets will create enormous amounts of data that will require safe, reliable storage and processing. The National Grid is creaking from decades of under-investment, so it’s not simply a case of cranking up electrical capacity to satisfy this increased demand.

Data centres will have to do more with less, and with energy bills totalling up to 60% of total running costs, operators need to embrace energy efficiency for economic as well as environmental reasons.

We’re living in the age of hyperscale data centres. Apple is building a single $1 billion super-facility in Ireland that will eventually use 8% of the national power capacity. At that scale, any efficiency shortcomings and unnecessary waste will obviously be amplified. But whatever size or set-up, whether on-site, colocation, or cloud, no data centre can afford to ignore the need to become more efficient and reduce its power consumption.

The concept of a ‘green data centre’ is nothing new and great technological strides have already been made in recent years by many facilities to minimise their environmental footprint, thanks in no small part to initiatives such as the DCA’s own Certification Scheme, which has helped to place energy efficiency firmly on the industry’s agenda.

Significant progress has been made with cooling technologies and techniques, a necessary advance when you consider that air conditioning can account for around half of a data centre’s overall power usage. But these efficiency gains on their own aren’t enough. Fortunately, there are other essential parts of a data centre’s infrastructure that can produce similar energy savings.

Modular UPS – Power Protection Using Less Energy

At the heart of any data centre lies its uninterruptible power supply (UPS) system: the first line of defence against power outages or disruption, and the ultimate insurance policy to minimise damaging downtime on those inevitable occasions when disaster strikes.

Up until recently, a data centre’s UPS units were very much part of its energy consumption problem. Typically large standalone towers, these critical power protection systems relied on older technology that could only achieve optimum efficiency when carrying heavy loads of 80-90%. There was a tendency to oversize such fixed-capacity units during initial installation to provide the required redundancy, so systems regularly ran at lower loads than was ideal – an inefficient process wasting significant amounts of energy.

These sizeable towers also pumped out plenty of heat from their fans and electrical components, so needed significant levels of energy-intensive air conditioning to keep them cool enough to operate safely.

However, technology has moved on rapidly in recent years to the point where a UPS can now actually be part of a data centre’s energy efficiency solution. Modular UPS systems – which replace the older standalone units with compact individual rack-mount style power modules paralleled together to provide capacity and redundancy – deliver performance efficiency, scalability, and ‘smart’ interconnectivity far beyond the capabilities of their predecessors.

The modular approach ensures capacity corresponds closely to the data centre’s load requirements, removing the risk of oversizing and lowering initial installation costs, while reducing day-to-day power consumption. This leads to the double benefit of cutting both energy bills and the site’s carbon footprint. It also gives facilities managers the flexibility to add extra power modules as and when the need arises, offering the in-built scalability to ‘pay as you grow’.
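To make the sizing argument concrete, the short Python sketch below compares how heavily loaded an oversized fixed-capacity installation is against a modular frame populated to match the load. Every figure in it (a 120 kW load, 200 kW towers, 25 kW modules, N+1 redundancy) is a hypothetical illustration rather than a manufacturer specification.

```python
import math


def load_fraction(load_kw, unit_kw, units):
    """Share of the installed UPS capacity that is actually carrying load."""
    return load_kw / (unit_kw * units)


def modules_needed(load_kw, module_kw, redundant=1):
    """Modules required to carry the load, plus spare modules for redundancy."""
    return math.ceil(load_kw / module_kw) + redundant


load_kw = 120  # hypothetical critical load

# Fixed-capacity approach: two 200 kW towers, one carrying the load and one spare (N+1).
standalone = load_fraction(load_kw, unit_kw=200, units=2)

# Modular approach: 25 kW modules, enough to carry the load plus one spare (N+1).
module_count = modules_needed(load_kw, module_kw=25)
modular = load_fraction(load_kw, unit_kw=25, units=module_count)

print(f"Standalone towers run at {standalone:.0%} of installed capacity")
print(f"{module_count} modules run at {modular:.0%} of installed capacity")
```

With these assumed numbers the modular frame operates much closer to the 80-90% load range at which older designs reached their best efficiency, and a further module can be added later if the load grows.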

Transformerless modular UPS units generate far less heat than static, transformer-based versions and need significantly less air conditioning too. They are also smaller and lighter, so have a substantially reduced footprint, and are easier to maintain because each individual module is ‘hot swappable’ so can be replaced as and when required without the whole system having to go offline.

Another benefit of the move to modular is that the units easily integrate with Energy Management Systems (EMS) or Data Centre Infrastructure Management (DCIM) software, transforming them into networks of ‘smart’ UPSs that constantly collect, process, and exchange performance data such as operating temperatures, UPS output, and mains power voltage.

This information is used in real time to help constantly optimise the system’s performance, as well as highlighting other areas where additional efficiency savings can be made. In hyperscale data centres where UPSs are spread across several sites in different cities or even countries, this interconnectivity combined with the ability to remotely monitor performance helps to optimise load management and minimise the amount of energy used.

Harnessing Battery Power As Renewable Energy

One final area to highlight is the potential a UPS unit, or more specifically its batteries, has as a generator of renewable energy.

Many modern UPSs have the option to use Lithium-Ion (Li-Ion) batteries rather than the typical sealed lead acid (SLA) type. Li-Ion batteries deliver far greater power density, taking up approximately half the space of SLA. This enables them to store a surplus of electricity generated during off-peak periods when prices are lower. This saved power can be used during expensive peak times, held in reserve to ward off downtime in the event of an outage, or even sold back to the National Grid.
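The economics of charging off-peak and discharging at peak can be sketched in a few lines of Python. The battery size, tariffs, round-trip efficiency and the share of capacity held back for outage protection are all hypothetical figures chosen purely for illustration; real values will vary by site and supplier.

```python
def daily_arbitrage_saving(capacity_kwh, reserve_fraction,
                           offpeak_price, peak_price, round_trip_efficiency):
    """Net saving from one charge/discharge cycle, keeping a reserve for outages."""
    # Energy that can be delivered at peak without touching the backup reserve.
    usable_kwh = capacity_kwh * (1 - reserve_fraction)
    # More energy must be bought than is delivered, because of conversion losses.
    charge_cost = usable_kwh / round_trip_efficiency * offpeak_price
    # Cost of the peak-rate electricity that no longer has to be bought.
    avoided_cost = usable_kwh * peak_price
    return avoided_cost - charge_cost


# Hypothetical example: 200 kWh of batteries, half held back for power protection,
# 8p/kWh off-peak, 20p/kWh peak, 90% round-trip efficiency.
saving = daily_arbitrage_saving(
    capacity_kwh=200,
    reserve_fraction=0.5,
    offpeak_price=0.08,
    peak_price=0.20,
    round_trip_efficiency=0.9,
)
print(f"Estimated saving per cycle: £{saving:.2f}")
```

The reserve fraction is the key design choice: it reflects the point that the batteries’ first job remains power protection, so only the surplus is available for peak shaving or export.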

There’s already 4 GW of power stored in UPS units across the UK, and as the nation edges towards a demand side response (DSR) model, tapping into the potential of Li-Ion batteries as a renewable energy source could pay economic and environmental dividends. It will, however, need something of a shift in mindset for mission-critical organisations to think of their power protection system – which is their ultimate insurance policy – as a means to generate green energy.

Every UPS has a lifespan, with industry best-practice suggesting a system should be replaced every 7-10 years. Many of the units installed during the boom years of data centre growth earlier in the decade will soon be ready for replacement. If that’s the scenario facing your facility in the near future, you’ll be in prime position to reap the energy efficiency and cost benefits that the move to modular UPS can bring.

With more than a decade’s experience in the critical power protection industry and a proven track record in the datacentre sector, Chris Cutler is Corporate Account Manager for Riello UPS. He has particular expertise regarding large-scale three-phase UPS installations and datacentre UPS efficiency.

Riello UPS is a leader in the design, manufacture, installation, and maintenance of award-winning UPS and standby power systems from 400VA to 6MVA that promote uptime and minimise system downtime in datacentres and industries such as manufacturing, healthcare, transportation, education, and the emergency services.

For further information call 0800 269 394, email sales@riello-ups.co.uk or visit www.riello-ups.co.uk

By Andrew Warren, Chairman of the British Energy Efficiency Federation

The National Infrastructure Commission is due to reveal details of a nationwide energy efficiency programme. The time is right to reverse the trend in government investment.

This month the National Infrastructure Commission (NIC) is due to publish details, revealing just what an ambitious nationwide programme to stimulate energy efficiency looks like.

After several years of seriously declining activity on installing energy saving measures, with employment falling alongside it, such a potential step change is long overdue.

For too long, there has been a failure to consider energy efficiency as anything other than a set of individual, unrelated programmes. There is an Energy Company Obligation here. An Energy Savings Opportunity Scheme there. And a landlords’ minimum energy standards regulation, a Climate Change Agreement, a Home Energy Conservation Act, a Green Deal, an emissions trading scheme.

Civil servants administering one programme frequently have little or no knowledge of the workings of, let alone the possible synergies with, programmes other than their own, particularly if they are run from different departments. Relevant initiatives taken by one or more of the devolved administrations are too often met with a blank stare in Whitehall.

Across the years, there have been several attempts to draw the different parts together, to make energy efficiency policy more than the sum of its (sometimes contradictory) parts. The most recent attempt to introduce coherence came under the Coalition government, with the formation of the Energy Efficiency Deployment Office. But this was scrapped as the 2015 general election was called. And the different programme administrators seem to have gone off along their own pathways.

This has coincided with the creation of the National Infrastructure Commission, charged with ensuring purposeful delivery of investment programmes. But initially, as the Conservative MP for Eddisbury, Antoinette Sandbach, so pointedly observed: “When MPs talk about infrastructure spending, one is put in mind of boys with their toys: big trains, roads, railways and power stations.”

Her “boys’ toys” dig about conventional infrastructure thinking was made last month, as she launched the first Parliamentary debate on energy efficiency for over six years. It became the leitmotif of the entire occasion.

The reasons given for urging the government to designate energy efficiency measures as infrastructure spending were compelling.

Energy efficiency spending is a one-off cost, so it is closer to capital than revenue expenditure. By reducing energy consumption, those investments free up energy sector capacity. That reduces (or at least delays) the need for new capacity to come online. That same new capacity, in the form of generation plants, networks and energy storage, would be considered infrastructure spending by the Government, and potentially would involve a large amount of Government expenditure.

So the question Ms Sandbach, described by fellow Conservative MP James Heappey as Parliament’s leading champion for energy efficiency, poses is stark: “Why invest in the big plant if we can roll out energy efficiency measures across the country, as part of an infrastructure project? Energy efficiency measures provide a public service: they insulate consumers, literally, against the volatility of energy markets.

“Likewise, they provide health and well-being benefits, by enabling consumers to heat buildings more effectively. And they have the knock-on consequences of reducing our carbon emissions and contributing towards our overall aim of clean, green growth.”

Last October the National Infrastructure Commission published a report setting out its carbon priorities in particular. That report states categorically: “the UK has old and leaky buildings - both residential and commercial. This increases the amount of energy needed to regulate their temperature…it is therefore essential that demand for energy from these buildings is reduced.”

The NIC acknowledges that progress on improving energy efficiency in the UK has slowed. In particular, in the housing stock, “annual rates of cavity wall and loft insulation in 2013-15 were respectively 60 per cent lower and 90 per cent lower than annual rates in 2008-11.”

Their conclusions are very pointed: “There are no plans on the part of the Government to reverse this trend.”

Acknowledging all this, in the same parliamentary debate, the energy minister Claire Perry did caution: “There is no packet of money under the Chancellor’s desk marked infrastructure.”

Back in 1982 the Commons energy select committee posed the basic conundrum: why do governments refuse to compare the cost-effectiveness of investments in energy conservation measures with the alternative of providing still further energy supplies?

There has never been a satisfactory official answer. But with overall UK energy consumption already down 16 per cent this past decade, the answer from the market place has become very obvious.

No question. The debate is now over, signalled by the preparedness of the NIC to alter the definition of which toys the boys concerned with energy policy should be playing with in future.

This surely must galvanise the government to recognise those recent declines in energy efficiency investment. And to produce policies that will seriously “reverse this trend.”

IT teams charged with migration projects shouldn’t be afraid to wring as much support and advice out of cloud service providers as possible so that they can achieve a pain-free migration and start reaping the benefits of the cloud.

By Monica Brink, EMEA Marketing Director, iland.

Cloud adoption by UK companies has now neared 90%, according to the Cloud Industry Forum, and it won’t be long before all organisations are benefiting to some degree from the flexibility, efficiency and cost-savings of the cloud. Moving past the first wave of adoption, we’re seeing businesses ramp up the complexity of the workloads and applications that they’re migrating to the cloud. Perhaps this is the reason that 90% is also the proportion of companies that have reported difficulties with their cloud migration projects. This is frustrating for IT teams when they’re deploying cloud solutions that are supposed to be reducing their burden and making life simpler.

With over a decade of helping customers adopt cloud services, our iland deployment teams know that performing a pain-free migration to the cloud is achievable but that preparation is crucial to project success. Progressing through the following key stages offers a better chance of running a smooth migration with minimum disruption.

1. Set your goals at the outset

Every organisation has different priorities when it comes to the cloud, and there’s no “one cloud fits all” solution. Selecting the best options for your organisation means first understanding what you want to move, how you’ll get it to the cloud and how you’ll manage it once it’s there. You also need to identify how migrating core data systems to the cloud will impact on your security and compliance programmes. Having a clear handle on these goals at the outset will enable you to properly scope your project.

2. Before you begin – assess your on-premises environment

Preparing for cloud migration is a valuable opportunity to take stock of your on-premises data and applications and rank them in terms of business-criticality. This helps inform both the structure you’ll want in your cloud environment and also the order in which to migrate applications.

Ask the hard questions: does this application really need to move to the cloud or can it be decommissioned? In a cloud environment where you pay for the resources you use, it doesn’t make economic sense to migrate legacy applications that no longer serve their purpose.
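As a rough illustration of this stock-take, the Python sketch below ranks a small inventory by business-criticality and separates out candidates for decommissioning. The application names, the 1-5 criticality scale and the choice to migrate the least critical workloads first are all assumptions made for the example, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class App:
    name: str
    criticality: int    # hypothetical scale: 1 = low, 5 = business-critical
    still_needed: bool  # False means the application no longer serves its purpose


# Hypothetical on-premises inventory.
inventory = [
    App("payroll", 5, True),
    App("intranet-wiki", 2, True),
    App("legacy-fax-gateway", 1, False),
    App("crm", 4, True),
]

# Applications that should be retired rather than paid for in the cloud.
decommission = [app.name for app in inventory if not app.still_needed]

# Assumed ordering: move lower-risk workloads first, business-critical ones last.
migration_order = [
    app.name
    for app in sorted(
        (app for app in inventory if app.still_needed),
        key=lambda app: app.criticality,
    )
]

print("Decommission:", decommission)
print("Migration order:", migration_order)
```

Even a simple list like this gives the project a defensible starting order and makes it obvious which applications are not worth moving at all.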