Risk assessment is the obvious theme that dominates at the present time. The imminent arrival of the GDPR legislation has required all organisations to undertake a strategic review of the way in which they obtain, process, store and retrieve their data – although the GDPR suggests that the data actually belongs to the customers! I have seen so many weird and wonderful emails and other communications from companies clearly keen to be seen to be doing something about what we shall call the business of ‘data logistics’, but it’s not immediately obvious that many of the senders of these missives understand what the GDPR does or doesn’t require. In the case of GDPR, the risk assessment process appears to have been carried out rather too diligently! Still, it will be fun to watch what happens after 25 May. No doubt Fleet Street will ‘engineer’ shocking examples of how some unfortunate blue chip corporation or other plays fast and loose with its customers’ data and there may even be some court cases to add to the general entertainment. Whether we as private citizens will notice any major changes – especially when it comes to the companies that ignore the requirements of the existing Data Protection Act – remains to be seen.

And then we have the unfortunate IT meltdown at TSB. Pity the banking sector, struggling to cope with the shame of bringing the world to its knees in 2008 (!), and now, increasingly frequently, beset with IT-related problems. Data breaches are almost impossible to prevent, but IT crashes are, almost invariably, totally avoidable. It’s all about risk assessment: making sure that every angle of a planned IT refresh/migration is looked at in detail and that the knock-on impact of every single stage of the process is fully understood and allowed for. Disappointingly, the explanation for the kind of IT disaster that hits the headlines is never much more complicated than a variation of: “We didn’t realise that there would be a minor compatibility issue between our 30-year-old application and the new servers on which it now sits”. Really? What’s most depressing here is the fact that while almost every one of our personal possessions is refreshed on something rather shorter than a 30-year cycle (clothes, gadgets, cars, white goods, we even tend to ‘refresh’ our home within this time frame), it’s apparently accepted business practice to rely on a very old application because ‘it’s too difficult and expensive’ to re-write. And I guess freezing out customers for a few days is ‘simple and cheap’?!

Hey-ho. No one pretends that the IT world is easy to control, but it would be great to think that the many household names who, quite rightly, pride themselves on their innovation and their desire to embrace digital transformation, don’t let themselves down by lazy logic and risk assessment. There’s not much point if you have the world’s greatest digital infrastructure, but allow a single point of failure to render it all but useless.

The Data Economy Report by Digital Realty reveals that the UK’s data is worth £73.3 billion to the UK economy, powered by the country’s data centre industry.

In the most comprehensive, first-of-its-kind look at the contribution that data provides to the UK economy, an independent report commissioned by Digital Realty reveals that the UK’s data economy is currently worth £73.3 billion annually. This figure reflects how data is making businesses’ existing services simpler, faster and more reliable, as well as enabling them to open up new business possibilities, such as new operating models, new revenue streams and new markets to enter.

The Data Economy Report also highlights the continued contribution data brings, with growth (7.3%) outstripping the wider economy (1.8%). This growth is powered by the UK’s data centre industry – the industry creates between £291 million and £320 million in value every year from each data centre, with the range even higher for new data centres: £397-£436 million in extra annual value from each new data centre.

Data centres create this value by providing and managing the infrastructure, connectivity and services that underpin success across the full range of economic activity. This includes not only IT and financial services, such as powering high-speed trading platforms and cloud storage services, but also other sectors such as agriculture, where data allows more precise use of pesticides, better adaptation to weather trends and automation such as drones to survey crops.

Investment in the data centre foundations which enable all this is essential for the future prosperity of British businesses and the economy.

“The UK’s goal is to lead the world in creating innovative businesses, and continued growth of its data economy is vital in achieving that goal,” said Jeremy Silver, CEO, Digital Catapult. “The Data Economy Report provides a clear road map for businesses, suggesting that by investing in the right foundations, technology innovations and partners, they will grab a significant dividend.”

The £6.2 billion added value that data centres create demonstrates the rewards to be won by businesses investing in their data infrastructure. In fact, business investment increases of 5%-11% in data infrastructure and new technologies like IoT sensors and big data analysis software could mean the difference between the UK data economy growing at its current rate and hitting £94.6 billion by 2025 and a best case scenario in which it grows to £101.6 billion by 2025, creating a £7 billion per year data dividend.
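The "data dividend" quoted above is simply the gap between the two 2025 scenarios. As a minimal sketch (the constant and function names are illustrative, not taken from the report), the arithmetic can be checked like this:

```python
# Figures quoted in the report, in GBP billions, both projected for 2025.
BASELINE_2025 = 94.6    # data economy if it keeps growing at its current rate
BEST_CASE_2025 = 101.6  # data economy with the 5%-11% increase in investment


def data_dividend(best_case: float, baseline: float) -> float:
    """Return the gap between two scenarios, rounded to one decimal place."""
    return round(best_case - baseline, 1)


dividend = data_dividend(BEST_CASE_2025, BASELINE_2025)
print(f"Data dividend by 2025: £{dividend} billion per year")
```

Rounding to one decimal place matches the precision of the published figures and avoids floating-point noise in the subtraction.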

“Data infrastructure and services underpin the UK’s digital economy but its value is often underestimated. With The Data Economy Report, its worth to businesses and the wider economy is apparent,” said Rob Coupland, Managing Director EMEA, Digital Realty. “We urge British businesses to invest in the right tools, infrastructure and partners to get more value out of data and take a piece of a £101.6 billion national opportunity.”

Survey data shows transformed companies are 22x more likely to get new products and services to market ahead of the competition.

Dell EMC has published the results of new research conducted by Enterprise Strategy Group (ESG) into the benefits of IT Transformation which validates that IT Transformation can result in bottom-line benefits that drive business differentiation, innovation and growth.

Today’s business landscape is rife with disruption, much of it driven by organisations using technology in new or innovative ways. In order to survive and thrive in today’s digital world, businesses are implementing new technologies, processes and skillsets to best address changing customer needs. A fundamental first step to this change is transforming IT, to help organisations bring products to market faster, remain competitive and drive innovation. According to ESG’s 2018 IT Transformation Maturity Study commissioned by Dell EMC and Intel:

- 81 percent of survey respondents agree if they do not embrace IT Transformation, their organisation will no longer be competitive in their markets, up from 71 percent in 2017.
- 88 percent of respondents say their organisation is under pressure to deliver new products and services at an increasing rate.
- Transformed organisations are 22x as likely to be ahead of the competition when bringing new products and services to market.
- Transformed organisations are 2.5x more likely to believe they are in a strong position to compete and succeed in their markets over the next few years.
- Transformed companies are 18x more likely to make better and faster data-driven decisions than their competition and are 2x as likely to exceed their revenue goals.

“Data is the new competitive edge – yet it’s become highly distributed across the edge, the core data center and cloud. Organisations realise they have to move quickly to turn that data into business intelligence – requiring an end-to-end IT infrastructure that can manage, analyse, store and protect data everywhere it lives,” said Jeff Clarke, Vice Chairman, Products and Operations, Dell Technologies. “We’re in the business of better business outcomes, giving our customers the ability to make that end-to-end strategy a reality, driving disruptive innovation without the fear of being disrupted themselves.”

The ESG 2018 IT Transformation Maturity Study

The ESG 2018 IT Transformation Maturity Study follows the seminal study commissioned by Dell EMC, the ESG 2017 IT Transformation Maturity Study, and was designed to provide insight into the state of IT Transformation, the business benefits fully transformed companies experience, and the role critical technologies have in an IT Transformation. ESG employed a research-based, data-driven maturity model to identify different stages of IT Transformation progress and determine the degree to which global organisations have achieved those different stages, based on their responses to questions about their organisations’ adoption of modernised data center technologies, automated IT processes and transformed organisational dynamics.

“Companies today need to be agile to stay competitive and drive growth, and IT Transformation can be a major enabler of that,” said John McKnight, Vice President of Research, Enterprise Strategy Group. “It’s clear that IT Transformation is increasingly resonating with companies and that senior executives recognise how IT Transformation is pivotal to overall business strategy and competitiveness. While achieving transformation can be a major endeavour, our research shows ‘Transformed’ companies experience real business results, including being more likely to be ahead of the competition in bringing new products and services to market, making better, faster data-driven decisions than their competition, and exceeding their revenue goals.”

This year’s 4,000 participating organisations were segmented into the same four IT Transformation maturity stages used in the 2017 study:

- Stage 1 – Legacy (6 percent): Falls short on many – if not all – of the dimensions of IT Transformation in the ESG study.
- Stage 2 – Emerging (45 percent): Showing progress in IT Transformation but having minimal deployment of modern data center technologies.
- Stage 3 – Evolving (43 percent): Showing commitment to IT Transformation and having a moderate deployment of modern data center technologies and IT delivery methods.
- Stage 4 – Transformed (6 percent): Furthest along in IT Transformation initiatives.
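To give a feel for what these shares mean in absolute terms, the sketch below converts the stage percentages into approximate organisation counts. It assumes the percentages apply to the full base of 4,000 organisations; the published shares are rounded, so the counts are estimates only, and the variable names are illustrative.

```python
# Stage shares as quoted in the study summary (percent of participants).
TOTAL_ORGANISATIONS = 4000
STAGE_SHARES = {"Legacy": 6, "Emerging": 45, "Evolving": 43, "Transformed": 6}


def approximate_counts(total: int, shares: dict[str, int]) -> dict[str, int]:
    """Convert percentage shares into approximate whole-number counts."""
    return {stage: round(total * pct / 100) for stage, pct in shares.items()}


counts = approximate_counts(TOTAL_ORGANISATIONS, STAGE_SHARES)
for stage, count in counts.items():
    print(f"{stage}: about {count} organisations")
```

On these assumptions, only around 240 of the 4,000 organisations fall into the Transformed stage, which is worth keeping in mind when reading the multiples quoted for that group.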

This year’s findings show organisations are progressing in IT maturity and generally believe transformation is a strategic imperative.

- 96 percent of respondents said they have Digital Transformation initiatives underway – either at the planning stage, at the beginning of implementation, in process, or mature.
- Respondents whose organisations have achieved Transformed status are 16x more likely to have mature Digital Transformation projects underway versus Legacy companies (66 percent compared with 4 percent).
- Transformed organisations were more than 2x as likely to have exceeded their revenue targets in the past year compared with Legacy organisations (94 percent compared to 44 percent).
- 84 percent of respondents with mature Digital Transformation initiatives underway said they were in a strong or very strong position to compete and succeed.
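The "16x" and "more than 2x" multiples above follow directly from the percentages given in brackets. A minimal sketch of that calculation, using a hypothetical helper name, is:

```python
def likelihood_ratio(group_pct: float, reference_pct: float) -> float:
    """Return how many times more likely the group is than the reference."""
    if reference_pct <= 0:
        raise ValueError("reference percentage must be positive")
    return group_pct / reference_pct


# Transformed vs Legacy: mature Digital Transformation projects underway.
mature_projects = likelihood_ratio(66, 4)
# Transformed vs Legacy: exceeded revenue targets in the past year.
beat_revenue = likelihood_ratio(94, 44)

print(f"Mature projects: {mature_projects:.1f}x")
print(f"Exceeded revenue targets: {beat_revenue:.2f}x")
```

The results are consistent with the quoted "16x" and "more than 2x", bearing in mind that the underlying percentages are themselves rounded.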

IT Transformation maturity can accelerate innovation, drive growth, increase IT efficiency and reduce cost. More specifically:

Transformed organisations are able to reallocate 17 percent more of their IT budget toward innovation. They complete 3x more IT projects ahead of schedule and are 10x more likely to deploy the majority of their applications ahead of schedule. Transformed organisations also report they complete 14 percent more IT projects under budget and spend 31 percent less on business-critical applications.

Making IT Transformation and Digital Transformation Real

Organisations like Texas-based Rio Grande Pacific understand IT Transformation benefits first-hand. The company has branched from a railroad holding company – moving and physically handling railcars – into a provider of technology services for other short line railroads and commuter operations. Rio Grande Pacific pursued IT Transformation to support its aggressive growth. By modernising its data center, the company has increased speed of services tenfold, experienced a 93 percent reduction in data center electricity use, significantly improved rack performance and provisioning time, and created a new business – the “RIOT” domain or Railway Internet of Things.

“As part of a 150-year-old industry, we recognise that the future of rail is tied to technology,” said Jason Brown, CIO, Rio Grande Pacific. “Railroads are in need of real-time information in order to make rapid decisions. Combining several systems into one single dashboard through our RIOT domain provides a holistic view to customers and helps keep the trains running on time. These new services, using the most modern technology, set Rio Grande Pacific apart from the competition and have led to strong growth.”

Bank Leumi, Israel's oldest and leading banking corporation, is also experiencing the benefits of IT Transformation, bringing to life its mobile-only bank, Pepper. The organisation set out to create a platform that provides customers with a better experience, engages them quicker and reaches a new generation of clients. In order to do this, the company needed a faster, more flexible infrastructure and began leveraging a hybrid cloud model and software-defined data center. This has allowed them to move code from development to production within hours, compared to weeks, establish new environments faster and do this at less cost. This has helped them to bring a new, innovative product to market.

“We are in the midst of an era of digital disruption, where customer demands and expectations are changing rapidly,” said Ilan Buganim, Chief Technology and Chief Data Officer, Bank Leumi. “We as a bank need to adapt ourselves and continue providing a superior customer experience. We saw the opportunity to do this with our new mobile-only bank, ‘Pepper.’ Moving to a hybrid cloud model and a software-defined data center environment provided the infrastructure needed for real-time banking, with the ability to run fast and to shortcut the time to deliver new functionalities - thus making this new customer experience possible.”

Worldwide information and communications technology (ICT) spending, including new technologies, is expected to exceed $5.6 trillion in 2021 with growth accelerating through the end of the forecast period as new categories account for a growing proportion of overall investments, according to the latest version of the Worldwide Black Book: 3rd Platform Edition from International Data Corporation (IDC).

By 2021, new 3rd Platform technologies, including Internet of Things (IoT) solutions, robots and drones, augmented reality and virtual reality (AR/VR) headsets, and 3D printers, will account for almost a quarter (23%) of total ICT spending. Overall, 3rd Platform investments, including the Four Pillars of cloud, mobile, big data & analytics, and social, will make up more than 70% of worldwide ICT spending.

The fastest-growing technology markets last year were AR/VR, cognitive and artificial intelligence (AI), 3D printing, and robotics. Meanwhile, IoT has already grown to account for 15% of ICT spending, including new operational technology (OT) software and services, which represent expanding opportunity and potential disruption for traditional software and services vendors. But while the adoption of 3rd Platform technologies is broadly positive across all countries, there are key geographic differences in terms of early adoption and short-term opportunities.

"Mature economies are leading the way in some 3rd Platform markets, thanks to more advanced cloud infrastructure and software innovation driving rapid adoption of solutions around big data and analytics, cognitive AI, and cloud-based software," said Stephen Minton, vice president, Customer Insights & Analysis at IDC. "It's a different story with technologies that are focused on industrial use cases in the manufacturing industry, such as IoT and robotics. Emerging leaders like China are driving much of the innovation in real-world deployments around these industry-focused technologies."

China accounted for 28% of worldwide IoT spending in 2017, and 29% of total robotics investments, compared to just 12% of traditional ICT spending categories (hardware, software, services and telecom). Japan and some other Asia/Pacific countries are also early adopters of robotics and IoT. 3D printing has seen strong early adoption in China and Germany. Cognitive AI investments are dominated by U.S. businesses, who are also leading the way in AR/VR prototypes. Emerging markets, such as India and Brazil, are major contributors to overall mobility spending, but are still playing catch up when it comes to cloud.

"While the traditional ICT market has become more homogenous in the last few years, as emerging markets caught up to mature economies and often leapfrogged legacy technologies in their adoption of mobile solutions, the 3rd Platform brings with it a new period of fragmentation," added Minton. "The U.S. is once again at the forefront of much new software innovation, while countries like China and Germany are driving industry-focused categories. Understanding these regional and country-level differences, including the drivers and inhibitors behind likely adoption curves for new technologies, will be key to ICT vendor strategy in the next 5-10 years."

The latest version of IDC's Worldwide Black Book: 3rd Platform Edition includes forecasts for 33 countries segmented by 44 technologies and 11 platforms. IDC defines the 3rd Platform as a leading driver and force of innovation consisting of the Four Pillars of cloud, mobility, big data & analytics, and social, plus the Innovation Accelerators of IoT, robotics, cognitive AI, AR/VR, 3D printing, and next-generation security.

Research indicates UK IT departments spend just 12 per cent of their time on innovation.

New research commissioned by managed services provider Claranet has revealed that a failure to automate IT processes and a heavy reliance on manual intervention are hindering the ability of organisations to embrace innovation. Despite a prediction by Gartner that 75 per cent of enterprises will have more than six diverse automation technologies within their IT management portfolios by 2019, almost half of organisations still have some way to go to put this into practice.

The research, conducted by Vanson Bourne and surveying 750 IT and Digital decision-makers from a range of organisations across Europe, is summarised in Claranet’s new Beyond Digital Transformation research report. The findings reveal that infrastructure configuration at nearly half (48 per cent) of UK businesses remains mostly or heavily manual. At the other end of the scale, only 11 per cent said that their infrastructure is highly automated. This is having a direct impact on the amount of time IT teams spend on maintenance and administration tasks.

According to the survey responses, IT teams spend over half (53 per cent) of their time on operational projects, general maintenance, responding to user problems, and unplanned work, with just 12 per cent of their time focused on new approaches that can lead to real business improvement and innovation.

For Michel Robert, Managing Director at Claranet UK, these responses underline the scale of the work that needs to be done to improve the efficiency of the IT department, as well as the overall impact it has on the wider business:

“Automation is a critical enabling technology that can give organisations the agility, speed, scalability, resilience and compliance they need to compete and succeed in the age of digital business.

“Unfortunately, it appears that many UK companies are struggling to adopt automation, from both an infrastructure and application perspective. This not only makes the day-to-day activity of the IT department less efficient, but also has a negative impact on the wider business, as new initiatives that are underpinned by technology cannot be leveraged to their full potential. At the same time, this lack of automation opens up the organisation to the threat of human error, and the financial and administrative impact this can have.”

In order to effectively address this low level of automation, Michel believes that businesses need to focus on making a series of organisation-wide changes to help reduce manual processes and facilitate greater efficiency. This should include taking steps to free up time for IT teams, and implementing processes to help join up various departments and responsibilities more effectively. To be a success, all of this needs steadfast support from leaders across the business.

“Crucial to making automation more commonplace is a commitment by leadership to making it a reality. This means that the C-suite – whether directly involved with IT or otherwise – need to be fully aware of its benefits and work together to create and implement plans to increase the pace of automation. This includes working towards freeing IT teams of the burden of the more basic maintenance and administration tasks, and then introducing comprehensive, well-planned processes that join up everything that goes on in the IT department. Partnering with an external service provider can be an effective way of bringing these changes to fruition.”

He concluded: “By taking an agile approach to increasing automation which includes organisation-wide support and openness to working with third-party providers, organisations can gain the means to accelerate the move to automation across both infrastructure and applications, without diverting time and resource from the IT department. If this approach is taken, addressing the automation challenge need not be a daunting task and can be addressed in a practical manner, and IT teams can begin to think more deeply about how they can drive the needs of the wider business.”

Jaguar Land Rover moves its data analysis files from a local data centre to Google Cloud Storage without losing compatibility with DataFinder by using the SME Native Drive.

Jaguar Land Rover has deployed Storage Made Easy’s Cloud Drive in the Google Cloud, allowing critical file system-oriented applications to access data stored securely on Google Object Storage.

Jaguar Land Rover (JLR) uses National Instruments’ DataFinder to index and analyse over a terabyte of time-series data created each day. The data is generated from test drive sensors and measurements, and ad hoc measurements from 400 dataloggers in the powertrain calibration and controls department. It is then saved directly on Google Cloud Storage. The challenge has been how to take advantage of the cloud for increased durability and agility when the application can only index and manage data from file-based storage systems.

The Storage Made Easy Google Storage Drive allows applications, and end users, to connect to Google Cloud Storage as if it was a local read-write hierarchical file system. The drive allows Jaguar Land Rover to move their data management and analytics platform to Google Cloud with minimal migration costs.

The Cloud Drive is optimised for large data sets and deep folder hierarchies and does not obfuscate object metadata, allowing concurrent access by apps directly to Google Cloud Storage.

Maxime Lecuona, Power Train Calibration and Controls Data Processing team leader at Jaguar Land Rover, said: “Moving our infrastructure to Google cloud is a critical project for us. We are planning on leveraging this new platform to gain in flexibility and ultimately provide our services to a wider portion of the company. Storage Made Easy Google storage drive is allowing us to transfer our current infrastructure to this new environment with minimal change to the existing code, and at a very reasonable price.”

Jim Liddle, Storage Made Easy CEO, said: “Cloud technology is undoubtedly a great way for companies to free up IT to concentrate on innovation, but there is still a need to bridge the gap between remote cloud storage and critical business applications, and this is what the Storage Made Easy solutions provide.”

Improves scalability and stability, and increases regulatory-compliant access to data.

Spirit Healthcare, a supplier of innovative products and services that aims to make the nation healthier and happier by delivering real value in healthcare, has welcomed Netmetix as its expert cloud provider to migrate the business to the cloud.

The main drivers to migrate the business to the cloud included increased stability over traditional on-site servers and mitigation of data loss risk. Another driver was scalability. The company had been expanding at a rapid rate over the last three years and required a solution that could grow alongside the business. Introducing more equipment on site was not a practical option, so gaining scalability without the added physical apparatus was key. The final driver for migration was impending regulatory compliance; employees required ease of access to information, including Intranet-based files, in one hosted central location, whether working in the head office or remotely. Due to the sensitive nature of some of the information, the data needed to be hosted in a secure setting and regulated for the GDPR.

In order to address the issues raised, Spirit evaluated several options and moving to a cloud environment made the most sense, where firewalls, enterprise-grade security and file accessibility were key. Netmetix was able to offer exactly what they required.

After speaking with various other cloud providers, it was a recommendation from other companies that have worked with Netmetix that sealed the deal. The level of service provided to its other customers was a big deciding factor for the Spirit team.

Kirk Harland, Head of IT at Spirit Healthcare, stated, “We have clear ambitions for the business over the next 12 months and having a cloud hosted system is key to making those ambitions a reality. From supporting process improvements that enable our trusted employees to adhere to new data regulations such as GDPR, to solving the issue of multiple data sets across various databases and platforms, with Netmetix’s cloud implementation underway, it won’t be long until all of our employees working remotely are able to securely access any file required for their day-to-day work activity, rather than needing to be onsite to gain this access.”

Managing Director at Netmetix, Paul Blore adds, “We’re really excited to be working with Spirit Healthcare and we’re thrilled they approached us after receiving recommendations from some of our other clients. We will work with Spirit to deliver a future-proof, scalable infrastructure that can support their business goals and ambitions.”

Ensono chosen to help align the organisation’s business and IT strategies.

City & Guilds Group, the leading provider of global skills development, has selected Ensono to lead the migration of its critical on-premise applications to a managed Microsoft Azure environment. The migration closely follows Microsoft’s announcement in November 2017 detailing how Microsoft Azure will allow customers to run SAP S/4HANA in a secure, managed cloud. This mission critical replatforming exercise will enable City & Guilds Group’s IT strategy to facilitate the broader business plan of diversification through acquisition. The project future-proofs the skills organisation, ensuring it can integrate additional acquisitions and services for enterprise level qualifications, e-learning and executive coaching.

City & Guilds Group has appointed Ensono to migrate applications including SAP, Sitecore, E-volve, Smartscreen and Biztalk, increasing business agility and driving cost savings of well over £1 million a year – helping offset the loss of public sector funding. Ensono’s strategic purchase of Inframon, which has over a decade of Microsoft cloud migration and management expertise and is an award-winning Microsoft partner, was crucial in City & Guilds Group’s decision.

The SAP on Azure migration will help City & Guilds Group deliver a better service to its customers and provide greater flexibility, enabling the organisation to scale up and down as demand requires.

Alan Crawford, CIO, City & Guilds Group, said: “This is City & Guilds Group’s first migration of mission-critical applications to a managed public cloud environment. The replatforming will increase our ability to scale up and down, depending on business needs and as we add new services, courses and companies to our portfolio. In addition to the cost-savings from becoming a cloud-first organisation, the replatforming will also drive innovation across the business. Innovation is at the heart of what we do and this project, with Ensono and Microsoft working as true partners, means City & Guilds Group will continue on the path to becoming the world’s leading skills development and e-learning organisation, with a passion for constant innovation.”

What will it take to get investment?

Asks Tony Lock, Distinguished Analyst and Director of Engagement, Freeform Dynamics Ltd.

There is a quote that almost everyone working in a management role will recognise: “if you can’t measure it, you can’t manage it”. Naturally enough, IT is no exception. After all, if you haven’t got good visibility of how the data centre is running, how can you ensure it keeps functioning effectively, and efficiently? This makes the title of a recent research note by Freeform Dynamics, The monitoring capability gap (link: https://freeformdynamics.com/core-infrastructure-services/monitoring-capability-gap/), something of a concern for data centre managers.

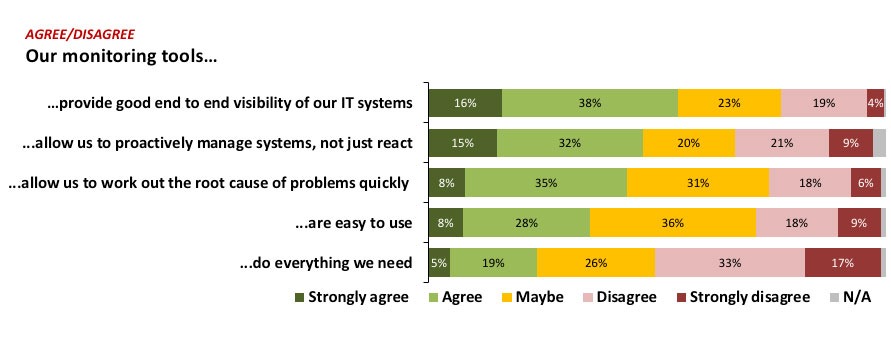

Starting with the basics, the report makes it very clear that the monitoring tools used by respondents – and maybe you – to keep systems and services operational have plenty of scope to be improved (Figure 1).

The first thing that leaps out from the results is that half of respondents say the monitoring tools they use do not provide all the functionality they need, twice as many as those who say that they do. Even if everyone always wants more (and we do), the other responses reveal other issues that need attention. For example, significant numbers of those taking part report that their monitoring capabilities do not allow the speedy identification of the root cause of a problem.

Even though a majority of those taking part in the survey agree or strongly agree that their tools provide good end to end visibility, almost a quarter disagree or strongly disagree. Indeed, almost one in three say their tools do not allow them to proactively manage systems, a matter of concern when data centre complexity is increasing, and as line of business users become ever more intolerant of IT problems or slow-downs, since so many business functions can no longer operate without IT. Add Cloud and other external resources into the mix and it is easy to see why many IT teams sometimes have to battle with monitoring and diagnostics.

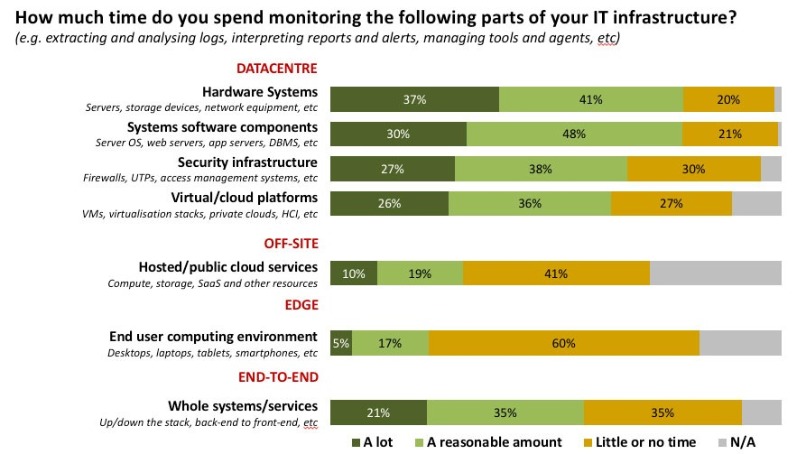

Data centre and infrastructure monitoring have traditionally been at the heart of IT operations, and the results show this is very much still the case (Figure 2).

Business users have obviously benefited greatly as computer systems have become ever more reliable, and as data centre managers have built systems with availability as a primary concern. But while resilience was once the preserve of just a few systems, the portfolio of applications and services regarded as critical has now expanded dramatically. This explains why, despite the advances in core IT technologies, monitoring the health of systems and components still consumes considerable time and resources. When combined with the fact that critical business operations rely on IT systems not just being available but also delivering excellent performance, it is clear that the need for accurate, sophisticated monitoring is not going away any time soon (Figure 3).

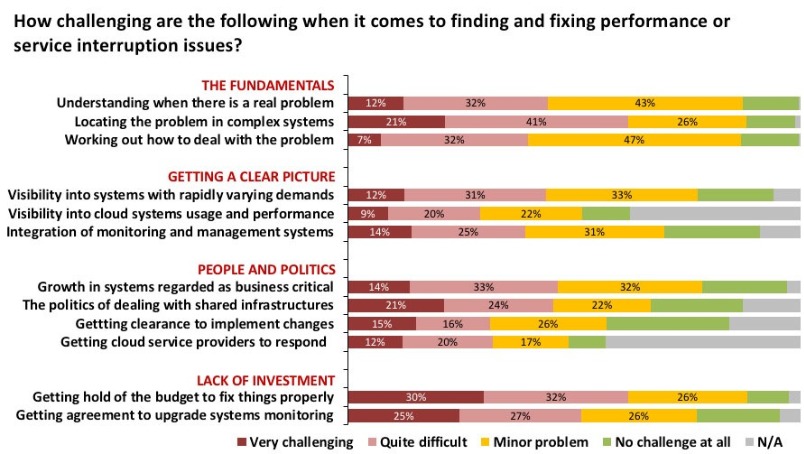

The results highlight the widespread distribution of the challenges faced by data centre professionals today. It is very clear that in every area – even in the fundamentals of problem identification and system visibility – there are usually more survey respondents finding things difficult or very challenging than there are who report no challenges.

What is less obvious to anyone outside of IT is just how important monitoring tools are in these matters. This may well reflect that unless you operate monitoring tools, day in, day out, it is very easy to overlook just how significant they are. It is even true that some IT professionals can take things for granted, especially if they have built complex scripts and daemons to help with some routine functions.

The IT landscape is changing dramatically and rapidly. Innovative technologies are arriving in the data centre every year, with cloud systems becoming important to an expanding range of business services. And then there are new architectures for developing applications, such as containers and serverless, that are beginning to hit the mainstream. Taken together these make the results at the bottom of the chart perhaps the most alarming. Despite the changes mentioned above, over half of those taking part report it is at least quite difficult, or even very challenging, to get agreement to upgrade monitoring capabilities to cater for the new IT landscape. Even more of them report that it is hard to get budgets for fixes that are sustainable in the long term.

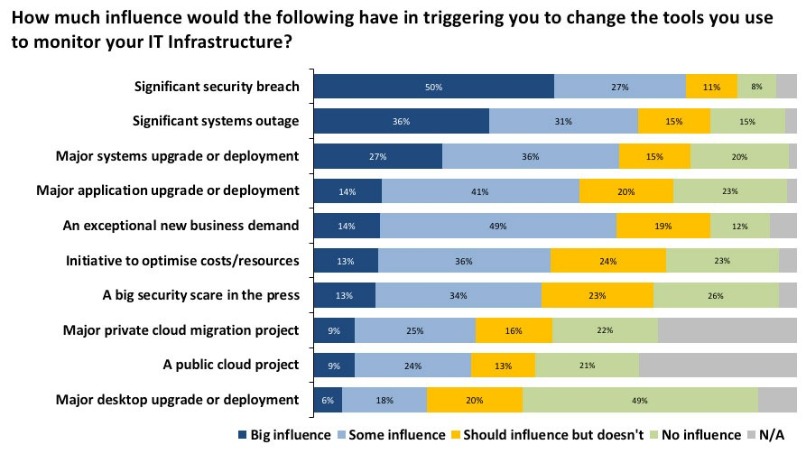

But can anything trigger investment for monitoring capabilities (Figure 4)?

Perhaps unsurprisingly, the events that are likely to have the biggest influence on triggering investment in monitoring tools are anything but desirable. Even in a world where customer trust has never been so important, and the attention paid to data security is gathering momentum, the event most likely to get the business ready to spend money is a significant security breach, with a significant systems outage only slightly behind.

It is interesting to note that far fewer respondents stated that a big security scare in the press would have the same impact. With GDPR a reality from May 25th, 2018 and the potential for penalties of up to €20 million or 4% of worldwide revenues, I suspect that once a large fine has been handed out the first time, the number of organisations investing in monitoring technologies may well increase dramatically.
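To put the penalty figures into perspective, the arithmetic can be sketched as follows. This is a minimal illustration, assuming the ceiling is the greater of the two figures quoted above, which is how the upper tier of the Regulation is worded. The function name and the sample revenue figures are hypothetical, and the sketch says nothing about the fine a regulator would actually impose.

```python
# Illustrative only: the ceiling on an upper-tier GDPR fine, taken here as
# the greater of EUR 20 million or 4% of worldwide annual revenue.
FIXED_CAP_EUR = 20_000_000  # the EUR 20 million figure quoted above


def max_gdpr_fine(worldwide_revenue_eur: int) -> int:
    """Return the ceiling on the fine, in whole euros."""
    four_percent = worldwide_revenue_eur * 4 // 100
    return max(FIXED_CAP_EUR, four_percent)


if __name__ == "__main__":
    # Hypothetical revenue figures, chosen to show both branches.
    for revenue in (50_000_000, 2_000_000_000):
        print(f"Revenue EUR {revenue:,}: ceiling EUR {max_gdpr_fine(revenue):,}")
```

For smaller organisations the fixed figure is the one that bites; the percentage only takes over once worldwide revenue passes €500 million.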

The Bottom Line

Every manager and IT professional working in a data centre understands just how important IT is to keep the business running. This makes monitoring technologies more relevant and essential than they have ever been. The complexity of IT systems, the critical functions they support and the stress under which they must operate are all increasing the need to ensure systems run effectively.

At the same time, financial demands dictate they be run effectively – underutilised IT resources are becoming a luxury of the past. Monitoring is essential, but has been underappreciated. Yet if you can get it right, things are more likely to work well; conversely, if you neglect it, the negative impact for the business will be noticed.

The next Data Centre Transformation events, organised by Angel Business Communications in association with DataCentre Solutions, the Data Centre Alliance, The University of Leeds and RISE SICS North, take place on 3 July 2018 at the University of Manchester and 5 July 2018 at the University of Surrey. The programme is nearly finalised (full details via the website link at the end of the article), with some top class speakers and chairpersons lined up to deliver what is probably 2018’s best opportunity to get up to speed with what’s heading to a data centre near you in the very near future!

For the 2018 events, we’re taking our title literally, so the focus is on each of the three strands of our title: DATA, CENTRE and TRANSFORMATION.

This expanded and innovative conference programme recognises that data centres do not exist in splendid isolation, but are the foundation of today’s dynamic, digital world. Agility, mobility, scalability, reliability and accessibility are the key drivers for the enterprise as it seeks to ensure the ultimate customer experience. Data centres have a vital role to play in ensuring that the applications and support organisations can connect to their customers seamlessly – wherever and whenever they are being accessed. And that’s why our 2018 Data Centre Transformation events, Manchester and Surrey, will focus on the constantly changing demands being made on the data centre in this new, digital age, concentrating on how the data centre is evolving to meet these challenges.

We’re delighted to announce that Adam Beaumont, Visiting Professor of Cybersecurity at the University of Leeds, and CEO of aql, will be delivering the Simon Campbell-Whyte Memorial Lecture. Has IT security ever been so topical? What a great opportunity to hear one of the industry’s leading cybersecurity experts give his thoughts on the issues surrounding cybersecurity in and around the data centre.

We’re equally delighted to reveal that key personnel from Equinix, including MD Russell Poole, will be delivering the Hybrid Data Centre keynote address at both the Manchester and Surrey events. If Adam knows about cybersecurity, it’s fair to say that Equinix are no strangers to the data centre ecosystem, where the hybrid approach is gaining traction in so many different ways.

Completing the keynote line-up will be John Laban, European Representative of the Open Compute Project Foundation.

Alongside the keynote presentations, the one-day DCT events will include:

A DATA strand that features two workshops - one on Digital Business, chaired by Prof Ian Bitterlin of Critical Facilities and one on Digital Skills, chaired by Steve Bowes Phipps of PTS Consulting.

Digital transformation is the driving force in the business world right now, and the impact that this is having on the IT function and, crucially, the data centre infrastructure of organisations is something that is, perhaps, not as yet fully understood. No doubt this is in part due to the lack of digital skills available in the workplace right now – a problem which, unless addressed, urgently, will only continue to grow. As for security, hardly a day goes by without news headlines focusing on the latest, high profile data breach at some public or private organisation. Digital business offers many benefits, but it also introduces further potential security issues that need to be addressed. The Digital Business, Digital Skills and Security sessions at DCT will discuss the many issues that need to be addressed, and, hopefully, come up with some helpful solutions.

The CENTRES track features two workshops: one on Energy, chaired by James Kirkwood of Ekkosense, and one on Hybrid DC, chaired by Mark Seymour of Future Facilities.

Energy supply and cost remains a major part of the data centre management piece, and this track will look at the technology innovations that are impacting on the supply and use of energy within the data centre. Fewer and fewer organisations have a pure-play in-house data centre real estate; most now make use of some kind of colo and/or managed services offerings. Further, the idea of one or a handful of centralised data centres is now being challenged by the emergence of edge computing. So, in-house and third party data centre facilities, combined with a mixture of centralised, regional and very local sites, makes for a very new and challenging data centre landscape. As for connectivity – feeds and speeds remain critical for many business applications, and it’s good to know what’s around the corner in this fast moving world of networks, telecoms and the like.

The TRANSFORMATION strand features workshops on Automation (AI/IoT), chaired by Vanessa Moffat of Agile Momentum, and The Connected World, together with a Keynote on Open Compute from John Laban, the European representative of the Open Compute Project Foundation.

Automation in all its various guises is becoming an increasingly important part of the digital business world. In terms of the data centre, the challenges are twofold. How can these automation technologies best be used to improve the design, day to day running, overall management and maintenance of data centre facilities? And how will data centres need to evolve to cope with the increasingly large volumes of applications, data and new-style IT equipment that provide the foundations for this real-time, automated world? Flexibility, agility, security, reliability, resilience, speeds and feeds – they’ve never been so important!

Delegates select two 70 minute workshops to attend and take part in an interactive discussion led by an Industry Chair and featuring panellists - specialists and protagonists - in the subject. The workshops will ensure that delegates not only earn valuable CPD accreditation points but also have an open forum to speak with their peers, academics and leading vendors and suppliers.

There is also a Technical track where our sponsors will present 15 minute technical sessions on a range of subjects. Keynote presentations in each of the themes together with plenty of networking time to catch up with old friends and make new contacts make this a must-do day in the DC event calendar. Visit the website for more information on this dynamic academic and industry collaborative information exchange.

Vote Now for DCS Awards 2018 – online voting closes 11 May.

With thousands of votes already cast for this year’s DCS Awards, the competition is hotting up. Online voting stays open until 17.30 on Friday 11 May so make sure you don’t miss out on the opportunity to express your opinion on the companies, products and individuals that you believe deserve recognition as being the best in their field.

Voted for by the readership of the Digitalisation World portfolio of titles, the Data Centre Solutions (DCS) Awards reward the products, projects and solutions as well as honour companies, teams and individuals operating in the data centre arena.

Winners of this year’s 21 categories will be announced at a gala ceremony taking place at London’s Grange St Paul’s Hotel on 24 May.

All voting takes place online and voting rules apply. Make sure you place your votes by 11 May when voting closes by visiting: http://www.dcsawards.com/voting.php

The full 2018 shortlist is below:

Data Centre Energy Efficiency Project of the Year

Romonet supporting Fujitsu UK & Ireland

Riello UPS supporting the Rosebery Group

EcoRacks Data Centre supported by Asperitas

London One Data Centre by Kao Data

New Design/Build Data Centre Project of the Year

Inzai 2 by Colt Data Centre Services

University of Exeter supported by Keysource

Data Hub, Biel supported by Schneider Electric

Kao Data London One supported by JCA Engineering

Data Centre Consolidation/Upgrade/Refresh Project of the Year

EcoRacks supported by Asperitas

Willis Towers Watson supported by Keysource

Generator Control Panel Replacement by CBRE DC Solutions

Consolidation and expansion Africa Data Centres

New data halls in Corsham and Farnborough by CBRE, ARK and Corning Optical Communications

Data Centre Fire Protection by Bryland Fire Protection Ltd

Data Centre Power Product of the Year

Liebert® APM 30-600 kW by Vertiv

Delta 500kVA UPS by Eltek Power

Integrated Terminal Lug Temperature Sensors by Starline UE

Micro Data Center by Optimum Data Cooling

Data Centre PDU Product of the Year

Intelligent Power Distribution Unit (iPDU) Family by Excel Networking solutions

SmartZone G5 Intelligent PDUs by Panduit Europe

High Density Outlet Technology (HDOT) by Server Technology, a brand of Legrand

Data Centre Cooling Product of the Year

Liebert® PCW High Chilled Water Delta T by Vertiv

En-10 DX by Optimum Data Cooling

1U immersion cooled server by Iceotope Technologies

Oasis Indirect Evaporative Cooler by Munters

Data Centre Facilities Automation and Management Product of the Year

Nlyte 9.0 Data Center Infrastructure Management (DCIM) Solution by Nlyte Software

Micro Data Center by Optimum Data Cooling

Diris Digiware Power Metering and Monitoring System by Socomec

Data Centre Safety, Security & Fire Suppression Product of the Year

303 ECO SSF cabinet by Dataracks

IG55 Extinguishing System by Bryland Fire Protection Ltd

Data Centre Cabling & Connectivity Product of the Year

4K HDMI Single Display KVM over IP Extender by ATEN Technology

EDGE™ Mesh Modules by Corning Optical Communications

LABACUS INNOVATOR SOFTWARE & Fox-in-a-Box by Silver Fox Ltd

Data Centre ICT Storage Product of the Year

Anti-Ransomware Data Protection by Asigra

GridBank's Enterprise Data Management Platform by Tarmin

Computational Storage Solutions by Scaleflux Computational Storage

JovianDSS by Open E

Cohesity DataPlatform by Cohesity

StorPool Storage by StorPool

Data Centre ICT Security Product of the Year

Automated Endpoint Security and Incident Response by Secdo

Cloud Protection Manager by N2W Software

SecuStack by SecuNet Security Networks

Data Centre ICT Management Product of the Year

Tarmin GridBank by Tarmin

VirtualWisdom 5.4 by Virtual Instruments

Ipswitch WhatsUp Gold® 2017 Plus by Ipswitch

HC3 platform by Scale Computing

EcoStruxure IT by Schneider Electric

ParkView by Park Place Technologies

Data Centre Cabinets/Racks Product of the Year

Environ CL Series by Excel Networking Solutions

Knürr DCD Rear Door Heat Exchanger by Vertiv Integrated Systems GmbH

303 ECO SSF cabinet by Dataracks

HyperPod Rack Ready System by Schneider Electric

Data Centre ICT Networking Product of the Year

PORTrockIT by Bridgeworks

Unity EdgeConnect by Silver Peak

Secure Cloud-Native Networking by Meta Networks

Data Centre Hosting/co-location Supplier of the Year

Workspace Technology

Colt Data Centre Services

Volta Data Centres

UKFast.Net Limited

Rack Centre

LuxConnect

Green Mountain

Data Centre Cloud Vendor of the Year

Zerto

N2W Software

PhoenixNAP

Zadara

Claranet

Asigra

Data Centre Facilities Vendor of the Year

Nlyte Software

Dataracks

Asperitas

Excellence in Data Centre Services Award

Rack Centre

Park Place Technologies

4D Data Centres Ltd

Data Centre Energy Efficiency Initiative of the Year

EU Horizon 2020 EURECA Project

Green Mountain

DAMAC

Data Centre Innovation of the Year

Cloud Protection Manager by N2W Software

ParkView by Park Place Technologies

Green Peak – Dashboard by Green Mountain

HyperPod Rack Ready System by Schneider Electric

Data Centre Individual of the Year

Anuraag Saxena, Ekkosense

Konkorija Trifonova, CBRE

Ole Sten Volland, Green Mountain

Dan Kwach, East Africa Data Centre

Although GDPR is probably the best-known example, a wave of regulation and compliance legislation is being enacted across the world, and particularly in Europe, as regulators get to grips with the modern data economy. This can mean conflicting requirements in some territories, or confusing messages for customers and organisations.

The trend in previous years towards a reduction in regulation seems to have ground to a halt. And while the tone and mood of the new rules such as GDPR is seen to be persuasive and “nudging” by those at the senior levels of policy-making, their actual implementation could well see a “big stick approach” by local and national law-makers.

GDPR itself already promises swingeing fines and there is every chance that prominent and perhaps not-so-prominent companies and organisations may be made examples of with some headline-grabbing penalties. Suppliers of Managed Services will have many new responsibilities and may well find themselves in the firing line as the legal implications take their courses.

This is the main point behind the latest speaker announcement for the European Managed Services & Hosting Summit 2018, to be held in Amsterdam on May 29, 2018. A full session will be devoted to the issue of working across the rising tide of compliance in Europe. With all indications that many MSPs are looking to expand by partnering or acquiring operations in other geographies, this will be an essential item for discussion at senior levels. Any senior figure in a managed services company will need to be familiar with both the processes and implications of the new levels and nature of compliance requirements in any territory they are working in, and beyond.

GDPR is not the only game in town, says Ieva Andersone, a senior associate from Sorainen, a major legal firm in the IT industry, based in the Baltics, a region with a high degree of interest in pan-European business relations and one of the fastest growing regions in IT generally and in managed services adoption. Parts of Europe, even parts of countries, will have their own local rules or GDPR interpretations, she will argue, which managed services companies will need to be aware of, and which may well apply to IT projects with connections outside their core territory. As an experienced, Cambridge-educated lawyer working in multiple cultures and markets in Europe, her presentation discusses the nature of the regulations, their intentions and direction and how they may affect suppliers of services, including managed services, in unexpected ways.

With plenty of discussion points on how to keep the MSP business on the right side of the law, and with guidance as to strategies to adopt, the annual Managed Services and Hosting Summit (MSHS) on May 29 in Amsterdam always aims to use experts to advise European MSPs on these major issues. The first keynote presentation, from Gartner, will address the key issue of how MSPs can differentiate themselves in an increasingly competitive market.

This MSHS event offers multiple ways to get answers: from plenary-style presentations from experts in the field to demonstrations; from more detailed technical pitches to wide-ranging round-table discussions with questions from the floor. There is no excuse not to come away from this key event with ideas for a strategy to keep the business out of trouble.

One of the most valuable parts of the day, previous attendees have said, is the ability to discuss issues with others in similar situations, and attendees are all hoping to learn from direct experience.

In summary, this is a management-level event, held in English, designed to help MSP and channel organisations identify opportunities arising from the increasing demand for managed and hosted services and to develop and strengthen partnerships, while keeping up with the latest compliance and legal requirements in multiple markets.

Registration is free-of-charge for qualifying delegates - i.e. director/senior management level representatives of Managed Service Providers, Systems Integrators, Solution VARs and channels. More details: http://www.mshsummit.com/amsterdam/register.php

In this month’s DCA journal we will be focusing on data centre security both physical and cyber. I’d like to address the issue of cyber Security first.

By Steve Hone, CEO & Founder The DCA

I spotted a billboard on the tube the other day that claimed you were 40% more likely to be a victim of cyber crime than to have your house robbed. This claim was backed up by the Office for National Statistics who have seen a steady rise in reported cybercrime year on year, with more than 6m incidents of cybercrime being reported each year.

This is far more than previously predicted and enough to nearly double the headline crime rate; it equates to more than 40% of all crimes committed in England and Wales.

Data centres represent a very attractive target for criminals. Even if someone manages to breach the perimeter defences, the data halls should be protected by a host of biometric security systems, man-traps and other security protocols, meaning physical access to the servers is in no way guaranteed. However, this assumes that the criminal is carrying a crowbar and swag bag and wearing a balaclava. What happens if the attacker is not planning on abseiling across the rooftops and dropping in through the air duct? What if he or she can break into your facility and steal your data, or plant a virus or launch a DDoS attack, all from the comfort of an armchair without you even knowing about it?

According to a Cyberthreat report, business-focused cyber-attacks – including ones specifically targeting datacentres – have increased by 144% in the past four years and data centres have become the number one target of cyber criminals, hacktivists and state-sponsored attackers. Although physical security should remain a top priority for datacentre operators, the increasing threat posed by cyber-attacks needs to be given the same level of due care and attention.

Although I personally do not profess to be an expert when it comes to cyber security, the good news is that, as the saying goes, “I know someone who is”. In fact, the DCA has access to lots of members who could help, so if you would like to speak to a specialist then the Trade Association can facilitate this for you.

That leads us nicely into data centre physical security.

The aim of physical data centre security is to keep out the people you don’t want in your building or accessing your data. Simply put, if you are not on the list you can’t come in. Assuming a visitor’s name is on the list, it is equally important to continue to keep an eye on them once they are inside. If you discover that someone, be it a customer, contractor or even staff member, is guilty or suspected of committing a security breach, identify them as soon as possible - containment of the situation is paramount.

Through the Data Centre Trade Association, you have access to a wealth of specialists and experts and I would especially like to thank Datum, South Co, Chatsworth and EMKA who have all submitted articles in this month’s edition of the DCA journal.

When looking at physical security for a new or existing data centre, it’s sensible to first take a few steps back and perform a risk assessment of the actual data and equipment that the facility will hold. Fully understanding the risks and potential breaches that could occur is essential, as is establishing the likelihood of such a breach taking place and the impact it could have on your business (be that reputational or financial). This type of drains-up assessment should be your first port of call when defining your physical security requirements and determining how far you need to go.
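One common way to turn likelihood and impact into a ranked list is a simple scoring matrix. The sketch below is illustrative only: the 1 to 5 scales, the multiplication of the two scores and the example risks are all assumptions made for the purpose of the example, not a method prescribed by the DCA.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 scales.
        for label, value in (("likelihood", self.likelihood), ("impact", self.impact)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Likelihood multiplied by impact gives a score from 1 to 25.
        return self.likelihood * self.impact


def rank_risks(risks: Iterable[Risk]) -> List[Risk]:
    """Return risks with the highest score first; ties keep their input order."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    # Hypothetical entries for illustration.
    register = [
        Risk("Vehicle used to ram the perimeter", likelihood=1, impact=5),
        Risk("Tailgating through the main entrance", likelihood=4, impact=3),
        Risk("Fire exit held open", likelihood=3, impact=4),
    ]
    for risk in rank_risks(register):
        print(f"{risk.score:>2}  {risk.name}")
```

The ranking is only as good as the scores fed into it, so the value lies in the discussion needed to agree each number rather than in the arithmetic.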

I have often heard it said that “security of any facility needs to be like an onion”, made up of multiple layers of security which emanate out from what it is you are trying to protect.

When we are talking in terms of a physical data centre what typically makes up the layers of a data centre onion?

Keep a low profile: Especially in a populated area, you don’t want to be advertising to everyone that you are running a data centre.

Avoid windows: There shouldn’t be windows directly onto the data floor.

Fencing: Granted, this is not always possible in a city location, which is where the avoid windows advice kicks in (see above); however, if you are going to have fencing make sure it is not just a token gesture. There are plenty of guidelines when it comes to security fencing and the Trade Association can point you in the right direction in the form of fellow members who can offer you guidance as required.

Limit entry points: Access to the building needs to be controlled. Think not just of the main entrance, fire exits and loading bays but also of roof access points.

Fire Exits: When it comes to those fire exits, make sure they are exactly that: “exit only”. Install alarms and monitoring on them, as they are often frequented by smokers popping out for a crafty one, who then politely hold the door open for a stranger to wander in. I’m not saying this could happen, by the way; I know it does happen.

Hinges on the inside: Hinges on the outside make it far too easy for someone to pop the pins out to gain access. It sounds basic, but this is a common mistake I often see with repurposed buildings.

Tailgating: Following someone through a door before it closes, known as ‘tailgating’, is one of the main ways that an unauthorized visitor will gain access to your facility. By implementing man-traps that only allow one person through at a time, you force visitors to be identified before allowing access.

Smile you are on camera :0) You can never have enough cameras - CCTV cameras are a very effective deterrent for an opportunist, as is proximity flood lighting. All footage should be stored digitally and archived offsite; don’t forget the new GDPR rules BTW.

Access control: You need granular control over which visitors can access certain parts of your facility. The easiest way to do this is through proximity access card readers or biometrics, such as retinal scans, on the doors.

Pre-approval and Personal Identification: Many data centres operate on a pre-approval system whereby you warn the DC in advance that someone will be attending site, and normally this person will need to show some form of photo ID (driving licence, ID card or passport). The cast-iron rule is “no ID = no entry”, irrespective of how much they protest.

Compound entry control: Access to the facility compound, be that pedestrian or vehicle via a parking lot, needs to be strictly controlled with either gated or turnstile entry that can be opened remotely by the reception/security once the person/driver has been identified. Ram raiders don’t just target retail stores; metal bollards or large boulders can act as an effective protective exterior layer to prevent a vehicle itself being used as a 15th-century battering ram.

Processes and training: This might sound out of place in this list of essentials, but having all the security layers in the world will be worthless unless you have the processes and procedures documented and have your staff vetted and trained to prevent security breaches from happening. This needs to include any 3rd party contract staff you employ.

You can never test enough: It’s only by regular testing and auditing of your security systems that any gaps will be identified before someone else can exploit them.

At the end of the day, both cyber and physical security considerations come down to managing risk, so make sure you carry out a regular risk assessment, try to think of data centre security like an onion and remember: not all burglars wear balaclavas.

Thank you again to all the contributors in this month’s edition. Next month’s journal theme is focusing on Energy Efficiency and by then I will also be able to report on Data Centres North from Manchester. Deadline for article submission will be the 15th May.

The DCA

W: www.dca-global.org

T: 0845 873 4587

E: info@dca-global.org

Simon Williamson, Business Development Manager, Electronic Access Solutions, EMEIA (SOUTHCO) TBA

In today’s world, data is fast becoming the new global currency and as data volumes continue to grow at an exponential rate, the issue of data security continues to cause concern within the industry. While there is widespread awareness of the many digital attacks that compromise data, less is said about the physical threats to information stored in data centres.

Despite extensive measures in place to secure the perimeter of a data centre, often the biggest threat to security can come from within. It is not uncommon for individuals entering these facilities to cause accidental security breaches. In fact, IBM Research states that 45 percent of breaches occur as a result of unauthorized access, costing over $400B annually.

This issue is particularly prevalent for colocation data centre providers, who host data cabinets for multiple clients. Each server cabinet should be secured at the rack level with access only granted to authorized personnel. Traditionally, access to individual racks has been protected by key-based systems with manual access management. In some instances, data centre managers have turned to a more advanced coded key system, but even this approach provides little in the way of security—and no record of who has accessed the data centre cabinets.

Electronic Access Solutions Enhance Physical Security

To alleviate the problem of unauthorized access and concerns surrounding data security, traditional security systems are quickly being replaced by intelligent electronic access solutions. Above all, these solutions provide a comprehensive locking facility while offering fully embedded monitoring and tracking capabilities. They are a vital element of a fully integrated access-control system, bringing reliable access management to the individual rack. The system also enables the creation of individual access credentials for different parts of the rack, all while eliminating the need for cages, thereby saving costs.

Simplified Installation

Any physical-security upgrade in the data centre has its issues, of course. Uninstalling existing security measures in favour of new ones costs both time and money, which is why data centre owners are turning toward more-intelligent security systems such as electronic-locking swinghandles, which can be integrated into new and existing secure server-rack facilities. They employ existing lock panel cut outs, eliminating the need for drilling and cutting. This approach allows for lock standardisation in the data centre, saving considerable time (and therefore cost)—something that holds real value given the pressing demand for data centre services.

Credential Management

Physical access to the rack can be obtained using an RFID card, pin code combination, BLUETOOTH®, Near Field Communication (NFC) or biometric identifications. The addition of a manual override key lock allows emergency access to the server cabinet. Even in the event that security needs to be overridden, an electronic access solution can still track the audit trail, monitoring time and rack activity. Solutions such as this have been designed to lead protection efforts against physical security breaches in data centres all over the world.
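As a rough illustration of what such an audit trail might record, the sketch below models rack access events, including use of the manual override key. Every class, field and identifier here is hypothetical; it does not describe Southco's products or any vendor's actual data model.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional

# Credential types named in the text, plus the manual override key.
CREDENTIAL_TYPES = {"rfid", "pin", "bluetooth", "nfc", "biometric", "override_key"}


@dataclass(frozen=True)
class AccessEvent:
    rack_id: str
    credential_type: str
    holder: Optional[str]  # None when no identity is known, e.g. a manual key
    granted: bool
    timestamp: datetime


class RackAuditTrail:
    """In-memory, append-only record of rack access attempts (illustrative)."""

    def __init__(self) -> None:
        self._events: List[AccessEvent] = []

    def record(self, rack_id: str, credential_type: str, holder: Optional[str],
               granted: bool, timestamp: Optional[datetime] = None) -> AccessEvent:
        if credential_type not in CREDENTIAL_TYPES:
            raise ValueError(f"unknown credential type: {credential_type}")
        event = AccessEvent(rack_id, credential_type, holder, granted,
                            timestamp or datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def for_rack(self, rack_id: str) -> List[AccessEvent]:
        """All recorded attempts against one rack, oldest first."""
        return [e for e in self._events if e.rack_id == rack_id]

    def overrides(self) -> List[AccessEvent]:
        """Events where the manual override key was used."""
        return [e for e in self._events if e.credential_type == "override_key"]


if __name__ == "__main__":
    trail = RackAuditTrail()
    trail.record("RACK-A1", "rfid", "engineer-01", granted=True)
    trail.record("RACK-A1", "pin", "visitor-07", granted=False)
    trail.record("RACK-B4", "override_key", None, granted=True)
    print(len(trail.for_rack("RACK-A1")), "events on RACK-A1")
    print(len(trail.overrides()), "override event(s)")
```

Recording denied attempts alongside granted ones matters as much as tracking overrides, since a run of refusals on one rack is often the first sign that something is wrong.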

By enhancing security at the individual rack level, providers can restrict rack access to only those with authority, which is especially relevant in colocated data centres, where data cabinets are under threat from both accidental and malicious breaches. Installing the right electronic access solution can help to eliminate costly breaches in a short time-frame and maximize colocation security.

For more information about Southco’s Data Centre security solutions visit www.racklevelsecurity.com.


By Luca Rozzoni, European Business Development Manager, Chatsworth Products (CPI)

Whilst security has always been a key consideration for the data centre industry, the upcoming EU General Data Protection Regulation (GDPR) – a strict set of regulations set to protect data privacy – means that data protection and security policies have taken on a new level of priority.

Regulatory and Compliance Requirements

The GDPR requirements will come into force on 25th May 2018 and affect organisations worldwide. Whilst EU countries must comply, any organisation collecting or processing data for individuals within the EU should also be developing their compliance strategy. The UK Government has indicated that, even taking Brexit into account, it will implement an equivalent set of legislation and UK organisations must review their security practices in regards to the protection of personal data and consider their own routes to compliance.

So How Should Data Centres Prepare?

Whilst organisations are expected to use their own judgment in regards to making sure they have taken the ‘appropriate technical and organisational measures’ to ensure compliance, Regulation (EU) 2016/679 stresses the need for secure IT networks, and provides an example of “preventing unauthorised access to electronic communications networks and malicious code distribution and stopping ‘denial of service’ attacks and damage to computer and electronic communication systems.” Put simply, whilst access control may seem an obvious part of any security policy, data centres must be able to demonstrate that they have the appropriate access policies in place.

Cabinet-level security has always been an important part of data centres’ data protection and security policies. Strict regulatory compliance requirements, such as HIPAA in health care and PCI DSS in online retail, demand audit logs of every access attempt as part of physical access control to help ensure data privacy and security. Automatic logging of cabinet access is also important, given that a large portion of attacks within these industries (58 percent in the financial sector and 71 percent in health care, to be more precise) are carried out by insiders, whether deliberately or inadvertently, according to a 2017 report by IBM X-Force.

This makes sole reliance on mechanical keys ineffective at best and, at worst, a potential source of privacy-related lawsuits.

Electronic access control (EAC) solutions are essential in addressing user access management issues within the data centre and can be an extremely cost-effective method of delivering intelligent security and dual-factor authentication to the cabinet.

Key features to look out for when selecting an EAC solution include:

Dual-factor Authentication

Dual-factor authentication enables data security to be taken to the next level. One of the most secure forms of physical access verification is biometric authentication. However, many organisations have dismissed this in the past due to cost, as it typically requires additional readers to be installed to every cabinet or facility door.

A cost-effective and secure dual-factor authentication solution is a fingerprint-activated card that is able to work with existing EAC or other card-activated locks. A card that is compatible with readers for 125 kHz, HID iCLASS and MIFARE® proximity cards, and that can work with existing campus security systems, eliminates the need for expensive deployments and means data centre employees only need to carry a single card.
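As a rough illustration of the dual-factor principle, the Python sketch below grants cabinet access only when both the card and the fingerprint check succeed. The cabinet names, card identifiers and the `AUTHORISED` table are hypothetical, and the fingerprint match is reduced to a simple flag; a real product would perform the match on the card or the reader.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    card_id: str          # value read from the proximity card
    fingerprint_ok: bool  # True if the fingerprint match succeeded


# Hypothetical authorisation table: which cards may open which cabinet.
AUTHORISED = {
    "cab-01": {"card-1001", "card-1002"},
    "cab-02": {"card-1002"},
}


def may_unlock(cabinet: str, cred: Credential) -> bool:
    """Grant access only when BOTH factors pass."""
    has_card = cred.card_id in AUTHORISED.get(cabinet, set())
    return has_card and cred.fingerprint_ok
```

A valid card presented without a matching fingerprint is refused, as is a matching fingerprint on a card that is not authorised for that cabinet.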

Remote Management and Reporting

Using a simple, user-friendly web interface to remotely manage the networked EAC locks allows the user to remotely monitor, manage and authorise each cabinet access attempt. Crucially, using this type of intuitive interface provides an audit trail for regulatory compliance through log reports. The logging report can be easily exported and emailed to the administrator.

Managing the networked EAC locks through the web interface also reduces the need for wiring the electronic access systems to expensive security panels which are usually managed through Building Management Systems.
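The audit trail described above can be pictured with a minimal Python sketch: every access attempt, granted or denied, is recorded and the log is exported as CSV text for the administrator. The field names and the functions `record_attempt` and `export_csv` are illustrative assumptions, not the interface of any particular EAC product.

```python
import csv
import io
from datetime import datetime, timezone

FIELDS = ["timestamp", "cabinet", "user", "result"]


def record_attempt(log, cabinet, user, granted, when=None):
    """Append one access attempt (granted or denied) to the in-memory log."""
    when = when or datetime.now(timezone.utc)
    log.append({
        "timestamp": when.isoformat(),
        "cabinet": cabinet,
        "user": user,
        "result": "granted" if granted else "denied",
    })


def export_csv(log):
    """Return the log as CSV text, ready to save or attach to an email."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(log)
    return buffer.getvalue()
```

Recording denied attempts alongside granted ones matters here, because a compliance audit is usually as interested in who was refused as in who got in.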

IP Consolidation

Data centres can realise dramatic savings in networking costs and deployment times through the ability to network several locks through IP consolidation. It is now perfectly feasible to choose a solution that will allow up to 32 EAC controllers (32 cabinets) to be networked under only one IP address.
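The saving is simple arithmetic, sketched below in Python. It assumes one controller per cabinet and the figure of 32 controllers per IP address quoted above; the function name is illustrative.

```python
import math

CONTROLLERS_PER_IP = 32  # figure quoted in the text: up to 32 controllers per address


def ip_addresses_needed(cabinets: int, per_ip: int = CONTROLLERS_PER_IP) -> int:
    """IP addresses required when each address can front `per_ip` cabinets."""
    if cabinets < 0 or per_ip < 1:
        raise ValueError("cabinets must be >= 0 and per_ip >= 1")
    return math.ceil(cabinets / per_ip)
```

Under these assumptions a 200-cabinet hall needs 7 addresses with consolidation (`ip_addresses_needed(200)`) rather than 200 with one address per cabinet (`ip_addresses_needed(200, per_ip=1)`).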

Combining EAC with Environmental Monitoring

Choosing an EAC solution which offers added benefits, such as environmental monitoring, can ensure a much faster return on any initial investment, especially when you consider the savings which can be made by utilising one IP port for an appliance that offers both EAC and environmental monitoring.

There are solutions available which can monitor and manage both temperature and humidity through the same web interface, issuing proactive notifications to help data centre managers ensure service reliability by taking action before issues turn into downtime.

The infrastructure can be badly affected by water, dust and other harmful particles so it is worth looking for a solution which also has the capability to monitor and detect smoke, water and even motion.
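The proactive notifications mentioned above boil down to comparing each sensor reading against an acceptable range. The Python sketch below shows the idea; the limits in `LIMITS` are hypothetical placeholders, since real thresholds depend on the equipment and site policy, and the sensor names are assumptions.

```python
# Hypothetical acceptable ranges; real limits depend on equipment and site policy.
LIMITS = {
    "temperature_c": (18.0, 27.0),
    "humidity_pct": (20.0, 80.0),
}


def check_reading(cabinet, reading, limits=LIMITS):
    """Return a list of alert messages for any value outside its range."""
    alerts = []
    for name, (low, high) in limits.items():
        value = reading.get(name)
        if value is None:
            continue  # sensor not fitted, or no data for this cabinet
        if value < low or value > high:
            alerts.append(f"{cabinet}: {name}={value} outside {low}-{high}")
    return alerts
```

An empty list means nothing needs attention; any message returned could be passed to whatever notification channel the site already uses.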

The Future

As outlined in Regulation (EU) 2016/679, ‘rapid technological developments and globalisation have brought new challenges for the protection of personal data’ and ‘the scale of the collection and sharing of personal data has increased significantly’.

As a result, customers’ needs and expectations regarding privacy of data have increased, as has the sophistication of the threats now posed. Data centres must look for more powerful and effective methods of delivering peace of mind to the customer, as well as compliance with new and emerging regulations, and electronic access control (EAC) solutions are a key weapon in their arsenal. Fortunately, delivering intelligent security and dual-factor authentication to the cabinet is no longer out of reach for organisations needing to meet strict budgets.

Dr. Nigar Jebraeili Research Assistant at University of East London (UEL)

Today we see a small number of progressive (some would say maverick) fully implemented software-defined data centres (SDDC) in operation. While these front runners lead the way, right behind them is a huge number of enterprises caught in a vortex of their own, one which will force them to adopt these new ways sooner than we had expected. This can be seen as a classic IT market development pattern.

Today, roughly 70% of IT organisations are involved with some form of server virtualisation. This automatically puts the users of such techniques in the wide top section of a funnel, and there is only one way for them to go: down the defined path!

As data centres evolve to embody the new generation of modern IT technologies, they are expected to offer the benefits of fully integrated solutions, provide pre-sales and post-sales services, allow for continuous modification of standard products and enable easy sourcing, all as part of packages offering ultimate reliability and flexibility. However, security is a decisive factor that remains the centre of attention for both parties, the users as well as the providers.

New figures suggest that each year, businesses lose $400 billion to hackers! Hence, security remains amongst the main concerns of IT organisations. Choosing how to construct a private, public or indeed a hybrid cloud is probably amongst the most critical strategic decisions to make for IT leaders nowadays and to a great degree it determines the enterprises’ flexibility, reliability and competitiveness.

Today we hear about a relatively new trend in data centres called SDDC (Software-Defined Data Centre). I say “relatively” new, as the concept is based on containerisation techniques that have been around since the early 1980s. The SDDC’s agile platform not only enables IT organisations to adapt to the speed of rapid business growth by virtualising workloads, networking and storage, but can also offer a level of security that was previously hard to achieve.

The question might arise: what are the implications of SDDC for overall security in data centres? Here I will lightly touch on a few benefits of SDDC that can have a positive impact on overall security and regulatory compliance.

As the nature of SDDC implies, generally there are three main aspects of security to be identified:

1. Workload-specific security aspects;

2. Network-specific security aspects;

3. Storage-specific security aspects.

A single configuration mistake in a traditional data centre can leave the whole facility dysfunctional, whereas a major advantage of SDDC network security is the unified controller that is in charge of various aspects of network functionality, including the security functions. Hence policies can be consolidated across the SDDC infrastructure.

As one of its main characteristics, SDDC allows for a high degree of automation, which minimises human intervention and error. Traditional data centres, by contrast, are inherently error-prone and dependent on brittle physical characteristics, even when using centralised management applications. This is particularly the case where there are recurring configuration tasks and various rules distributed across the infrastructure that need manual configuration.

SDDCs, on the other hand, consistently enforce a high level of policy-based automation. This facilitates not only configuration tasks but, more importantly, swift reconfiguration when regulatory compliance requirements change. The result can be a high level of security, the ability to sustain the rapid changes demanded by today’s business environment, and a reduced risk of out-of-date security policies.
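To make the idea of policy-based automation concrete, here is a minimal Python sketch in which one central policy is applied to every workload and any drift from it can be detected. The setting names (`encrypt_at_rest`, `tls_min`) and both functions are hypothetical and stand in for whatever a real SDDC controller exposes.

```python
def apply_policy(workloads, policy):
    """Return new workload configs with every policy setting enforced."""
    return [{**w, **policy} for w in workloads]


def drifted(workloads, policy):
    """Names of workloads whose settings differ from the central policy."""
    return [
        w["name"]
        for w in workloads
        if any(w.get(key) != value for key, value in policy.items())
    ]


# Example: one central policy, two workloads, one of them out of date.
policy = {"encrypt_at_rest": True, "tls_min": "1.2"}
workloads = [
    {"name": "web", "encrypt_at_rest": False, "tls_min": "1.0"},
    {"name": "db", "encrypt_at_rest": True, "tls_min": "1.2"},
]
compliant = apply_policy(workloads, policy)
```

When a regulation changes, only the central `policy` is edited and reapplied; no workload has to be reconfigured by hand, which is where the reduction in human error comes from.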

Another advantage of workload security in SDDC is the switch from the traditional legacy security boundary to the SDDC’s functional boundary. This improves the visibility available to security software, making it possible to control data and workload behaviour patterns through consistent monitoring, blocking and immediate response to threats. The result is stronger and more reliable security than traditional boundary-based approaches, such as outdated designs of DLP (Data Loss Prevention) and/or IPS (Intrusion Prevention System), which mostly focus on protecting the borders rather than context and flow. Having said that, adding a robust internal layer of security requires consistent policy enforcement, which once again affirms the importance of automation in SDDCs.

Last but not least, in order to benefit from SDDC’s security advantages, it is vital to ensure that the security tools applied to the virtualised environment are SDDC-aware, that is, adapted and designed to meet the requirements of these virtualised, centralised and fast-paced environments.

The nature of the threats we face changes almost as fast as we develop systems, and thus the agility with which we respond is the key to our protection. With all the advantages of the new SDDC paradigm, one is still left to find ways of assessing the vulnerabilities. The key becomes evaluating the quality of automation and the ease of manipulating central control to adapt to new regulations we wish to impose on the system. Perhaps now is the time to embrace a new vocation that can potentially elevate the industry, by taking the initiative in nurturing and training people in a systematic way, if we are to make the transition with less risk.

Lexie Gower, Datum Datacentres

GDPR – burgeoning cloud storage – cyber-attacks – ransomware. We live in a digital world with ever increasing digital threats and stringent digital regulations because we are our data. Everything we do as individuals and as corporations creates data and leaves a data trail. This vital information is our strength and our Achilles heel. People responsible for managing, processing and storing data are the gatekeepers to more than just interesting facts, they have the keys to our lives.

A recent survey on behalf of the Information Commissioner’s Office found that only one fifth of the UK public (20%) have trust and confidence in companies and organisations storing their personal information – and in the meantime, the world continues to be a dangerous place, both on the ground and in the cybersphere.

Organisations of all kinds are highly exercised working out ways to ensure they do not fall foul of the DPA or the GDPR, and there is a strong focus on ensuring corporate reputations and customer confidence are not flattened by the negative consequences of a data leak. One drastic solution could be to wipe all the data... but as the organisation would no longer be a viable functioning entity, it is unlikely to be very popular!

As with all exercises, actual solutions are a mix of people, processes and tools, all supported by responsibility and due diligence. When everything is held in-house, all necessary precautions can be taken, but at a probably untenable cost. Opting for “everything in the cloud” offers the flexibility and power we may want at a more controlled cost but, on the flip side, security is undoubtedly harder to guarantee, which may be unacceptable for some data. Hybrid solutions combine the cost advantages and flexibility of the cloud with the ability to apply more rigid safeguards where the information is critical.

Cybersecurity is not just air

Cybersecurity is the body of technologies, processes and practices designed to protect networks, computers, programs and data from attack, damage or unauthorised access. In a computing context, the topic of security includes both cybersecurity and physical security because, regardless of the solution pursued, at some stage the data will be stored, processed and managed in a physical entity on the ground. Whilst many sparkling brains and enormous investments go into developing the tools and apps that help to shield networks and data from unwanted intruders, the solid physical base needs more than a padlock on the door. Too many data centres treat security as a box-ticking exercise, whereas real confidence in physical security can only be justified where multiple layers of people, processes, tools and diligence are invested in, implemented and accredited.

Whilst all organisations are obligated to safeguard their data, those that hold particularly sensitive information are further compelled to ensure they do not slip up, perhaps because the threat to them is greater, or perhaps because of additional regulations or requirements. Whatever the driver, a data centre business that can attract and retain such organisations has to pay considerably more than lip service to the notion of security. Take Datum as a case in point. Built in a secure List-X park, with full perimeter security and CCTV, permanently guarded security gates and highly controlled access, the data centre itself provides further 24x7x365 manned security, CCTV inside and out, building entry controls and biometrically regulated data hall access. For those clients with even greater concerns, dedicated locked cages, and even cages within cages, are provided within the data hall. The overall business model and approach to security, and to service, has ensured that major national and international clients have audited and tested the security before, during and after moving their kit into the data centre.

Accreditations speak volumes