Technology and morality have been strange bedfellows ever since an enlightened caveman, or cavewoman, came up with the idea of the wheel. Since then, technological developments have presented humans with an amazing world of positive possibilities. The downside is that the very same developments that promise so much good can also be used to cause mischief, death and destruction on a spectacular scale.

And nothing has changed with the advent of the Digital Age. The introduction of smart technologies across all levels of society, all geographical locations and all industry sectors has many phenomenal upsides, and I’m sure that we all take advantage of these every day without giving much thought to the overall impact of these developments. Of course, we can’t uninvent things, but if we’re all going to be kept alive until we’re 120 just so that we can sit in an armchair and do nothing with our days, is this such a major achievement? And if robots really are going to replace much of today’s workforce, what will we do with so much spare time (there are only so many rounds of golf I can play!) and how will we earn any money to pay for our hobbies and our food?

History students will point out that, no matter how civilised we like to think we are right now, there’s almost certainly going to be some major global conflict during the 21st century. Add to this the effects of climate volatility (whether the result of human-caused global warming or not), and maybe there’s no need to worry about a technology-driven future: human nature and nature itself will restore some kind of balance. We might eventually run out of resources and the population will start to shrink…

This comment is offered up as nothing more than a suggestion that it might benefit us all to understand the lifecycle impact of any digitally-driven changes we might choose to adopt in our own lives, for better and for worse.

With many options for digital business transformation requiring significant investment, Gartner, Inc. has identified the top 10 ways to fund the shift to digital business.

According to Gartner's 2017 CEO survey, 42 percent of CEOs are now taking a digital-first approach to business change or taking digital to the core of their enterprise model. To fund digital initiatives, CEOs indicate that the bulk of the money comes from self-funding rather than existing budgets, as they see the primary purpose of digital initiatives as winning revenue rather than saving costs.

"This should give CIOs pause for thought, given conventional IT management works mostly on the basis of using operating budgets," said Andy Rowsell-Jones, vice president and distinguished analyst at Gartner.

"Transformation requires commitment, leadership, strategy, technology, innovation and importantly, money," Mr. Rowsell-Jones said. "This year and next are likely to be the optimal timing points of overlap between the business cycle and the tide of digital business change. In two years' time, the rising cost of capital could make strategic investment more expensive, and playing digital catch-up is harder."

The top 10 ways to fund the shift to digital business are:

1) Internal self-funding: digital revenue pays

This will only work for short-term projects to gain immediate revenue returns, such as for digital marketing campaigns or price-elevating digital product features. This approach needs clear revenue attribution and is good for continuous, incremental growth, but will not work for disruptive market change.

2) Within existing budgets

It can work for relatively superficial digital business change over two to three years, if budgets are healthy, already generous and need trimming. It is not good for rapid transformation as it might throttle existing business.

3) Investment from reserves

Reserves are the part of profit set aside for internal reinvestment to help the business through tough times, and digital disruption and market loss might qualify as such. If reserves are healthy, this option might accelerate digital transformation with low financial impact on current operations.

4) Increase relevant budgets and cut others

This option requires a very clear understanding of how digital business growth will offset the slowdown in the heritage business. It is useful if digital business is recognizable and deliverable in the same corporate structure to the same customer base, but not appropriate for adjacency moves or radical industry reinvention.

5) Increase relevant budgets and cut profits

This option is relevant to deep, multiyear strategic change and requires clear and careful explanation to investors. It may be easier if a disruptive, threatening competitor makes the need for transformation more obvious to all, or for private or family-held companies with long-term planning horizons and fewer owners to convince.

6) New bond or equity capital from investors

If digital transformation requires heavy, multiyear investment, fresh capital may need to be raised. Smaller companies with faster growth rates can raise equity capital from investors by issuing more shares. Larger mature companies with strong reputations can raise debt capital by issuing more corporate bonds.

7) Borrow capital from lenders

Loan capital is typically shorter term, more tactically arranged and helps bridge gaps arising from digital transformation. It is usually only available for conventionally describable, measured risk situations, rather than for speculative entrepreneurial action or situations of industry reinvention.

8) Off balance sheet entries

Another option is to place all or part of the new digital product, service or activity in a separate company shell with investors, which suits "risky" or "unusual" experiments. This is useful for "farming" digital ecosystems and startups by working with VCs and incubators as co-founders, as well as for industry consortiums.

9) Divestitures

When digital disruption is serious in an industry, one strategy can be to sell legacy business units early to buyers that are happy to run them in their declining years. The capital receipts from divestitures can then be used to help fund the growing new digital business ventures and revenue streams.

10) Asset disposals

Some assets that were useful in the past but have less relevance in digital business may have a market value to others. Cycling out old physical assets to pay for digital growth can work where "dematerialization" is in play.

Intelligent automation will transform workplace outsourcing, according to Gartner, Inc. Sourcing and vendor management leaders must prepare to restructure these services and renegotiate contracts to leverage intelligent automation.

Gartner defines intelligent automation services as the umbrella term for a variety of strategies, skills, tools and techniques that service providers are using to remove the need for labor, and increase the predictability and reliability of services while reducing the cost of delivery.

“Intelligent automation will alter the provision of managed workplace services over the next few years, increasing service quality at a lower price,” said DD Mishra, research director at Gartner. “Sourcing and vendor management leaders must prepare to restructure these services and renegotiate contracts to leverage intelligent automation. Automation-driven improvements in service delivery and pricing will allow sourcing and vendor management leaders to select a wider range of managed workplace services (MWS) outcomes that will improve quality and cost simultaneously.”

Moving MWS functions from ones that are solely resourced by humans to functions that have a mix of humans and intelligent automation services (IAS) will create benefits in both pricing and service quality. The replacement of human labor by such mixed services can only occur if the automated services offer a cost reduction for the service provider. Many service providers recognize that they cannot continue to resource MWS by simply adding more service heads and thus are investing heavily in IAS for this reason.

As IAS provision becomes part of MWS, providers will pass on part of the resultant cost savings to clients in an attempt to win business. For services such as service desks, intelligent automation tools can be up to 65 percent less expensive than offshore-based staff. Through 2021, Gartner expects the costs of commodity services to decline by 15 percent to 25 percent annually as they move toward this price point.
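Because an annual decline compounds, it helps to see what a 15 to 25 percent yearly fall adds up to. The sketch below assumes a 2017 baseline with four annual reductions through 2021 and treats the "up to 65 percent less expensive" figure as the target price point; the function names are illustrative and are not part of any Gartner model.

```python
import math


def remaining_cost(annual_decline: float, years: int) -> float:
    """Fraction of the starting price left after `years` of compounding decline."""
    return (1.0 - annual_decline) ** years


def years_to_reach(target_fraction: float, annual_decline: float) -> float:
    """Years of compounding decline needed to fall to `target_fraction` of the start."""
    return math.log(target_fraction) / math.log(1.0 - annual_decline)


if __name__ == "__main__":
    # Assumption: 2017 baseline, four annual reductions through 2021.
    for rate in (0.15, 0.25):
        left = remaining_cost(rate, 4)
        # A 65% reduction leaves 35% of the original price.
        print(f"{rate:.0%}/yr: {1 - left:.0%} cheaper after 4 years, "
              f"{years_to_reach(0.35, rate):.1f} years to a 65% reduction")
```

At 15 percent a year, prices fall by roughly 48 percent over four years; at 25 percent, by roughly 68 percent. The 65 percent price point therefore sits inside Gartner's range, reached after about 3.6 years at the faster rate and about 6.5 years at the slower one.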

Ongoing reductions in outsourced head count due to intelligent automation will eventually force sourcing and vendor management leaders to redesign the workplace services for their organizations' users. This will result in a corresponding drop in the number of staff required on the service desk, so that when 70 percent of the workload is dealt with by IAS, only 30 percent of the staff will remain. Eventually, the potential for vendor lock-in, driven by a dependency on new tools and the IP they create, will require sourcing and vendor management leaders to incorporate new risk management provisions in MWS contracts.

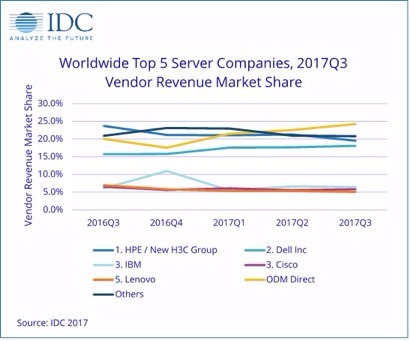

According to the International Data Corporation (IDC) Worldwide Quarterly Server Tracker, vendor revenue in the worldwide server market increased 19.9% year over year to $17.0 billion in the third quarter of 2017 (3Q17).

The server market has strengthened recently after several slow quarters, in which much of the market waited for the Purley and EPYC launches. While demand from cloud service providers has propped up overall market performance, other areas of the server market are beginning to show growth now as well. Worldwide server shipments increased 11.1% year over year to 2.67 million units in 3Q17.

Volume server revenue increased by 19.3% to $14.2 billion, while midrange server revenue grew 26.9% to $1.4 billion. High-end systems grew 19.4% to $1.3 billion, benefitting from IBM's z14 launch this quarter. IDC expects continued long-term secular declines in high-end system revenue, with short periods of growth related to major platform refreshes.

"Hyperscalers continued driving volume demand in the third quarter, with Amazon again leading the charge, as Google and Facebook also began ramping up their server deployments again," said Kuba Stolarski, research director, Computing Platforms at IDC. "While ODMs have largely been the beneficiaries of hyperscaler server demand, some OEMs have now begun to experience significant growth related to the enterprise segment. Dell Inc grew its server business by 37.9%, relying on the strong synergy between its server team and the storage team incorporated from the EMC acquisition. HPE has been pivoting away from hyperscaler business and focusing on the enterprise, hurting year-over-year comparisons in the short term, but showing strength in the enterprise. China has become a strong market for enterprise growth, as evidenced by Dell Inc growing 42.3% year over year to $433 million, and HPE/New H3C Group growing 49.6% to $421 million. In addition, IBM has demonstrated that the enterprise still has space for non-x86 systems, growing the newly refreshed system z business by 63.8% year over year to $673 million."

Overall Server Market Standings, by Company

HPE/New H3C Group remained first in the worldwide server market with 19.5% market share in 3Q17, as revenue decreased 1.1% year over year to $3.3 billion. HPE's share and year-over-year growth rate include revenues from the H3C joint venture in China that began in May 2016; thus, the reported HPE/New H3C Group figure combines server revenue for both companies globally. Dell Inc maintained the second position in the worldwide server market with 18.1% of vendor revenue for the quarter and 37.9% year-over-year growth to $3.1 billion. IBM and Cisco were statistically tied* for the third market position. IBM had 6.4% share, with revenue growing 26.5% year over year to $1.1 billion. Cisco had 5.8% share, with revenue increasing 6.9% to $992 million. Lenovo was ranked fifth with 5.1% share and revenue declining 12.6% to $861 million. The ODM Direct group of vendors grew revenue by 45.3% to $4.1 billion. HPE and Dell Inc were in a statistical tie* for first place in unit share, each with 18.8%. IDC initiated reporting Super Micro results in the Server Tracker with this release.

Top 5 Companies, Worldwide Server Vendor Revenue, Market Share, and Growth, Third Quarter of 2017 (Revenues are in Millions)

| Company | 3Q17 Revenue | 3Q17 Market Share | 3Q16 Revenue | 3Q16 Market Share | 3Q17/3Q16 Revenue Growth |
|---|---|---|---|---|---|
| 1. HPE / New H3C Group | $3,317.4 | 19.5% | $3,355.4 | 23.7% | -1.1% |
| 2. Dell Inc | $3,070.4 | 18.1% | $2,226.7 | 15.7% | 37.9% |
| 3. IBM* | $1,093.7 | 6.4% | $864.4 | 6.1% | 26.5% |
| 3. Cisco* | $992.5 | 5.8% | $928.0 | 6.6% | 6.9% |
| 5. Lenovo | $861.2 | 5.1% | $985.0 | 7.0% | -12.6% |
| ODM Direct | $4,118.7 | 24.3% | $2,834.5 | 20.0% | 45.3% |
| Others | $3,528.7 | 20.8% | $2,965.5 | 20.9% | 19.0% |
| Total | $16,982.6 | 100.0% | $14,159.5 | 100.0% | 19.9% |

Source: IDC's Worldwide Quarterly Server Tracker, November 2017
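The market-share and growth columns in the revenue table are derived figures, so they can be recomputed from the two revenue columns as a consistency check. This is a minimal sketch: the dictionary simply transcribes the table, and because IDC works from unrounded data, an occasional difference in the last decimal place would not by itself indicate an error.

```python
# Revenue in US$ millions, transcribed from the IDC 3Q17 table.
revenue = {
    # company: (3Q17 revenue, 3Q16 revenue)
    "HPE / New H3C Group": (3317.4, 3355.4),
    "Dell Inc": (3070.4, 2226.7),
    "IBM": (1093.7, 864.4),
    "Cisco": (992.5, 928.0),
    "Lenovo": (861.2, 985.0),
    "ODM Direct": (4118.7, 2834.5),
    "Others": (3528.7, 2965.5),
}


def yoy_growth(current: float, prior: float) -> float:
    """Year-over-year growth in percent."""
    return (current / prior - 1.0) * 100.0


def market_share(value: float, total: float) -> float:
    """Share of the market total in percent."""
    return value / total * 100.0


total_3q17 = sum(cur for cur, _ in revenue.values())
total_3q16 = sum(prev for _, prev in revenue.values())

if __name__ == "__main__":
    print(f"Total: ${total_3q17:,.1f}M vs ${total_3q16:,.1f}M "
          f"({yoy_growth(total_3q17, total_3q16):.1f}% growth)")
    for name, (cur, prev) in revenue.items():
        print(f"{name:22s} share {market_share(cur, total_3q17):5.1f}%  "
              f"growth {yoy_growth(cur, prev):6.1f}%")
```

Every recomputed share and growth rate rounds to the value IDC reports, and the rows sum to the stated totals of $16,982.6 million and $14,159.5 million.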

Top 5 Companies, Worldwide Server Unit Shipments, Market Share, and Growth, Third Quarter of 2017 (Units are in Thousands)

| Company | 3Q17 Units | 3Q17 Market Share | 3Q16 Units | 3Q16 Market Share | 3Q17/3Q16 Unit Growth |
|---|---|---|---|---|---|
| 1. Dell Inc* | 503.0 | 18.8% | 452.3 | 18.8% | 11.2% |
| 1. HPE / New H3C Group* | 501.4 | 18.8% | 510.2 | 21.2% | -1.7% |
| 3. Lenovo* | 151.8 | 5.7% | 226.8 | 9.4% | -33.1% |
| 3. Inspur* | 149.1 | 5.6% | 108.2 | 4.5% | 37.9% |
| 3. Super Micro* | 136.7 | 5.1% | 106.4 | 4.4% | 28.4% |
| 3. Huawei* | 133.3 | 5.0% | 127.9 | 5.3% | 4.2% |
| ODM Direct | 668.0 | 25.0% | 461.7 | 19.2% | 44.7% |
| Others | 428.0 | 16.0% | 409.7 | 17.0% | 4.5% |
| Total | 2,671.2 | 100.0% | 2,403.3 | 100.0% | 11.1% |

Source: IDC's Worldwide Quarterly Server Tracker, November 2017

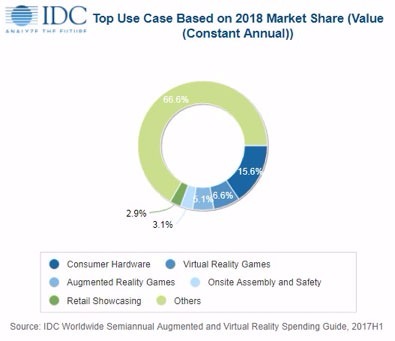

Worldwide spending on augmented reality and virtual reality (AR/VR) is forecast to reach $17.8 billion in 2018, an increase of nearly 95% over the $9.1 billion International Data Corporation (IDC) expects will be spent this year. A new update to IDC's Worldwide Semiannual Augmented and Virtual Reality Spending Guide also shows that worldwide spending on AR/VR products and services will continue to grow at a similar rate throughout the remainder of the 2017-2021 forecast period, achieving a five-year compound annual growth rate (CAGR) of 98.8%.

"Virtual reality will continue to drive greater levels of spending in the next 12-18 months, as both consumer and commercial use cases gain traction. There is currently a huge appetite from companies that see tremendous potential in the technology, from product design to retail sales to employee training," said Tom Mainelli, program vice president, Devices and AR/VR at IDC. "Meanwhile, the augmented reality market will deliver more modest levels of spending near term with mobile AR on smartphones and tablets likely to garner the most attention from consumers, while head-mounted displays will primarily sell into commercial use cases."

The consumer sector will remain the single largest source of spending for AR/VR products and services with worldwide spending in 2018 expected to reach $6.8 billion. Nearly three quarters of this total will be for VR hardware and software while AR spending will be dominated by software purchases. Gaming will be the dominant AR/VR use case for consumers throughout the forecast period. The five-year CAGR for consumer AR/VR spending will be 45.2% with total spending exceeding $20 billion in 2021.

In contrast, the commercial sectors will represent more than 60% of AR/VR spending in 2018 and grow to more than 85% of the worldwide total in 2021. Each of the five commercial sectors is forecast to undergo triple-digit spending growth throughout the forecast, led by the public sector with a five-year CAGR of 156.7%. The largest of the commercial sectors in 2018 will be distribution and services ($4.1 billion), led by the retail, transportation, and professional services industries. The second largest sector will be manufacturing and resources ($3.2 billion) with balanced spending across the process manufacturing, construction, and discrete manufacturing industries. Retail will be the industry with the largest AR/VR spending in 2018, followed by the process manufacturing and construction industries.
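The consumer and commercial figures can be cross-checked against the 2018 total. The sketch below assumes that all AR/VR spending not attributed to consumers is commercial spending (the article does not name any third category), and uses only the 2018 dollar figures quoted above.

```python
# 2018 forecast figures from the IDC spending guide quoted above (US$ billions).
TOTAL_2018 = 17.8
CONSUMER_2018 = 6.8
DISTRIBUTION_SERVICES_2018 = 4.1
MANUFACTURING_RESOURCES_2018 = 3.2

# Assumption: everything not spent by consumers is commercial spending.
commercial_2018 = TOTAL_2018 - CONSUMER_2018
commercial_share = commercial_2018 / TOTAL_2018

# Spending left for the other three commercial sectors after the two largest.
other_sectors_2018 = (commercial_2018 - DISTRIBUTION_SERVICES_2018
                      - MANUFACTURING_RESOURCES_2018)

if __name__ == "__main__":
    print(f"Commercial: ${commercial_2018:.1f}B ({commercial_share:.1%} of total)")
    print(f"Other three commercial sectors: ${other_sectors_2018:.1f}B")
```

Under that assumption, commercial spending comes to about $11.0 billion, or roughly 61.8% of the total, which agrees with the "more than 60%" figure; about $3.7 billion would remain for the other three commercial sectors, the public sector among them.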

Commercial use cases will vary from sector to sector and industry to industry. In the distribution and services sector, retail showcasing and online retail showcasing will be the two largest use cases, with combined spending of more than $950 million in 2018. Online retail showcasing will also experience exceptional spending growth, with a five-year CAGR of 225%. Onsite assembly and safety, process manufacturing training, and industrial maintenance will be the largest use cases in the manufacturing and resources sector. In the public sector, infrastructure maintenance and government training will be the two largest use cases in 2018.

"Commercial entities are ready to embrace virtual reality for both customer-facing use cases and internal ones," said Marcus Torchia, research director of IDC Customer Insights & Analysis. "There are a lot of opportunities here to develop commercial-grade hardware and applications that meet the needs of these industries. Meanwhile, phone-based AR is likely to garner most of the excitement for the near term and many companies are already experimenting with AR apps and services. Some of these will be useful, many won't be, but over the course of the next 12–18 months, we should start to see developers beginning to grasp the potential of AR."

On a geographic basis, the United States will be the region with the largest AR/VR spending total in 2018 ($6.4 billion), followed by Asia/Pacific (excluding Japan) (APeJ) ($5.1 billion) and Europe, the Middle East and Africa (EMEA) ($3.0 billion). The U.S. will experience some acceleration in growth over the forecast period, with spending expected to peak in 2020. Meanwhile, APeJ will see its spending growth slow somewhat by the end of the forecast. Both regions will see five-year CAGRs in excess of 100%, trailing Canada (139.9% CAGR) and Central and Eastern Europe (CEE) (113.5% CAGR) as the fastest growing regions. The slowest regional growth will be in Japan, where the CAGR will only reach 36.5%.

With AWS adding 53,000 SKUs in the last few weeks, analysts predict the rise of cloud dealers with simple, fixed-price offerings aimed at untangling this complexity.

At AWS re:Invent, 451 Research revealed how quickly enterprises are moving to hybrid and multi-cloud environments; the growth of the cloud market to $53.3 billion in 2021 from $28.1 billion this year; and the impact of cloud service providers’ ever-expanding portfolio of offerings.

451 Research’s most recent Voice of the Enterprise: Cloud Transformation survey finds that cloud is now mainstream, with 90% of organizations surveyed using some type of cloud service. Moreover, analysts expect 60% of workloads to be running in some form of hosted cloud service by 2019, up from 45% today. This represents a pivot from do-it-yourself, owned-and-operated IT to cloud or hosted third-party IT services.

451 Research finds that the future of IT is multi-cloud and hybrid with 69% of respondents planning to have some type of multi-cloud environment by 2019.

The growth in multi- and hybrid cloud will make optimizing and analyzing cloud expenditure increasingly difficult. 451 Research’s Digital Economics Unit has analyzed the scope of AWS offerings and reveals that there are already over 320,000 SKUs in the cloud provider’s portfolio. This complexity is likely to increase over time – in the first two weeks of November 2017, for example, AWS added more than 53,000 new SKUs.

“Cloud buyers have access to more capabilities than ever before, but the result is greater complexity. It is a nightmare for enterprises to calculate the cost of computing using a single cloud provider, let alone comparing providers or planning a multi-cloud strategy,” said Dr. Owen Rogers, Research Director at 451 Research. “The cloud was supposed to be a simple utility like electricity, but new innovations and new pricing models, such as AWS Reserved Instances, mean the IT landscape is more complex than ever.”

Flexibility has become the new pricing battleground over the past three months, with Google, Microsoft and Oracle all announcing new pricing models targeted at AWS. Analysts believe there will be a market opportunity for cloud dealers that can resolve this complexity, giving users simple and low-cost prices – similar to how consumer energy suppliers abstract away the complexity of global energy markets.

451 Research’s quarterly Cloud Price Index continues to track the global cost of public and private clouds from over 50 cloud providers.

Cloud market growth

The latest data from 451 Research’s Market Monitor finds that the cloud computing as a service market is expected to grow 27% to $28.1 billion in 2017 compared to 2016. With a five-year CAGR of 19%, cloud computing as a service will reach $53.3 billion in 2021.
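The 19% figure only lines up with the 2017 and 2021 values if the five-year window starts in 2016. The sketch below applies the standard CAGR formula, (end / start)^(1/years) - 1, and backs out the implied 2016 market size from the 27% growth figure; treating that growth rate as exact is an assumption.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, returned as a fraction (0.19 == 19%)."""
    return (end / start) ** (1.0 / years) - 1.0


MARKET_2017 = 28.1   # US$ billions, 451 Research forecast for 2017
MARKET_2021 = 53.3   # US$ billions, 451 Research forecast for 2021
GROWTH_2017 = 0.27   # 2017 growth over 2016

# Assumption: the 27% growth figure is exact, giving an implied 2016 base.
market_2016 = MARKET_2017 / (1.0 + GROWTH_2017)

five_year = cagr(market_2016, MARKET_2021, 5)  # 2016 -> 2021
four_year = cagr(MARKET_2017, MARKET_2021, 4)  # 2017 -> 2021

if __name__ == "__main__":
    print(f"Implied 2016 market: ${market_2016:.1f}B")
    print(f"2016-2021 CAGR: {five_year:.1%}")
    print(f"2017-2021 CAGR: {four_year:.1%}")
```

The implied 2016 base is about $22.1 billion, which gives a 2016 to 2021 CAGR of about 19.2%, matching the reported 19%. Measured from 2017 alone, the rate would be closer to 17.4%.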

The report examines revenue generated by 451 global cloud service providers across infrastructure as a service (IaaS) and platform as a service (PaaS), as well as infrastructure software as a service (ISaaS), which includes IT management as a service and SaaS storage (online backup/recovery and cloud archiving).

The report predicts that IaaS will account for 57% of cloud computing as a service revenue in 2017.

451 Research analysts forecast that ISaaS will see the fastest growth through 2021 with a 21% CAGR, while Integration PaaS will be the fastest growth sector within the PaaS marketplace with a five-year CAGR of 27%.

European frozen food company turns to SOTI MobiControl to make the most secure and productive use of mobile devices.

apetito has partnered with SOTI, a leading IoT and device management expert, to protect its highly sensitive business data across its multi-platform device estate.

For almost 60 years, the European frozen food company has supplied nutritious meals to the health and social care sector. Its ability to protect customer information (often about vulnerable clients) and keep communication confidential is therefore pivotal to the business, not least to ensure it complies with new data protection regulations.

apetito has deployed MobiControl to over 250 iPhones, enabling the IT team to manage devices in the field. It also uses the solution on 60 ruggedised Zebra TC55 devices which provide its delivery drivers with the same management, as well as intelligent route planning and real-time navigation to optimise apetito's daily deliveries of tens of thousands of frozen meals across the UK, France, Germany, the Netherlands and Canada.

Mike Calverley, IT Service Delivery Manager at apetito, explains, "Both categories of devices contain highly sensitive and personal customer information. Most companies only worry about the cost of replacing a device if it's lost but for us the data is far more important. A data breach, either through the loss or theft of a device, could be disastrous."

SOTI's MobiControl provides apetito with a one-stop shop that gives complete peace of mind over data vulnerabilities. The application is used to easily wipe, track, locate and reset devices via the SOTI server.

Richard Smith, regional manager at SOTI, says, "Enterprises often struggle to manage the chaos of connected devices, especially where mobility is critical to their business. apetito's mobility strategy is focused on the security and protection of data to the highest level, without compromising daily routines and working processes. SOTI MobiControl has the key features to eliminate customer data loss; alongside location services and device management, it provides complete peace of mind in every way possible.

"apetito, like many firms, is rightly putting a major focus on cyber security, with new initiatives around data encryption and data loss prevention. This is not only spurred by the need to protect clients, but also the need to comply with new regulation on data protection. A mobility strategy which focuses on security is therefore a vital component to any mobile business - data protection doesn't stop at the desktop. Those who fail to do so could not only face heavy fines but also risk reputational damage and the potential loss of customer confidence."

Mike Calverley concludes, "SOTI MobiControl has become critical to our business in ensuring we are meeting data protection requirements. The maths is simple: it doesn't come down to the number of lost phones the company has been able to recover. Instead, it comes down to the ability to continue to protect our customers and keep communication confidential, which is priceless. We now have plans across our apetito management services to add more devices to the business, including iPads. It will therefore continue to be essential to have a centralised system and base to manage all of our devices at apetito."

NEC Deutschland GmbH has delivered an LX series supercomputer to Johannes Gutenberg University Mainz (JGU), one of Germany’s leading research universities and part of the German Gauss Alliance consortium of excellence in high-performance computing (HPC). The new HPC cluster ranks 65th on the November 2017 TOP500 list of the world's fastest supercomputers and 51st on the Green500 list of the most energy-efficient supercomputers.

This cluster extends the existing MOGON-II cluster, providing a total computational capacity of approximately 1.9 petaflop/s. It offers high-performance computing services for researchers at JGU and the Helmholtz Institute Mainz (HIM), a research institute specializing in high-energy physics and antimatter research. JGU is a member of the “Alliance for High-Performance Computing Rhineland-Palatinate” (AHRP) and offers access to MOGON-II to all universities in Rhineland-Palatinate.

The new MOGON-II HPC cluster upgrade consists of 1040 dual-socket compute nodes, each equipped with two Intel® Xeon® Gold 6130 CPUs, with a total memory of 122 TB across the upgrade.

The nodes are connected through a high-speed Intel® Omni-Path network whose topology allows continuous expansion of the system, accommodating the growing HPC demand from researchers at JGU and HIM.

The MOGON-II cluster is connected to a 5-petabyte NEC LxFS-z parallel file system capable of 80 GB/s of bandwidth. This highly innovative ZFS-based Lustre solution provides advanced data integrity features paired with a high-density, high-reliability design.
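The upgrade's specifications translate into a few useful back-of-the-envelope numbers. This sketch assumes 16 cores per Xeon Gold 6130 (Intel's published specification, not stated in the article), decimal storage units, and that the 80 GB/s figure is sustained aggregate bandwidth; the average memory per node is only indicative, since the article does not say how memory is distributed.

```python
# Figures from the MOGON-II upgrade description.
NODES = 1040
SOCKETS_PER_NODE = 2
# Assumption: 16 cores per Intel Xeon Gold 6130 (Intel spec, not in the article).
CORES_PER_SOCKET = 16
TOTAL_MEMORY_TB = 122
STORAGE_PB = 5
BANDWIDTH_GB_PER_S = 80

total_cores = NODES * SOCKETS_PER_NODE * CORES_PER_SOCKET
# Decimal units assumed: 1 TB = 1,000 GB and 1 PB = 1,000,000 GB.
avg_memory_gb_per_node = TOTAL_MEMORY_TB * 1_000 / NODES
# Time to stream the whole file system once at the quoted bandwidth.
full_read_hours = STORAGE_PB * 1_000_000 / BANDWIDTH_GB_PER_S / 3600

if __name__ == "__main__":
    print(f"Cores in the upgrade: {total_cores:,}")
    print(f"Average memory per node: {avg_memory_gb_per_node:.1f} GB")
    print(f"Full file-system read at 80 GB/s: {full_read_hours:.1f} hours")
```

Under these assumptions the upgrade adds 33,280 cores with an average of about 117 GB of memory per node, and reading the full 5 PB file system once would take roughly 17.4 hours.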

“We have been working together with NEC for many years now, and we are happy to confirm that this collaboration has always been very fruitful to our research members and to the excellence in research at Mainz University. The high sustained performance and stability of NEC’s HPC solution, as well as the dedication and skill of their team continuously deliver exceptional results,” emphasizes Professor André Brinkmann, Head of the Zentrum für Datenverarbeitung and of the Efficient Computing and Storage Group at JGU.

“We are honored to see Johannes Gutenberg University Mainz and Helmholtz Institute Mainz, two highly respected members of the research community, adopt NEC’s latest HPC solution as part of extending the capabilities of the MOGON-II cluster,” said Yuichi Kojima, Vice President HPC EMEA at NEC Deutschland.

By moving mission-critical applications to the public cloud, Harte Hanks increases the stability and reliability of its hybrid IT infrastructure.

Harte Hanks has selected Ensono, a leading hybrid IT services provider, to manage its digital transformation.

Harte Hanks began working with Ensono in 2016, when the hybrid IT services provider managed Harte Hanks’ physical data centres in Texas and Pennsylvania. As its clients' needs changed, the inflexibility of those data centres made it difficult for Harte Hanks to serve clients effectively. The growing demand for analytics in the marketing field is ultimately what led Harte Hanks to transition to the public cloud.

The marketing firm leveraged Ensono’s infrastructure management expertise to deploy select applications to the public cloud, including a new analytics platform. From delivery to support, Ensono seamlessly migrated Harte Hanks to AWS. By moving critical applications to the public cloud, Harte Hanks has been able to focus on meeting business objectives while providing superior service to its clients. Harte Hanks has also experienced a significant decrease in overall downtime and fewer tickets processed monthly.

“We’re able to better focus on meeting the needs of our customers without the burden of day-to-day management of our hosting infrastructure,” said Russ Thackston, managing director of marketing technology services at Harte Hanks. “Ensono demonstrates a level of expertise and technological capabilities superior to other vendors, which is why we moved forward with them and plan to have a long relationship in the future.”

In addition to leveraging Ensono for its managed public cloud services, Harte Hanks is continuing to use Ensono for physical infrastructure management.

“When Harte Hanks came to us, we knew that our managed public cloud service was the best solution to fit their business needs. Ensono had a strong understanding of where they started and where they wanted to go,” said Brett Moss, senior vice president and general manager of hyperscale cloud at Ensono. “We look forward to our continued partnership with Harte Hanks as they move forward in their digital transformation journey.”

Swedish municipality Haninge has selected Tieto to supply it with user-friendly IT services. Under the agreement, Tieto will deliver IT workstations to facilitate the work of both school students and the municipality’s administrative staff.

The new solutions are in line with Haninge’s overall digitalization strategy and will make the municipality’s current work processes more efficient and allow its staff to communicate better with citizens wherever they are. The two-year agreement, which comes into force on March 31, 2018, is worth a total of 32 million SEK and includes an option to extend it for another two years.

“Haninge municipality must be present in whatever way businesses and citizens may want to reach us. Modern IT solutions are essential to ensure we can provide digital services in ways that are both socially and financially sustainable. With this agreement, we improve Haninge’s ability to offer the types of services that citizens expect, without incurring higher costs,” says Magnus Strömberg, IT Manager at Haninge municipality.

The agreement covers the implementation of IT workstations, including hardware, for the municipality’s 4,400 staff and in some schools. Tieto will also deliver operating services, printing services and media equipment for conference rooms, although the hardware for that part of the order will be delivered by a partner.

In 2016, Haninge decided to modernize its IT infrastructure to secure efficiency, quality and sustainability, and the procurement process for this agreement followed.

“Haninge is one of our biggest customers within the municipal sector. We’re very proud that they have granted us renewed confidence, allowing us to continue to support them on their digitalization journey, which now also includes schools. Haninge has a very clear strategy when it comes to using IT services in a way that supports its citizens and Tieto looks forward to helping the municipality to reach its goals,” says Mats Brandt, head of public sector, Tieto Sweden.

Organisations possess an ever-growing array of hardware. This kit is also home to an enormous volume of mobile data: information that, if used intelligently, should allow businesses to make better-informed decisions.

By Chris Walters, UK&I Country Manager, NetMotion Wireless.

When technology fails or it becomes difficult to get connected, employees who are mobile or who work remotely can get very frustrated. IT departments can put this operational and business data to productive use, analysing connectivity status and potential security threats.

A recent survey by WBR Digital, commissioned by NetMotion Software, highlighted the problems faced by many employees. Nearly 50% of those surveyed said they could not get even common mobile problems diagnosed. And while nearly 40% of respondents reported a significant number of connectivity issues every month, many organisations were looking to expand their mobile operations, which is likely to make matters worse.

When considering how to use big data to support mobile IT operations, IT departments should keep some common challenges in mind:

The information contained within most network adapters and operating systems is very useful, as it can include valuable data on the behaviour and health of a device along with any connectivity issues. The problem is that traditional operational and business intelligence tools cannot extract this information: they were not designed to deal with mobile deployments. BI visualisation tools should work across all platforms, something that matters more and more as an organisation grows. Aside from being optimised for mobile use, any such platform must be able to offer information about users, networks and devices both inside and outside the corporate firewall.

Sometimes a suitable tool already exists within an organisation, but depending on which department ‘owns’ it, control issues can leave other teams waiting for access. Ideally, of course, every team would have access to the perfect solution for their needs. However, if money is tight, teams will need to compromise accordingly. The IT team and those holding the purse strings should communicate closely to achieve a win-win outcome.

Any tool that is eventually selected should help IT teams perform real-time analysis and receive alerts on mobile connectivity, security and performance, so that they can make faster, better-informed decisions. Most available mobile BI solutions can identify connectivity problems and address security issues on mobile devices.

Organisations will continue to invest in mobility, increasing the number of remote workers and adding new devices and business-critical mobile applications. They will therefore need more effective solutions to ensure connectivity and data protection. Purpose-built mobile business and operations solutions are the best way to maximise performance and get the most value from any mobile deployment; the right tools should automate the analysis of mobile data.

Companies need to learn how to work better with big data, analysing that information for mobile workers and finding ways to tailor it to specific tasks. If they do not, they risk failing to deliver efficiencies in big data analysis.

Until organisations work out the best way to collect and present data, we’re unlikely to see a good blend of mobile technology and actionable analysis of big data. Most companies will require years of testing and adjustment: a trial-and-error approach at best.

‘Digital workplaces are the natural conclusion,’ says Jordi Suner, vice-president, product management, ASG Technologies.

When Bring Your Own Device (BYOD) concerns were at their peak, a couple of statistics stuck in my mind far longer than any others.

The first, from Gartner, was that 50% of Generation Y and Z workers felt they had better technology at home than at work. The other, from Forrester Research, revealed that 36% of information workers were willing to invest their own money in a laptop if they could have one of their choice. In other words, they would rather spend their own hard-earned salary to work more effectively than jeopardise their productivity by using what they saw as inferior technology supplied by their employer.

These sentiments sum up the BYOD challenge – and the related problem of shadow IT – in a nutshell. Although many companies have found ways to make peace with the invasion of consumer technologies, or at least discovered how to make their usage more secure, the situation has continued to evolve. Now it appears to have come to a natural conclusion in the emergence of the digital workplace and innovations in workspace technology.

Paul Miller, CEO and founder of the Digital Workplace Group, describes the digital workplace as, “the technology-enabled space where work happens – the virtual, digital equivalent of the physical workplace.” Although the concept embraces processes and workflow, at its heart is the technology layer that manages everything a user needs to do their job flexibly and effectively, at any time and from anywhere. To distinguish this technology layer from the broader concept, it is sometimes known as the workspace.

Workspaces set out to balance the yin and yang of business: freedom of choice on one side, security and compliance on the other. By their nature, workspaces are role-based, intuitive and customisable. Ideally, they provide a personalised interface to applications, mail, content, diary, self-service help or whatever else the user needs to be productive, via a ‘single pane of glass’ experience.

Critically for BYOD, access to the workspace and the user experience must be the same whether viewed on a PC, laptop, tablet or smartphone. It is not uncommon for employees today to use up to five devices to access corporate information. By providing a consistent single point of access, regardless of the device used, employers can streamline business processes, maximise productivity and save time.

Intuitive, web-based access to applications, services and content is prepared and presented to employees according to their department and role. Workspaces can then be modified to meet individual requirements and preferences, and end-users can customise them further to become even more productive. Integration with an enterprise service store lets users search for an app or service and have it added to their workspace automatically.

While users can use the technology in any way they want, they can be assured that they do so in a safe and secure environment. Configuration drift, often caused by end-users installing their own unapproved software, is no longer a problem, because workspaces provide central governance: end-users can add their favourite SaaS and local applications, but only those for which access has been approved. This eliminates the need to bypass the IT department and use unsanctioned software, and it balances the employer’s centralised control with employees’ consumer-like technology expectations.

The workspace works well for the IT department because there’s no longer a focus on managing the entire operating system, but rather on delivering the required role-based applications, data and services directly to a browser-based dashboard in a targeted manner.

Beyond the compelling cost and time savings, digital workspaces give employees the freedom and flexibility to do their jobs in the best way available, wherever they are working. Employees are empowered to do their best work when technology supports their role instead of inhibiting it. Employers often talk about needing to delight their customers; just imagine the business outcomes they could achieve by delighting their employees too.

If this is where BYOD finds its natural outcome, it’s worked out better than we ever thought it would.

The SVC Awards reward products, projects and services, and honour companies and teams operating in the cloud, storage and digitalisation sectors. They recognise the achievements of end-users, channel partners and vendors alike and have become established as one of the most prestigious events recognising the innovation and dynamism of the IT sector.

Paddington’s Hilton Hotel was the venue for a highly successful and enjoyable evening – one senses that Isambard Kingdom Brunel, engineer of the Great Western Railway and the founder and first managing director of the awards venue, would have approved of the engineering achievements being celebrated at the SVC Awards.

A drinks reception, sponsored by Touchdown PR, was followed by an excellent three-course dinner and comedy courtesy of Edinburgh Festival regular Jimmy McGhie.

STORAGE PROJECT OF THE YEAR

Sponsored by: IGEL

Award presented by: Iris Hatzenbichler-Durchschlag, Director Marketing

Runner up: Mavin Global supporting The Weetabix Food Company

WINNER: DataCore Software supporting Grundon Waste Management

Collecting: Brett Denly, Regional Director

CLOUD/INFRASTRUCTURE PROJECT OF THE YEAR

Award presented by: Jason Holloway, Director of IT Publishing at Angel

Runner up: Axess Systems supporting Nottingham Community Housing Association

WINNER: Navisite supporting Safeline

Collecting: Elizabeth Redpath, Marketing Director

HYPER-CONVERGENCE PROJECT OF THE YEAR

Award presented by: Peter Davies, Senior Sales Executive, IT portfolio of Angel titles and events

Runner up: HyperGrid supporting Tearfund

WINNER: Pivot3 supporting Bone Consult

Collecting: Chris Deacon of Pivot3 and Ole Nielsen of Bone Consult

UK MANAGED SERVICES PROVIDER OF THE YEAR

Presented by: Philip Alsop, Editor, Digitalisation World stable of publications

Runner up: Storm Internet

WINNER: EBC GROUP

Collecting: Richard Lane, Group Managing Director

VENDOR CHANNEL PROGRAM OF THE YEAR

Sponsored by: Touchdown PR

Presenting: James Carter, CEO

Runner up: Veeam

WINNER: NetApp

Collecting: Irene Marin, Sales Manager, and Kayleigh Bull, Sales Representative

INTERNATIONAL MANAGED SERVICES PROVIDER OF THE YEAR

Presented by: Jason Holloway

Runner up: Claranet

WINNER: Datapipe

Collecting: Tony Connor, Marketing Director, EMEA

BACKUP & RECOVERY / ARCHIVE PRODUCT OF THE YEAR

Sponsored by: Impartner

Award presented by: Pierre Poggi, Impartner EMEA Sales Director

Runner up: NetApp

WINNER: Altaro Software

Collecting: Colin Wright, VP EMEA Sales

CLOUD-SPECIFIC BACKUP & RECOVERY PRODUCT OF THE YEAR

Sponsored by: EBC Group

Award presented by: Richard Lane, Group Managing Director

Runner up: Acronis

WINNER: Veeam Software

Collecting: Jason Holloway on Veeam’s behalf

STORAGE MANAGEMENT PRODUCT OF THE YEAR

Award presented by: Peter Davies

Runner up: SUSE

WINNER: Virtual Instruments

Collecting: Sean O’Donnell, Managing Director EMEA

SOFTWARE-DEFINED/OBJECT STORAGE PRODUCT OF THE YEAR

Award presented by: Phil Alsop

Runner up: DDN Storage

WINNER: Cloudian

Collecting: Regional Sales Managers, Dan Chester & Arian Everett

SOFTWARE DEFINED INFRASTRUCTURE – PRODUCT OF THE YEAR

Award presented by: Jason Holloway

Runner up: Runecast Solutions

WINNER: SUSE

Collecting: Jeff Kirkpatrick, UK Alliances Manager

HYPER-CONVERGENCE SOLUTION OF THE YEAR

Award presented by: Peter Davies

Runner up: Pivot3

WINNER: Scale Computing

Collecting: Regional Channel Manager Doug Williams & EMEA Systems Engineer, Leonard Powers

HYPER-CONVERGENCE BACKUP AND RECOVERY PRODUCT OF THE YEAR

Award presented by: Phil Alsop

Runner up: Cohesity

WINNER: ExaGrid

Collecting: Graham Woods, VP International Sales Engineering

PLATFORM AS A SERVICE (PAAS) SOLUTION OF THE YEAR

Sponsored by: NetApp

Award presented by: Kayleigh Bull, NetApp

Runner up: SnapLogic

WINNER: Cast Highlight

Collecting: Pierre Poggi of Impartner, on behalf of Cast Highlight

SOFTWARE AS A SERVICE (SaaS) SOLUTION OF THE YEAR

Award presented by: Jason Holloway

Runner up: Impartner

WINNER: Adaptive Insights

Collecting: Rob Douglas, VP UKI & Nordics

IT SECURITY AS A SERVICE SOLUTION OF THE YEAR

Award presented by: Peter Davies

Runner up: Alert Logic

WINNER: Barracuda MSP

Collecting: Jason Howells, Director of EMEA MSP Business

CLOUD MANAGEMENT PRODUCT OF THE YEAR

Award presented by: Phil Alsop

Runner up: Zerto

WINNER: HyperGrid

Collecting: Doug Rich, VP EMEA

STORAGE COMPANY OF THE YEAR

Sponsored by: SUSE

Presented by: David Winter, Data Centre Sales

Runner up: NetApp

WINNER: DataDirect Networks (DDN)

Collecting: Ed Browne, UK&I and Nordics Sales Director; Chris Kenny, VP and General Manager, Europe and International Sales; and Mark Rothwell, Sales Manager UK.

CLOUD COMPANY OF THE YEAR

Award presented by: Jason Holloway

Runner up: Databarracks

WINNER: Six Degrees Group

Collecting: James Hall, Sales Director

HYPER-CONVERGENCE COMPANY OF THE YEAR

Award presented by: Peter Davies

Runner up: Pivot3

WINNER: Cohesity

THE STORAGE INNOVATION OF THE YEAR

Presenting the award: Phil Alsop

Runner up: Nexsan

WINNER: Excelero

Collecting: Axel Rosenberg, Senior Technical Director

THE CLOUD INNOVATION OF THE YEAR

Presenting the award: Peter Davies

Runner up: StaffConnect

WINNER: Zerto

Collecting: Peter Godden, VP EMEA

HYPER-CONVERGENCE INNOVATION OF THE YEAR

Presenting the award: Jason Holloway

Runner up: Syneto

WINNER: Schneider Electric

Collecting: Yakov Danilevskij, Head of Strategic Marketing

DIGITALISATION INNOVATION

Sponsored by: Touchdown PR

Presenting the award: James Carter, CEO

Runner up: MapR

WINNER: IGEL

Collecting: Iris Hatzenbichler-Durchschlag, Director Marketing

SVC INDUSTRY AWARD

Each year Angel likes to recognise a significant contribution to, or outstanding achievement in, the industry. This award is not voted for by readers but decided by the publisher and editorial staff.

Presented by: Jason Holloway, Director of IT Publishing at Angel Business Communications.

SVC Industry Award – Zerto

Collecting: Peter Godden, VP of EMEA

Our first industrial revolution was characterised by steam- and water-powered machinery, our second by linear automated assembly lines, and the third by digitalisation, e-commerce and social networks. Technological innovations have been improving the way we live and work for centuries, and Industry 4.0 is the next logical step in the process.

By Gary McKay, CEO and founder of APPII.

A combination of artificial intelligence (AI), robotics, the Internet of Things (IoT) and blockchain technologies are set to streamline processes in an unprecedented way, saving us time and money, and ultimately revolutionising the way that we live.

Industry 4.0 will see many processes that have typically been carried out manually taken over by autonomous digital systems. For example, within the home, this could involve your heating turning on when your car tells it that you’ll be home soon and that it’s cold outside. On a wider scale, these automated processes could diminish the role of broker services across many sectors.

Blockchain’s potential to reduce the need for broker services has already been well documented in the financial sector. Real-world applications of blockchain technology could save between US$5 billion and US$10 billion by eliminating the administrative work involved in running claims and compliance checks. Essentially, blockchain is a vast, open and decentralised ledger in which information can be stored in a form that is both interoperable and immutable. Because the stored information is verified, eligibility for a loan, for instance, can be decided instantly.
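To make the ‘immutable ledger’ idea more concrete, here is a minimal Python sketch (our own illustration, not taken from the article or any blockchain platform) of the hash-chaining that underlies it: each record stores the SHA-256 hash of the record before it, so altering an earlier entry breaks the link that follows and is immediately detectable. It assumes a single in-memory list and deliberately omits what makes a real blockchain decentralised (peer-to-peer replication, consensus, digital signatures); the record contents and function names are hypothetical.

```python
import hashlib
import json


def block_hash(block: dict) -> str:
    """Return the SHA-256 hex digest of a block's contents (keys sorted for determinism)."""
    payload = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_block(chain: list, data: str) -> None:
    """Append a record that points back to the hash of the previous block."""
    # The first ("genesis") block has no predecessor, so it uses a placeholder hash.
    prev_hash = block_hash(chain[-1]) if chain else "0" * 64
    chain.append({"index": len(chain), "data": data, "prev_hash": prev_hash})


def is_valid(chain: list) -> bool:
    """Check that every block still points at the current hash of its predecessor."""
    # Editing the newest block is not caught here, because nothing links to it yet;
    # in practice, replication and consensus across many nodes protect every block.
    return all(
        chain[i]["prev_hash"] == block_hash(chain[i - 1])
        for i in range(1, len(chain))
    )


# Hypothetical verified records of the kind a lender might rely on.
ledger: list = []
append_block(ledger, "income verified by employer")
append_block(ledger, "identity verified by bank")
append_block(ledger, "credit history verified by bureau")
print(is_valid(ledger))  # True

ledger[0]["data"] = "income figure altered"  # tamper with an early record
print(is_valid(ledger))  # False
```

Hashing on its own makes tampering detectable rather than impossible; the practical immutability described above comes from many independent parties holding, and agreeing on, copies of the same chain.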

Prospective blockchain applications span virtually all broker-service sectors, including paralegal, art, real estate, insurance and recruitment. As the technology that underpins the outsourcing of manual processes across so many sectors, with the potential to save vast amounts of time and money, blockchain is set to be a fundamental pillar of Industry 4.0. As a result, blockchain start-ups are of great interest to investors.

Blockchain start-ups offering platforms that facilitate financial services were the first to benefit from capital injection, with over $2 billion reaching finance-focused blockchain start-ups in the last year alone through Initial Coin Offerings (ICOs) and token sales. However, as the broad applicability of these platforms has become increasingly apparent, capital supporting blockchain projects has begun to move into other markets.

As blockchain is positioned as an essential, foundational aspect of Industry 4.0, it has become an attractive investment opportunity. Beyond that, blockchain-supported cryptocurrencies and tokens are also projected to have a profoundly disruptive impact on the way that start-ups raise capital, and on the way that value is transferred within sectors on a wider scale. This is known as the token economy.

In the recruitment sector, for example, tokens could be used to reward organisations that verify information on CVs, or to reward candidates whose CVs are viewed by organisations. Currently, most people give away their data for free, but by changing the way we value this exchange, a token economy could drastically alter the way we share data. Token incentives are likely to create a comprehensive network of verified individuals, who could then use their tokens in a variety of ways. The token economy is a fundamental shift from today’s traditional means of exchanging value, and it democratises the distribution of benefit in business models where benefit usually finds its way to the centre, and to the few.

Of course, crypto-sceptics have identified the use of tokens as risky, due to the market’s history of short-term volatility, but investors are looking at blockchain as being capable of offering longer-term delivery of value, and are expecting great returns once Industry 4.0 is in full swing.

Essentially, Industry 4.0 is the next rational step in centuries’ worth of technological advancements designed to improve the quality of our lives. Our lives are set to become increasingly streamlined as manual processes are outsourced to decentralised automated networks. These networks are intrinsically dependent on blockchain technology, because it offers the most secure way of storing data, and this has not gone unnoticed by sophisticated investors. Blockchain and the token economy will fund the fast-approaching fourth industrial revolution and help it reach its full potential.

It’s not a new concept, but service providers still need to improve their relationships with customers. The ultimate aim is to achieve a good understanding of every customer and their issues, resulting in better relationships and the delivery of services that advance the productivity of each customer’s business.

By Edmund Cartwright, Marketing and Business Development Director at Highlight.

However, achieving the right mindset within large managed service providers remains a significant task; moving to solution selling is one of the most difficult challenges they face. The main issue is that they continue to focus on ever-increasing sales targets in a highly commoditised market. Frequent references to customer churn rates betray an ingrained acceptance that customers will regularly look for new providers.

Reliance on Service Level Agreements (SLAs) is another negative step on the road to developing good customer relationships. The SLA is a by-product of providers failing to deliver a good-enough service and is built on penalties and fines imposed when services underperform. This is extremely unhelpful to customers, who are not interested in beating their providers with a stick; they just want good services that enable their businesses to run efficiently.

Service providers need to change this approach. They need to turn around their relationships with customers by becoming trusted advisors. This can only be achieved by giving sales, service management and operations teams the information they need to create truly unique customer relationships. This will not only improve contract renewal rates but also increase margins on accounts.

A Tactical Plan

Service providers will often say that they have all the tools they need when it comes to monitoring their customers’ networks and applications. The problem is that technologies are usually managed by engineers, using tools that by their very nature are highly technical and rarely designed to be understood by those managing a business. These tools do not facilitate interaction with the end customer and certainly do not allow a proactive and inclusive level of customer service.

Service managers can spend many hours, if not days, requesting data from their technical teams to create reports for the customer; only for the customer to find the report is not a true reflection of their experiences. This begins the cycle of mistrust, misunderstandings and re-negotiations.

Highlight offers a way to revolutionise what service providers do. It is designed for providers who want to share with clients a clear, business-level picture of how their networks and applications are performing, and it gives service providers a tangible way to reverse poor contract renewal rates and minimise churn.

Regardless of whether an application or service is managed in-house or by third-party suppliers, technical and non-technical decision makers need to know if there is a problem with the delivery of their business services, together with an understanding of where that problem is, who is affected and for how long. They also want to know when the problem is resolved, whether by internal teams or external service providers.

Highlight answers these questions. It is a cloud service that gives sales teams, service managers and operations management a clear view of all managed services through a single pane of glass. It provides accurate, impartial evidence of application and network service performance across all locations, helping to create trusted advisor relationships between service providers and their customers.

Improving Services

Alongside delivering visibility and analytics, Highlight gives service providers and enterprise customers access to the same accurate, easy-to-use graphical information. This critical data supports the right conversations about issue resolution, planning and capacity management. It also supports ICT expansion, new technology deployments and cloud transition initiatives, giving both providers and corporate customers confidence in the partnership.

Alerts are a powerful feature that enables providers to go beyond the SLA and deliver an unrivalled customer experience. Sales and service managers can also easily set up reports, delivered to their inbox monthly (on the first of the month), weekly or daily, showing all issues and outages for a customer. The service provider can then demonstrate proactive service management, leading to higher contract renewal rates.

Highlight has the ability to quickly identify all the applications on a network, show how vital services are performing and monitor the growing use of unauthorised applications. It provides insight into the performance of business-critical applications, wherever they are hosted, to benefit both business users and technical teams.

It also shows what networks are doing, moment by moment, in a clear and business-friendly way. Whatever the wide area network solution, whether IPVPN MPLS, i-WAN or a hybrid network, Highlight shows the demands that different applications make on the WAN and reveals whether a hybrid WAN is working and re-routing traffic as required to match set policies. Once a controller is configured, it automatically detects all wireless access points. This real-time view shows the usage and health of a WiFi estate, across all access points, on one panel.

Market Differentiation

Fast and easy to deploy, Highlight has simple pricing structures and zero CAPEX, making it a budget-friendly business enabler. Providers and corporate customers gain better business results in network and application service management, operations and customer experience. It is currently used in 90 countries on 6,000 enterprise networks, including those of 40% of the FTSE 100.

Reduced mean time to repair (MTTR) – Highlight’s graphical display surfaces the most relevant performance information, enabling proactive issue management. Service providers and customers can see issues as they develop, so resolutions can be sought quickly and efficiently, which customers notice as a significant improvement in service.

Improved service reviews – Real-time and static reports, drawing on both raw and pre-summarised data, are readily available from a single platform, enabling service managers to provide high-quality service reviews whenever the customer wants without tying up resources.

Increased proactivity – Service managers can work proactively with their customer contacts to prevent problems, and can add value to their customers’ IT infrastructure and application capacity planning.
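For readers less familiar with the first benefit listed above, MTTR is conventionally the average time taken to restore service across incidents. A sketch of the usual definition, assuming a reporting period with n incidents where incident i begins at t_i^failed and is resolved at t_i^restored (some providers measure from detection or ticket creation instead, so the start point is an assumption), with an illustrative set of three incidents lasting 2, 3 and 4 hours:

```latex
\mathrm{MTTR} = \frac{1}{n}\sum_{i=1}^{n}\left(t_i^{\mathrm{restored}} - t_i^{\mathrm{failed}}\right),
\qquad \text{e.g.}\quad \frac{2\,\mathrm{h} + 3\,\mathrm{h} + 4\,\mathrm{h}}{3} = 3\,\mathrm{h}.
```

Because each interval includes the time before anyone notices the fault, seeing issues as they develop shortens every term in the sum, which is how better visibility translates into a lower MTTR.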

Case study: Gamma selects Highlight to enhance Customer Experience

Gamma chose Highlight to help deliver the highest level of customer experience. Gamma offers Highlight’s monitoring services to its medium-sized and large enterprise customers so that they have clear, real-time visibility into the performance of their managed communications, including voice, data and mobility services.

David Macfarlane, a managing director at Gamma, says, “Highlight enables us to watch over our customers’ experiences as we strive to remove the burden of customers having to manage their own communications infrastructure.”

“In the past, we had one monitoring tool for our broadband environment and another for our Ethernet services, but were missing the ability to monitor our customers’ applications,” adds David. “Highlight was the silver bullet. We now use Highlight internally to monitor the infrastructure and applications across our customers’ environments, but the big bonus is that we share the same tool with customers, so they can see how everything is performing. No information is redacted, and all data is real-time, so customers can hold our feet to the fire to ensure they get the services they expect.”

Highlight’s cloud application is integrated within the Gamma network. It monitors every end point that Gamma supplies, both direct and indirect, capturing data from the equipment, which is then processed by Gamma’s platform and fed automatically into its monitoring and ticketing system. If Highlight picks up an issue – anything from basic availability through to poor application performance – a ticket is raised on Gamma’s platform for an engineer to action.

Highlight has enabled Gamma to move up the value chain in terms of the management information it gives to customers. And customers really value it.

“All our direct customers have access to Highlight and we’re now layering Highlight on to our products, so channel partners can also see the value; they will be able to monitor and share Highlight with their customers who use Gamma infrastructure,” adds David. “Going forward we want to have more application visibility to deliver greater detail into the quality and responsiveness of the applications.”

Gamma also uses Highlight for planning. Because Gamma is a large network operator, Highlight monitors some of its core infrastructure, helping to identify any potential issues. “Mean-time-to-innocence is a favourite term we use, and Highlight allows us to get to what ‘isn’t’ at fault very quickly, so we can then drill down to an engineering level to find the issue,” confirms David.

“The benefits of Highlight are numerous. As a SaaS-based platform, we only consume what we need, when we need it. This means we haven’t had to make large capital investment in complex tools that quickly go out of date. Working with Highlight has been exceptional. It is a high value relationship where they are looking beyond the product at how they can help me solve the challenges in my business.”

Big data has the potential to transform business by enabling leaders to make data-driven decisions faster. Organisations are forecast to spend $187 billion on big data and analytics technology by 2019, an increase of more than 50 per cent from the $122 billion invested in 2015, according to the research firm IDC.

By Francois Cadillon, Vice President of Sales, UK, Ireland, and Southern Europe, MicroStrategy, Inc.

New ways of tapping into this potential are being discovered, enabling organisations to glean actionable insights and turn big data into a real-world competitive edge. Strategies that democratise data and put analytics into the hands of employees across the business, empowering them to make data-driven decisions quickly, yield the best results.

‘Self-service’ business intelligence (BI) is gaining popularity because it enables users across different levels and departments to act on insights and better serve their customers and partners. However, once organisations decide to embark on this approach, many face the challenge of implementing a complete analytics solution that meets the needs of everyone, from business users to senior executives and IT departments. As a result, they rely on a myriad of point solutions, forcing them to grapple with data silos, complicated workflows, limited scalability and lagging user adoption. These challenges, compounded by issues around data quality, poor performance and unfriendly application design, get in the way of realising the true value of data.

To avoid such pitfalls, which hinder growth and efficiency, businesses must balance end-user flexibility with the performance and governance of a true enterprise-grade analytics platform. To ensure success, organisations must establish roles and processes early, publish a verified system of record, and give business users the power to publish new data and dashboards in a governed environment. Widespread adoption can be driven by considering the needs of the user and then providing access to data via web, mobile and desktop applications, all using a single, unified enterprise platform. This systematic approach enables organisations to deploy intelligence everywhere, so that intuition is replaced by data-driven decisions at every level.

However, the road to digitalisation is not without its potential pitfalls. By seeking a more data-driven approach, organisations will start dealing with ever-larger volumes of data, making the availability of high-quality data more important than ever. Unfortunately, the increase in data volume makes it more difficult to deliver data to analysts quickly. That’s where automation through AI and machine learning will prove invaluable. This technology can sift through huge volumes of data and surface the most important insights, freeing up more time for analysts to create data-driven strategies and think critically about the business.

Ensuring data hygiene is also a massive challenge for organisations that are democratising data. Contaminated data can be a costly problem that takes significant time and resources to resolve. Minor inconsistencies introduced into data sets can have compounding effects across multiple departments as users share unverified information with colleagues, clients and others outside the organisation. Without even realising that an issue exists, well-intentioned employees can make decisions based on faulty data, leading to wasted company resources, increased maintenance costs and distorted results.

‘Reverse engineering’ irrelevant, out-of-date or erroneous data is a tedious, time-consuming process. Company resources are diverted to cleaning up and restoring data to its uncontaminated state, allowing the competition to jump ahead in the interim. Prevention is always best. A robust and comprehensive data governance framework can ensure that every user across the organisation is granted the right level of access to the correct information. This, coupled with employee education, is the best way to maintain both the quality and the security of enterprise data.
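One common way to grant ‘the right level of access to the correct information’ is role-based access control (RBAC). The Python sketch below is our own illustration, not a design from the article or any specific BI product: roles map to permitted actions, each dataset lists which roles may touch it, and publishing is allowed only for data marked as part of the verified system of record. All role, dataset and function names are hypothetical, and a real governance framework would load its policies from a directory service or policy store rather than hard-coding them.

```python
from dataclasses import dataclass, field

# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "analyst": {"read", "create_dashboard"},
    "publisher": {"read", "create_dashboard", "publish"},
}


@dataclass
class Dataset:
    name: str
    certified: bool  # is this dataset part of the verified system of record?
    allowed_roles: set = field(default_factory=set)


def can_access(role: str, action: str, dataset: Dataset) -> bool:
    """Allow an action only if the dataset admits the role and the role grants the action."""
    if role not in dataset.allowed_roles:
        return False
    return action in ROLE_PERMISSIONS.get(role, set())


def can_publish(role: str, dataset: Dataset) -> bool:
    """Publishing additionally requires the dataset to be certified."""
    return dataset.certified and can_access(role, "publish", dataset)


sales = Dataset("sales_2018", certified=True, allowed_roles={"analyst", "publisher"})
scratch = Dataset("ad_hoc_upload", certified=False, allowed_roles={"analyst", "publisher"})

print(can_access("viewer", "read", sales))   # False: viewers are not admitted to this dataset
print(can_access("analyst", "read", sales))  # True
print(can_publish("analyst", sales))         # False: analysts cannot publish
print(can_publish("publisher", sales))       # True
print(can_publish("publisher", scratch))     # False: unverified data cannot be published
```

Keeping the certification check separate from the role check means that even a fully privileged user cannot spread unverified data, which targets the contamination problem described above.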

As companies recognise the potential benefits of implementing a holistic approach to harnessing big data, the use of BI and analytics will become more pervasive. Choosing the correct technology is the key to ensuring that users get the most from content they create while operating within a governed enterprise BI ecosystem.

Organisations choose to work with a Managed Security Services Provider (MSSP) for a number of reasons, ranging from security talent shortages and restricted IT budgets to the complexity of staying on top of sophisticated threats and a bewildering number of technology choices for data protection.

By Jan Van Vliet, VP and GM EMEA, Digital Guardian.

Selecting the right partner can give you access to a broad range of specialised skills and tools that you would otherwise lack the time, budget, and resources to develop in-house. Choosing an MSSP can seem a difficult task, but you can simplify the selection process by asking the right questions, so that you gather all the information needed to make the best decision.

The following are five key questions to help you choose the right MSSP for your organisation’s data protection needs.

1. Does the MSSP have the right credentials?

Find out whether the MSSP knows what it takes to protect your data. Have they been in the business of protecting organisations' sensitive data long enough? Do they understand your organisation’s specific data protection needs? The MSSP you choose should not only reduce the workload for your IT security team but also act as a remote extension of that team, protecting your data from insider and outsider threats. Important aspects to consider are industry experience, longevity in business and strong industry partnerships. For example, check whether the MSSP’s staff hold certifications such as Certified Information Systems Security Professional (CISSP) and Computer Hacking Forensic Investigator (CHFI), as these indicate that the team has the necessary skill set and experience to keep your data safe.

2. How qualified is the team?

As you evaluate different MSSPs, make sure to assess the engineers and staff behind the scenes. Are they subject matter experts? Can they keep you ahead of advanced threats? How accessible are they? Where are they based? You also need to know whether they have experience in your particular vertical. An MSSP that specialises in healthcare services may not be a good fit for a logistics and transport or manufacturing company. It’s not that the IT systems are dramatically different; it is really about the language and abbreviations used in those industries and the ability to communicate with end users. A well-qualified MSSP should be willing to answer all the questions above and allow you to interview key team members. After all, you are putting the protection of your sensitive data in their hands.

3. Can the MSSP protect you from advanced threats?

Make sure the MSSP offers a broad range of solutions covering not only data protection but also defence against malware. This is particularly important as today’s malware is becoming increasingly sophisticated, targeted, and difficult to detect. Your MSSP should be looking out for advanced threats on your behalf, allowing you to focus on your core business. A good MSSP will also bring together knowledge from other customers and security sources, including research, threat intelligence and government alerts, to keep you ahead of advanced malware. You could never build up this collective knowledge on your own, so it is a critical benefit of using an MSSP.

4. How will your sensitive data be handled?

It is important to understand where your sensitive data resides and how the managed service provider handles it. Make sure you have complete transparency into how data moves within the environment and that the MSSP provides you with complete data visibility. Ideally, the MSSP should offer to store and handle data in a secure cloud environment.

5. Does the MSSP have good references?

Obtaining public references in the security industry is challenging, so you might not find this information on the MSSP’s website. However, you can talk to the company about how it has improved the security posture of companies comparable to yours in size, needs and industry. If you ask, your MSSP may be able to set up private interviews with its reference customers.

Choosing the right service provider for your data protection needs can seem quite a challenge. After all, there is no “one-size-fits-all” MSSP. However, by arming yourself with these five questions and asking for as much information on these points as possible from each potential MSSP, you will be in a good position to create a shortlist of the most suitable MSSPs that meet your specific needs.

There has been much talk about DevOps in recent times, and its adoption is on the rise. Puppet’s 2017 State of DevOps Report found that the share of respondents working on DevOps teams had almost doubled between 2014 and 2017, to 32%. This rate of adoption reinforces the point that many businesses see an increasing need to introduce DevOps practices into their organisations.

By Alberta Bosco, Puppet senior product marketing manager.

Where did the need for DevOps come from?

Traditionally, IT has been a separate entity that rarely interacted with the rest of the business. However, the emergence of the Internet and other new technologies forced the business and IT departments to interact much more. They also made it much harder for IT to summarily dismiss ideas from the business by arguing it couldn’t implement them.

In addition to an ingrained antipathy to user requirements, most IT teams suffer from an internal inflexibility that reinforces the yawning gap between the software development and IT operations teams. Developers are motivated to deliver new features, but their responsibility ends as soon as the software is handed to operations to deploy. Meanwhile, operations teams have no role in software development, only in its deployment.

In many cases, not only are the objectives of developers and operations completely opposed, but the lack of collaboration between the two also hinders the effective development and implementation of IT projects.

Why do we need DevOps?

By combining software development and IT operations, DevOps seeks to establish practices for collaboration and communication, and to automate the process of software delivery and infrastructure changes. Unsurprisingly, implementing DevOps is not as easy as taking the start of two words and putting them together.

Organisations need to have a clear idea of where they want to go and an understanding of why DevOps will help them achieve it. There are bottlenecks in every organisation. In today’s world where the speed of change is relentless, the development and operations functions are in danger of failing to keep up with those changes unless they collaborate.

Another way to remove some of these delays is to consider automating tasks that take up significant amounts of the IT department’s time. By identifying areas where unnecessary manual processes can be replaced by automation, organisations can significantly improve their performance. The highest-performing organisations have automated significant parts of their configuration management processes, freeing them from wasting time on manual processes that delay deployments and hinder innovation.
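Configuration management automation typically rests on declaring a desired state and changing only what differs from it, so the same run can be repeated safely. The Python sketch below illustrates that idempotent pattern, using a hypothetical in-memory dictionary as a stand-in for a server’s settings. It is a simplified toy under those assumptions, not how Puppet or any other configuration management tool is actually implemented.

```python
from typing import Dict, List


def converge(current: Dict[str, str], desired: Dict[str, str]) -> List[str]:
    """Update `current` so every key in `desired` has the desired value.

    Only settings that differ are changed, and a log line is returned for each
    change. Keys present in `current` but absent from `desired` are left alone.
    Because nothing changes once the states match, a second run is a no-op.
    """
    changes: List[str] = []
    for key, value in desired.items():
        if current.get(key) != value:
            changes.append(f"set {key}: {current.get(key)!r} -> {value!r}")
            current[key] = value
    return changes


if __name__ == "__main__":
    # Hypothetical server settings and target state.
    server = {"nginx": "1.10", "ntp": "enabled"}
    desired = {"nginx": "1.12", "ntp": "enabled", "firewall": "on"}
    print(converge(server, desired))  # two changes: nginx and firewall
    print(converge(server, desired))  # [] on the second run
```

The second call returning an empty list is the property that makes this kind of automation safe to schedule repeatedly: it only does work when the real state has drifted from the declared one.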

DevOps can fulfil the digital transformation vision

IT makes a very important contribution to an organisation’s success (or failure), and it will also play a prominent role in delivering on the vision enterprises have for their business going forward. Many have embarked on their own digital transformation journey to provide a service to customers that is available everywhere and on every device.

DevOps is the means to achieve that objective because it introduces a way for all parts of the IT environment to work together seamlessly on one project. DevOps is not just about technology, but also about culture and processes. Organisations have to stay focused on all three areas to be successful; working on only one or two of them won’t get them there.

Technology, processes and people have to be viewed through the prism of DevOps as the most effective way to deliver better software faster and to achieve the ultimate goal of giving customers the greatest level of service wherever they go.

London drivers spend 110 hours stuck in traffic per year, on top of their usual commute time. Averaged over the year, that is around 18 minutes stuck in traffic every day, with every minute generating more pollution in our cities. Each year, in most major cities, traffic grows heavier, delays get longer and air quality gets worse.
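As a quick check on the daily figure, spreading the 110 annual hours evenly across a 365-day year gives:

```latex
\frac{110\ \text{h} \times 60\ \text{min/h}}{365\ \text{days}}
  = \frac{6600\ \text{min}}{365\ \text{days}}
  \approx 18\ \text{min per day}
```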

By Matt Smith, CTO, Software AG.

Traffic causes many problems for local governments, but there are other issues too: safety and health, crime and environmental concerns all have to be tackled as populations grow. And grow they will: by the year 2050, 70% of the world’s population will be living in cities, according to UNICEF.

A paper by the Brookings Institution, “Benefits and Best Practices of Safe City Innovation”, suggests that technology infrastructure is the first step to managing the cities of the future.

“Investing financial resources in digital infrastructure and solutions pays off in improved productivity, competitiveness, and innovation,” the paper states, adding that funding is one of the biggest difficulties.

So how can municipalities transform themselves into smart, smooth-running digital cities? And where does the money come from? Governments around the world are discussing this now and, in one case, a technology association found a unique solution: Let someone else pay for it.

This summer, German IT association Bitkom held a competition to find a city in Germany that would be the ideal model digital city. In total, 14 mid-sized cities across Germany applied for the title. The top five contestants were Darmstadt, Heidelberg, Kaiserslautern, Wolfsburg and Paderborn.

The Hessian city of Darmstadt won. Starting in early 2018, areas such as the transport sector, energy supply, schools and healthcare will be equipped with the latest digital technologies. In addition, the public administration will add innovative online applications and intelligent delivery services.

Telecommunications networks are also to be expanded and improved. Intelligent traffic control, 5G networks, governmental e-services for citizens, smart hospitals and autonomous driving projects will all be implemented in Darmstadt over the next two years.

Darmstadt has the unique opportunity to implement a data platform that will monitor, control and analyse various processes linked to its administrative duties. Its citizens will also benefit from a range of new digital services, making living in the city easier and more comfortable. This is the greatest innovation project in Germany to date, and the digital transformation of the city will become a lighthouse project for Europe.

Multimodal e-mobility will become a reality: there will be free choice between all kinds of public and individual transport, ensured by close cooperation between energy providers, public transport providers, vehicle manufacturers and rental car companies.

Ambient assisted living, which combines new technologies and the social environment, can improve the quality of living while reducing health care costs.

New services can allow close cooperation between pharmaceutical manufacturers, home appliance manufacturers and medical services.

Darmstadt will receive innovative technology products worth more than €20 million from the sponsors of the competition, which include software and telecommunications companies, as well as financial support from the State of Hesse. It is a win-win for Darmstadt and for the sponsors, who will gain not only knowledge and experience but also bragging rights if the project is a success.

But not every project can be paid for with other people’s money. Experts estimate that cities around the world will invest a total of about $41 trillion over the next 20 years to upgrade their infrastructure to benefit from the Internet of Things (IoT), the network of connected devices. So where will the money come from?

Up-front funding will remain a challenge, but the return on investment should make it more appealing for governments and investors. In the US, one study says that “every $1 increase in state CIO budgets is associated with a reduction of as much as $3.49 in state overall expenditures.”

One way or another, once cities find the money to build connected, smart and responsive infrastructure, it will pay off. Operational efficiencies save cities money through better management of resources such as water and electricity. E-government services can be delivered to citizens faster and at a lower operating cost. Smart traffic and parking management improve productivity and help to attract more businesses to the area. Data collected from IoT devices, such as weather and traffic sensors, can be sold for profit.

There are many, many benefits to becoming a digital city, but the most important one is human. Living in one is better (easier, faster, cleaner and generally more pleasant) than the alternative.