In this issue of DCS you’ll find plenty of food for thought. Our Uptime Institute article gives a great insight into the thinking behind the Green Mountain data centre operation in Norway – where sustainability and energy efficiency are very much top of the agenda. Likewise, the Q and A with The Green Grid has a major focus on the same themes. Cynics might continue to suggest that no organisation will spend money on becoming green unless there’s a worthwhile financial payback, but the good news is that, broadly speaking, sustainability and energy efficiency are, more often than not, about improving the performance of some aspect of the data centre, hence there often is some kind of a monetary ‘reward’.

As I think I’ve written previously, for an industry that does consume significant amounts of energy, the data centre market has managed to remain pretty much ‘under the radar’ when it comes to attracting the attention of the environmental lobby. For as long as data centre owners, operators and industry organisations continue to focus on optimising the performance of the facilities that underpin almost every aspect of 21st century life – and certainly the rapidly developing digital age – then they can rest assured that they will be able to counter any criticism of their environmental performance with a degree of authority and pride.

Key to this successful future is understanding and adopting as appropriate the many new developments and innovations that are becoming such a hallmark of the IT and data centre landscape. The glass half-empty view sees far too many new ideas and products – how is anyone supposed to keep on top of all these developments, let alone decide if, how and when to deploy them? The glass half-full sees nothing but opportunity to continue improving performance, hence productivity and profitability. Our Q and A with Panduit provides a great insight into what’s going on in the world of fibre connectivity and networks – and I’m delighted to say that we’ll be running a series of articles on the future of this sector of the IT/data centre space over the coming months.

Not so long ago, 10G connectivity seemed a long way away for the average enterprise, but now companies like Panduit are already plotting a course towards 400G! Okay, so many end users might be bemused as to why they would ever need such speed. However, as the digital age evolves, who’s to say that, at some stage in the future, 400G will become just another stepping stone in the quest for ever faster feeds and speeds?

Advertisement: Server Technology

With tens of thousands of HDOT (High Density Outlet Technology) PDUs already installed, Server Technology has now completed its most popular and innovative product line ever with the addition of the HDOT Switched POPS® (Per Outlet Power Sensing) PDU. Now with device level monitoring, the most uniquely valuable rack PDU on the market provides the #1 solution for density, capacity planning, and remote power management for the modern data center.

Enterprise server rooms will be unable to meet the compute power and IT energy efficiencies required to meet the demands of fluctuating technology trends, pushing a higher uptake in hyperscale cloud and colocation facilities.

Citing the latest IDC research, which predicts a growing fall in the number of server rooms globally, Roel Castelein, Customer Services Director, at The Green Grid argues that legacy server rooms are failing to keep pace with new workload types and causing organisations to seek alternative solutions.

“It wasn’t too long ago that the main data exchanges going through a server room were email and file storing processes, where 2-5KW racks was often sufficient. But as technology has grown, so have the pressures and demands placed on the data centre. Now, we’re seeing data centres equipped with 10-12KW racks to better cater for modern-day requirements, with legacy data centres falling further behind.

“IoT, social media, and the number of personal devices now accessing data are just a handful of factors that are pushing the demands of compute power and energy consumption, which is causing further pressures on legacy server rooms used within the enterprise. As a result, more organisations are now shifting to cloud-based services, dominated by the likes of Google and Microsoft, and also colo facilities. This trend is not only reducing carbon footprints, but also guarantees that the environment organisations are buying into are both energy efficient and equipped for higher server processing.”

In IDC’s latest report, ‘Worldwide Datacenter Census and Construction 2014-2018 Forecast: Aging Enterprise Datacenters and the Accelerating Service Provider Buildout’, it claims that while the industry is at a record high of 8.6 million data centre facilities, after this year, there will be a significant reduction in server rooms. This is due to the growth and popularity of public cloud based services, occupied by the large hyperscalers including AWS, Azure and Google, which is expected to grow to 400 hyperscale data centres globally by the end of 2018. Roel continued:

“While server rooms are declining, this won’t affect the data centre industry as a whole. The research identified that data centre square footage is expected to grow to 1.94bn, up from 1.58bn in 2013. And with hyperscale and colo facilities offering new services in the form of high-performance compute (HPC) and Open Compute Project (OCP), more organisations will see the benefits in having more powerful, yet energy efficient IT solutions that meet modern technology requirements.”The growth of cloud and industrialized services and the decline of traditional data center outsourcing (DCO) indicate a massive shift toward hybrid infrastructure services, according to Gartner, Inc.

In a report containing a series of predictions about IT infrastructure services, Gartner analysts said that by 2020, cloud, hosting and traditional infrastructure services will come in more or less at par in terms of spending.

"As the demand for agility and flexibility grows, organizations will shift toward more industrialized, less-tailored options," said DD Mishra, research director at Gartner. "Organizations that adopt hybrid infrastructure will optimize costs and increase efficiency. However, it increases the complexity of selecting the right toolset to deliver end-to-end services in a multisourced environment."

Gartner predicts that by 2020, 90 percent of organizations will adopt hybrid infrastructure management capabilities.

The traditional DCO market is shrinking, according to Gartner's forecast data. Worldwide traditional DCO spending is expected to decline from $55.1 billion in 2016 to $45.2 billion in 2020. Cloud compute services, on the other hand, are expected to grow from $23.3 billion in 2016 to reach $68.4 billion in 2020. Spending on colocation and hosting is also expected to increase, from $53.9 billion in 2016 to $74.5 billion in 2020. In addition, infrastructure utility services (IUS) will grow from $21.3 billion in 2016 to $37 billion in 2020 and storage as a service will increase from $1.7 billion in 2016 to 2.7 billion in 2020.

Advertisement: Vertiv

In 2016, traditional worldwide DCO and IUS together represented 49 percent of the $154 billion total data center services market worldwide, consisting of DCO/IUS, hosting and cloud infrastructure as a service (IaaS). This is expected to tilt further toward cloud IaaS and hosting, and by 2020, DCO/IUS will be approximately 35 percent of the expected $228 billion worldwide data center services market.

"This means that by 2020 traditional services will coexist with a minority share alongside the industrialized and digitalized services," said Mr. Mishra.

A 2016 Gartner survey of 303 DCO reference customers worldwide found that 20 percent use hybrid infrastructure services and 20 percent more intend to get them in the next 12 months.

Gartner also predicts that through 2020, data center and relevant "as a service" (aaS) pricing will continue to decline by at least 10 percent per year.

From 2008 through 2016, Gartner pricing analysis of data center service offerings shows prices have dropped yearly by 5 percent to 7 percent for large deals and by 9 percent to 12 percent for smaller deals.

More recently — from 2012 to the present — prices for the new aaS offerings, including IaaS and storage as a service, have dropped in similar to higher ranges.

Traditional DCO vendors will exit the DCO market due to price pressure, while others will develop solution capabilities and continue to compete. Buyers will have the ability to choose between many more vendors, choose traditional or new solutions and achieve price reductions year over year through 2020.

By 2019, 90 percent of native cloud IaaS providers will be forced out of this market by the Amazon Web Services (AWS)-Microsoft duopoly.

Over the last four years, the public cloud IaaS market has begun to develop two dominant leaders — AWS and Microsoft Azure — that are beginning to corner the market. In 2016, they both grew their cloud service businesses significantly while other players are sliding backward in comparison. Between them, they not only have many times the compute power of all other players, but they are also investing in innovative service and pricing offerings that others cannot match.

According to Gartner, it is only in new markets that the dominance of AWS and Microsoft will be challenged by businesses such as Aliyun, the cloud service arm of Alibaba, the top player in China.

"The competition between AWS and Azure in the IaaS market will benefit sourcing executives in the short to medium term but may be of concern in the longer term," said David Groombridge, research director at Gartner. "Lack of substantial competition for two key providers could lead to an uncompetitive market. This could see organizations locked into one platform by dependence on proprietary capabilities and potentially exposed to substantial price increases."

New data from Synergy Research Group shows that hyperscale operators are aggressively growing their share of key cloud service markets, which are themselves growing at impressive rates.

Synergy’s new research has identified 24 companies that meet its definition of hyperscale, and in 2016 those companies in aggregate accounted for 68% of the cloud infrastructure services market (IaaS, PaaS, private hosted cloud services) and 59% of the SaaS market. In 2012 those hyperscale operators accounted for just 47% of each of those markets. Hyperscale operators typically have hundreds of thousands of servers in their data center networks, while the largest, such as Amazon and Google, have millions of servers.

In aggregate those 24 hyperscale operators now have almost 320 large data centers in their networks, with many of them having substantial infrastructure in multiple countries. The companies with the broadest data center footprint are the leading cloud providers – Amazon, Microsoft and IBM. Each has 45 or more data center locations with at least two in each of the four regions (North America, APAC, EMEA and Latin America). The scale of infrastructure investment required to be a leading player in cloud services or cloud-enabled services means that few companies are able to keep pace with the hyperscale operators, and they continue to both increase their share of service markets and account for an ever-larger portion of spend on data center infrastructure equipment – servers, storage, networking, network security and associated software.

Advertisement: Geist

“Hyperscale operators are now dominating the IT landscape in so many different ways,” said John Dinsdale, a Chief Analyst and Research Director at Synergy Research Group. “They are reshaping the services market, radically changing IT spending patterns within enterprises, and causing major disruptions among infrastructure technology vendors. Our latest forecasts show these factors being accentuated over the next five years.”

Worldwide IT spending is projected to total $3.5 trillion in 2017, a 1.4 percent increase from 2016, according to Gartner, Inc. This growth rate is down from the previous quarter's forecast of 2.7 percent, due in part to the rising U.S. dollar (see Table 1.)

"The strong U.S. dollar has cut $67 billion out of our 2017 IT spending forecast," said John-David Lovelock, research vice president at Gartner. "We expect these currency headwinds to be a drag on earnings of U.S.-based multinational IT vendors through 2017."

The Gartner Worldwide IT Spending Forecast is the leading indicator of major technology trends across the hardware, software, IT services and telecom markets. For more than a decade, global IT and business executives have been using these highly anticipated quarterly reports to recognize market opportunities and challenges, and base their critical business decisions on proven methodologies rather than guesswork.

The data center system segment is expected to grow 0.3 percent in 2017. While this is up from negative growth in 2016, the segment is experiencing a slowdown in the server market. "We are seeing a shift in who is buying servers and who they are buying them from," said Mr. Lovelock. "Enterprises are moving away from buying servers from the traditional vendors and instead renting server power in the cloud from companies such as Amazon, Google and Microsoft. This has created a reduction in spending on servers which is impacting the overall data center system segment."

Table 1. Worldwide IT Spending Forecast (Billions of U.S. Dollars)

|

| 2016Spending | 2016 Growth (%) | 2017Spending | 2017 Growth (%) | 2018 Spending | 2018 Growth (%) |

| Data Center Systems | 171 | -0.1 | 171 | 0.3 | 173 | 1.2 |

| Enterprise Software | 332 | 5.9 | 351 | 5.5 | 376 | 7.1 |

| Devices | 634 | -2.6 | 645 | 1.7 | 656 | 1.7 |

| IT Services | 897 | 3.6 | 917 | 2.3 | 961 | 4.7 |

| Communications Services | 1,380 | -1.4 | 1,376 | -0.3 | 1,394 | 1.3 |

| Overall IT | 3,414 | 0.4 | 3,460 | 1.4 | 3,559 | 2.9 |

Source: Gartner (April 2017)

Advertisement: Sudlows

Driven by strength in mobile phone sales and smaller improvements in sales of printers, PCs and tablets, worldwide spending on devices (PCs, tablets, ultramobiles and mobile phones) is projected to grow 1.7 percent in 2017, to reach $645 billion. This is up from negative 2.6 percent growth in 2016. Mobile phone growth in 2017 will be driven by increased average selling prices (ASPs) for phones in emerging Asia/Pacific and China, together with iPhone replacements and the 10th anniversary of the iPhone. The tablet market continues to decline significantly, as replacement cycles remain extended and both sales and ownership of desktop PCs and laptops are negative throughout the forecast. Through 2017, business Windows 10 upgrades should provide underlying growth, although increased component costs will see PC prices increase.

The 2017 worldwide IT services market is forecast to grow 2.3 percent in 2017, down from 3.6 percent growth in 2016. The modest changes to the IT services forecast this quarter can be characterized as adjustments to particular geographies as a result of potential changes of direction anticipated regarding U.S. policy — both foreign and domestic. The business-friendly policies of the new U.S. administration are expected to have a slightly positive impact on the U.S. implementation service market as the U.S. government is expected to significantly increase its infrastructure spending during the next few years.

Green Mountain operates two unique colo facilities in Norway, having a total potential capacity of several hundred megawatts. Though each facility has its own strengths, both embody the company’s commitment to providing secure, high-quality service in an energy efficient and sustainable manner. CEO Knut Molaug and Chief Sales Officer Petter Tømmeraas recently took time to explain to the Uptime Institute how Green Mountain views the relationship between cost, quality, and sustainability.

Tell our readers about Green Mountain.

KM: Green Mountain focuses on the high-end data center market, including banking/finance, oil and gas, and other industries requiring high availability and high quality services.

PT: IT and cloud are also very big customer segments. We think the US and European markets are the biggest for us, but we also see some Asian companies moving into Europe that are really keen on having high-quality data centers in the area.

KM: Green Mountain Data Centers operates two data centers in Norway. Data Center 1 in Stavanger began operation in 2013 and is located in a former underground NATO ammunition storage facility inside a mountain on the west coast. Data Center 2, a more traditional facility, is located in Telemark, which is in the middle of Norway.

Today DC1-Stavanger is a high security colocation data center housing 13,600 square meter facility (m2) of customer space. The infrastructure can support up to 26 megawatts of IT load today. The main data center comprises three two-story concrete buildings built inside the mountain, with power densities ranging from 2-6 kW/m2, but the facility can support up to 20 kW/m2. NATO put a lot of money into creating their facilities inside the mountain, which probably saved us 1 billion Kroners ($US 150 million).

DC2-Telemark is located in a historic region of Norway and was built on a brownfield site with a 10-MW supply initially available. The first phase is a fully operationa1 10-MW Tier lll Certified Facility, with four new buildings and up to 25 MW total capacity planned. This site could support even larger facilities if the need arises.

Green Mountain focuses a lot on being green and environmentally friendly, so we use 100% renewable energy in both data centers.

How do the unique features of the data centers affect their performance?

KM: Besides being located in a mountain, DC1 has a unique cooling system. We use the fjords for cooling year-round, which gives us 8°C (46 °F) water for cooling. The cooling solution (including cooling station, chilled water pipework and pumps) is fully duplicated, providing an N+N solution. Because there are few moving parts (circulating pumps) the solution is extremely robust and reliable. In-row cooling is installed to client specification using Hot Aisle technology.

We use only 1 kilowatt of power to produce 100 kilowatts of cooling. So the data center is extremely energy efficient. In addition, we are connected to three independent power supplies, so DC1 has extremely robust power.

DC2 probably has the most robust power supply in Europe. We have five independent hydropower plants within a few kilometers of the site, and the two closest are just a few hundred meters away.

Advertisement: Schneider Electric

How do you define high quality?

PT: High quality means Uptime Institute Tier Certification. We are not only saying we have very good data centers. We’ve gone through a lot of testing so we are able to back it up, and the Uptime Institute Tier Standard is the only standard worldwide that certifies data center infrastructure to a certain quality. We’re really strong on certifications because we don’t only want to tell our customers that we have good quality, we want to prove it. Plus we want the kinds of customers who demand proof. As a result, both our facilities are Tier III Certified.

Please talk about the factors that went into deciding to obtain Tier Certification.

KM: We have focused on high-end clients that require 100% uptime and are running high-availability solutions. Operations for this type of company generally require documented infrastructure.

The Tier III term is used a lot, but most companies can’t back it up. Having been through testing ourselves, we know that most companies that haven’t been certified don’t have a Tier III facility, no matter what they claim. When we talk to important clients, they see that as well.

What was the on-site experience like?

PT: When the Uptime Institute team was on site, we could tell that Certification was a quality process with quality people who knew what they were doing. Certification also helped us document our processes because of all the testing routines and scenarios. As a result, we know we have processes and procedures for all the thinkable and unthinkable scenarios and that would have been hard to do without this process.

Why do you call these data centers green?

KM: First of all we use only renewable energy. Of course that is easy in Norway because all the power is renewable. In addition we use very little of it, with the fjords as a cooling media. We also built the data centers using the most efficient equipment, even though we often paid more for it.

PT: Green Mountain is committed to operate in a sustainable way and this reflects in everything we do. The good thing about operating in such a way is that our customers benefit from this financially. As we bill power cost based on consumption of power, the more energy efficient we operate the smaller the bill to our customer. When we tell these companies that they can even save money going for our sustainable solutions this makes their decision easier.

More and more customers require that their new data center solutions are sustainable, but we still see that price is a key driver for most major customers. The combination of having very sustainable solutions and being very competitive on price is the best way of driving sustainability further into the mind of our customers.

All our clients reduce their carbon footprint when they move into our data centers and stop using their old and inefficient data centers.

We have a few major financial customers that have put forward very strict targets with regards to sustainability and that have found us to be the supplier that best meets these requirements.

KM: And, of course, making use of an already built facility was also part of the green strategy.

How does your cost structure help you win clients?

PT: It’s important, but it’s not the only important factor. Security and the quality we can offer are just as important, and that we can offer them with competitive pricing is very important.

Were there clients who were attracted to your green strategy?

PT: Several of them, but the decisive factor for customers is rarely only one factor. We offer a combination between a really, really competitive offering and a high quality level. We are a really, really sustainable and green solution. To be able to offer that at competitive price is quite unique because often people think they have to pay more to get a sustainable green solution.

Are any of your targeted segments more attracted to sustainability solutions?

PT: Several of the international system integrators really like the combination. They want a sustainable solution, but they want the competitive offering. When they get both, it’s a no-brainer for them.

How does your sustainability/energy efficiency program affect your reliability? Do potential clients have any concerns about this? Do any require sustainability?

PT: Our programs do not affect our reliability in any way. We have chosen only to implement solutions that do not harm our ability to deliver the quality we promise to our customers. We have never experienced one second of SLA breakage on any customer in any of our data centers. In fact, some of our most sustainable solutions, like the cooling system based on cold sea water, increase our reliability as it takes down the risk of failure considerably compared to regular cooling systems. We have not experienced any concerns about these solutions.

Has Tier Certification proven critical in any of your client’s decisions?

PT: Tier certification has proved critical in many of our client`s decision to move to Green Mountain. We see a shift in the market to require Tier certification, whereas it used to be more in the form of asking for Tier compliance, that anyone could claim without having to prove it. We think the future of quality data center providers will be to certify all their data centers.

Any customer with mission critical data should require their supplier/s to be Tier certified. At the moment this is the only way for a customer to secure that their data center is built and operated in the way it should in order to secure the quality that the customer needs.

Are there other factors that set you apart?

PT: Operational excellence. We have an operational team that excels every time. They deliver to the customers a lot more than expected every time, and we have customers that are extremely happy with their deliveries from us. I hear that from customers all the time, and that’s mainly because our operations team do a phenomenal job.

Uptime Institute testing criteria were very comprehensive and helped us develop our operational procedures to an even higher level as some of the scenarios created during the certification testing were used as a basis for new operational procedures and new tests that we now perform as part of our normal operating procedures.

Green Mountain definitely benefitted from the Tier process in a number of other ways, including training gave us useful input to improve our own management and operational procedures.

What did you do to develop this team?

KM: When we decided to focus on high-end clients, we knew that we needed high-end experience and expertise and knowledge on the ops side, so we focused on that when recruiting as well as building a culture inside the company that focused on delivering high quality the first time every time.

We recruited people with knowledge of how to operate critical environments, and we tasked them with developing those procedures and operational elements as a part of their efforts, and they have successfully done so.

PT: And the owners made the resources available so that they could spend the resources—both financial and staff-hour wise to create the quality we wanted. We also have a very good management system, so management has good knowledge of what’s happening, so if we have an issue it will be very visible.

KM: We also have high-end equipment and tools to measure and monitor everything inside the data center as well as operational tools to make sure we can handle any issue and deliver on our promises.

How the Cloud changes storage.

By John Kim, Chair, SNIA Ethernet Storage Forum.

Everyone knows cloud is growing. According to analysts, cloud and service providers consumed between 35-40% of servers in 2016 while enterprise data centers consumed 60-65%. By 2018, cloud will deploy more servers each year than enterprise.

This trend has challenged traditional storage vendors because more storage has also moved to the cloud each year, following the servers and applications. But it’s also challenging storage customers—the IT departments who buy and manage storage—as well, because they are expected to offer the same benefits as cloud storage at the same price.

The appeal of cloud storage is four-fold:

1) Price: Cloud storage might be cheaper than on-premises storage, as public cloud providers leverage economies of scale and frequently lower prices.

2) Rapid deployment: Application users can rent cloud storage capacity in a few hours, using a credit card, whereas traditional enterprise storage often requires weeks to acquire, provision and deploy.

3) Flexibility and automation: Cloud allows rapid increases or decreases in the amount and performance of storage, with no concerns about hardware management or refreshes, while changes and monitoring can be automated with scripts or management tools.

4) Cost structure: Cloud storage is billed as a monthly operating expense (OpEx) instead of an upfront capital expense (CapEx) that turns into a depreciating asset. You only pay for what you use and it’s typically easy to charge storage costs to the application or department using it.

Despite this appeal, many enterprise users are against moving all their storage to the public cloud for various reasons. Security: they might not trust their data will be sufficiently private or secure in the cloud. Regulations: government regulations might prevent them from using shared cloud infrastructure. Or from a performance standpoint, they might have locally-run applications that cannot get sufficient performance from remote cloud storage. (This can be resolved by moving applications to run in the same cloud as the storage.)

Other times, hardware is already purchased and the IT team strives to prove they can deliver on-premises storage solutions at a lower price than the public cloud. Either way, in the face of public cloud storage that is easy to consume and always falling in price, enterprise IT departments need to make storage cheaper and more flexible, either with a private cloud deployment or more efficient enterprise storage.

One way to “cloudify” the enterprise is software-defined storage (SDS). This separates the storage hardware from the software, and in some cases separates the storage control plane from the data plane. The immediate benefit is the ability to use commodity servers and drives to reduce storage hardware costs by 50%. Other benefits include increased agility and more deployment flexibility. You can choose different types and amounts of CPU, RAM, drives (spinning and/or solid-state), and networking for different projects and refresh or upgrade the hardware when you want instead of the storage vendor’s schedule. If you buy some of the fastest servers and SSDs, they can be your fast block/database storage today with one SDS solution then converted to archive/object storage three years from now using a different SDS solution.

Some SDS solutions let you choose between scale-up vs. scale-out and even hyper-converged deployments, and you can deploy different SDS products for different workloads. For example it’s easy to deploy one SDS product for fast block storage, a second one for cheap object storage, and a 3rd one for hyper-converged infrastructure. Compared to traditional arrays, SDS products are more likely to be scale-out and based on Ethernet (rather than on Fibre Channel or InfiniBand), but there are SDS products that support nearly every kind of storage architecture, access protocol, and connectivity option.

Other SDS vendors include more automation, orchestration, monitoring and charge-back/show-back (granular billing) features. These make on-premises storage seem more like public cloud storage, though it’s important to note that many enterprise storage arrays have also been adding these types of management features to make their products more cloud-like.

The benefits of SDS are appealing but not “free” because it requires integration and testing work. Achieving the 5 or 6-nines (99.999 % or 99.9999% availability) desired for enterprise storage typically requires careful qualification and testing of many aspects including server BIOS, drive firmware, RAID controllers, network cards, and of course the storage software. Enterprise storage vendors do all this in advance with rigorous qualification cycles and develop detailed plans for each model that covers support, upgrades, parts replacement, etc.

This integration work makes the storage more reliable and easier to support and service, but it takes a significant effort for an enterprise to do all this. It could easily require a few months of testing for the first rollout, followed by more months of testing every time the server model, software, drive model, or network speed changes. Cloud providers—and very large enterprises—can easily invest in hardware and software integration work then amortize the cost of their thousands of servers and customers. The larger ones customize the hardware and software while the huge Hyperscalers typically design their own hardware, software, and management tools from scratch. Enterprises need to determine if the savings of SDS are worth the cost of integrating it themselves.

Customers who want the cost savings and flexibility of SDS without the testing and integration requirements often turn to SDS appliances or bundles created by server vendors and system integrators who do all the testing and certification work. These appliances may cost more to buy and be less open to hardware choices than a “raw” SDS solution that is 100% integrated by the end user. But they still cost less and offer more frequent hardware refreshes than a traditional enterprise storage array. For these reasons the SDS appliances offers a good solution to customers who want the benefits of SDS but don’t want to do their own testing and integration work.

In the end choosing between SDS and traditional enterprise arrays usually comes down to a tradeoff between time and money. SDS lets you save money on hardware by investing a lot of time up-front for qualification and testing, while traditional arrays cost more to buy but don’t require the upfront time investment. Generally speaking, larger customers find SDS more appealing than smaller customers, but choosing a pre-integrated SDS appliance—which can include hyper-converged or hypervisor-based solutions—can make SDS accessible and affordable to customers of any size.

For more perspective on how the cloud changes storage, see the following SNIA resources on Hyperscaler Storage at www.snia.org/hyperscaler

By Steve Hone, CEO, DCA Data Centre Trade Association

Making predictions about the future is never easy, especially when it comes to technological advances or the impact these might have on the data centre sector as it attempts to keep up with demand and change.

We live in a fast moving world whose insatiable appetite for digital services is both rapidly growing and evolving at an alarming rate leaving Moore’s Law in its wake. In this month’s edition of the DCA Journal Dr Jon Summers article titled “standing on the shoulders of giants” touches on this very point. Additional contributions from Ian Bitterlin, David Hogg from ABM (formally 8 Solutions) and Laurens Van Reijen from LCL all provide additional insight into what might lie ahead and the impact this could have both positively and negatively on the data centre sector.

You would be wise not to ignore the past when peering into our crystal ball to predict what’s likely to be round the next corner. It’s also healthy to review some of the previous forecasts and predictions to see if they were proved correct or were widely over or under estimated, as Robert Kiyosaki (American Author) said “if you want to predict the future, study the past”.

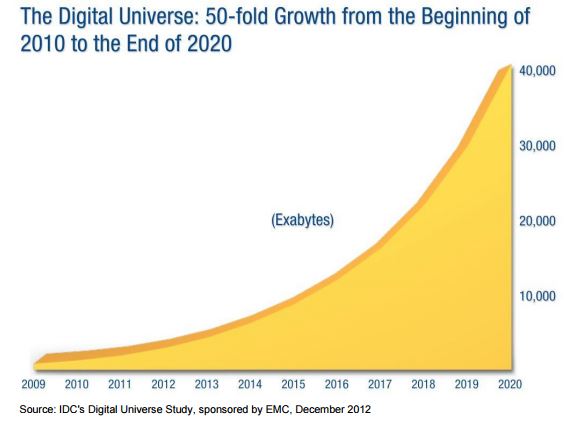

There is no denying that we are using far more digital services than we ever predicted, to put this into perspective in 2012 an IDC's Digital Universe Study*1, sponsored by EMC, calculated that based on historical data collected since 2010 the worlds data usage would rise from a modest 10,000 Exabyte’s to 40,000 Exabyte’s by 2020. Well, a Cisco white paper*2 confirmed that we had reached and exceeded that forecast by January 1st 2016 in mobile data alone, so it’s anyone’s guess where we go from here! Up and on a very steep curve would be a very safe prediction! Remember the concept of ‘Smart Cities’ and the ‘Internet of Everything’ is only just warming up.

Now I’m not suggesting for one minute that this explosion in demand for digital services is something to be frowned upon or that we should try in some way to slow it down, as quite frankly any attempt to do so would be utterly futile, now the genie is out of the bottle it’s simply unstoppable.

I was still in a highchair throwing food at my parents in 1969 when an American flag was first planted on the moon; that was nearly 50 years ago, today it is reported that the same amount of computing power it took to put man on the moon can now be found in my sons Xbox 360! If you need even more statistics take a look at the info graphic below produced in 2015 – 2M Google searches and 204M emails sent every 60 seconds together with over 4300 Hours of You Tube videos uploaded every 60 minutes. These figures are staggering and remember these numbers are now two years old and were compiled before the likes of On Demand TV, Netflix and Now TV streaming services kicked off.

It is only when you take these sorts of statistics into consideration that you realise how far we have travelled in such a short amount of time and why predicting the future is proving so hard to predict! As uncomfortable as it may be, the undeniable fact is we are now completely reliant and if you take my kids as a good (or bad) example, utterly dependant on the IT based technology and online digital services we use every day without thinking twice about them.

We have also become completely intolerant when it fails us, you would have thought the world was about to end if you can’t get 3G or Wi-Fi. We expect access to these services 24x7xforever[SH1] and to make that happen an unbelievable amount of work goes on behind the scenes at an infrastructure level to ensure you are not let down.

The Data Centre Industry, represents the beating heart of any digital infrastructure and is arguably now just as important to the health of our nation as Water, Gas and Electricity - ironically the supply of which are all controlled by servers located in data centres.

If the revised statistics coming out are to be believed, the demands on the Data Centre Operators and the import role they play in supporting our digital world is probably going to increase five times quicker than originally predicted. Like the Enterprise in Star Trek, life is now moving at warp speed and we need to find the right solutions to keep up with this voyage into the unknown.

The DCA plays a vital role as the Trade Association for the Data Centre Sector in ensuring the industry remains on the ball and fit for purpose. It was created with the express purpose of both supporting existing business leaders attempting to address the many challenges faced today and to collaborate with suppliers on R&D, training and skills development programmes to ensure we meet future demand. This is a team effort and we are here to help.

Next month’s DCA Journal theme is focused on Energy Efficiency, deadline for copy is the 16th May this is followed by Education, Skill and Training with a copy deadline of 13th June. If you would like to submit articles for either one of these editions please contact info@datacentrealliance.org, full details are on the DCA website http://data-central.site-ym.com/page/DCAjournal.

By David Hogg, Managing Director, ABM Critical Solutions

With the advent of the UK’s forthcoming departure from the European Union, much has been made of the ‘uncertainty’ that threatens to dog future trading relations.

But of all the challenges the data centre industry faces over the next few years, Brexit is, perhaps surprisingly, the least of our concerns. British firms and the UK Government are hopefully leaning towards pragmatism and avoiding unnecessary complexity. It is highly likely, therefore, that the UK will adopt the same data control laws as exist in the EU currently, meaning that there will be no difference for the major US tech players in where their data is stored.

It is true to say, of course, that restrictions on the free movement of labour may drive up the cost (and reduce the availability) of labour, but this tends to impact the lower skilled ‘commodity’ roles (e.g. within the hospitality sector) and will therefore have little or no impact on data centres.

Indeed, for all of our predictions for the future, most are likely to have a positive impact on the data centre industry of tomorrow. Consolidation of data centres, for example, continues at a pace, and this will include more pan-European deals as the maturity of the market and expansion of clients continues. This in turn will add to an increased focus on achieving best practice.

Whilst there is yet to be a ‘one-size fits all’ set of standards, best practice levels have risen markedly across the UK in the past few years. Bodies such as the Data Centre Alliance have been key to promoting the need for best practice, complemented by the commercial imperatives of the co-location data sector segment looking for a differentiator in order to attract new clients. The updated European standard for data centres (EN50600 – Information Technology – data centre facilities and infrastructures) will also drive an increase in best practice adoption rates.

Leading on from this, the increasing density of IT equipment is similarly prompting closer attention on performance against best practice. As a case in point, ABM Critical Solutions recently upgraded one of its client’s data centres (the customer is a major high street retailer) following the client’s investment in new IT. The retailer is now able to generate the same IT computing power using only 25% of their previous space occupied by the ageing IT infrastructure.

Best practice is similarly enabling a focus on insurance, and driving down the cost of premiums. There is already evidence that insurance companies recognise that data centres that adopt best practice inherently contain less risk, and therefore adjust premiums directly. Allianz, for example, appears to be taking the lead in this area, and we predict that others are likely to follow once the idea fully takes hold.

From a technology perspective, ‘Edge’ data centres will become increasingly important as high-speed networks such as 5G are rolled out (5G is currently expected in 2020). We predict a real growth opportunity in this type of facility, especially where the big data centre players don’t have a presence, or there is demand from a specific market niche. The Internet of Things (IoT), Virtual Reality (VR) and 5G will lead to a massive growth in the world’s data centre volume, and a key opportunity for data centre providers and service companies alike.

We also predict a period of evolution in the way services to data centres will be delivered. There is already an increased demand for companies to provide a full complement of services based around a core expertise or skilled workforce. This is being driven, in part, by a desire by data centre operators to manage a smaller number of external suppliers, wherever possible, to reduce costs.

By way of example, ABM Critical Solutions is now using the same teams that complete its technical cleans to also undertake simple, additional tasks such as cleaning CRAC units and changing filters. AC engineers are expensive, and this allows their time on site to be more productively utilised in areas where their skills can be better deployed. In this way, service providers will be able to deliver greater value, while supporting their clients’ need for greater operational efficiency.

By Ian Bitterlin, Consulting Engineer & Visiting Professor, Leeds University

Most of us like a bit of speculation and making lists, so this month, when asked for ‘predictions’, I have decided to make a list of my top three.

The first in my list, ‘in no particular order’, concerns ASHRAE and their Thermal Guidelines for microprocessor based hardware. We, the data centre industry in Europe, are very lucky to have ASHRAE. OK, they are a purely North American based trade association that serves its members but they are the sole global source for the limits of temperature, humidity and air-quality for ICT devices and have proven themselves to be far more progressive for the environmental good than anyone could have expected. If you follow their guidelines from the first to the latest you could be saving more than 50% of your data centre power consumption since the members of TC9.9, who include all the ICT hardware OEMs, have consistently and regularly updated their Thermal Guidelines, widening the temperature/humidity window to enable drastic improvements in cooling energy. So, what is my prediction? Well it certainly is not that they will make the same improvements in the future that they have made in the past, since the latest iteration leaves server inlet temperature warmer than most ambient climates where people want to build data centres and requiring almost zero humidity control. My prediction is, in fact, that the conservative nature of our data centre users will keep the constant lag in ASHRAE adoption at a lackadaisical and slightly unhealthy five years. What I mean by that is simple – the 2011 ‘Recommended’ Guidelines are, in 2017, just about accepted by the mainstream users as ‘risk free’ whilst many users still regard the 2011 ‘Allowable’ limits as avant-garde. So, I predict that ‘no humidity control’ and inlet temperatures of 28-30°C will be mainstream by 2022….

The second prediction in my trio concerns the long-forecasted, but now clearly closer, demise of Moore’s Law. When Gordon Moore, chemical engineer and founder of Intel, wrote his Law it was clear to him that the photo-etching of transistors and circuitry into silicon wafer strata doubled in density every two years. That was soon revised by his own company to a doubling of capacity every 18 months to consider the increasing clock-speed and, more recently by Raymond Kurzweil (sometime nominated as the successor to Edison), to 15 months when considering software improvements. It lost its simple ‘transistor count per square mm’ basis long ago but Koomey’s Law took up the baton and converted the 18 months’ capacity doubling to computations per Watt. Effectively that explains why it is so beneficial to refresh your ICT hardware every 30 months (or less) and more than halve your power consumption for the same ICT load. To make a little visualisation experiment in ‘halving’ take a piece of paper of any size and fold it in half, and again, and again... You will not get to seven folds since you will have reached the physical limit.

So why have data centres been growing if Moore’s Law and its derivatives have been providing a >40% capacity compound annual growth rate (CAGR)? The explanation is simple – our insatiable hunger for IT data services (notably including social networking, search, gaming, gambling, dating and any entertainment based on HD video such as YouTube and Netflix et al) has been growing at 4% per month CAGR (near to 60% per year) for the past 15 years. The delta between Moore’s Laws’ 40-45% and the data traffic rise at 60% gives us the 15-20% growth rate in data centre power. The problem comes when Moore’s Law runs out, which it surely will with a silicon base material, as then we will have to manage the 60% traffic per year without any assistance from the technology curve. Moore’s Law probably has 5 years left without a paradigm shift away from silicon (to something like graphene) but that is unlikely to happen ‘in bulk’ within the 5-year time frame. Looking at one of the major internet exchanges in Europe shows that peak traffic is running at 5.5TB/s with reported capacity at 12TB/s – but if we consider even a slight slowing down of the annual growth rate to 50% then it will be less than 2 years before the peak traffic will be pushing the present capacity limits. I predict a couple of years of problems during the dual-event of a paradigm shift away from silicon and a sea-change in network photonics capacity.

The last of my trio of predictions concerns the reuse of waste heat from data centres and is simply stated as: By 2027 waste heat will not be ‘wasted’ from a huge array of ‘edge’ facilities and they will become close to net-zero energy consumers. From my perspective, there is a gathering ‘perfect storm’ of drivers that will converge to drive infrastructure designers to liquid based cooling:

The solution is simple and within our grasp today – liquid cooling of the heat generating components, particularly the microprocessors. With liquid immersed or encapsulated hardware and heat-exchangers pushing out 75-80°C into a local hot-water circuit with 94% efficiency the data centre will have a net power draw of just 6%. Just five cabinets (a micro-data centre by todays definition), equivalent to 80x todays ICT capacity, will be able to offer to the building 100kW of continuous hot-water. Consider embedded 100kW micro-facilities in offices, hotels, sports centres, hospitals and apartment buildings. Indeed, could this be the ‘major’ future? Could giant, remote, air-cooled facilities become obsolete? Probably not for twenty years, but then…

By Dr Jon Summers, University of Leeds

How much do you trust the weather predictions for tomorrow? If you are observant you may have noticed that such predictions have improved over time. This is in fact a direct consequence of Moore’s law, which I am sure you have heard much about, but suffice it to say it has been a self-fulfilling prophecy for successful growth of the ICT industry for nearly 50 years. Weather predictions become more accurate with faster supercomputers, which can then provide predictions in time for the broadcast weather forecast.

Talking about Moore’s law and making predictions and forecasts, it is interesting to ask the question if there are physical limits that restrict Moore’s law from continuing as it has done for many decades. Recently the academic and technical literature abounds with indications that manufacturing transistors with gate lengths of only a couple of atoms is limited due to two main reasons, namely fabrication cost and quantum effects. The former is likely to be the main limitation as indicated in the 2016 Nature News article called the chips are down for Moore’s law, which included the quote “I bet we run out of money before we run out of physics”.

In 1961, IBM employee Rolf Landauer published a paper that highlighted a relationship between energy and information that amongst other things reinforced the point that digital information is not ethereal. What the paper implied was that there was an ultimate minimum amount of energy dissipated (as heat) in a transistor (switch) at room temperature of 3 zeptoJoules (0.000000000000000000003 Joules), which is due to the erasure of information as part of the logical steps in the digital processes, which lead to the notion of the “physics of forgetting”. This minimum energy became known as the Landauer limit, but if information is never erased it would be theoretically possible to build switches that do not adhere to this limit. In fact this was discussed by a colleague of Landauer, Charles Bennett, in 1973 where it was suggested that if the computer logic was made reversible so that information could flow both ways without digital erasure, then it would be possible to compute with far less energy. This was in fact recently achieved in the laboratory using what is called an adiabatically clocked microprocessor.

The question you may be asking yourself now is how the demise of Moore’s law impacts on data centres. The answer is probably not much since the heat removal and power requirements will continue to be an issue for facility management, but it is worth trying to understand how ICT may change as we march into the next decade. Analysing the literature there are a number of interesting developments in building a replacement for Field Effect Transistors (FETs), the switch that creates the logic necessary for processing digital information. The immediate idea with today’s technology is to keep heat dissipation down but continue to increase transistor count by lower voltages, introducing three dimensional features, switching at lower speeds and making use of new materials. There are also a range of activities that are being pursued in the laboratory, namely computers that use reversible logic, superconducting switches, quantum processes, approximation and neuromorphic processes, which are not ready for the mainstream data centres. However, the issue of dark silicon, i.e. part of the “silicon chip” that cannot use power simultaneously with other parts, is likely to grow. This in effect has already happened when multicore microprocessors were introduced in 2005, but rather than having more “general purpose” processing cores they will be specific for certain functions, such as encoding, encryption, compression, etc., a development that is already occurring in the smartphone. ICT hardware is likely to become heterogeneous and application software development will then become the main focus for energy efficiency.

Our ability to make prediction based on scientific theory has only been possible with the developments of calculating machines, but predicting how ICT will develop in the future may be a question that we really need to ask the machines themselves.

“If I have seen further, it is by standing on the shoulders of giants” was the phrase that Isaac Newton used in a letter to his rival, Robert Hooke, in 1675.

LCL Survey of Belgian Companies By Laurens van Reijen, Managing Director of LCL Belgium

Belgium's listed companies have a false sense of security when it comes to data storage. 97% do not test power back-up systems and 50% plan to outsource activities. CIOs and IT managers of listed companies incorrectly assume that their corporate data is stored safely and securely. According to a survey of Belgian listed companies carried out by LCL Data Centers, they underestimate risks such as power cuts and fire, they fail to test their protective systems and they do not invest sufficiently in redundancy.

The survey of Belgian, quoted companies that LCL ordered, shows that data security is not seen as essential within IT governance, not even with quoted companies. For instance: with only one data center, in case of a disaster, you risk losing absolutely all your data. After your power shuts down, your company does too. If you really want to be safe, at least 30k’s should separate both data centers. Moreover, best practices dictate that one should separate the development environment from the production systems.

The CIOs and IT managers of 168 Belgian quoted companies took part in the survey. Of these companies, 87.5% felt they were protected from disasters such as fire or lengthy power cuts. Surprisingly, these respondents said that this was ‘because power cuts rarely happen’. The fact that they also have a disaster recovery service also added to their sense of security. Just 5% of respondents indicated that their organization was ‘reasonably protected', while 7.5% said that their organization had inadequate protection. This final group stated that in the event of a disaster it would not be possible to guarantee the continuity of the organization.

However, when asked whether their systems are also tested by switching off the electricity supply, only 3% of respondents answered yes. This means that a full 97% of respondents will effectively ‘test’ their backup systems for the first time when a disaster occurs.

“Our conclusion is that Belgian listed companies have a false sense of security,” Laurens van Reijen, LCL's Managing Director, said. “Many of the smaller listed companies, and some of the larger organizations, are not adequately equipped to deal with power cuts or other risks. They don't even know how well-protected their systems are, as they don't test their power backup systems. All organizations, and quoted companies in particular (in the context of corporate governance), should have all the protective systems they need to guarantee that the servers are dependable 24 hours a day, 7 days a week and they should actually test these systems on a regular basis.”

More than half of the listed companies store their data internally at the head office. One tenth of them rely on their own server room or a data center at another location owned by the company. A total of 44% of the respondents have a server room that is less than 5 m² in area. In this kind of set-up it is clearly impossible to include appropriate protective measures or specialized staff.

That said, most of the respondents do not have a second data center: 53%. That means, they have no backup in case of fire or theft of the servers. At the same time, half of the listed companies included in the survey have plans to outsource activities. At one third of the quoted companies that have a second data center, the second data center is located less than 25 km from the company's first data center. A major power cut is therefore likely to affect both data centers, which means the back-up plan will not be very effective.

“And yet business continuity is a must for virtually every business today,” Laurens van Reijen added. “The rise of digital technology has led to more and more business processes being digitized. Digital technology is being adopted in new, disruptive business models more than ever before, and these business models are thus dependent on the availability of the IT infrastructure. Shutting down servers in order to carry out maintenance work is no longer an option, as customers also need to be able to visit the website at night to submit orders. And as we have seen recently in Belgium at Delta Airlines, Belgocontrol and the National Register, a server breakdown can cause serious problems.”

“What are the odds that the current mentality – we all trust that all will go well - will change in the short term? Only a minority of companies interviewed said they were planning to set up a second data center. If we really want change, it will have to be directed by the Belgian stock exchange control body: FSMA. So in the best interest of our Belgian quoted companies, for the sake of their business continuity and employment - not to mention the shareholders who want return on their investment; data loss will almost certainly cause share devaluation - we call upon FSMA to issue a new guideline for quoted companies. A guideline pushing quoted companies to have a second data center, and to either thoroughly test all back-up systems, including power backup, or to confide in a party that does just that for them. It’s a pain in the lower back part, but people will not move unless they have to”, Laurens van Reijen concluded.

LCL has many years' experience and know-how in data centers and colocation. The company has three independent data centers: in Brussels East, Brussels West and Antwerp. The Belgian IDC-G member is ideally located in the center of Europe. At 4 miliseconds from Amsterdam and 5 miliseconds from London and Paris in terms of round trip latency, LCL is a vital link in IDC-G’s international data center network.

LCL has clients in a wide range of sectors. Multinationals and small and medium-sized enterprises, government bodies, internet companies and telecom operators all call upon the services of LCL. The company is ISO 27001 and Tier 3 certified. LCL also opts resolutely in favor of sustainability and is 14001 certified.

Laurens van Reijen, LCL’s CEO, is a seasoned data center professional. He was a founder and Operations Director at Eurofiber before founding data center company LCL in 2002.

For more information:

http://www.lcl.be

A key revelation to some at the first European Managed Services and Hosting

Summit in Amsterdam on 25th April was that, outside of the managed services

industry, no-one is calling it that. With a strong focus on customers and how

they engage with managed services, the event discussed how the model had become

mainstream in the last year, and was now the assumed way of working for many

industries.

Over 150 attendees from nineteen different European countries met to review the state of the market and the ways to take the industry forward. Bianca Granetto, Research Director at Gartner, set the scene with a keynote on how Digital Business redefines the Buyer-Seller relationship. In this she showed how customers are using more and more diverse IT suppliers, while still looking for a trust relationship with those suppliers, and that this process will continue in coming years. “The future managed services company will look very different from today’s,” she concluded.

This was reinforced by TOPdesk’s CEO Wolter Smit who, in a discussion on the new services model, said that MSPs were actually in the driving seat as the larger IT companies could not reach their level of specialisation. Dave Sobel, SolarWinds MSP’s partner community director also pointed out that many of the existing IT services companies were decades old and, with management due for replacement, new thinking among the providers was inevitable.

The top trends affecting the market were outlined by several speakers, with IoT, user experience and smart machines within the list – and IoT will be profitable for suppliers, according to Dave Sobel, with the MSPs top of the list as beneficiaries.

IT Europa’s editor John Garratt highlighted the differences between the US and European managed services markets, with the US more focused on financial returns. Price was apparently less important to European customers, who were more focused on gaining control of their IT resources. Autotask’s Matthe Smit said that price indeed mattered less than a good supportive relationship. But, he said, less than half of providers actually measured customer satisfaction, and this would have to change.

If anyone was in any doubt of the impact of the new model, Robinder Koura, RingCentral’s European channel head, showed how cloud-based communications had pushed Avaya into bankruptcy, and the new force was cloud-based and more flexible.

Security was never going to be far from the discussions, and Datto’s Business Development Director Chris Tate shook up the meeting with some of the latest statistics on ransomware. MSPs are in the firing line in the event of an attack like this, and he gave some sound advice on responses and precautionary measures. Local MSP Xcellent Automatisering’s MD Mark Schoonderbeek also revealed how he launched new services using a four-layered security offering: “First we'll search for vendors through our existing partnerships. When we find a good product - we'll R&D it from a technical standpoint. If the product meets our quality standards we will roll out within our own production environment. Then we'll go to one of our best customers in a very early stage, we tell them it's a test-phase and we'll implement the service for free, but in return we want the customers feedback (what went well, what went not so well and what is the perceived value of the service that is offered). Then we'll make a cost calculation and ask the customer what the service is worth. We'll put a price on the product and deliver it fixed price. Next step is to sell the product to all existing customers.”

The impact of the new EU General Data Protection Regulation (GDPR) was starting, but there were many unknowns, not least how various regulators across Europe would react to the provisions, warned legal expert and partner at Fieldfisher, Renzo Marchini, while the opportunities and general strong confidence in the European IT market were illustrated by Peter van den Berg, European General Manager for the Global Technology Distribution Council (GTDC).

Finally, a well-received analysis of what was going on in the tech M&A sector showed attendees where to make their fortunes and how to do so quickly. Perhaps unsurprisingly the key to creating value within a company turns out to be generating highly repeatable revenues – which is what managed services is all about.

For further information on the European Managed Services and Hosting Summit visit www.mshsummit.com/amsterdam.

Many of the issues debated during the European Managed Services event will be further discussed at the UK Managed Services and Hosting Summit, which will be staged in London in September – www.mshsummit.com

The server industry is becoming more and more automated. Robots are helping to deploy servers and clouds that are moving away from large racks of blades and hardware managed by teams of administrators to machines that are deployed and managed with minimal human interaction. The way information is delivered as well as improvements in automation technologies are fundamentally changing the central role of IT.

By Mark Baker, OpenStack Product Manager, Canonical.

Data centres are becoming smaller, distributed across many environments, and workloads are becoming more consolidated. CIOs realize there are less dependencies on traditional servers and costly infrastructure; however, hardware is not going anywhere. For IT executives, servers will be part of a bigger solution that creates new efficiencies that will make cloud environments quicker and more affordable to deploy.

CIOs wishing to run either cloud on premises (private cloud) or as a hybrid with public cloud, need to master both bare metal servers and networking. This has caused a major transition in the data centre. Big Software, IoT (Internet of Things), and Big Data are changing how operators must architect, deploy, and manage servers and networks. The traditional Enterprise scale-up models of delivering monolithic software on a limited number of big machines are being replaced by scale-out solutions that are deployed across many environments on many servers. This shift has forced data centre operators to look for alternative methods of operation that can deliver huge scale while reducing costs.

As the pendulum swings, scale-out represents a major shift in how data centres are deployed today. This approach presents administrators with a more agile and flexible way to drive value to cloud deployments while reducing overhead and operational costs. Scale-out is driven by a new era of software (web, Hadoop, Mongodb, ELK, NoSQL, etc.) that enables organisations to take advantage of hardware efficiencies whilst leveraging existing or new infrastructure to automate and scale machines and cloud-based workloads across distributed, heterogeneous environments.

For CIOs, one of the most often overlooked components to scale-out are the tools and techniques for leveraging bare metal servers within the environment. What happens in the next 3-5 years will determine how end-to-end solutions are architected for the next several decades. OpenStack has provided an alternative to public cloud. Containers have brought new efficiencies and functionality over traditional Virtual Machine (VM) models, and service modelling brings new flexibility and agility to both enterprises and service providers, while leveraging existing hardware infrastructure investments to deliver application functionality more effectively.

Because each software application has different server demands and resource utilization, many IT Organizations tend to over-build to compensate for peak-load, or they will over-provision VMs to ensure enough capacity years out. The next generation of hardware uses automated server provisioning to ensure today’s IT Pros no longer have to perform capacity planning five-years out.

With the right provisioning tools, they can develop strategies for creating differently configured hardware and cloud archetypes to cover all classes of applications within their current environment and existing IT investments. Effectively making it possible for administrators to make the most of their hardware by having the ability to re-provision systems for the needs of the data centre. For example, a server used for transcoding video 20-minutes ago is now a Kubernetes worker node, later a Hadoop Mapreduce node, and tomorrow something else entirely.

These next generation solutions bring new automation and deployment tools, efficiencies, and methods for deploying distributed systems in the cloud. The IT industry is at a pivotal period, transitioning from traditional scale-up models of the past to scale-out architecture of the future where solutions are delivered on disparate clouds, servers, and environments simultaneously. CIOs need to have the flexibility of not ripping and replacing their entire infrastructure to take advantage of the opportunities the cloud offers. This is why new architectures and business models are emerging that will streamline the relationship between servers, software, and the cloud.

Advertisement: DT Manchester

Employees are demanding a different experience and require new ways of working. At the centre of this shift is the mobile and IoT device; but European businesses are failing to place this at the heart of their technology strategies. The compelling evidence speaks for itself: Gartner predicts a $2.5m spend per minute on IoT and one million new IoT devices sold every hour by 2021.

By Nassar Hussain, Managing Director, Europe, Middle East & Africa at SOTI.

So what is the main barrier holding businesses back from getting a competitive edge from mobility?

Mobility is an essential enabler of digital transformation. Yet, when it comes to Enterprise Mobility Management (EMM) and Unified Endpoint Management (UEM), there is a lack of strategic planning among European businesses. In terms of implementing and utilising EMM solutions much of the enterprise focus has centred around how to manage and secure mobile devices at a basic level.

Recent research discovered 61 per cent of European businesses are making little to no progress towards mobility objectives, with a further 26 per cent yet to realise any value from mobility. This lack of recognition and appropriate response to changing user demands will impact an organisation’s productivity, dynamism, experience and competitiveness.

Furthermore, half of European businesses are failing to impose basic mobile device management to administer their smartphones, tablets and laptops. This raises concerns about the ability to combine people, processes and technology in an EMM solution.

To address this, businesses must ensure they not only have a vision, but the strategy and fundamentals in place to meet their employees’ expectations. This is a major cause for concern if enterprises are to create competitive advantages from mobility as it gives employees freedom, while also encouraging mobile working.

A lack of vision is very much with Europe business leaders, who are failing to understand the strategic benefits of managing their mobile strategies and investment. The leaders in question have cited budgetary, security and privacy concerns as the main obstacle to their organisation realising value from EMM and UEM.

However, with better understanding of mobility at a top level, a business can implement the correct mobility strategy to combat these concerns and ensure its data and information is safe no matter what type of device and its location.

Traditionally IT departments worked in silos. However with the rise in BYOD, they are now working with a varied range of devices not owned by the organisation. Therefore to see a mobility strategy put in place across an organisation, business leaders must have the vision to allow IT departments to take the lead.

IT departments must be involved at a closer level, supporting employees’ broad use of technology. In parallel, they must extricate themselves from old corporate silos and help to design systems with mobility at their core. In the fast pace of today’s digital revolution, business leaders should engage IT managers and mobility specialists to take charge, making sense of the chaos.Starting with a clear vision will not inform the strategy, but allow organisations to benefit from business critical mobility, enhancing their customer experience and place mobility at the heart of their digital transformation plans.

DCS kicks of a series on fibre connectivity and optical networks by talking with Panduit’s President and CEO, Tom Donovan.

Panduit was born from innovation. In 1955 we launched our first product, Panduct Wiring Duct, a new invention that uniquely organised control panel wiring and allowed new wires to be added quickly and neatly. Since that time Panduit has introduced thousands of problem solving new products and remained committed to providing innovative electrical and network infrastructure solutions.

Today, customers look to Panduit as a trusted advisor who works with them to address their most critical business challenges within their Data Centre, Enterprise, and Industrial environments. Our proven reputation for quality and technology leadership coupled with a robust ecosystem of partners across the world enables Panduit to deliver comprehensive solutions that unify the physical infrastructure to help our customers achieve operational and financial goals.

Our mission is to leverage the full portfolio and capabilities of Panduit, and our partner ecosystem, in order to support our customers in the design, development and deployment of infrastructure solutions that enable the achievement of superior results.

1955 Jack Caveney invents wiring duct, 1955

1959 First patent awarded for PanDuct®

1960 LOK-Strap cable tie is developed

1970 – 1979 Period led by international expansion

1980 Formal Research and Development facility established

1989 Network Connectivity Group established

1989 Panduit is the network infrastructure provider for the largest fibre communication network (150 mB ATM) in an enterprise (Sprint Campus in Kansas, City, MO USA)

1990 – 1999 Network Connectivity Group expands with Data Communications suite of products: copper and fibre connectivity, patch panels, racks, cabinets and cable management products

1990 Six Sigma implemented

1994 Fibre connectors and accessories launched to address longer length and high data transmission rates in the data centre

1999 Telecommunications Racks are introduced

1999 Cisco Systems selects Panduit as their infrastructure provider of choice and collaborates in designing new infrastructure solutions. Cisco is now a key Global Alliance Partner for Panduit.

2005 OptiCam® fibre optic connector is introduced

2006 High Speed Data Transport solutions is introduced to deliver reliable 10Gb/s performance

2007 EMC partnership established

2007 Fibre laboratory capabilities are UL certified

2008 Launches intelligent infrastructure management solutions

2009 Panduit, Cisco and Intel join forces to promote 10G over Ethernet

2009 Panduit partners with VCE to develop optimised cloud solutions

2011 Signature Core™ Fibre introduced

2012 Introduces new Energy Efficient Cabinet system for the Data Centre

2012 Panduit and Rockwell Automation expand longstanding relationship and form a strategic alliance to drive Ethernet to the factory floor.

2012 & 2013 For two years in a row, Panduit is awarded InformationWeek’s 500 Masters of Technology

2013 PanMPO™ connector is introduced to provide easy migration from 10G to 40G in the data centre

2013 Panduit introduces SmartZone™ Solutions for physical infrastructure management

2014 Panduit acquires SynapSense® to provide wireless monitoring and cooling control solutions for data centres

Advertisement: Server Technology

Both HDOT and Alternating Phase are available through Server Technology's “Build Your Own PDU” configurator. Build Your Own PDU takes a Switched, Smart or Metered 42-outlet High Density Outlet Technology (HDOT) PDU and allows you to build an HDOT PDU your way in Four Simple Steps. Choose a configuration. Download a spec sheet and request a quote in four simple steps.

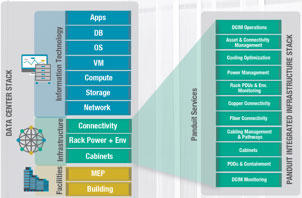

This is an overview of our Data Centre products and services portfolio:

At Panduit, we find that our customers don’t just have to worry about designing, procuring and implementing the right IT and facilities. They must also focus more than ever on controlling costs and conserving energy usage. The bottom line is they’re struggling to deploy a data center—whether that means reconfiguring their existing one or creating a new one—that addresses every need.

Converged Infrastructure: Panduit’s Converged Infrastructure solution offers unique value to the market by delivering a comprehensive and reliable infrastructure framework that bridges the gap between the traditional Facility and IT stacks to ensure a seamless physical to logical convergence. Given the enormous expense of operating data centres, organisations must design and deploy an architecture that is built to meet future needs, requiring scalability to meet changing business demands and optimisation of IT investments – delivering value throughout the data centre lifecycle. Panduit accelerates the design cycle, simplifies implementation, optimises operations and improves total cost of ownership.

Fibre Solution Set: Panduit High Density Fibre Solutions help customers maximize and transform their data centre space to accommodate NextGen technologies and evolve within IoT and beyond to IoE. Creating the most agile, scalable, high performance system in the market. As a cohesive set, HD Flex™ 2.0, PanMPO™ and Signature Core™ solve in tandem: network performance, system reliability, energy efficiencies, seamless integration, space and savings, installation and uptime, data transfer speed and migration to future demands.

Cooling Optimisation and Thermal Solutions: The one-size-fits-all approach of just maintaining a constant temperature to keep data centres cool is no longer a relevant solution with energy prices rising and capacity concerns become more prevalent. Panduit’s energy efficient cabinets and SynapSense® cooling optimisation solution allow higher data center set points and reduce cooling system energy consumption by up to 40%. From controlling small leaks, maintaining hot/cold air separation and deploying real-time monitoring and mapping of the data centre environment, ensures optimal energy efficiency and cooling energy savings across the operations.

The major pain points that Panduit has encountered at the data centres we visit are downtime and saving money via energy savings. Especially in colocation facilities, these two issues are top of mind and flow directly to the business’ bottom line. By far, the largest amount of operations budgets spent in the data centre are around cooling. Energy efficiency being the greatest opportunity for cost savings, Panduit often leads with SynapSense® Cooling Optimisation solutions. SynapSense is a turn-key wireless monitoring and cooling control solution that uses intelligent software, leading edge wireless nodes, and professional services to gain real-time visibility into current data centre operating conditions.