I guess that we can still rely on our old friends ‘death and taxes’ as further certainties, but 2020 has proved that there is no place for complacency in the fast-moving, even chaotic world in which we now live. While the world rushes headlong towards a digital future, we would do well not to ignore a relatively old, established discipline – history. While all manner of algorithms, machine learning and artificial intelligence crunch numbers in an attempt to predict the future of almost everything, a look at the past gives us a pretty accurate picture of what to expect in the future.

Those of a nervous disposition should look away now. For, much as we like to think that we have never had it so good in the relatively early years of the 21st century, the truth is that we are still wrestling with almost all of the issues which concerned our ancestors, and we have a few new ones added into the mix as well! Massive disparities between the haves and have-nots in society continue to exist; political instabilities across the globe remain a constant – while physical warfare is not always the result, cyber and financial warfare are very much on the rise; technology continues to create massive social change and unrest; dishonesty, corruption and plain old incompetence continue to dog governments across the world; and, a relatively new concept, climate change threatens to overshadow everything.

So, we should not be that surprised or indignant when history repeats itself in so many ways and so regularly. The question is, do we ever learn from what happened in the past, and use this learning to help us plan for the future?

And the answer to that question is likely to determine the future of your business. Okay, so no one could have predicted the exact timing or the scale of the Covid-19 pandemic, but how many businesses allowed for such an event (which history tells us was going to happen sooner or later) in their business continuity/disaster recovery plans? And how many businesses are agile and fast enough to move to address rapid changes?

In summary, expecting the unexpected has to be a part of any credible business plan.

Working with Kaspersky on its upcoming enterprise campaign, security practitioner Naveen Vasudeva, Founder and CEO of The CyberTree Paradox LLC, addresses the disconnect which currently exists between cybersecurity vendors and their customers. Naveen believes that, as companies wrestle with the complexities of security in the digital age, they need trusted partners, such as Kaspersky, who will work with them to help identify and implement best cybersecurity practice.

As many end users struggle to understand the complexities of cybersecurity, it seems right to ask whether the vendor community is doing enough to help customers (actual and potential) with this challenge. I think they are, but only to a certain degree and for a specific audience segment: the very technically minded. Unfortunately, within businesses this segment rarely makes decisions, because its members don’t have access to budgets and so aren’t held to account.

So, I would raise another question: where’s the thought leadership in the way that these technology companies are actually talking about cybersecurity and applying it to the real-life problems that these businesses face?

Vendors and end users must come together and find a way to communicate better. In particular, vendors have a key role to play in helping end users, many of whom may well be somewhat in the dark, to understand the key pillars of an end-to-end security strategy.

So, do end users fully appreciate cybersecurity strategies within their businesses? The answer depends on whether they’re an SMB or a large corporate; whether they’re regulated or not; and whether they’re compliance-driven or not.

I believe that organisations have an absolute responsibility to ensure that their end users are educated, are made aware and are trained correctly. Responsibility then passes to the vendors that supply and support the tools and technologies that help prevent breaches, malware and viruses, or that conduct penetration testing. They have to find a way of communicating what they’re doing so that the end user is, again, made aware and educated.

Within the wider conversation about responsibility, it’s important to acknowledge the importance of individual accountability. If a person works for a large organisation, they might argue that the company has a couple of “IT people” that are responsible for cybersecurity. Actually, a company of 250 employees has 250 people that are responsible for cybersecurity. This is where the mentality has to shift. I think we all have a good understanding of what it means to protect ourselves and to protect those around us, so it’s time to make sure that understanding is put into action.

In my opinion, vendors and customers need to address how they communicate with each other. Communication needs to flow both ways to ensure that the vendor is informed enough about how a business functions to service its cybersecurity needs adequately. Individuals within organisations may not have the necessary skills to understand all that they need to, and that’s where a vendor should step in to educate and mentor, but I acknowledge that this is a lot of responsibility to put on individual vendors.

The perfect situation would be a symbiotic relationship between vendor and customer, where the vendor understands the pain points and can suggest cost-effective fixes. At the same time, the customer accepts these suggestions and takes the advice of the vendor. This is why these companies outsource their security to a third party, right?

To make this sort of relationship work, vendors need to be transparent in what they’re trying to achieve, and I think that’s what’s really great about Kaspersky: the level of transparency in the way that they’re trying to deliver products and services. It’s not about selling a product. It’s about supporting a business in the delivery of the right cybersecurity strategy that’s going to help them.

In terms of moving forward, there needs to be a better way of vendors and end users working together. That’s one of the reasons why I teamed up with Kaspersky: to address this particular issue. And I’m so happy that they stepped up to take on the challenge, which is effectively this: Chief Information Security Officers (CISOs), or anyone who is accountable for cybersecurity within an organisation, have to start having more transparent, open dialogues with vendors. So let’s start with the practitioners.

The whole world has seen a massive shift come about in 2020 due to the global pandemic. We have all been affected in one way or another, and more people than ever are working from home. This change has resulted in a digital transformation for many businesses who suddenly found themselves desperate to transform and secure their systems to make remote working safe and efficient.

So if you think about organisations compressing what would have been two to three years’ worth of digital transformation into months’ worth of work, that’s a huge change in the security landscape. And therefore, you require a huge pool of vendors to help support the delivery of that transformation.

One thing that we might have missed in the rush to make home-working cyber secure was the communication piece. What I was witnessing within the industry was people complaining about the number of vendors trying to contact them, the number of people trying to sell them stuff, the number of people not listening to them.

And you could sense the hostility. Vendors were saying, ‘We’re trying to help you, we’re trying to provide services.’ But businesses saw it as another affront by vendors wanting to take up their time. Neither side was listening to the other.

So what would I recommend? We need to stop and assess what that relationship actually is, because it’s about trust. Forget the tools and the technology and the processes for a second.

If I believe in what somebody is trying to tell me about a solution, I want to build a relationship with that individual, and I want to trust them. So let’s distil it down to simple human engagement. Everyone’s time is valuable but when there’s something important to get across, that is worth taking the time to press pause.

Once that relationship is built, a company can then go on to build a business case because they believe in the solution being presented. The customer needs to understand how it all works so that they can become your representative in-house and defend that spend.

There’s a level of patience vendors need to have with businesses, especially now that we’re entering into a period of economic uncertainty. Cybersecurity has never been more important and businesses need to continue to invest to protect their assets.

My final piece of advice for any vendor wishing to create a relationship with their customers is to engage with working groups: effective working groups that debate and challenge what vendors are doing. I actually sit on multiple working groups for particular vendors, whether it’s governance, risk and compliance, threat intelligence or penetration testing. The idea is that, as part of a CISO group, I can say what works and what doesn’t work at a technical level, at an operational level and at a strategic level.

Moving on from this, it makes sense to understand what a basic checklist looks like for end users assessing a cybersecurity vendor such as Kaspersky. What is the sort of language you want to hear from them when they’re talking to you?

There are a lot of vendors out there. I think at the last count, in the UK alone, there were probably about 7,000 cybersecurity vendors, so it’s hard for customers to know who to trust.

There are a couple of pieces of advice I have to impart on this. One is that there are many different ways customers can be informed about what cybersecurity tools and services are out there. It is a really complex market. There are a couple of organisations that are now trying to challenge that. But people’s dependency has mainly been on the likes of Gartner and other analysts.

Businesses often rely on the Magic Quadrant; a lot of boards and CEOs are going to look at that and say, OK, let’s go in this direction. I don’t necessarily think that always gives an accurate picture of whether something is good or not. The individuals who then have responsibility for going down that path must ensure they do their own discovery, their own homework and their own assessment of the applicability of any solution.

My advice for customers is to put the question back to the vendor: tell me whether I need this or not. Is this actually appropriate for me? As an example, I’m worried about my intellectual property being stolen because I’m working on some top secret engineering project, but I’ve only got 10 people working in my company. So what do I need to do? Do I need some intelligence because I’m worried about spies coming in and stealing that data?

Do I need any additional protection to add to any malware or antivirus solutions I might have? Do I need to encrypt stuff? An opportunistic vendor would say, yes, sure, you need them. And then, before you know it, you can’t operate as a business because you’re so locked down that it’s non-functional. What I would like to see is those vendors saying, well, actually, there are multiple different things that you can see here that you need to achieve. Let’s break it down and do it in such a way that you understand what those business critical assets are. And let’s look to see how we can then apply that to tech. And see what that solution is. I still think it’s just too much about the products. And, as practitioners, we switch off when we start hearing about products, because there are 10 other products that do exactly the same thing.

I want a vendor’s expertise. I want Kaspersky’s expertise when it comes to intelligence because they are one of the key players in threat intelligence. All the efforts that they’re making in terms of transparency of their operations are key. And they are one of the only vendors I know who are doing that, which is excellent.

Gartner, Inc. has revealed its top strategic predictions for 2021 and beyond. Gartner’s top predictions explore the role of technology in resetting, restarting, and responding to a world of uncertainty.

“Technologies are being stressed to their limits, and conventional computing is hitting a wall,” said Daryl Plummer, distinguished research vice president and Gartner Fellow. “The world is moving faster than ever before, and it’s essential that technology and processes are able to keep up to support digital innovation needs. Starting now, CIOs can expect a decade of radical innovation led by nontraditional approaches to technology.

“The future technologies that will lead the ‘reset of everything’ have three key commonalities: they promote greater innovation and efficiency in the enterprise; they are more effective than the technologies that they are replacing; and they have a transformational impact on society.”

By 2024, 25% of traditional large enterprise CIOs will be held accountable for digital business operational results, effectively becoming “COO by proxy.”

After years of decline, the chief operating officer (COO) role is rising in prominence among born-digital companies. A COO is an essential component for digital success, as they understand both the business and the ecosystem in which it operates. The CIO, with an in-depth knowledge of the technology that facilitates business impact, can increase enterprise effectiveness by taking on components of the COO role to fuse technology and business goals.

“As more CIOs become accountable for the enterprise’s digital performance results, the trend of CIOs in highly digitalized traditional businesses reporting to the CEO will become a flood,” said Mr. Plummer.

By 2025, 75% of conversations at work will be recorded and analyzed, enabling the discovery of added organizational value or risk.

Conversations at work are shifting from traditional, real-time, face-to-face communications, to taking place over cloud meeting solutions, messaging platforms and virtual assistants. In most cases, such tools are keeping a digital record of those conversations. Analytics of conversations happening in the workplace will be used to not only help enterprises comply with existing laws and regulations, but also to help them predict future performance and behavior. As the use of these digital surveillance technologies increases, ethical considerations and actions that bring privacy rights to the forefront will be critical.

By 2025, traditional computing technologies will hit a digital wall, forcing the shift to new paradigms such as neuromorphic computing.

CIOs and IT executives will be unable to deliver on critical digital initiatives with current computing techniques. Technologies such as artificial intelligence (AI), computer vision and speech recognition, which demand substantial computing power, will become pervasive, and general-purpose processors will be increasingly unsuitable for these digital innovations.

“A variety of advanced computing architectures will emerge over the next decade,” said Mr. Plummer. “In the short-term, such technologies could include extreme parallelism, DNN-on-a-chip or neuromorphic computing. In the long-term, technologies such as printed electronics, DNA storage, and chemical computing will create a wider range of innovation opportunities.”

By 2024, 30% of digital businesses will mandate DNA storage trials, addressing the exponential growth of data poised to overwhelm existing storage technology.

As humanity’s computing needs evolve, more advanced systems will be required, capable of radical adaptation and resilience in complex and hostile environments. DNA is inherently resilient, capable of error checking and self-repair, which makes it an ideal data storage and computing platform for a range of applications.

“More information is being collected than ever before, but today’s storage technology has critical limitations on how long data can be stored and remain uncorrupted,” said Mr. Plummer. “With DNA storage, digital data is encoded in the nucleotide base pairs of a synthetic DNA strand. This provides a longevity that traditional storage mechanisms simply do not have.”
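To make the idea of encoding digital data in nucleotides a little more concrete, here is a minimal sketch in Python. It assumes a naive mapping of two bits to each of the four bases (A, C, G, T); the mapping and the function names `encode_to_dna` and `decode_from_dna` are illustrative assumptions, not a description of any real DNA storage product, which would also need error correction and constraints on the sequences it produces.

```python
# Illustrative only: a naive 2-bits-per-nucleotide mapping (an assumption,
# not the scheme used by any particular DNA storage system).
BASES = "ACGT"  # positions 0..3 stand for the bit pairs 00, 01, 10, 11


def encode_to_dna(data: bytes) -> str:
    """Turn each byte into four nucleotides, most significant bits first."""
    strand = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            strand.append(BASES[(byte >> shift) & 0b11])
    return "".join(strand)


def decode_from_dna(strand: str) -> bytes:
    """Reverse encode_to_dna: every four nucleotides become one byte."""
    if len(strand) % 4 != 0:
        raise ValueError("strand length must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)
```

Under this toy mapping, every byte costs four nucleotides, and decoding simply reverses the process, so `decode_from_dna(encode_to_dna(b"Hi"))` gives back the original two bytes.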

By 2025, 40% of physical experience-based businesses will improve financial results and outperform competitors by extending into paid virtual experiences.

The increasing capability of internet of things, digital twins and virtual and augmented reality (VR/AR) is making the provision of immersive experience more attractive and affordable to a wider range of consumers. This trend has been accelerated as the social effects of the pandemic have positively altered people’s attitudes towards remote and virtual engagement. Physical experience companies must begin building and acquiring skills in disciplines related to creating, delivering and supporting immersive, virtual experiences.

By 2025, customers will be the first humans to touch more than 20% of the products and produce in the world.

New technologies are automating an increasing number of human tasks, a trend that has been hyper-accelerated by the pandemic. This leads to new opportunities to rethink product design, material use, plant locations and use of resources. As automation becomes the new imperative, customers will increasingly become the first humans to touch manufactured products and agricultural produce.

“Automation is a new source of competitive advantage and disruption,” said Mr. Plummer. “For example, an intelligent machine may not squish the grapes in the same way a human packing them might. CIOs should see hyperautomation as a principle, not a project, as they move forward in updating their processes for the future.”

By 2025, customers will pay a freelance customer service expert to resolve 75% of their customer service needs.

Traditional customer service methods create bottlenecks and pain points for customers. Resolving service issues outside of official company channels is often more effective and creates a better customer experience. Rather than contacting the company directly, customers will increasingly turn to freelance customer service professionals who are experts in the technology for which they are seeking assistance. CIOs must look to partner with these freelancers early on to reduce the customer experience, brand and monetization risks created by third-party customer service providers.

By 2024, 30% of major organizations will use a new “voice of society” metric to act on societal issues and assess the impacts to their business performance.

The “voice of society” is the shared perspective of people in a community that drives the desire to represent and shift ethical values toward a commonly acceptable outcome. Business measurement tactics are expanding to include a focus on opinion-based metrics, such as voice of society, equal to that of more tangible metrics like click-through rates. Such measurement will become a C-Suite imperative so that business composition can react quickly to societal change.

“As we’ve seen time and time again, being tone deaf to societal issues can rapidly and irreparably hurt a brand,” said Mr. Plummer. “By responding to the voice of society, more product brand names or messages are going to be changed or dropped through next year than in the previous five years combined.”

By 2023, large organizations will increase employee retention by more than 20% through repurposing office space as onsite childcare and education facilities.

Global worker demand for childcare assistance is still unmet. This will become even more challenging in the wake of COVID-19, as Gartner predicts that by early next year, one-in-five private childcare centers will have closed their doors permanently. To meet increased demand, large companies will begin repurposing empty facility spaces for offerings that have a societal value-add, such as childcare or educational services. This will significantly increase employee satisfaction, productivity and retention, particularly among women in the workforce.

By 2024, content moderation services for user-generated content will be surveyed as a top CEO priority in 30% of large organizations.

With the social unrest of the past year, content volatility on social media has increased. For brand marketers and advertisers, this creates brand-safety concerns and other related challenges. Investing in content moderation, enforcement and reporting services will be critical for enterprises to understand the provenance of the content on their sites.

“In many cases, brands are going dark altogether on user-generated content platforms until appropriate policing measures are in place. Yet site and app publishers must walk the line between enforcing policies to provide a safe environment and being accused of censorship. Therefore, brand advertisers will become responsible for neutralizing polarizing content, and industry standards for content moderation will emerge,” said Mr. Plummer.

Six forces that will impact tech providers through 2025

Six forces in the IT industry will present a fundamental threat to technology and service providers (TSPs) through 2025, according to Gartner, Inc.

“Forces outside of a TSP’s control demand a response – adapt to thrive or struggle to survive,” said Rajesh Kandaswamy, research vice president at Gartner. “Impact from six forces are already being felt by providers today, but over the next five years Gartner expects these forces to accelerate trends and pose problems that will demand providers create new models, products and relationships to survive and ultimately succeed.”

The six forces Gartner expects will have the greatest impact on TSPs into 2025 are:

Disruption from Geopolitics and World Events

Increasing global trade tensions are the most significant geopolitical risk in terms of impacts to global markets. As providers seek to serve global customers and drive geographic expansion, both global trade tensions and the erosion of U.S.-China relations become significant influences in terms of product strategies, customer acquisition, business performance management, and corporate development. TSPs expecting to approach global markets in 2025 as they do in 2020 will be displaced by competition that incorporates these new realities into their business and operating models.

COVID-19 has made remote work become the standard across many organizations. While the increasingly digital nature of human interactions presents numerous benefits to providers, including reduced travel expenses and improved relevance and responsiveness to buyers, it can present some negative side effects. Gartner predicts that by 2025, loneliness, collaboration and communication obstacles will be the top workplace struggle for 50% of remote workers. TSPs must adapt their talent management strategies to mitigate this risk and be aware that this trend influences not only their employees and contingent workers, but customers and buyers alike.

Changing Customer Demand and Expectations

Through 2025, TSPs must adapt to changing buyers and buying conditions driven by transformed organizations and technology buyers within them. Business-driven and line of business (LOB)-resident technology buyers will drive more purchases, hastening moves to cloud products and platforms, investing more in automation and online interactions in order to optimize business processes and compete more effectively. Products will address a broader variety of vertical market requirements through tighter partnerships and integrations among providers. Additionally, customers who will demand a clearer picture upfront of the value such solutions will deliver will also require technology providers to measure results postimplementation. Those that can’t prove realized value will fail to grow or renew their customers.

Disruption from Emerging Technologies and Trends

Emerging technologies enable TSPs to enter new markets, strengthen their products and services, ward off competition, and become more efficient. The proliferation of new technologies presents opportunities and challenges for TSPs. The right levels of investment in the right emerging technologies at the right time are crucial for creating and capturing the most value from them.

Changing Industry Dynamics

Over the next five years, changing industry dynamics will force technology providers to adjust their strategies, routes to market, and their willingness to simultaneously collaborate and compete with other providers.

Challenges from New (and Old) Entrants

Changing industry dynamics and rapid development cycles make the dedicated pursuit of competitive intelligence an absolute must for technology providers. However, following the known list of competitors no longer is enough — TSPs must be particularly mindful of challenges from new entrants to the market. In some cases, providers in adjacent markets may move into new markets as a way of growing revenue and mind share.

TSPs should not only prepare for new and different types of competitors, but also consider ways to stay competitive. This may mean assessing purchasing models, ease of doing business, customer experience, generational demands and offerings — especially when many technology products and services will be built by nontechnology professionals.

“In the era where ‘every company is a technology company,’ product leaders will have to compete harder with former nontech providers, end users and megavendors for market share,” said Mr. Kandaswamy.

Disruptive Business Models

Through 2025, technological advancements, availability of capital and shorter development cycles will provide opportunities for innovative vendors leveraging disruptive business models. For example, leading providers will create generative solutions which create new value beyond traditional approaches through new combinations of information, technology and operations across an extended ecosystem. Gartner predicts that by 2025, the fastest growing major tech providers will generate 50% of revenue from generative or platform business models leveraging cloud computing.

Gartner, Inc. has revealed the top strategic technology trends that organizations need to explore in 2021.

“The need for operational resiliency across enterprise functions has never been greater,” said Brian Burke, research vice president at Gartner. “CIOs are striving to adapt to changing conditions to compose the future business. This requires the organizational plasticity to form and reform dynamically. Gartner's top strategic technology trends for 2021 enable that plasticity.

“As organizations journey from responding to the COVID-19 crisis to driving growth, they must focus on the three main areas that form the themes of this year’s trends: people centricity, location independence and resilient delivery. Taken together, these trends create a whole that is larger than its individual parts and focus on social and personal demand from anywhere to achieve optimal delivery.”

The top strategic technology trends for 2021 are:

Internet of Behaviors

The internet of behaviors (IoB) is emerging as many technologies capture and use the “digital dust” of people’s daily lives. The IoB combines existing technologies that focus on the individual directly – facial recognition, location tracking and big data, for example – and connects the resulting data to associated behavioral events, such as cash purchases or device usage.

Organizations use this data to influence human behavior. For example, to monitor compliance with health protocols during the ongoing pandemic, organizations might leverage IoB via computer vision to see whether employees are wearing masks or via thermal imaging to identify those with a fever.

Gartner predicts that by year-end 2025, over half of the world’s population will be subject to at least one IoB program, whether it be commercial or governmental. While the IoB is technically possible, there will be extensive ethical and societal debates about the different approaches employed to affect behavior.

Total Experience

“Last year, Gartner introduced multiexperience as a top strategic technology trend and is taking it one step further this year with total experience (TX), a strategy that connects multiexperience with customer, employee and user experience disciplines,” said Mr. Burke. “Gartner expects organizations that provide a TX to outperform competitors across key satisfaction metrics over the next three years.”

Organizations need a TX strategy as interactions become more mobile, virtual and distributed, mainly due to COVID-19. TX strives to improve the experiences of multiple constituents to achieve a transformed business outcome. These intersected experiences are key moments for businesses recovering from the pandemic that are looking to achieve differentiation via capitalizing on new experiential disruptors.

Privacy-Enhancing Computation

CIOs in every region face more privacy and noncompliance risks than ever before as global data protection legislation matures. Unlike common data-at-rest security controls, privacy-enhancing computation protects data in use while maintaining secrecy or privacy.

Gartner believes that by 2025, half of large organizations will implement privacy-enhancing computation for processing data in untrusted environments and multiparty data analytics use cases. Organizations should start identifying candidates for privacy-enhancing computation by assessing data processing activities that require transfers of personal data, data monetization, fraud analytics and other use cases for highly sensitive data.

Distributed Cloud

Distributed cloud is the distribution of public cloud services to different physical locations, while the operation, governance and evolution of the services remain the responsibility of the public cloud provider. It provides a nimble environment for organizational scenarios with low-latency, data cost-reduction needs and data residency requirements. It also addresses the need for customers to have cloud computing resources closer to the physical location where data and business activities happen.

By 2025, most cloud service platforms will provide at least some distributed cloud services that execute at the point of need. “Distributed cloud can replace private cloud and provides edge cloud and other new use cases for cloud computing. It represents the future of cloud computing,” said Mr. Burke.

Anywhere Operations

Anywhere operations refers to an IT operating model designed to support customers everywhere, enable employees everywhere and manage the deployment of business services across distributed infrastructures. It is more than simply working from home or interacting with customers virtually – it also delivers unique value-add experiences across five core areas: collaboration and productivity, secure remote access, cloud and edge infrastructure, quantification of the digital experience and automation to support remote operations.

By the end of 2023, 40% of organizations will have applied anywhere operations to deliver optimized and blended virtual and physical customer and employee experiences.

Cybersecurity Mesh

The cybersecurity mesh enables anyone to access any digital asset securely, no matter where the asset or person is located. It decouples policy enforcement from policy decision making via a cloud delivery model and allows identity to become the security perimeter. By 2025, the cybersecurity mesh will support over half of digital access control requests.

“The COVID-19 pandemic has accelerated the multidecade process of turning the digital enterprise inside out,” said Mr. Burke. “We’ve passed a tipping point — most organizational cyberassets are now outside the traditional physical and logical security perimeters. As anywhere operations continues to evolve, the cybersecurity mesh will become the most practical approach to ensure secure access to, and use of, cloud-located applications and distributed data from uncontrolled devices.”
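The decoupling of policy decision making from policy enforcement described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class names `PolicyDecisionPoint` and `PolicyEnforcementPoint` borrow common access-control terminology, the entitlement table is made up, and nothing here represents Gartner’s reference design or any vendor’s product.

```python
from typing import Dict, Set


class PolicyDecisionPoint:
    """Hypothetical central service: decides access purely from identity."""

    def __init__(self, entitlements: Dict[str, Set[str]]):
        # Maps a user identity to the set of assets it may reach.
        self._entitlements = entitlements

    def decide(self, user: str, resource: str) -> bool:
        return resource in self._entitlements.get(user, set())


class PolicyEnforcementPoint:
    """Hypothetical gate placed beside one asset, wherever that asset lives.

    It holds no policy of its own; it only enforces the central decision.
    """

    def __init__(self, resource: str, pdp: PolicyDecisionPoint):
        self.resource = resource
        self._pdp = pdp

    def access(self, user: str) -> str:
        if self._pdp.decide(user, self.resource):
            return f"{user} granted access to {self.resource}"
        return f"{user} denied access to {self.resource}"
```

In this sketch, a single decision point built from `{"alice": {"payroll-db"}}` could serve enforcement points sitting in a data centre, a cloud region or a home office alike, which is the sense in which identity, rather than network location, becomes the perimeter.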

Intelligent Composable Business

“Static business processes that were built for efficiency were so brittle that they shattered under the shock of the pandemic,” said Mr. Burke. “As CIOs and IT leaders struggle to pick up the pieces, they’re beginning to understand the importance of business capabilities that adapt to the pace of business change.”

An intelligent composable business radically re-engineers decision-making by accessing better information and responding more nimbly to it. For example, machines will enhance decision making in the future, enabled by a rich fabric of data and insights. Intelligent composable business will pave the way for redesigned digital business moments, new business models, autonomous operations and new products, services and channels.

AI Engineering

Gartner research shows only 53% of projects make it from artificial intelligence (AI) prototypes to production. CIOs and IT leaders find it hard to scale AI projects because they lack the tools to create and manage a production-grade AI pipeline. The road to AI production means turning to AI engineering, a discipline focused on the governance and life cycle management of a wide range of operationalized AI and decision models, such as machine learning or knowledge graphs.

AI engineering stands on three core pillars — DataOps, ModelOps and DevOps. A robust AI engineering strategy will facilitate the performance, scalability, interpretability and reliability of AI models while delivering the full value of AI investments.

Hyperautomation

Business-driven hyperautomation is a disciplined approach that organizations use to rapidly identify, vet and automate as many approved business and IT processes as possible. Although hyperautomation has been trending at an unrelenting pace for the past few years, the pandemic has heightened demand with the sudden requirement for everything to be “digital first.” The backlog of requests from business stakeholders has prompted more than 70% of commercial organizations to undertake dozens of hyperautomation initiatives as a result.

“Hyperautomation is now inevitable and irreversible. Everything that can and should be automated will be automated,” said Mr. Burke.

The COVID-19 pandemic has had widespread impact on the global economy, leaving many CIOs with the challenge of making immediate IT cost savings, according to Gartner, Inc.

IT spending is forecast to contract across all categories and regions in 2020. While businesses in most industries have begun to reopen, pandemic mitigation measures such as lockdowns, social distancing, travel restrictions and border shutdowns have created financial burdens, with the transportation, manufacturing and natural resources industries the most severely impacted.

Speaking at the recent Gartner IT Symposium/Xpo APAC, Chris Ganly, senior research director at Gartner, said COVID-19 has fundamentally transformed the way people are spending their money, and organizations simply have to respond.

Gartner advocates a strategic cost optimization approach: a continuous discipline of managing spending while maximizing business value, rather than simply cutting costs.

“Difficult times call for difficult actions,” said Mr. Ganly. “But even in organizations fighting to survive, CIOs need to approach cost cutting in the least damaging way to the medium- and long-term health of the business. This will help them recover faster in 2021 and beyond.”

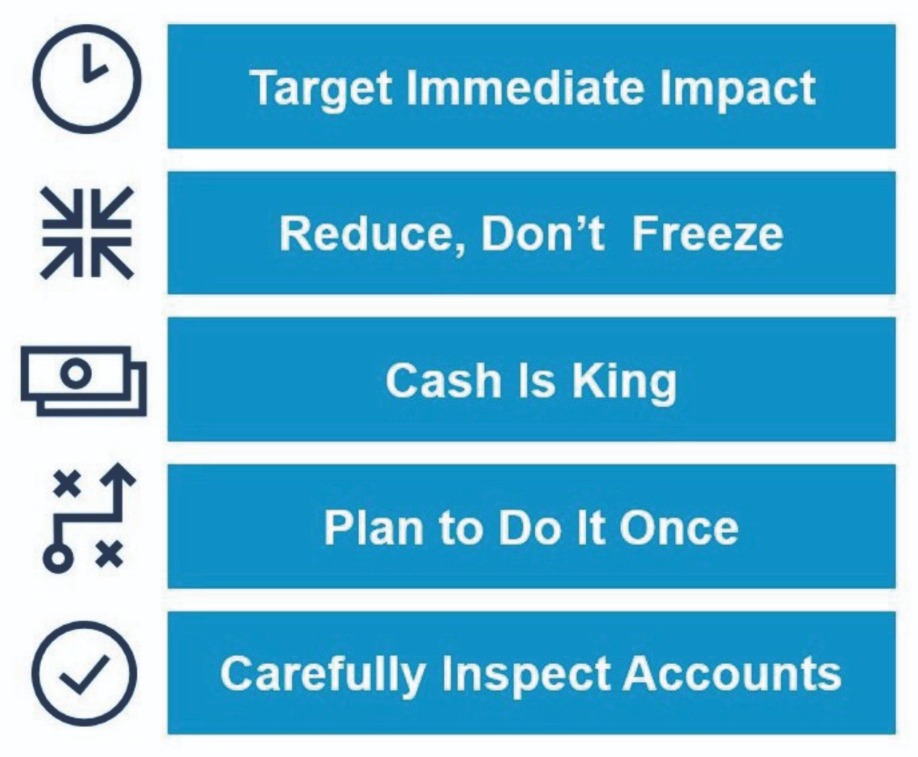

Gartner advises CIOs to follow 10 rules when faced with the need to cut IT budgets quickly (see Figure 1).

Figure 1: Ten Rules for Rapid IT Cost Reduction

Source: Gartner (October 2020)

1. Target immediate impact. Eliminate, reduce or suspend items that will hit the budget in weeks or months, not in years. Examples include expenses that are incurred and paid monthly or quarterly on a “pay as you go” basis, rather than annually.

2. Reduce, don’t freeze. Focus on costs that can truly be reduced or eliminated, not just frozen for the current period, only to reappear further down the line.

3. Cash is king. Target items that will have a real cash impact on the profit and loss statement rather than noncash items like depreciation or amortization.

4. Plan to do it once. Most organizations don’t cut deeply enough the first time, which means they often need to revisit costs and do it again. This is particularly relevant for staff cuts, where cycles of ongoing reductions can be very damaging.

5. Carefully inspect accounts. Work with your finance partner to obtain a solid view of the expense level detail, such as expense accounts, accruals and prepayments. Use this view to identify specific cash reductions that will immediately have an impact.

6. Target unspent and uncommitted expenses. Unless payments (or commitments) can be recovered or prepayments returned, the most immediate impact will be on unspent or uncommitted payments. Evaluate contracts for renegotiation and termination clauses.

7. Be holistic: include capital. Typically, operating expenditures are the easiest to impact, but capital expenditures can also be reduced. Gartner’s IT Key Metrics Data shows that 25% of the average IT budget is spent on capital, so ensure that the complete range of IT spend is considered for rapid reductions.

8. Sunk costs are irrelevant. When it comes to saving money, it is commonly said that “sunk costs are irrelevant,” meaning that future spend should be considered without relation to past spending or “sunk costs.” From a rapid cost reduction standpoint this is certainly true, but it’s still worth considering whether the saving will be more than the benefit that can and will be delivered by continuing.

9. Address discretionary and nondiscretionary cost. Discretionary spending, such as for new projects, additional capability or services, is often seen as an easier place to cut. However, even nondiscretionary “run the business” expenses such as IT infrastructure and operations can be cut by reducing usage or service levels.

10. Tackle both variable and fixed costs. Fixed costs are expenses that remain constant, regardless of activity or volume, such as office rent, subscriptions and payroll. For fixed costs, focus on elimination. Variable costs change with activity or volume, for example, telecommunications, contractors and consumables. For variable costs, focus on both reduction and elimination.

Top performing enterprises are prioritising digital innovation during the pandemic

Top performing enterprises are accelerating digital innovation and leveraging emerging technologies to come out stronger on the other side of the COVID-19 pandemic, which has arguably been the most significant “turn” in 2020, according to Gartner, Inc.’s annual global survey of CIOs. 2021 will be a race to digital, with the spoils going to those organizations that can maintain the momentum built up during their response to the pandemic.

“Nothing, yet everything, has changed for the CIO,” said Andy Rowsell-Jones, distinguished research vice president at Gartner. “The support for remote work that the COVID-19 pandemic brought on might be the biggest win for CIOs since Y2K. They now have the attention of the CEO, they have convinced senior business leaders of the need to modernize technology, and they have prompted boards of directors to accelerate enterprise digital business initiatives. CIOs must seize this moment, because they may never get another opportunity like it.”

The 2021 Gartner CIO Agenda survey gathered data from 1,877 CIO respondents in 74 countries and all major industries, representing approximately $4.7 trillion in revenue/public-sector budgets and $85 billion in IT spending.

Survey Reveals Four Ways CIOs Can Seize the Moment

The 2021 Gartner CIO Agenda survey revealed four ways in which CIOs can make a difference both in digital business acceleration and in long-term agility: win differently, unleash force multipliers, banish drags and redirect resources.

Win Differently

CIOs can help the enterprise anticipate the increasingly digital interactions expected by customers. Seventy-six percent of survey respondents said that demand for new digital products and services increased in 2020, with even more respondents (83%) reporting that it will increase in 2021.

“This is a watershed moment for CIOs,” said Mr. Rowsell-Jones. “There is no going back to the way business used to be.”

The survey uncovered two areas of customer digitalization where top performers* are significantly more aggressive than typical performers: the use of digital channels to reach customers and achieve citizen engagement, along with the rate of introduction of new digital products and services. Nine out of ten of the top performers are pursuing digital channels, and almost three-quarters are introducing digital products faster.

Organizations that have increased their use of digital channels to reach customers are 3.5 times more likely to be a top performer than a trailing performer. “Those at the top have gone all-in on digital business, and they have developed the capabilities to allow them to do it,” said Mr. Rowsell-Jones.

Unleash Force Multipliers

Respondents were asked to characterize certain changes related to enterprise IT leadership trends as a result of the pandemic. Roughly 70% of CIOs deepened their knowledge of specific business processes to advise the business, and the same proportion did more to measure and articulate the value of IT.

“Although the COVID-19 response appeared to be a simple exercise of deploying PCs, it created profound opportunities for CIOs,” said Mr. Rowsell-Jones. “CIOs were able to refocus IT leadership around digital business acceleration and remodel the enterprise’s core technology. At one point or another, every CIO got a chance to shine during COVID-19.”

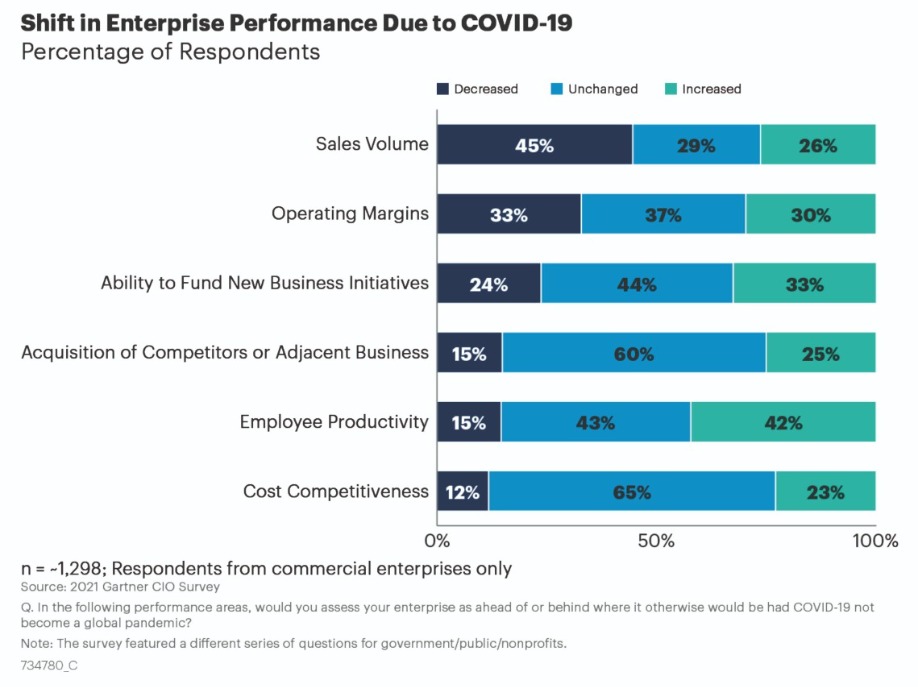

Banish Drags

The survey found that CIOs can help accelerate digital by systemically seeking out and eliminating drags (e.g., detrimental supplier performance during COVID-19). While most respondents reported they were behind in sales volumes during the pandemic, only 29% of top performers reported a decrease in sales volume, versus 45% of typical performers and 62% of trailing performers.

However, there were still a few areas that stood strong: respondents reported increased performance for new business initiatives, acquisitions, cost competitiveness, and employee productivity (see Figure 2).

Figure 2: Shift in Enterprise Performance Due to COVID-19 (% of Respondents)

Source: Gartner (October 2020)

“Although revenue took a big hit, CIOs decided to fight back rather than go into a defensive crouch,” said Mr. Rowsell-Jones.

When asked about shifts in demand, 58% of top performers reported an increase in demand from new post-COVID customers versus 49% for the typical group and 37% for those trailing.

Redirect Resources

Survey respondents projected a 2% IT budget increase for 2021, on average – slightly down from the 2020 survey (2.8%). In order to direct investments and people toward new business priorities in the Renewal phase, top performers are leaning into this shift more than typical or trailing performers, with 63% of top performers stating funding for digital innovation has increased and only 52% of typical performers reporting the same. Organizations that have increased their funding of digital innovation are 2.7 times more likely to be a top performer than a trailing performer.

“Top performers got a head start because their CIOs faced fewer constraints,” said Mr. Rowsell-Jones. “They were more likely to secure additional IT funding to support experimentation than their typical and trailing counterparts.”

CIOs are Continuing to Prioritize Cybersecurity Investments

CIOs reported investment shifts toward technologies that support digitalization. With the opening of new attack surfaces due to the shift to remote work, cybersecurity spending continues to increase. 61% of respondents are increasing investment in cyber/information security, followed closely by business intelligence and data analytics (58%) and cloud services and solutions (53%).

“Last year, I told CIOs that success in 2020 meant increasing the preparedness of both the IT organization and the enterprise as a whole to withstand impending business disruption,” said Mr. Rowsell-Jones. “This truth came at enterprises full force with the COVID-19 pandemic. In 2021, CIOs must build on the momentum they created for their enterprises and continue to be involved in higher-value, more strategic initiatives. The better CIOs perform for the business, the more the business will ask of them next year.”

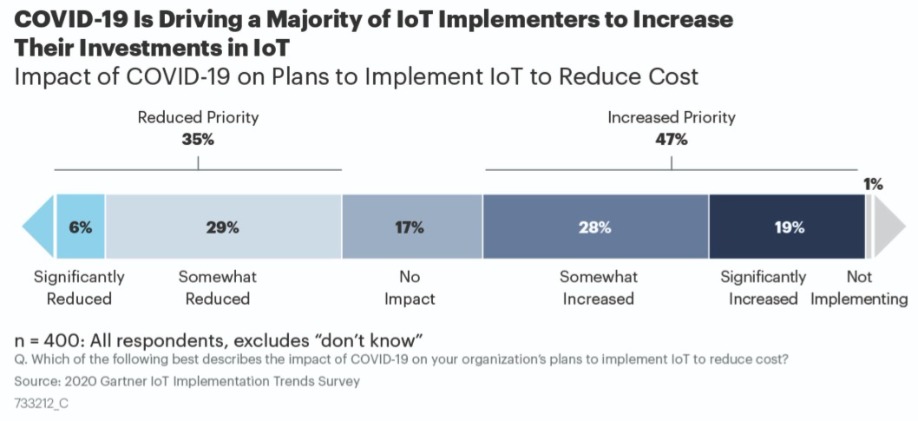

IoT investments to increase

Despite the disruptive impacts of COVID-19, 47% of organizations plan to increase their investments in the Internet of Things (IoT), according to a recent survey from Gartner, Inc.

Following the COVID-19 lockdown, the survey found that 35% of organizations reduced their investments in IoT while a larger number of organizations are planning to invest more in IoT implementations to reduce costs (see Figure 3).

Figure 3. Impact of COVID-19 on Plans to Implement IoT to Reduce Costs

Source: Gartner (October 2020)

One reason behind the increase is that while companies have a limited history with IoT, IoT implementers produce a predictable ROI within a specified timeframe. “They use key performance indicators (KPIs) to track their business outcomes and for most of them they also specify a time frame for financial payback of their IoT investments, which is on the average three years,” said Benoit Lheureux, research vice president at Gartner.

In addition, as IoT investments are relatively new, most companies have plenty of “low hanging fruit” cost-saving opportunities to pursue, such as predictive-maintenance on commercial and industrial assets like elevators or turbines, and optimization of processes such as increasing manufacturing yield.

Digital Twins and AI Drive IoT Adoption

As a result of COVID-19, 31% of survey respondents said that they use digital twins to improve employee or customer safety, for example by using remote asset monitoring to reduce the frequency of in-person monitoring of hospital patients or mining operations.

The survey showed that 27% of companies plan to use digital twins as autonomous equipment, robots or vehicles. “Digital twins can help companies recognize equipment failures before they stall production, allowing repairs to be made early or at less cost,” said Mr. Lheureux. “Or a company can use digital twins to automatically schedule the repair of multiple pieces of equipment in a manner that minimizes impact to operations.”

Gartner expects that by 2023, one-third of mid-to-large-size companies that implemented IoT will have implemented at least one digital twin associated with a COVID-19-motivated use case.

The enforcement of safety measures has also fueled the adoption of artificial intelligence (AI) in the enterprise. Surveyed organizations said they have applied AI techniques in a pragmatic manner. Twenty-five percent of organizations are favoring automation (through remote access and zero-touch management), while 23% are choosing procedure compliance (safe automation measures) in order to reduce COVID-19 safety concerns. For example, organizations can monitor work areas using AI-enabled analysis of live video feeds to help enforce safe social distancing compliance in high-traffic areas such as restaurants and manufacturing lines.

Gartner expects that by 2023, one-third of companies that have implemented IoT will also have implemented AI in conjunction with at least one IoT project.

Worldwide IT spending to grow 4% in 2021

Worldwide IT spending is projected to total $3.8 trillion in 2021, an increase of 4% from 2020, according to the latest forecast by Gartner, Inc. IT spending in 2020 is expected to total $3.6 trillion, down 5.4% from 2019.

“In the 25 years that Gartner has been forecasting IT spending, never has there been a market with this much volatility,” said John-David Lovelock, distinguished research vice president at Gartner. “While there have been unique stressors imposed on all industries as the ongoing pandemic unfolds, the enterprises that were already more digital going into the crisis are doing better and will continue to thrive going into 2021.”

All IT spending segments are forecast to decline in 2020 (see Table 1). Enterprise software is expected to have the strongest rebound in 2021 (7.2%) due to the acceleration of digitalization efforts by enterprises supporting a remote workforce, delivering virtual services such as distance learning or telehealth, and leveraging hyperautomation to ensure pandemic-driven demands are met.

Table 1. Worldwide IT Spending Forecast (Millions of U.S. Dollars)

| Segment | 2019 Spending | 2019 Growth (%) | 2020 Spending | 2020 Growth (%) | 2021 Spending | 2021 Growth (%) |
| --- | --- | --- | --- | --- | --- | --- |
| Data Center Systems | 214,911 | 1.0 | 208,292 | -3.1 | 219,086 | 5.2 |
| Enterprise Software | 476,686 | 11.7 | 459,297 | -3.6 | 492,440 | 7.2 |
| Devices | 711,525 | -0.3 | 616,284 | -13.4 | 640,726 | 4.0 |
| IT Services | 1,040,263 | 4.8 | 992,093 | -4.6 | 1,032,912 | 4.1 |
| Communications Services | 1,372,938 | -0.6 | 1,332,795 | -2.9 | 1,369,652 | 2.8 |
| Overall IT | 3,816,322 | 2.4 | 3,608,761 | -5.4 | 3,754,816 | 4.0 |

Source: Gartner (October 2020)
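The growth columns in Table 1 follow from the spending columns by a simple year-over-year calculation. As a quick check, the Python sketch below recomputes them from the figures in the table. The function name `yoy_growth` and the dictionary layout are illustrative choices rather than part of Gartner's methodology, and the 2019 growth column cannot be recomputed because 2018 spending is not shown.

```python
# Spending in millions of U.S. dollars, copied from Table 1: (2019, 2020, 2021).
SPENDING = {
    "Data Center Systems": (214_911, 208_292, 219_086),
    "Enterprise Software": (476_686, 459_297, 492_440),
    "Devices": (711_525, 616_284, 640_726),
    "IT Services": (1_040_263, 992_093, 1_032_912),
    "Communications Services": (1_372_938, 1_332_795, 1_369_652),
    "Overall IT": (3_816_322, 3_608_761, 3_754_816),
}


def yoy_growth(previous: float, current: float) -> float:
    """Year-over-year growth in percent."""
    return (current - previous) / previous * 100


for segment, (y2019, y2020, y2021) in SPENDING.items():
    g2020 = yoy_growth(y2019, y2020)
    g2021 = yoy_growth(y2020, y2021)
    print(f"{segment:<24} 2020: {g2020:+5.1f}%   2021: {g2021:+5.1f}%")
```

Rounded to one decimal place, the recomputed percentages agree with the 2020 and 2021 growth columns in the table.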

Spending on data center systems will experience the second-highest growth, at 5.2% in 2021, as hyperscalers accelerate global data center build-out and regular organizations resume data center expansion plans and allow staff to be physically back onsite.

Despite the increase in cloud activity in 2020 as organizations shifted to a remote-work-first environment, enterprise cloud spending – which falls into multiple categories – will not be reflected in vendors’ revenue until 2021.

“The spending slowdown that took place from roughly April through August of this year, coupled with cloud service providers’ ‘try before you buy’ programs, is shifting cloud revenue out of 2020,” said Mr. Lovelock. “Cloud had a proof point this year — it worked throughout the pandemic, it scaled up and it scaled down. This proof point will allow for accelerated penetration of cloud through 2022.”

“With revenue uncertainty promoting cash from being King to being Emperor, CIOs are now prioritizing IT projects where the time to value is lowest,” said Mr. Lovelock.

“Companies have more IT to do and less money to do it, so they are pulling money out of the areas they can afford, such as mobile phone and printer refreshes, which is why there will be less growth in the devices and communications services segments,” added Mr. Lovelock. “Instead, CIOs are spending more in areas that will accelerate their digital business, such as IaaS or customer relationship management software.”

Moving forward, digital transformation will not be subject to the same ROI justification it was pre-pandemic as the mandate for IT becomes business survival, rather than growth.

Accelerated DX investments create economic gravity as companies build on existing strategies and investments, becoming digital-at-scale future enterprises.

International Data Corporation has unveiled IDC FutureScape: Worldwide Digital Transformation 2021 Predictions. According to the new report, despite a global pandemic, direct digital transformation (DX) investment is still growing at a compound annual growth rate (CAGR) of 15.5% from 2020 to 2023 and is expected to approach $6.8 trillion as companies build on existing strategies and investments, becoming digital-at-scale future enterprises.

The predictions from the IDC FutureScape for Worldwide Digital Transformation are:

According to Shawn Fitzgerald, research director, Worldwide Digital Transformation Strategies, "Organizations with new digital business models at their core that are successfully executing their enterprise-wide strategies on digital platforms are well positioned for continued success in the digital platform economy. Our 2021 digital transformation predictions represent areas of notable opportunity to differentiate your own digital transformation strategic efforts."

Worldwide CIO agenda 2021 predictions

While technology is a critical factor, protecting and promoting the health, safety, and welfare of all key stakeholders is the lynchpin to gaining trust and loyalty, the foundations of business success today.

As the COVID-19 pandemic unfolded, CIOs faced epic challenges, and the road to recovery stretches ahead. For many business leaders, recovery isn't just a return to their former state, but a top-to-bottom rethinking of what business they need to be in and how it must be run. To support CIOs and IT leaders in this endeavor, International Data Corporation (IDC) has unveiled the IDC FutureScape: Worldwide CIO Agenda 2021 Predictions. As the chief owners of the digital infrastructure that underpins all aspects of modern enterprises, CIOs must play pivotal roles in the road to recovery, “seeking the next normal” while still performing their traditional roles. This new IDC study outlines concrete actions that CIOs can and must take to create resilient and adaptive future enterprises with technology.

IDC analysts Joe Pucciarelli and Serge Findling recently presented the key predictions that will impact CIOs and IT professionals worldwide over the next one to five years. With the insights and guidance of IDC's global CIO Agenda team, senior IT leaders and line-of-business executives will be armed with the tools and strategies needed to effectively manage and communicate their IT investment priorities and implementation strategies, leading IT through the "next normal."

"In a time of turbulence and uncertainty, CIOs and senior IT leaders must discern how IT will enable the future growth and success of their enterprise while ensuring its resilience," said Findling, vice president of Research for IDC's IT Executive Programs (IEP). "The ten predictions in this study outline key actions that will define the winners in recovering from current adverse events, building resilience, and enabling future growth."

The predictions from the IDC FutureScape for Worldwide CIO Agenda are:

Prediction 1 - #CIOAIOPS: By 2022, 65% of CIOs will digitally empower and enable front-line workers with data, AI, and security to extend their productivity, adaptability, and decision-making in the face of rapid changes.

Prediction 2 - #Risks: Unable to find adaptive ways to counter escalating cyberattacks, unrest, trade wars, and sudden collapses, 30% of CIOs will fail in protecting trust, the foundation of customer confidence, by 2021.

Prediction 3 - #TechnicalDebt: Through 2023, coping with technical debt accumulated during the pandemic will shadow 70% of CIOs, causing financial stress, inertial drag on IT agility, and "forced march" migrations to the cloud.

Prediction 4 - #CIORole: By 2023, global crises will make 75% of CIOs integral to business decision making as digital infrastructure becomes the business OS while moving from business continuation to re-conceptualization.

Prediction 5 - #Automation: To support safe, distributed work environments, 50% of CIOs will accelerate robotization, automation, and augmentation by 2024, making change management a formidable imperative.

Prediction 6 - #RollingCrisis: By 2023, CIO-led adversity centers will become a permanent fixture in 65% of enterprises, focused on building resilience with digital infrastructure, and flexible funding for diverse scenarios.

Prediction 7 - #CX: By 2025, 80% of CIOs alongside LOBs will implement intelligent capabilities to sense, learn, and predict changing customer behaviors, enabling exclusive customer experiences for engagement and loyalty.

Prediction 8 - #Low/NoCode: By 2025, 60% of CIOs will implement governance for low/no-code tools to increase IT and business productivity, help LOB developers meet unpredictable needs, and foster innovation at the edge.

Prediction 9 - #ControlSystems: By 2025, 65% of CIOs will implement ecosystem, application, and infrastructure control systems founded on interoperability, flexibility, scalability, portability, and timeliness.

Prediction 10 - #Compliance: By 2024, 75% of CIOs will absorb new accountabilities for the management of operational health, welfare, and employee location data for underwriting, health, safety, and tax compliance purposes.

The COVID-19 pandemic has largely proven to be an accelerator of cloud adoption and extension and will continue to drive a faster conversion to cloud-centric IT. According to a new whole cloud forecast from International Data Corporation (IDC), total worldwide spending on cloud services, the hardware and software components underpinning cloud services, and the professional and managed services opportunities around cloud services will surpass $1.0 trillion in 2024 while sustaining a double-digit compound annual growth rate (CAGR) of 15.7%.

"Cloud in all its permutations – hardware/software/services/as a service as well as public/private/hybrid/multi/edge – will play ever greater, and even dominant, roles across the IT industry for the foreseeable future," said Richard L. Villars, group vice president, Worldwide Research at IDC. "By the end of 2021, based on lessons learned in the pandemic, most enterprises will put a mechanism in place to accelerate their shift to cloud-centric digital infrastructure and application services twice as fast as before the pandemic."

The strongest growth in cloud revenues will come in the as a service category – public (shared) cloud services and dedicated (private) cloud services. This category, which is also the largest category in terms of overall revenues, is forecast to deliver a five-year CAGR of 21.0%. By 2024, the as a service category will account for more than 60% of all cloud revenues worldwide. The services category, which includes cloud-related professional services and cloud-related management services, will be the second largest category in terms of revenue but will experience the slowest growth with an 8.3% CAGR. This is due to a variety of factors, including greater use of automation in cloud migrations. The smallest cloud category, infrastructure build, which includes hardware, software, and support for enterprise private clouds and service provider public clouds, will enjoy solid growth (11.1% CAGR) over the forecast period.
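To make these growth rates concrete, recall that a compound annual growth rate r sustained for n years multiplies the starting value by (1 + r)^n. The short Python sketch below applies that standard formula to the three category CAGRs quoted above. It assumes the same five-year period for all three categories, the helper names are illustrative, and the result is a growth multiple relative to the base year rather than a dollar figure, since base-year revenues are not given here.

```python
def growth_multiple(cagr_percent: float, years: int) -> float:
    """Total growth factor after compounding `cagr_percent` for `years` years."""
    return (1 + cagr_percent / 100) ** years


def implied_cagr_percent(start_value: float, end_value: float, years: int) -> float:
    """Inverse calculation: the CAGR implied by a start value, end value and period."""
    return ((end_value / start_value) ** (1 / years) - 1) * 100


# CAGRs quoted in the text for each cloud category (five-year period assumed for all).
CATEGORY_CAGR = {
    "As a service": 21.0,
    "Services": 8.3,
    "Infrastructure build": 11.1,
}

for category, rate in CATEGORY_CAGR.items():
    multiple = growth_multiple(rate, years=5)
    print(f"{category:<22} {rate:>5.1f}% CAGR -> {multiple:.2f}x over five years")
```

On this arithmetic, a 21.0% CAGR compounds to roughly 2.6 times the base-year value after five years, against about 1.5 times for an 8.3% CAGR, which is why the as a service category pulls so far ahead of the others.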

While the impact of COVID-19 could have some negative effects on cloud adoption over the next several years, there are a number of factors that are driving the cloud market forward.

· The ecosystem of tech companies helping customers migrate to cloud environments, create new innovations in the cloud, and manage their expanding cloud environments will enable enterprises to meet their accelerated schedules for moving to cloud.

· The emergence of consumption-based IT offerings, which are aimed at leveraging public cloud-like capabilities in an on-premises environment, reduces the complexity and restructures the cost for enterprises that want additional security, dedicated resources, and more granular management capabilities.

· The adoption of cloud services should enable organizations to shift IT from maintenance of legacy IT to new digital transformation initiatives, which can lead to new business revenue and competitiveness as well as create new opportunities for suppliers of professional services.

· Hybrid cloud has become central to successful digital transformation efforts by defining an IT architectural approach, an IT investment strategy, and an IT staffing model that ensures the enterprise can achieve the optimal balance across dimensions without sacrificing performance, reliability, or control.

Recent IDC data shows that actual market performance has been stronger than suggested by survey and market indicators, especially in the U.S., due largely to cloud and remote work support. Service provider investments to meet demand for cloud and digital services are stable compared to other sectors and remote work/learning has driven stronger PC volume and a greater focus on security for the year. "Overall information and communications technology (ICT) spending is expected to have a 5% compound annual growth rate (CAGR) through 2024. In terms of total IT spending, we are seeing a more shallow V-shaped drop this year. Total IT spending will drop to about 1% growth this year, but this is far stronger than the 3% decline that was expected earlier in the year," said IDC President Crawford Del Prete.

In a recent IDC survey, 42% of technology decision makers indicated that their organizations plan to invest in technology to close the digital transformation gap. "The pandemic created a business necessity for increasing technology investment and accelerating digital transformation timetables," said Meredith Whalen, Chief Research Officer at IDC. "What we are learning is that many of these initiatives that started as ways to mitigate the economic impact of COVID-19 have become permanent roadmap requirements for Future Enterprise success in the digital economy."

IDC's outlook for the Future Enterprise identifies three overarching initiatives that directly link technology investment to digital transformation efforts – creating digital parity across the workforce, designing for new customer demands, and accelerating automation initiatives.

Creating Digital Parity

Before the pandemic, organizations, on average, had only 14% of their employees working from home. That percentage has increased dramatically – to 45% – and many organizations anticipate that work-from-home employees will remain a large proportion of the workforce going forward. Supporting hybrid workforces and ensuring that remote and work-from-home employees have the same sets of connectivity and productivity tools as their in-office counterparts will be essential to long-term success.

· Prediction: By 2023, 75% of the G2000 will commit to providing technical parity to a workforce that is hybrid by design rather than by circumstance, enabling them to work together separately and in real-time.

· Prediction: By 2022, an additional $2 billion will be spent on desktop and workspace as a service by the G2000, as 75% of them incorporate employees' home network/workspace as part of the extended enterprise environment.

Designing for New Customer Demands

Almost half (47.6%) of all U.S. consumers are "very concerned" about their personal health as it relates to the COVID-19 virus, according to IDC's recent U.S. consumer survey. This concern for safety has spurred many businesses to create new contactless consumer experiences, including curbside pickup. Enterprises will also invest in design and user interface requirements for contactless process automation with an emphasis on voice-based experiences and self-service options through mobile apps.

· Prediction: By 2023, 75% of grocery ecommerce orders will be picked up curbside or in store, driving a 35% increase in investment in onsite or nearby micro-fulfillment centers.

· Prediction: In 2021, 40% of development activities will reprioritize design and user interface to support contactless process automation.

Accelerating Automation Initiatives

Enterprises will increasingly adopt automated IT operations practices to support the greater scale required for digitally driven enterprises. Robotic process automation (RPA), robotics, and artificial intelligence (AI) technologies will play a more important role in labor automation while a continued focus on autonomous operations will drive investment in Digital Engineering organizations and digital operations technologies.

· Prediction: By 2022, 45% of repetitive work tasks will be automated and/or augmented by using "digital co-workers," powered by AI, robotics, and RPA.

· Prediction: By 2023, 75% of Global 2000 IT organizations will adopt automated operations practices to transform their IT workforce to support unprecedented scale.

COVID-19's Impact on Industries

The COVID-19 pandemic has created unique situations for specific industries, including healthcare, hospitality, retail, and small and medium businesses (SMBs), requiring them to rethink the way they use technology to engage with customers.

· Healthcare: Telemedicine will be a permanent fixture going forward. With nearly a third of consumers interested in having a telemedicine option post-pandemic, healthcare providers are predicted to increase spending by 70% on connected health technologies by 2023.

· Hospitality: Despite being an industry known for people-based services, 85% of hospitality brands will implement self-service technologies by 2021, changing how they engage with guests.

· Restaurants: Restaurants have taken the economic brunt of the pandemic and many have turned to home delivery out of necessity. Post-pandemic, 30% of restaurants using third party delivery platforms will deploy native delivery options to eliminate third-party fees, increasing profit by 25%.

· Retail: Contactless payments have seen increased adoption during the pandemic and will be viewed as a customer experience imperative going forward, causing 85% of retailers to offer at least two contactless payment options by 2023.

· SMBs: At least 30% of SMBs will fail by 2021 leading to a new wave of microbusiness-powered and ecosystem-first disruptors by 2023. These microbusinesses will be single employees that leverage the power of a digital platform to obtain and fulfill work.

You know you need AI. You have already committed to delivering AI, and you’re working to build your organisation into an AI-driven enterprise. To do so, you have hired top-notch data scientists and invested in data science tools, which is a great start. Yet, somehow your AI projects are still not getting off the ground.

By Sivan Metzger, Managing Director of MLOps & Governance at DataRobot.

Research shows that the share of AI models deemed ‘production worthy’ but never put into production is anywhere between 50 and 90%. A recent survey by NewVantage Partners showed that only 15% of leading enterprises have deployed any AI into widespread production. These staggering figures mean that models are being built and then going nowhere. So why is this so difficult? What are leaders and teams missing? What can they do to finally obtain the long-anticipated value from AI?

Data scientists typically do not see their role as including production rollout and management of their models, and IT or Operations, who usually own all production services across the company, are reluctant to take ownership of machine learning services. This is natural from their perspective, as neither group is even remotely familiar with the other’s concerns and considerations. However, without bridging the gap between them, progress will simply not happen.

Enter MLOps. MLOps precisely solves this challenge by bridging this inherent gap between data teams and IT Ops teams, providing the capabilities that both teams need to work together to deploy, monitor, manage, and govern trusted machine learning models in production without introducing unnecessary risk to their organisation.

What is MLOps?

MLOps, which takes its name from a mature industry process for managing software in production by the name of DevOps, is a practice for alignment and collaboration between data scientists and Operations professionals to help manage production machine learning. This ensures that the burden of productionalising AI does not entirely rest on the data scientist’s shoulders. It also ensures that a data scientist doesn’t just throw a model ‘over the wall’ to the IT team and then forget about it.

Additional issues stem from the fact that machine learning models are typically written in various languages on MLDev platforms unfamiliar to Ops teams, while these models also need to technically run in complex production environments that are unfamiliar to the data teams. Therefore, typically the models ignore the sensitivities and characteristics of those environments and systems. Needless to say, this only amplifies the gaps.

What problems does it solve?

With MLOps, users have a single place to deploy, monitor, and manage all of their production models in a fully governed manner, regardless of how they were created or where they are to be deployed. This is essentially the ‘missing link’ between creating machine learning models and actually obtaining business value from them.

MLOps removes the inherent business risk that comes with deploying machine learning models without monitoring them, and ensures that processes are in place for problem resolution and model replacement. These capabilities are particularly important during these tumultuous times. It also ensures that all centralised production machine learning processes work under a robust governance framework across your organisation, leveraging and sharing the load of production management with additional teams you already have in place.

DataRobot’s MLOps centralised hub allows you to foster collaboration between data science and IT teams, while maintaining control over your production machine learning to scale up your AI business adoption with confidence.

How do organisations derive value with AI?

With the right processes, tools, and training in place, businesses will be able to reap many benefits from AI using MLOps, including gaining insight into areas where the data might be skewed. One of the many frustrating parts of running AI models, especially right now, is that the data is constantly shifting. With MLOps, businesses can quickly identify and act on new information in order to retrain production models on the latest data, using the data pipeline, algorithms, and code used to create the original.
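As an illustration of how shifting data might be detected, the minimal sketch below compares a feature's training-time values with recent production values using the Population Stability Index (PSI), one common drift measure. It is not DataRobot's method; the function names, the bin count, and the 0.2 threshold (a widely quoted rule of thumb) are all assumptions made for this example.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    recent: Sequence[float],
    bins: int = 10,
) -> float:
    """Compare two samples of one numeric feature; larger values mean more shift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(recent)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def needs_retraining(
    baseline: Sequence[float],
    recent: Sequence[float],
    threshold: float = 0.2,  # hypothetical cut-off; tune per model and feature
) -> bool:
    """Flag a model for retraining when the measured drift exceeds the threshold."""
    return population_stability_index(baseline, recent) > threshold
```

In practice a check like this would run on a schedule for each important input feature, and a flagged model would be retrained through the same pipeline that produced the original.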

The outline above is what allows users to scale AI services in production, while also minimising risk. Scaling AI across the enterprise is easier said than done. There can be numerous roadblocks that stand in the way, such as lack of communication between the IT and data science teams or lack of visibility into AI outcomes. With MLOps, you can support multiple types of machine learning models created by different tools, as well as supporting software dependencies needed by models across different environments.

Adopting MLOps best practices, processes, and technologies will get your AI projects out of the lab and into production where they can generate value and help transform your business. With MLOps, your data science and IT Operations teams can collaborate to deploy and manage models in production using interfaces that are relevant for each role. You can continuously improve model performance with proactive management and, last but not least, you can reduce risk and ensure regulatory compliance with a single system to manage and govern all your production models.

Put it all together and you can lead a true transformation throughout your organisation. By bridging the gap between IT and data science teams, you can truly adopt AI and demonstrate value from it, while ensuring that you can scale production of your models, eliminating all unnecessary AI-related risk for your company.

COVID-19 has widened the gap between digitally advancing and digitally struggling organizations. Our recent research found that when it comes to AI, 78% of high-performing AI organizations – those who had deployed AI at scale within their organization – continued to progress their initiatives at the same pace as before the pandemic. On the other hand, more than half of the organizations who were struggling to implement AI had to pull their projects.

By Valerie Perhirin, Managing Director, Insight-Driven Enterprise, Capgemini Invent.

However, the demand for AI-based technologies is soaring, even among the general population. Almost two thirds of consumers expect to increase the use of touchless interactions through voice assistants, facial recognition, or apps to avoid human interactions and touchscreens post-COVID-19. These technologies, of course, rely on scaled AI.

Businesses need to understand the impact that AI can have on the bottom line and how it can support their recovery strategy as they bounce back from the impacts of COVID-19. Adding AI to business processes speeds up decision making and creates the essential companion for symbiotic operations. When used correctly, the true value of AI is that people can delegate not just processes, but also decision making, and this has a significant impact on efficiency and productivity. But, as many organizations have already discovered, implementing AI at scale is a challenging journey.

Data quality, skills gaps and ethics are all vital considerations to building an AI-powered enterprise. Our recent research also found that seven in ten organizations find a lack of mid to senior-level talent a major challenge for scaling AI. Less than one third of struggling AI organizations feel they have a detailed knowledge of how and why their AI systems produce the output they do. Moreover, nine out of ten organizations believe that ethical issues have resulted from the use of AI systems over the last 2-3 years.

To overcome these barriers and harness the power of AI, there are four principles essential to successful implementation which organizations must consider:

Strategize: While the promise of AI might make IT leaders excited to delve straight into implementation, strategy is key. It’s important to think beyond just the short-term goals of implementing AI and consider what the potential goals are for the next 3-5 years. Laying the necessary foundations before commencing wide-scale deployment is also essential. AI needs access to vast amounts of quality proprietary and third-party data which has to be stored and managed properly; deciding how to do so is a fundamental building block to effective AI implementation.

However, legacy IT systems can delay the collection, analysis and understanding of data. To resolve this, data needs to be managed as a strategic asset in an organization. Establishing data governance to design, set up, scale, and continuously monitor the data in a firm has clear benefits in supporting the scaling of AI use cases. IT estate modernization also addresses the challenges of fragmented and legacy IT systems and provides faster access to information within a secure environment.

Ethical considerations must also be woven into an organization’s strategy from the outset. Customer and employee privacy in particular is a prerequisite. The mindset among IT leaders must be one of transparency, accountability and fairness, building AI systems with ethics-by-design in mind. To do this, the right governance structures must be put in place, and diverse teams must be built to prevent AI bias, with the aim of empowering people in the knowledge that they are interacting with AI, and that the AI systems themselves are trustworthy.

Operationalize: By creating a tiered responsibility system, organizations can ensure that AI implementation is pushed forward at a steady pace. It’s recommended to have a central team for policy and strategy, and a center of excellence (CoE) for optimizing resources, embedding ethics and facilitating ideas, helping to weave AI initiatives into the enterprise’s wider business goals. After all, AI initiatives are not scaled in silos. They impact multiple business units, so wider involvement makes sense if the aim is to have buy-in across the business.

Nurture: AI requires a new host of skill sets within an organization, from data architects to designers to data scientists. To keep implementation initiatives on track for the long haul, business leaders must also consider a range of business and change management roles including data strategists, AI ethicists and process and automation engineers.

While data literacy is important, so are the soft skills to communicate the importance of the new technology to an organization and make sure the whole business is on board. Training and upskilling are key here. Given the complexity of achieving scaled AI, many organizations choose to work with service providers to address the challenges of this structural and cultural transformation. This can help to alleviate the workload for internal teams while also bringing fresh perspectives, knowledge, and guidance to AI initiatives.

Monitor: AI models cannot be left to run without intervention. Variations in the nature of data and new information can change outcomes, leading to mistakes and vulnerabilities in AI algorithms. In response, organizations should systematically rate AI models based on the likelihood of them making mistakes and determine appropriate action plans, such as the frequency of monitoring or updating, or which modelling technique to implement.
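One way to picture such a rating scheme is the short sketch below, which maps an estimated likelihood of error to a risk tier and a review interval. The tier names, thresholds, and intervals are hypothetical values chosen purely for illustration; a real scheme would be set by the organization's own governance process.

```python
from dataclasses import dataclass

# Hypothetical tiers: (minimum error likelihood, tier name, days between reviews).
TIERS = [
    (0.20, "high", 1),
    (0.05, "medium", 7),
    (0.00, "low", 30),
]


@dataclass(frozen=True)
class MonitoringPlan:
    model_name: str
    tier: str
    review_every_days: int


def plan_for(model_name: str, error_likelihood: float) -> MonitoringPlan:
    """Map an estimated error likelihood (0.0 to 1.0) to a review schedule."""
    if not 0.0 <= error_likelihood <= 1.0:
        raise ValueError("error_likelihood must be between 0.0 and 1.0")
    # Tiers are ordered from highest to lowest threshold, so the first match wins.
    for minimum, tier, days in TIERS:
        if error_likelihood >= minimum:
            return MonitoringPlan(model_name, tier, days)
    raise AssertionError("unreachable: the last tier starts at 0.0")
```

For example, `plan_for("churn-model", 0.3)` would place that (hypothetical) model in the "high" tier with a daily review, while a model with a 1% estimated error likelihood would be reviewed monthly.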

Once these four principles have been established, businesses must create a collaborative digital workplace that’s fit to embrace AI at scale. This means eliminating information silos and enabling self-organizing teams for better collaboration and faster decision making. Combining AI with agile cognitive systems makes business processes more automated and intelligent, driving both decision-making and execution forwards to boost performance.

COVID-19 has put the onus on organizations to embrace AI faster than before. Adopting AI is a complex journey, but the benefits are transformational in both the short and the long term. No matter where organizations are on their journey, they must invest now to build resilience and agility, so that their future can be AI-enabled.

It’s no understatement to say businesses are currently navigating one of the most difficult and extended periods of uncertainty in recent history. Coronavirus has turned the world of work upside-down, with many businesses committing to become fully or partially remote for the foreseeable future.

By Nigel Seddon, VP Northern Europe, Ivanti.