Many successful individuals are proud to explain just how clever they have been in spotting a market opportunity, or several, building a business and making large amounts of money. Rather fewer recognise the substantial part that luck plays in the process. Every news programme I watch seems to include an interview with a café owner, who recently opened up in a strategic location to catch the commuter and/or work traffic which came past the front door, and who now faces a grim future. OK, so maybe opening a café isn’t the fast track to success and riches (although I’m guessing even the massive coffee shop chains started small somewhere, at some point), but the business plan which relied on a certain number of customers has been blown to shreds by the coronavirus. And so it is across many industry sectors. Yes, there have been some upsides as well, but, sadly, rather more downsides.

Our own industry – events – has taken a major pasting. Everyone has moved into the world of virtual events, with varying degrees of success. We’ve been lucky in so far as we’ve had the option to adapt our business. Back to our café owners, and it’s not so obvious how they can adapt to the complete absence of customers.

And this is the problem that faces organisations and governments across the globe. How do you re-start an economy, when people’s behaviours have changed so dramatically and, potentially, so permanently?

As I’ve written previously, digital transformation was already impacting the world of work and leisure. And, with AI just around the corner, further major disruption was on the horizon. But all this change, which might have been managed over a number of years, seems to have been accelerated and shoe-horned into a matter of weeks. And, no matter how smart a business person you might be, the consequences are proving devastating for many.

Integrated, intelligent solutions are required, whereby individuals, the organisations for which they work, and the governments which make many of the business rules, all work together to understand what is going on, what changes are likely to be permanent and which ones might only be temporary. Once this debate has occurred, decisions can be made and the current catastrophic business conditions for many can be used as a catalyst for some positive changes.

Homeworking or, more accurately, hybrid working, is just one example of this. Our city centre café owner might never see customer numbers return to pre-Covid levels, but relocating the business to a smaller town might still offer the chance to sell food and drinks. That is where people who are unable or unwilling to work from home, but who don’t want to commute many miles to a large city centre, are attending office hub locations.

Flexibility and agility are key building blocks for digital transformation and, post-lockdown, they have only increased in importance.

New report from PFU (EMEA) Limited highlights the challenges organisations face on their digital transformation journeys.

PFU (EMEA) Limited has unveiled the findings of the Fujitsu Image Scanner Organisational Intelligence Survey 2020, a landmark research project into the state of digital transformation in Europe. The study, which examines the level to which organisations are forging a path to digital transformation projects, shows that 35% do not yet have a clear plan in place, hampering their ability to operate in increasingly competitive environments. The research also finds:

Despite many not having a clear path to digital transformation, 86% say that managing the amount of information in their business poses a challenge. Coupled with almost a quarter citing inaccurate decision-making and lost documents as a result of the information overload, the study indicates a disconnect between the problems organisations want to overcome and the path to achieving success.

A promising sign is that organisations are now recognising that they need to do more to better manage their information as part of their digital transformation journeys, with 80% turning to external experts to help them do so. This creates clear opportunities for resellers who provide information capture solutions that will enable organisations to ingest data and use it to make intelligent decisions that will allow them to remain competitive, especially as 61% believe the paperless office is an impossible dream.

Spanning 1,000 IT and business decision makers across France, Germany, Italy, Spain and the UK, the research also suggests that of those initiating digital transformation projects, the majority have a clear goal in mind. Over half (51%) said their goal for driving technology innovation was to accelerate business growth or remain competitive in a disruptive environment. However, many are facing internal and external barriers to delivering successful projects, including security risks (34%) and regulatory compliance (24%).

“Viewing the organisation as a single interconnected system of knowledge flows that can complement each other, opens up opportunities for a business to gain maximum value from the information it has access to and increase organisational intelligence,” said John Mancini, past President of AIIM. “It’s reassuring to see that, despite the challenges businesses face, many are actively navigating their digital transformation journey to unlock their full potential.”

“Digital transformation is no longer a ‘nice to have’ for organisations, it’s a business imperative as more accelerate their technology investments to be able to thrive in today’s highly-dynamic workplace,” said Mike Nelson, Senior Vice President, PFU (EMEA) Limited. “However, without the capacity to gain valuable insight from the information they hold, organisations will not unlock the full capabilities that digital transformation can enable. Through our Organisational Intelligence report, we hope to highlight where the pain points still exist and support organisations in building a successful path to transformation projects that drive growth.”

Twilio has published the results of a global survey measuring the impact and outlook of the COVID-19 pandemic on businesses’ digital engagement strategies.

Twilio powers communications technology for organisations across a range of sectors, giving it first-hand insight into the ways in which the pandemic has impacted customer and business communications. To better understand this impact, Twilio surveyed over 2,500 enterprise decision makers globally to gauge the effect on their company’s digital transformation and communication roadmap. The COVID-19 Digital Engagement Report is a snapshot of how businesses have addressed the complex challenges posed by this crisis and how they will continue to evolve moving forward.

“Over the last few months, we’ve seen years-long digital transformation roadmaps compressed into days and weeks in order to adapt to the new normal as a result of COVID-19. Our customers in nearly every industry have had to identify new ways to communicate with their customers and stakeholders – from patients, to students, to shoppers, and even employees – essentially overnight,” said Glenn Weinstein, Chief Customer Officer at Twilio. “Cloud scale, speed, and agility are enabling organizations to innovate faster than ever. We believe the solutions being built today will be the standard for digital engagement in the future.”

Key findings of the COVID-19 Digital Engagement Report include:

Pandemic accelerates business continuity investments in cloud migration, productivity tools

94 percent of IT leaders say IT automation is a priority going forward.

A new global study from LogicMonitor examines how IT departments are evolving in a time of crisis to maintain business continuity and best meet the needs of their customers. LogicMonitor, the leading cloud-based provider of IT infrastructure monitoring, intelligence and observability, has released its Evolution of IT report, which is the result of a survey of 500 IT decision makers from across North America, the United Kingdom, Australia and New Zealand during the COVID-19 pandemic. The report reveals a number of important trends, and identifies several IT challenges faced as countries and enterprises shut down physical offices and move operations online.

For example, the study found that 84 percent of global IT leaders are responsible for ensuring their customers’ digital experience, but nearly two-thirds (61 percent) do not have high confidence in their ability to do so. LogicMonitor’s research found that more than half (54 percent) of IT leaders experienced initial IT disruptions or outages with their existing software, productivity, or collaboration tools as a result of shifting to remote work in the first half of 2020. Within the Education sector, nearly a quarter (24 percent) of IT professionals stated that their employer did not have a business continuity plan in place to deal with the current crisis.

Overall, 70 percent of IT professionals are finding it challenging to adapt to their new responsibilities of supporting a remote workforce. Respondents report significant concerns relating to security and stability; specific challenges experienced include the struggle to deal with outages remotely, and the network strain from the increase in remote employees using IT systems. These concerns represent a serious threat to the ability to deliver seamless digital experiences that consumers increasingly demand.

“Maintaining business continuity is both more difficult and more important than ever in the era of COVID-19,” said Kevin McGibben, CEO and president of LogicMonitor. “IT teams are being asked to do whatever it takes – from accelerating digital transformation plans to expanding cloud services – to keep people connected and businesses running as many offices and storefronts pause in-person operations. Our research confirms that the time is now for modern enterprises to build automation into their IT systems and shift workloads to the cloud to safeguard IT resiliency.”

IT teams lack confidence in their infrastructure’s resilience

Business continuity plans are integral to companies’ ability to withstand an unanticipated crisis. While LogicMonitor’s new study found that 86 percent of companies had a business continuity plan in place prior to COVID-19, 12 percent of respondents have minimal or no confidence at all in their organisation’s plan to withstand an unanticipated crisis. Only 35 percent of respondents feel very confident in their plan.

IT decision makers also expressed overall reservations about their IT infrastructure’s resilience in the face of a crisis. Globally, only 36 percent of IT decision makers feel that their infrastructure is very prepared to withstand a crisis. And while a majority of respondents (53 percent) are at least somewhat prepared to take on an unexpected IT emergency, 11 percent feel they are minimally prepared or believe their infrastructure will collapse under pressure.

Learning from this crisis, IT decision makers report they’re investing in productivity tools and expanding the use of cloud-based solutions and platforms to maintain business continuity and serve customers during the global pandemic and into the future.

Overall, 35 percent of organisations are investing additional funds in IT infrastructure monitoring, and 23 percent are investing in artificial intelligence and machine learning as ways to better cope with company-wide remote work policies.

COVID-19 is dramatically accelerating cloud adoption

The survey identified that 91 percent of respondents are working remotely and a full 78 percent said their entire company is working remotely. Indeed, 87 percent of IT leaders report COVID-19 is driving the need to work from home, which in turn is accelerating their migration to the cloud.

Prior to COVID-19, IT professionals said 65 percent of their workload was in the cloud. However, just six months later, that number increased to 78 percent. With this in mind, 74 percent think it will take five years or less for more than 95 percent of all workloads to run in public, private, and hybrid cloud environments.

While cloud migrations and usage soar, on-premises IT workloads are experiencing a substantial decline, due perhaps in part to the global pandemic. Pre-COVID-19, 35 percent of workloads were housed on-premises. Now, IT professionals expect on-premises workloads to decrease to 22 percent by 2025.

IT leaders are embracing automation

The benefits of IT automation have become increasingly clear in the first half of 2020: 50 percent of IT leaders who have a “great deal of automation” within their IT department also say they’re very confident in their ability to maintain continuous uptime and availability during a crisis.

While the vast majority of IT decision makers (88 percent) say there has been a greater focus on automation in their department over the past three years, an even greater majority, 94 percent, say they expect this focus on automation to increase in the coming three years.

In more normal times, IT leaders see automation as a business enabler that allows them to operate more efficiently and focus on innovating rather than keeping the lights on. 74 percent of IT leaders say they employ intelligent systems like artificial intelligence and machine learning to provide insight into the performance of their IT infrastructure. And 93 percent of IT leaders say automation is worthwhile because it allows IT leaders and their teams to focus on more strategic tasks and initiatives.

However, although some IT professionals fear job loss due to automation, others view it as a saving grace when faced with the spectre of pandemic-related layoffs or budget cuts. Nearly three quarters (72 percent) of IT leaders believe that the automation of IT tasks would enable their department to operate effectively in the case of staff reduction.

Predicted rise in spending on technology as decision makers focus on digital collaboration tools.

Over half of IT leaders (55%) say their current IT systems are not fully equipped to handle the new post-pandemic requirements, according to a survey of enterprise professionals conducted by 451 Research and commissioned by Smartsheet. As a result, many decision makers expect technology spending to increase across the board over the next six months, with top areas of focus being team collaboration tools, digital workspace, content storage and sharing tools.

Digital transformation has been top of mind for many IT leaders but the pandemic is pushing many of these leaders to jumpstart their efforts by re-evaluating the tools they currently use to meet the needs of a distributed workforce. This “new work norm” is revealing the struggles many companies are facing due to technologies and apps that do not seamlessly integrate, or the use of a platform that requires IT support in order to run effectively.

“As the COVID-19 crisis continues and the shift to remote work lasts longer than many leaders anticipated, executives are finding that they need better technology to fuel employee productivity,” said Gene Farrell, Chief Product Officer of Smartsheet. “As IT leaders think of how to remedy this in the long-term, many will invest in no-code collaboration tools that integrate with other widely used solutions to provide their employees with a single platform to get work done.”

The survey also looked to identify some of the blockers that IT leaders feel are impeding workforce productivity and uncovered the following:

“Whether it's in no-code workflow automation, easier synthesis of data across different business systems by nontechnical users, new and more flexible digital workspace canvases, or other capabilities, one of the key consequences of the pandemic will be more empowerment at the edge of the workforce,” said Chris Marsh, a research director at 451 Research. “As businesses adjust to the impacts of the pandemic, having more employees more empowered than they traditionally have been to create increased agility across the long tail of their workforce processes will be critical to getting on the front foot.”

43% of IT decision makers feel their relationship with business leaders has improved since the start of the pandemic.

New research from hybrid IT services provider, Ensono, has found that the relationship between IT decision makers and business leaders has improved since the start of the pandemic. 43% of the 153 IT decision makers studied across the US and UK revealed that IT now commands more respect from the business.

With this increased respect, businesses have also grown hungrier for innovation, and consequently 1 in 3 (32%) IT decision makers are seeing IT budgets increase. 33% have been given more scope to define IT spend since the beginning of the coronavirus pandemic, versus just 5% who feel they have been given less scope to define IT spend.

Along with an uptick in funding, more organizations are looking to digitally transform due to pressures from COVID-19. In fact, 56% of IT decision makers have witnessed a greater urgency for digital transformation over the next few years. And almost 1 in 4 organizations surveyed have been forced to begin digitally transforming now.

When asked whether the coronavirus has changed their business’ view of IT, 38% of respondents confirmed the pandemic has helped improve understanding of IT. 30% of IT decision makers now have more control over business decisions, versus just 4% who stated they have less control now. Only 10% felt the pandemic had not changed their relationship in any way with the business.

Paola Doebel, Senior VP, Managing Director of North America, Ensono said: “As businesses have had to quickly adapt to the new working realities brought on by the COVID-19 pandemic, IT departments too have had to adjust their priorities to keep pace with the speed of change created by COVID. The IT department has been trusted to act fast and deliver so businesses can continue to move forward and safely address the needs of their team members and customers.”

Despite the major disruption the pandemic brought to businesses, both in terms of working from home due to social distancing and increases in digital service demand, the IT department has been able to prove its value by keeping businesses running: 1 in 4 faced no issues with downtime, versus just 2% that recorded between 24 and 48 hours of downtime.

The research also found that 1 in 5 (20%) have found their scope of work has increased to include areas outside of IT, versus 4% who feel they have been relegated to IT and ignored by the rest of the business.

One respondent stated: “While some plans were put on hold, for example those that focused on reducing technical debt, others have been expedited. Those transformation projects include supporting innovations that lead to new revenue streams.”

Barney Taylor, Managing Director, Europe, Ensono said: “It’s important that digital transformation happening now and into the future continues to deliver to the business. CIOs need to ensure they are not innovating in a vacuum and are taking the necessary steps to ensure they are delivering not only to the business and shareholders, but ultimately to their end users.”

Apptio has revealed survey data detailing significant cuts to IT budgets and shifting business priorities in the wake of the COVID-19 pandemic and subsequent economic fallout. Across all sectors, companies have had to re-plan budgets, while some sectors, including healthcare and financial services, are seeing even more remarkable shifts.

Apptio’s data shows that:

“Leaders are facing some of the most difficult decisions of their careers. We are seeing organizations from all industries impacted, some harder than others. In all cases, these organizations have had to look at how technology will enable them to come out of this disruption stronger than when they went in,” said Jarod Greene, GM of the Technology Business Management Council. “But they have to balance the need to manage costs and accelerate innovation, particularly in an environment where cash is king and plans can change on a daily basis. With financial management software, organizations can automate these processes, surface insights they would not have otherwise found in their data and make collaborative, informed decisions that take into account the business impact of their choices.”

Worldwide 5G network infrastructure market revenue will almost double in 2020 to reach $8.1 billion, according to the latest forecast by Gartner, Inc.

Total wireless infrastructure revenue is expected to decline 4.4% to $38.1 billion in 2020. Investment by communications service providers (CSPs) in 5G network infrastructure accounted for 10.4% of total wireless infrastructure revenue in 2019. This figure will reach 21.3% in 2020 (see Table 1).

“Investment in wireless infrastructure continues to gain momentum, as a growing number of CSPs are prioritizing 5G projects by reusing current assets including radio spectrum bandwidths, base stations, core network and transport network, and transitioning LTE/4G spend to maintenance mode,” said Kosei Takiishi, senior research director at Gartner. “Early 5G adopters are driving greater competition among CSPs. In addition, governments and regulators are fostering mobile network development and betting that it will be a catalyst and multiplier for widespread economic growth across many industries.”

Rising competition among CSPs is causing the pace of 5G adoption to accelerate. The new O-RAN (open radio access network) and vRAN (virtualized RAN) ecosystems could disrupt current vendor lock-in and promote 5G adoption by providing cost-efficient and agile 5G products in the future. Gartner predicts that CSPs in Greater China (China, Taiwan and Hong Kong), mature Asia/Pacific, North America and Japan will reach 5G coverage across 95% of national populations by 2023.

Table 1: Wireless Infrastructure Spending Forecast, Worldwide, 2019-2020 (Millions of U.S. Dollars)

| Segment | 2019 | 2019 Growth (%) | 2020 | 2020 Growth (%) |
| --- | --- | --- | --- | --- |
| 5G | 4,146.6 | 576.6 | 8,127.3 | 96.0 |
| LTE and 4G | 20,693.2 | 1.2 | 16,402.0 | -20.8 |
| 3G | 4,146.6 | -25.7 | 2,608.4 | -37.1 |
| 2G | 797.4 | -46.9 | 472.2 | -40.8 |
| Small Cells | 5,342.7 | 11.6 | 5,736.6 | 7.4 |
| Mobile Core | 4,744.7 | 3.2 | 4,780.3 | 0.3 |
| Total | 39,871.2 | 6.2 | 38,126.7 | -4.4 |

Due to rounding, some figures may not add up precisely to the totals shown.

Source: Gartner (July 2020)
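
As a rough cross-check of the percentages quoted above, the following minimal Python sketch recomputes the 5G growth rate and the 5G share of total wireless infrastructure revenue from the Table 1 figures. The helper names (`pct_change`, `share`) are illustrative assumptions and are not part of the Gartner forecast.

```python
# Figures copied from Table 1 (millions of U.S. dollars): (2019, 2020).
spend = {
    "5G": (4146.6, 8127.3),
    "Total": (39871.2, 38126.7),
}


def pct_change(old, new):
    """Year-over-year change, in percent (hypothetical helper)."""
    return (new - old) / old * 100


def share(part, whole):
    """Part as a percentage of the whole (hypothetical helper)."""
    return part / whole * 100


g5_2019, g5_2020 = spend["5G"]
total_2019, total_2020 = spend["Total"]

growth_5g = pct_change(g5_2019, g5_2020)
growth_total = pct_change(total_2019, total_2020)
share_2019 = share(g5_2019, total_2019)
share_2020 = share(g5_2020, total_2020)

print(f"5G growth 2020:      {growth_5g:.1f}%")
print(f"Total growth 2020:   {growth_total:.1f}%")
print(f"5G share of total:   {share_2019:.1f}% (2019), {share_2020:.1f}% (2020)")
```

With the table values as given, the results should agree, after rounding to one decimal place, with the 96.0% growth, the 4.4% decline and the 10.4% and 21.3% shares cited in the text.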

“Despite investment growth rates in 5G being slightly lower in 2020 due to the COVID-19 crisis (excluding Greater China and Japan), CSPs in all regions are quickly pivoting new and discretionary spend to build out the 5G network and 5G as a platform,” said Mr. Takiishi.

Over the short-term, Greater China leads the world in 5G development, with 49.4% of worldwide investment in 2020 attributed to the region. Cost effective infrastructure manufactured in China coupled with state sponsorship and reduced regulatory barriers is paving the way for major CSPs in China to quickly build 5G coverage. “However, other early adopting and technologically adept nations are not far behind,” said Mr. Takiishi.

Gartner expects that 5G investment will rebound modestly in 2021 as CSPs seek to capitalize on changed behaviors sparked by populations’ elevated reliance on communication networks. 5G investment will exceed LTE/4G in 2022.

CSPs will gradually add stand-alone (SA) capabilities to their non-stand-alone (NSA) 5G networks, and Gartner predicts by 2023, 15% of CSPs worldwide will operate stand-alone 5G networks that do not rely on 4G network infrastructure. This will rapidly divert wireless investment away from LTE/4G and spending on legacy RAN infrastructure will rapidly decline.

Significant growth in IaaS Public Cloud Services

The worldwide infrastructure as a service (IaaS) market grew 37.3% in 2019 to total $44.5 billion, up from $32.4 billion in 2018, according to Gartner, Inc. Amazon retained the No. 1 position in the IaaS market in 2019, followed by Microsoft, Alibaba, Google and Tencent.

“Cloud underpins the push to digital business, which remains at the top of CIOs’ agendas,” said Sid Nag, research vice president at Gartner. “It enables technologies such as the edge, AI, machine learning and 5G, among others. At the end of the day, each of these technologies require a scalable, elastic and high-capacity infrastructure platform like public cloud IaaS, which is why the market witnessed strong growth.”

In 2019, the top five IaaS providers accounted for 80% of the market, up from 77% in 2018. Three-quarters of all IaaS providers exhibited growth in 2018.

Amazon continued to lead the worldwide IaaS market with an estimated $20 billion of revenue in 2019 and 45% of the total market (see Table 1). Amazon leveraged its No.1 spot in 2018 to build out its capabilities beyond the IaaS layer in the cloud stack and maintain its top position in 2019.

Table 1. Worldwide IaaS Public Cloud Services Market Share, 2018-2019 (Millions of U.S. Dollars)

| Company | 2019 Revenue | 2019 Market Share (%) | 2018 Revenue | 2018 Market Share (%) | 2018-2019 Growth (%) |
| --- | --- | --- | --- | --- | --- |
| Amazon | 19,990.4 | 45.0 | 15,495.0 | 47.9 | 29.0 |
| Microsoft | 7,949.6 | 17.9 | 5,037.8 | 15.6 | 57.8 |
| Alibaba | 4,060.0 | 9.1 | 2,499.3 | 7.7 | 62.4 |
| Google | 2,365.5 | 5.3 | 1,313.8 | 4.1 | 80.1 |
| Tencent | 1,232.9 | 2.8 | 611.8 | 1.9 | 101.5 |
| Others | 8,858 | 19.9 | 7,425 | 22.9 | 19.3 |
| Total | 44,456.6 | 100.0 | 32,382.2 | 100.0 | 37.3 |

Source: Gartner (August 2020)
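
To illustrate how the concentration figures follow from the table, the short Python sketch below sums the top five providers' revenue and expresses it as a share of the total for each year. The variable and function names are hypothetical, and the row with revenue of 2,365.5 is taken to be Google on the basis of the Google revenue and growth figures quoted in the text.

```python
# Revenue from the IaaS table (millions of U.S. dollars): (2019, 2018).
# The "Google" label is an assumption inferred from the surrounding text.
revenue = {
    "Amazon": (19990.4, 15495.0),
    "Microsoft": (7949.6, 5037.8),
    "Alibaba": (4060.0, 2499.3),
    "Google": (2365.5, 1313.8),
    "Tencent": (1232.9, 611.8),
}
total_2019, total_2018 = 44456.6, 32382.2


def top_five_share(year_index, total):
    """Combined share of the five named providers, in percent."""
    combined = sum(values[year_index] for values in revenue.values())
    return combined / total * 100


share_2019 = top_five_share(0, total_2019)
share_2018 = top_five_share(1, total_2018)
amazon_share_2019 = revenue["Amazon"][0] / total_2019 * 100

print(f"Top five share 2019: {share_2019:.0f}%")
print(f"Top five share 2018: {share_2018:.0f}%")
print(f"Amazon share 2019:   {amazon_share_2019:.1f}%")
```

Rounded to whole percentages, the combined shares should match the 80% and 77% quoted for 2019 and 2018.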

Microsoft remained in the No. 2 position in the IaaS market with more than half of its nearly $8 billion in revenue coming from North America. Microsoft’s IaaS offering grew 57.8% in 2019, as the company leveraged its sales reach and ability to co-sell its Azure offerings with other Microsoft products and services in order to drive adoption.

The dominant IaaS provider in China, Alibaba Cloud, grew 62.4% in 2019 with revenue surpassing $4 billion, up from $2.5 billion in 2018. Alibaba Group will continue to expand its cloud infrastructure business in the coming years and aim to offer cloud-based intelligent solutions to its customers to support their digital transformation process.

China-based Tencent grew its IaaS offering by over 100% in 2019. It is the second largest provider of cloud services in China, after Alibaba. “As the cloud market matures, and its leaders experience natural market share erosion as a result, China-based providers such as Alibaba, Tencent and Huawei will start to gain more traction. It will also be hard for other providers, such as the North America based cloud providers, to enter the China market given the country’s highly regulated market,” said Mr. Nag.

Google’s IaaS revenue grew from $1.3 billion in 2018 to $2.4 billion in 2019, experiencing 80.1% growth. Google’s cloud services focused on providing organizations with industry specific solutions on robust computing infrastructure. North America accounts for nearly half of Google’s IaaS revenue.

Moving forward, Gartner will be combining the IaaS and platform as a service (PaaS) segments into a single, complementary platform offering, cloud infrastructure and platform services (CIPS). The worldwide CIPS market grew 42.3% in 2019 to total $63.4 billion, up from $44.6 billion in 2018. Amazon, Microsoft and Alibaba secured the top three positions in the CIPS market in 2019, while Tencent and Oracle were in a virtual tie for the No. 5 position with 2.8% of the market each.

“There will be a continued push of cloud spending as an outcome of the coronavirus pandemic,” said Mr. Nag. “When enterprises were compelled to move their applications to the public cloud as a result of the pandemic, they realized the true benefits of public cloud and it is unlikely that they will change course. In the recovery and rebound phase, CIOs are recognizing that they don’t need to bring workloads back on premises, which will further increase cloud spending and drive new applications around cloud-hosted collaboration that incorporate emerging technologies such as virtual reality and immersive video experiences.”

Worldwide Public Cloud revenue to grow 6.3% in 2020

The worldwide public cloud services market is forecast to grow 6.3% in 2020 to total $257.9 billion, up from $242.7 billion in 2019, according to Gartner, Inc.

Desktop as a service (DaaS) is expected to have the most significant growth in 2020, increasing 95.4% to $1.2 billion. DaaS offers an inexpensive option for enterprises that are supporting the surge of remote workers and their need to securely access enterprise applications from multiple devices and locations.

“When the COVID-19 pandemic hit, there were a few initial hiccups but cloud ultimately delivered exactly what it was supposed to,” said Sid Nag, research vice president at Gartner. “It responded to increased demand and catered to customers’ preference of elastic, pay-as-you-go consumption models.”

Software as a service (SaaS) remains the largest market segment and is forecast to grow to $104.7 billion in 2020 (see Table 1). The continued shift from on-premises license software to subscription-based SaaS models, in conjunction with the increased need for new software collaboration tools during COVID-19, is driving SaaS growth. The second-largest market segment is cloud system infrastructure services, or infrastructure as a service (IaaS), which is forecast to grow 13.4% to $50.4 billion in 2020. The effects of the global economic downturn are intensifying organizations’ urgency to move off of legacy infrastructure operating models.

Table 1. Worldwide Public Cloud Service Revenue Forecast (Millions of U.S. Dollars)

| Segment | 2019 | 2020 | 2021 | 2022 |
| --- | --- | --- | --- | --- |
| Cloud Business Process Services (BPaaS) | 45,212 | 43,438 | 46,287 | 49,509 |
| Cloud Application Infrastructure Services (PaaS) | 37,512 | 43,498 | 57,337 | 72,022 |
| Cloud Application Services (SaaS) | 102,064 | 104,672 | 120,990 | 140,629 |
| Cloud Management and Security Services | 12,836 | 14,663 | 16,089 | 18,387 |
| Cloud System Infrastructure Services (IaaS) | 44,457 | 50,393 | 64,294 | 80,980 |
| Desktop as a Service (DaaS) | 616 | 1,203 | 1,951 | 2,535 |
| Total Market | 242,697 | 257,867 | 306,948 | 364,062 |

BPaaS = business process as a service; IaaS = infrastructure as a service; PaaS = platform as a service; SaaS = software as a service

Note: Totals may not add up due to rounding.

Source: Gartner (July 2020)
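
For readers who want to see how the segments compare, the sketch below derives 2019 to 2020 growth for each row of the forecast table and ranks the segments. It is only an illustration under the assumption that the rounded table values are used as inputs; the dictionary and variable names are hypothetical. Because of that rounding, the DaaS result comes out at roughly 95.3%, marginally below the 95.4% quoted, which was presumably calculated from unrounded figures.

```python
# Forecast table values (millions of U.S. dollars): (2019, 2020).
forecast = {
    "BPaaS": (45212, 43438),
    "PaaS": (37512, 43498),
    "SaaS": (102064, 104672),
    "Management and Security": (12836, 14663),
    "IaaS": (44457, 50393),
    "DaaS": (616, 1203),
    "Total Market": (242697, 257867),
}

# Growth from 2019 to 2020 for every segment, in percent.
growth_2020 = {
    segment: (y2020 - y2019) / y2019 * 100
    for segment, (y2019, y2020) in forecast.items()
}

# Rank segments from fastest growing to slowest.
ranked = sorted(growth_2020.items(), key=lambda item: item[1], reverse=True)
for segment, growth in ranked:
    print(f"{segment:<26}{growth:6.1f}%")
```

On these inputs, DaaS ranks first and BPaaS is the only segment forecast to shrink in 2020.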

Public cloud services serve as the one bright spot in the outlook for IT spending in 2020. Cloud spending in many regions is expected to grow rapidly as economies reopen and more normal economic activity resumes, with regions such as North America expecting to return to higher spending levels as early as 2022.

“The use of public cloud services offer CIOs two distinct advantages during the COVID-19 pandemic: cost scale with use and deferred spending,” said Mr. Nag. “CIOs can invest significantly less cash upfront by utilizing cloud technology rather than scaling up on-premises data center capacity or acquiring traditional licensed software.”

“Any debate around the utility of public cloud has been put aside since the onset of COVID-19. For the remainder of 2020, organizations that expand remote work functionality will prioritize collaboration software, mobile device management, distance learning educational solutions and security, as well as the infrastructure to scale to support increased capacity.”

The top 5 enterprise applications vendors in 2019 were SAP (7.7% revenue share), Oracle (5.1% share), Salesforce (5.0% share), Intuit (3.0% share), and Microsoft (2.1% share).

As businesses undergo digital transformation to meet the challenges of the digital economy, modern software with its properties of automation, connectivity, and visibility has become critical to achieving competitive advantage. Enterprise applications are the engine of the business, providing the data, intelligence, and computational tools necessary to function in the digital economy and every line of business within an organization depends on multiple software applications to function.

"Digital transformation initiatives are bringing impactful changes to organizations such as the ability to work anywhere and anytime, identifying new insights because of cognitive and predictive processes, and reshaping the enterprise experience using modern and cloud-based enterprise applications," said Mickey North Rizza, program vice president, Enterprise Applications and Digital Commerce at IDC, "Enterprise applications are the foundation of business processes, employee engagement, and customer experience."

Several trends currently impacting the enterprise applications market include:

The enterprise applications market consists of the following secondary markets: enterprise resource management, customer relationship management, engineering applications, supply chain applications, and production applications. Each of these secondary markets consists of multiple functional markets.

IDC's software market sizing and forecasts are presented in terms of commercial software revenue. The term commercial software is used to distinguish commercially available software from custom software. Commercial software revenue typically includes fees for initial and continued right-to-use commercial software licenses. These fees may include, as part of the license contract, access to product support and/or other services that are inseparable from the right-to-use license fee structure, or this support may be priced separately. Upgrades may be included in the continuing right of use or may be priced separately. Commercial software revenue excludes service revenue derived from training, consulting, and systems integration that is separate (or unbundled) from the right-to-use license but does include the implicit value of software included in a service that offers software functionality by a different pricing scheme.

Growth in worldwide and European* AR/VR spend will decline in 2020 compared to the pre-COVID-19 forecast scenario according to the June release of the International Data Corporation (IDC) Worldwide Augmented Reality (AR) and Virtual Reality (VR) Spending Guide. Marked reductions in IT spend and an economic downturn due to the pandemic will slow worldwide AR/VR spend to $10.7 billion — a tempered 35.3% growth from the $7.9 billion spent in 2019. But the long-term outlook remains strongly positive — IDC estimates a five-year compound annual growth rate (CAGR) in AR/VR spending of 76.9% worldwide in 2019–2024 to reach $136.9 billion by 2024.
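The growth figures above can be sanity-checked with the standard compound growth formula. The sketch below is illustrative only: the function names are invented for this example, and because it works from the rounded dollar figures quoted in the text, its results may differ from IDC's published percentages in the last decimal place.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.769 means 76.9%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text, in billions of US dollars.
spend_2019 = 7.9
spend_2020 = 10.7
spend_2024 = 136.9

growth_2020 = spend_2020 / spend_2019 - 1
implied_cagr = cagr(spend_2019, spend_2024, years=5)
projected_2024 = project(spend_2019, 0.769, years=5)

print(f"2020 growth from rounded figures: {growth_2020:.1%}")
print(f"Implied 2019-2024 CAGR: {implied_cagr:.1%}")
print(f"2024 spend at a 76.9% CAGR: ${projected_2024:.1f}bn")
```

The same two functions can be reused to check any of the other CAGR figures in this report, provided both a start and an end value are given.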

"The latest release of the AR/VR Spending Guide was adjusted for the impact of COVID-19," said Marcus Torchia, research director, IDC Customer Insights & Analysis. "Supply chain disruptions, store closures, and delayed enterprise implementations cast shadows on the short-term outlook for the coming quarters into 2021. However, the longer-term growth opportunities for AR/VR may emerge even stronger. Remote working requirements, contactless business processes, augmented meeting places, and virtual social togetherness portend an updraft in expected demand for the enabling of AR and VR technologies."

Europe accounts for roughly 15% of worldwide AR/VR spend, with European spending forecast to be $1.6 billion in 2020. Europe is among the regions hardest hit by the pandemic, showing a 58 percentage point reduction in 2020 AR/VR spending compared to the pre-COVID scenario, against a 43 percentage point worldwide average decline.

"The pandemic and containment measures are heavily impacting the European economy, with GDP expected to decrease 8% in 2020, before bouncing back in 2021," said Giulia Carosella, senior research analyst, IDC European Customer Insights & Analysis. "In verticals that have been highly impacted, such as retail, the strong focus on cash preservation has led to the pushing back of some large AR/VR deployments until the return to growth. But elsewhere the shift in priorities, with a greater focus on ROI optimization and productivity and efficiency gains, will continue to ramp up interest in AR and VR led by the 'remote everything' digital trajectory."

The impact of the pandemic and related spending reduction varies across industries. Commercial use cases will account for nearly half of all AR/VR spending in 2020, led by training ($1.3 billion) for virtual reality and industrial maintenance ($375.7 million) for augmented reality. The AR/VR use cases forecast to see the fastest spending growth in 2019–2024 are lab and field (post-secondary, 133.9% CAGR), lab and field (K-12, 127.0% CAGR), and public infrastructure maintenance (111.4% CAGR). On the consumer side, spend will be led by two large use cases: VR games ($3.0 billion) and VR feature viewing ($1.2 billion).

Source: IDC's Worldwide Semiannual Augmented and Virtual Reality Spending Guide 2020, June (V1, 2020)

"The COVID-19 pandemic has created a shift in mindset. With so many employees working remotely, augmented and virtual reality are being considered as necessary tools to engage with consumers and drive business processes within and across organizations," said Stacey Soohoo, research manager, IDC Customer Insights & Analysis. "Unsurprisingly, face-to-face industries such as retail are expected to be the most negatively impacted due to the pandemic, along with education, discrete manufacturing, and process manufacturing. Despite a moderate decline in growth, there is an uptick in demand for innovative technologies as retailers shift their focus from selling the product to creating a personalized, immersive customer experience. In other industries, enterprises are focusing on knowledge capture and transfer initiatives, enabling front-line workers to be more efficient and collaborative while keeping safety in mind."

Though commercial AR/VR spending is expected to surpass consumer spend next year in Europe, the latter will still lead the market in 2020. Virtual reality games and video/feature viewing (VR) together will account for more than half of all AR/VR spending in this segment. On the AR side, the most severe contraction is expected to be seen in retail and media, but also in the finance sector. Healthcare and government are expected to be the most resilient in 2020, showing interesting pockets of growth related to monitoring of social distance compliance, anatomy diagnostics, and emergency response. Due to the expected high demand of industrial AR/VR solutions, industrial maintenance will be the fastest growing use case in terms of CAGR over the forecast (2019-2024). On the VR side, consumer spending will be the most resilient in 2020, driven by demand for at-home entertainment. Remote training and collaboration will also sustain demand in the commercial space. Training and industrial maintenance will be the main AR/VR commercial applications in Europe, and together will garner around 46.3% market share.

Spending in VR solutions will be greater than that for AR solutions initially. However, strong growth in AR hardware, software, and services spending (184.5% CAGR) will push overall AR spending well ahead of VR by the end of the forecast. Hardware will account for nearly three-quarters of all AR/VR spending throughout the forecast, followed by software and services.

In Europe, hardware is expected to be the largest technology category in 2020, with more than 60% market share. Software spending will maintain its second largest share. AR/VR services-related spending will stay with the lowest share in the following years at under 10%, but will see the fastest growth, registering a CAGR of 126.2%, driven mainly by consulting services and system integration.

From a reality type perspective, VR solutions will have the largest portion of spending in 2020, achieving more than 70% share, but AR spending will overtake it by the end of the forecast due to increased demand and strong growth in all AR technologies (176.8% CAGR).

"AR and VR investments have slowed due to the pandemic, which has required companies to review their road maps," said Lubomir Dimitrov, senior research analyst, IDC European Customer Insights & Analysis. "However, as companies progress along their road to recovery, spending on AR/VR will accelerate quickly, with a focus on targeted investments that can bring European companies clear benefits, including the ability to address many of the challenges associated with COVID-19."

The IDC Worldwide Augmented and Virtual Reality Spending Guide examines the augmented reality and virtual reality (AR/VR) opportunity and provides insights into this rapidly growing market and how it will develop over the next five years. Revenue data is available for nine regions, 20 industries, 47 use cases, and 6 technology categories and two reality types. Unlike any other research in the industry, the comprehensive spending guide was created to help IT decision makers to clearly understand the industry-specific scope and direction of AR/VR expenditures today and in the future.

According to the June update of the Worldwide Black Book Live Edition published by International Data Corporation (IDC), European ICT spending will decline by 3.7% year on year in 2020 to total $897.08 billion. However, slight recovery of the ICT market is expected in 2021, when ICT spending in Europe will increase by 1.9% year on year, in line with the gradual recovery in macroeconomic conditions and consumer confidence.

All hardware markets will continue on a negative trajectory in 2020, with overall hardware spending declining by 4.07% year on year. Spending on infrastructure will be most affected, due to reduced business activity, focus on capital preservation, and expense reduction.

"During the Covid-19 crisis, there has been a boost in adoption of OPEX-based consuption models, which will drive spending on IaaS. The market is forecast to grow in the double-digits in both the short and long term," says Lubomir Dimitrov, senior research analyst with IDC's Customer Insights & Analysis team. Although demand in the PC market increased during the second quarter of 2020, annual spending in the overall hardware market will decline due to the global economic challenges among both consumers and businesses, resulting from the impact of the pandemic.

In 2020, the European IT services market is expected to decline by 4.2% year on year, as the current economic uncertainty is causing delays or reductions in existing projects, and investments planned pre-crisis are being postponed. Next year, the services market is expected to rebound slightly, with negligible annual growth of around 1%.

Spending on software in Europe is expected to slow down in 2020, declining by 2.61% year on year, as organizations try to limit their resources and place any projects on hold that are not crucial for maintaining core business activities. On the other hand, the increased adoption of the remote working/work from home model among public and private organizations gave a boost to spending on collaborative and communication tools, as well as on software security spending, as protecting devices and expanded networks became an urgent need.

The European software market will rebound slightly next year, with companies renewing some previously delayed digital transformation initiatives, particularly those relating to AI, analytics, and automation of business processes.

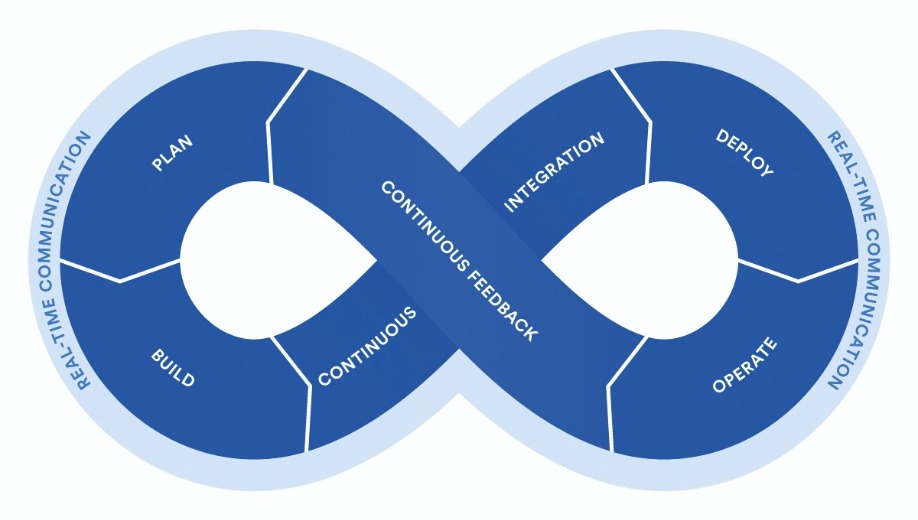

To understand the impact of DevOps you need to think about how software was traditionally built and run.

By Andrew Davis, Senior Director Product Marketing, Copado.

Organisations continually hold innovation and stability in tension. Every business needs to innovate and develop new functionality for their customers and employees. To do this, they build and customise applications. This is the role of the Development team (Dev).

Those same organisations also need existing systems to be stable, reliable, and secure. This is the role of the Operations team (Ops), which might consist of system, database and website admins. Their main job is to make sure servers are up and running, service level agreements are being met, the application is performing as expected and so on.

Working with a cloud platform like Salesforce makes it a lot simpler to build applications. Instead of needing an ‘Operations’ team to keep production systems running, the platform takes care of this aspect.

However, there’s a natural tension. Developers want and need to keep changing the system, while Operations wants and needs the system to remain as stable as possible so they can optimize performance and reduce risk.

Compounding the issue, in large organisations, Dev and Ops teams have historically worked in silos. The end goal for both teams is customer satisfaction, but specific goals for ‘Devs’ include quickly delivering features and bug fixes, whereas for ‘Ops’ the goal might be to maintain 99.99% server uptime.

These goals can often be in conflict, leading to inefficiency and finger pointing when things go wrong.

Breaking Down Silos

DevOps was born to alleviate conflict between the Dev and Ops teams. It focuses on using tools and techniques that enable everyone to work together more smoothly. It’s about collaboration, working towards the shared goal of benefitting end users.

It is born out of a recognition that the whole team needs to work together to build trust while delivering innovation, because delivering an application is only the beginning. Once it’s running in production, businesses need a way to ensure it’s working, and to gather feedback to make improvements.

Historically, development was thought of as an assembly line, beginning with development and ending in production. But DevOps teams prefer to use a circle or an infinity loop to indicate that this is an ongoing process.

In The DevOps Handbook Gene Kim and co-authors describe “three ways” of DevOps: Flow, Feedback, and Continuous Improvement. Flow refers to the “left-to-right” movement of changes from development to production. The goal of this flow is early and continuous delivery of value to end users. Developers can then monitor how the app is running in production, and get input from end users, leading to the “right-to-left” movement of Feedback back to development.

However, just like learning, DevOps is not a journey you ever finish. The third way is therefore Continuous Improvement: striving always to improve both our work and the way we work.

Focusing on the customer - DevOps in practice

The purpose of every task in business is to deliver value to a customer. That customer might be a person who pays for a service or someone in the same organisation. Although that might sound obvious, it’s important to understand the value that a given team delivers, so that the business can focus on activities that bring value and avoid things that don’t.

One great example of this is waiting time. Imagine that it takes one hour to build a great new feature for an application. Clearly, that’s an action that brings value. But imagine that the team only releases once per week, so that feature needs to wait for a week before it can be released. That waiting time does not bring any value to the customer. If there is a way to reduce that waiting time, it’s beneficial for the customer.

The modern world has gotten a lot faster compared to previous generations. Many of the changes we have seen are designed to bring benefit to customers more quickly and more efficiently. For example, manufacturing has been transformed over the last century to reduce the amount of non-value adding steps in a process, and to optimize for quick delivery.

DevOps aims to bring similar benefits to the process of developing IT applications. DevOps focuses on closing the gap between creative people like developers and those who can benefit from the end result.

The Research on DevOps

There is increasing research to back up these claims. The Accelerate State of DevOps Report is the largest and longest-running research of its kind, and provides independent scientific evidence of the effectiveness of the practices that fall under the DevOps heading.

The State of DevOps Report points to four key metrics that can be used to measure the effectiveness of a development process: lead time, deployment frequency, change failure rate, and time to restore.

The first two measure the speed of innovation, and the last two measure the degree of reliability provided. Measuring both innovation and reliability allows a business to assess these dual goals of DevOps.

The research discerned that some organisations performed far better than others on all four of these metrics. Based on these metrics, they categorized respondents into Elite, High, Medium, and Low performers. They found that Elite performers had 106x faster lead time than Low performers, deployed 208x more frequently, had 7x lower change failure rate, and 2,605x faster time to restore!
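To make the four measures concrete (lead time, deployment frequency, change failure rate and time to restore), here is a minimal sketch of how a team might calculate them from its own deployment history. Everything in it is an assumption made for illustration: the `Deployment` record and its field names are hypothetical, the median is just one reasonable way to summarise durations, and time to restore is approximated as the gap between a failed deployment and the moment service was restored. The report's own definitions and survey bands are not reproduced here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def delivery_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Summarise speed (first two keys) and reliability (last two keys)."""
    if not deployments:
        raise ValueError("at least one deployment is required")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "lead_time": median(lead_times),
        "deploys_per_day": len(deployments) / period_days,
        "change_failure_rate": len(failures) / len(deployments),
        "time_to_restore": median(restore_times) if restore_times else None,
    }


# Hypothetical week of releases.
t0 = datetime(2020, 7, 1, 9, 0)
history = [
    Deployment(t0, t0 + timedelta(hours=2)),
    Deployment(t0 + timedelta(days=1), t0 + timedelta(days=1, hours=4)),
    Deployment(t0 + timedelta(days=2), t0 + timedelta(days=2, hours=6),
               failed=True, restored_at=t0 + timedelta(days=2, hours=7)),
    Deployment(t0 + timedelta(days=3), t0 + timedelta(days=3, hours=8)),
]

metrics = delivery_metrics(history, period_days=7)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

Tracking all four together matters because, as the research suggests, improving speed at the expense of reliability (or the reverse) is not what distinguishes the best performers.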

Importantly, Elite and High performers were both faster and more stable, and low performers suffered from both low rates of innovation and high rates of failures. That indicates that an ability to innovate quickly and the ability to do so safely are related, just as better brakes enable a racecar to go faster.

Performance on these four key metrics is called “software delivery performance”. This performance doesn’t just impact the development team, it has an impact on the whole business. Organisations with elite software delivery performance are twice as likely to meet their overall goals, both commercial and non-commercial.

Copado conducted a similar survey called the State of Salesforce DevOps Report. For organisations that had more than 25 admins or developers contributing to Salesforce customizations, the same trend appeared: teams that were able to release innovation more quickly and reliably to Salesforce performed better as a company!

Why do DevOps practices have such a big impact? It is because a development team may be only a small part of the overall organisation, but they have an outsized impact. By building the systems a company uses, they enable the whole organisation to succeed.

DevOps is - like all new terminology - a little shrouded in mystery, but by bringing these teams together and making collaboration the cornerstone of ALL activity, it is set to deliver huge benefits to enterprises.

The four questions (and answers) that lead to success.

By Jeff Keyes, VP of Product at Plutora.

Almost all businesses today will rely on project management to ensure tasks are completed on time and all employees are meeting their targets. Ultimately, this is what makes a business successful. Its necessity is recognised across multiple industries and so many team leaders may believe there’s no need to justify its worth. But this isn’t always the case, particularly for software development. Another vital management role to be considered is product management – after all, the focus here tends to be on the product that is created. But for those more used to working with project management, and potentially questioning the value of both approaches, it’s important to understand the similarities and differences between the two, and what managers in these areas can each contribute.

The key to this starts with the descriptive writing mantra that everyone learned back in school: the 5 W’s (and 1 H), otherwise known as who, what, when, where, why, and how. Though the “who” and “where” are fairly self-explanatory, one way to understand the difference between product management and project management is by understanding which of the other four questions each job resolves. It’s useful to note: both positions fulfill critical roles in effective product delivery.

Product Management: The foundations of the job

The answers to “what” and “why” are typically found in product management. For every single product being delivered, these questions require solid, confident answers.

“What are we building?”

Many enterprises have a product range that spans dozens of different markets. Though this is great for the business and investors, it’s not an effective way for a team to work. When you ask a team to build or support a product, they need a clear vision of what that product needs to do. This is especially true for software products because it’s easy for them to lose their identity if they attempt to cover too many functions.

In a large enterprise, a “do everything” mentality can cost more over time. Chances are there are many software teams, with each one building software that’s designed to suit a specific part of the market. When those teams spend time implementing software that duplicates some functionality already provided by your business, that’s time and effort being wasted.

This is where a good product manager is valuable. They help keep software teams focused on what they’re building and rein in the desire (by developers and executives alike) to expand the software outside the specific job it needs to do.

“Why are we building this?”

For developers, “why” tends to be more important than “what”. Work is almost always interconnected between different teams. When they understand why they’re building something, it’s much easier to understand how those connections should work. It also gives developers information about which compromises they should make when implementing a particular feature, such as simplicity and usability versus speed.

The product manager is responsible for understanding and conveying the “why” of the product. They do this by conversing with executives and project stakeholders to understand how the product fits into the overall business need.

Project Management: Getting the product over the line

In contrast, project management has a different focus. Here the biggest concerns are the “how” and “when”.

“How will we build this?”

Large software projects are typically complicated, with multiple interdependent and interlocking pieces. The most effective software project managers will understand these interdependent requirements and plan for them accordingly. They organise the individual actions so that by the time someone is ready to work on one piece, its dependencies have already finished. Thanks to this efficiency, developer downtime is minimised and those developers can focus only on the problems they need to solve for each task.
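The ordering problem described above is, in essence, a topological sort: arrange the work so that nothing starts before the things it depends on are finished. As a small illustration, the sketch below uses Python's standard `graphlib` module (Python 3.9 or later). The task names and their dependencies are entirely hypothetical, and a real plan would also need to account for effort, people and deadlines.

```python
from graphlib import TopologicalSorter

# Hypothetical tasks: each key maps to the set of tasks it depends on.
dependencies = {
    "design schema": set(),
    "build API": {"design schema"},
    "build UI": {"build API"},
    "write docs": {"build API"},
    "release": {"build UI", "write docs"},
}

sorter = TopologicalSorter(dependencies)
sorter.prepare()  # raises graphlib.CycleError if tasks depend on each other in a loop

waves = []
while sorter.is_active():
    # Tasks returned here have no unfinished dependencies,
    # so they could all be worked on in parallel.
    ready = sorted(sorter.get_ready())
    waves.append(ready)
    sorter.done(*ready)

for number, tasks in enumerate(waves, start=1):
    print(f"Wave {number}: {', '.join(tasks)}")
```

Grouping the tasks into waves, rather than one flat list, shows where work can proceed in parallel, which is where much of the saving in developer downtime comes from.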

This management is arguably the most valuable thing that project managers do for their software developers, though it can often be missed by executives more focused on the second project management question: “when?”

“When will the project be finished?”

For external stakeholders, the “how” or “why” doesn’t matter, and they usually only care about the “what” when the final product isn’t what they expected. However, “when” is a question that’s always top of mind for every team leader waiting for a software project to be finished.

It’s also the trickiest question to answer. It’s almost impossible to reliably predict how long it will take to deliver a single feature, let alone a whole project. Something that initially seems simple can turn out to be much more complicated than anticipated. Alternatively, sometimes something you think might take a few weeks instead takes only a day or two.

Striking a balance between the two

In many traditional software organisations, both roles are equally important for the efficient delivery of software releases. However, many enterprises are changing their deployment infrastructure towards a continuous delivery model with DevOps principles. Teams practicing continuous delivery may find that the question of when something is going to be completed can feel less important. Additionally, implementing agile software principles makes the collective software team responsible for the question of “how”. Third party planning tools can also make it easier for software teams to answer the “how” and “when” questions for themselves.

For this reason, dedicated project managers are becoming less common in some organisations. Instead, much of that responsibility is moved to the teams who then focus their planning efforts on the product itself. However, the most successful projects are those that answer all four questions. When an answer to one or more of these questions is unknown, the project can end up stressful and frustrating.

Different job roles come and go frequently enough in the technology sector, as advancements are made so regularly that staff roles have to change to keep up. The main point to take on board when it comes to product management vs project management is that there should be at least a few people in an organisation who are responsible for answering these four questions, and for ensuring that their teams work to these guidelines. Doing so keeps the pipeline moving and keeps customers happy, which is the ultimate goal.

APIs play a central role in both enabling digital business and powering modern, microservices-based application architectures.

By Micheál Kingston, Technical Solutions Architect, NGINX at F5.

The other day I went to dinner and it made me appreciate the need for fast application programming interfaces (APIs). Confused? Let me explain.

To get to dinner I used an app to hail a car from my smartphone. While you’re waiting for the driver to pick you up, the map updates in real time to indicate the location of the car on approach. But on that day, the app did not update the map. After 10 minutes, I got frustrated and switched to an alternative ride‑hailing app! This time I was successful and watched in real time as my driver approached and picked me up.

Let’s look at another example. I recently checked out an Amazon Go store in San Francisco. With the Go app downloaded, you just approach the door and it unlocks automatically. As you walk around the store, any item you pick up is automatically added to your virtual cart, and automatically removed if you put it back on the shelf. When you are done, you just walk out!

Yet again, we see real‑time information is critical to a good experience.

APIs Are the Connective Tissue of Good Digital Experiences

What’s the technology powering such convenient, and thus satisfying, consumer experiences? APIs! Specifically, real‑time APIs. There’s a lot riding (pun intended) on consumers having good, real‑time experiences. The barrier to switching to a competitor in the digital world is very low.

What Does “Real Time” Mean?

Research suggests real‑time must be less than 30 milliseconds (ms). Consider these proof points:

Real-Time Experiences Require Real‑Time APIs

Real‑time experiences rely on API connectivity. Uber retrieves Google Map data via an API call. Amazon connects in‑store Go infrastructure with sensor, vision, and analytics capabilities via API calls. That means your API infrastructure needs to process API calls in 30 ms or less. For some use cases, you need as little as 6 ms!
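One way to keep a 30 ms target honest is to measure it. The sketch below times repeated calls and checks a high percentile against the budget. It is illustrative only: the function names are invented, the stand-in call does no network work, and judging the budget at the 99th percentile is an assumption of this example rather than something the research above prescribes.

```python
import math
import time
from typing import Callable

BUDGET_MS = 30.0  # the real-time threshold quoted in the text


def measure_ms(call: Callable[[], object], samples: int = 100) -> list[float]:
    """Time `call` repeatedly and return each duration in milliseconds."""
    durations = []
    for _ in range(samples):
        start = time.perf_counter()
        call()
        durations.append((time.perf_counter() - start) * 1000)
    return durations


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def within_budget(durations_ms: list[float], pct: float = 99,
                  budget_ms: float = BUDGET_MS) -> bool:
    """True if the chosen percentile of the measured durations meets the budget."""
    return percentile(durations_ms, pct) <= budget_ms


def stand_in_call() -> int:
    # Placeholder for a real API request; replace with an actual client call.
    return sum(range(1000))


durations = measure_ms(stand_in_call, samples=50)
print(f"p99 = {percentile(durations, 99):.3f} ms, "
      f"within budget: {within_budget(durations)}")
```

Looking at a high percentile rather than the average matters because a handful of slow responses is exactly what a waiting customer notices.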

That might not sound difficult, but let’s consider that API infrastructure has to:

Everyday Use Cases for Real‑Time APIs

There are plenty of activities in the digital world that harness the benefits of real‑time APIs, including:

Managing APIs

A lack of real‑time APIs can prevent adoption of disruptive services like voice‑controlled smart devices, in‑home medical care, and driverless cars. Preventing these new services from reaching their potential stalls revenue and market expansion. Delivering transformative experiences inevitably requires a high‑performance API management solution. This will enable infrastructure, operations and DevOps teams to define, publish, secure, monitor, and analyse APIs, without compromising performance.

Ultimately, APIs play a central role in both enabling digital business and powering modern, microservices-based application architectures. No organisation can afford to ignore the pivotal role of APIs in application and business modernisation. Indeed, those who delay placing APIs at the core of their IT strategy will soon face substantial challenges in transforming their technology and business foundations.

In recent years, Kubernetes has exploded in popularity among organisations trying to harness the power of cloud native. And it’s been hugely successful, with project teams able to adopt new Kubernetes infrastructure – or clusters – at an incredible pace.

By Tobi Knaup, Co-CEO & Co-Founder at D2iQ.

For a few years, all has been going well, but many organisations more advanced in their Kubernetes journey are now running into a problem: cluster sprawl. The ease of spinning up new environments with Kubernetes means disparate clusters exist across the organisation, with little standardisation and, as a result, significant waste and risk.

While the problem of sprawl in IT isn’t a new one (a similar problem occurs with virtual machines, for example), the lack of maturity in the Kubernetes market means it’s one most aren’t aware they need to act on. Understanding how to manage cluster sprawl – and how to avoid it in the first place – will be important for businesses scaling their cloud native infrastructure.

What is cluster sprawl?

Not too long ago, applications were simple and limited. Developer teams knew where an application resided because applications were typically monolithic and connected to simple middleware and backend data sources, and all components were manually assigned to on-site systems, which often had pet names to make them easy to remember. Today it isn’t quite so simple. To keep pace with the ever-changing digital landscape, organisations are adopting open source and cloud native technologies quickly, and that means more clusters. One team may be building a stack on one cloud provider using their favorite set of tools, while another team is building a different stack on a different cloud provider, using that team’s favorite tools. And, if they’re provisioning and using clusters with different policies, roles, and configurations, you can quickly lose sight of where those clusters exist and how they are being managed. This is cluster sprawl.

Finding yourself in the midst of cluster sprawl is not only a headache from the point of view of managing your infrastructure, it can also lead to security issues and a huge amount of waste. With no centralised governance or visibility into clusters deployed across the organisation, security controls may be inconsistent, increasing the risk of vulnerabilities within applications and making them more difficult to support, as well as leading to compliance, regulatory and IP challenges down the line.

In addition, cluster sprawl wastes resources, because each added cluster brings new overhead in managing a separate set of configurations. When it comes to patching security issues or upgrading versions, a team is doing multiple times the amount of work, deploying services and applications repeatedly within and across clusters. On top of that, all configuration and policy management, such as roles and secrets, is repeated, wasting time and creating a greater opportunity for mistakes.

Visibility brings everything together

One of the reasons Kubernetes has become so popular amongst developers is that it allows them to spin up their own environments with ease, enabling them to rapidly deploy code at scale. This is exactly what leads to cluster sprawl. Developers tend to lose that flexibility when their platforms are brought into IT operations, which needs consistent ways of administering, standardised user interfaces, and the ability to manage and obtain insights about its infrastructure. So, dealing with cluster sprawl (and preventing it occurring at all) requires a careful balance between developer flexibility and the need for IT governance.

The first step on this journey is visibility. Organisations need a clear view of all their clusters and workloads at once, through a centralised control plane, so they know what they are dealing with. Not only does this provide an understanding of where clusters are, it also allows IT teams to obtain insights and troubleshoot problems much more quickly. It provides centralised governance to ensure consistency, security, and performance across the business’s digital footprint, which is key in the long term and as the number of deployments grows. With centralised visibility, mission-critical cluster information can be viewed at a glance and any issues arising within applications can be monitored in one place, without valuable time and resources being lost to troubleshooting. Ideally, this visibility will be in place from the very start of an organisation’s Kubernetes journey. However, because Kubernetes is a nascent technology, many organisations may suddenly find themselves in the weeds. Visibility can, and should, still be achieved with a control plane that offers a bird’s-eye view of the cluster landscape.

Maintaining governance

While visibility initially provides the insight into what you are dealing with, it has to be combined with the creation of policies to ensure everything continues to run smoothly. All organisations will have unique governance and access control requirements based on the type of business they are in, but policies allow admins to assert control over how clusters are being created and run, reducing risk in the environment. For example, organisations need to be able to govern the usage of sanctioned software and which versions can be used within which projects. This type of version control reduces the potential vulnerability surface area and also helps deliver support more effectively, by providing a catalogue of software that the organisation has approved and that teams can draw on when needed. Policies are also critical for access control.
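
As a rough illustration of the sanctioned-software policy described above, the sketch below checks requested software against a catalogue of approved versions. The catalogue contents, the project names and the functions is_sanctioned and violations are all hypothetical, invented for this example rather than taken from any particular tool.

```python
# Hypothetical catalogue: project -> software -> set of approved versions.
APPROVED = {
    "payments": {"postgres": {"13.4", "14.1"}, "redis": {"6.2"}},
    "analytics": {"postgres": {"14.1"}},
}


def is_sanctioned(project: str, software: str, version: str) -> bool:
    """Return True only if this exact version is approved for the project."""
    return version in APPROVED.get(project, {}).get(software, set())


def violations(project: str, requested: dict[str, str]) -> list[str]:
    """List the requested packages that fall outside the approved catalogue."""
    return sorted(
        f"{name}=={version}"
        for name, version in requested.items()
        if not is_sanctioned(project, name, version)
    )


if __name__ == "__main__":
    # postgres 13.4 is approved for "payments" but not for "analytics".
    print(violations("analytics", {"postgres": "13.4", "redis": "6.2"}))
```

Anything missing from the catalogue is treated as unapproved, so the default is to deny rather than to allow.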

On the Kubernetes journey, staff may change their roles and responsibilities, and that makes it difficult to manage individual logins and account privileges, assess governance risk, and perform compliance checks against industry models and in-house policies. Admins need a simple way to provision Role-Based Access Control (RBAC) that provides flexibility in configuring access as users’ roles within an organisation change. This also balances the need for developer flexibility and IT control by enabling a division of labour across developers, operations and any other necessary roles across the business.
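
To make the idea of role-based access concrete, here is a minimal sketch in plain Python. It is not the Kubernetes RBAC API: the role names, the user names and the helpers can and change_role are assumptions made up for illustration. The point it shows is that permissions attach to roles, so a change of job means changing one binding rather than editing individual privileges.

```python
# Hypothetical roles and the actions each one permits.
ROLE_PERMISSIONS = {
    "developer": {"deploy", "view_logs"},
    "operator": {"view_logs", "scale", "patch"},
    "auditor": {"view_logs"},
}

# Users are bound to roles, never directly to permissions.
user_roles = {"asha": {"developer"}, "ben": {"operator"}}


def can(user: str, action: str) -> bool:
    """True if any role currently bound to the user permits the action."""
    return any(
        action in ROLE_PERMISSIONS.get(role, set())
        for role in user_roles.get(user, set())
    )


def change_role(user: str, old: str, new: str) -> None:
    """Move a user between roles; their permissions follow automatically."""
    roles = user_roles.setdefault(user, set())
    roles.discard(old)
    roles.add(new)


if __name__ == "__main__":
    print(can("asha", "deploy"))
    change_role("asha", "developer", "auditor")
    print(can("asha", "deploy"))
```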

Of course, very few organisations will move to the cloud in one quick sprint, and many will maintain a combination of on-premise and cloud-based infrastructure. Any governance framework therefore has to extend to all aspects of cloud use, and all processes must be standardised across the whole infrastructure, whether it’s on-premise or in the public cloud.

A smoother journey to cloud native

Without intervention, many organisations are going to find themselves dealing with cluster sprawl at some point on their Kubernetes journey. However, with a centralised control plane, oversight can be regained, and cluster sprawl eased. With governance over, and lifecycle management of, disparate Kubernetes clusters, admins will be able to maintain multi-cluster health, manage distributed operations, leverage operational insights and retain control of policies without interfering with the development process. Organisations that are able to contain cluster sprawl to increase the security, manageability and governance for enterprise-grade Day 2 operations will find themselves on a much smoother cloud native journey.

Getting a DevOps strategy correct requires having a progressive shared mindset that can learn from previous delivery cycles and has support from the business to embed those learnings into future delivery cycles.

By Matt Saunders, Head of DevOps, Adaptavist.

Fundamentally, DevOps helps shorten the time between an idea being coded and it being production-ready, and to do this consistently and quickly you need to carefully define and automate your testing. You must put the right processes and tools in place to make testing an integral part of the cycle.

As continuous delivery becomes the norm, expectations have increased amongst those not doing it. The ability to release good quality code quickly and support continuous feedback during delivery is absolutely critical. The role of testing is often overlooked in the DevOps process, and many underestimate the benefits that automation and the right test tooling can provide. By thinking about all of these aspects, companies can more effectively release quality software in today’s age of continuous delivery. Continuous delivery is about being able to release code quickly and efficiently, with a high degree of certainty that things are going to work, and work securely.

How can companies get DevOps working well?

One of the ways to get DevOps working well is to focus on automating testing. The path for testing units of code is already well defined, but adding end-to-end user testing takes you further. It removes repetitive, error-prone manual browser-based testing steps, where people examine a web site or application and try to decide “does that look right?” or “has that regressed since last time?” or “has the associated change had the correct effect?” Instead of making a subjective judgement, you can use tools that automate those tests to a high degree of certainty.
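
As a small illustration of swapping a subjective “does that look right?” check for an automated one, the sketch below uses Python’s standard unittest module. The function format_price is a hypothetical stand-in for whatever is being tested, and this is a unit-level example only; end-to-end browser tools would apply the same assert-on-expected-result idea at a larger scale.

```python
import unittest


def format_price(amount_pence: int) -> str:
    """Hypothetical function under test: format pence as pounds for display."""
    pounds, pence = divmod(amount_pence, 100)
    return f"{pounds:,}.{pence:02d}"


class FormatPriceTest(unittest.TestCase):
    """Each test encodes an expectation a person would otherwise eyeball."""

    def test_small_amount_is_zero_padded(self):
        self.assertEqual(format_price(5), "0.05")

    def test_thousands_separator(self):
        self.assertEqual(format_price(123456), "1,234.56")
```

Saved to a file, tests like these can be run on every change with python -m unittest, so a regression shows up as a failed run rather than relying on someone noticing it.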

According to Alex Zavorski, VP of Atlassian Products at SmartBear: “Another key component of modern software delivery workflows is continuous testing. The antiquated approach of leaving testing in a time-boxed, late-stage of your delivery lifecycle is impossible in true agile and DevOps at scale. Testing must be performed not just earlier, but continuously throughout the entire software delivery lifecycle.”

Below are three guiding principles for successful DevOps which are worth bearing in mind when thinking about the role and importance of testing:

Flow: Enable rapid flow of ideas, code and design through the system
Feedback: Find problems quickly, validate ideas rapidly, let the system take the work
Learning: Provide a culture where we can learn and experiment, and fail fast

The role of testing

A key achievement in getting teams to work in an agile way is having your developers able to work in a culture of innovation. So, in development and in testing they might be saying “let’s try this, let’s test it and let’s get some rapid feedback so that we can decide whether this was the right thing to do or not”. The DevOps angle on this is in enabling the team to get that work deployed and tested effectively. It’s the same agile mindset, but at an operational level.

Ultimately, the cycle should move fast, so that code can be written and quickly put in an environment where the presumptions on which it was first written are rapidly validated or invalidated. Perhaps this involves testing out different hypotheses on different groups of users, or perhaps it’s just delivering a new feature or fix quickly. The key point is getting frequent flow into the system so you can learn as much as you can and then move to the next thing.
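
One common way to test different hypotheses on different groups of users is to assign each user to a variant deterministically, so the same person always sees the same version. The sketch below is one possible approach under that assumption, not a method described by the author; the function assign_variant and the experiment and variant names are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants: list[str]) -> str:
    """Deterministically map a user to one variant of an experiment."""
    # Hashing the experiment name together with the user ID keeps the
    # assignment stable across visits and independent between experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return variants[int(digest, 16) % len(variants)]


if __name__ == "__main__":
    for user in ("user-1", "user-2", "user-3"):
        print(user, assign_variant(user, "new-checkout", ["control", "treatment"]))
```

Because the assignment is derived from a hash, no lookup table of users is needed, and the feedback gathered for each variant can be compared as soon as it arrives.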

Benefits of automation

Companies often come from a scenario where a release manager is responsible for releases and they do their work manually. Every time they do a release, they follow a document which describes how to deploy the code in a sequence of commands and manual steps. Pre-release environments may also look different from production, thus leading to complex human decisions being made during releases. This is error-prone and time-consuming and doesn’t scale well when a company tries to increase the frequency of software releases.

Automation becomes essential when this time comes, and this, in turn, frees people up to do more creative and more knowledge-intensive work. It also lets you scale whatever you're trying to automate much faster than you could without it, without just hiring additional engineers to run more tests.
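
The move from a release document to automation can be sketched very simply: each manual step becomes a function, and a runner executes the steps in order and stops at the first failure. The step names and the run_release helper below are hypothetical, and the lambdas are placeholders for real build, test and deploy commands.

```python
from typing import Callable

# A step is a name plus a function that returns True on success.
Step = tuple[str, Callable[[], bool]]


def run_release(steps: list[Step]) -> list[str]:
    """Run steps in order and stop at the first failure, returning a log."""
    log = []
    for name, action in steps:
        ok = action()
        log.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            break  # Never deploy on top of a failed earlier step.
    return log


if __name__ == "__main__":
    # Placeholder steps; real ones would call build, test and deploy tooling.
    steps = [
        ("build", lambda: True),
        ("run tests", lambda: True),
        ("deploy", lambda: True),
    ]
    for line in run_release(steps):
        print(line)
```

The same sequence then runs identically every time, which is what makes it practical to release more often.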

Putting the right test tooling in place

Once you’ve adopted a mentality of automating testing, you can begin unlocking all the automation potential in your organisation by getting people to use the right tools, and to use each technology for the purpose it was designed for. The above diagram shows examples of tools for the various parts of the DevOps process. Examples of testing tools include Kitchen CI to test infrastructure, making sure that files and packages are in the right place and have the correct permissions, and Cucumber for acceptance testing to ensure that the code is working before it’s sent out.
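
To give a flavour of what an infrastructure check for files and permissions involves, here is a minimal sketch in plain Python. It does not use Kitchen CI or its configuration format; the check_file function and the file name are assumptions for illustration, and the permission check assumes a POSIX-style system.

```python
import os
import stat
import tempfile


def check_file(path: str, expected_mode: int) -> list[str]:
    """Return a list of problems; an empty list means the check passed."""
    if not os.path.isfile(path):
        return [f"{path}: missing"]
    actual = stat.S_IMODE(os.stat(path).st_mode)
    if actual != expected_mode:
        return [f"{path}: mode {oct(actual)}, expected {oct(expected_mode)}"]
    return []


if __name__ == "__main__":
    # Demonstrate against a temporary file rather than a real system path.
    with tempfile.TemporaryDirectory() as tmp:
        target = os.path.join(tmp, "app.conf")
        with open(target, "w") as handle:
            handle.write("port=8080\n")
        os.chmod(target, 0o640)
        print(check_file(target, 0o640))
        print(check_file(target, 0o600))
```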

We also saw that the coronavirus pandemic accelerated the growth of tools such as Slack acting as an information hub, making it easier for people to find the data that they need. According to Stewart Butterfield, the CEO of Slack, this is “a shift that’s inevitable over the next decade. And I think it just accelerated by a couple of years because there were also people who thought Slack was great and really enjoyed it, but essentially just used it in the way that they might have used AIM or Yahoo messenger or something like that 20 years ago. It was essentially for direct messaging. Who suddenly are beginning, depending on what they do, bringing in integrations with Salesforce, or marketing automation tools, or their HR system.”1

Finally, the COVID-19 pandemic highlighted the importance of tools that work remotely, especially when enabling more nuanced collaboration. DevOps teams are often distributed across multiple time zones: there is no 9 to 5 anymore and people are having to work remotely. This means that, more than ever, having centralised, consistent and automated processes is vital for visibility, and testing is the area where there is the most to lose if test data is not consistent and visible.

In conclusion

In today’s remote working environment, the ability to integrate testing and ensure quality is critical to any business’s DevOps strategy, and combining the right tools and automation to decrease time to market is increasingly important. Building effective testing into your DevOps strategy lets you release quickly, confident that your customers are getting the value they need from you.

App development isn’t as long and complex a process as many would imagine, and it can provide huge long-term benefits to your business.

By Ritam Gandhi, Founder and Director, Studio Graphene.

For businesses that previously enjoyed meeting their customers and clients face-to-face, the obstacles posed by coronavirus have been devastating. A company may have invested thousands into ensuring that their premises signal the highest quality offerings possible, be it through a contemporary high-street store or luxurious client meeting room, but now find themselves denied full use of those very same spaces due to COVID-19.

Fear not – it is still possible to ensure that anyone engaging with your business can have a fantastic experience. App-based solutions mean that anyone wishing to utilise your services, buy your products, or simply speak to your staff can enjoy the same level of care and attention that they would have enjoyed in person.

SMEs that previously conducted most business affairs on-site might initially overestimate the effort developing an app requires, while underestimating the huge benefits it may bring.

In a world of social distancing, increasing your online presence has the potential to offer a new and engaging customer experience while at the same time building brand loyalty. Importantly, developing a sleek mobile app is not the large undertaking it may have been when smartphones first entered the market. It is now an option that is accessible to businesses of all sizes and should be considered by anyone who wants their services to be accessible by as many means as possible.

To emphasise this point, I want to dispel the three main misconceptions I believe decision makers may have about mobile app development.

You don’t have to start from scratch

As anyone who has ordered food delivery online will know, apps use a variety of pre-existing resources to build a high-quality product. The maps displayed on such apps are rarely designed by, for example, Deliveroo or Just Eat. Rather, these popular applications function on Apple, Google, and Bing map services which have been integrated into the software.

Similarly, developers don’t need to spend weeks designing a fraud-proof online payment system, as there are established companies with ready-made solutions that can be used to facilitate payments. Stripe is an example of an American company that you have probably already used to pay for a service or product online. If you’ve ever used Booking.com, Lyft, or Shopify, you’ve already paid through Stripe’s system without even knowing it. This goes to show just how seamless it can be to incorporate pre-existing digital solutions into your own app. There’s also one significant advantage to relying on trusted technologies: they have already been proven to work, so you don’t have to invest too heavily in ironing out potential issues that may crop up.

When you are building something completely new, every aspect of the build becomes more complicated and, by extension, development becomes more time-consuming generally. Integrating existing solutions into your app and conducting a test-run before launch will tell you whether these ready-made resources could be a good fit for your app, and if they will enable you to seamlessly deliver your intended services.

Before you launch to market, it is therefore worthwhile to lead with an MVP (minimum viable product), which will allow you to test the functionality of the app and better understand the user experience. Not only is this a way of testing market demand for the product, but it will also enable you to gather feedback in the shortest time possible. If the integrated services are proven effective, this will save you time and money developing the core features and allow you to focus instead on tailoring the customer experience.