As the home becomes the new office, there has never been such a heavy reliance on technology to keep the notion of the ‘workplace’, and its culture, alive. Technology is the backbone of most 21st-century enterprises, supporting everything from data storage and security systems, to the software that employees use every day to get their work done. As employees continue working remotely, modern businesses understand that now, more than ever, a technology issue can quickly become a significant risk or loss of productivity.

By Chris Terndrup, Business Transformation Architect at Nexthink.

Employees are being offered a range of new collaboration tools and ways of working from home, but they have high expectations of the technology they are given to work with. Despite the desperate need for reliable and fully-functioning tech, the reality of this is far from perfect. A recent study found that 61% of employees report IT downtime as an accepted norm in their organisation, with IT disruptions occurring on average twice a week. But how are these delays impacting the average employee’s working day? To what extent are technology issues impacting employees’ mood and motivation?

The bottom line

In the 2020 Experience Report, we’ve found that employees are being set back by an average of 28 minutes every time they encounter a technology problem at work. For projects that are particularly time sensitive, technical issues like these can result in missed deadlines and a drop in work quality, putting the employee in a difficult position through no fault of their own. This could be particularly disruptive for an employee who is due to host a presentation or live webinar.

The same study gained insight directly from IT leaders, who reported an average of two technology interruptions for each employee per week. But with employees reporting only just over half of incidents (55%), the real productivity drain could be almost twice as bad as IT estimates. When these figures are extrapolated, the loss in productivity is evident. For a company of 10,000 employees, this downtime equates to a loss of £20 million per year. The impact of workforce engagement on a business’s bottom line is very real.
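To make the extrapolation concrete, the following is a minimal sketch of how lost hours could be estimated from the figures quoted above. It is not the report's own methodology: the function name and the figure of 48 working weeks per year are assumptions made purely for illustration, and the sketch stops at hours lost rather than attempting to reproduce the £20 million figure.

```python
def annual_hours_lost(employees, reported_per_week, reporting_rate,
                      minutes_per_incident, working_weeks):
    """Estimate hours lost per year to IT interruptions across a workforce."""
    # Scale the reported interruptions up to cover the ones nobody reports.
    actual_per_week = reported_per_week / reporting_rate
    minutes_per_employee_week = actual_per_week * minutes_per_incident
    hours_per_employee_year = minutes_per_employee_week * working_weeks / 60
    return employees * hours_per_employee_year


# Figures from the text: 10,000 employees, two reported interruptions a week,
# 55% of incidents reported, 28 minutes lost per incident.
# ASSUMPTION: 48 working weeks per year (not stated in the report).
hours = annual_hours_lost(10_000, 2, 0.55, 28, 48)
print(f"Estimated hours lost per year: {hours:,.0f}")
```

Multiplying the result by an organisation's own average hourly cost would give a rough monetary estimate for comparison with the figure quoted in the report.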

Keeping colleagues connected and emotionally supported

With the recent shift to remote working, the kitchens, bedrooms and living rooms of millions of employees have become their new workplace. And as they look at their companies solely through the window of their devices, technology is expected to fill this gap. Not only is the computer now the conduit to productivity – it is also the main (for many, the only) social and collaborative tool that keeps colleagues connected.

Consequently, in addition to ensuring a consistent technology experience, IT is increasingly tasked with helping to find solutions that support employees’ mental well-being. This can mean supporting the deployment of employee surveys that gauge emotional stress, or helping to measure where employees might be suffering from video call or meeting fatigue.

Be wary of demoralising employees

In some environments, new software is being released on a daily basis, and workers are forced to learn new technological procedures and functions while still under intense pressure to meet deadlines. Throw in common IT problems, such as crashes and data loss, and it’s increasingly difficult for employees to maintain a state of flow and work productively.

In contrast, the positive link between happy employees and improved productivity is proven and well documented. A recent report found reduced stress levels in 72% of workers who have access to technology that helps them to work more productively. The same study also found that automation helps to reduce workload and stress in 64% of employees.

A happy and engaged workforce can transform a business, which is why organisations need to take practical steps to improve the digital experience for employees.

Proactive IT is the solution

To deliver high-quality IT services and improve employee satisfaction, businesses should focus on proactive IT management to prevent issues before they arise. The reality is that for every end user who takes the time to report an incident, there are many more with the same problem who suffer in silence due to the perceived hassle of reporting to IT.

Organisations shifting to a more proactive approach will see an increase in visibility into the performance, behaviour and compliance of employee devices. By analysing user engagement and implementing comprehensive, real-time monitoring of devices on the network, IT teams can shed light on affected services that would otherwise stay under the radar. Not only will this help IT to provide a new level of digital satisfaction for employees, it will also have a positive knock-on effect on their level of engagement and productivity.

From the data centre to user endpoints, IT represents the nervous system for any enterprise, and every employee depends on it to be productive. Anger, frustration and wasted time are poor outcomes for technology that is fundamentally designed to improve the employee experience. It’s time for IT teams to take a more proactive approach, to eliminate issues before they arise and create a smooth digital experience for employees. After all, providing workers with fully-functioning and reliable technology can be instrumental in boosting their wellbeing and reinforcing the feeling of connectedness, particularly during this period of remote working.

Welcome to the July issue of Digitalisation World. Below, I’ve written a few observations about the state of security but, before you get to that part, I’d like to highlight the comprehensive new, or next, normal content that has been assembled for you in this issue. Recent issues of DW have covered much of the amazing work that has been done in terms of IT helping organisations cope with the world of lockdown (working from home, embracing and accelerating digital solutions and the like), and, in many cases, helped those who are actually battling COVID-19 itself, whether that be the medical practitioners who have done so much in hospitals and care homes, or those looking for a vaccine.

This issue of DW focuses on the ‘what next?’ scenario. I guess the simple answer to that question is ‘nobody knows’, but the more practical answer is that businesses of all shapes and sizes are having to make significant adjustments to the way that they interact with both their employees and customers, often with some very positive results.

Indeed, a recent press release from IT giant, Fujitsu, detailing how the company is making a massive, and seemingly permanent, change to its working practices in Japan, could well be a sign of things to come. Clearly, no two businesses are exactly the same, so there are different approaches required. I hope that the articles in this issue give you plenty of food for thought and, hopefully, some ideas as to how to move forward.

On a related note, I’m delighted to say that we’re organising a one-day virtual event on Digital Change Management, in late September. The idea is that SMEs in particular need help and guidance as to the way forward, both in terms of the technology solutions which they embrace and also, crucially, the way in which they manage their employee and customer expectations along the way. I guess ‘people, processes, products’ is a reasonable summary of the mindset required. More details of this event can be found at:

Security – the mixed message

At work, at home, on the move, we’re constantly bombarded with messages reminding us that, when it comes to using IT and communications devices, security is paramount. So important is it, that we should have separate passwords for each application and device we need to access. Never mind that we might end up with 20 or 30 passwords, all of which we must commit to memory because, of course, if we write them down somewhere, then someone might be able to steal them and then have access to our whole lives.

OK, so the reality is that we may well use the same password in multiple scenarios and we probably will write down passwords somewhere, as a reminder, albeit disguised so that they cannot immediately be ‘hacked’ if discovered. But we are told not to do this, and if any breach does occur, and it turns out we didn’t obey the ‘rules’, there are likely to be consequences. A reprimand or worse at work; financial loss at home – and maybe the bank isn’t that keen to pay any compensation.

And yet, despite this ‘draconian’ security imperative, what happens during the sign-in process to almost any application you care to mention? You input your password and you are asked ‘Save/remember password?’ Furthermore, in the updated version of an online application I’m now using, it also asks me: ‘Stay signed in?’

So, on the one hand, we are told that, on no account whatsoever must we share, write down or duplicate user names and passwords; and on the other, almost all applications encourage us to go for the easy option and have the application remember a password, or to keep us signed in. Confused?

Biometrics and/or multi-factor authentication have to make the most sense when it comes to security into the future – and let’s hope that future arrives soon. But, for the time being, it seems we are stuck in a contradictory world, where security is critical, but, because it’s a bit difficult to manage, the easy option is offered freely and, I suspect, accepted eagerly by many of us who just cannot deal with the logistics of managing multiple user names and passwords.

So, security industry, and the IT industry more generally, please do tell us, if security really does matter that much, why are lazy, insecure short cuts routinely offered when it comes to accessing many applications and devices?!

Fujitsu will further accelerate its shift to becoming a Digital Transformation (DX) company with an ambitious campaign to redefine working styles for its employees in Japan in the wake of the COVID-19 pandemic. As part of this "Work Life Shift" campaign, Fujitsu will introduce a new way of working that promises a more empowering, productive, and creative experience for employees that will boost innovation and deliver new value to its customers and society through the power of DX.

"Work Life Shift" is not only a concept of "work," but represents a comprehensive initiative to realize employee well-being by shifting preexisting notions of "life" and "work" through digital innovation. This concept demonstrates Fujitsu’s leadership in driving the digital transformation of working culture and spaces in Japan, where many companies have yet to fully embrace the potential of digital technologies to maximize efficiency and creativity in the workplace. As a pioneer in workplace reform through DX, Fujitsu was one of the first large companies in Japan to actively promote remote working practices, which it introduced companywide in Japan in 2017. For employees in Japan, this latest initiative will mark the end of the conventional notion of commuting to and from fixed offices, while simultaneously granting them a higher degree of autonomy based on the principle of mutual trust.

This process will be achieved through the measures outlined below, which will address both changes to the personnel system as well as the office environment for workers in Japan in order to accelerate their transition to a new work style. Fujitsu will additionally streamline its use of office space in Japan to reduce its current footprint by 50% by the end of fiscal 2022, introducing a hot desk system where employees are not assigned to a fixed desk.

Fujitsu will continue to pursue optimal ways of working to achieve its recently announced corporate "Purpose," making DX a reality for its customers by leveraging technology and know-how gained through internal experience as a point of departure.

Three Core Principles to Delivering a New Working Paradigm

Fujitsu's "Work Life Shift" initiative relies on three core principles to achieve this vision: "Smart Working," "Borderless Office," and "Culture Change".

1. Smart Working: realizing optimal working styles

Approximately 80,000 Japan-based Fujitsu Group employees will begin to work primarily on a remote basis to achieve a working style that allows them to flexibly use their time according to the contents of their work, business roles, and lifestyle. Fujitsu anticipates that this will not only improve productivity but also mark a fundamental shift away from the rigid, traditional concept of commuting, leading to enhanced work-life balance.

2. Borderless Office: reassessment of the ideal office environment

Fujitsu will shift away from the conventional practice of working from a fixed office towards a seamless system that allows employees to freely choose the place they want to work, including from home, hub, or satellite offices, depending on the type of work they do.

3. Culture Change: transforming corporate culture

Fujitsu will work to realize a new style of management based on employee autonomy and trust to maximize team performance and improve productivity. In addition, Fujitsu will continue to seek ways to optimize working styles by continuously listening to the voices of its employees regarding the dramatic shift toward physically separated working spaces, and by leveraging a digital platform that visualizes and analyzes working conditions.

Study finds that employees are being overlooked when businesses adopt new technology.

Lenovo has unveiled a new study which found that organisations are placing business and shareholder goals above employee needs when adopting new technologies. The research, conducted among 1,000 IT managers across EMEA, found that just 6% of IT managers consider users as their top priority when making technology investments. This approach to IT adoption is ultimately leading to productivity being stifled.

When businesses implement new technologies without considering the human impact, many employees become overwhelmed due to the complexity and pace of change, with 47% of IT managers reporting that users struggle to embrace new software.

With all industries having to adapt to the ‘next normal’ and take stock of their responsibility – to employees, to the environment and to the wider world – Lenovo encourages businesses to place the needs of their people at the heart of IT decisions.

Untapped potential

There is an understandable desire for businesses to embrace transformational technologies, such as Artificial Intelligence and the Internet of Things, as soon as possible. The benefits these promise – innovation, improved productivity, reduced cost and, most importantly, a better customer experience – are tantalising for any organisation, but their true potential is completely untapped if adoption is purely led by business goals.

While successfully implemented technology should act as an enabler for employees and businesses to achieve greater things, a poor strategy can see technology become an inhibitor – hampering users whose needs have not been carefully considered and catered for. Almost half (48%) of respondents reported a negative outcome where technology implementations have actively inhibited their teams’ ability to operate.

Businesses need to focus on people, offering everything from comprehensive training to change management, while ensuring leadership KPIs, robust policy and strategy, and thorough rollout analyses are aligned with a people-first ethos. Businesses should also ask people-centric questions during any adoption process: is this technology intuitive, will it solve rather than create challenges for employees, and will users get a good experience? By taking these steps, businesses can realise the benefits new tools promise, seeing greater productivity and driving innovation. In fact, 52% of IT managers are optimistic about emerging tech’s ability to deliver improved productivity.

However, with 21% of users reporting new technology has actually slowed down processes, it is imperative for businesses to embrace the right technology at the right time. It’s also vitally important businesses consider everyone in the organisation – from those who use it every day, to the IT teams implementing it, to the boardroom decision makers.

The goal should be to adopt smarter technology that is always connected, seamless, agile, flexible, easy to collaborate with, adaptive to needs, reliable and high-performance, with enhanced security and privacy. Not only that, but it should be suited to the needs of everyone in an organisation.

Responsible business in the ‘next normal’

Organisations are currently re-evaluating how they operate in order to thrive in the next normal. Being a responsible business must now be a priority – placing human impact on the same level as achieving business goals. With 62% of IT managers reporting their investment decisions are entirely business-centric, it will require a fundamental mindset shift for many businesses.

However, as flexible working policies are embraced in order to provide more support to employees during the COVID-19 outbreak, a people-first approach is beginning to emerge, with 70% of respondents seeing more emphasis within their organisation on responsible business.

Giovanni Di Filippo, President of Lenovo’s Data Center Group, EMEA, says: “Times are changing rapidly, not only for businesses, but the technology industry as a whole. Stripped of office walls, we are seeing organisations place greater emphasis on the wellbeing of their employees, and it’s heartening to see this shift in priorities from being all about the bottom line. But the study shows that this is only the beginning.”

“If there is a change of heart and mind within the industry, taking a people-first approach to IT adoption, we will see positive change for both organisations and wider society. Happier employees, greater productivity and a faster pace of innovation – these are the benefits of placing people at the centre of IT decisions.”

Time to think human

IT vendors whose portfolio can empower businesses to think human will help employees embrace change and enable them to be more productive. Such vendors do this by having an open mindset in working with other organisations, thinking about customer outcomes and not just adoption, reducing the burden on customers as well as the IT department, and helping to put usability and experience first.

Giovanni Di Filippo says, “For too long IT decisions have placed pure cost above a business’s most valuable asset: people. It’s people that change the world, and we know that data and technology cannot be transformative without humans bringing it to life and giving it purpose.”

“We want businesses to think human by investing in ‘Smarter Technology for All’. As for vendors – it’s time to think beyond what they make and consider who they make it for. If people are put first, we know the benefits and desired company outcomes will be great.”

Pandemic exposes gaps in existing systems and increases urgency for digital transformation.

More than one in three business decision makers have admitted to damaging customer trust and negatively impacting their own brand during the COVID-19 crisis, as a result of communication failures, according to research from Pegasystems. The global study, which was conducted by research firm Savanta, explored the effect the global pandemic has had on businesses and their ability to adapt in a time of crisis.

Thirty-six percent of respondents said they actually lost customers during the pandemic due to failings in their communications, while a similar number (37%) admitted to communicating at least one message to customers that was badly received and damaged their brand reputation. More than half (54%) of respondents conceded they should have done more to help customers during the crisis.

The study also revealed how the pandemic increased the urgency for digital transformation (DX), with 91% of all respondents admitting that changes are now needed for their business to survive in a post-crisis world. Almost three quarters (74%) of decision makers reported that the pandemic exposed more gaps in their business operations and systems than they anticipated. Only 6% reported no gaps in their existing systems during the crisis.

As a result, 62% said they will increase the priority level of DX within their organization, with 58% increasing the speed of existing DX projects and 56% increasing the overall level of DX investment. Seventy-one percent said the crisis accelerated their digital projects aimed at better engaging with customers. The top three most popular DX projects needed to prepare for future crises were: cloud-based systems (48%), CRM (41%), and AI-driven analytics and decisioning (37%).

Other findings suggest the COVID-19 experience could have some positive outcomes:

“What this research makes clear is that digital transformation can no longer be seen as a ‘nice to have’ for today’s businesses as they face a radically changed landscape,” said Don Schuerman, CTO and vice president of product marketing, Pegasystems. “Now, it’s become a top priority and organizations are beginning to wake up to the fact that ineffective communication with their customers in uncertain times can do them serious damage.”

“Today’s business leaders find themselves at a crossroads. The question for them is not ‘should I invest in digital transformation?,’ but ‘where do I start and how fast can I make it happen?’” continued Mr. Schuerman. “If today’s organizations are to truly learn the lessons of the current crisis and future proof themselves against mass-scale events, then they need to understand that the customer must be put at the center of everything they do. Unfortunately, for many, it appears that it’s a lesson that may have been learned in the hardest way possible.”

Boomi, a Dell Technologies business, has published a new global survey, commissioned with Vanson Bourne, which reveals that although organizations are reaping the rewards of IT modernization, digital transformation and innovation, there’s still more work to do.

Now more than ever, technology supports and drives every business, from banks to retailers, whether customer-facing or internally focused. Companies that find ways to maximize their budget when investing in digital strategies and technologies have the opportunity to improve their ROI – read about how one company improved by more than 1,000%.

The report, “The State of Modernization, Transformation, and Innovation in the Digital Age,” outlines that 59 percent of survey respondents said effectively using technology has been the key to transformational success. However, 1 in 2 decision makers admit their company isn’t innovating at a competitive rate. Organizations still face multiple challenges to more quickly and efficiently roll out their modernization, innovation and transformation programs.

The top barriers for digital transformation and innovation efforts include insufficient in-house skills (41%) followed by a restrictive budget (33%).

“The next decade will undergo an even more rapid pace of change than the 2000s and 2010s,” said Chris Port, Chief Operating Officer for Boomi. “Though modernization, transformation, and innovation have paid dividends in recent years, organizations can’t afford to rest on their laurels. Especially now. Not when business priorities, drivers of change, and technology needs are rapidly converging, as reflected in this survey.”

The Vanson Bourne survey went on to uncover:

Additional data revealed:

“Employees drive every business process and interaction. Investing in your workforce today by improving their training, workflow, and resources with technology will position your company as the one to beat,” continued Port. “It takes the right kind of culture and the right people to continuously out-change and get ahead of the competition. Modernization and innovation needs to start today and then never stop.”

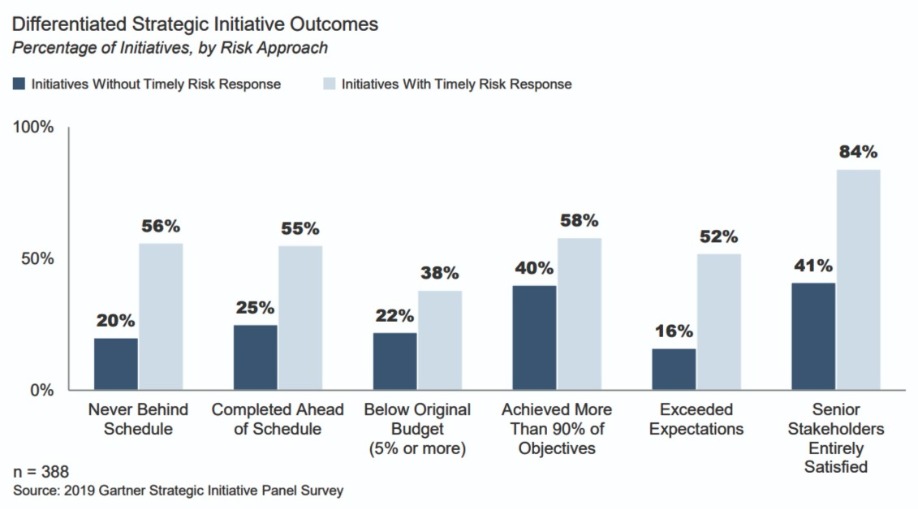

A Gartner, Inc. survey of more than 382 strategic initiative leaders quantified the cost of missing risks in strategic initiatives. For an average $5 billion revenue company it amounts to $99 million annually in opportunity cost from delayed new product launches alone.

Initiatives where risks are not surfaced and mitigated in a timely fashion are delayed by an average of five weeks per year.

Moreover, in a related survey of 111 enterprise risk management (ERM) leaders, just 6% felt that their organization’s risk response was timely during strategic initiatives.

“These findings show that risk response usually is not timely,” said Emily Riley, senior principal, research in the Gartner Audit and Risk practice. “But they also show the huge cost of an untimely response. The recent COVID-19 pandemic illustrates the need for an agile response to unexpected risks.”

Gartner experts looked at how strategic initiatives performed against several measures and how this was affected by the timeliness of risk responses (see Figure 1).

“The performance benefits of a timely risk response stand out clearly,” said Ms. Riley. “There’s a business opportunity here because ERM leaders expressed their desire to be more involved in supporting strategic initiative success.”

Seventy-six percent of ERM heads said they wanted to increase the proportion of their time they spend on strategic initiatives. More than half said that their involvement should come at the earliest stages of a strategic initiative. Yet currently just 11% feel they are involved before an initiative’s execution.

Information Roadblocks

“The problem we often see is initiative teams are not getting the information they need to act on risks in a timely manner,” said Ms. Riley. “This is one area where ERM teams can add value.”

This can have several root causes. Sometimes many individuals are involved in an initiative without clear accountability to one another. There is also often a sensitivity to candidly sharing information about threats to high stakes projects. Another common cause is a focus on performance metrics that overshadows forward-looking considerations.

“ERM’s role should be to connect initiative teams with subject matter experts, to facilitate opportunities for anonymous sharing of concerns, and to develop risk indicators that consider leading indicators of project success,” said Ms. Riley.

Worldwide container management revenue will grow strongly from a small base of $465.8 million in 2020, to reach $944 million in 2024, according to a new forecast from Gartner, Inc. Among the various subsegments, public cloud container orchestration and serverless container offerings will experience the most significant growth.
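For readers who want to gauge what "strong growth" means here, the two revenue figures can be turned into an implied compound annual growth rate (CAGR). The sketch below is illustrative arithmetic only: the `cagr` helper is a hypothetical name, and Gartner does not state this rate in the forecast as quoted.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Revenue figures from the forecast, in millions of US dollars.
rate = cagr(465.8, 944.0, 2024 - 2020)
print(f"Implied CAGR 2020-2024: {rate:.1%}")
```

On these figures, the implied rate works out at roughly 19% a year, which would mean revenue slightly more than doubling over the four-year period.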

This is the first time Gartner has published a forecast for container management, in response to the increasing importance of the underlying technology.

“There has been considerable hype and a high level of interest in container technology, but a lower level of production deployments to date,” said Michael Warrilow, research vice president at Gartner.

Containers have become popular because they provide a powerful tool for addressing several critical concerns of application developers, including the need for faster delivery, agility, portability, modernization and life cycle management.

Gartner predicts that by 2022, more than 75% of global organizations will be running containerized applications in production, up from less than 30% today.

As a result of the growing use of containers, enterprise demand for container management is increasing. Container management provides software and/or services that support the management of containers, at scale, in production environments.

Popularity of cloud-native applications behind container management growth

The forecast growth in enterprise adoption of container management indicates the intrinsic appeal of cloud-native architecture, according to Gartner.

“Understanding of ‘cloud-native’ varies, but it has significant potential benefits over traditional, monolithic application design, such as scalability, elasticity and agility,” said Mr. Warrilow. “It is also strongly associated with the use of containers.”

Recessed economic conditions to curb growth in medium-term

Several factors will restrict adoption among organizations developing or modernizing custom applications. Despite the need to support digital transformation, initiatives will be curbed by recessed economic conditions for at least the medium term, as organizational priorities shift to cost optimization.

Gartner expects that up to 15% of enterprise applications will run in a container environment by 2024, up from less than 5% in 2020, hampered by application backlog, technical debt and budget constraints.

“The bottleneck will be the speed at which applications can be refactored and/or replaced,” Mr. Warrilow said.

Containers will fuel an open ecosystem

Direct revenue for container management software and services will remain a small portion of the container ecosystem. Additional revenue will come from a range of adjacent segments that are not included in Gartner’s container management forecast. This includes application development, managed services, on-premises hardware and infrastructure as a service (IaaS) among other segments.

For example, the IaaS revenue associated with container management is expected to reach $1 billion before 2023. Many of the adjacent segments are already reported in existing Gartner forecasts.

“Although the direct incremental revenue may be less than many expect, containers may have a different role to play,” said Mr. Warrilow. “Containers could ultimately fuel an open ecosystem similar to Linux.”

Security and Risk management spending growth to slow

Worldwide spending on information security and risk management technology and services will continue to grow through 2020, although at a lower rate than previously forecast, according to Gartner, Inc.

Information security spending is expected to grow 2.4% to reach $123.8 billion in 2020 (see Table 1). This is down from the 8.7% growth Gartner projected in its December 2019 forecast update. The coronavirus pandemic is driving short-term demand in areas such as cloud adoption, remote worker technologies and cost saving measures.

“Like other segments of IT, we expect security will be negatively impacted by the COVID-19 crisis,” said Lawrence Pingree, managing vice president at Gartner. “Overall we expect a pause and a reduction of growth in both security software and services during 2020.”

“However, there are a few factors in favor of some security market segments, such as cloud-based offerings and subscriptions, being propped up by demand or delivery model. Some security spending will not be discretionary and the positive trends cannot be ignored,” he said.

Table 1

Worldwide Security Spending by Segment, 2019-2020 (Millions of U.S. Dollars)

| Market | 2019 | 2020 | Growth (%) |
| --- | --- | --- | --- |
| Application Security | 3,095 | 3,287 | 6.2 |
| Cloud Security | 439 | 585 | 33.3 |
| Data Security | 2,662 | 2,852 | 7.2 |
| Identity Access Management | 9,837 | 10,409 | 5.8 |
| Infrastructure Protection | 16,520 | 17,483 | 5.8 |
| Integrated Risk Management | 4,555 | 4,731 | 3.8 |
| Network Security Equipment | 13,387 | 11,694 | -12.6 |
| Other Information Security Software | 2,206 | 2,273 | 3.1 |
| Security Services | 61,979 | 64,270 | 3.7 |
| Consumer Security Software | 6,254 | 6,235 | -0.3 |
| Total | 120,934 | 123,818 | 2.4 |

Due to rounding, some figures may not add up precisely to the totals shown.

Source: Gartner (June 2020)
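As a quick cross-check of Table 1, the growth column can be recomputed from the 2019 and 2020 figures. This is a minimal sketch rather than Gartner's own calculation: the `spending` dictionary and `growth_pct` helper are illustrative names, and because the published dollar figures are rounded, a few recomputed rates may differ from the table in the last digit.

```python
# Segment spending from Table 1, in millions of US dollars: (2019, 2020).
spending = {
    "Application Security": (3095, 3287),
    "Cloud Security": (439, 585),
    "Data Security": (2662, 2852),
    "Identity Access Management": (9837, 10409),
    "Infrastructure Protection": (16520, 17483),
    "Integrated Risk Management": (4555, 4731),
    "Network Security Equipment": (13387, 11694),
    "Other Information Security Software": (2206, 2273),
    "Security Services": (61979, 64270),
    "Consumer Security Software": (6254, 6235),
}


def growth_pct(before, after):
    """Year-on-year change expressed as a percentage of the earlier value."""
    return (after - before) / before * 100


for market, (y2019, y2020) in spending.items():
    print(f"{market}: {growth_pct(y2019, y2020):+.1f}%")

# Sum the segments to compare against the published totals.
total_2019 = sum(y2019 for y2019, _ in spending.values())
total_2020 = sum(y2020 for _, y2020 in spending.values())
print(f"Total: {total_2019:,} -> {total_2020:,} "
      f"({growth_pct(total_2019, total_2020):+.1f}%)")
```

The summed 2020 total comes out one unit above the published total, which is consistent with the rounding note beneath the table.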

The ongoing shift to a cloud-based delivery model makes the security market somewhat more resilient to a downturn, with cloud-based delivery accounting for an average of 12% of overall security deployments in 2019, according to Gartner research. Cloud-based delivery models have reached well above 50% of deployments in markets such as secure email and web gateways.

Network security equipment, including firewall equipment and intrusion detection and prevention systems (IDPS), will be most severely impacted by spending cuts this year. Consumer spending on security software is also forecast to decline in 2020.

Global Workplace Restrictions Will Expand Cloud Conferencing User Base Throughout 2020, But Growth Will Taper Off in 2021

Global end-user spending on cloud-based web conferencing solutions will grow 24.3% in 2020, according to the latest forecast by Gartner, Inc.

Global workplace restrictions spurred by the coronavirus pandemic will expand the cloud conferencing user base throughout 2020, but growth will taper off in 2021 as the lasting effects of a remote workforce render conferencing services commonplace.

End-user spending on cloud-based conferencing is projected to reach $4.1 billion in 2020, up from $3.3 billion in 2019. It is the second-fastest growing category in the unified communications (UC) market, behind spending on cloud-based telephony, which is forecast to reach $16.8 billion in 2020.

Overall UC market end-user spending is projected to decline 2.7% in 2020 and return to growth in 2021, as cloud telephony initiatives regain momentum.

“Cloud collaboration investments will buoy the UC market downturn as remote work initiatives spurred by the COVID-19 outbreak drive conferencing adoption and market growth,” said Megan Fernandez, senior principal analyst at Gartner.

Gartner predicts that by 2024, in-person meetings will account for just 25% of enterprise meetings, a drop from 60% prior to the pandemic, driven by remote work and changing workforce demographics. As a result, there is a higher demand for convenient access to videoconferencing and other collaboration tools.

Cloud Telephony Adoption Will Experience a ‘Push and Pull’

In 2020, new premises-based telephony investments will drop sharply as existing installed telephony system life spans are stretched and investment priorities shift to the cloud.

“Cloud telephony adoption will experience a ‘push and pull’ from competing market pressures,” said Ms. Fernandez. “Overall, the market will be negatively impacted by organizations that were planning near-term premises to cloud migrations but are now extending legacy life spans instead.”

However, cloud telephony will experience a boost once its benefits are recognized, namely the ease with which it can accommodate a changing workforce, update and extend existing features, and integrate with adjacent applications.

The cloud telephony market is projected to grow 8.9% in 2020 and 17.8% in 2021.

“As a result of workers employing remote work practices in response to COVID-19 office closures, there will be some long-term shifts in conferencing solution usage patterns. Policies established to enable remote work and experience gained with conferencing service usage during the outbreak is anticipated to have a lasting impact on collaboration adoption,” said Ms. Fernandez.

According to the June update of the Worldwide Black Book Live Edition published by International Data Corporation (IDC), European ICT spending will decline by 3.7% year on year in 2020 to total $897.08 billion. However, slight recovery of the ICT market is expected in 2021, when ICT spending in Europe will increase by 1.9% year on year, in line with the gradual recovery in macroeconomic conditions and consumer confidence.

All hardware markets will continue on a negative trajectory in 2020, with overall hardware spending declining by 4.07% year on year. Spending on infrastructure will be most affected, due to reduced business activity, focus on capital preservation, and expense reduction.

"During the Covid-19 crisis, there has been a boost in adoption of OPEX-based consuption models, which will drive spending on IaaS. The market is forecast to grow in the double-digits in both the short and long term," says Lubomir Dimitrov, senior research analyst with IDC's Customer Insights & Analysis team. Although demand in the PC market increased during the second quarter of 2020, annual spending in the overall hardware market will decline due to the global economic challenges among both consumers and businesses, resulting from the impact of the pandemic.

In 2020, the European IT services market is expected to decline by 4.2% year on year, as the current economic uncertainty is causing delays or reductions in existing projects, and investments planned pre-crisis are being postponed. Next year, the services market is expected to rebound slightly, with negligible annual growth of around 1%.

Spending on software in Europe is expected to slow down in 2020, declining by 2.61% year on year, as organizations try to conserve resources and place on hold any projects that are not crucial for maintaining core business activities. On the other hand, the increased adoption of the remote working/work from home model among public and private organizations gave a boost to spending on collaboration and communication tools, as well as on software security, as protecting devices and expanded networks became an urgent need.

The European software market will rebound slightly next year, with companies renewing some previously delayed digital transformation initiatives, particularly those relating to AI, analytics, and automation of business processes.

Cloud IT infrastructure spending continues to grow

According to the International Data Corporation (IDC) Worldwide Quarterly Cloud IT Infrastructure Tracker, vendor revenue from sales of IT infrastructure products (server, enterprise storage, and Ethernet switch) for cloud environments, including public and private cloud, increased 2.2% in the first quarter of 2020 (1Q20) while investments in traditional, non-cloud, infrastructure plunged 16.3% year over year.

The broadening impact of the COVID-19 pandemic was the major factor driving infrastructure spending in the first quarter. Widespread lockdowns across the world and staged reopening of economies triggered increased demand for cloud-based consumer and business services driving additional demand for server, storage, and networking infrastructure utilized by cloud service provider datacenters. As a result, public cloud was the only deployment segment escaping year-over-year declines in 1Q20 reaching $10.1 billion in spend on IT infrastructure at 6.4% year-over-year growth. Spending on private cloud infrastructure declined 6.3% year over year in 1Q to $4.4 billion.

IDC expects that the pace set in the first quarter will continue through the rest of the year as cloud adoption continues to get an additional boost driven by demand for more efficient and resilient infrastructure deployment. For the full year, investments in cloud IT infrastructure will surpass spending on non-cloud infrastructure and reach $69.5 billion, or 54.2% of the overall IT infrastructure spend. Spending on private cloud infrastructure is expected to recover during the year and will compensate for the first-quarter declines, leading to 1.1% growth for the full year. Spending on public cloud infrastructure will grow 5.7% and will reach $47.7 billion, representing 68.6% of the total cloud infrastructure spend.
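The shares quoted above also imply a few figures that the forecast does not state directly. The Python sketch below derives them from the numbers in the paragraph. The derived totals are back-of-the-envelope estimates based on rounded inputs, not figures published by IDC, and the variable names are illustrative.

```python
# Inputs quoted in the text (billions of U.S. dollars, full-year 2020 forecast).
CLOUD_SPEND = 69.5          # total cloud IT infrastructure
CLOUD_SHARE_PCT = 54.2      # cloud as a share of all IT infrastructure spend
PUBLIC_CLOUD_SPEND = 47.7   # public cloud portion of cloud spend


def implied_total(part, share_pct):
    """Total implied by a part and the percentage share it represents."""
    return part / (share_pct / 100)


# Derived estimates (assumption: the quoted figures are mutually consistent).
total_infrastructure = implied_total(CLOUD_SPEND, CLOUD_SHARE_PCT)
non_cloud_spend = total_infrastructure - CLOUD_SPEND
private_cloud_spend = CLOUD_SPEND - PUBLIC_CLOUD_SPEND
public_share_pct = PUBLIC_CLOUD_SPEND / CLOUD_SPEND * 100

if __name__ == "__main__":
    print(f"Implied total IT infrastructure spend: ${total_infrastructure:.1f}B")
    print(f"Implied non-cloud spend:               ${non_cloud_spend:.1f}B")
    print(f"Implied private cloud spend:           ${private_cloud_spend:.1f}B")
    print(f"Public cloud share of cloud spend:     {public_share_pct:.1f}%")
```

The recomputed public cloud share matches the 68.6% quoted in the forecast, which suggests the rounded inputs are internally consistent.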

The disparity in 2020 infrastructure spending dynamics for cloud and non-cloud environments will ripple through all three IT infrastructure domains – Ethernet switches, compute, and storage platforms. Within cloud deployment environments, compute platforms will remain the largest category of spending on cloud IT infrastructure at $36.2 billion, while storage platforms will be the fastest-growing segment, with spending increasing 8.1% to $24.9 billion. The Ethernet switch segment will grow at 3.7% year over year.

At the regional level, year-over-year changes in vendor revenues in the cloud IT Infrastructure segment varied significantly during 1Q20, ranging from 21% growth in China to a decline of 12.1% in Western Europe.

Top Companies, Worldwide Cloud IT Infrastructure Vendor Revenue, Market Share, and Year-Over-Year Growth, Q1 2020 (Revenues are in Millions)

| Company | 1Q20 Revenue (US$M) | 1Q20 Market Share | 1Q19 Revenue (US$M) | 1Q19 Market Share | 1Q20/1Q19 Revenue Growth |
| --- | --- | --- | --- | --- | --- |
| 1. Dell Technologies | $2,535 | 17.4% | $2,509 | 17.6% | 1.0% |
| 2. HPE/New H3C Group (b) | $1,495 | 10.3% | $1,695 | 11.9% | -11.8% |
| 3T. Inspur/Inspur Power Systems (a, c) | $868 | 6.0% | $636 | 4.5% | 36.4% |
| 3T. Cisco (a) | $847 | 5.8% | $1,038 | 7.3% | -18.4% |
| 5. Lenovo | $674 | 4.6% | $670 | 4.7% | 0.5% |
| ODM Direct | $4,726 | 32.5% | $4,422 | 31.1% | 6.9% |
| Others | $3,390 | 23.3% | $3,258 | 22.9% | 4.1% |
| Total | $14,535 | 100.0% | $14,228 | 100.0% | 2.2% |

IDC's Quarterly Cloud IT Infrastructure Tracker, Q1 2020

Notes:

a. IDC declares a statistical tie in the worldwide cloud IT infrastructure market when there is a difference of one percent or less in the vendor revenue shares among two or more vendors.

b. Due to the existing joint venture between HPE and the New H3C Group, IDC reports external market share on a global level for HPE as "HPE/New H3C Group" starting from Q2 2016 and going forward.

c. Due to the existing joint venture between IBM and Inspur, IDC reports external market share on a global level for Inspur and Inspur Power Systems as "Inspur/Inspur Power Systems" starting from 3Q 2018.
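Note (a) explains why Inspur and Cisco share third place. As a rough illustration, the Python sketch below applies that rule to the 1Q20 market shares of the five named vendors. It assumes "one percent or less" means one percentage point of share; the function name and the small floating-point tolerance are illustrative choices, not IDC's method.

```python
# 1Q20 market shares (%) of the named vendors, copied from the table.
# The aggregate rows (ODM Direct, Others) are left out because they are not single vendors.
SHARES_1Q20 = {
    "Dell Technologies": 17.4,
    "HPE/New H3C Group": 10.3,
    "Inspur/Inspur Power Systems": 6.0,
    "Cisco": 5.8,
    "Lenovo": 4.6,
}


def statistical_ties(shares, threshold=1.0):
    """Return vendor pairs whose shares differ by `threshold` points or less.

    Assumption: the threshold is measured in percentage points of market share.
    """
    names = list(shares)
    ties = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            # A tiny tolerance guards against floating-point noise at the boundary.
            if abs(shares[first] - shares[second]) <= threshold + 1e-9:
                ties.append((first, second))
    return ties


if __name__ == "__main__":
    for first, second in statistical_ties(SHARES_1Q20):
        print(f"Statistical tie: {first} and {second}")
```

With these shares only the Inspur and Cisco pair falls within the threshold; Cisco and Lenovo are 1.2 points apart and so are ranked separately.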

Long term, IDC expects spending on cloud IT infrastructure to grow at a five-year compound annual growth rate (CAGR) of 9.6%, reaching $105.6 billion in 2024 and accounting for 62.8% of total IT infrastructure spend. Public cloud datacenters will account for 67.4% of this amount, growing at a 9.5% CAGR. Spending on private cloud infrastructure will grow at a CAGR of 9.8%. Spending on non-cloud IT infrastructure will rebound somewhat in 2020 but will continue declining with a five-year CAGR of -1.6%.
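The compound annual growth rate quoted here can be unpacked with a few lines of Python. The sketch below shows the standard CAGR formula and uses it to back out the starting value implied by a 9.6% rate over five years. It assumes the five-year window runs from 2019 to 2024, which the text does not state explicitly, so the implied base is an estimate rather than an IDC figure.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values, as a fraction."""
    return (end_value / start_value) ** (1 / years) - 1


def implied_start(end_value, rate, years):
    """Starting value implied by an end value and a compound growth rate."""
    return end_value / (1 + rate) ** years


# Figures quoted in the text (billions of U.S. dollars).
CLOUD_2024 = 105.6
CLOUD_CAGR = 0.096
YEARS = 5  # assumption: the forecast window is 2019 to 2024

if __name__ == "__main__":
    base = implied_start(CLOUD_2024, CLOUD_CAGR, YEARS)
    print(f"Implied starting spend: ${base:.1f}B")
    print(f"Round-trip CAGR check:  {cagr(base, CLOUD_2024, YEARS):.1%}")
    # Public cloud is quoted at 67.4% of the 2024 total.
    print(f"Public cloud in 2024:   ${CLOUD_2024 * 0.674:.1f}B")
```

Under that assumption the implied base is a little under $67 billion, which sits plausibly below the $69.5 billion forecast for 2020.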

External enterprise storage market shrinks

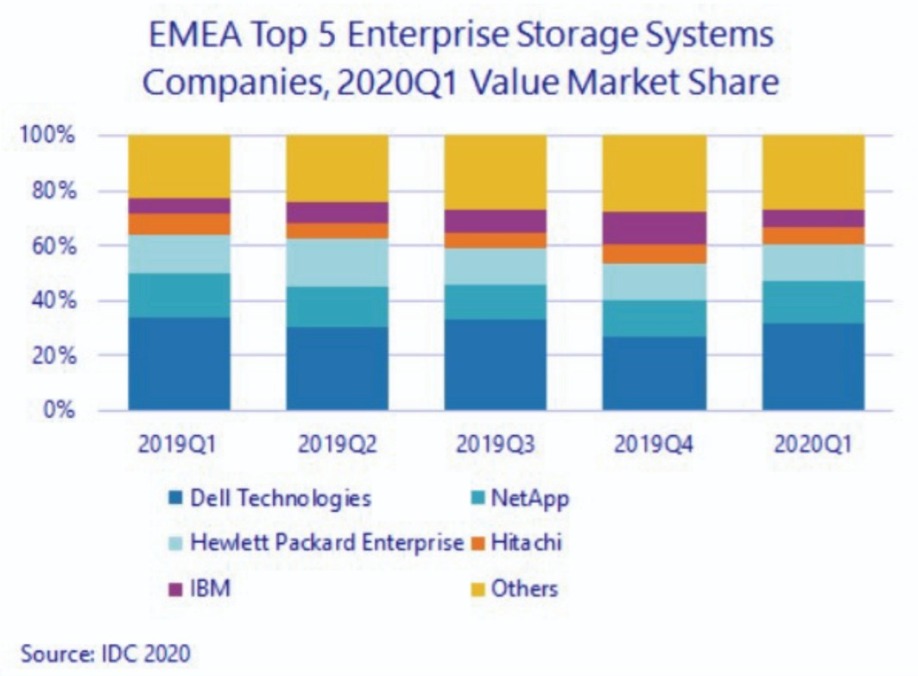

EMEA external storage systems market value in 2020Q1 was down 10.7% year on year in dollars and 8.1% in euros, according to International Data Corporation's (IDC) EMEA Quarterly Disk Storage Systems Tracker.

Once again, the quarter saw marked differences across subregions, with Western Europe down 16.5% year on year and CEMA up 9% (both in dollars).

On a bright note, the all-flash-array (AFA) segment retained its steady path to growth at 4% year on year, further increasing its share of the external storage market value to 47%. The increase happened at the expense of both hybrid flash arrays (HFAs), down by almost 25% year on year and covering 36% of value shipments, and HDD-only arrays, down by roughly 11% and representing only 17% of shipment value.

"Although IDC expects the market decline to persist for the remaining of the year, the COVID-19 pandemic will also be remembered as a watershed moment for the datacenter sector, accelerating the transition to public cloud and pay-per-use consumption models for on-premises equipment, while also driving more investment in digital transformation (DX)", said Silvia Cosso, Associate Research Director, Storage Systems, IDC Western Europe.

Western Europe

The Western European External Storage market value was down again by 16.5% in dollars (-14.1% in euros).

All-flash arrays jumped to 49% of total value, recording a small 1.6% decrease year on year and therefore proving to be considerably more resilient than HFA and HDD-only arrays.

The German market returned to positive territory, but it was not enough to compensate for the heavy declines in the other major countries such as the U.K.

"Despite the decline, expenditure in AFA and HCI (hyperconverged) systems has proven more resilient, and, while in major markets some unbudgeted investments have understandably been put on hold, the general consensus is that expenditure in certain areas such as VDI deployments, collaborative tools and business continuity has been an important driver for the quarter", said Cosso. (see also IDC's " How will COVID-19 Affect IT Infrastructure Spending in 2020? ")

The outlook for the full year is still negative, with the stringent lockdown measures which have brought recession in many European markets expected to take a toll during the second quarter of 2020, before seeing a rebound in 2021.

Central and Eastern Europe, the Middle East, and Africa

Despite the start of the COVID-19 pandemic, the storage market value in Central and Eastern Europe, the Middle East, and Africa (CEMA) grew by 9% YoY to reach $514.1 million in the first three months of 2020. This result was better than expected, fueled mostly by large telco investments in Russia and data centre projects in the Middle East that were executed before rigid restrictions were implemented across the region.

AFA and HDD-only systems recorded growth at the expense of hybrid storage systems. All-flash HCI continued to be the fastest-growing segment of the market, recording a solid double-digit increase, albeit from a small base. On the other hand, purpose-built backup solutions sustained HDD growth as backup and recovery became crucial in remote working and collaboration environments.

"While storage-related projects were still in the pipeline in 2020Q2, a negative repercussion is expected till the end of the year, with worsening GDPs and business sentiment and limited budgets," said Marina Kostova, research manager, Storage Systems, IDC CEMA. "Only cost-optimized solutions supporting mission-critical primary workloads as well as backup, DR, VDI, and collaboration will witness accelerating growth compared to the declining overall storage systems market. The continuing expansion of hyperscalers in CEMA underlines the fast move towards a cloud consumption model which will further restrain spending on infrastructure in the long-term no matter the expected recovery after 2020."

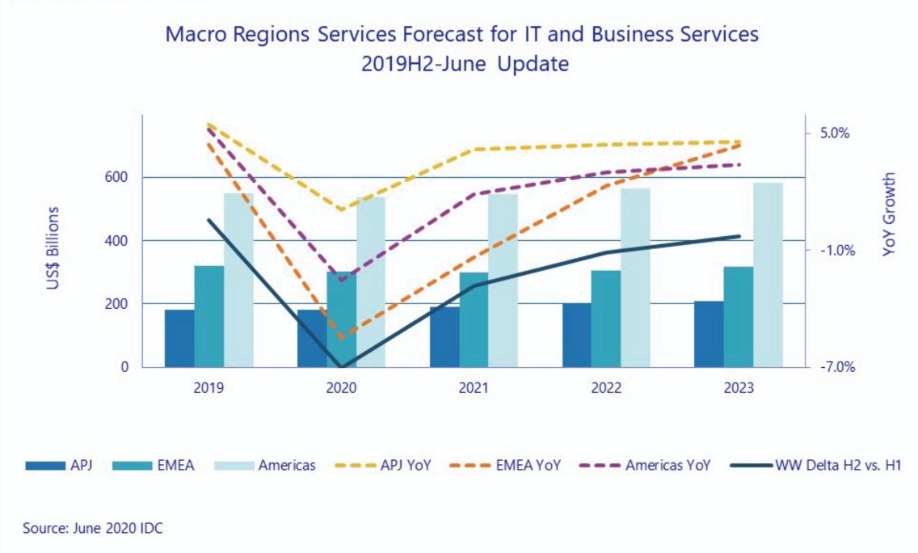

Worldwide Services market growth disrupted

In April, International Data Corporation (IDC) forecast worldwide IT services and business services revenue would decline 1.1% year over year in 2020 due to the impact of the COVID-19 pandemic. In a new update to the Worldwide Semiannual Services Tracker, the market is now forecast to shrink further, declining 2.8% this year. However, the 2021 growth rate has improved slightly from 1% to 1.4%, reflecting IDC's optimism for a market rebound.

The newest forecast is based on the Economist Intelligence Unit's May forecast for worldwide GDP in 2020, which will likely contract by around 4.4%, more than twice as much as the March forecast. After almost four months of shutdowns across most developed markets, the economic downturn in the first half of 2020 will be so severe that even a robust recovery in the next six months will not offset it.

IDC's view on the supply side remains largely intact. Even as the major delivery countries (India, the Philippines, Czech Republic, etc.) were shutting down, services providers adapted quickly to working from home at scale and hatched contingency security plans. Buyers also have largely been quick to sign off on these plans. The transition has been a predominantly smooth one without major disruptions. Most providers see the COVID-19 crisis tipping organizations and consumers over to the digital world – a net positive in the long run.

The downward adjustment in market size was largely attributed to a bigger demand-side shock. The scale and duration of the lockdowns are better reflected in these updated economic metrics. All major markets, according to May's GDP forecast, are suffering greater economic slowdowns or steeper declines compared to projections made in March.

The Americas services markets are now forecast to decline 2.5% year over year in 2020, compared to the March forecast of nearly flat growth. Mid- and long-term prospects remain unchanged, and the region is expected to return to growth of 2% in 2021 and more than 3% in subsequent years. In the near term, the economic outlooks for Canada, Latin America, and the USA have all worsened. The US unemployment rate rose and Q1 GDP growth was particularly lackluster considering the shutdowns affected just one month in the quarter (March). We are seeing buyers pulling back or deferring projects (IT and business) to save cash. As a result, IDC lowered the US growth forecast to -2.7% in 2020. The project-oriented markets, particularly business consulting, bore the brunt as large US consultancies have already announced workforce reductions worldwide. IDC also tempered the 2020 outlook for managed services by roughly 1%, now down 1.6%. The outlook for the support services market is unchanged and remains at -1.0%, with growth in hardware and software support offset by sharp declines in training and education. We still believe that outsourcing and support services are driven more by structural market forces than the demand shock. Overall, except for business consulting, all US foundation markets are forecast to outpace projected 2020 GDP growth.

Services markets in Canada also saw a sharper decline in 2020 and weaker recovery is expected across most foundation markets in the coming years, reflecting the gloomier economic outlook as the shutdown drags on. Latin America will continue to grow but will slump to less than 2% for 2020 with the outlook remaining unchanged from the March forecast.

IDC has also updated its forecasts moderately in other regions due to changing economic outlooks. Western Europe will decline 5.2% year over year in 2020, a downward revision of almost one percentage point from the March forecast. The worsening pandemic situation and subsequent longer-than-expected shutdowns will inevitably impact short-term revenue. However, as we are now less uncertain about the future and more confident of the path to recovery, the mid- to long-term growth prospect was adjusted by increasing 2021 and 2022 growth rates by 1.5 to 2.0 percentage points per year to -1.8% in 2021 and +2% in 2022.

Similarly, Central & Eastern Europe's 2020 short-term outlook was lowered while the mid- and long-term growth improved. This was largely due to changing conditions in Russia related to the pandemic and oil prices, and the availability of additional market data in smaller markets, such as the Baltics and central Asia.

The Middle East & Africa market will contract by more than 5% in 2020 as major markets in the region are also flanked by shutdowns and the collapse in oil prices. We are still optimistic about a quick recovery and expect budgets and spending to return.

In Asia/Pacific, a few key markets declined further since March, including Japan, Australia, and India, and the forecast was updated to reflect this. Japan will contract by 2.8% in 2020, revised downward by more than 1 percentage point, with more economic metrics, such as weaker consumer spending in April and May, pointing to a weaker economy. We still expect the China market to deliver growth of 2.7% for 2020. Other major markets (Australia, India, South Korea, etc.) are slowing down dramatically in light of worsening economies. Overall, the Asia/Pacific region will slow to just 1.1% growth in 2020, revised down from 1.9% in the March forecast, but will likely see a faster recovery in 2021 and beyond.

"Over the last few months of shutdowns around the world, services providers have largely shifted clients' core IT and business operations to 'work from home' environments relatively overnight without major hiccups," said Lisa Nagamine, research manager with IDC's Worldwide Semiannual Services Tracker. "This further demonstrates how adaptive and resilient vendors and buyers can be in the 'digital age'."

"We will continue to see the services market growth outpace GDP growth, even during a crisis like this," said Xiao-Fei Zhang, program director, Global Services Markets and Trends. "The pandemic is clamping down on discretionary spending, and puts the brake on many projects for now, but this will be somewhat cushioned by managed services and support services contracts that support core operations of large enterprises and government agencies."

DW talks predictive analytics and databases with Michele Crockett, Director of Product Marketing at SentryOne.

1. Please can you provide some background on SentryOne?

SentryOne, formerly known as SQL Sentry, was founded in 2004 by Greg Gonzalez, who continues to lead innovation at the company as our Chief Technology Officer. The first product released, SQL Sentry Event Manager, was a solution that grew out of the company’s Microsoft-focused hosting business and it provided intuitive visualisation and management of SQL Server jobs. Performance Advisor followed in 2008 to address performance monitoring and tuning. Over time, SentryOne introduced eight other solutions and multiple enhancements to the existing line-up of products and added groundbreaking monitoring for cloud, physical and virtual systems. In 2016, SentryOne became the new company name and our unified brand. In 2017, SentryOne increased its international presence by creating a formal Global Partner Network and in early 2018 we opened our first international office in Dublin. In April 2018, SentryOne acquired Pragmatic Works Software, making us a comprehensive platform provider for the Microsoft Data Platform.

From our very beginning, we have felt our success stems from our people: the industry’s best SQL Server and Microsoft Data Platform experts working alongside the most compassionate and driven customer engagement teams in the business. Our people partner with our customers to create value and ensure success.

2. What have been the key company milestones to date?

● 2004—Company founding

● 2016—Rebranding as SentryOne with monitoring solution that covers the entire Microsoft Data Platform environment, including SQL Server, Azure SQL Database, SQL Server Analysis Services, and virtual machines running on VMware or Windows Hyper-V

● 2018—Acquisition of the software division of Pragmatic Works, which opened opportunities for the companies to expand offerings for DataOps teams

● 2018—Expansion into EMEA with opening of office in Dublin, Ireland

● 2019—Introduction of first SaaS products, including SentryOne Monitor and SentryOne Document

3. Can you give us a brief overview of SentryOne’s technology and product portfolio?

SentryOne helps companies accelerate delivery of business-critical information with top-rated Database Performance Monitoring and DataOps solutions for SQL Server, Azure SQL Database, and the Microsoft Data Platform. We help data teams manage complex cloud and hybrid data environments, streamline DataOps processes, and migrate and optimise cloud databases. Our areas of focus:

● Optimised data delivery

o SQL Sentry, our flagship database performance monitoring product, provides top-rated, highly scalable monitoring to optimise SQL Server performance

o SentryOne Monitor provides powerful monitoring capabilities in a cloud solution

For more information: https://www.sentryone.com/sql-server/sql-server-monitoring

● Streamlined DataOps

o SentryOne Document, automated database documentation and data lineage analysis, available with cloud or software deployment models

o Task Factory, a set of high-performance SSIS components that eliminate tedious programming for data professionals managing data warehousing tasks such as ETL

o SentryOne Test, an automated test framework for validating data

For more information: https://www.sentryone.com/dataops-overview

4. How does this distinguish SentryOne from its competitors?

SentryOne helps data teams improve data quality and accelerate data delivery to stakeholders with solutions that are specific to the Microsoft Data Platform and address some of the most challenging pain points in ensuring data quality and performance—no matter how large the database environment.

● In the Database Performance Monitoring category, SentryOne SQL Sentry provides the most actionable, scalable Database Performance Monitoring for the Microsoft Data Platform. SQL Sentry also uses predictive analytics powered by machine learning to help data professionals accurately predict future database storage capacity.

● In the DataOps category, SentryOne Document provides in one solution 1) automated database documentation; 2) data lineage and impact analysis; 3) metadata snapshot management; and 4) data dictionary creation. We have competitors that offer data documentation or data lineage analysis, but none that offer both in one solution.

● Our solutions have won various awards from independent review sites and publications:

o SQL Sentry was named Trust Radius Top Rated 2020 in the database monitoring category; our current rating is 9.3/10.0

o Task Factory was named Trust Radius Top Rated 2020 in the data integration category

o SQL Sentry was named in the Database Trends and Applications Trend-Setting Products in Data and Information Management for 2020

o SQL Sentry won “Best DBA Solution” in Database Trends and Applications Readers’ Choice Awards 2019

● SentryOne has one of the highest Net Promoter Score ratings in the software industry, currently at 72, compared with the average of 44.

● We are the preferred Database Performance Monitoring and DataOps solution for companies with the highest demands for data accuracy and performance, including Lloyds Bank, Henkel AG, Froneri, DHL, Ticketmaster UK, DocuSign, Subway, Humana, United Parcel Service, Amazon, and Tableau.

5. In more detail, please can you talk us through the company’s database monitoring solutions and DataOps products?

SQL Sentry, the flagship monitoring and observability product in the SentryOne portfolio, was built by SQL Server experts to help companies save time and frustration in troubleshooting database performance problems. SQL Sentry offers powerful capabilities in an intuitive dashboard that gives DBAs an at-a-glance picture of the health of their SQL Server environment and makes it easy to drill down for more details. SQL Sentry monitors the entire Microsoft data estate, including SQL Server Analysis Services (SSAS), Azure SQL Database, and VMs running on VMware or Hyper-V. Features include an Environment Health Overview, Top SQL (which helps identify and fix high-impact SQL queries), Advisory Conditions (a proactive and customisable alerting system), storage forecasting (predictive storage capacity planning), and sophisticated troubleshooting tools for deadlocks, blocking, and index management. For DBAs who prefer a hosted solution, SentryOne Monitor is our cloud product for database performance monitoring.

SentryOne Document, available as a cloud solution or installed software, automates database documentation and provides data lineage and impact analysis and metadata snapshot capabilities. SentryOne Document helps data managers understand the landscape of their data environment with visual data mapping that highlights data dependencies. With SentryOne Document, data managers can clearly see dependencies among data sources and understand who has changed the data. SentryOne Document helps companies stay ahead of data privacy regulations such as GDPR by showing data origin and the path traveled by the data. SentryOne Document also helps data managers create comprehensive data dictionaries.

Task Factory is a set of high-performing SQL Server Integration Services (SSIS) components that eliminate mundane programming tasks that consume time and resources on the part of data warehouse managers performing ETL tasks. Task Factory includes 70+ components, and a version is available for use with Microsoft Azure Data Factory. For data managers who need specific data connectors, we offer a suite of modules that connect to, for example, Salesforce or social media data sets. Task Factory also works with REST-enabled data sources.

SentryOne Test is an automated test framework that helps companies validate data. SentryOne Test uses proven, industry-standard technology to help companies test and validate data throughout the data lifecycle. SentryOne Test consists of three core elements that form a secure, automated testing framework:

● Remote agent that enables distributed test execution

● SentryOne Test Visual Studio Extension

● SentryOne Test web portal that lets data managers deploy test projects and then programmatically call them as needed

SentryOne Test targets four specific use cases:

1) Validating data during data-centric application development,

2) Enabling an agile approach to validating data in ETL processes,

3) Facilitating Master Data Management processes, and

4) Validating data in production databases.

6. Is cloud migration also a major focus for SentryOne?

Yes, SentryOne is focused on helping companies streamline data migrations to the cloud, whether on AWS or Microsoft Azure. From data testing and validation through monitoring and optimising performance of cloud-based databases, SentryOne has a full suite of capabilities that can simplify the journey to the cloud:

● Hybrid and Multi-Cloud Performance Monitoring

SentryOne solutions help companies monitor, diagnose, and optimise database performance before and after migration to a hybrid or hosted cloud environment with the SentryOne Monitoring platform, which provides proven scalability (to 800+ targets currently) and the deepest level of actionable metrics in the industry.

● Data Testing and Validation

SentryOne helps companies ensure the integrity of data throughout the migration process with SentryOne Test, a SaaS-based automated testing framework, which simplifies data testing and validation. With SentryOne Test data teams can conduct actionable tests, schedule test runs, and view test metrics in a dashboard throughout the data lifecycle—application development, ETL processes, Master Data Management (MDM), and production database validation.

● Data Lineage Analysis

Understanding the origin of data and how it’s being used is a critical component of a successful cloud migration. SentryOne Document helps companies trace the source of data within a system, in visual or text mode views.

● Database Documentation

Throughout the cloud migration process, you can use SentryOne Document to produce customisable documentation in various formats. You can also take a metadata snapshot of every property and store the intelligence in a shared database for your team.

SentryOne works closely with the engineering teams at both Microsoft and Amazon Web Services to support monitoring and optimising SQL Server on hybrid and hosted cloud services, including:

● A marketplace image on Amazon Web Services, with support for both Amazon RDS for SQL Server and Amazon EC2.

● A marketplace image on Microsoft Azure, with support for both Azure SQL Database and Azure SQL Data Warehouse.

● Support for monitoring Azure SQL Database Managed Instance, a deployment model of Azure SQL Database released by Microsoft that provides near 100 per cent compatibility with on-premises SQL Server.

7. Are the SentryOne solutions aimed at database admins / IT managers / developers / Business Intelligence professionals?

SQL Sentry is primarily targeted to database administrators and IT generalists who are managing SQL Server in addition to other systems. Developers can use SQL Sentry to help build high-performing data-centric applications. The integrated Plan Explorer feature in particular helps developers optimise queries. BI professionals use Task Factory for data warehouse management. And technology professionals throughout the organisation use SentryOne Document to document and map the data environment.

8. Moving on to some recent company news, SentryOne has signed a partnership with QBS in the UK – can you explain a bit more about that?

The new relationship with QBS will simplify the process of selecting and purchasing SentryOne top-rated Database Performance Monitoring and DataOps solutions for data professionals managing systems across the Microsoft data platform, including SQL Server and Azure SQL Database. Known for exceptional customer service and speedy delivery, QBS is the ideal partner to help bring our top-rated technology to DataOps teams across Europe.

9. You’ve also recently outlined the work you’ve done on behalf of DocuSign?

DocuSign is a prime example of an enterprise company that chose SentryOne because of our ability to support a demanding production database environment—more than 300,000 transactions per second in this case. DocuSign has about 400,000 customers and hundreds of millions of users. Because of the company’s commitment to high standards for HA/DR, they needed a monitoring solution that could help them get to the “five nines” of reliability that their customers expected. The DocuSign team chose SentryOne because of its low overhead on their system, the ease of extracting information from the SQL Sentry system, and the level of detail about performance metrics that they can get through SQL Sentry. For more information: https://www.sentryone.com/were-the-one-case-study-docusign
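To put the “five nines” target in concrete terms, an availability percentage can be converted into a yearly downtime budget. The short Python sketch below is illustrative only: the function name and the use of an average 365.25-day year are assumptions made here, not part of any SentryOne or DocuSign tooling.

```python
# Convert an availability target into the downtime it allows per year.
# Assumption: an "average" year of 365.25 days (accounts for leap years).
MINUTES_PER_YEAR = 365.25 * 24 * 60


def downtime_minutes_per_year(availability_pct: float) -> float:
    """Return the maximum minutes of downtime per year for a given availability."""
    return MINUTES_PER_YEAR * (1 - availability_pct / 100)


# Compare common availability targets side by side.
for label, pct in [("three nines", 99.9), ("four nines", 99.99), ("five nines", 99.999)]:
    print(f"{label} ({pct}%): {downtime_minutes_per_year(pct):.2f} minutes/year")
```

On these assumptions, five nines leaves a little over five minutes of total downtime per year, which is why low monitoring overhead and detailed metrics matter at that level.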

10. Please can you give us any details about plans you might have to expand the company’s coverage further – both in terms of geography and any specific industry sectors?

SentryOne will continue to provide top-rated Database Performance Monitoring and DataOps solutions to companies in any industry, as most businesses rely on high-performing databases to make critical business decisions, serve customers, and deliver products and services. In terms of geography, aside from our well-established business in the U.S., we will continue to expand in EMEA and APAC, particularly through managed service providers and resellers in strategic locations and through a direct sales team based in Ireland.

11. The saying that ‘data is the new oil’ has become something of a cliché…but can you give us SentryOne’s view on how your customers are leveraging data for business advantage right now, as opposed to, say, a couple of years ago?

At SentryOne, we believe that data is your business. It’s not a problem to be managed; it’s your most critical asset and, in some cases, your product offering. Businesses that recognise the value of elevating data to business-critical status are able to innovate more quickly, grow revenue, and get ahead of customers’ needs. As they grow, the most successful businesses move beyond simply managing data for efficiency and accuracy to driving revenue and innovation by effectively harnessing their data. The most data-driven companies are using AI capabilities and connected devices to deliver all kinds of smart applications and devices to customers. All these data-driven products and services require systems that can support massive processing demands.

12. Before we finish, what advice would you give to end users looking at evaluating their database monitoring and DataOps activities with a view to identifying areas for improvement?

For users looking for Database Performance Monitoring solutions, primary criteria should be scalability, ease of use, and granularity of metrics. You’ll want to choose a solution that can support your environment as it grows and collects monitoring data in a way that doesn’t levy additional overhead on the system. In other words, you don’t want the monitoring solution to become part of the performance problem. Regarding ease of use, look for a solution that provides clear dashboards with useful, actionable information displayed clearly. You should be able to come in every morning and see, at a glance, the health of your database environment, with problem areas called out clearly. It also helps if the monitoring solution is accessible through a web interface so you can manage your systems anywhere, at any time. Finally, look for a monitoring solution that collects highly granular metrics—you will need detailed information to troubleshoot problems. Systems that don’t provide a sufficient level of detail simply waste data professionals’ time. For more information about choosing a database performance monitoring solution, check out this recent blog post, 7 Features to Look for in a SQL Server Monitoring Solution, by Richard Douglas, our principal solutions engineer based in the UK.

Regarding DataOps solutions, data teams will need a clear view of what problems they’re trying to solve as this market is still being defined by the players. It’s very hard to do an apples-to-apples comparison of vendors at this point. SentryOne focuses on data integration with Task Factory, data documentation and lineage analysis with SentryOne Document, and data validation with SentryOne Test. SQL Sentry can also be a key part of the DataOps solution by offering observability across the data-centric application lifecycle. With any DataOps solution, choosing a product that is specific to your data environment can save frustration and resources.

13. Finally, what can we expect from SentryOne over the next year or so in terms of new ideas and solutions?

Our focus in the next year will be continuing to produce SaaS editions of our products (while maintaining support for our on-premises solutions), expanding our use of predictive analytics to help DBAs auto-tune database performance, and continuing to integrate our solutions with other technologies.

Digital transformation and IT modernisation initiatives provide innovations to create a competitive edge and drive business growth. But they’ve also created increasingly complex environments that need to be managed by teams that are strapped for time and resources.

By David Cumberworth, MD EMEA, Virtana.

IT organisations are good at taking on new tools but really bad at retiring older ones. But how do you know which tools are critical to keep, where there’s overlap, and what’s no longer needed? Part of the promise of AIOps is to rationalise tools so you end up with a single-pane-of-glass view of the IT environment. This, however, is unrealistic. Most analysts agree that you need a number of different tools to manage everything. The challenges, therefore, are to reduce the number of legacy tools and replace them with a platform that does the collective work better. The final tool selection should operate together in a fully integrated manner.

The IT infrastructure is made up of servers and their related VMs and hypervisors, the network and related switches, and a storage layer (traditional or NAS). A good starting point is to evaluate what you are using to manage these layers. Then look at the infrastructure from an application point of view – do the legacy tools give you an application view or do they just show their particular silo? You need a view of how the applications using the infrastructure are performing so you can create a baseline. Once established, you can then look at pinch points and capacities to optimise the system. This new application-centric approach also gives you valuable insight you can share with the business – after all, they are only interested in how the applications are performing and not what technology they are running on.
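As a rough illustration of the baseline idea, the Python sketch below derives a baseline from historical application response times and flags later samples that sit well above it. Everything here is hypothetical: the function names, the sample figures, and the choice of three standard deviations as the threshold are assumptions for illustration, not a description of any particular monitoring product.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Summarise historical response times (ms) as (average, standard deviation)."""
    return mean(samples), stdev(samples)


def find_pinch_points(baseline, new_samples, threshold=3.0):
    """Return (index, value) pairs that exceed average + threshold * std deviation."""
    average, deviation = baseline
    limit = average + threshold * deviation
    return [(i, value) for i, value in enumerate(new_samples) if value > limit]


# Hypothetical response times in milliseconds for one application.
history = [100, 102, 98, 101, 99]
baseline = build_baseline(history)

# Later measurements: the second sample is far outside the baseline.
latest = [101, 140, 99]
outliers = find_pinch_points(baseline, latest)
print(outliers)
```

In practice the baseline would be built from far more data and tracked per application, but the principle is the same: establish what normal looks like first, then investigate the deviations.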

The next stage is to look at the applications themselves and the customer experience they provide. For this you will need an Application Performance Monitor (APM) that shows end-user experience, the coding and all IT components outside the data centre. A good example of this is AppDynamics from Cisco.

You now have both application and infrastructure views, with an integration interface that lets analytics be viewed holistically rather than platform by platform. This enables you to report to the business on how its applications are running and how they have performed since the last review, transforming IT from an overhead into a source of competitive advantage and business value.

There is an incredible amount of literature about the post-COVID workplace. Some companies have already sent their employees back to the office where allowed, and others are considering not renewing leases, instead preferring to invest the money in off sites and employee happiness.

By John Appleby, CEO at Avantra

Wherever your company sits on that spectrum, IT Operations will inevitably change, and for the better. What might this look like, and how can you prepare?

Recognise the criticality

Many businesses apply a one-size-fits-all approach to IT when the reality is there are a handful of business processes, and associated systems, which will cripple a business if interrupted.

Review your business continuity strategy and check that you recognise the most critical systems and processes. For instance, your finance expenses process can probably wait a few days, but if payroll doesn't run, people get upset pretty quickly.

Don't be dependent on Service Level Agreements

I see many big businesses that outsource the problem to a third party. Here's the thing: you can outsource work, but if something goes wrong, your only protection is the contract, and trust me: the outsourcer is an expert in contracting, and it is nearly impossible to get any remedy. In one situation I saw, the outsourcer countersued for breach of contract and won.

When you consider outsourcing, you need to take control of how the provider services the contract.

The same applies if you use Software as a Service (SaaS): you need to understand how the vendor provides support and hold them to the same standards you would if you were delivering it yourself.

Multiple locations

One issue that occurred with several businesses during COVID-19 was that they had a large number of employees in a single office. Concentrating IT Operations into a single location is an unacceptable risk: if an outbreak were to occur, it might be impossible to keep critical business processes running.

The sensible approach is to spread the risk between multiple locations and even countries. With modern technologies, this has never been easier.

Automate

I talk about this a lot, but there are a ton of IT Operations tasks that are easily automated, and organisations must look at this urgently. It is entirely unacceptable that a repetitive task could be automated, and is not.

Not only does this put your business at risk, but it is also a terrible waste of human capital, which could instead be focused on innovation and business transformation.

Besides, bots are excellent at repetitive tasks and are happy to do them all day long, completing thousands of times more checks than a human could ever do.

Insource

I believe there will be a trend towards insourcing the operations of critical business processes. Many outsourcers found themselves unable to support businesses during COVID-19 because they didn't have remote working capabilities.

For non-critical processes, this might be fine, but if you are unable to pay suppliers or ship product, you might want to think about taking control.

Home Working Fridays

You might want your workforce to get back to the office, but there is a huge advantage to holding a regular home working day: it proves that the business can still run when everyone is out of the office.

Too many businesses found themselves scrambling to put remote working in place for thousands of employees. Some companies tied up teams of people for weeks, ensuring that employees could work remotely.

Home Office

One issue that most businesses have not solved is safe home office working. One well-known IT company was sued by former employees who found themselves unable to work for life, due to chronic Repetitive Strain Injury.

A quality work environment is straightforward to ensure in an office setting, but almost impossible to police at home.

Smart employers will create guides for home working and ensure that people have the right screen height, keyboard, and chair. They will use HR resources to check in on employees and make sure they have what they need to stay healthy.

Collaboration Tools

Companies like Zoom saw considerable increases in usage, but most businesses use Zoom as a band-aid and not a strategy.

I can't recommend strongly enough a strategy of adopting real collaboration tools like Microsoft Teams or Slack and implementing them well.

For example, in an IT Operations use case, it is possible to integrate Slack into Freshdesk and Jira, so an incident coming in can be routed into a chat channel and directly into the bug system.
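The routing pattern described above can be sketched in a few lines of Python. This is a minimal, hypothetical outline only: it makes no real Slack, Freshdesk, or Jira API calls, and the `Incident` class, the `route_incident` function, and the two "sinks" are names invented here for illustration. In a real integration each sink would wrap the relevant vendor API or webhook.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Incident:
    """A help-desk incident (hypothetical shape, for illustration only)."""
    ticket_id: str
    summary: str
    severity: str


# A sink is any callable that accepts an incident. Here the sinks are
# placeholders; real ones would post to a chat channel or a bug tracker.
Sink = Callable[[Incident], None]


def route_incident(incident: Incident, chat_sink: Sink, bug_sink: Sink) -> List[str]:
    """Send one incident to both destinations and report where it went."""
    delivered = []
    chat_sink(incident)   # e.g. post into the team's chat channel
    delivered.append("chat")
    bug_sink(incident)    # e.g. open an issue in the bug system
    delivered.append("bugs")
    return delivered


# Stand-in destinations: plain lists instead of real services.
chat_log, bug_log = [], []
incident = Incident("HD-1", "Payroll batch failed", "high")
destinations = route_incident(incident, chat_log.append, bug_log.append)
print(destinations)
```

Passing the destinations in as callables keeps the routing logic independent of any one vendor, so a chat or ticketing tool can be swapped without rewriting the flow.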

Final Words

I believe COVID-19 will bring significant benefits to the workplace, many of which are well overdue.

We can drive efficiencies in IT Operations with automation and better tooling, and give people more exciting work to do, as well as flexible work locations. Let's make sure we take the opportunity before life goes back to normal!

Without a doubt we will see more organisations embracing agile working and digital technologies, now that they’ve witnessed a cloud-enabled workforce in action during COVID-19.

By Justin Augat, VP Product Marketing, iland.

Recent market data from Synergy Research Group via CRN suggests 2019 was a milestone for IT: for the first time ever, enterprises spent more money annually on cloud infrastructure services than on data centre hardware and software. Total spend on cloud infrastructure services reached $97 billion, up 38 percent year over year, whereas total spend on data centre hardware and software hit $93 billion in 2019, an increase of only 1 percent compared to 2018.
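The crossover becomes clearer when the quoted growth rates are used to back out the implied 2018 figures. The Python sketch below is simple arithmetic on the numbers cited above; the derived 2018 values are estimates calculated here, not figures published in the research, and the function name is made up for illustration.

```python
def prior_year(value: float, growth_pct: float) -> float:
    """Back out last year's value from this year's value and the growth rate."""
    return value / (1 + growth_pct / 100)


# 2019 figures quoted in the article, in billions of US dollars.
cloud_2019, cloud_growth = 97.0, 38.0
datacentre_2019, datacentre_growth = 93.0, 1.0

# Implied 2018 figures (derived estimates, not published numbers).
cloud_2018 = prior_year(cloud_2019, cloud_growth)
datacentre_2018 = prior_year(datacentre_2019, datacentre_growth)

print(f"Cloud:       2018 ~ ${cloud_2018:.1f}bn -> 2019 ${cloud_2019:.0f}bn")
print(f"Data centre: 2018 ~ ${datacentre_2018:.1f}bn -> 2019 ${datacentre_2019:.0f}bn")
```

On these figures, cloud spend was roughly $70 billion in 2018 against roughly $92 billion for data centre hardware and software, so the gap closed and reversed within a single year.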

This means that many companies that have historically owned, maintained, and managed their own IT operations in their own data centre are now evolving how they support their business operations by transforming their IT to cloud.

Moreover, the cloud continues to be the foundation upon which most organisations’ digital transformation efforts are built, with more than eight out of ten businesses considering the cloud to be either important or crucial to their digital strategies.

What are the key reasons underpinning this shift to cloud? Much of it is based on the modern organisation’s need for greater agility and flexibility. There has never been a better example of this demand than during the COVID-19 pandemic, as companies have hastily decamped employees to home working.