Okay, so I haven’t seen the phrase being bandied around in the data centre or wider IT industry just yet, but there’s little doubt that, right now, organisations are focusing on technology not as a means in itself, but as a means to an end – the end being business transformation. And most of this (digital) transformation process revolves around the creation, use and storage of data, or information. With the GDPR legislation on the horizon, the idea of ILM has just become a whole deal more important.

Once upon a time in the storage networking industry, ILM was the next big thing. All the vendors were falling over themselves to offer ILM solutions (which didn’t really exist), and the importance of managing data throughout its lifecycle was understood, if not acted upon, by all.

Fast forward to today, and the importance of managing data is back at the top of the agenda. Only, this time, it’s not just the storage networking industry that sees ILM as important, but the whole data centre/IT industry. After all, every aspect of data centres and IT is, ultimately, to do with data logistics. And with IoT just around the corner, the idea of effective data management, or ILM, has never been so crucial.

When the GDPR does (finally!) arrive, ILM will need to take on a whole new dimension. It will no longer be enough for an organisation to have some vague idea as to what data it has created, where it came from, what it has done with it, where it’s stored and when, if ever, it was deleted. No, accurate, open record keeping, available for examination by customers as well as regulators, along with the actual data records themselves in many cases, demands a whole new level of data management.

For the time being, there seems to be a whole wave of GDPR-related scaremongering going on throughout the data centre/IT industry. Yes, compliance and security are vitally important to ensure that you do not fall foul of the new legislation. However, rather than do the minimum to ensure compliance, why not take the GDPR ‘frenzy’ as an opportunity to overhaul your overall data centre/IT infrastructure to ensure that you have the best possible hardware and software to provide the optimised ILM experience.

After all, GDPR is all about the need for proper data management, and no one should be complaining about that!

-l1uge6.jpg)

Research from The Green Grid highlights that while most view a broad range of KPIs as useful, many are yet to implement them.

IT leaders must use a diverse range of metrics to attain the level of detailed measurement and analysis required to ensure long term sustainability and to drive data centre efficiency. This is according to Roel Castelein, EMEA Marketing Chair for The Green Grid, who suggests it is important to adopt a three dimensional approach in order to gain a complete view of a data centre and to improve the effectiveness of their operations.

The latest annual Data Centre Industry Survey from Uptime Institute reveals that, in many cases, IT Infrastructure teams are still relying on the least meaningful metrics to drive efficiency. The majority of IT departments are positioning total data centre power consumption and total data centre power usage as primary indications of efficient stewardship of environmental and corporate resources.

Additionally, research from The Green Grid, which surveyed 150 IT decision makers, demonstrates that while most recognise that a broad range of KPIs are useful in monitoring and improving their data centre efficiency, many are yet to implement them. Key findings include:

88 per cent view Water Usage Effectiveness (WUE) as a useful metric but only 27 per cent use this; 82 per cent view Power Usage Effectiveness (PUE) as a use metric but only 29 per cent use this; 80 per cent view Data Centre Infrastructure Efficiency (DCiE) as a useful metric but only 59 per cent use this; 77 per cent view Data Centre Predictive Modelling (DCPM) as a useful metric but only 15 per cent use this; 77 per cent view Data Centre Energy Productivity (DCEP) as a useful metric but 31 per cent use this; 71 per cent view temperature monitoring as a useful metric but 16 per cent use this; 70 per cent view Carbon Usage Effectiveness (CUE) as a useful metric but 35 per cent use this.

Roel comments: “Our research clearly shows that there is an understanding of how useful each KPI can be. However, the reason for limited adoption may come down to the perception that implementation will have a negative impact on CAPEX and also OPEX. This doesn’t have to be the case. With enough resourcefulness and data centre know-how, you don’t necessarily have to be a big-spender to increase your data centre efficiency and therefore save money and do less harm to the environment. Oversimplifying or even focusing on a single metric can create wider business issues as key factors are ignored – the impact is however very real.”

“There’s no doubt that data centre energy consumption is a critical aspect of the maintenance, improvement, and operational planning of any facility. However, data centre providers need to harness an array of metrics in order to gain a holistic view of their facilities and to drive environmental stewardship. Poor measurement is just as bad as no measurement at all. Therefore, IT leaders need to expand from a single-metric view and include broader technical metrics and KPIs into a meaningful message. This will also help all parties within an organisation to improve their understanding. In essence, organisations should focus on metrics that range from detailed technical information right through to key performance efficiency indicators.

“By way of example, cooling is a key chokepoint in data centre efficiency, a place where significant cost savings and sustainability progress can be made with the judicious application of meaningful analysis, metrics and clever ideas. As such, a key metric here to take into consideration is Water Usage Effectiveness (WUE). Organisations should also be considering Carbon Usage Effectiveness (CUE) as well as the data centre lifecycle, taking into account how best to dispose of e-waste,” concludes Roel.Despite the rising complexity of IT, respondents see promise in DevOps to help achieve future mission success. Download the full 2017 Splunk Public Sector IT Operations Survey on the Splunk website.

The survey polled a wide range of Public Sector IT professionals from national and local government agencies, national security, emergency services, higher education institutions and aerospace and defense. A converging host of factors and trends including constantly shifting budgets, changing regulatory compliance and modernisation initiatives have contributed to declining confidence, but emerging technologies focused on automation and increased visibility are helping Public Sector organisations today. Among the key findings, at least 60 percent of respondents felt as or less confident in carrying out their responsibilities in the following areas than they did 12 months ago:

Handling the scale and complexity of IT operationsAssuring performance and availability to consistently meet service level agreementsPinpointing root-causes and sources of failure quicklyEnsuring efficiency of IT operationsMigrating workloads and applications to the cloud

“The confidence gap we are seeing maps to other industry and government technology trends including growing public scrutiny, ever-present resource limitations and rapidly increasing expectations of technology by end-users. There’s never been a more important time for public sector organissations to embrace analytics to help them face and overcome these challenges with data,” said Larry Ponemon, chairman and founder of the Ponemon Institute. “It’s a challenging time to work in Government IT, but there are plenty of reasons to be hopeful for the future. It’s not surprising public sector IT leaders are looking to analytics, cloud and DevOps to help accelerate IT performance and management.”

IT Operations in Constant, Reactive Fire Drill Mode Due to Silos and Lack of Visibility

The survey also highlighted reasons for the overall loss of confidence across the Public Sector. Respondents felt that siloed IT systems and technologies, and an inability to integrate those systems (72 percent), were keeping them in a constant reactive state rather than being able to proactively plan for the future. IT managers also cited the lack of end-to-end visibility (73 percent) and too many alerts and false-positives (55 percent) among the biggest threats to service delivery along with a lack of skills, expertise and resources to effectively accomplish their jobs. Even where analytics tools were in place, most respondents felt they were ineffective at helping quickly pinpoint issues and determine root causes (78 percent).

As a result of limited visibility, overly manual processes and alert fatigue, the survey also found that the average system outage took 44 hours to resolve, while requiring 12.5 staff remembers to restore operational status to IT systems. This extended length of time and confusion often puts IT operators further underwater as they struggle to find the balance of executing on day-to-day operations while setting long-term proactive IT strategy.

Alert Logic has published the results of a comprehensive research, “Cybersecurity Trends 2017 Spotlight Report,” that explores the latest cybersecurity trends and organisational investment priorities among companies in the UK, Benelux and Nordics.

Conducted amongst 317 security professionals, the survey indicates that while cloud adoption is on the rise, the top concern is how to secure data in the cloud and protect against data loss (48 per cent). The next two biggest priorities for security professionals were threats to data privacy (43 per cent) and regulatory compliance (39 per cent).

The study also examined the top constraints faced by these organisations in securing cloud computing infrastructures. The study found that organisations lack internal security resources and expertise to cope with the growing demands of protecting data, systems and applications against increasingly sophisticated threats (42 per cent). This is closely followed by a desire to reduce the cost of security (33 per cent), moving to continuous 24x7 security coverage (29 per cent), improving compliance (24 per cent) and increasing the speed of response to incidents (20 per cent).

Public cloud platform providers like Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform offer many security measures, but organisations are ultimately responsible for securing their own data and the applications running on those cloud platforms.

According to Verizon’s recent security report, attacks on web applications are now the no. 1 source of data enterprise breaches, up 300 per cent since 2014. Similarly, the report found cybersecurity professionals – more than half of survey participants – to be most concerned about customer-facing web applications introducing security risk to their business (53 per cent). This is followed by mobile applications (48 per cent), desktop applications (33 per cent) and business applications such as ERP platforms (31 per cent). Application related breaches have negative consequences and can lead to revenue loss, significant recovery expense, and damaged reputation.

“Web applications are the most significant source of breaches for organisations leveraging cloud and cloud hybrid computing infrastructures,” said Oliver Pinson-Roxburgh, EMEA Director at Alert Logic. “They are complex, with a large attack surface that can be compromised at any layer of the application stack and often utilise open source and third-party development tools that can introduce vulnerabilities into an enterprise.”

Organisations can implement incentives to prevent gaps in the security policy of an application or to avoid vulnerabilities in the underlying system that are caused by flaws in the design, development, deployment, upgrade, maintenance or database of the application. Additionally, many businesses turn to cloud security vendors with a “products + services” strategy rather than technologies alone to fight web application attacks. Businesses increasingly find that a combination of cloud-native security tools provided in combination with 24x7 security monitoring by security and compliance experts is the best way to secure their sensitive data – and the sensitive data of their customers – in the cloud.

“A multi-layer web application attack defence is the cornerstone of any effective cloud security solution and strategy,” said Pinson-Roxburgh.

Software-defined wide area network (SD-WAN) solutions have only been commercially available for a few years, but the technology's ability to address pressing enterprise networking needs has led to remarkable growth. A new forecast from International Data Corporation (IDC) estimates that worldwide SD-WAN infrastructure and services revenues will see a compound annual growth rate (CAGR) of 69.6% and reach $8.05 billion in 2021.

The most significant driver of SD-WAN growth over the next five years will be digital transformation (DX) in which enterprises deploy 3rd Platform technologies, including cloud, big data and analytics, mobility, and social business, to unlock new sources of innovation and creativity that enhance customer experiences and improve financial performance. DX generally increases network workloads and elevates the network's end-to-end importance to business operations.

Another factor driving the growth of SD-WAN is the continued rise of public cloud-based software-as-a-service (SaaS) applications. The increase in SaaS adoption for business applications throughout the enterprise disrupts the prominence of MPLS-based WAN connectivity to the branch. SD-WAN is increasingly leveraged to provide dynamic connectivity optimization and path selection in a policy-driven, centrally manageable distributed network architecture.

Finally, the growth in SD-WAN will benefit from the broader acceptance, and adoption, of software-defined networking (SDN) throughout the enterprise. As virtualization, cloud management, and SDN continue to gain traction throughout enterprise networks, SD-WAN will benefit from this paradigm shift and receive increasing consideration.

"SD-WAN is not a solution in search of a problem," said Rohit Mehra, vice president, Network Infrastructure at IDC. "Traditional WANs were not architected for the cloud and are also poorly suited to the security requirements associated with distributed and cloud-based applications. And, while hybrid WAN emerged to meet some of these next-generation connectivity challenges, SD-WAN builds on hybrid WAN to offer a more complete solution."

SD-WAN leverages hybrid WAN, but includes a centralized, application-based policy controller; analytics for application and network visibility; a secure software overlay that abstracts the underlying networks; and an optional SD-WAN forwarder (routing capability). Together these technologies provide intelligent path selection across WAN links, based on the application policies defined on the controller.

The benefits of SD-WAN include cost-effective delivery of business applications, meeting the evolving operational requirements of the modern branch/remote site, optimizing software-as-a-service (SaaS) and cloud-based services such as UC&C, and improving branch-IT efficiency through automation. These benefits have resonated across the spectrum of enterprise IT and service providers alike, ensuring a broad-based uptake for this new paradigm in WAN architectures.

Worldwide IT spending is projected to total $3.5 trillion in 2017, a 2.4 percent increase from 2016, according to Gartner, Inc. This growth rate is up from the previous quarter's forecast of 1.4 percent, due to the U.S. dollar decline against many foreign currencies (see Table 1.)

"Digital business is having a profound effect on the way business is done and how it is supported," said John-David Lovelock, vice president and distinguished analyst at Gartner. "The impact of digital business is giving rise to new categories; for example, the convergence of "software plus services plus intellectual property." These next-generation offerings are fueled by business and technology platforms that will be the driver for new categories of spending. Industry-specific disruptive technologies include the Internet of Things (IoT) in manufacturing, blockchain in financial services (and other industries), and smart machines in retail. The focus is on how technology is disrupting and enabling business."

The Gartner Worldwide IT Spending Forecast is the leading indicator of major technology trends across the hardware, software, IT services and telecom markets. For more than a decade, global IT and business executives have been using these highly anticipated quarterly reports to recognize market opportunities and challenges, and base their critical business decisions on proven methodologies rather than guesswork.

Table 1. Worldwide IT Spending Forecast (Billions of U.S. Dollars)

|

| 2016 Spending | 2016 Growth (%) | 2017 Spending | 2017 Growth (%) | 2018 Spending | 2018 Growth (%) |

| Data Center Systems | 170 | -0.3 | 171 | 0.3 | 173 | 1.2 |

| Enterprise Software | 326 | 5.3 | 351 | 7.6 | 381 | 8.6 |

| Devices | 630 | -2.4 | 654 | 3.8 | 677 | 3.6 |

| IT Services | 894 | 3.2 | 922 | 3.1 | 966 | 4.7 |

| Communications Services | 1,374 | -1.3 | 1,378 | 0.3 | 1,400 | 1.6 |

| Overall IT | 3,396 | 0.3 | 3,477 | 2.4 | 3,598 | 3.5 |

Source: Gartner (July 2017)

The worldwide enterprise software market is forecast to grow 7.6 percent in 2017, up from 5.3 percent growth in 2016. As software applications allow more organizations to derive revenue from digital business channels, there will be a stronger need to automate and release new applications and functionality.

"With the increased adoption of SaaS-based enterprise applications, there also comes an increase in acceptance of IT operations management (ITOM) tools that are also delivered from the cloud," said Mr. Lovelock. "These cloud-based tools allow infrastructure and operations (I&O) organizations to more rapidly add functionality and adopt newer technologies to help them manage faster application release cycles. If the I&O team does not monitor and track the rapidly changing environment, it risks infrastructure and application service degradation, which ultimately impacts the end-user experience and can have financial as well as brand repercussions."

IT spending increased in 2016, but only two of the top 10 IT vendors posted organic revenue growth. With revenue sources still tied to the Nexus of Forces (the convergence of social, mobility, cloud and information), some of the top 10 vendors will fare better in 2017 due to strength in mobile phone sales. Worldwide spending on devices (PCs, tablets, ultramobiles and mobile phones) is projected to grow 3.8 percent in 2017, to reach $654 billion. This is up from the previous quarter's forecast of 1.7 percent. Mobile phone growth will be driven by increased average selling prices (ASPs) for premium phones in mature markets due to the 10th anniversary of the iPhone and the increased mix of basic phones over utility phones. However, the tablet market continues to decline, as replacement cycles remain extended.

The growth of digital business and the Internet of Things (IoT) is expected to drive large investment in IT operations management (ITOM) through 2020, according to Gartner, Inc. A primary driver for organizations moving to ITOM open-source software (OSS) is lower cost of ownership.

While acceptance of OSS ITOM is increasing, traditional closed-source ITOM software still has the biggest budget allocation today. Moreover, complexity and governance issues that face users of OSS ITOM tools cannot be ignored. In fact, these issues open up opportunities for ITOM vendors. Even vendors that are late to market with ITOM functionality can compete in this area," said Laurie Wurster, research director at Gartner.

Gartner believes many enterprises will turn to managed ITOM or ITOM as a service (ITOMaaS) enabled by open-source technologies and provided by a third party. With OSS, vendors can provide more cost-effective and readily available ITOM functions in a scaled manner through the cloud.

Through 2020, public cloud and managed services are expected to be leveraged more often for ITOM tools, which will drive growth of the subscription business model for both cloud and on-premises ITOM. However, on-premises deployments will still be the most common delivery method. This imposes multiple challenges to incumbent ITOM vendors. First, those vendors that do not offer a cloud delivery model will face continuous cannibalization from ITOM vendors that can deliver ITOM through both cloud and on-premises.

Second, platform vendors, such as Microsoft Azure and Amazon Web Services (AWS), are providing some native ITOM functionalities on their public clouds. Customers that are running workloads solely on these platforms may prefer these native features. There are also "hybrid" requirements for ITOM tools that can seamlessly manage both cloud and on-premises environments.

"Customer demand has driven traditional software vendors to transform and adapt to the changing technology and competitive landscapes. Competitive pressure from cloud (SaaS offerings) and commercial OSS (offerings with a free license plus paid support) is forcing ITOM providers to move toward subscription-based business models for both cloud and on-premises deployments," said Matthew Cheung, research director at Gartner. "This shift will eliminate revenue growth spikes as the large upfront investment seen in traditional models is spread out over time in a repeatable revenue stream."

The influx of new, smaller ITOM vendors focused on one or two major tool categories will continue to cause disruption for large traditional suite vendors. Given this situation, traditional vendors will need to react by changing how their products fit together. More importantly, traditional vendors need to change how their solutions are sold as customers exert significant pressure to shift to offering cloud-based services.

Angel Business Communications is pleased to announce the categories for the SVC Awards 2017 - celebrating excellence in Storage, Cloud and Digitalisation. The 30 categories offer a wide-range of options for organisations involved in the IT industry to participate. Nomination is free of charge and must be made online at www.svcawards.com

Storage Project of the Year - Open to any specific data storage related project implemented in any organisation of any size in EMEA.

Digitalisation Project of the Year - Open to any ICT project that has incorporated/implemented one or more digital technologies to transform a business model and provide new revenue and value-producing opportunities.

Cloud Project of the Year - Open to any implementation of a cloud-based project (public cloud, private cloud or hybrid cloud) in any organisation of any size in EMEA.

Hyper-convergence Project of the Year - Open to any implementation of a project (public cloud, private cloud or hybrid cloud) based on a set of hyper-converged products/solutions in any organisation of any size in EMEA.

Managed Services Provider of the Year - Open to any Managed Services Provider operating in the storage, virtualisation and/or cloud technologies market in the EMEA region.

Vendor Channel Program of the Year - Open to any IT vendor’s channel program in the storage, virtualisation and/or cloud technologies market introduced in the EMEA market during 2017 that has made a significant difference to the vendor’s and their channel partners business in terms of storage revenue increases, improved customer satisfaction or market awareness.

Channel Initiative of the Year - Open to any IT system reseller, distributor, MSP or systems integrator who has introduced a distinctive or innovative vendor specific or vendor independent program of their own design/specification to boost sales and/or improve customer service in EMEA.

Excellence in Service Award - Open to any IT vendor or reseller/business partner delivering end-user customer service in the UK or EMEA markets. Entries must be accompanied by a minimum of THREE customer testimonials (in English) attesting to the high level of customer service delivered that sets the entrant apart from their competition.

Channel Individual of the Year - Open to any senior individual working within any organisation manufacturing, selling and/or supporting the storage, digitalisation and/or cloud sectors in the EMEA market that has made a significant contribution to his or her employer’s business or that of their partners or customers.

Infrastructure

Backup and Recovery/Archive Product of the Year - Open to any solution that’s primary design is to enable data backup and restore or long-term archiving.

Cloud-specific Backup and Recovery/Archive Product of the Year - Open to any solution that’s design is to enable cloud-based data backup and restore or long-term archiving via a service offering.

SSD/Flash Storage Product of the Year - Open to any storage solution that can be classified as using SSD/Flash or NVMe to store and protect IT data and information.

Storage Management Product of the Year - Open to any system management solution that delivers effective and comprehensive storage resource management in either single or multi-vendor environments.

Software Defined/Object Storage Product of the Year – Open to any product that delivers a ‘software-defined- storage model’ or any ‘object storage’ product or solution.

Software Defined Infrastructure Product of the Year: Open to any solution that enables a ‘software defined’ set of solutions normally associated with traditional non-physical infrastructure deployments such as networks, application servers etc.. (excludes storage).

Hyper-convergence Solution of the Year – Open to any single or multi-vendor solution that delivers on the promise of an architecture that tightly integrates compute, storage, networking and virtualisation resources for the client.

Cloud

IaaS Solution of the Year - Open to any product/solution that delivers or contributes to effective Infrastructure-as-a-Service implementations for users of private and/or public cloud environments.

PaaS Solution of the Year - Open to any product/solution that delivers or contributes to effective Platform-as-a-Service implementations for users of private and/or public cloud environments.

SaaS Solution of the Year - Open to any product/solution that delivers or contributes to effective Software-as-a-Service implementations for users of private and/or public cloud environments.

IT Security as a Service Solution of the Year - Open to any product/solution that is specifically designed to enable the securing of data within private and/or public cloud environments

Cloud Management Product of the Year – Open to any product/solution that delivers or contributes to effective Cloud management or orchestration for users and/or providers of private and/or public cloud environments.

Co-location / Hosting Provider of the Year – Open to any company/organisation offering hosting and/or co-location services to end-users and/or service providers in the EMEA market.

Companies of the Year

Storage Company of the Year - Open to any company supplying a broad range of storage products and services in the EMEA Market.

Cloud Company of the Year - Open to any company supplying a wide range of cloud services or products in the EMEA Market.

Hyper-convergence Company of the Year - Open to any company supplying a clearly defined Hyper-converged product set in the EMEA Market.

Digitalisation Company of the Year - Open to any company supplying a clearly defined set of digitalisation services and or products in the EMEA Market.

Innovations of the Year (Introduced after 1st June 2016)

Storage Innovation of the Year – open to any company that has introduced an innovative and/or unique storage service, product, technology, sales program, or project since 1st June 2016 in the EMEA market.

Cloud Innovation of the Year – open to any company that has introduced an innovative and/or unique public, private or hybrid cloud service, product, technology, sales program, or project since 1st June 2016 in the EMEA market.

Hyper-convergence Innovation of the Year – open to any company that has introduced an innovative and/or unique hyper-convergence service, product, technology, sales program, or project since 1st June 2016 in the EMEA market.

Digitalisation Innovation of the Year – open to any company that has introduced an innovative and/or unique digitalisation service, product, technology, sales program, or project since 1st June 2016 in the EMEA market.

The re-emergence of the edge is not inconsistent with the growing concentration of IT capacity in central sites and at the hands of IT service providers. On the contrary, it is a direct consequence of it: operators will maintain and even increase edge capacity closer to users and connected machines as data volumes keep growing and as the cost of subpar response times (let alone downtime) escalates.

By Rhonda Ascierto Research Director, Datacentre Technologies & Eco-Efficient IT, 451 Research and Daniel Bizo Senior Analyst, Datacentre Technologies, 451 Research.

If there is a single underlying thread behind the anticipated wave of new edge capacity, it is about data: speed of access, availability and protection. Workload requirements across these three vectors can vary considerably. While the datacentre they are best suited for will often be determined by location as it relates to data requirements, other factors will come into play, including specific business requirements.

Here at 451 Research, we are noting multiple application types that show affinity for an edge presence outside of core datacentre sites. This affinity is based on their data requirements defined as a combination of latency, volume, and availability and reliability requirements.

To help give structure to the growing complexity of workload placements, we have devised a framework that evaluates, in broad strokes, the types of workloads that may benefit from an edge presence.

This is not to say that data is the sole determiner of where to run an application. There are many other factors that organisations weigh up when making such decisions, including compliance, security and manageability, and costs of any changes to the software architecture.

This framework is designed to help businesses identify candidates, ranging from IoT gateways to branch back-up and recovery, virtual reality consumer applications and Industry 4.0.

The edge affinity framework

Much of the IT industry shares a consensus that most workloads are migrating to large datacentres and ultimately headed to public clouds running out of hyperscale sites. This view is somewhat supported by sales dynamics at large IT and datacentre equipment vendors, which are reporting stagnant or eroding enterprise sales, offset to some degree by sales to commercial datacentre service providers growing steadily.

However, we believe most of the smaller datacentre sites will remain, albeit transformed. Even with the rapid increase in network access bandwidth, realised data speeds remain insufficient to move large data sets around on demand. Reliability and availability of network connections are generally lacking and, in most locations, cannot be relied on for critical applications.

This means that demand for locally running applications and data stores remains – even in the face of the centralisation of core systems. We anticipate public and private cloud-based digital services to be no different and, contrary to some views, to ultimately generate demand for even more edge capacity to optimise the delivery of cloud-originated services including CDNs as well as get data from users and the myriad connected machines.

We view the edge as being defined and driven by data requirements, whether speed, availability and reliability of access, rate of generation or security (or any combination thereof). The key technology component is the WAN, which largely defines these aforementioned characteristics, much more so than relatively inexpensive compute or storage capacities. Scaling bandwidth or adding redundant WAN paths is typically much more difficult, slower and ultimately more expensive than upgrading computing and storage speeds and feeds. Improvements in latency are limited by the laws of physics.

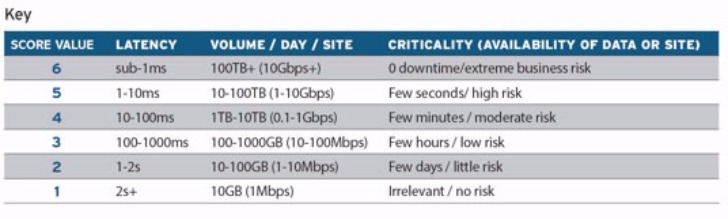

Through this lens we have classified a limited, select number of workload types per site by:

· Latency tolerance: Applications differ greatly in how sensitive their performance is to speed of access to data.

Criticality: What the availability and reliability requirements are for the application to meet business objectives.

· Data volume per site: Data that is aggregated, generated, processed or stored at a location.

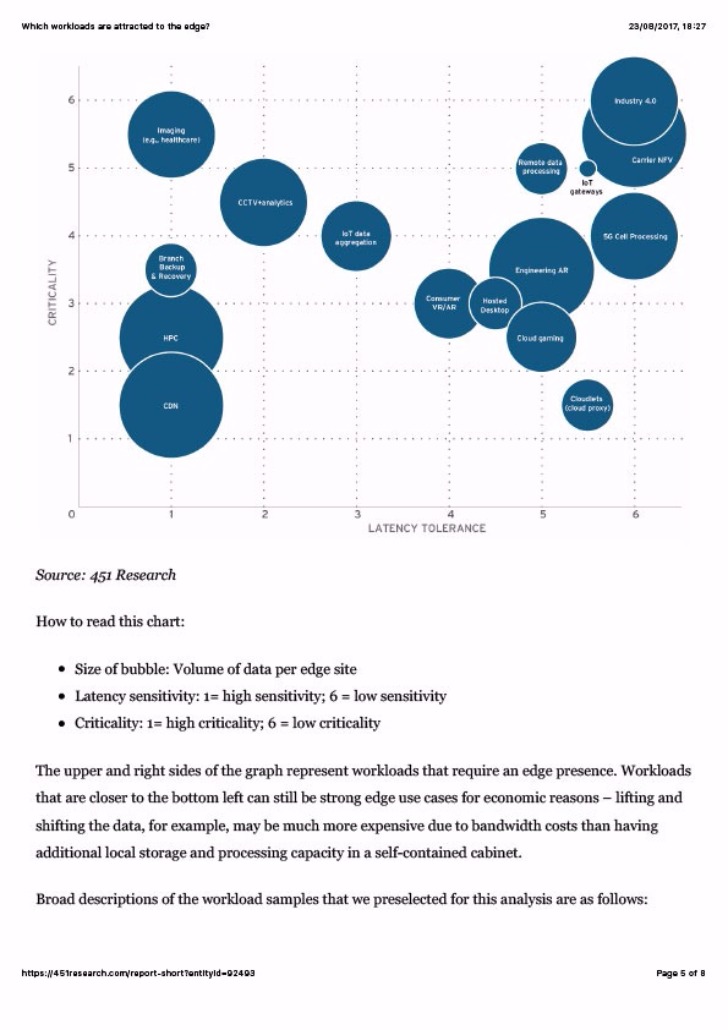

Ours is not a vetted, scientific approach. Instead, our objective was to visualise in broad strokes different workloads by their affinity for an edge presence. We preselected 16 workloads we felt were strong candidates for local presence, including those that are considered to be in an established edge area, as well as those that are expected to be, as part of emerging distributed IT infrastructures.

The workloads that score very high in either latency or criticality requirements (that is, they have no tolerance for losing access to the data or application from a site) can be thought of as having hard (technical) requirements for an edge presence, while those that score lower in these but generate high volumes of data specific to a location (e.g., rich sensory data) have a soft (economic) preference for edge capacity.

We used scores from 1-6 that represent a logarithmic scale as the steps between scores are typically an order of magnitude. For example, a score of 6 for latency requirements means a sub-1ms latency, while a score of 5 maps to 1-10ms, and so on. For criticality, we used the amount of time for losing data access to/from the site that's still acceptable for the business.

Again, in a similar fashion, we scored 6 for those where we thought 0 downtime was acceptable, 5 for a few seconds, 4 for a few hours. Volume of data originating from, heading to or transiting through the site defines the size of the bubble – again, using a factor of 10-step change between categories.

Figure 1: Scoring values for an edge affinity

Putting it all together

Using this scoring system, we mapped out select edge workloads, weighting criticality and latency requirements and taking into account the relative volume of data per datacentre site (as illustrated by the size of the bubble in Figure 2).

Broad descriptions of the workload samples that we preselected for this analysis are:

By Steve Hone, DCA CEO and Cofounder of the Data Centre Trade Association

The DCA summer edition of

the DCA journal focuses on Research and Development and I’m pleased to say we

have received some great articles this month. Research leads to increased

knowledge and the ability to develop and innovate, the benefits of this

investment was plain to see in July in Manchester at the DCA annual conference.

The DCA’s update seminar on the 10th was not only an opportunity to bring DCA members up to speed with the work undertaken to date but also to share the plans for the future in its continued support of members and the data centre sector.

The Seminar also provided an opportunity for members to gain updates from some of the DCA’s strategic Partners including Simon Allen who spoke about the new DCiRN (Data Centre Incident Reporting Network) and Emma Fryer who provided an update on the valuable work Tech UK do in supporting of the data centre sector, this was followed by networking drinks in the evening.

On the 11th July the DCA hosted its 7th Annual Conference which took place at Manchester University. Data Centre Transformation Conference 2017 organised in association with DCS and Angel Business Communications was a huge success and continues to go from strength to strength.

The quality of content and healthy debate which took place in all sessions was testament as to just how well run the workshops were; So, I would also like to say a big thank you to all the chairs, workshop sponsors and the committee who worked so hard to ensure the sessions were interactive, lively and educational.

The workshop topics covered subject matter from across the entire DC sector, however research and development continued to feature strongly in many of the sessions which is not surprising given the speed of change we are having to contend with as the demand for digital services continues to grow.

Having seen the feedback sheets from all the attending delegates it was clear that a huge amount was gained from the day, not just in respect of contacts and knowledge but also the insight gained from speaking too and listening to others who share the same issues and same business challenges. One delegate said it was “refreshing to come to an event where he felt comfortable enough to speak out and learn on his own terms, without feeling we was being sold too”. High praise indeed so thankyou to all the delegates who attended and helped make the day such a success.

We closed the conference with a sit-down dinner in the evening with good food & wine served by the university students and of course great company which for some meant we were still out to watch the sun come up!

Although some will be taking the opportunity to slip away to recharge their batteries; you still have time to submit articles for the DCM buyers guide; the theme is “Resilience and Availability”;

Copy deadline date for this is the 20th August. There is also still space for copy in the next edition of the DCA journal with a theme of Smart Cities, IOT and Cloud which always seems to be a popular subject matter, copy deadline for this is the 12th September. Please forward all articles to Amanda McFarlane (amandam@datacentrealliance.org) and please call if you have any questions.

By Dr Jon Summers, University of Leeds, July 2017

For the last six years at the University of Leeds a group of researchers in the Schools of Mechanical Engineering and Computing have been trying to deal with the extremely complex question of how to manage simple thermodynamics and fluid flows derived from very complex digital workloads.

It is a question that does now need to be addressed and this can only be achieved using real live Datacom equipment – servers, switches and storage. Data centres should really be considered as integrated, holistic systems, where their prime function is to facilitate uninterrupted digital services. Would you go out and purchase a car with all of its technology of aerodynamics, road handling, crashworthiness and engine management without an ENGINE, which you as the driver would need to specify and source for engine size, shape, capacity and performance? No you would not, they are integrated systems. The same is true of data centres – the engine of the car is equivalent to the datacom, which is key to the provision of the intended function of the system. That is why research and development around providing the facility for the datacom should actually use real live datacom.

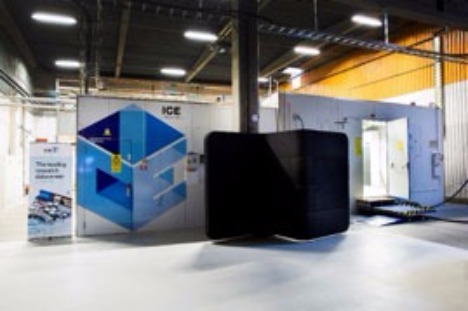

The group at the University of Leeds have in the past received funding from data centres, namely Digiplex and aql to develop experimental setups that can lead to a better understanding of the Datacom operation and performance with differing thermal and fluid flow scenarios. With the involvement of Digiplex we constructed a large data centre cube with a hot and cold aisle arrangement and have run this with live Datacom and some Hillstone loadbanks, the latter has been shown as a 4U proxy to replicate (with one fan and one heater) 4 times 1U pizza box servers operating at full capacity. The data centre cube is shown in Figure 1 and to augment this activity of thermal management of real Datacom in a live data centre environment, a generic server wind tunnel supported by the Leeds based data centre and hosting company, aql. The wind tunnel offers finer control on the thermal and airflow aspects of management Datacom and is shown in Figure 2.

Figure 1: Left shows a front view of the cube with a standard wind tunnel connected to the left of the cube. Right highlights the connection of the wind tunnel exhaust to the inlet to the cold aisle of the data centre cube.

The generic server wind tunnel offers the capability to test Datacom equipment at different inlet temperatures and humidity, although the latter is not easily controlled. The equipment has enabled the team to look at Datacom performance in terms of power requirements when the facility fan pressurises the cold aisle. Both the cube and the wind tunnel have helped to look at the effects of pressure and airflow on the Datacom delta temperature between front and back.

Figure 2: Left shows the exit of the generic server wind tunnel. Right shows the full extent of the wind tunnel with the working cross section.

The work at Leeds has come to the attention of the nearly 2 year old Data Centre research and development group that operates as part of Government Research Institutes in Sweden, namely SICS North, under the leadership of Tor Bjorn Minde. We are now forging a strong collaboration between SICS North and the University of Leeds with the exchange of expertise with my taking up a study leave for two years at SICS North, where we will continue to grow integrated and holistic data centre research and development using live Datacom.

Figure 3 shows the two new data centre pods with real Datacom available for a number of research projects around data centre control, operation and performance. The figure shows SICS ICE module 1 to the left, which houses the world’s first open Hadoop-based research data centre offering the capability to do open big data research. Module 2 to the right is a flexible lab with 10 rack much like the cube but with additional functionalities.

Figure 3: The two data centre pods at SICS North, Sweden, with SICS ICE on the left housing the open Hadoop-based research data centre.

By combining the expertise at Leeds on thermal and energy management of a myriad of Datacom systems with the data centre operational capabilities at SICS North, we anticipate to be able to offer a stronger understanding of the integration of the Datacom with the Data Centre facilities at a time of great need.

Acknowledgements

I would like to acknowledge the contribution of PhD student Morgan Tatchell-Evans and my colleague Professor Nik Kapur for the design and construction of the data centre cube with kind support from Digiplex and PhD student Daniel Burdett for the design and construction of the generic server wind tunnel and generous support from AQL.

Dr. Jon Summers is a senior lecturer in the School of Mechanical Engineering at Leeds. During the last 20 years, he has worked on a number of government and industry funding projects which have required different levels of computational modelling. Since 1998, having built and managed compute clusters to support many research projects, Jon now chairs the High-Performance Computing User Group at Leeds University and is no stranger to high performance computing having developed software that uses parallel computation. Applications of his modelling skills have led to publications in the areas of process engineering, tribology, through to bioengineering and as diverse as dinosaur extinction. In the last three to four years Jon’s research has focussed on a range of air flow and thermal management and energy efficiency projects within the Data Centre, HVAC and industrial sectors.

By Mark Fenton, Product Manager at Future Facilities

When one of our developers, Bo Xia at Future Facilities HQ put on the Oculus Rift headset for the first time, we were skeptical about what his reaction would be.

To the rest of the team watching from the real world, it was a curious scene: Bo was standing in our office strapped into a VR headset, moving his head and arms around wildly. But Bo had been transported and was now completely immersed in one of our data centre models—walking down the aisles, looking at live power consumption and watching simulated airflows. He was experiencing for the first time a fully-immersive data centre simulation. He took off the headset and delivered his verdict with a huge grin: “Amazing!”.

Everyone we have delivered the Rift experience to has had this reaction. Often, this has been their first experience of VR and so has come with a healthy level of skepticism and even trepidation towards the technology. What is amazing is watching how quickly that melts away once they are transported to a rooftop chiller plant or back in time to an IBM mainframe facility. Once immersed, there is full freedom to explore the data centre as you please. Walk an aisle, fly through the duct system, watch airflows or engage with any asset of interest. You quickly forget the limitations of being human and fly up to get a bird’s eye view before diving into the internals of a cabinet.

This fully-flexible experience may be the foundation of almost unlimited opportunities for our data centre ecosystem: designers walking clients around their concepts, colocation providers selling a proposed cage layout, upper management touring their investment, facility engineers troubleshooting their own sites and much more. It’s clear that VR will not only change the way we visualise our data centres but more excitingly, it will change the way we work with them as well.

For operational sites, VR will naturally progress to AR (augmented reality), where performance data can be overlaid onto the real world. Imagine walking through your data centre, putting on your AR glasses and superimposing live DCIM data or simulation results directly onto your view. With human error causing the largest percentage of data centre outages, AR could be invaluable in training and assisting site staff to ensure fewer mistakes are made.

When looking at cooling performance, site staff could visualise the airflow around overheating devices to fully understand the thermal environment - and then interactively make improvements. In addition, IT and Facilities could use this technology to proactively visualise their next deployments, a maintenance schedule or even a worst-case failure. VR offers a fully-immersed testbed, where you can experience first-hand the engineering impact of any data centre change you’re planning to make.

So what about when you can’t physically walk around the data hall floor? With the rapid growth of IoT and edge computing, there is a drive towards smaller local facilities that provide low-latency connectivity between users and their cloud requirements. From autonomous cars to the next Pokemon Go, there is an exponentially-increasing volume of data being produced, and an unwavering pursuit towards faster connectivity to make use of it.

This trend towards larger cloud data centres supported by a discretized network of hundreds - or even thousands - of remote edge sites will be a significant management challenge. This lends itself beautifully to the VR world: VR provides remote operators the tools to assess alarms and find faults, then make adjustments to mitigate the risk of downtime - all from the comfort of their chair. This concept was demonstrated by Vapor IO at the recent Open19 launch: they showed a Vapor chamber being used in a remote edge location, streaming live data from OpenDCRE and simulation airflow from our own 6SigmaDCX software.

The future of data centres is embarking on an exciting journey towards higher demand, local edge connectivity and a fully-connected IoT world. Engineering simulation techniques will ensure these sites can deliver the highest number of applications with the lowest energy spend - all with no risk to downtime. Combining the power of simulation with VR will allow data centre professionals to engage and immerse themselves in their remote environments and, for the first time, truly understand the impact of any change they wish to make. VR certainly has the ‘wow’ factor, but it is becoming increasingly clear the technology will also provide a huge benefit to the running and optimising of the next generation of data centres.

By Professor Xudong Zhao, Director of Research, School of Engineering and Computer Science University of Hull

It is universally acknowledged that the cooling systems consume 30% to 40% of energy delivered into the Computing & Data Centres (CDCs), while electricity use in CDCs represents 1.3% of the world total energy consumption. The traditional vapour compression cooling systems for CDCs are neither energy efficient nor environmentally friendly.

Several alternative cooling systems, e.g., adsorption, ejector, and evaporative types, have certain level of energy saving potential but exhibit some inherent problems that have restricted their wide applications in CDCs.

One of the most promising directions is the application of the dew point cooling system, which has been widely used in other industrial fields potentially has the highest efficiency (20-22 electricity based COP) over other cooling systems if designed properly.

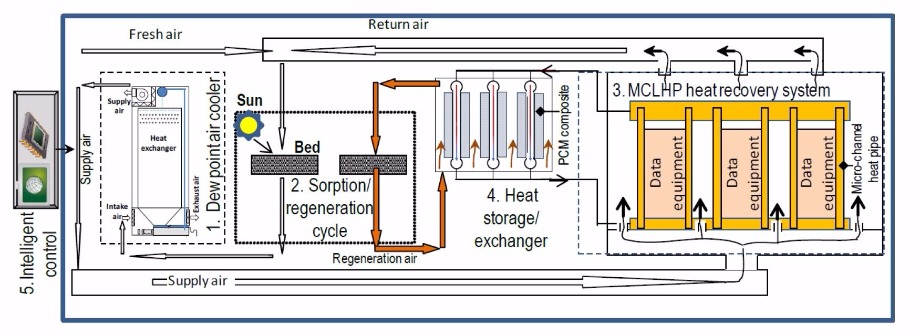

To promote its application in CDCs, an international and inter-sectoral research team, led by University of Hull and supported by the DCA Data Centre Trade Association, has been formed to work on a joint EU Horizon 2020 research and innovation programme dedicated to developing the design theory, computerised tool and technology prototypes for a novel CDC dew point cooling system. Such a system, included critical and highly innovative components (i.e., dew point air cooler, adsorbent sorption/regeneration cycle, microchannel loop-heat-pipe (MCLHP) based CDC heat recovery system, paraffin/expanded-graphite based heat storage/ exchanger, and internet-based intelligent monitoring and control system), it is expected to achieve 60% to 90% of electrical energy saving and is expected to have a comparable initial price to traditional CDC air conditioning systems, thus removing the above outstanding problems remaining with existing CDC cooling systems.

Five major parts in the innovated system, as shown in Fig. 1, are being jointly developed by several organizations of the research team, including:

(1) a unique high-performance dew point air cooler;

(2) an energy efficient solar and (or) CDC-waste-heat driven adsorbent sorption/desorption cycle containing a sorption bed for air dehumidification and a desorption bed for adsorbent regeneration; both are functionally alternative;

(3) a high efficiency micro-channels-loop-heat-pipe (MCLHP) based CDC heat recovery system;

(4) a high-performance heat storage/exchanger unit; and

(5) internet-based intelligent monitoring and control system.

Fig. 1 Schematic of the CDC dew point cooling system

During operation, mixture of the return and fresh air will be pre-treated within the sorption bed (part of the sorption/desorption cycle), which will create a lower and stabilised humidity ratio in the air, thus increasing its cooling potential. This part of air will be delivered into the dew point air cooler. Within the cooler, part of the air will be cooled to a temperature approaching the dew point of its inlet state and delivered to the CDC spaces for indoor cooling. Meanwhile, the remainder air will receive the heat transported from the product air and absorb the evaporated moisture from the wet channel surfaces, thus becoming hot and saturated and being discharged to the atmosphere.

As the adsorbent regeneration process requires significant amounts of heat while the CDC data processing (or computing) equipment generate heat constantly, a micro-channels-loop-heat pipe (MCLHP) based CDC heat recovery system will be implemented. Within the system, the evaporation part of the MCLHP will be stuck to the enclosure of the data processing (or computing) equipment to absorb the heat dissipated from the equipment, while the absorbed heat will be released to a dedicated heat storage/exchanger via the condenser of the MCLHP.

The regeneration air will be directed through the heat storage/exchanger, taking away the heat and transferring the heat to the desorption bed for adsorbent regeneration, while the paraffin/expanded-graphite within the storage/exchanger will act as the heat balance element that stores or releases heat intermittently to match the heat required by the regeneration air. It should be noted that the heat collected from the CDC equipment and (or) from solar radiation will be jointly or independently applied to the adsorbent regeneration, while the system operation will be managed by an internet-based intelligent monitoring and control system.

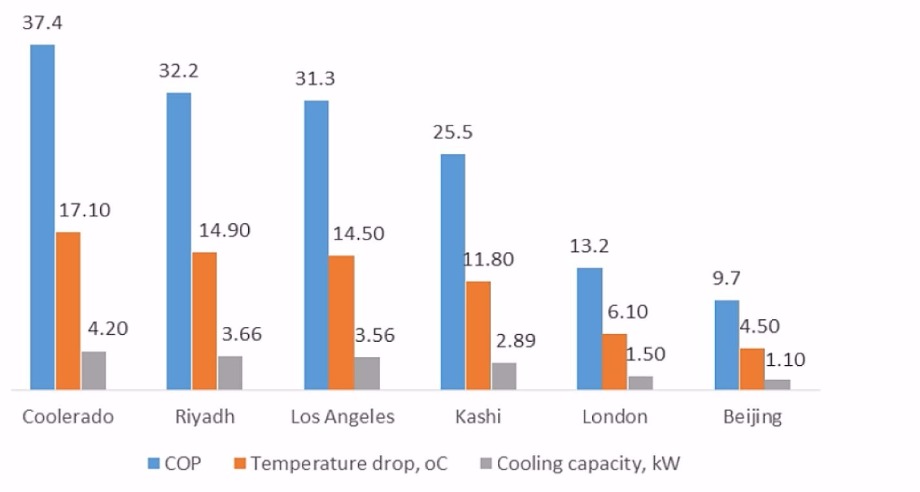

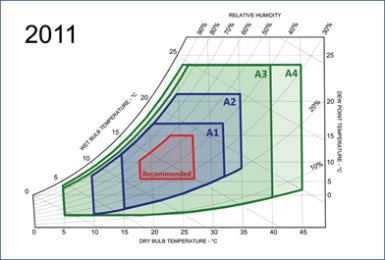

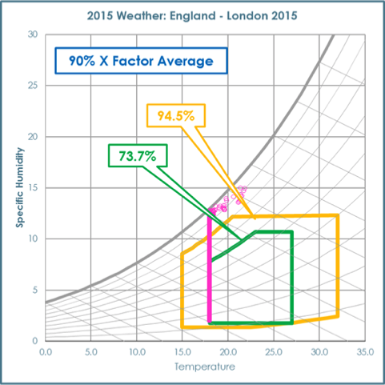

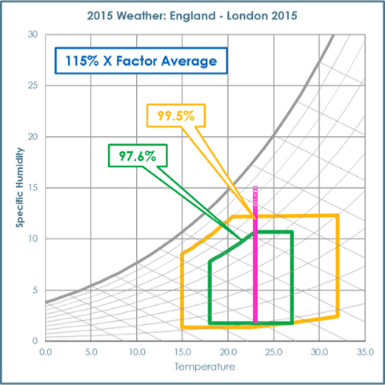

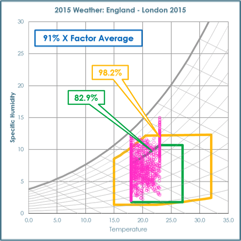

This super high performance has been validated by simulation and the prototype experiment carried out in Hull and other partners’ laboratories. The coefficient of performance (COP) of the proposed dew point cooling system reaches as high as 37.4 in ideal weather condition, while the average COP of traditional cooling system is around 3.0. The tested performance of the new system at various climatic conditions are depicted in Fig. 2.

Fig.2 Performance of the super performance dew point cooler at various climatic condition.

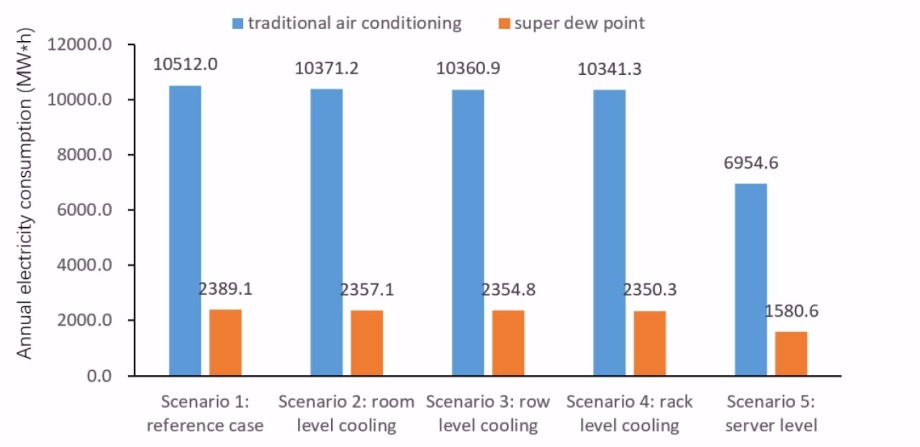

The dynamic simulation was also carried out under UK (London) climate for 4 scale type of CDCs (i.e. small, medium, large, super) and 5 application scenarios (room space level, row level, rack level, server level). The result shows dramatic annual electricity saving compare to the reference cases with traditional cooling plans, especially for the application at server level cooling. The annual energy consumption comparison for large scale CDC is provided as an example shown in Fig 3. The results also show that the bigger the CDC`s scale the more electricity would be saved by applying the super dew point air conditioning system.

The estimated annual electricity saving for the reference Data centres in UK were:

Fig.3 The annual energy consumption comparison of tradition and new cooling system for large scale CDC at various application scenario.

To summarise, the development, test and demonstration of the innovative super performance dew point cooling system for CDCs are going to be completed by 2020. The wide application of such high-performance cooling system will overcome the difficulties remaining with existing cooling systems, thus achieving significantly improved energy efficiency, enabling the low-carbon operation and realizing the green dream in CDCs.

Professor Xudong Zhao, BEng. MSc. DPhil. CEng. MCIBSE, is a Director of Research and Chair Professor at the School of Engineering and Computer Science, University of Hull (UK), and has enjoyed the global reputation as a distinguished academia in the areas of sustainable building services, renewable energy, and energy efficiency technologies. Over the 30 years of professional career, he has led or participated in 54 research projects funded by EU, EPSRC, Royal Society, Innovate-UK, China Ministry of Science and Technology and industry with accumulated fund value in excess of £14 million, 40 engineering consultancy projects worth £5 million, and claimed five patents. Up to date, he has supervised 24 PhD students and 14 postdoctoral research fellows, published 150 peer-reviewed papers in high impact journals and referred conferences; involved authorization of three books, chaired, organized, gave keynote (invited) speeches in 20 international conferences.

By Matthew Philo Product Manager – Denco Happel

The data centre industry continues to develop and innovate at a pace like no other, but this does not change core principles for the operations managers; they want simplicity and efficiency, but never at the cost of reliability. Matthew Philo, Denco Happel’s Product Manager CRAC, explains why these principles were central during the testing and development of their new free cooling solution.

As energy costs continue to rise, data centre owners and managers are looking for ways to reduce the amount of energy used by both IT and supporting infrastructure. Considering that the energy used for climate control and UPS systems can be around 40% of a data centre's total energy consumption1, efficient cooling systems can significantly cut carbon footprints and energy bills. Over the past year, we have been looking at a new way of combining free cooling with the reliability of mechanical cooling technology to help IT managers improve their Data Centre Infrastructure Efficiency (DCiE) and Power Usage Effectiveness (PUE).

The existing Multi-DENCO® range was taken as a starting point, which had introduced inverter compressors so that we could match heat rejection exactly to the room requirements. Whilst a data centre operates in a 24/7 environment, the cooling requirements vary throughout the day and across different seasons. This means that many units spent most of their life in part-load conditions, below 100% output.

It therefore made sense to increase efficiency at lower conditions to reap the biggest benefits. When we incorporated variable technology, such as EC fans and inverter compressors into our refrigerant-based, direct expansion (DX) Multi-DENCO® solution, it provided the opportunity to reduce energy consumption because it benefits from the ‘cube root’ principle. A good rule of thumb is a 20% reduction in speed will give a 50% reduction in energy consumption. This means if you can operate 80%, rather than 100%, very quickly you see your energy consumption halving.

However, this did not mean our progress on efficiency had finished. We realised that we could deliver further energy savings by exploiting the variability of the outdoor environment - in particular when it gets colder.

We knew that we needed to keep the full DX circuit within our design, to give the reliability that was required by our customers. But a refrigeration circuit does not benefit greatly from colder weather, so we focused on using indirect free cooling to provide suitably cold water to the indoor unit.

Outside of peak summer temperatures, this water circuit would reduce or remove the need for mechanical cooling (i.e. a direct expansion circuit), and the Multi-DENCO® F-Version was born.

In typical indoor conditions, 100% of the cooling requirements could be provided by the free-cooling circuit up to an outdoor temperature of 10°C. If the unit is operating in part-load conditions, it can continue to fully meet a datacentre’s cooling needs beyond this temperature, which means that the DX circuit’s compressor can be switched off for longer to save energy. To maintain the unit’s reliability, the free-cooling water circuit was kept separate to ensure that the DX circuit could operate independently if it was needed to fully meet the cooling load. A new EC water pump was chosen to give variable control and deliver the same energy-saving benefits offered by other models in the Multi-DENCO® range.

Whilst the benefits of 100% free cooling are easy to understand, the significant advantages of the mixed-mode operation can be easily overlooked. Mixed-mode, where both the free cooling and the direct expansion operate simultaneously, can be until 5 degrees below the indoor environment’s set point (for example a set point of 30°C would allow mix-mode until 25°C), which in Europe can be a large percentage of the year.

During those many hours of mix-mode operation, the ‘cube root’ principle is being exploited. The free cooling circuit may only be contributing a small percentage of the cooling, but it is also reducing the operating condition of the direct expansion circuit. As mentioned early, if the free cool circuit can provide 20% of what is required, then this is 20% less for the direct expansion circuit. This means the direct expansion circuit will save 50% in energy consumption when the unit is in mix-mode.

Energy consumption continues to be a key factor in the operational cost of a data centre. As datacentres come under mounting pressure to increase performance, managers want to take advantage of any efficiency options available. By combining the reliability of a direct expansion circuit with a simple indirect free cooling circuit, energy efficiency can be improved without risking interruptions to a datacentre’s critical operations.

For more information on DencoHappel’s Multi-DENCO® range, please visit http://www.dencohappel.com/en-GB/products/air-treatment-systems/close-control/multi-denco

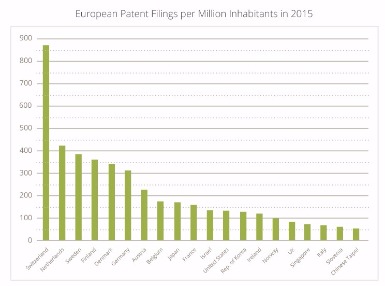

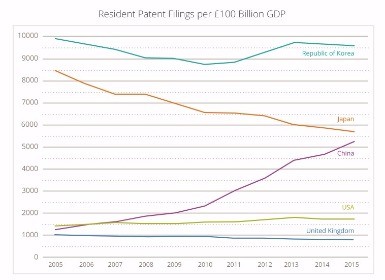

A recent document published by the European Patent Office (EPO) includes a graph which claims to be “measuring inventiveness” of the world’s leading economies using the ratio of European patent filings to population[1].

The data, reproduced in the graph (top right), shows the number of European patent filings per million inhabitants in 2015. Switzerland comes out on top, with 873 applications per million inhabitants, whilst the UK sits 16th on the list with only 79 applications per million inhabitants. This means that Switzerland has over ten times as many European patent filings as the UK, per million inhabitants.

Additional data, provided by the World Intellectual Property Organisation (WIPO)[2], shows resident patent filings per £100bn GDP for the last 10 years - see the graph (bottom right). The UK is at the bottom of the pile, flat-lining at only about one filing per £100m GDP. In 2015, the USA beat the UK by a factor of about two and Korea beat the UK by a factor of over ten.

These graphs show slightly different things. One shows European patent filings, the other shows resident patent filings (i.e. filing in a resident’s “home” patent office). However they both make the same point loud and clear - UK companies file significantly fewer patent applications, in relative terms, than their competitors in other countries.

What is less clear is why the numbers are so low. Broadly speaking, there are two possible explanations.

One is that the UK really is less inventive than the rest of the world - as the EPO graph would have you believe. We would like to think that’s not true - the UK is renowned in the world of innovation, with UK inventors famously having invented the telephone, the world wide web, and recently even the holographic television, to name but a few.

A more plausible explanation is that the UK has a different patent filing “culture”, which originates from a number of factors:

£24bn takeover was the biggest ever tech deal in the UK, and the majority of that value can be attributed to ARM’s patent portfolio.

So the reasons are many and varied, but the message to UK companies is clear: your international competitors are likely to be filing more patents than you, and you need a strategy that takes this into account. This might involve filing more patent applications, or simply becoming more aware of your competitors’ patent portfolios.

Withers & Rogers is one of the leading intellectual property law firms in the UK and Europe. They offer a free introductory meeting or telephone conversation to companies that need counsel on matters relating to patents, trademarks, designs and strategic IP. For more information call 020 7940 3600 or visit www.withersrogers.com

[1] EPO Facts and figures 2016, page 15

Jim Ribeiro

Matthew Pennington

This year, global cloud computing revenue in all its guises will grow 18 per cent to $247 billion. The number of connected ‘things’ is also forecast to grow significantly. Gartner predicts that by the end of 2017, around 8.4 billion devices will be in use worldwide.

By Jackson Lee, VP of Corporate Development at Colt DCS.

The ripple effects of these market forces will be felt by data centre providers in several ways. The mainstream larger cloud service providers will continue to build more compute capacity, networking and storage. This will be in the form of hyperscale server farms, designed to accommodate growing data demands and workloads. To appreciate the scale of transactions today, consider that as I write this, Twitter is handling over 500 million tweets a day. Meanwhile, payment network provider Visa is capable of processing more than 24,000 transaction per second.

We’re also seeing hyperscale demand expand into new areas as cheaper compute power and sensors drive adoption of digital technologies in emerging markets. In industries such as manufacturing, machine-to-machine interactions directed by the Internet of Things (IoT) are creating new hyperscale segments. A good example is engineering giant General Electric (GE). A pair of its jet engines on a Boeing 787 Dreamliner generate a terabyte of information per day.

On the opposite side of the same coin is the evolution of edge computing and micro-data centres.

When applications and data are moved from centralised points to the outer layers of a traditional internet hubs, the distance between users and that data inevitably narrows. It makes delivering the right information at the right time to the user or the device quicker and more efficient. The increase in interconnectivity between machines, applications and other IoT-based devices using cloud providers is directly tied to this trend. As virtual reality (VR), the connected home and driverless cars emerge as mainstream products and services, a latency-centred product that sits closer to the user is key.

Today, almost every company and user requires near-instant access to data in order to be successful. This might explain why edge computing has been publicised as the next multibillion-dollar tech market. Organisations across the board are increasingly looking to double-down on customer experience through the delivery of services, content and data in real-time.

The growing adoption of digitalisation has given rise to new forms of competition and lifestyle improvement for end users. However, more digitalisation also presents significant resource and data processing challenges.

Firstly, a data centre strategy that combines hyperscale and edge computing into one, or chooses one over the other, is neither cost effective or competitive. It is no longer practical for every connected device or application to use the cloud in the same way smartphones do. Consider the millions of connected artificially intelligent devices, medical equipment, manufacturing robots and VR headsets in use today. The strain on network bandwidth and speed that these devices can pose soon makes sense. In short, it is highly likely that the user experience of such devices will rapidly deteriorate if congestion and latency is not addressed.

This is why a hybrid strategy – one that welcomes both full hyperscale (centralised) and edge (decentralised) computing – is so important. If the type of product or service offered is not latency or bandwidth-driven (e.g. the billing process after a transaction has been made on Amazon) it makes more sense to host it in the server farm that sits out of town away from the user. Low-level processing, backup or storage are other examples to mention.

However, technologies such as drones, driverless cars and connected fridges are latency-sensitive they require more “edge” locations so that the information can be distributed quicker and the distance between device and data narrowed, thus improving the end user experience. These products produce too much data for it to be processed in a location far away. In order to function effectively and meet the demands of the user, the products need immediate results. This is particularly true of driverless cars.

Edge computing will continue to grow in importance over the next decade as the world of “connected things” continues to unfold. These data centres will play a key role addressing issues including availability, latency and bandwidth. However, these edge nodes will be no more important than large, centralised server farms that allow organisations to continually scale the IT load to meet user demands.

The future is undoubtedly a hybrid one where organisations have the best of both worlds: the separation of edge and hyperscale data centres so that workloads and content demands are shared and distributed based on enhancing the customer experience with your brand.

Technological innovations drive the data centre infrastructure and enterprise environment where capacity, data speed, latency, bandwidth, and security are daily priorities for developers and operators. This speed of change has given rise to suggestions that Standard Development Organisations (SDOs) are losing influence in the market, especially in niche high performance segments, such as finance which require economically beneficial solutions fast, whether standards based or otherwise.

By Michael Akinla, Manager of Technical System Engineers, EMEA, Panduit.

The incredible growth in data requirements inevitably leads to innovative technology solutions. In the past 10 years, we have witnessed and helped drive the development of 1GBase-T, 10GBase-T, 25GBase-T, 40GBase-T and now 100GBase-T which are amazing achievements. In some instances, there are spikes in the technology roadmap where innovation may leap-frog the plan, nonetheless, the standards are crucial to that roadmap and provide wider technical and economic benefits across the industry.

As an organisation that contributes time and expertise to various committees, within the IEEE, TIA, Fibre Channel, IEC and BSI across the globe, we have witnessed a marked positive change in the attitude of standards bodies to remain relevant and ‘fit for purpose’. In a highly competitive, performance orientated industry, why do we rely on voluntary policy-based rules and guidelines to provide the ‘general’ direction of the technologies within the market? Standards continue to serve the data centre and enterprise environments by facilitating trade through common performance levels, vendor interoperability and finally customer choice, and these cannot be over emphasised in a global market.

We are observing more instances of organisations circumnavigating traditional SDOs and using less formalised Multi-Source Agreements (MSAs), alliances and consortiums to produce de-facto standards. This is particularly true for application setting standards such as IEEE, while individual components, for example fibre optic connectors and cable, remain largely under the purview of traditional SDOs, a MSA faction has developed PSM4, Parallel Single Mode (4 Channel) a multi-vendor, multi-technology optical interface standard for 100Gbps optical interconnect. A reason that we are seeing this shift is that MSAs with fewer members and common perspectives on the technology allows a faster consensus on a standard. This development can provide a short-cut route on to the SDO agenda for a technology solution. Either way, the fundamental value of a standard is largely maintained regardless of the organisation that produces it.

Standards do not constrain technology breakthroughs, rather the SDO roadmaps offer targets for innovators to reach for, developing new insights and products in their efforts to gain market advantage. Standards also provide a platform for secondary product development, where an innovation may not be specified within the standard, but is backwards compatible to it, and offers competitive advantages. For example, 28-AWG patch cords, the standard prescribes 24-AWG copper cable, however, the 28-AWG cabling has significantly smaller diameter wire, offering 41 percent space saving and providing Cat6, Cat6A and Cat5e capabilities, making access to the cabinets, patch panels and cable housing easier and less likely to cause line interference when MACs take place.

Standards by their nature or SDO design are Open Source to enable the widest possible involvement in their development and application. SDOs review competing technologies designed to solve the next generation challenges and deliberate at length to achieve an approach which generates the critical mass necessary for a solution to be ratified. At this point an unsuccessful alternative technology may splinter off and continue development within an MSA or a proprietary market leader and reappear in the market later. In all circumstances, there has been valuable in-depth peer evaluation of the technology and all parties will gain from the process.

We are encouraged to take an active role in shaping the standard organisations that we participate in. While some organisations simply monitor, or contribute to the administration of the standards organisations, we take an active role in the technical aspects of the standards development process. Although many of our technical innovations are applied to differentiate our products, we recognise that our expertise can be applied within the standards community to advance the industry. For example, the contribution we have made in advanced testing of fibre optic channels and components. This has demonstrated improvements in the reliability of fibre optic channels across the industry.

Standards also generate a substantial economic benefit, not only to individual manufactures and organisations, also to the market and the economy in general. The impact of standards on global trade is illuminating, a 2015 BSI (British Standards Institute) report – ‘The Economic Contribution of Standards to the UK Economy’ states that Standards are essential for opening new markets, linking UK companies into global supply chains and reducing technical barriers to trade. The report illustrated that standards compliance is hugely influential in boosting the sales of UK products and services abroad, with reported impacts averaging 3.2percent, equivalent to £6.1 billion per year in additional exports.

The research also indicates that the industry sectors which are the most intensive users of standards are the most productive, outpacing the economy as a whole by a factor of four.

Technical standards also ensure process information and product descriptions match the expectations of suppliers and purchasers across the globe. Standards organisations distribute technical knowledge ensuring information is readily accessible to all firms. This allows for an efficient exchange of information, which assists in reducing transaction costs. This allows third party organisations to provide value into the supply chain, through distribution or consultancy activities further increasing economic activity. Global supply chains are the key to economic development and would be unthinkable without the standards’ guarantee of product and service compliance. This assurance allows data centres to be built across the globe by local contractors, to the same specifications with interoperable systems.

Standardising components is essential in complex industries such as ICT and data centres where components maybe sourced from hundreds if not thousands of suppliers. Each data center is composed of thousands of separate parts sourced from hundreds of companies across the supply chain. Site developers use both internal and international standards of effectively communicate technical requirements to their suppliers, and the vast majority of these devices and components need to be interoperable and easily replaceable.

Independent Testing Laboratories or third party test facilities by their nature offer to the consumer when done right the assurance that the product that is being bought is compliant to the required standards. This can be used as a measure of where a manufactured product sits when compared to its peers.

Table 1. Summary of types of standards and the economic problems they solve

| Standard Type | Positive Impact | Negative Impact |

| Facilitating interoperability of products and processes | · Network externalities · Avoids lock-in of old technologies · Increases choice of suppliers · Promotes efficiency in supply chains | · Can lock in old technologies in the case of strong network externalities |

| Efficient reduction in the variety of goods & services | · Generates economies of scale · Fosters critical mass in emerging technologies and industries | · Can restrict choice · Can increase market concentration · Can lead to premature selection of technologies |

| Efficient quality & promoting efficiency | · Helps avoid adverse selection · Creates trust · Reduces transaction costs | · Can be misused to raise rival’ costs |

| Efficient distribution of technical information | · Helps reduce transaction costs by helping to eliminate information asymmetries · Diffuses codified knowledge | · Can result in excessive influence of dominant players on regulatory agencies |

It is important to remember that standards represent a useful and often superior policy alternative to government regulation, which would need to be developed independently per country, or trading block by block. The legitimacy of the voluntary standard achieved within industry through the consensus process provides a clear demonstration of the high standing SDOs have gained through the quality and consistency they guarantee to a global market.

Atchison Frazer from Talari explores how SD-WAN technology is helping to deliver robust enterprise cloud applications.

The mass adoption of the cloud has resulted in a decline of traditional enterprise WAN traffic from a business and its branches to the corporate data centre, or from one remote site to another. But what we have seen is an explosion in the volume of enterprise internet traffic driven by digital transformation initiatives and the migration of business-critical apps to hybrid-cloud architectures.

What started as projects around file sharing and remote data access has rapidly escalated into the adoption of cloud-native Software-as-a-Service (SaaS) applications and the mass relocation of on-premises IT systems to the cloud. Many companies have now re-architected key applications to take advantage of Platform-as-a-Service (PaaS), which makes the ability to optimise application performance from the LAN to the WAN to the Cloud, even more significant.