Hype is an occupational hazard of the IT industry. Whatever is the next big thing, no one has ever seen anything like it, and, whatever it is, it’s going to revolutionise the world! Virtualisation was going to solve world hunger, eliminate disease and ensure the eternal prosperity of all who invested in the technology. In reality, virtualisation wasn’t even a new idea, but an old one whose time had finally come, and it didn’t quite deliver on the promises.

Move forward and Cloud assumed the ‘Messiah mantle’ – everyone was going to move their IT to someone’s cloud, obtaining massive cost-savings and agility, and solving world hunger… Fast forward, and we’ve all realised that Cloud might be more than just another name for outsourcing, but the basic idea isn’t that new; it’s just that its time has arrived, thanks to technology developments that make it possible.

Soon after I first started in the storage networking industry, in the late 1990s, a few future-thinking organisations set themselves up as Storage Service Providers. They didn’t survive – lack of bandwidth and speed put paid to the idea that moving around large quantities of data made any kind of financial, or operational, sense. And, of course, plenty of IT has been outsourced before, often with less than impressive results – but this time we’re believing, and to a certain extent experiencing, that it is going to work better.

And so we arrive at the edge – the radical, stupendous new idea that it makes sense to move lots of content closer to the consumer and/or process lots of locally produced content at source, rather than haul it back to a centralised data centre. Ah, but hasn’t the industry spent the last few years demolishing the idea of a distributed IT infrastructure, collapsing all manner of remote, branch office IT operations into fewer and fewer, larger and larger centralised facilities?

Yes, but now it’s time to start putting that data back where it’s most needed – so, plenty of it will be created, processed and, at least for a short while, stored at the edge, and plenty more will be created, processed and stored centrally. Oh, and yet more data will move between the edge and the centre. Of course, there are very good reasons why this ‘new’ edge is gaining traction, and the technology to achieve a hybrid IT infrastructure – a mix of centralised and distributed resources – is rather more advanced, hence more efficient and affordable, than that of the previous distributed compute era.

But, amidst all the edge hype, let’s not get too carried away and pretend that what’s going on is mind-bogglingly original. History really does repeat itself (both the good and the bad). If there is anything strikingly new about the modern IT era it is the eventual realisation that there is no one-size-fits-all solution. Hybrid is the future – whether it’s IT, cars or anything else. The clever bit is knowing which resources to use, where and when, for any data transaction. Automation, artificial intelligence, neural networks and the like – the tools that will help make these data decisions – these are the next big thing. Ah, but I remember talking to folks about neural networks back in the 90s…

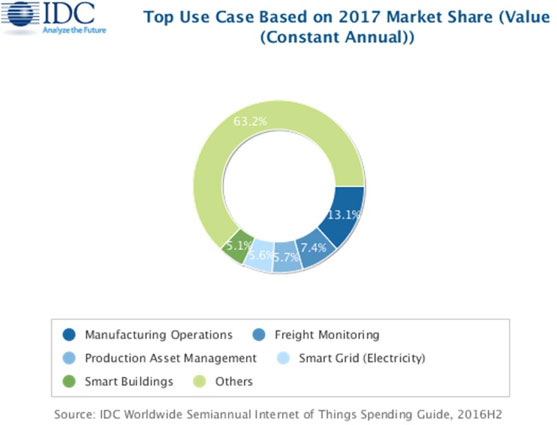

A new update to the International Data Corporation (IDC) Worldwide Semiannual Internet of Things Spending Guide forecasts worldwide spending on the Internet of Things (IoT) to grow 16.7% year over year in 2017, reaching just over $800 billion. By 2021, global IoT spending is expected to total nearly $1.4 trillion as organizations continue to invest in the hardware, software, services, and connectivity that enable the IoT.

"The discussion about IoT has shifted away from the number of devices connected," said Carrie MacGillivray, vice president, Internet of Things and Mobility at IDC. "The true value of IoT is being realized when the software and services come together to enable the capture, interpretation, and action on data produced by IoT endpoints. With our Worldwide IoT Spending Guide, IDC provides insight into key use cases where investment is being made to achieve the business value and transformation promised by the Internet of Things."

The IoT use cases that are expected to attract the largest investments in 2017 include manufacturing operations ($105 billion), freight monitoring ($50 billion), and production asset management ($45 billion). Smart grid technologies for electricity, gas and water and smart building technologies are also forecast to see significant investments this year ($56 billion and $40 billion, respectively). While these use cases will remain the largest areas of IoT spending in 2021, smart home technologies are forecast to experience strong growth (19.8% CAGR) over the five-year forecast. The use cases that will see the fastest spending growth are airport facilities automation (33.4% CAGR), electric vehicle charging (21.1% CAGR), and in-store contextual marketing (20.2% CAGR).

The industries making the largest IoT investments in 2017 are Manufacturing ($183 billion), Transportation ($85 billion), and Utilities ($66 billion). Cross-Industry IoT investments, which represent use cases common to all industries, such as connected vehicles and smart buildings, will be $86 billion in 2017 and rank among the top segments throughout the five-year forecast. Consumer IoT purchases will be the fourth largest market segment in 2017 at $62 billion, but will grow to become the third largest segment in 2021. Meanwhile, the industries that will see the fastest spending growth are Insurance (20.2% CAGR), Consumer (19.4%), and Cross-Industry (17.6%).

From a technology perspective, hardware will be the largest spending category until the last year of the forecast when it will be overtaken by the faster growing services category. Hardware spending will be dominated by modules and sensors that connect end points to networks, while software spending will be similarly dominated by applications software. Services spending will be about evenly split between ongoing and content services and IT and installation services. The fastest growing areas of technology spending are in the software category, where horizontal software and analytics software will have five-year CAGRs of 29.0% and 20.5%, respectively. Security hardware and software will also see increased investment, growing at 15.1% and 16.6% CAGRs, respectively.

"As enterprises are adopting to new and innovative services provided by different vendors a lot of new threats are introduced, so it's very important to upgrade existing security systems to ensure that an optimal business outcome can be reached and ROI can be justified," said Ashutosh Bisht, research manager for IT Spending across APeJ.

Asia/Pacific (excluding Japan) (APeJ) will be the IoT investment leader throughout the forecast with spending expected to reach $455 billion in 2021. The second and third largest regions will be the United States ($421 billion in 2021) and Western Europe ($274 billion). Manufacturing will be the leading industry for IoT investments in all three regions, followed by Utilities and Transportation in APeJ and Western Europe, and Transportation and Consumer in the United States. Cross-Industry IoT spending will be among the leading categories in all three regions as well. The regions that will experience the fastest growth in IoT spending are Latin America (21.7% CAGR), the Middle East and Africa (21.6% CAGR), and Central and Eastern Europe (21.2% CAGR).

More than a quarter of enterprises globally have not built, customized or virtualized any mobile apps in the last 12 months, according to the latest mobile app survey by Gartner, Inc.

This number is surprisingly high, Gartner analysts said, but it is still down from the year before. In the 2016 survey, 39 percent of respondents said they had not built, customized or virtualized any mobile apps in the previous 12 months.

Adrian Leow, research director at Gartner, said that enterprises are responding slowly to increasing demand for mobile apps.

"Many IT teams will have significant backlogs of application work that need completing, which increases the risk of lines of business going around IT to get what they want sooner," said Mr. Leow. "Development teams need to rethink their priorities and span of control over mobile app development or risk further erosion of IT budgets and the perceived value of IT development."

According to the survey, those enterprises that have undertaken mobile app development have deployed an average of eight mobile apps to date, which has remained relatively flat when compared with 2016. On average, another 2.6 mobile apps are currently being developed and 6.2 are planned for the next 12 months, but not yet in development.

"It's encouraging to see significant growth in the number of mobile apps that are planned, but most of this growth is in mobile web apps as opposed to native or hybrid mobile apps," said Mr. Leow. "This indicates that some enterprises may be frustrated with developing mobile apps and are instead refocusing on responsive websites to address their mobile needs."

Gartner's survey reveals that 52 percent of respondents have begun investigating, exploring or piloting the use of bots, chatbots or virtual assistants in mobile app development, which is surprisingly high given how nascent these technologies are. Gartner refers to these as postapp technologies that belong to an era where the traditional app — obtained from an app store and installed onto a mobile device — will become just one of a wide range of ways that functionality and services will be delivered to mobile users. Application leaders need to understand the different postapp technologies that are emerging to ensure their mobile app strategies remain relevant and succeed.

"While this response may be more indicative of greater awareness of these technologies than of anything else, it's still good to see that enterprises have begun to consider these technologies, because they will grow in importance relatively rapidly," said Mr. Leow.

According to the survey, the primary barriers to mobile initiatives are resources related — lack of funds, worker hours and skills gaps. Cost concerns are pervasive in IT organizations so this is not surprising, but it points to the need to enhance productivity with the budgets that IT development organizations already have. Other barriers include a lack of business benefits and ROI justification; however, a lack of understanding of customer needs may contribute to this.

In terms of spending, the survey revealed that organizations' actual IT spend on mobile apps is consistently lower than they forecast. Despite 68 percent of organizations expecting to increase spending for mobile apps, the average proportion of the overall software budget is only 11 percent. Those that plan on increasing spending in 2017 expect to do so by 25 percent over last year. For the past few years, Gartner's research has shown that while organizations have indicated that they will increase their mobile app development budget spend, the reality is that spending allocation has decreased.

"Application leaders must turn around this trend of stagnating budgeted spend on mobile app development, as employees increasingly have the autonomy to choose the devices, apps and even the processes with which to complete a task," said Mr. Leow. "This will place an increasing amount of pressure on IT to develop a larger variety of mobile apps in shorter time frames."

In the first quarter of 2017, worldwide server revenue declined 4.5 percent year over year, while shipments fell 4.2 percent from the first quarter of 2016, according to Gartner, Inc.

"The first quarter of 2017 showed declines on a global level with a slight variation in results by region," said Jeffrey Hewitt, research vice president at Gartner. "Asia/Pacific bucked the trend and posted growth while all other regions fell.

"Although purchases in the hyperscale data center segment have been increasing, the enterprise and SMB segments remain constrained as end users in these segments accommodate their increased application requirements through virtualization and consider cloud alternatives," Mr. Hewitt said.

Hewlett Packard Enterprise (HPE) continued to lead in the worldwide server market based on revenue. The company posted just more than $3 billion in revenue for a total share of 24.1 percent for the first quarter of 2017 (see Table 1). Dell EMC maintained the No. 2 position with 19 percent market share. Dell EMC was the only vendor in the top five to experience growth in the first quarter of 2017.

Table 1: Worldwide: Server Vendor Revenue Estimates, 1Q17 (U.S. Dollars)

| Company | 1Q17 Revenue | 1Q17 Market Share (%) | 1Q16 Revenue | 1Q16 Market Share (%) | 1Q17-1Q16 Growth (%) |
|---|---|---|---|---|---|
| HPE | 3,009,569,241 | 24.1 | 3,296,591,967 | 25.2 | -8.7 |
| Dell EMC | 2,373,171,860 | 19.0 | 2,265,272,258 | 17.3 | 4.8 |
| IBM | 831,622,879 | 6.6 | 1,270,901,371 | 9.7 | -34.6 |
| Cisco | 825,610,000 | 6.6 | 850,230,000 | 6.5 | -2.9 |
| Lenovo | 731,647,279 | 5.8 | 871,335,542 | 6.7 | -16.0 |
| Others | 4,737,196,847 | 37.9 | 4,537,261,457 | 34.7 | 4.4 |
| Total | 12,508,818,106 | 100.0 | 13,091,592,596 | 100.0 | -4.5 |

Source: Gartner (June 2017)
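For readers who want to check the derived columns in Table 1, here is a minimal Python sketch. It assumes the usual definitions (share is vendor revenue over total revenue; growth is the year-over-year change relative to 1Q16), which the release does not spell out. The function and variable names are illustrative only.

```python
# Revenue figures copied from Table 1 (U.S. dollars): (1Q17, 1Q16).
REVENUE = {
    "HPE": (3_009_569_241, 3_296_591_967),
    "Dell EMC": (2_373_171_860, 2_265_272_258),
    "IBM": (831_622_879, 1_270_901_371),
}
TOTAL_1Q17 = 12_508_818_106  # "Total" row, 1Q17 revenue


def market_share(revenue: float, total: float) -> float:
    """Vendor revenue as a percentage of total market revenue."""
    return 100.0 * revenue / total


def yoy_growth(current: float, previous: float) -> float:
    """Year-over-year change as a percentage of the earlier period."""
    return 100.0 * (current - previous) / previous


if __name__ == "__main__":
    for vendor, (q1_17, q1_16) in REVENUE.items():
        share = market_share(q1_17, TOTAL_1Q17)
        growth = yoy_growth(q1_17, q1_16)
        print(f"{vendor}: share {share:.1f}%, growth {growth:+.1f}%")
```

Rounded to one decimal place, these definitions reproduce the share and growth values listed for the three vendors above.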

In server shipments, Dell EMC secured the No. 1 position in the first quarter of 2017 with 17.9 percent market share (see Table 2). The company had a slight increase of 0.5 percent growth over the first quarter of 2016. Despite a decline of 16.7 percent, HPE secured the second spot with 16.8 percent of the market. Inspur Electronics experienced the highest growth in shipments with 27.3 percent.

Table 2: Worldwide: Server Vendor Shipments Estimates, 1Q17 (Units)

| Company | 1Q17 Shipments | 1Q17 Market Share (%) | 1Q16 Shipments | 1Q16 Market Share (%) | 1Q17-1Q16 Growth (%) |
|---|---|---|---|---|---|
| Dell EMC | 466,800 | 17.9 | 464,292 | 17.1 | 0.5 |
| HPE | 438,169 | 16.8 | 526,115 | 19.4 | -16.7 |
| Huawei | 156,559 | 6.0 | 130,755 | 4.8 | 19.7 |
| Lenovo | 145,977 | 5.6 | 199,189 | 7.3 | -26.7 |
| Inspur Electronics | 139,203 | 5.4 | 109,390 | 4.0 | 27.3 |
| Others | 1,254,892 | 48.2 | 1,286,097 | 47.4 | -2.4 |
| Total | 2,601,600 | 100.0 | 2,715,138 | 100.0 | -4.2 |

Source: Gartner (June 2017)

Growth in worldwide cloud-based security services will remain strong, reaching $5.9 billion in 2017, up 21 percent from 2016, according to Gartner, Inc. Overall growth in the cloud-based security services market is above that of the total information security market. Gartner estimates the cloud-based security services market will reach close to $9 billion by 2020 (see Table 1).

SIEM, IAM and emerging technologies are the fastest growing cloud-based security services segments.

"Email security, web security and identity and access management (IAM) remain organizations' top-three cloud priorities," said Ruggero Contu, research director at Gartner. Mainstream services that address these priorities, including security information and event management (SIEM) and IAM, and emerging services offer the most significant growth potential. Emerging offerings are among the fastest-growing segments and include threat intelligence enablement, cloud-based malware sandboxes, cloud-based data encryption, endpoint protection management, threat intelligence and web application firewalls (WAFs).

Table 1: Worldwide Cloud-Based Security Services Forecast by Segment (Millions of Dollars)

| Segment | 2016 | 2017 | 2018 | 2019 | 2020 |
|---|---|---|---|---|---|
| Secure email gateway | 654.9 | 702.7 | 752.3 | 811.5 | 873.2 |
| Secure web gateway | 635.9 | 707.8 | 786.0 | 873.2 | 970.8 |
| IAM, IDaaS, user | 1,650.0 | 2,100.0 | 2,550.0 | 3,000.0 | 3,421.8 |
| Remote vulnerability assessment | 220.5 | 250.0 | 280.0 | 310.0 | 340.0 |
| SIEM | 286.8 | 359.0 | 430.0 | 512.1 | 606.7 |
| Application security testing | 341.0 | 397.3 | 455.5 | 514.0 | 571.1 |
| Other cloud-based security | 1,051.0 | 1,334.0 | 1,609.0 | 1,788.0 | 2,140.0 |
| Total Market | 4,840.1 | 5,850.8 | 6,862.9 | 7,808.8 | 8,923.6 |

IDaaS = identity and access management as a service

Note: Numbers may not add to totals shown due to rounding.

Source: Gartner (June 2017)
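The rounding note and the headline growth figure can both be checked against the table. The Python sketch below is an illustration, not Gartner's method: it sums each year's segments, compares the result with the published total, and derives the 2016 to 2017 growth rate. The names `SEGMENTS`, `column_sums` and `rounding_gaps` are hypothetical.

```python
# Segment forecasts copied from the table (millions of dollars), 2016-2020.
SEGMENTS = {
    "Secure email gateway": [654.9, 702.7, 752.3, 811.5, 873.2],
    "Secure web gateway": [635.9, 707.8, 786.0, 873.2, 970.8],
    "IAM, IDaaS, user": [1650.0, 2100.0, 2550.0, 3000.0, 3421.8],
    "Remote vulnerability assessment": [220.5, 250.0, 280.0, 310.0, 340.0],
    "SIEM": [286.8, 359.0, 430.0, 512.1, 606.7],
    "Application security testing": [341.0, 397.3, 455.5, 514.0, 571.1],
    "Other cloud-based security": [1051.0, 1334.0, 1609.0, 1788.0, 2140.0],
}
PUBLISHED_TOTALS = [4840.1, 5850.8, 6862.9, 7808.8, 8923.6]


def column_sums(segments):
    """Sum every segment for each forecast year."""
    return [sum(year_values) for year_values in zip(*segments.values())]


def rounding_gaps(segments, totals):
    """Published total minus summed segments, per year."""
    return [total - s for s, total in zip(column_sums(segments), totals)]


def growth_percent(current, previous):
    """Percentage change from the previous period."""
    return 100.0 * (current - previous) / previous


if __name__ == "__main__":
    for year, gap in zip(range(2016, 2021), rounding_gaps(SEGMENTS, PUBLISHED_TOTALS)):
        print(f"{year}: gap between published total and segment sum = {gap:.1f}")
    print(f"2017 growth: {growth_percent(PUBLISHED_TOTALS[1], PUBLISHED_TOTALS[0]):.1f}%")
```

Any gap of a tenth of a million dollars or so is consistent with the rounding note beneath the table, and the 2017 growth figure lands close to the 21 percent quoted in the text.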

Increasing security threats, operational and cost benefits and staffing pressure drive market growth.

Small and midsize businesses (SMBs) are driving growth as they are becoming increasingly aware of security threats. They are also seeing that cloud deployments provide opportunities to reduce costs, especially for powering and cooling hardware-based security equipment and data center floor space.

"The cloud medium is a natural fit for the needs of SMBs. Its ease of deployment and management, pay-as-you-consume pricing and simplified features make this delivery model attractive for organizations that lack staffing resources," said Mr. Contu.

The enterprise sector is also driving growth as it realizes the operational benefits derived from a cloud-based security delivery model.

"Cloud-based delivery models will remain a popular choice for security practices, with deployment expanding further to controls, such as cloud-based sandboxing and WAFs," said Mr. Contu. According to a global survey conducted by Gartner at the beginning of 2016, public cloud will be the prime delivery model for more than 60 percent of security applications by the end of 2017.

"The ability to leverage security controls that are delivered, updated and managed through the cloud — and therefore require less time-consuming and costly implementations and maintenance activities — is of significant value to enterprises," said Mr. Contu.

Cloud-based security services market growth presents opportunities and challenges for providers.

"On the one hand, new greenfield demand arising from emerging requirements from SMBs is driving growth. On the other hand, new competitive dynamics and alternative pricing practices threaten traditional business models," said Mr. Contu. Providers need to adapt to the shift from an on-premises to a cloud-delivery business model. "Overall, one of the main focus areas for providers relates to the shift from owning and selling a product, to selling and supporting ongoing service delivery."

The worldwide Ethernet switch market (Layer 2/3) recorded $5.66 billion in revenue in the first quarter of 2017 (1Q17), an increase of 3.3% year over year. Meanwhile, the worldwide total enterprise and service provider (SP) router market recorded $3.35 billion in revenue in 1Q17, decreasing 3.7% on a year-over-year basis. These growth rates are according to results published in the International Data Corporation (IDC) Worldwide Quarterly Ethernet Switch Tracker and Worldwide Quarterly Router Tracker.

From a geographic perspective, the 1Q17 Ethernet switch market once again recorded its strongest growth in the Middle East and Africa (MEA) region, which increased a solid 9.1% year over year. At the country level, the United Arab Emirates (up 38.2% year over year) and South Africa (up 25.6% year over year) were among the standouts. Western Europe also saw strong growth, increasing 6.0% year over year in 1Q17, with Belgium (up 27.6% year over year) and Sweden (up 12.0% year over year) as growth pacesetters. Asia/Pacific (excluding Japan) (APeJ) grew at a rate just above the overall market with a 3.5% year-over-year increase in 1Q17. New Zealand (up 20.5% year over year) was the regional growth leader in 1Q17. North America grew at a below-market rate in 1Q17, increasing 2.5% on a year-over-year basis, with Canada increasing at a stronger 4.5%.

Latin America experienced flat-to-declining performance in 1Q17, with a 0.4% contraction year-over-year. Argentina was a bright spot in the quarter, growing 57.4% on an annualized basis. Japan, in a reversal from the previous quarter, declined 0.6%. Central and Eastern Europe saw the steepest decline of 1Q17, contracting 2.4% year-over-year, as declines in Poland (down 30.4% year-over-year) and Czech Republic (down 29.2%) weighed on the region.

"The Ethernet switch market, across the enterprise and Datacenter segments, is characterized by two competing forces: faster speeds and increased standardization," said Rohit Mehra, vice president, Network Infrastructure at IDC. "Both forces drive port shipments up, but price erosion from standardization and product maturity means that improved price-performance becomes more important across regions. That said, continued penetration of cloud coupled with the digital transformation imperative will drive market growth throughout 2017."

10Gb Ethernet switch (Layer 2/3) revenue decreased 1.6% year over year in 1Q17, coming in at $1.98 billion, while 10Gb Ethernet switch port shipments grew 23.9% year over year with over 10.7 million ports shipped in 1Q17. 40Gb Ethernet revenue came in at $609.5 million in 1Q17, declining 7.2% over 1Q16, while port shipments fell just below 1.3 million, representing a decrease of 21.9% year over year. 10Gb and 40Gb Ethernet are now joined by emerging 100Gb Ethernet (revenue up 323.5% and shipments up 776.4% on an annualized basis in 1Q17) to be the primary drivers of the overall Ethernet switch market. 1Gb Ethernet switch revenue decreased 0.5% year over year in 1Q17, despite an 11.0% increase in port shipments in the same period, pointing to a maturing campus segment.

The worldwide enterprise and service provider router market contracted 3.7% on a year-over-year basis in 1Q17 based on a 4.4% decrease in the larger service provider segment and a 1.4% decrease in enterprise routing. This will be a market to watch closely over the coming quarters as software-defined architectures start to take hold across the WAN, with the potential for SD-WAN to disrupt traditional routing architectures and WAN transport services markets, especially at the network edge.

The combined enterprise and service provider router market saw a varied regional performance in 1Q17, with APeJ recording the strongest growth (up 8.8% year over year). Japan, the only other region to record growth, increased 5.2% year over year in 1Q17. MEA was down 1.0%, while CEE declined 4.1% over the period. Other regions saw greater declines in 1Q17. Western Europe was down 7.1% on an annualized basis, while North America contracted 9.8%. Latin America saw the steepest decline of all, decreasing 23.4% over 1Q16.

Vendor Highlights

Cisco finished 1Q17 with a year-over-year decline of 3.5% in the Ethernet switching market and market share of 55.1%, down from its 55.6% share in 4Q16 and down from 59.0% in 1Q16. In the vigorously contested 10GbE segment, Cisco held 52.4% of the market in 1Q17, down from 53.0% in the previous quarter. Cisco saw its combined service provider and enterprise router revenue decrease 13.3% on an annualized basis, while its market share came in at 43.9% in 1Q17, up from 42.2% in 4Q16, but down from 48.8% in 1Q16.

Huawei continued to perform well in both the Ethernet switch and the router markets on an annualized basis. Huawei's Ethernet switch revenue grew 69.8% year over year in 1Q17 for a market share of 6.3%, down from 9.9% in 4Q16 and up from 3.9% in 1Q16. Huawei's enterprise and SP router revenue increased 17.0% over the same period, to finish with 19.8% of the total router market in 1Q17 compared to 18.8% in 4Q16 and 16.3% in 1Q16.

Hewlett Packard Enterprise's (HPE) Ethernet switch revenue grew 0.8% sequentially in 1Q17 and its market share stands at 6.0% in 1Q17, up from its 5.0% share in 4Q16. (Note: HPE and H3C are tracked separately as of 2Q16.)

Arista Networks performed well in 1Q17, with its Ethernet switching revenue rising 37.1% year over year and earning a market share of 5.1%, up from 3.9% in 1Q16. Arista's market share in the 100Gb segment stands at 27.8%.

Juniper's Ethernet switching increased by a notable 39.2% year over year in 1Q17, bringing its market share to 4.3% versus 3.2% in 1Q16. Juniper also saw a 3.4% year-over-year increase in combined service provider and enterprise router revenues, with market share of 15.6%, compared to 14.5% in 1Q16.

"Cloud and software-defined architectures are starting to shake up the status quo in the Ethernet switch and router markets," said Petr Jirovsky, research manager, Worldwide Networking Trackers. "This is already impacting leading and upstart networking vendors in various ways and points to an imperative for vendors to adapt and be nimble, and not depend on the status quo."

As the wearables market transforms, total shipment volumes are expected to maintain their forward momentum. According to data from the International Data Corporation (IDC) Worldwide Quarterly Wearable Device Tracker, vendors will ship a total of 125.5 million wearable devices this year, marking a 20.4% increase from the 104.3 million units shipped in 2016. From there, the wearables market will nearly double before reaching a total of 240.1 million units shipped in 2021, resulting in a five-year CAGR of 18.2%.
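The growth rates quoted here follow from the shipment figures by simple arithmetic. As a minimal Python sketch, assuming the standard compound annual growth rate formula and using the rounded unit volumes from the paragraph above (IDC's published rates will have been computed from unrounded data):

```python
def yoy_growth(previous: float, current: float) -> float:
    """Year-over-year growth as a fraction (0.20 means 20%)."""
    return current / previous - 1.0


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate as a fraction over the given number of years."""
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Shipments in millions of units, as quoted in the text.
    shipped_2016, shipped_2017, shipped_2021 = 104.3, 125.5, 240.1

    print(f"2016 to 2017 growth: {yoy_growth(shipped_2016, shipped_2017):.1%}")
    print(f"2016 to 2021 CAGR:   {cagr(shipped_2016, shipped_2021, 5):.1%}")
```

With the rounded inputs, both results land within about a tenth of a percentage point of the 20.4% and 18.2% quoted above.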

“The wearables market is entering a new phase,” points out Ramon T. Llamas, research manager for IDC’s Wearables team. “Since the market’s inception, it’s been a matter of getting product out there to generate awareness and interest. Now it’s about getting the experience right – from the way the hardware looks and feels to how software collects, analyzes, and presents insightful data. What this means for users is that in the years ahead, they will be treated to second- and third-generation devices that will make today’s devices seem quaint. Expect digital assistants, cellular connectivity, and connections to larger systems, both at home and at work. At the same time, expect to see a proliferation in the diversity of devices brought to market, and a decline in prices that will make these more affordable to a larger crowd.”

“It’s not just the end users who will benefit from these advanced devices,” said Jitesh Ubrani, senior research analyst for IDC Mobile Device Trackers. “Opportunities also exist for developers and channel partners to provide the apps, services, and distribution that will support the growing abundance of wearables. From a deployment perspective, the commercial segment also stands to benefit as wearables enable productivity, lower costs, and increase ROI in the long term.”

Watches: We anticipate that watches will account for the majority of all wearable devices shipped during the forecast period. However, a closer look shows that basic watches (devices that do not run third party applications, including hybrid watches, fitness/GPS watches, and most kid watches) will continue out-shipping smart watches (devices capable of running third party applications, like Apple Watch, Samsung Gear, and all Android Wear devices), as numerous traditional watch makers shift more resources to building hybrid watches, creating a greater TAM each year. Smart watches, however, will see a boost in volumes in 2019 as cellular connectivity becomes more prevalent on the market.

Wrist Bands: Once the overall leaders of the wearables market, wristbands will see slowing growth in the years ahead. The sudden softness in the wristband market witnessed at the end of 2016 will carry into subsequent quarters and year, but the market will be propped up with low-cost devices with good enough features for the mass market. In addition, users will transition to watches for additional utility and multi-purpose use.

Earwear: We are not counting Bluetooth headsets whose only task is to bring voice calls to the user. Instead, we are counting those devices that bring additional functionality, and send information back and forth to a smartphone application. Examples include Bragi’s Dash and Samsung Gear Icon X. In most cases, the additional feature centers on collecting fitness data about the user, but can also include real-time audio filtering or language translation.

Clothing: The smart clothing market took a strong step forward, thanks to numerous vendors in China providing shirts, belts, shoes, socks, and other connected apparel. While consumers have yet to fully embrace connected clothing, professional athletes and organizations have warmed to their usage to improve player performance. The upcoming release of Google and Levi’s Project Jacquard-enabled jacket stands to change that this year.

Others: We include lesser known products like clip-on devices, non-AR/VR eyewear, and others into this category. While we do not expect an immense amount of growth in this segment, it will nonetheless bear watching as numerous vendors cater to niche audiences with creative new devices and uses.

Top Wearable Devices by Product, Volume, Market Share, and CAGR (shipments in millions of units)

| Product | Shipment Volume 2017 | Market Share 2017 | Shipment Volume 2021* | Market Share 2021* | CAGR (2017-2021)* |
|---|---|---|---|---|---|
| Watches | 71.4 | 56.9% | 161.0 | 67.0% | 26.5% |
| Wristbands | 47.6 | 37.9% | 52.2 | 21.7% | 1.2% |
| Clothing | 3.3 | 2.6% | 21.6 | 9.0% | 76.1% |
| Earwear | 1.6 | 1.3% | 4.0 | 1.7% | 39.7% |
| Others | 1.6 | 1.3% | 1.4 | 0.6% | -16.0% |
| Total | 125.5 | 100.0% | 240.1 | 100.0% | 18.2% |

Source: IDC Worldwide Quarterly Wearables Device Tracker, June 21, 2017

*Forecast data

Gartner, Inc. has highlighted the top technologies for information security and their implications for security organizations in 2017.

"In 2017, the threat level to enterprise IT continues to be at very high levels, with daily accounts in the media of large breaches and attacks. As attackers improve their capabilities, enterprises must also improve their ability to protect access and protect from attacks," said Neil MacDonald, vice president, distinguished analyst and Gartner Fellow Emeritus. "Security and risk leaders must evaluate and engage with the latest technologies to protect against advanced attacks, better enable digital business transformation and embrace new computing styles such as cloud, mobile and DevOps."

The top technologies for information security are:

Cloud Workload Protection Platforms

Modern data centers support workloads that run in physical machines, virtual machines (VMs), containers, private cloud infrastructure and almost always include some workloads running in one or more public cloud infrastructure as a service (IaaS) providers. Hybrid cloud workload protection platforms (CWPP) provide information security leaders with an integrated way to protect these workloads using a single management console and a single way to express security policy, regardless of where the workload runs.

Remote Browser

Almost all successful attacks originate from the public internet, and browser-based attacks are the leading source of attacks on users. Information security architects can't stop attacks, but can contain damage by isolating end-user internet browsing sessions from enterprise endpoints and networks. By isolating the browsing function, malware is kept off of the end-user's system and the enterprise has significantly reduced the surface area for attack by shifting the risk of attack to the server sessions, which can be reset to a known good state on every new browsing session, tab opened or URL accessed.

Deception

Deception technologies are defined by the use of deceits, decoys and/or tricks designed to thwart, or throw off, an attacker's cognitive processes, disrupt an attacker's automation tools, delay an attacker's activities or detect an attack. By using deception technology behind the enterprise firewall, enterprises can better detect attackers that have penetrated their defenses with a high level of confidence in the events detected. Deception technology implementations now span multiple layers within the stack, including endpoint, network, application and data.

Endpoint Detection and Response

Endpoint detection and response (EDR) solutions augment traditional endpoint preventative controls such as an antivirus by monitoring endpoints for indications of unusual behavior and activities indicative of malicious intent. Gartner predicts that by 2020, 80 percent of large enterprises, 25 percent of midsize organizations and 10 percent of small organizations will have invested in EDR capabilities.

Network Traffic Analysis

Network traffic analysis (NTA) solutions monitor network traffic, flows, connections and objects for behaviors indicative of malicious intent. Enterprises looking for a network-based approach to identify advanced attacks that have bypassed perimeter security should consider NTA as a way to help identify, manage and triage these events.

Managed Detection and Response

Managed detection and response (MDR) providers deliver services for buyers looking to improve their threat detection, incident response and continuous-monitoring capabilities, but don't have the expertise or resources to do it on their own. Demand from the small or midsize business (SMB) and small-enterprise space has been particularly strong, as MDR services hit a "sweet spot" with these organizations, due to their lack of investment in threat detection capabilities.

Microsegmentation

Once attackers have gained a foothold in enterprise systems, they can typically move laterally ("east/west") to other systems unimpeded. Microsegmentation is the process of implementing isolation and segmentation for security purposes within the virtual data center. Like bulkheads in a submarine, microsegmentation helps to limit the damage from a breach when it occurs. The term originally described mostly the east-west, or lateral, communication between servers in the same tier or zone, but it is now used for most communication in virtual data centers.
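As a rough illustration of the bulkhead idea, the sketch below models microsegmentation as a default-deny allow-list between named segments. The segment names, address ranges and permitted flows are hypothetical, chosen only for the example, and real products enforce policy in the hypervisor or network fabric rather than in application code.

```python
import ipaddress

# Hypothetical segments and address ranges, for illustration only.
SEGMENTS = {
    "web": ipaddress.ip_network("10.0.1.0/24"),
    "app": ipaddress.ip_network("10.0.2.0/24"),
    "db": ipaddress.ip_network("10.0.3.0/24"),
}

# Hypothetical allow-list: (source segment, destination segment, destination port).
# Anything not listed here is denied, including traffic within a single segment.
ALLOWED_FLOWS = {
    ("web", "app", 8443),
    ("app", "db", 5432),
}


def segment_of(ip):
    """Return the name of the segment containing ip, or None if it is in no segment."""
    addr = ipaddress.ip_address(ip)
    for name, network in SEGMENTS.items():
        if addr in network:
            return name
    return None


def is_allowed(src_ip, dst_ip, dst_port):
    """Default-deny check: a flow passes only if it is explicitly allow-listed."""
    src, dst = segment_of(src_ip), segment_of(dst_ip)
    if src is None or dst is None:
        return False
    return (src, dst, dst_port) in ALLOWED_FLOWS
```

Under these assumed rules a web server can reach the application tier on port 8443, but a compromised web server cannot connect directly to the database tier, nor to its neighbours in the web tier.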

Software-Defined Perimeters

A software-defined perimeter (SDP) defines a logical set of disparate, network-connected participants within a secure computing enclave. The resources are typically hidden from public discovery, and access is restricted via a trust broker to the specified participants of the enclave, removing the assets from public visibility and reducing the surface area for attack. Gartner predicts that through the end of 2017, at least 10 percent of enterprise organizations will leverage SDP technology to isolate sensitive environments.

Cloud Access Security Brokers

Cloud access security brokers (CASBs) address gaps in security resulting from the significant increase in cloud service and mobile usage. CASBs provide information security professionals with a single point of control over multiple cloud services concurrently, for any user or device. The continued and growing significance of SaaS, combined with persistent concerns about security, privacy and compliance, continues to increase the urgency for control and visibility of cloud services.

OSS Security Scanning and Software Composition Analysis for DevSecOps

Information security architects must be able to automatically incorporate security controls without manual configuration throughout a DevSecOps cycle in a way that is as transparent as possible to DevOps teams and doesn't impede DevOps agility, but fulfills legal and regulatory compliance requirements as well as manages risk. Security controls must be capable of automation within DevOps toolchains in order to enable this objective. Software composition analysis (SCA) tools specifically analyze the source code, modules, frameworks and libraries that a developer is using to identify and inventory OSS components and to identify any known security vulnerabilities or licensing issues before the application is released into production.
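To make the SCA step more concrete, here is a minimal sketch of an automated check that could run inside a build pipeline. It assumes a component inventory has already been produced. The component names, the advisory identifier and the license policy are all hypothetical, and a real SCA tool would draw on maintained vulnerability and license databases rather than hard-coded tables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    version: str
    license: str


# Hypothetical advisory data and license policy, for illustration only.
KNOWN_VULNERABLE = {("examplelib", "1.2.0"): "EXAMPLE-ADVISORY-001"}
DISALLOWED_LICENSES = {"AGPL-3.0"}


def audit(components):
    """Return a list of (component name, issue type, detail) findings."""
    findings = []
    for c in components:
        advisory = KNOWN_VULNERABLE.get((c.name, c.version))
        if advisory:
            findings.append((c.name, "vulnerability", advisory))
        if c.license in DISALLOWED_LICENSES:
            findings.append((c.name, "license", c.license))
    return findings


def release_gate(components):
    """Return True only if the inventory produced no findings."""
    return not audit(components)
```

A pipeline step could call `release_gate` on the inventory and fail the build when it returns False, which keeps the control automatic and out of the developers' way until something actually needs attention.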

Container Security

Containers use a shared operating system (OS) model. An attack on a vulnerability in the host OS could lead to a compromise of all containers. Containers are not inherently unsecure, but they are being deployed in an unsecure manner by developers, with little or no involvement from security teams and little guidance from security architects. Traditional network and host-based security solutions are blind to containers. Container security solutions protect the entire life cycle of containers from creation into production and most of the container security solutions provide preproduction scanning combined with runtime monitoring and protection.

IT Europa and Angel Business Communications’ new event – the Managed Services Solutions Summit 2017 – is designed to help executives of enterprises, organisations and public sector bodies navigate the managed services maze and get the most out of managed services-based solutions. It builds on the seven-year history of the highly successful UK and European Managed Services and Hosting Summit series of channel events.

The rapid growth in managed services and provision of IT as a service is changing the way customers wish to purchase, consume and pay for their IT solutions but evaluating and selecting which services are best suited to particular business needs creates its own challenges. The Managed Services Solutions Summit 2017 is an executive-level conference which will set out to demystify both the new technologies and the new delivery mechanisms and business models.

The event will feature conference session presentations by major industry speakers and a range of breakout sessions exploring in further detail some of the major issues impacting the development of managed services. The summit will also provide extensive networking time for delegates to meet with potential business partners. The unique mix of high-level presentations plus the ability to meet, discuss and debate the related business issues with sponsors and peers across the industry, will make this a must-attend event for any senior decision maker involved in buying information and communication technologies and services.

The Managed Services Solutions Summit 2017 will be staged in London on 22 November 2017. The event is free to attend for qualifying delegates (senior managers and executives of businesses and public sector organisations). Those wishing to attend, as well as IT hardware and software vendors, hosting providers, data centre co-location providers, ISVs and any other organisations involved in services delivered to end-users, can find further information at: www.msssummit.co.uk

IT Europa (www.iteuropa.com) is the leading provider of strategic business intelligence, news and analysis on the European IT marketplace and the primary channels that serve it. In addition to its news services the company markets a range of database reports and organises European conferences and events for the IT and Telecoms sectors.

Angel Business Communications (www.angelbc.com) is an industry leading B2B publisher and conference and exhibition organiser. ABC has developed skills in various market sectors - including Semiconductor Manufacturing, IT - Storage Networking, Data Centres and Solar manufacturing. With offices in both Watford and Coventry, it has the infrastructure to develop a leadership role in the markets it serves by providing a multi-faceted approach to the business of providing business with the information it needs.

The 11th July 2017 sees the next in the highly successful series of Data Centre Transformation Manchester (DT Manchester) events, organised by Angel Business Communications, in association with Datacentre Solutions, the University of Leeds and the Data Centre Alliance.

The combination of such data centre knowledge and expertise ensures that DT Manchester is the premier data centre education event in the calendar, bringing together the data centre research and design community, the data centre vendor industry and, most importantly, enterprise end users, for a collaborative information interchange.

In 2017 DT Manchester will be running six Workshop sessions, covering a range of topics: Power including Power Management, Cooling, IT Energy & Availability, Business Needs & Management, Capability and Workforce Development. The workshops will be managed by independent industry specialists to ensure vendor neutrality. The workshops will ensure that delegates not only earn valuable CPD accreditation points but also have an open forum to speak with their peers, academics and leading vendors and suppliers.

The Workshops are complemented by Keynote presentations from major industry figures: John Kennedy, Senior Researcher at Intel, and Tor Bjorn Munder, Head of Research Strategy at Ericsson & CEO SICS North Swedish ICT AB. Workshops and Keynotes, together with networking opportunities, provide the perfect blend of educational and informative content and information exchange, which is truly valued by the hundreds of delegates who attend.

2017 promises to be an exciting year for the data centre industry, as much of the received data centre design and operations ‘wisdom’ is being challenged not just by the maturing Cloud/managed services model, but by a host of new and emerging threats and opportunities: the Internet of Things, digitalisation, mobility, DevOps and micro data centres to name but a few. In simple terms, more and more intelligence is being brought in at all levels of both the facilities and IT aspects of the data centre – and by combining the information this provides, there are some huge efficiency and optimisation gains to be made by data centre owners and operators.

The Workshop sessions will address the fast moving technological evolution of data centres as mission critical facilities, this includes:

The overall objective of DT Manchester is to reflect the ongoing need for the facilities and IT functions to join together to ensure the optimum data centre environment that can best serve the enterprise’s business requirements. After all, data centres do not exist in isolation, but are the engines that drive the critical applications on which the enterprise relies.

For more information and to register visit: www.dtmanchester.com/register

Will Morris of Alchemy Digital says that web apps herald a new era in how brands engage with their audiences

Progressive Web Apps (PWAs) are set to take off in a big way, and are an ideal solution for organisations wanting a better performance from their incumbent traditional apps.

Following the lead of many big brands already getting in on the act, including the BBC, Twitter and eCommerce stores like Lancome, including a PWA in your digital strategy should be the next natural step, especially if your existing app isn’t performing well or you need to bear the cost. They’re also low risk investments and make the absolute best of your brand within a “smart” digital strategy. By taking the best functionality of native apps (those designed for a specific operating system that leverage device functionality to increase speed and performance) and enabling access via a browser and a URL, PWAs can solve real business challenges.

As a population we are suffering from ‘app fatigue’: MobiLens reported in September 2016 that, in an average month, 51% of UK adults install no apps at all. There are currently more than two million apps on the App Store and Google Play, making it extremely difficult for any one app to be ‘discovered’ by users. We all know which apps we love, and which ones we run our lives by, and PWAs make it much easier for consumers to engage with the brands and the apps they want. I believe that PWAs could be the death knell for the App Store, Google Play etc. This next disruption of the web is set to open up the advantages of having your own app to everyone, while being better, faster and cheaper. PWAs are one of the most open innovations in recent years, enabling even small brands and businesses to take advantage.

Gain visibility into every previously hidden corner of your network, so you can simplify and automate.

Securing an IT network has become more daunting and complex than ever. With the emergence of big data, Internet of Things, and machine-to-machine communications, immense volumes of data speed faster and faster across physical, virtual, and cloud infrastructures, linking billions of devices. Add in a growing number and variety of critical threats, including those originating from inside your organization, from cyber-terrorism, from malware, and from ransomware . . . and the result is a domain of ever-increasing cost, complexity, and risk.

REASON 1: Legacy security models are no match for modern threats.

Perimeter and endpoint-based approaches are incomplete.

These outmoded models can’t defeat zero-day attacks from outside. And they provide limited defense against inside threats.

The simple trust model no longer applies.

Gone are the days when every device was owned, controlled, and secured by IT. Bring Your Own Device (BYOD) and Bring Your Own Software (BYOS) blur the lines between what IT controls and what it does not. Trends like BYOD and BYOS may be good for productivity, but they’re bad for security. Sixty-one percent of security breaches today are carried out by insiders: an employee, a contractor, or a business partner on site.*

Legacy static security frameworks cannot adapt.

Today’s networks are anything but static. With near-universal mobility of users, devices, and apps, fixed, immutable choke points are things of the past. The dynamically expandable cloud makes perimeter boundaries even more fluid.

REASON 2: The anatomy of today’s threats is increasingly complex.

Today’s large-scale breaches are complex. Many of these “advanced persistent threats” take place over multiple stages and extended periods of time, ranging from weeks to months.

If you look at a typical kill chain, the activities conducted by a particular actor go through a sequence of steps that are very hard to detect. These steps do not always happen in immediate succession, and can span a long period of time. An attack can remain dormant until it is reactivated, especially once it has opened a backdoor.

Many of these activities can happen without breaching the security perimeter—either because they involve trusted users, devices, or applications, or because that perimeter is subject to the mobility of these users, devices, or applications.

REASON 3: Consequences can be persistent: You may be vulnerable to continuous attacks.

System infection can persist.

When a breach is extensive, the targeted organization often remains compromised. Even after a threat is detected and the network cleansed, some systems can remain infected, making them vulnerable to continuous attack.

Defeating SaaS’s evil twin: malware-as-a-service.

Such compromised systems are made available through sites offering malware-as-a-service, an expanding “dark web” industry that gives individuals and organizations an easy and inexpensive way to mount crippling attacks, such as DDoS, at will.

REASON 4: Intrusions take a long time to detect ... and they have a long lease on life.

Complex, nuanced attacks infiltrate and lurk within hidden areas of today’s networks, often taking weeks to detect and even longer to contain.

Meanwhile, the attacker can wreak havoc on an organization’s business by continuing to exfiltrate data.

In addition, businesses can face serious consequences, from breach notification and reporting mandates to fines and potential litigation. Worse yet can be the impact on trust: leery customers are likely to take their business elsewhere.

15 - The median number of days from intrusion to detection for internally detected breaches.*

168 - The median number of days from intrusion to detection for breaches detected and reported by external parties.*

* Trustwave Holdings, Inc. “2016 Trustwave Global Security Report.” 2016.

REASON 5: SecOps pros face a perfect storm of challenges.*

It’s tough to be in cybersecurity operations these days. High-profile attacks are headline news, and the sheer volume of alerts can make it challenging to know what needs attention. SecOps pros face an expanding portfolio of responsibilities spread across myriad functions, technologies and processes. Skilled resources are stretched thin, with too few people covering too many responsibilities. Simplifying and automating key security operations processes must be a priority, along with adopting the right security technology architecture.

* Cisco: “Global Cloud Index.” Dec, 2016. ESG Research: “Network Security Trends,” Oct, 2016.

REASON 6: Security fundamentals have changed. How we address threats has not.

Albert Einstein defined insanity as “doing the same thing over and over again and expecting different results.” Unchanged security models simply cannot handle completely new breeds of hackers and new types of threats. Commercialized hacking tools, malware-as-a-service, and sophisticated multidimensional attacks are all becoming commonplace. At the same time, there is more data speeding across networks, more devices connecting from more places, and more widespread use of encryption.

The “whack-a-mole” approach of adding new tools to address each of these problems creates a patchwork quilt that cannot cover everything; it slows detection and containment while increasing cost and complexity.

REASON 7: Ad-hoc security deployments have unintended consequences.

Proliferation of security tools.

Too many network security appliances of diverse types, at more places in the network, increase complexity and costs.

Inconsistent view of traffic.

Security appliances tied in at specific network points are often blind to traffic from other parts of the network. They also miss mobile users and apps as they circulate to other parts of the infrastructure.

Contention for access to traffic.

Too many tools trying to access traffic from the same points in the network: only one actually gets through.

Blindness to encrypted traffic.

Many security appliances can’t see encrypted traffic—and malware increasingly uses encryption to take advantage of this deficiency.

Extraordinary costs.

Management costs and complexity are soaring due to the proliferation of security tools across the network.

Too many false positives.

More security appliances create more false positives for SecOps staff to wade through.

How can you optimize security in a landscape with so many challenges?

Given the challenges outlined here—from legacy approaches to complex persistent threats or increased burdens on SecOps—what is the best approach to improving your overall security posture?

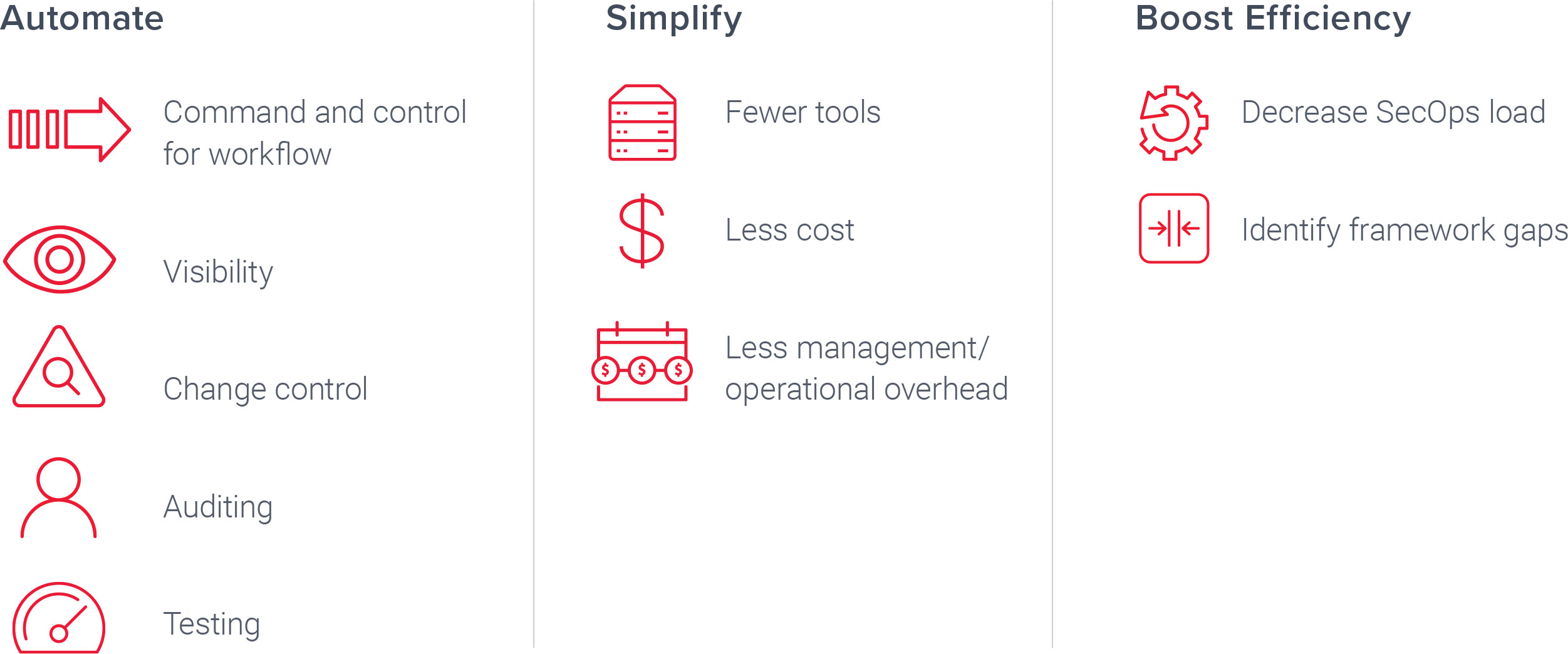

You need to automate, simplify, and boost efficiency of your security operations so that you gain better control while optimizing your existing investments in core security tools.

A security delivery platform transforms your approach to security.

You can automate, simplify, and boost efficiency of your security operations with a security delivery platform. Only Gigamon delivers a security delivery platform that lets you manage, secure, and understand what’s happening with data in motion across your entire network—and allows you to optimize your existing investments in security tools that help keep your organization safe.

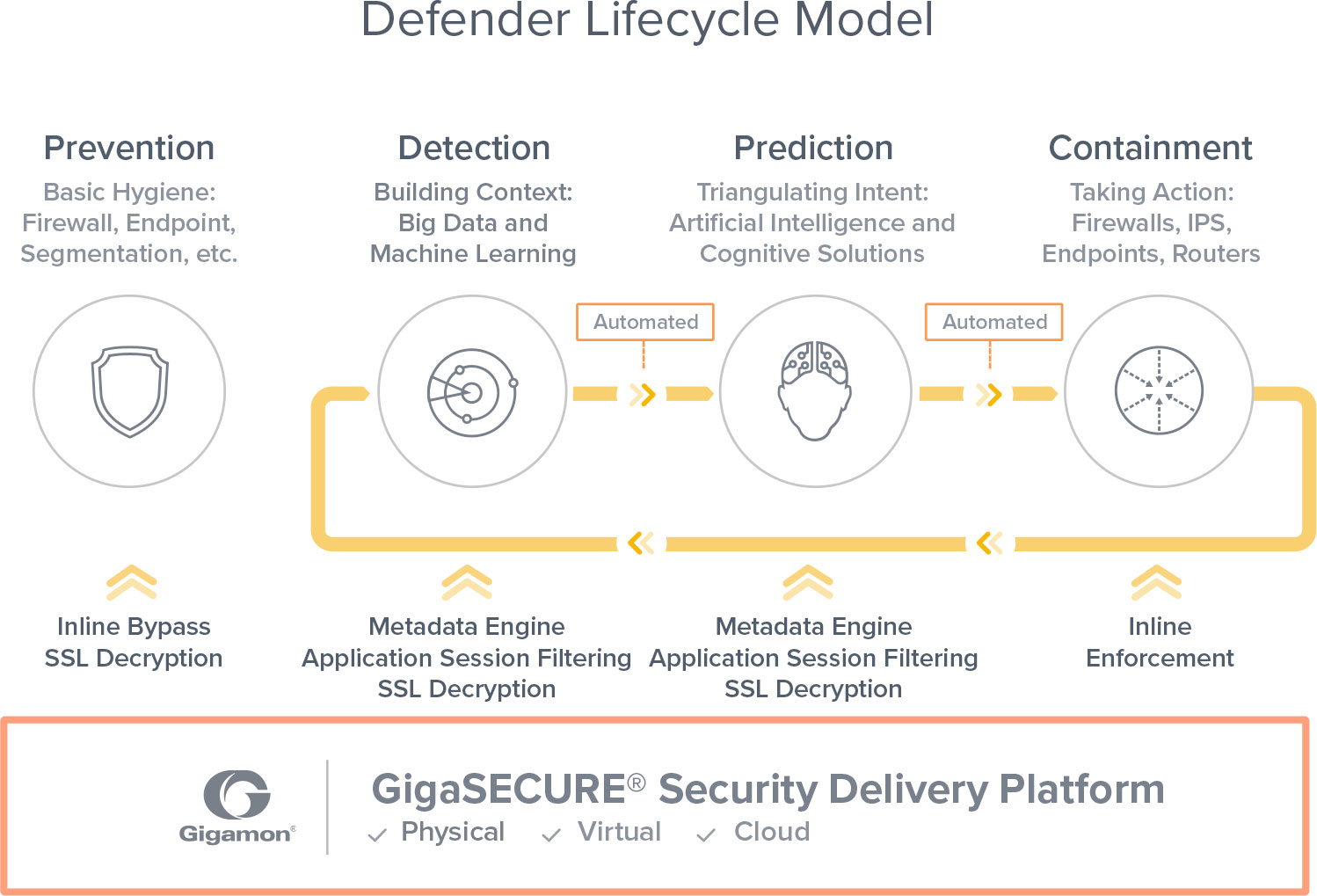

Adopt a Defender Lifecycle Model: Rethink network security with GigaSECURE.

The industry’s first and only bona fide security delivery platform.

GigaSECURE connects to your physical and virtual network, supporting both inline and out-of-band tools across multiple network segments simultaneously. Security tools link directly into GigaSECURE at their customary interface speeds, and then receive a high-fidelity stream of relevant traffic from across the network infrastructure. GigaSECURE delivers visibility into the lateral movement of malware, speeds the detection of exfiltration activity, and can significantly reduce the overhead, complexity, and cost of securing your entire network—physical, virtual, and cloud.

The Gigamon® Security Delivery Platform provides an essential visibility foundation that allows you to adopt a Defender Lifecycle Model and shift the advantage away from attackers back to you.

Leverage the power of the Gigamon ecosystem.

No platform stands alone, and the Gigamon Security Delivery Platform is no exception. Together, Gigamon and its ecosystem partners address all of your visibility and security requirements, so you can focus on what matters to your business.

Flickering lightbulbs, scary Barbie dolls, infected computer networks and cities out of action. Could this be the brave new world of the Internet of Things (IoT), if we neglect IoT security? Ian Kilpatrick, EVP Cyber Security for Nuvias Group, discusses the unstoppable growth of IoT and the necessity for organisations to take appropriate measures to protect their computer networks.

For several years, the IT industry has enthusiastically extolled the virtues of the Internet of Things (IoT), eager to enlighten us to the difference that living in a connected world will make to all our lives.

Now the IoT is here - in our homes and in the workplace. Its uses range widely, from domestic time-savers like switching on the heating, to surveillance systems, to “intelligent” light bulbs, to the smart office dream.

This proliferation of devices and objects collects and shares huge amounts of data. However, proliferation also has the potential to create greater opportunities for vulnerabilities. Moreover, because these devices are connected to one another, if one device is compromised, a hacker has the potential opportunity to connect to multiple other devices on the network.

Indeed, there have been a number of high-profile cases where everyday items have been used to force websites offline. Recently, hackers harnessed the weak security of internet-connected devices, like DVRs and cameras, using botnets implanted on the devices, to take down sites such as Amazon, Netflix, Twitter, Spotify, Airbnb and PayPal. More recently, security vulnerabilities were discovered in the new, Wi-Fi enabled Barbie doll that could turn it into a surveillance device by joining the connected home network!

Elsewhere, researchers said they had developed a worm that could potentially travel through ‘smart’ connected lightbulbs city-wide, causing the web-connected bulbs to flick on and off.

These are just a few examples of the security failures in devices for the IoT. Unfortunately, they are not the exception. Manufacturers are rushing to make their devices internet-connected but, in many cases, with no thought (or indeed knowledge) around security.

The next step on IoT’s journey is connected or smart cities, where the consequences of an attack are enormous. It’s not just one lightbulb – a hacker can potentially plunge an entire city into darkness, or disable surveillance systems, causing chaos.

With IoT devices now moving into the workplace, organisations are increasingly vulnerable to attack. A survey by analyst group 451 Research predicts that enterprises will increase their IoT investment 33 percent over the next 12 months, but that security remains a concern with half of respondents citing it as the top impediment to IoT deployments.

Nevertheless, it says that organisations are forging ahead with IoT initiatives and opening their wallets to support IoT deployments.

There’s no turning back the tide of any of these IoT applications – and in fact we shouldn’t try to halt progress. However, checking the security capabilities before deployment isn’t a bad strategy, especially as it is important to ensure that the advance of IoT isn’t providing hackers and criminals with another entry point for attack.

The IoT challenge is backfilling security onto IoT devices. Because these devices are not running on standard operating systems, they are often invisible to a large part of an organisation’s defences. And if a device is compromised, and you end up with malware within your organisation, you must firstly spot the breach, and then find out where it’s coming from – not an easy task.

Cleaning the device won’t necessarily fix the problem, as you will have a compromised IoT device within your security perimeter, which will just continue to re-infect other devices.

There are many different types of solutions available. Kaspersky Labs, for example, has Kaspersky OS, a secure environment for the IoT. Other suppliers, including Tenable Networks and Check Point, also provide solutions that are relevant here.

A key action for organisations is to pay close attention to the network settings for IoT devices and, where possible, separate them from access to the internet and to other devices.

IoT devices should also be identified and managed alongside regular IT asset inventories, and basic security measures like changing default credentials and rotating strong Wi-Fi network passwords should be used.

As much as IoT manufacturers need to embed adequate levels of security into their devices, the ultimate responsibility for ensuring an organisation is secure is with the user. This is particularly true as Chief Information Security Officers (CISOs) are under more pressure than ever to maintain the integrity of their organisations, in the face of increasing legislation such as the General Data Protection Regulation (GDPR), which carries potentially crippling fines for data breaches.

Ultimately, IoT is here, and it isn’t secure. It won’t be secure until IoT device manufacturers make it secure, which will be many years in the future. In the meantime, it’s down to organisations to make sure they are protected. User education should be a key element in defence around IoT deployment, partly because of the increased risks of shadow deployment in the workplace with IoT devices.

Business leaders need to ask their IT department or CISO for a strategic plan to deal with IoT vulnerabilities, rather than burying their head in the sand. As the saying goes, a failure to plan is planning to fail.

As shown by the WannaCry outbreak, cyber-threats can have unexpected origins – and solutions. The same goes for the security vulnerabilities inside your network and data centre.

By Ian Farquhar, Distinguished Sales Engineer, Gigamon.

Many Network teams think that if they’re monitoring all of their hosts, their network security should be in good shape. But trying to understand every endpoint means dealing with ‘unknown unknowns’.

You may know which endpoints report back on the network, or which did report back but no longer do. But what about those endpoints that were never audited or new ones being connected and authenticated? Unless you have a process to authenticate devices as they move to more secure network segments (and you do a good job of naming), you may have systems and users on the network accessing resources that you’re unaware of. Combine this with the proliferation of mobile devices, the flux and churn of employees, new SaaS/PaaS products, and the speed and volume of data, and you have a world of system change that often outpaces the ability of all but the most disciplined, sophisticated organisations to keep up.

So how can you begin to address the unknown unknowns? Your network itself is often your most convenient source of truth. Every system is going to consume network resources of one sort or another, so take a look at your core access layer and, if you’re not closer to the distribution layer of switches, add taps. Get as close as you can to the leaf switches and tap them, and if you have segments without visibility, add a tap point to an uplink.

From here you can gather NetFlow and metadata, and feed that to tools like Splunk or Plixer. Or, write a quick program to look at source and destination IPs, put them in a simple database, and use NetFlow to see which ports they’re connecting to and what’s connecting to them. You’ll then have mapped out the domain controllers, SMTP systems, internal tool machines and more. While still not delivering 100% situational awareness, if you get close enough to the endpoints and you’re thoughtful about what you collect, you can begin to compare your known hosts and what you think you see to what’s truly happening on the network.
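The "quick program" idea might look something like the sketch below, which loads flow records into a simple SQLite database, summarises which services are being used, and lists any hosts seen on the wire that are missing from the inventory. The flow records and the known-host list are made-up sample data. In practice the records would come from a NetFlow collector's export, whose format is not assumed here.

```python
import sqlite3

# Made-up sample flows: (source IP, destination IP, destination port).
FLOWS = [
    ("10.0.0.11", "10.0.0.5", 389),  # LDAP, suggesting a domain controller
    ("10.0.0.12", "10.0.0.5", 389),
    ("10.0.0.11", "10.0.0.6", 25),   # SMTP
    ("10.0.0.99", "10.0.0.5", 389),  # source not in the inventory below
]

# Hypothetical asset inventory of hosts you believe are on the network.
KNOWN_HOSTS = {"10.0.0.5", "10.0.0.6", "10.0.0.11", "10.0.0.12"}


def load_flows(flows):
    """Store flow records in an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE flows (src TEXT, dst TEXT, dport INTEGER)")
    conn.executemany("INSERT INTO flows VALUES (?, ?, ?)", flows)
    return conn


def services(conn):
    """Map each (destination, port) pair to its number of distinct clients."""
    rows = conn.execute(
        "SELECT dst, dport, COUNT(DISTINCT src) FROM flows "
        "GROUP BY dst, dport ORDER BY dst, dport"
    )
    return {(dst, dport): clients for dst, dport, clients in rows}


def unknown_hosts(conn, known):
    """List addresses seen in flows that are absent from the inventory."""
    rows = conn.execute("SELECT src FROM flows UNION SELECT dst FROM flows")
    return sorted({ip for (ip,) in rows} - set(known))
```

With this sample data, `services` shows three distinct clients talking to 10.0.0.5 on port 389 and one talking to 10.0.0.6 on port 25, while `unknown_hosts` surfaces 10.0.0.99 as a system the inventory does not know about.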

Keeping track of employees joining and leaving the organisation is relatively straightforward. What’s far more challenging is what they get up to while they’re there. According to the Dtex Systems Insider Threat Intelligence Report, the most important vector for security breaches is a company’s own employees: 60% of all attacks are carried out by insiders and, of those, 68% result from simple negligence. The report also showed that a staggering ‘95% of enterprises found employees actively seeking ways to bypass corporate security protocols’.

In other words, when employees perceive internal policies are limiting their ability to do business, more often than not they’ll disregard those policies or find a way around them. That ‘way around’ is often where the threat lies: either the employee has inadvertently leaked data, or malware will find that exploit and infect the network. And this behaviour is extremely widespread. How many employees – including security professionals – have checked their personal email from a corporate device, or loaded a corporate presentation onto a personal thumb drive to share with a colleague?

For the past 20 years, the most common way of ensuring network security has been ‘prohibition-based’. This includes inspecting, identifying and eventually blocking packets; limiting what comes and goes in the environment; blocking access to certain websites; deactivating USB ports; restricting the utilisation of printers; segregating networks; and so on. The reason for this approach is that, because it has been impossible to regulate every packet, policies (manual or automatic) have been used to block specific behaviours instead.

The downside is that you can’t just take away functionality without giving employees an alternative; otherwise they’ll find ways to get around the limitations, for the simple reason that they’re measured on their output, not their compliance with internal policies. For employees to become more productive without jeopardising security, they therefore need to be allowed to do more with the network and the tools available to them, which means replacing the prohibition-based approach with visibility-centric security. Visibility into network traffic enables a more granular understanding of at-risk employee behaviour. More granular policies, in turn, make it possible to retain control while ensuring that network security doesn’t get in the way of employee productivity and so foster a disregard for internal policies.

While no-one seriously questions Network and Security teams’ common dedication to the success of their organisations, another human factor is the cultural disparity in the way these teams operate.

Network teams tend to be highly disciplined and have their performance measured according to strict metrics like network uptime and availability. They’re also constantly being asked to do more with less, as what they deliver is seen as a business function that constantly needs to improve efficiency. Security teams, on the other hand, face an unknown and unpredictable enemy that’s often malicious, will respond to security measures by finding ways around them, and can and will hit every single layer of the OSI stack. The result is an ‘arms race’ between attackers and defenders, with new features and even toolsets continually appearing to address new threats. Measuring the performance of Security teams (beyond ‘don’t get breached’) can also be a challenge, but as security threats resonate to the top level of most organisations, these teams are often well funded in comparison to other IT functions.

These cultural differences can create tensions between Network and Security teams. On the one hand, a Network team has targets for uptime, does multi-year planning and capacity measurement, and has strong processes in place to prevent disruption. The flipside is that these processes can make the team seem slow, risk-averse to outages and yet apparently unconcerned about security.

A Security team, on the other hand, is fighting an intelligent, agile and constantly morphing threat. To try to defeat this enemy, they need to deploy tools and features requiring extreme agility, especially in incident response situations. Security tools are also expensive, and assessing, deploying and operating the best is highly challenging. And yet to the Network team, this behaviour can look ridiculously over-responsive, lacking in strategy, and suffering from a ‘tool of the month’ or even ‘shiny new toy’ mentality that puts the availability of the network at risk.

Put simply, while Security people care passionately about preventing security breaches, Network people care passionately about preventing outages, making it very easy for either side to see the other as disregarding something that, to their unique mindset, is core to the organisation’s success.

There’s no right or wrong in this clash of cultures: it’s simply a matter of differing priorities and focuses. Fortunately, as well as helping to deal with the issues described above, a solution like Gigamon’s Security Delivery Platform bridges this divide, by de-risking security deployments for the Network team and speeding up network access for the Security team. And in doing so, it can also save money and increase operational efficiencies.

When it comes to digitally transforming the workplace, companies are struggling to see the bigger picture. In order for an enterprise to truly transform, CIOs need to take the lead and combine cultural change with technical innovation.

By Sven Hammar, Founder and Chief Strategy Officer at Apica.

Organisational culture is key to digital transformation – everyone needs to be on board for it to be successful, from stakeholders and C-suite executives to employees. The digital transformation lead, likely a new-look business CIO unifying business with technical, needs to identify key stakeholder groups, and bring them together to drive change.

Many organisations are still confined by a silo mentality and are not communicating one version of quality service. Take the recent Ryanair outage, for example. The airline announced an eight-hour shutdown to redesign its website. The outage left customers frustrated that they were unable to check in to flights, and lost Ryanair a high volume of e-commerce traffic.

Digital transformation is not just about modernising the technology that you use. To effect real change, organisations need to break down established norms within their corporate structure. Modern CIOs must act as a bridge between sections of their organisations to align the technical perspective with wider company strategy.

CIOs now play a central role in scaling systematic digital transformation efforts, and set out the criteria for what successful change looks like for their organisation. The role of the CIO is changing, and they are expected to create the best value for their organisation, often through greater appreciation of customers and markets.

Digital transformation not only affects company culture, it affects the very foundation of business. The era of standalone products is over – products are increasingly packaged together with a service, creating a new, product-as-a-service approach, and a whole new business model for CIOs to navigate. IoT is a prime example of the product-as-a-service business model that CIOs need to manage in order to successfully implement change.

In order to speed up the process of digital transformation, CIOs often launch a ‘speedboat’ setup, whereby a dedicated initiative, such as IoT, is fast-tracked. Launching a speedboat setup in one specific area can create change momentum and enable organisations to surpass their competition for the short term, but if the overall project is to be successful, the whole organisation needs to be on board.

The role of the CIO is as much in flux as wider business strategies, and good CIOs understand their role now covers far more than traditional IT maintenance duties. Some sectors have seen initiatives that have changed the way they operate; finance is a good example. Traditional financial services firms now find themselves in competition with ‘born digital’ fintech organisations, which are not confined by the restrictions of legacy systems. In order to compete, established organisations must undergo digital transformation. The barrier is usually not a technical problem, but a business model and culture issue. However, the multi-discipline CIO is still going to be expected to take the lead and push on to an agile new frontier for their businesses.

Technological innovations are transforming the workplace, workers’ roles and business approaches at a rapid pace. The real challenge for CIOs and industry leaders is to ride this wave of change and pick out the benefits, without losing sight of their organisation’s objectives or the skills that placed individuals in their C-suite role to begin with.

Uncovering relevant links and patterns in data is increasingly like searching for a needle in a haystack as data volumes keep growing. As the volume and variety of data increase, so do its lack of structure and its complexity. Even more significant is the flood of highly interconnected data that reaches us through the Internet of Things (IoT), social ecosystems and even natural and biological systems.

By Dominik Ulmer, VP Business Operations EMEA, Cray Inc.

The sheer amount of data, and its strong connectedness, make it increasingly difficult to expose relationships between data items and follow all the threads. Yet companies today gain their most valuable insights precisely from understanding how data are related. Knowing that certain links exist enables companies to ask the right questions of their data.

By default, many companies use relational databases. However, these reach their limits when they have to look for relationships, relationship patterns or interactions between data elements. As data volumes increase, many tables must be joined or linked recursively for advanced queries, making discovery more computationally expensive and time consuming. So, while relational databases do well with searches for detailed information about a specific element, analyzing complex relationships between data can be very slow and resource intensive. This is partly due to their table-based structure that calculates relations at query time; since every side of a relationship must be combined (along with sub-elements), a complex query can become very processor- and memory-intensive very quickly.
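To make the cost of recursive joins concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `knows` table, the sample names and the `reachable_within` helper are illustrative assumptions, not anything taken from a real deployment. Each additional hop is another self-join of the table against the results so far, which is the work that a relational engine has to repeat at query time.

```python
import sqlite3


def reachable_within(edges, start, max_hops):
    """Return everyone reachable from `start` in at most `max_hops` steps.

    The relationships live in a plain two-column table, so every extra hop
    is another self-join evaluated when the query runs.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE knows (src TEXT, dst TEXT)")
    conn.executemany("INSERT INTO knows VALUES (?, ?)", edges)
    rows = conn.execute(
        """
        WITH RECURSIVE reach(person, hops) AS (
            SELECT ?, 0
            UNION
            SELECT k.dst, r.hops + 1
            FROM reach AS r JOIN knows AS k ON k.src = r.person
            WHERE r.hops < ?
        )
        SELECT DISTINCT person FROM reach WHERE hops > 0 ORDER BY person
        """,
        (start, max_hops),
    ).fetchall()
    conn.close()
    return [person for (person,) in rows]


# Hypothetical sample data: a simple chain of acquaintances.
EDGES = [("ann", "bob"), ("bob", "cat"), ("cat", "dan"), ("dan", "eve")]

print(reachable_within(EDGES, "ann", 2))
```

With only a handful of rows this is instant, but the join is recomputed for every query and every hop, which is where the processor and memory cost described above comes from as tables grow.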

For this reason, graph analytics is becoming increasingly important in the world of Big Data and IoT. The best-known application for graph databases is social networks. Here graphs establish relationships between people, their common interests and their contributions, and determine who influences whom and what connections exist between groups. This makes it possible, for example, to create highly targeted marketing strategies such as personalized recommendations.

Similarly, graph data is also used in fields such as law enforcement, fraud detection, medical research and financial services. Graph Databases represent the most efficient way to detect relevant relationships between data within a very short time, to deal with their complexity and to establish a semantic context. The reason is that they store data in a network structure where individual information elements are represented by nodes and the relationships between this information by edges. Data items that were stored in a relational database in the field of a table are saved in nodes in Graph Databases, and data descriptors can become the edges of the graph.

Cray uses a semantic model, the Resource Description Framework (RDF), that stores data as simple subject-verb-object triples. Each node can be the subject of one triple and the object of another, so each data element occurs only once in the graph. The relationships between the data are generated at the time of insertion and are available from that moment on, which makes this type of graph database especially efficient and flexible for ad hoc exploration. The overhead of implementing relationships is incurred only once, at the time of insertion, rather than being recalculated for each query.
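The triple idea can be illustrated with a few lines of plain Python. This is a toy sketch under stated assumptions, not Cray's implementation or a real RDF library: the `TripleStore` class, its method names and the sample data are all hypothetical. It shows the two properties described above: a node such as `acme` appears as the object of one triple and the subject of another, and the lookup structures are built once, when a triple is inserted.

```python
from collections import defaultdict


class TripleStore:
    """Toy subject-verb-object store (hypothetical, for illustration only)."""

    def __init__(self):
        self.triples = set()
        self.by_subject = defaultdict(set)  # subject -> {(verb, object)}
        self.by_object = defaultdict(set)   # object  -> {(subject, verb)}

    def add(self, subject, verb, obj):
        triple = (subject, verb, obj)
        if triple in self.triples:
            return  # each fact is stored only once
        self.triples.add(triple)
        # The relationship is indexed here, at insertion time,
        # so queries never have to recompute it.
        self.by_subject[subject].add((verb, obj))
        self.by_object[obj].add((subject, verb))

    def outgoing(self, node):
        """Relationships in which `node` is the subject."""
        return sorted(self.by_subject.get(node, ()))

    def incoming(self, node):
        """Relationships in which `node` is the object."""
        return sorted(self.by_object.get(node, ()))


store = TripleStore()
store.add("alice", "worksFor", "acme")
store.add("acme", "locatedIn", "london")

print(store.incoming("acme"))  # acme as the object of a triple
print(store.outgoing("acme"))  # acme as the subject of a triple
```

Answering either question is a dictionary lookup rather than a join, which is the trade being described: a little more work on insert in exchange for cheaper exploration afterwards.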

Queries begin at a start node and then navigate the graph by following edges and thus the relationships between nodes. The search speed depends on the number of specific relationships that are relevant to the desired query. In this way, the scan time is proportional to the result set and not to the total amount of data, which in turn leads to quicker results on more complex queries. Thus, within a short time relevant data patterns and connections are detected and returned.
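A short sketch shows why the work tracks the result rather than the size of the data set. The `traverse` function and the sample graph below are illustrative assumptions: a plain breadth-first walk over an adjacency dictionary, not the query engine of any particular product. The graph holds over a thousand edges, but a two-hop query from `ann` only ever looks at the edges it actually follows.

```python
from collections import deque


def traverse(adjacency, start, max_hops):
    """Breadth-first walk from `start`, following edges up to `max_hops` deep.

    Returns the nodes reached and the number of edges examined on the way.
    """
    seen = {start}
    queue = deque([(start, 0)])
    edges_examined = 0
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the requested depth
        for neighbour in adjacency.get(node, ()):
            edges_examined += 1
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, hops + 1))
    seen.discard(start)
    return sorted(seen), edges_examined


# Hypothetical data: a small neighbourhood around "ann" ...
GRAPH = {"ann": ["bob"], "bob": ["cat"], "cat": ["dan"]}
# ... plus 1,000 unrelated edges that the query never touches.
for i in range(1000):
    GRAPH[f"x{i}"] = [f"x{i + 1}"]

reached, examined = traverse(GRAPH, "ann", 2)
print(reached, examined)
```

Adding more unrelated nodes to `GRAPH` leaves the number of edges examined unchanged, whereas a table scan or join would have to consider them.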

Graph databases treat the relationships between data as being just as important as the stored data itself. This makes them especially well suited to highly crosslinked, unstructured and noisy information, and able to generate a comprehensive semantic context from a variety of data sources. Since graphs support complex data structures without logical restrictions, they are optimally suited to continuously changing and expanding data sets.

Graph Databases also have great benefits in tracking down security issues and cyber attacks. Although firewalls and security software can detect known threat signatures, attack methods change continuously. So, analysts need to discover novel threats and respond to them before financial markets, government bodies and authorities, or companies are damaged. Part of the problem is that network data is often generated faster than it can be analyzed. As a result, only a fraction of the available data can be properly evaluated, with critical information often hidden in unanalyzed data that lacks regular activity.

In order to stay ahead of new types of threats, a variety of data sources and types must be correlated and interpreted with anomaly detection in mind. This means that analysts must continually modify or expand their polling scheme in order to integrate new data sources, perform a variety of queries and differentiate regular traffic from abnormalities. A major obstacle is often the limited scalability and performance of the hardware: many queries require hours or days to deliver results. With a big data application for graph analytics such as Cray’s, a security analyst can hunt for and discover cyber attackers quickly, with the flexibility that schema-less models provide.

Another field of application is health care, in particular the analysis of genomic data and genome sequencing in cancer research. The Broad Institute of the Massachusetts Institute of Technology (MIT) and Harvard, a nonprofit research institute in the United States that seeks a greater understanding of diseases and advances in their treatment, was able to reach new standards with Cray’s agile analytics solution. The dynamic Big Data analytics platform Urika-GX combines the qualities of a supercomputer (enormous speed, scaling and throughput) with those of standardized enterprise hardware and an open source software environment. An exclusive feature of the Cray Urika-GX is the high-performance Cray Graph Engine, with which scientists can discover new dependencies and connections between data. In the case of the Broad Institute, scientists can analyze genome sequencing data at high throughput using the Cray Urika-GX system. They were able to significantly shorten the time needed to obtain Quality Score Recalibration (QSR) results from their genome analysis toolkit “GATK4” and its Apache Spark pipeline: from 40 minutes to nine.

Pick up any publication aimed at cybersecurity, payments, financial services or financial technology and more often than not, two topics dominate the headlines: blockchain and fraud.

While experts in the payments industry opine about the possibilities afforded by an anonymous and transparent distributed ledger technology (DLT) that eliminates third-party intermediaries from all types of peer-to-peer transactions, one thing is certain: in order to be widely accepted and used by the public at large, it must offer assurances and protections akin to what all parties expect in the current transactional ecosystem.

For this reason, many current academic discussions regarding blockchain are focused on fraud. As with any emerging technology, fraudsters are hard at work looking for ways to capitalize on security loopholes using techniques like identity compromise, as well as ways to bypass authentication using fraud tools, malware and malicious apps.

Much of the security buzz around blockchain revolves around the potential for it to eliminate fraud altogether; however, those discussions focus on the security and transparency of the ledger itself, failing to take a holistic view that includes who conducts those transactions and how they are conducted.

A recent paper by SWIFT and Accenture investigating how distributed ledger technologies (such as blockchain) can be used by the financial services industry (SWIFT on Distributed Ledger Technologies - Delivering an Industry Standard Platform Through Community Collaboration, April 2016) found a number of critical factors that need to be addressed before industry-wide adoption of DLTs, including building an identity framework – the ability to identify parties involved in transactions to ensure accountability and non-repudiation of transactions – and security and cyber defense – the ability to detect, prevent and resist cyber-attacks, which are growing in number and sophistication.

For a transaction to be trusted — whether the person is accessing the blockchain to make a transaction in the ledger, or making an online purchase paid for using Bitcoins — there needs to be a strong understanding that the person involved in the transaction is the person authorized to perform it. There also must be validation that the device itself is “clean” and doesn’t contain malware or crimeware.

While many enterprises may be watering at the mouth at the prospect of greatly reduced or eliminated fraud related to their payments and transaction networks, it will remain of the utmost importance for firms to ensure transactional trust by implementing sophisticated device intelligence solutions that leverage multi-factor authentication (MFA) to determine the riskiness or trustworthiness of the device.