This comment is often used to extol the virtues of the next big thing – Cloud, IoT, software-defined, the edge, Open Source, artificial intelligence, digitalisation – the list is seemingly endless. And while it’s part of the role of the DCS magazine to ensure that all our readers are kept abreast of the latest technologies and trends, just occasionally, maybe we should take a pause for breath, and realise that, for many data centre owners, operators and users, there’s enough to keep them busy just running and using their existing infrastructure, without confusing the picture with a whole new set of ideas.

However, time and tide wait for no data centre professional, so we’re not going to abandon bringing you what’s cutting edge, or even just edge(!), but hopefully balance the old and the new, the tried and the tested with the untried but must be tested. However, I would say that, having worked in and around the data centre industry for the best part of 20 years, I am constantly surprised when I attend industry conferences and workshops and see the same pictures of the same data centre bad practices time after time. It would seem that, despite the best efforts of vendors, consultants, trade associations, the media and others, there are still plenty of data centre managers and operators who don’t mind the ‘spaghetti’ cabling scenario, or are happy to have plenty of gaps in their hot or cold aisle containment strategies, not bothering to use blanking panels, or even a properly sized piece of wood or cardboard to plug the holes!

While the various surveys that predict the extinction of any business that fails to employ robots, IoT and a total Cloud strategy by, say, 2025, might be a little bit over the top, there is no doubt that the pace of change has never been quite so fast as right now. So, if you don’t at least start thinking about some kind of a future strategy soon, then you won’t be in any kind of position to respond to the next technology innovation wave – whenever that arrives (although we seem to be in the midst of one long such wave!).

Yes, the pace of change varies from country to country, and from technology to technology within each country as well, but anyone who doubts that massive change is taking place, needs only to think of some of the big names in virtually any industry sector that have disappeared for good. Sure, there will be many reasons why a company has to close, but I suspect that, in most cases, failing to keep pace with technology changes will be one of the main reasons. After all, properly implemented, the very latest IT offers a level of reliability, flexibility, efficiency and speed unheard of just a few years ago.

If you are not planning to change your data centre to keep pace with today’s available technologies, then there are plenty of companies who will be. No one can predict how, when, where new ideas and technical advances will come along, or even whether they’ll be adopted on a wide scale, but you’d be foolish to ignore them. Hit a wall of fog whilst driving your car and you have two choices – carry on at the same speed and hope that you don’t crash into the vehicle in front, or slow down and be as vigilant as you can to try and spot what’s out there. So, do you keep on doing the same things in the data centre, or make a decision to make some changes?

-l1uge6-aynu23.jpg)

Over 70% of IT organisations have trouble recruiting candidates for datacentre and facilities roles.

Despite on going consolidation worldwide and migration to public cloud, today’s datacentres are well-equipped to handle physical infrastructure requirements for the foreseeable future. Nearly 60% of organisations worldwide surveyed in 451 Research’s latest Voice of the Enterprise: Datacentre Transformation study said they have enough floor space and power capacity to last at least five years.Further, while the total number of IT employees is expected to decline over the coming 12 months, most organisations said the number of personnel dedicated to datacentre and facility tasks will stay the same or increase. This solid outlook was most often attributed to overall business growth (63% of respondents), but more than a third of organisations also pointed to demand from project-driven growth.

As a result of these demands, 73.7% of organisations said that recruiting for datacentre and facilities is at least moderately difficult. Respondents pointed to three common reasons: current candidates lack skills and experience, salary asking prices are too high, and a lack of candidates in the organisation’s region.

“The good news is many organisations are not facing a datacentre and facilities skills shortage at this time,” said Christian Perry, Research Manager and lead analyst of 451 Research’s Voice of the Enterprise: Datacentre Transformation. “Those who do have recruitment challenges say they most often train existing staff to learn new skills due to the dearth of available talent.”

Only 19.2% of the surveyed organisations facing these skills shortages said they would use managed service providers to fill the gaps. While this limits the opportunity for traditional MSPs and infrastructure vendors to offer value-added services, it creates opportunities for them to assist customers with training, for example providing education on eco-friendly HVAC (heating, ventilation and air conditioning) technologies.

Similarly, only 20.5% of the organisations that face skills shortages plan to move spending to public cloud, compared with 42% that said spending will not be impacted by those shortages, and 32.1% that said they will spend more on talent. However, 451 Research analysts found differences between organisations that have more generalists than specialists across their IT team.

“When IT teams consist primarily of generalists, they are more likely to invest to secure talent compared with specialist-heavy firms,” Perry said. “We find that siloed organisations tend not to be in a significant period of IT team transition, whereas generalist firms are transitioning to become even more generalist-heavy. This can backfire when personnel leave or retire, forcing them to scramble to find specialist skills in facilities, for example.”

In the second quarter of 2017, worldwide server revenue increased 2.8 percent year over year, while shipments grew 2.4 percent from the second quarter of 2016, according to Gartner, Inc.

"The second quarter of 2017 produced some growth compared with the first quarter on a global level, with varying regional results," said Jeffrey Hewitt, research vice president at Gartner. "The growth for the quarter is attributable to two main factors. The first is strong regional performance in Asia/Pacific because of data center infrastructure build-outs, mostly in China. The second is ongoing hyperscale data center growth that is exhibited in the self-build/ODM (original design manufacturer) segment.

"x86 servers increased 2.5 percent in shipments and 6.9 percent in revenue. RISC/Itanium Unix servers fell globally for the period — down 21.4 percent in shipments and 24.9 percent in vendor revenue compared with the same quarter last year. The 'other' CPU category, which is primarily mainframes, showed a decline of 29.5 percent in revenue," Mr. Hewitt said.

Hewlett Packard Enterprise (HPE) continued to lead in the worldwide server market based on revenue. Despite a decline of 9.4 percent, the company posted $3.2 billion in revenue for a total share of 23 percent for the second quarter of 2017 (see Table 1). Dell EMC maintained the No. 2 position with 7 percent growth and 19.9 percent market share. Huawei experienced the highest growth in the quarter with 57.8 percent.

Table 1

Worldwide: Server Vendor Revenue Estimates, 2Q17 (U.S. Dollars)

| Company | 2Q17 Revenue | 2Q17 Market Share (%) | 2Q16 Revenue | 2Q16 Market Share (%) | 2Q17-2Q16 Growth (%) |

| HPE | 3,204,569,547 | 23.0 | 3,536,530,453 | 26.1 | -9.4 |

| Dell EMC | 2,776,347,626 | 19.9 | 2,594,180,873 | 19.1 | 7.0 |

| IBM | 963,279,264 | 6.9 | 1,226,947,968 | 9.1 | -21.5 |

| Cisco | 866,450,000 | 6.2 | 858,924,000 | 6.3 | 0.9 |

| Huawei | 845,543,611 | 6.1 | 535,946,936 | 4.0 | 57.8 |

| Others | 5,281,754,345 | 37.9 | 4,801,420,134 | 35.4 | 10.0 |

| Total | 13,937,944,394 | 100.0 | 13,553,950,355 | 100.0 | 2.8 |

Source: Gartner (September 2017)

In server shipments, Dell EMC maintained the No. 1 position in the second quarter of 2017 with 17.5 percent market share (see Table 2). HPE secured the second spot with 17.1 percent of the market. Inspur Electronics experienced the highest growth in shipments with 31.5 percent, followed by Huawei with 26.1 percent growth.

Table 2

Worldwide: Server Vendor Shipments Estimates, 2Q17 (Units)

| Company | 2Q17 Shipments | 2Q17 Market Share (%) | 2Q16 Shipments | 2Q16 Market Share (%) | 2Q17-2Q16 Growth (%) |

| Dell EMC | 492,854 | 17.5 | 529,135 | 19.2 | -6.9 |

| HPE | 483,203 | 17.1 | 529,488 | 19.2 | -8.7 |

| Huawei | 176,426 | 6.2 | 139,866 | 5.1 | 26.1 |

| Inspur Electronics | 158,373 | 5.6 | 120,417 | 4.4 | 31.5 |

| Lenovo | 145,655 | 5.2 | 235,267 | 8.5 | -38.1 |

| Others | 1,367,176 | 48.4 | 1,203,525 | 43.6 | 13.6 |

| Total | 2,823,688 | 100.0 | 2,757,698 | 100.0 | 2.4 |

Source: Gartner (September 2017)

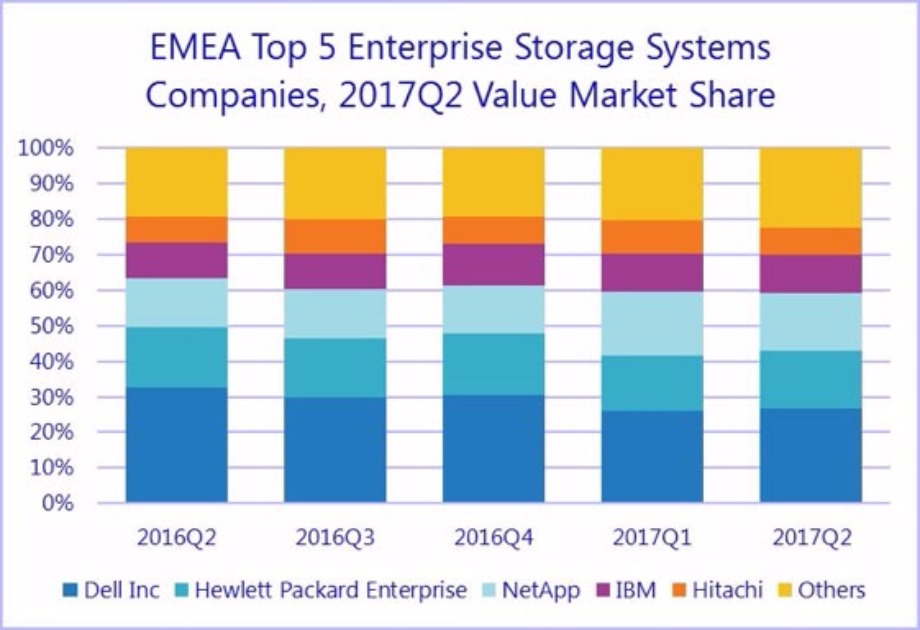

Total EMEA external storage systems value fell by 2.9% in dollars in the second quarter of 2017 but remained fairly flat at -0.1% in euros, according to the International Data Corporation (IDC) EMEA Quarterly Disk Storage Systems Tracker 2Q17.

The all-flash market still recorded high-double-digit growth in value (53.1% in dollars), accounting for about a quarter of the overall market, while hybrid arrays recorded a marginal decline (-3.1%) and HDD-only systems continued to contract (-25.7%).

"As enterprise datacenters embark on their digital transformation, purchase patterns evolve too, shifting toward opex-based consumption models and a preference for more efficient, leaner datacenter infrastructure such as all-flash arrays [AFAs] and hyperconverged systems [HCIs]. This shift keeps putting pressure on industry revenue and margins, rewarding vendors that are quick to adapt to the new market imperatives," said Silvia Cosso, research manager, European Storage and Datacenter Research, IDC.

Western Europe

The Western European external storage market recorded the lowest YoY decline in the EMEA region, at -1.0% in dollars and 1.9% in euros. Capacity, on the other hand, grew by 7.6% to 2,366.2 petabytes.

Unfavorable exchange rates and tough competition are still dragging down the Western European market, but strong growth is still coming from the midrange and AFA segments. In fact, AFA storage solutions again registered strong YoY double-digit growth, albeit amidst an increasingly challenging competitive environment that is shaking up market share rankings in the region.

"As AFA systems have grown to account for a quarter of total Western European shipment value, the segment is heading toward maturity, showing horizontal adoption in terms of enterprise dimension and specialization, as well as workload coverage, also helped by a wider offer in diversified price ranges," said Archana Venkatraman, research manager, IDC Europe.

Central and Eastern Europe, the Middle East, and Africa

The external storage market in Central and Eastern Europe, the Middle East, and Africa (CEMA) declined again in the second quarter of 2017, with value declining 9% to $370.2 million and capacity slipping 4.7% to 748.3 terabytes. With nearly 24% share, AFAs considerably outperformed total market growth and together with hybrid solutions were responsible for 70% of the total market in CEMA.

"AFAs will continue to be the storage segment with the highest growth potential and CEMA is one of the top regions in terms of growth and penetration," said Marina Kostova, research manager, Storage Systems, IDC CEMA. "With NVMe maturity and new SSD technologies, we expect to see external flash storage developing in two directions: nurturing new technologies to boost the performance of HDD/flash hybrid arrays and offering AFA 'hybrid' solutions with different tiers of flash. Both of these will offer better performance and more accessible pricing, increasing the penetration but decreasing the market value."

By region, both Central and Eastern Europe (CEE) and the Middle East and Africa (MEA) performed better than in the previous quarter, paving the way to the long-expected market stabilization. A major boost came from high-end AFA arrays, which posted over 300% YoY growth to take more than 50% of the high-end segment value. Similar to last quarter, CEE market performance was stable, excluding Russia due to the weaker performance of some of the largest incumbents. MEA market value was almost flat year on year as large-scale projects in the pipeline came to fruition.

| Top 5 Vendors, EMEA External Disk Storage Systems Value ($M) | |||||

| Vendor | 2Q16 | 2Q16 Market Shares | 2Q17 | 2Q17 Market Shares | 2Q17 YoY Growth |

| Dell Inc. * | $514.28 | 32.71% | $409.58 | 26.85% | -20.36% |

| Hewlett Packard Enterprise ** | $267.22 | 17.00% | $248.61 | 16.29% | -6.97% |

| NetApp | $212.83 | 13.54% | $246.69 | 16.17% | 15.91% |

| IBM | $162.67 | 10.35% | $163.31 | 10.70% | 0.39% |

| Hitachi | $110.41 | 7.02% | $116.31 | 7.62% | 5.35% |

| Others | $304.89 | 19.39% | $341.22 | 22.36% | 11.92% |

| Grand total | $1,572.30 | 100.00% | $1,525.71 | 100.00% | -2.96% |

Notes: Dell Inc. represents the combined revenues for Dell and EMC. Hewlett Packard Enterprise includes the acquisition of Nimble, completed in April 2017.

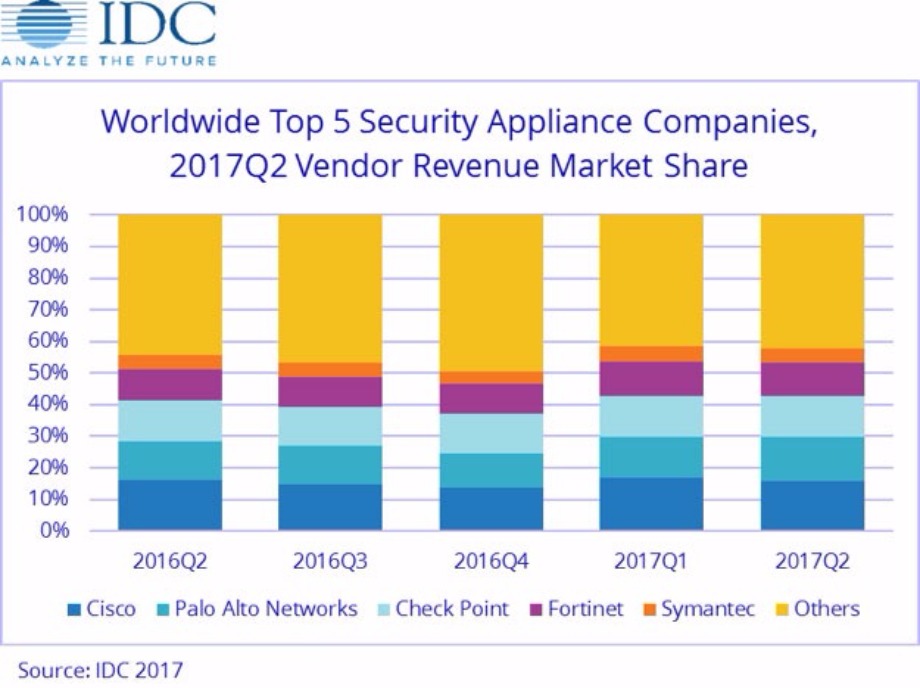

According to the International Data Corporation (IDC) Worldwide Quarterly Security Appliance Tracker, the total security appliance market saw positive growth in both vendor revenue and unit shipments for the second quarter of 2017 (2Q17). Worldwide vendor revenues in the second quarter increased 9.2% year over year to $3.0 billion and shipments grew 7.0% year over year to 706,186 units.

The trend for growth in the worldwide market driven by the Unified Threat Management (UTM) sub-market continues, with UTM reaching record-high revenues of $1.6 billion in 2Q17 and year-over-year growth of 16.8%, the highest growth among all sub-markets. The UTM market now represents more than 50% of worldwide revenues in the security appliance market. The Firewall and Content Management sub-markets also had positive year-over-year revenue growth in 2Q17 with gains of 9.5% and 6.4%, respectively. The Intrusion Detection and Prevention and Virtual Private Network (VPN) sub-markets experienced weakening revenues in the quarter with year-over-year declines of 11.7% and 1.3%, respectively.

Regional Highlights

The United States delivered 41% of the worldwide security appliance market revenue and was the major driver for spending in Q2 2017 with 9.2% year-over-year growth. Asia/Pacific (excluding Japan)(APeJ) had the strongest year-over-year revenue growth in 2Q17 at 20.9% and captured 23.9% revenue market share. The more mature regions of the world – the United States and EMEA – combined to provide nearly two thirds of the global security appliance market revenue. Both regions had positive growth in the single-digit range. Europe, the Middle East and Africa (EMEA) saw an annual increase of 2.3%. Asia/Pacific (including Japan)(APJ) and the Americas (Canada, Latin America, and the U.S.) experienced year-over-year growth of 17.2% and 8.8%, respectively.

"Over the last quarter, there has been growth in every region with particularly strong growth in Asia/Pacific. Firewall and UTM continue to be the strongest areas of growth, as those products continue to add security features leveraging and addressing cloud protection." said Robert Ayoub, research director, Security Products at IDC.

| Top 5 Vendors, Worldwide Security Appliance Revenue, Market Share, and Growth, Second Quarter of 2017 (revenues in US$ millions) | |||||

| Vendor | 2Q17 Revenue | 2Q17 Market Share | 2Q16 Revenue | 2Q16 Market Share | 2Q17/2Q16 Growth |

| 1. Cisco | $479.60 | 15.9% | $448.72 | 16.3% | 6.9% |

| 2. Palo Alto Networks | $421.09 | 14.0% | $333.68 | 12.1% | 26.2% |

| 3. Check Point | $380.32 | 12.6% | $358.05 | 13.0% | 6.2% |

| 4. Fortinet | $320.12 | 10.6% | $269.61 | 9.8% | 18.7% |

| 5. Symantec | $139.35 | 4.6% | $127.13 | 4.6% | 9.6% |

| Other | $1,267.10 | 42.1% | $1,216.50 | 44.2% | 4.2% |

| Total | $3,007.59 | 100.0% | $2,753.68 | 100.0% | 9.2% |

| Source: IDC Worldwide Quarterly Security Appliance Tracker Q2 2017 September 18, 201 | |||||

A survey by leading law firm Blake Morgan has revealed nine out of 10 businesses have still not made crucial updates to their privacy policies – a key requirement ahead of major changes to data protection laws.

As time runs out to comply with the General Data Protection Regulation (GDPR), the survey found many organisations may be at risk of non-compliance, risking regulatory action and reputational and brand damage for not getting their house in order.With the massive growth of the digital economy, GDPR represents the biggest shift in data protection for many years and all organisations which retain or process personal information will need to comply. The new law focuses on greater transparency as to how personal data is collected, retained and processed, makes organisations more accountable and gives enhanced rights to those whose personal data is being collected and processed.

It is backed up with a significantly higher fines regime for the most serious breaches of up to £17m or 4% of worldwide turnover (whichever is greater) and a requirement to notify personal data breaches within 72 hours where they are likely to result in a risk to people's rights and freedoms. Blake Morgan’s research revealed just over 10 per cent of those surveyed had updated their privacy policies to comply with the new law, while only a quarter had put in place systems to ensure data security breaches were notified in line with GDPR.

The findings showed almost 40 per cent of organisations surveyed had not taken steps to prepare for the new regulations, while more than a third were not confident they would be able to comply with GDPR by 25th May next year when the law comes into force. A key finding was that just over a fifth of businesses surveyed were not aware of GDPR and the forthcoming and related ePrivacy Regulation and what these will mean for their organisation. Simon Stokes, a Partner specialising in data protection law at Blake Morgan, said: “Our survey highlights that a significant proportion of organisations across the public and private sectors are still underprepared for these major changes to data protection law.

“There appears to be a genuine confusion among many business leaders about what the new law means and how to achieve full compliance. “Some of the survey comments highlight a desire for clearer guidance and the mountain of work that many organisations believe they are facing because of the sheer volume of data and a limited timescale. “With the clock counting down to the law coming into force, we would recommend a focused effort by businesses to get to grips with the changes and implement a strategic plan of action.

“GDPR Compliance is good corporate housekeeping. Not only will it avoid running the risk of financially and reputationally damaging fines or sanctions – ultimately it will assure the public’s trust in your organisation at a time when data privacy and security are more important than ever before. As the UK's data protection regulator ICO has recently highlighted GDPR is essentially about trust.” Important findings included: Only around one in 10 businesses (13 per cent) had updated privacy policies, one of the significant requirements of GDPR.

Almost a quarter of businesses (23 per cent) said they were unaware of the new data protection laws despite the looming deadline of 25 May 2018. Around four out of 10 businesses (39 per cent) had not taken any steps at all to prepare for the new law – leaving just months to act. Around four out of 10 businesses (38 per cent) were not confident they would be able to comply with GDPR by 25 May. Around one in five businesses (21 per cent) did not currently have a senior person in place responsible for data protection.

More than three quarters of businesses (76 per cent) had not put in place systems to ensure data security breaches are notified in line with GDPR. More than three quarters of businesses (77 per cent) had not reviewed their data processing contracts which will be under greater scrutiny under GDPR.

More than four out of 10 businesses (42 per cent) were unaware that the rules on direct marketing and the use of internet cookies are likely to change with the forthcoming ePrivacy Regulation which also has a target implementation date of 25 May 2018.

New Q2 data from Synergy Research Group shows that over the last 24 months, quarterly spend on all data center hardware and software has grown by just 5%, while spending on the public cloud portion of that has grown by 35%.

The private cloud infrastructure market has also grown, though not as strongly as public cloud, while spending on traditional, non-cloud data center hardware and software has dropped by 18%. ODMs in aggregate account for the largest portion of the public cloud market, with Cisco being the leading individual vendor, flowed by Dell EMC and HPE. The Q2 market leader in private cloud was Dell EMC, followed by HPE and Microsoft. The same three vendors led in the non-cloud data center market, though with a different ranking.

Total data center infrastructure equipment revenues, including both cloud and non-cloud, hardware and software, were over $30 billion in the second quarter, with public cloud infrastructure accounting for over 30% of the total. Private cloud or cloud-enabled infrastructure accounted for over a third of the total. Servers, OS, storage, networking and virtualization software combined accounted for 96% of the Q2 data center infrastructure market, with the balance comprising network security and management software. By segment, HPE is the leader in server revenues, while Dell EMC has a strong lead in storage and Cisco is dominant in the networking segment. Microsoft features heavily in the rankings due to its position in server OS and virtualization applications. Outside of these four, the other leading vendors in the market are IBM, VMware, Huawei, Lenovo, Oracle and NetApp.

“With cloud service revenues continuing to grow by over 40% per year, enterprise SaaS revenue growing by over 30%, and search/social networking revenues growing by over 20%, it is little wonder that this is all pulling through continued strong growth in spending on public cloud infrastructure,” said John Dinsdale, a Chief Analyst and Research Director at Synergy Research Group. “While some of this is essentially spend resulting from new services and applications, a lot of the increase also comes at the expense of enterprises investing in their own data centers. One outcome is that public cloud build is enabling strong growth in ODMs and white box solutions, so the data center infrastructure market is becoming ever more competitive.”

Following assessment and validation from the panel at Angel Business Communications. The shortlist for the 24 categories in this year’s SVC Awards has been put forward for online voting by our readership.

Voting is free of charge and must be made online at www.svcawards.com

The SVC Awards celebrate achievements in Storage, Cloud and Digitalisation, rewarding the products, projects and services as well as honouring companies and teams. The SVC Awards recognise the achievements of end-users, channel partners and vendors alike and in the case of the end-user category there will also be an award made to the supplier who nominated the winning organisation.

Voting remains open until 3 November so there is time to make your vote count and express your opinion on the companies that you believe deserve recognition in the SVC arena.

The winners will be announced at a gala ceremony on 23 November at the Hilton London Paddington Hotel.

Welcoming both the quantity and quality of the 2017 SVC Awards shortlist entries, Jason Holloway, Director of IT Publishing & Events at Angel, said: “I’m delighted that we have this annual opportunity to recognise the innovation and success of a significant part of the IT community. The number of entries, and the quality of the projects, products and people they represent, demonstrate that the SVC Awards continue to go from strength to strength and fulfil an important role in highlighting and recognising much of the great work that goes on in the industry.”

All voting takes place on line and voting rules apply. Make sure you place your votes by 3 November when voting closes. Visit : www.svcawards.com

Storage Project of the Year

Cohesity supporting Colliers International

DataCore Software supporting Grundon Waste Management

Mavin Global supporting The Weetabix Food Company

Cloud / Infrastructure Project of the Year

Axess Systems supporting Nottingham Community Housing Association

Correlata Solutions supporting insurance company client

Navisite supporting Safeline

Hyper-convergence Project of the Year

HyperGrid supporting Tearfund

Pivto3 supporting Bone Consult

UK Managed Services Provider of the Year

EACS

EBC Group

Mirus IT Solutions

netConsult

Six Degrees Group

Storm Internet

Vendor Channel Program of the Year

NetApp

Pivot3

Veeam Software

International Managed Services Provider of the Year

Alert Logic

Claranet

Datapipe

Backup and Recovery / Archive Product of the Year

Acronis – Backup 12.5

Altaro Software – VM Backup

Arcserve - UDP

Databarracks – DraaS, BaaS, BCaaS solutions

Drobo – 5N2

NetApp – BaaS solution

Quest – Rapid Recovery

StorageCraft – Disaster Recovery Solution

Tarmin – GridBank

Cloud-specific Backup and Recovery / Archive Product of the Year

Acronis – Backup 12.5

CloudRanger – SaaS platform

Datto – Total Data Protection platform

StorageCraft – Cloud Services

Veeam Software - Backup & Replication v9.5

Storage Management Product of the Year

Open-E – JovianDSS

SUSE – Enterprise Storage 4

Tarmin – GridBank Data Management platform

Virtual Instruments – VirtualWisdom

Software Defined / Object Storage Product of the Year

Cloudian – HyperStore

DDN Storage – Web Object Scaler (WOS)

SUSE – Enterprise Storage 4

Software Defined Infrastructure Product of the Year

Anuta Networks – NCX 6.0

Cohesive Networks – VNS3

Runecast Solutions – Analyzer

Silver Peak – Unity EdgeConnect

SUSE – OpenStack Cloud 7

Hyper-convergence Solution of the Year

Pivot3 - Acuity Hyperconverged Software Platform

Scale Computing - HC3

Syneto - HYPERSeries 3000

Hyper-converged Backup and Recovery Product of the Year

Cohesity – DataProtect

ExaGrid - HCSS for Backup

Syneto - HYPERSeries 3000

PaaS Solution of the Year

CAST Highlight - CloudReady Index

Navicat – Premium

SnapLogic - Enterprise Integration Cloud

SaaS Solution of the Year

Adaptive Insights – Adaptive Suite

Impartner – PRM

IPC Systems - Unigy 360

Ixia - CloudLens Public

SaltDNA - Secure Enterprise Communications

x.news information technology gmbh – x.news

IT Security as a Service Solution of the Year

Alert Logic – Cloud Defender

Barracuda Networks - Essentials for Office 365

SaltDNA - Secure Enterprise Communications

Votiro - Content Disarm and Reconstruction technology

Cloud Management Product of the Year

CenturyLink - Cloud Application Manager

Geminaire - Resiliency Management Platform

Highlight - See Clearly - Business Performance Acceleration

HyperGrid – HyperCloud

Rubrik – CDM platform

SUSE - OpenStack Cloud 7

Zerto - Virtual Replication

Storage Company of the Year

Acronis

Altaro Software

DDN Storage

NetApp

Virtual Instruments

Cloud Company of the Year

Databarracks

Navisite

Six Degrees Group

Storm Internet

Hyper-convergence Company of the Year

Cohesity

Pivot3

Syneto

Storage Innovation of the Year

Acronis - Backup 12.5

Altaro Software - VM Backup for MSP’s

DDN Storage - Infinite Memory Engine

Excelero – NVMesh

Nexsan – Unity

Cloud Innovation of the Year

CloudRanger – Server Management platform

IPC Systems - Unigy 360

SaltDNA - Secure Enterprise Communications

StaffConnect - Mobile App Platform

Zerto - ZVR 5.5

Hyper-convergence Innovation of the Year

Pivot3 - Acuity HCI Platform

Schneider Electric - Micro Data Centre Solutions

Syneto - HYPERSeries 3000

Digitalisation Innovation of the Year

Asperitas – Immersed Computing

IGEL - UD Pocket

Loom Systems - AI-powered log analysis platform

MapR – XD

For more information and to vote visit: www.svcawards.com

True or false? When it comes to Tier Certification, just ask Uptime Institute.

By Uptime Institute Staff.

Uptime Institute’s Tier Classification System for data centers has reached the two-decade mark. Since its creation in the mid-1990s, Tiers has evolved from a shared industry terminology into the global standard for third-party validation of data center critical infrastructure.

In that time, the industry has changed, and Tiers has evolved with it, remaining as relevant and important as it was when Uptime Institute first developed and disseminated Tiers. At the same time, Uptime Institute has observed that public understanding of Tiers has been clouded by the many myths and misconceptions that have developed over the years.

Uptime Institute has long been aware that not everyone fully understands the concepts described by the Tier Standards, and yet others disagree with some of the definitions. Both these situations lead to classic misunderstandings in which individuals substitute their preferences for accurate information. Other times, however, marketers have invoked a kind of shorthand based on Tiers. While objectionable, these marketers have coined terms like Tier III plus when speaking to their potential customers. These terms have no basis in Tiers but can be especially confusing to IT, real estate, procurement personnel, and even CFOs, all of whom might lack a technical background.

Other myths develop because some industry professionals reference old, out-of-date publications and explanatory material that is no longer valid. There may be other sources of myth, but knowing that the Uptime Institute is the only source of current and reliable information about Tiers is what is really important. We conduct numerous classes during the year, write many articles, and eld numerous inquiries to keep the industry current on Tiers.

Fundamentally, Uptime Institute created the Tier Classification System to consistently evaluate various data center facilities in terms of potential site infrastructure performance, or uptime. The system comprises four Tiers; each Tier incorporates the requirements of the lower Tiers.

Data center infrastructure costs and operational complexities increase with Tier level, and it is up to the data center owner to determine the Tier that fits the business’s need.

Uptime Institute is the only organization permitted to Certify data centers against the Tier Classification System. Uptime Institute does not design, build, or operate data centers. Uptime Institute’s role is to evaluate site infrastructure, operations, and strategy.

From this experience, we have compiled and addressed many of the myths and misconceptions. You can read about some of these experiences in Uptime Institute eJournal articles such as “Avoid Failure and Delay on Capital Projects: Lessons from Tier Certification” and “Avoiding Data Center Construction Problems.” For even more information, please contact us at https://uptimeinstitute.com/contact.

False. Tiers is a performance-based, business-case-driven data center benchmarking system. An organization’s risk tolerance determines the appropriate Tier for the business. In other words, Tiers is predicated on the business case of an individual company. Companies that fail to develop a unique business case for their facilities before developing a Tier objective are misusing Tiers and bypassing the internal dialogue that needs to occur.

False. An organization’s tolerance for risk determines the appropriate Tier to support the business objective. Tier IV is not the best answer for all organizations, and neither is Tier II. Owners should perform due diligence assessments of their facilities before determining a Tier objective. If no business objective is defined, then Tiers may be misused to rationalize unnecessary investment.

Tier I and Tier II are tactical solutions, usually driven more by first-cost and time-to-market than life-cycle cost and performance (uptime) requirements. Organizations selecting Tier I and Tier II solutions typically do not depend on real-time delivery of products or services for a significant part of their revenue stream. Generally, these organizations are contractually protected from damages stemming from lack of system availability.

Rigorous uptime requirements and long-term viability are usually the reason for selecting strategic solutions found in Tier III and Tier IV site infrastructure. In a Tier III facility, each and every capacity component can be taken out of service on a planned basis, without affecting the critical environment or IT processes. Tier IV solutions are even more robust, as each and every capacity component and distribution path can sustain a failure, error, or unplanned event without impacting the critical environment or IT processes.

A Tier IV solution is not better than a Tier II solution. The performance and capabilities of a data center’s infrastructure should match a business application; otherwise companies may overinvest or take on too much risk.

For example, before building a Tier II Certified Constructed Facility, which by definition does not include Concurrent Maintainability across all critical subsystems, an owner should consider whether the business can tolerate a planned or maintenance-related shutdown and how the site operations team would coordinate a site-wide shutdown for maintenance. Similarly business objectives should drive decisions to build a Tier I, Tier III, or Tier IV Certified Constructed Facility.

False. Tier Certification is a performance-based evaluation of a data center’s specific infrastructure; it is not a checklist or cookbook. Unfortunately, some industry shorthand employs N terminology—where N is de ned as the number of components that are minimally required to meet the load demand—to define availability. Incorporating more equipment can be described as designing an N+1, N+2, 2N, or 2(N+1) facility. However, increasing the component count does not determine or guarantee achievement of any specific Tier level, because Tiers also includes evaluation of distribution pathways and other system elements. Therefore, it is possible to achieve Tier IV with just N+1 components, depending on how they are configured and connected to redundant distribution pathways.

False. The first step in a Tier Certification process is a Tier Certification of Design Documents. Uptime Institute Consultants review the 100% design documents, ensuring each electrical, mechanical, monitoring, and automation subsystem meets the fundamental concepts and there are no weak links in the chain. The Design Certification is intended to be a milestone so that data center owners can commence data center construction knowing that the intended design meets the Tier objective.

Tier Certification of Design Documents applies to a document package. It is intended as provisional verification until the Tier Certification of Constructed Facility. Uptime Institute has not verified the constructed environment of these facilities, and thus cannot speak to the standard(s) to which they were built. To emphasize this point, Uptime Institute implemented an expiration date on Design Certifications. All Tier Certification of Design Documents awards issued after 1 January 2014 expired two years after the award date.

During a Facility Certification, a team of Uptime Institute consultants conducts a site visit, identifying discrepancies between the design drawings and installed equipment. The consultants observe tests and demonstrations to prove Tier compliance. Fundamentally, this is the value of the Tier Certification, finding these blind spots and weak points in the chain. Uptime Institute consultants say that in almost every site visit they find that changes have been made after the Design Certification was awarded so that one or more systems or subsystems will not perform in a way that complies with Tier requirements.

More recently, Uptime Institute instituted the Tier Certification of Operational Sustainability to evaluate how operators run and manage their mission-critical facilities. Even the most robustly designed and constructed facilities may experience outages without a well-developed comprehensive management and operation program. Certification at all three levels is how data center owners can be assured they are realizing the maximum potential of their data centers.

False. Uptime Institute removed references to “expected downtime per year” from the Tier Standard in 2009, but they were never a part of the Tier definitions. Tier Standard: Topology is based on specific performance factors (outcomes) that demonstrate that a facility has met specific performance objectives, such as having redundant capacity components, Concurrent Maintainability (generally, the ability to remove any capacity or distribution component from service on a planned basis without impacting IT), or Fault Tolerance (generally, the ability to experience any unplanned failure in the site infrastructure without impacting IT). However, even a Tier IV data center, which is Fault Tolerant, may experience IT outages if it is not operated and managed effectively.

There are statistical tools to predict the frequency of failures and time to recover. Availability is simply the arithmetic calculation of time a site was available over total time. The number, frequency, and duration of disruptions will drive the availability result. However, caution is appropriate when using these tools. Human activity is often not considered by statistical models. In addition, the statistical prediction of a 100-year storm, for example, can obscure the possibility that several 100-year storms can happen in the same year.

False. Uptime Institute has Certified many existing buildings. However, the process can be more challenging when working in facilities with live loads. For best results with an existing facility, the process should begin with a Tier Gap Analysis rather than a formal Certification effort. Tier Gap Analysis provides a high-level summary review for major Tier shortfalls. This allows the owner to make an informed decision whether to proceed with a detailed, exhaustive Certification effort. Tier Certification of Constructed Facility can be performed with any load profile, including resistive load banks, live critical IT load, or a mix.

False. Uptime Institute is currently delivering Tier Certifications in more than 85 countries. Tiers, which allows for many solutions and a variety of configurations, gives the design, engineering, and operations teams the flexibility to meet both local regulations and performance requirements. To date, there has not been a conflict between Tiers and local building codes, statutes, or jurisdictions.

False. In 2014 Uptime Institute and the Telecommunications Industry Association (TIA) agreed on a clear separation between their respective benchmarking systems to avoid industry confusion and drive accountability. In fact, any reference to the TIA rating of a data center may not include the word Tier.

The core objective of Uptime Institute Tiers is to define performance capabilities that will deliver availability required by the data center owner. By contrast, TIA member company experts focus on the need to support the deployment of advanced communications networks. See https://uptimeinstitute.com/uptime-tia for a more detailed explanation.

False. According to Tier Standard: Topology, the only reliable source of power for a data center is the engine- generator plant. This is because utility power is subject to unscheduled interruption—even in places with reliable power grids. As a result, the number of utility feeds, substations, and power grids that provide public power to a data center neither predicts nor influences Tier level. As a consequence, utility power is not even required for Tiers. Most Tier Certified data centers use utility power for main operations as an economic alternative, but this decision does not affect the owner’s target Tier objective.

False. Tiers does not require that the engine-generator plant actually run at all times; however, data centers will typically utilize a public utility a majority of the time for cost or regulatory reasons. At the same time, the engine-generator plant must be properly configured, rated, and sized to have the capability to carry the critical load without runtime limitations. Hence, the performance requirements outlined in the Tier Standard must be met with the data center supported by engine-generator power. Meeting these criteria requires special attention to engine-generator capacity ratings and power distribution.

False. There is no correlation between EPA’s Tiers (or other restrictions of engine-generator operation) and Uptime Institute Tiers, except that both systems use a similar hierarchical system of nomenclature. The EPA’s limits on runtime may complicate a facility’s testing and maintenance regimens and add costs when a facility is forced to rely on backup power for an extended period. However, runtime limitations posed by local authorities do not exempt a data center from having on-site power generation rated to operate without runtime limitations at a constant load.

False. When code or the local authority having jurisdiction (AHJ) mandate an EPO, this does not prohibit Tier compliance. At the same time, Uptime Institute does not recommend EPO installation, unless it is compelled by a local code because even Tier Certified data centers are vulnerable to outages from purposeful or accidental activation of the EPO system. Analysis of the Uptime Institute Network’s Abnormal Incident Report (AIRs) database confirms that accidental EPO activation is a recurring cause of downtime.

The Tiers Standard requires that maintenance, isolation, and/or removal can be performed on the EPO system without affecting the critical load for Tier III data centers. Tier IV data centers additionally require a Fault Tolerant EPO system.

False. The choice of under floor or overhead cooling is a decision to be made by the owner based an operational preference. In Uptime Institute’s experience, a raised floor enhances operational flexibility over the long term. Yet, decisions such as raised floor or on-slab, Cold Aisle/Hot Aisle, containment of Cold Aisle/Hot Aisle, and gallery cooling can affect the efficiency of the computer room environment, but are not mandated by Uptime Institute Tiers.

True. The Tier Standard includes a concession for equipment with odd numbers of cords (1,3,5) in the form of rack-mounted transfer switches to provide access to multiple power paths. However, Tier III and Tier IV data centers must still have multiple and independent feeds to the rack.

The Tier Standard focuses on ensuring that the facility’s infrastructure meets the requirements of the Tier objective. There are many reasons why a facility may contain single-corded IT devices or those with an odd number of power supplies, including lack of knowledge of the facility impacts, lack of options for equipment vendors, and colocation environments where facility personnel have no control over the types of IT devices within the data center. Rack-based transfer switches are most typically supplied by the IT side of the organization, so the facility’s infrastructure can meet the Tier objective. However, planned isolation or fault of these rack-based transfer switches may lead to an outage for individual racks or devices.

Partially true. Tier II allows for Concurrent Maintenance of capacity components, but not distribution pathways or critical elements. So a Tier II Certified facility can perform Concurrent Maintenance on engine generators, UPS, chillers, cooling towers, pumps, air conditioners, fuel tanks, water tanks, and fuel pumps

but not switchboards, panels, transfer switches, transformers, bus bars, cables, and pipes. In many cases, this limitation will require the computer room to be shutdown for planned maintenance or replacement of critical pathways and elements.

The requirement to maintain any component, pathway, or element without shutting down equipment, known as Concurrent Maintainability, defines Tier III. Many owners’ business cases, including healthcare, domestic outsourcers, and state governments, require Tier III. The list of organizations that have protected their investment with Tier Certifications may be found on Uptime Institute’s website.

Partially true. Tier III requires active/active distribution for critical power distribution (which is defined as the output of the UPS and below). Outside of that, active/inactive is acceptable. This means that if a rack receives dual power from two separate power distributions, they must both normally be active. It is not allowable to have one feed normally disabled, nor is it Tier III compliant to have one of the power feeds directly fed from utility power while bypassing a UPS power source.

There are no active/active requirements for mechanical systems in Tier III data centers. So if there are N+1 chillers in a Tier III facility, with each chiller feeding separate A and B chilled water loops, it is permissible for one of the loops to be normally disabled, with all air conditioners normally fed from the same loop.

False. Infrastructure changes must be approached using carefully developed and written procedures and processes. If the topology of a facility changes, it may no longer be Concurrently Maintainable or Fault Tolerant, so clients should have Uptime Institute review designs and construction that might affect a facility’s topology to protect their investment and Tier Certification. Tier Certifications can be revoked if unreviewed changes compromise a facility’s Concurrent Maintainability or Fault Tolerance.

Mostly false. The Tier Standard requires only that Tier IV facilities provide stable cooling to the IT and UPS environment for the time it takes for the mechanical systems to completely restart after a utility power outage and provide rated load to the data center. Tier IV data centers must also be able to maintain a stable thermal environment for the duration of the mechanical restart time and for any 15-minute period in accordance with the 2015 ASHRAE Thermal Guidelines. Tier IV facilities are also required to be active/active for all systems. This is intended to ensure that Continuous Cooling solutions are not negated by a lack of active operation of components. A lightly loaded data center or one with a very complex control system may be able to meet these requirements, without using all the available cooling units. However, there are Tier IV data center designs, especially those at full load, that would in fact require all units to run during normal operations.

Typically false. Makeup air systems in data center applications are typically designed to meet one of three objectives (or a combination of the three):

Data centers are rarely designed in a manner that require the makeup air handler to be active in order to meet the N cooling capacity requirement. However, the existence of a makeup air handler and its operations cannot negatively impact compliance with Tiers. For example, if a makeup air handler is not sized to ASHRAE extremes in compliance with Tiers, the additional heat load from this air handler at those conditions must be considered when sizing the critical cooling system.

False. The Tier Standard is vendor and technology neutral, which means it is possible to Tier Certify facilities that include a wide variety of innovative and new technologies, including DRUPS.

Facilities tend to deploy DRUPS, which combine a diesel engine and a rotary UPS that uses kinetic energy to eliminate batteries, which require high levels of maintenance, somewhat frequent replacement, and a lot of extra space for battery placement/storage. This design usually provides ride-through times of between 10-30 seconds, depending on the application, which is shorter than other technologies. The Tier Standard does not include a minimum ride-through time. In fact, Uptime Institute has Certified several facilities that include DRUPS technology.

DRUPS may also be used to power motor loads. That means that caution must be exercised to ensure that the DRUPS have sufficient capacity to power each and every system and subsystem, including cooling systems, which is accomplished by putting the mechanical components on a no-break bus.

False. Tier Certification analyzes each and every system and subsystem down to the level of valve positions and panel feeds. Ductwork, just like piping systems, may need planned maintenance, replacement, or reconfiguration. As such, traditional ductwork distribution systems must meet the requirements of the Tier objective.

Uptime Institute understands that there is a lot of confusion about what “maintaining” ductwork means to meet Concurrently Maintainable requirements. But in this case, Concurrent Maintainability is about having the capability to isolate a system or part of a system to maintain, repair, upgrade, or reconfigure the data center without impacting any of the computer equipment.

False. Although a critical consideration for the life-cycle operation of the facility and in determining, evaluating, and mitigating risk to the data center, geographical location does not affect a facility’s Tier level and is not part of the Tier Standard: Topology.

Data center designers can take precautions to address the specific risks of a site. A data center sited in a high-risk earthquake zone can include equipment that has been seismically rated and certified as well as incorporate techniques that mitigate damage from seismic activity. Or if a data center has been sited in a high-risk tornado area, designers can consider wind protection measures for the exterior electrical and heat rejection equipment.

Site Location is a criterion in the Tier Certification of Operational Sustainability.

By Steve Hone CEO, The DCA

This

month’s DCA journal theme is Smart Cities, Big Data, Cloud and IoT. All of

these topics are intrinsically linked and put the data centre at the heart of

the Internet of Everything. As more smart city projects are rolled out the

reliance on data centres to support these services will become more and more

critical to the health and sustainability of our society.

Someone asked me today to predict the future demand over the next 10 years. Taking a leaf out of the politician’s guide book I decided to skirt round the question simply because I’m not sure anyone can truly know where this road will lead us in the next five years, let alone ten. Maybe we will all be flying around like the “Jetsons” by then – Wow! Now that shows my age!

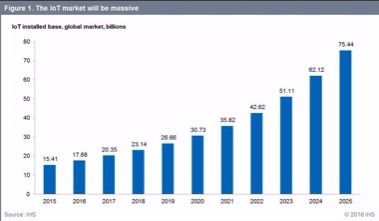

What I do know is if you think we produce a lot of data now “you ain’t seen nothing yet”. The concept of smart cities and IoT is only just in its infancy and as it matures it will in turn produce a mind-boggling amount of data which will all need to be gathered, stored, processed and analysed somewhere, and that somewhere will probably be in a data centre. A more interesting question is - what will a data centre in 2027 look like?

So, if IoT, Cloud and Big Data are helping to facilitate these Smart City initiatives what’s the driver behind it? Well, the answer is “necessity”.

At the start of the 2nd World War we were still flying around in prop planes covered in fabric; within six years we had jet fighters; you can say the same for Radar, Sat Nav and even the first computer. All these innovations were driven though necessity.

The necessity to initiate Smart City projects is largely driven by the increase of the global population and more importantly the fact that a sizable percentage of the population are choosing to live in cities. If you look at the figures of any city around the world, urbanisation continues to increase at an alarming rate. The reason for this is more than just a tribal or herding instinct; go back a hundred years and this move in population was because of the mechanisation of farming which drove people towards towns and cities to find work. This migration to our cities has continued to accelerate ever since at a rate of 150,000 every day according to latest figure. Between 2011 and 2050, the world’s urban population is projected to rise by 72 %; from 3.6 billion to 6.3 billion. Demographers predict that by the end of the next century half of the world's population will be urban dwellers.

The traditional methods of supporting our cities inhabitants is simply not going to be scalable enough when faced with this predicted explosion in the population. We need to start to work smarter not harder to ensure all services (both public and private) operate at optimum efficiency if we are to continue to support our citizens and achieve economic, social, and environmental sustainability.

This will also only be made possible by improving a city’s efficiency, this requires the integration of new infrastructure and services. While the availability of smart solutions for cities continues to rise rapidly we need to make sure the data centre sector can respond and keep pace.

This transformation also requires radical changes in the way cities are run today, therefore developing smart cities is not just a process allowing technology providers to offer technical solutions and of city authorities implementing them; but the development of the right environment for smart solutions to be effectively adopted and used.

The DCA continues to provide a trusted environment where knowledge can be both shared and gained and where collaboration can flourish. Thank you to all the contributors this month and I look forward to a time when my car drives me to work while I read the paper, I have a feeling I won’t have long to wait.

The next edition provides you all with an opportunity to let your customers speak for you and tell everyone how great you are; the deadline for “End User Case Studies” is the 24th October. Please forward all submissions to Amanda McFarlane - amandam@datacentrealliance.org

By Ian Shaylor, Head of Customer Insights and Data, British Gas Business

The myth that ‘big data’ is somehow the preserve of large corporations like British Gas Business lingers over many small firms.

These large, complex datasets may sound overwhelming but when it comes to interpreting and applying this information to add business value, being small can actually be an advantage. This is because small businesses tend to be more agile than larger corporations and can therefore act more quickly on the insights offered by big data. And having access to analytics can be insightful, regardless of the actual size or scale of your dataset.

Whatever business you’re in, you’re part of a supply chain and you’re likely to already be part of the big data picture – particularly if you have big corporate customers who are almost certainly using big data business analytics to assess what is being delivered. On this basis, there’s no ‘opt-out’ clause. The smart reaction is to look at big data as an opportunity and play your scale and agility in your favour.

Another myth is that applying business analytics is an expensive process but in fact there are many simple, low-cost – or sometimes even free – tools and software packages available that can make analytics accessible. Google analytics, for example is a free means of gathering customer insights, while Microsoft’s Azure range is affordable for small businesses. And some of your suppliers may also offer tools to help you analyse specific sets of data.

To use data analytics most effectively, it’s important to stay focused and start small. Identify the specific business issue that you would like business analytics to solve or the question that you want analytics to help you answer.

Are you looking, for example, to better understand how your customers use your website or do you want to assess whether your own perception of your industry matches that of the market? By pinpointing the issue, you will be able to use the available analytics most effectively for your purpose.

To really take advantage of the benefits of big data, having someone near the top of your business with strong analytical and technical abilities as a business analytics champion can make a big difference. This person should be an advocate for data-backed insights and their use in decision-making and should ensure that the application of data across the business grows at a manageable rate.

One way that small businesses across the UK can use big data to their advantage is by analysing their energy consumption to understand how energy is being used and where changes can be made to improve efficiency. As a first step, you need to have a smart meter installed. More than a third of our business customers already have this technology. Smart meters automatically send readings securely to energy suppliers. This means more accurate bills but also more data that can be analysed to help small firms add value to business.

At British Gas Business, we analyse the data we collect in order to provide helpful insights that allow our customers to take advantage of energy efficiencies as part of the smart meter code of practice.

For example, recently we wrote to all our smart customers who were using a lot of energy out-of-hours to offer them advice on how to reduce this consumption. Businesses – both large and, more often, small – benefit from personalised guidance and free insight thanks to big data.

But, you can also analyse your own data and play around with this to understand patterns of energy usage. Our free online Business Energy Insight tool helps you see how much electricity you’re using by year, month, week, day and even hour. The dataset that you will be working with will be small and manageable and provide actionable insights but it is because of big data that you will be able to do this.

While we are at the forefront of this revolution and see it as an opportunity to offer market leading service in energy, other companies in other industries will be offering similar opportunities also.

Big data provides big opportunities for small businesses – and these will only continue to grow over the coming years. Using analytics to understand specific business issues will help your business to develop strategies that are backed by data and insight – and ultimately add value.

Rolf Brink, CEO and Founder, Asperitas

Sustainability has been a theme within the IT industry since the introduction of the energy star label for hardware. Since 2007 sustainable digital infrastructure became a topic internationally shaped by a variety regional organisations running green IT programs to reduce the footprint of layers within digital infrastructure including datacentres.

Reducing the overall footprint of the datacentre industry is an impossible challenge as the rate growth of the adoption of digital services, and therefore the need for infrastructure, has outpaced the rate at which the energy footprint can be reduced. Industry orchestration will be needed if this challenge is to be met and new standards for datacentre sustainability are to be set. Years have passed and excess datacentre heat reuse is still not delivering its promise, often because technology, organisational and operational elements cannot be matched. Recently stakeholders have been aiming to change that by stimulating a new role for datacentres, which is the transformation we still need to see on a large scale: the transformation from energy consumers to flexible energy prosumers in smart cities. This is possible with technologies available today, the future is now!

FROM ENERGY CONSUMER TO ENERGY PRODUCER

The global move to cloud based infrastructures and the Internet of Things (IoT) generates high demand for datacentre capacity and high network loads. The energy demand of datacentres is rising so quickly that it is causing serious issues for energy grid operators, (renewable) energy suppliers and governments. Grid operators and energy suppliers can hardly keep up with the demand in the large datacentre hubs let alone ensure enough renewable energy generation is available where we see the demand. Not only does this raise questions of sustainability on all levels, the demand for flexibility and high loads requires a different approach to the business model of the datacentre. With the ultimate challenge of becoming an energy neutral industry.

The key to resolving this challenge is the adoption of liquid cooling techniques in all its forms. Asperitas is committed to approaching this challenge head-on by taking an active role in discarding the limitations of IT systems and datacentre infrastructures of today to find new ways to drastically improve datacentre efficiency. This can help to develop a future where the datacentre is transformed from energy consumer to energy producer. These excerpts from our whitepaper present our vision of the datacentre of the future. A datacentre that faces the challenges of today and is ready for the opportunities of tomorrow.

With all these advantages, liquid offers solutions that are just not attainable in any other way. This is why liquid is the future for datacentres. But what does this future look like? Which liquid technologies are available and what does this mean for the infrastructure of the datacentre?

In the next chapter, we outline the basic liquid technologies operating in datacentres today. After that we explore the most beneficial environment for these technologies: a hybrid temperature chain. Further on, a model of connected, distributed datacentre environments is introduced. With the dedication to liquid, Temperature Chaining and the distributed datacentre model, the datacentre of the future transforms from energy consumer to energy producer. This approach will drastically reduce the carbon impact of datacentres while stimulating the energy efficiency of unrelated energy consuming industries and consumers.

THE HYBRID INFRASTRUCTURE

The introduction of water into the datacentre whitespace is most beneficial within a purpose-built set-up. This focus for the design of the datacentre must be on absorbing all the thermal energy with water. This calls for a hybrid environment in which different liquid based technologies are co-existing to allow for the full range of datacentre and platform services, regardless of the type of datacentre.

Immersed Computing® provides easy deployable, scalable local edge solutions. These allow for rejecting heat to whatever reuse scenario is present, like thermal energy storage, domestic water, city heating etc. If no recipient of heat is available, only a dry cooler is sufficient. Reducing or even eliminating the need for overhead installations like coolers for edge environments, providing a different perspective on datacentres. Geographic locations become easier to qualify and high quantities of micro installations can be easily deployed with minimal requirements. These datacentres will be integrated in existing district buildings or multifunctional district centres. A convenient location for the datacentre is a place where heat energy will be utilised throughout the whole year. Datacentres can also be placed as a separate building in residential and industrial areas. This creates the potential for a connected datacentre web consisting of mainly two types of datacentre environments. Large facilities (Core Datacentres) which are positioned on the edge of urban areas or even farther away and micro facilities (Edge Nodes) which are focused on optimising the large network infrastructure and are all interconnected with each other and with all core datacentres.

The main purpose of the edge nodes is to reduce the overall network load and act as an outpost for IoT applications, content caching and high bandwidth cloud applications. The main function of the core datacentres is to ensure continuity and availability of data by acting as data hubs and high capacity environments.

This is an excerpt of an Asperitas whitepaper: the Datacentre of the Future, authored by Rolf Brink, CEO and founder of Asperitas. This whitepaper was presented at the DCA’s Datacentre Transformation event in Manchester.

http://asperitas.com/resource/immersed-computing-by-asperitas/

By Lewis Page, Editor of CW Journal

Almost everyone involved in the automotive, transport and mobility sectors believes that significant technology-driven change is coming. Both established vehicle manufacturers and new entrants like Google and Tesla have many projects underway, not only aimed at autonomous or “driverless” cars but also exploring the various concepts grouped under the banner of Mobility as a Service (MaaS).

One idea here is that the cost of using shared vehicles - particularly ones with autonomous driving capability and/or networking - could be considerably less than the cost of owning a vehicle personally, and perhaps the shared vehicle might be more useful too.

These concepts were explored by a group of speakers and attendees at the CWIC Starter: Transport and Mobility event in March this year. All the stakeholders were there: car makers, autonomy developers, telematics and traffic analysts, shared and hire-car services, the insurance industry and - naturally, given that this subject will hinge so much on future regulations - lawyers.

Many interesting concepts were brought up. Julian Turner of Westfield Sportscars, a company famous for offerings such as the GTM - very much drivers’ cars - was nonetheless very enthusiastic about the possibilities which autonomy and networking could unleash for vehicle manufacturers. Westfield is developing the concept of driverless “pods” useful for “last mile” tasks such as home deliveries, school runs and mobility within large complexes such as hospitals or shopping centres.

If autonomy can deliver on its promise of much greater safety, there might be no need any longer for heavy crash structures in pods and other vehicles. This could permit the production of easily recyclable cellulose vehicles, or even ones in which the bodywork is made of carbon and helps propel the car by being part of a supercapacitor. Supercapacitors offer much less range than today’s li-ion EVs, but have the advantage of charging up in less than a minute and being more durable.

Quite apart from autonomy there is the matter of future vehicle networking, a field which is potentially even more complex - especially if networking is used for safety, with attendant legal ramifications. The problems of autonomous driving could be appreciably simplified, for instance, if every vehicle were sharing its location, direction of travel, speed (and perhaps, its intentions) with other vehicles nearby. Ships at sea already do this using AIS, and such systems are also in use in aviation. Such a system could have prevented the well-known crash last year in which a Tesla car on “Autopilot” hit a truck crossing the road ahead: neither the car’s sensors nor the driver detected the light coloured trailer against the light sky.

Networking between vehicles and traffic-control infrastructure could also permit autonomous vehicles to tailgate as routine at high speeds, allowing the roads to carry much more traffic and hugely improving fuel efficiency. This is an idea imagined as long ago as the 1970s, of course (Judge Dredd’s megacity of the future had a prison placed on a traffic island, normally escape proof due to the continual flow of high speed computer controlled vehicles around it).

It could even be that the autonomous, networked vehicles of the future would mean more personal cars and journeys, not fewer. With a personal car that could be summoned from afar by app, you would not need a parking space either at work or at home to use it for commuting: more people might choose to own a car. In such a case there would be as many as six car journeys per commuter per day rather than just two.

The CWIC event did make one thing about the future of mobility clear. Almost all participants agreed that it will be difficult to build the future without some clarity on the legal and regulatory frameworks which will be in place. At the moment, however, no vehicle has yet been permitted onto a public road anywhere without a human driver.

The technology will be there: indeed, in many cases it is already there. It isn’t clear when it will be allowed to reach its full potential, however.

Dr Marcin Budka, Principal Academic in Data Science at Bournemouth University

The alarm on your smart phone went off 10 minutes earlier than usual this morning. Parts of the city are closed off in preparation for a popular end of summer event, so congestion is expected to be worse than usual. You’ll need to catch an earlier bus to make it to work on time.

The alarm time is tailored to your morning routine, which is monitored every day by your smart watch. It takes into account the weather forecast (rain expected at 7am), the day of the week (it’s Monday, and traffic is always worse on a Monday), as well as the fact that you went to bed late last night (this morning, you’re likely to be slower than usual). The phone buzzes again – it’s time to leave, if you want to catch that bus.

While walking to the bus stop, your phone suggests a small detour – for some reason, the town square you usually stroll through is very crowded this morning. You pass your favourite coffee shop on your way, and although they have a 20% discount this morning, your phone doesn’t alert you – after all, you’re in a hurry.

After your morning walk, you feel fresh and energised. You check in at the Wi-Fi and Bluetooth-enabled bus stop, which updates the driver of the next bus. He now knows that there are 12 passengers waiting to be picked up, which means he should increase his speed slightly if possible, to give everyone time to board. The bus company is also notified, and are already deploying an extra bus to cope with the high demand along your route. While you wait, you notice a parent with two young children, entertaining themselves with the touch-screen information system installed at the bus stop.

Once the bus arrives, boarding goes smoothly: almost all passengers were using tickets stored on their smart phones, so there was only one time-consuming cash payment. On the bus, you take out a tablet from your bag to catch up on some news and emails using the free on-board Wi-Fi service. You suddenly realise that you forgot to charge your phone, so you connect it to the USB charging point next to the seat. Although the traffic is really slow, you manage to get through most of your work emails, so the time on the bus is by no means wasted.

The moment the bus drops you off in front of your office, your boss informs you of an unplanned visit to a site, so you make a booking with a car-sharing scheme, such as Co-wheels. You secure a car for the journey, with a folding bike in the boot.

Your destination is in the middle of town, so when you arrive on the outskirts you park the shared car in a nearby parking bay (which is actually a member’s unused driveway) and take the bike for the rest of the journey to save time and avoid traffic. Your travel app gives you instructions via your Bluetooth headphones – it suggests how to adjust your speed on the bike, according to your fitness level. Because of your asthma, the app suggests a route that avoids a particularly polluted area.

After your meeting, you opt to get a cab back to the office, so that you can answer some emails on the way. With a tap on your smartphone, you order the cab, and in the two minutes it takes to arrive you fold up your bike so that you can return it to the boot of another shared vehicle near your office. You’re in a hurry, so no green reward points for walking today, I’m afraid – but at least you made it to the meeting on time, saving kilograms of CO2 on the way.

It may sound like fiction, but truth be told, most of the data required to make this day happen are already being collected in one form or another. Your smart phone is able to track your location, speed and even the type of activity that you’re performing at any given time – whether you’re driving, walking or riding a bike.

Meanwhile, fitness trackers and smart watches can monitor your heart rate and physical activity. Your search history and behaviour on social media sites can reveal your interests, tastes and even intentions: for instance, the data created when you look at holiday offers online not only hints at where you want to go, but also when and how much you’re willing to pay for it.

Personal devices aside, the rise of the Internet of Things with distributed networks of all sorts of sensors, which can measure anything from air pollution to traffic intensity, is yet another source of data. Not to mention the constant feed of information available on social media about any topic you care to mention.

With so much data available, it seems as though the picture of our environment is almost complete. But all of these datasets sit in separate systems that don’t interact, managed by different entities which don’t necessarily fancy sharing. So although the technology is already there, our data remains siloed with different organisations, and institutional obstacles stand in the way of attaining this level of service. Whether or not that’s a bad thing, is up to you to decide.

Suppliers of managed services are taking on more responsibilities as they become the driving force for IT industry growth, delegates at the Managed Services & Hosting Summit were told in London this week. More than two hundred managed services providers (MSPs) and aspiring providers of managed services were advised their need to become rounded providers of business productivity, by adopting the right mindset, getting more training on key issues and by working together to offer a more comprehensive range of services.

Mark Paine, Gartner Research Director told the conference that service providers had to take account of the changing attitudes of buyers by focusing on the business outcomes and the raised expectations among buyers now that IT has had to become a productivity asset for the business. And they need not try to do it all themselves - partners can co-operate to address the wider market requirements, he said. MSPs had to carefully choose go-to-market partners that can talk both technology and business; and they had to start with a vision; look at use cases; and consider processes, challenges and outcomes for their customers.

Customer acquisition costs (CAC) versus lifetime value (LTV) also had to be brought into the equation, considering margins, partnering agreements and questions as to who owns the invoice, plus the on-going up-selling/cross-selling opportunities. The rewards were there through continuing revenues and repeatable business, as successful MSPs have shown. The rewards for getting it right are substantial, Mark Paine says, including valuable access to customers' ecosystems, becoming a pivotal part of customers' success stories, and benefiting from lead sharing with technology partners.

The added benefit of recurring revenue from cloud services is substantial and this was echoed at the Summit by Michael Frisby, Managing Director of Cobweb Solutions, which sells cloud solutions from Microsoft, Mimecast, Acronis and others. Frisby said: “We became a cloud managed service provider from originally focusing on traditional solutions like Exchange, and 95% of our revenues are now recurring. But you have to make sure you get the marketing right to do it.” Continuing training and education of the MSP were essential, he added, pointing out that Cobweb itself had boosted its investment in this four-fold in the last year.

Other issues covered included the growing importance of compliance particularly with regard to GDPR: legal firm Fieldfisher's partner Renzo Marchini warned that the rules were changing and the impact on MSPs would be profound. Service providers are to be regarded as 'data controllers' under GDPR, with that comes the potential for huge fines in the event of regulation breaches.

The theme of partnering was confirmed later in a round-table discussion when the Global Technology Distribution Council’s European head, Peter van den Berg, outlined how distributors were investing heavily in services and education. Security was also a key issue, with the service provider very much in the firing line in the event of any incident. Datto revealed some of the latest research from its global survey: Business Development Director Chris Tate revealed that “87% of our partners have had to deal with a ransomware attack on behalf of their clients.” Earlier, SolarWinds MSP had shown how all-pervading the issue was becoming in discussions with customers, and how MSPs needed to increase their understanding, particularly in relation to the challenges faced by smaller businesses.