I’m delighted that this issue of DCS contains several articles on the topic of data centre design. With the IT world moving so rapidly, and the promise of more disruption/innovation to come, it’s fair to say that data centre design has never been so important. Legacy facilities are already struggling to keep up with the demand of modern IT, and they’ll soon be swamped by the extra demands placed on them by wholescale digitalisation, unless they are updated and/or amended to be able to continue to play a useful part in the rapidly changing data centre landscape.

When Cloud first burst on to the scene, the theory was that everyone would move everything into the public cloud. Then there was something of a backlash, and the private cloud gained attention before, sensibly, the hybrid cloud – a mixture of private and public – became the preferred solution. It’s a similar story when it comes to data centres, where we’ve moved from private, to public, and now to a mixture of both, but we also have the added twist where everything was being centralised, and no it’s time (again) to start distributing data content – edge computing has arrived!

So, how and where your data centre(s) is/are located matters massively, depending on the focus of your business. And, while you might want to own as many of your data centre assets as possible, when it comes to keeping and processing data at a local level, it’s unlikely to make financial sense to build/own/operate your own facility. Much more likely that regional colos will be doing a roaring trade as more and more content is pushed closer to the consumer and more and more locally produced data is processed and consumed locally, without ever making it back to a big, centralised data centre facility.

Flexibility would seem to be key, once you’ve understood what it is you’re trying to achieve with your data centre real estate. And don’t leave it much longer before you do sit down and gain this understanding. IoT, Big Data and, no doubt, the next big thing, are rather like ‘time and tide’ – they wait for no man, or woman.

According to new research from International Data Corporation (IDC), cloud service providers are at risk of underestimating the impact of new data protection legislation on their business models. The General Data Protection Regulation (GDPR) applies from May 25, 2018, and introduces substantial changes in the way that personal data must be protected. As organizations move to the cloud they must assure themselves of their service providers' understanding of the new obligations. Equally, CSPs must understand the extent to which they now have liability under GDPR, and how they can construct workable and valid contractual agreements.

"CSPs must act immediately to consider their position under the GDPR, and review all systems and processes before the 2018 deadline," said Duncan Brown, associate vice president of security at IDC. "GDPR means increased risk and higher costs for CSPs dealing with personal data."

Most CSPs will be affected by GDPR because the definition of processing is broad and includes simply storing personal data. Similarly, personal data is also broadly defined and includes any data that relates to an identified or identifiable living human. "Many CSPs are unaware of these broad scoping definitions and are thus unprepared for their GDPR obligations," said Brown.

The IDC report — The Impact of GDPR on Cloud Service Providers — is divided into two parts. The first examines general considerations for contracts and liability, while the second focuses on security, international data transfers, and other considerations.

The report notes that CSPs not based in the EU will be impacted by GDPR if they are offering goods or services to EU-based individuals, either directly or via a customer organization such as a retailer or SaaS provider. Importantly, it does not matter if a CSP knows whether its customers are using its service to process personal data. "Ignorance is no defense," said Brown.

IDC recommends that CSPs understand the cloud supply chain, and conduct due diligence on subprocessors. Audits of subprocessors will be important, and CSPs may also begin auditing their customers to ensure that cloud services are used in a compliant manner.

Total worldwide enterprise storage systems factory revenue was down 0.5% year over year and reached $9.2 billion in the first quarter of 2017 (1Q17), according to the International Data Corporation (IDC) Worldwide Quarterly Enterprise Storage Systems Tracker. Total capacity shipments were up 41.4% year over year to 50.1 exabytes during the quarter. Revenue growth increased within the group of original design manufacturers (ODMs) that sell directly to hyperscale datacenters. This portion of the market was up 78.2% year over year to $1.2 billion. Sales of server-based storage were down 13.7% during the quarter and accounted for $2.7 billion in revenue. External storage systems remained the largest market segment, but the $5.2 billion in sales represented a modest decline of 2.8% year over year.

"The enterprise storage market closed out the first quarter relatively flat, yet adhered to a familiar pattern," said Liz Conner, research manager, Storage Systems. "Spending on traditional external arrays continues to slowly shrink while spending on all-flash deployments once again posted strong growth and helped to drive the overall market. Meanwhile the very nature of the hyperscale business leads to heavy fluctuations within the market segment, displaying solid growth in 1Q17."

1Q17 Total Enterprise Storage Systems Market Results, by Company

Dell Inc held the number 1 position within the total worldwide enterprise storage systems market, accounting for 21.5% of spending. HPE held the next position with a 20.3% share of revenue during the quarter. HPE's share and year-over-year growth rate includes revenues from the H3C joint venture in China that began in May of 2016; as a result, the reported HPE/New H3C Group combines storage revenue for both companies globally. NetApp finished third with 8.0% market share. Hitachi and IBM finished in a statistical tie* for the fourth position, each capturing 5.0% of global spending. As a single group, storage systems sales by original design manufacturers (ODMs) selling directly to hyperscale datacenter customers accounted for 13.2% of global spending during the quarter.

| Top 5 Vendor Groups, Worldwide Total Enterprise Storage Systems Market, First Quarter of 2017 (Revenues are in US$ millions) | |||||

| Company | 1Q17 Revenue | 1Q17 Market Share | 1Q16 Revenue | 1Q16 Market Share | 1Q17/1Q16 Revenue Growth |

| 1. Dell Inca | $1,968.5 | 21.5% | $2,304.2 | 25.0% | -14.6% |

| 2. HPE/New H3C Group b | $1,865.0 | 20.3% | $2,289.9 | 24.8% | -18.6% |

| 3. NetApp | $731.6 | 8.0% | $645.5 | 7.0% | 13.3% |

| T4. Hitachi* | $460.1 | 5.0% | $508.0 | 5.5% | -9.4% |

| T4. IBM* | $455.3 | 5.0% | $448.5 | 4.9% | 1.5% |

| ODM Direct | $1,212.9 | 13.2% | $680.5 | 7.4% | 78.2% |

| Others | $2,478.6 | 27.0% | $2,344.4 | 25.4% | 5.7% |

| All Vendors | $9,172.0 | 100.0% | $9,221.0 | 100.0% | -0.5% |

| Source: IDC Worldwide Quarterly Enterprise Storage Systems Tracker, June 8, 2017 | |||||

Notes:

* – IDC declares a statistical tie in the worldwide enterprise storage systems market when there is less than one percent difference in the revenue share of two or more vendors.

a – Dell Inc represents the combined revenues for Dell and EMC.

b – Due to the existing joint venture between HPE and the New H3C Group, IDC will be reporting external market share on a global level for HPE as "HPE/New H3C Group" starting from 2Q 2016 and going forward.

1Q17 External Enterprise Storage Systems Results, by Company

Dell Inc was the largest external enterprise storage systems supplier during the quarter, accounting for 27.2% of worldwide revenues. NetApp finished in the number 2 position and HPE in the number 3 position with 14.0% and 9.7% of market share, respectively. Hitachi and IBM rounded out the top 5 in a statistical tie* for the number 4 position with revenue shares of 8.6% and 8.4%, respectively.

| Top 5 Vendors Groups, Worldwide External Enterprise Storage Systems Market, First Quarter of 2017 (Revenues are in Millions) | |||||

| Company | 1Q17 Revenue | 1Q17 Market Share | 1Q16 Revenue | 1Q16 Market Share | 1Q17/1Q16 Revenue Growth |

| 1. Dell Inca | $1,424.6 | 27.2% | $1,696.7 | 31.5% | -16.0% |

| 2. NetApp | $731.6 | 14.0% | $645.5 | 12.0% | 13.3% |

| 3. HPE/New H3C Group b | $511.0 | 9.7% | $535.7 | 9.9% | -4.6% |

| T4. Hitachi* | $449.1 | 8.6% | $497.1 | 9.2% | -9.6% |

| T4. IBM* | $440.6 | 8.4% | $429.0 | 8.0% | 2.7% |

| Others | $1,684.9 | 32.1% | $1,589.5 | 29.5% | 6.0% |

| All Vendors | $5,241.9 | 100.0% | $5,393.6 | 100.0% | -2.8% |

| Source: IDC Worldwide Quarterly Enterprise Storage Systems Tracker, June 8, 2017 | |||||

Software-defined networking has reached the wide area network (WAN). Software-defined WANs (SD-WANs) will play a key role in network evolution as organizations try to cope with the accelerating requirements resulting from digital transformation. In a new report, International Data Corporation (IDC) foresees rapidly growing demand for SD-WAN solutions in Europe, the Middle East, and Africa (EMEA).

SD-WANs build on hybrid network architectures that have been rising in popularity for years, but add centralized software-based intelligence that monitors, analyzes, and controls the network, allowing end users to mix and match different forms of connectivity (like MPLS, internet, Ethernet, wireless) and services into a hybrid network that provides an optimal combination of cost and performance for every single location and application.

The momentum behind SD-WAN is strong, with many startup and established vendors and service providers jumping on the bandwagon. For end-user organizations the rationale for adoption is compelling, and IDC believes this will only increase as solutions mature and awareness and recognition of its benefits grow. The consequence will be a high growth opportunity, with revenues in EMEA expected to grow at an average pace of 92% per year to reach $2.1 billion by 2021.

"SD-WAN has emerged as one of the hottest topics in the WAN industry," said Jan Hein Bakkers, senior research manager at IDC. "It will become one of the key building blocks of network evolution, driving the flexibility, manageability, scalability, and cost effectiveness that organizations require in their balancing act between rapidly growing requirements and much flatter budgets."

According to the International Data Corporation (IDC) Worldwide Quarterly Converged Systems Tracker, the worldwide converged systems market revenues increased 4.6% year over year to $2.67 billion during the first quarter of 2017 (1Q17). The market consumed 1.48 exabytes of new storage capacity during the quarter, which was up only 7.1% compared to the same period a year ago.

"Converged systems have become an important source of innovation and growth for the data center infrastructure market," said Eric Sheppard, research director, Enterprise Storage & Converged Systems. “These solutions represent a conduit for the key technologies driving much needed data center modernization and efficiencies such as flash, software-defined infrastructure and private cloud platforms."

Converged Systems Segments

IDC's converged systems market view offers four segments: integrated infrastructure, certified reference systems, integrated platforms, and hyperconverged systems. Integrated infrastructure and certified reference systems are pre-integrated, vendor-certified systems containing server hardware, disk storage systems, networking equipment, and basic element/systems management software. Integrated Platforms are integrated systems that are sold with additional pre-integrated packaged software and customized system engineering optimized to enable such functions as application development software, databases, testing, and integration tools. Hyperconverged systems collapse core storage and compute functionality into a single, highly virtualized solution. A key characteristic of hyperconverged systems that differentiate these solutions from other integrated systems is their scale-out architecture and their ability to provide all compute and storage functions through the same x86 server-based resources.

During the first quarter of 2017, the combined integrated infrastructure and certified reference systems market generated revenues of $1.37 billion, which represented a year-over-year decrease of 3.3% and 51.3% of the total market. Dell Inc. was the largest supplier of this combined market segment with $647.8 million in sales, or 47.2% share of the market segment.

| Top 3 Vendors, Worldwide Integrated Infrastructure and Certified Reference Systems, First Quarter of 2017 (Revenues are in Millions) | |||||

| Vendor | 1Q17 Revenue | 1Q17 Market Share | 1Q16 Revenue | 1Q16 Market Share | 1Q17 /1Q16 Revenue Growth |

| 1. Dell Inc.* | $647.8 | 47.2% | $667.3 | 47.0% | -2.9% |

| 2. Cisco/NetApp | $395.6 | 28.8% | $313.7 | 22.1% | 26.1% |

| 3. HPE | $206.2 | 15.0% | $291.1 | 20.5% | -29.2% |

| All Others | $122.8 | 9.0% | $146.9 | 10.4% | -16.4% |

| Total | $1,372.5 | 100% | 1,419.1 | 100% | -3.3% |

| Source: IDC Worldwide Quarterly Converged Systems Tracker, June 22, 2017 | |||||

* Note: Dell Inc. represents the combined revenues for Dell and EMC sales for all quarters shown.

Integrated Platform sales declined 13.3% year over year during the first quarter of 2017, generating $635.9 million worth of sales. This amounted to 23.8% of the total market revenue. Oracle was the top-ranked supplier of Integrated Platforms during the quarter, generating revenues of $348.7 million and capturing a 54.8% share of the market segment.

| Top 3 Vendors, Worldwide Integrated Platforms, First Quarter of 2017 (Revenues are in Millions) | |||||

| Vendor | 1Q17 Revenue | 1Q17 Market Share | 1Q16 Revenue | 1Q17 Market Share | 1Q17 /1Q16 Revenue Growth |

| 1. Oracle | $348.7 | 54.8% | $379.6 | 51.8% | -8.1% |

| 2. HPE | $61.7 | 9.7% | $65.8 | 9.0% | -6.2% |

| T3* IBM | $19.9 | 3.1% | $26.6 | 3.6% | -25.1% |

| T3* Hitachi | $19.6 | 3.1% | $28.0 | 3.8% | -30.1% |

| All Others | $185.9 | 29.2% | $233.1 | 31.3% | -20.2% |

| Total | $635.9 | 100% | $733.1 | 100% | -13.3% |

| Source: IDC Worldwide Quarterly Converged Systems Tracker, June 22, 2017 | |||||

* Note: IDC declares a statistical tie in the worldwide converged systems market when there is a difference of one percent or less in the vendor revenue shares among two or more vendors.

Hyperconverged sales grew 64.7% year over year during the first quarter of 2017, generating $665.1 million worth of sales. This amounted to 24.9% of the total market value.

Gartner, Inc. has highlighted the top technologies for information security and their implications for security organizations in 2017.

"In 2017, the threat level to enterprise IT continues to be at very high levels, with daily accounts in the media of large breaches and attacks. As attackers improve their capabilities, enterprises must also improve their ability to protect access and protect from attacks," said Neil MacDonald, vice president, distinguished analyst and Gartner Fellow Emeritus. "Security and risk leaders must evaluate and engage with the latest technologies to protect against advanced attacks, better enable digital business transformation and embrace new computing styles such as cloud, mobile and DevOps."

The top technologies for information security are:

Cloud Workload Protection Platforms

Modern data centers support workloads that run in physical machines, virtual machines (VMs), containers, private cloud infrastructure and almost always include some workloads running in one or more public cloud infrastructure as a service (IaaS) providers. Hybrid cloud workload protection platforms (CWPP) provide information security leaders with an integrated way to protect these workloads using a single management console and a single way to express security policy, regardless of where the workload runs.

Remote Browser

Almost all successful attacks originate from the public internet, and browser-based attacks are the leading source of attacks on users. Information security architects can't stop attacks, but can contain damage by isolating end-user internet browsing sessions from enterprise endpoints and networks. By isolating the browsing function, malware is kept off of the end-user's system and the enterprise has significantly reduced the surface area for attack by shifting the risk of attack to the server sessions, which can be reset to a known good state on every new browsing session, tab opened or URL accessed.

Deception

Deception technologies are defined by the use of deceits, decoys and/or tricks designed to thwart, or throw off, an attacker's cognitive processes, disrupt an attacker's automation tools, delay an attacker's activities or detect an attack. By using deception technology behind the enterprise firewall, enterprises can better detect attackers that have penetrated their defenses with a high level of confidence in the events detected. Deception technology implementations now span multiple layers within the stack, including endpoint, network, application and data.

Endpoint Detection and Response

Endpoint detection and response (EDR) solutions augment traditional endpoint preventative controls such as an antivirus by monitoring endpoints for indications of unusual behavior and activities indicative of malicious intent. Gartner predicts that by 2020, 80 percent of large enterprises, 25 percent of midsize organizations and 10 percent of small organizations will have invested in EDR capabilities.

Network Traffic Analysis

Network traffic analysis (NTA) solutions monitor network traffic, flows, connections and objects for behaviors indicative of malicious intent. Enterprises looking for a network-based approach to identify advanced attacks that have bypassed perimeter security should consider NTA as a way to help identify, manage and triage these events.

Managed Detection and Response

Managed detection and response (MDR) providers deliver services for buyers looking to improve their threat detection, incident response and continuous-monitoring capabilities, but don't have the expertise or resources to do it on their own. Demand from the small or midsize business (SMB) and small-enterprise space has been particularly strong, as MDR services hit a "sweet spot" with these organizations, due to their lack of investment in threat detection capabilities.

Microsegmentation

Once attackers have gained a foothold in enterprise systems, they typically can move unimpeded laterally ("east/west") to other systems. Microsegmentation is the process of implementing isolation and segmentation for security purposes within the virtual data center. Like bulkheads in a submarine, microsegmentation helps to limit the damage from a breach when it occurs. Microsegmentation has been used to describe mostly the east-west or lateral communication between servers in the same tier or zone, but it has evolved to be used now for most of communication in virtual data centers.

Software-Defined Perimeters

A software-defined perimeter (SDP) defines a logical set of disparate, network-connected participants within a secure computing enclave. The resources are typically hidden from public discovery, and access is restricted via a trust broker to the specified participants of the enclave, removing the assets from public visibility and reducing the surface area for attack. Gartner predicts that through the end of 2017, at least 10 percent of enterprise organizations will leverage software-defined perimeter (SDP) technology to isolate sensitive environments.

Cloud Access Security Brokers

Cloud access security brokers (CASBs) address gaps in security resulting from the significant increase in cloud service and mobile usage. CASBs provide information security professionals with a single point of control over multiple cloud service concurrently, for any user or device. The continued and growing significance of SaaS, combined with persistent concerns about security, privacy and compliance, continues to increase the urgency for control and visibility of cloud services.

OSS Security Scanning and Software Composition Analysis for DevSecOps

Information security architects must be able to automatically incorporate security controls without manual configuration throughout a DevSecOps cycle in a way that is as transparent as possible to DevOps teams and doesn't impede DevOps agility, but fulfills legal and regulatory compliance requirements as well as manages risk. Security controls must be capable of automation within DevOps toolchains in order to enable this objective. Software composition analysis (SCA) tools specifically analyze the source code, modules, frameworks and libraries that a developer is using to identify and inventory OSS components and to identify any known security vulnerabilities or licensing issues before the application is released into production.

Container Security

Containers use a shared operating system (OS) model. An attack on a vulnerability in the host OS could lead to a compromise of all containers. Containers are not inherently unsecure, but they are being deployed in an unsecure manner by developers, with little or no involvement from security teams and little guidance from security architects. Traditional network and host-based security solutions are blind to containers. Container security solutions protect the entire life cycle of containers from creation into production and most of the container security solutions provide preproduction scanning combined with runtime monitoring and protection.

IT Europa and Angel Business Communications’ new event – the Managed Services Solutions Summit 2017 - is designed to help executives of enterprises, organisations and public sector bodies navigate the managed services maze and get the most out of managed services-based solutions. It builds on the seven-year history of highly successful UK and European Managed Services and Hosting Summit series of channel events.

The rapid growth in managed services and provision of IT as a service is changing the way customers wish to purchase, consume and pay for their IT solutions but evaluating and selecting which services are best suited to particular business needs creates its own challenges. The Managed Services Solutions Summit 2017 is an executive-level conference which will set out to demystify both the new technologies and the new delivery mechanisms and business models.

The event will feature conference session presentations by major industry speakers and a range of breakout sessions exploring in further detail some of the major issues impacting the development of managed services. The summit will also provide extensive networking time for delegates to meet with potential business partners. The unique mix of high-level presentations plus the ability to meet, discuss and debate the related business issues with sponsors and peers across the industry, will make this a must-attend event for any senior decision maker involved in buying information and communication technologies and services.

The Managed Services Solutions Summit 2017 will be staged in London on 22 November 201. The event is free to attend for qualifying delegates (senior managers and executives of businesses and public sector organisations). Those wishing to attend the event or IT hardware or software vendors, hosting providers, data centre co-location providers, ISVs and any other organisations involved in services delivered to end-users can find further information at: www.msssummit.co.uk

IT Europa (www.iteuropa.com) is the leading provider of strategic business intelligence, news and analysis on the European IT marketplace and the primary channels that serve it. In addition to its news services the company markets a range of database reports and organises European conferences and events for the IT and Telecoms sectors.

Angel Business Communications (www.angelbc.com) is an industry leading B2B publisher and conference and exhibition organiser. ABC has developed skills in various market sectors - including Semiconductor Manufacturing, IT - Storage Networking, Data Centres and Solar manufacturing. With offices in both Watford and Coventry, it has the infrastructure to develop a leadership role in the markets it serves by providing a multi-faceted approach to the business of providing business with the information it needs.

The 11th July 2017 sees the next in the highly successful series of Data Centre Transformation Manchester (DT Manchester) events, organised by Angel Business Communications, in association with Datacentre Solutions, the University of Leeds and the Data Centre Alliance.

The combination of such data centre knowledge and expertise ensures that DT Manchester is the premier data centre education event in the calendar, bringing together the data centre research and design community, the data centre vendor industry and, most importantly, enterprise end users, for a collaborative information interchange.

In 2017 DT Manchester will be running six Workshop sessions, covering a range of topics: Power including Power Management, Cooling, IT Energy & Availability, Business Needs & Management, Capability and Workforce Development. The workshops will be managed by independent industry specialists to ensure vendor neutrality. The workshops will ensure that delegates not only earn valuable CPD accreditation points but also have an open forum to speak with their peers, academics and leading vendors and suppliers.

The Workshops are complemented by Keynote presentations from major industry figures: John Kennedy, Senior Researcher at Intel and Tor Bjorn Munder, Head of Research Strategy at Ericsson & CEO SICS North Swedish ICT AB. Workshops and Keynotes, together with networking opportunities provide the perfect blend of educational and informative content and information exchange which is truly valued by the hundreds of delegates who attend.

2017 promises to be an exciting year for the data centre industry, as much of the received data centre design and operations ‘wisdom’ is being challenged not just by the maturing Cloud/managed services model, but by a host of new and emerging threats and opportunities: the Internet of Things, digitalisation, mobility, DevOps and micro data centres to name but a few. In simple terms, more and more intelligence is being brought in at all levels of both the facilities and IT aspects of the data centre – and by combining the information this provides, there are some huge efficiency and optimisation gains to be made by data centre owners and operators.

The Workshop sessions will address the fast moving technological evolution of data centres as mission critical facilities, this includes:

The overall objective of DT Manchester is to reflect the ongoing need for the facilities and IT functions to join together to ensure the optimum data centre environment that can best serve the enterprise’s business requirements. After all, data centres do not exist in isolation, but are the engines that drive the critical applications on which the enterprise relies.

For more information and to register visit: www.dtmanchester.com/register

As the next generation of digital infrastructure unfolds, there is a widespread expectation that a new tier of datacentres, or at least of compute and storage capacity, will need to be built to meet the latest demands of edge computing.

By Rhonda Ascierto Research Director, Datacenter Technologies & Eco-Efficient IT, 451 Research and Daniel Bizo Senior Analyst, Datacenter Technologies, 451 Research.

The Internet of Things (IoT), distributed cloud and other edge use cases will require computing, routing, caching and storage, localized analytics, and some automation and policy management close to where there are users and 'things.' Some suppliers, ranging from Schneider Electric and Vertiv to Dell, Ericsson Huawei and Nokia, expect that microdatacenters will play a leading role in meeting distributed edge demand.

Others such as Google are less convinced and are focused on building networks and capacity one step back from the edge – to the 'near edge.' Some say that edge datacentres will be relatively niche, citing the main use case as CDNs because of their high data volumes. In most other cases, they say, data volumes and latency needs will not need a 'dedicated' edge datacentre. They say the build out of new large and hyperscale datacentres, supported by reliable networks, will bring the edge much closer to users and 'things.'

Both arguments will likely bear out over time: the edge will almost certainly require many different datacentre types – and probably many of them. They will range from hyperscale cloud and large colocation facilities that are sited near or near enough to the point of use to support many applications, to new micro-modular datacentres at the edge, to smaller clusters of capacity that are not large or critical enough to even be described as datacentres.

The connectivity paths and datacentres required for different edge use cases will vary, although patterns of similar architectures are likely. Here at 451 Research we are bullish on the edge opportunity for micro-modular datacentres, including for integrated IT datacentre products via supplier partnerships (and with remote management services in the mix). At the same time, IoT gateways/systems are becoming increasingly sophisticated with more compute, storage and analytics.

At the near edge, colocation providers are dominating – their focus is on developing properties into connectivity-rich multi-MW datacentres with a 15-plus-year investment timeline. This may change for top-tier metro markets in the next 5-10 years (or sooner) if cloud giants follow through on a strategy of building additional capacity or shift existing capacity to their own datacentres (along with new investments in dark fibre). It is unclear how far the cloud giants will reach into the 'true' edge where telcos will become either key players or key partners.

Where is the edge?

The location of the edge is largely defined at the highest level by the application and workload function of the compute. It can include the physical or virtual location of the following:

Distributed datacentres at the edge

The volume of traffic in some distributed edge datacentres will be very high, and many data sets and applications may be involved. Failures or congestion in networks may cause serious problems in machines, devices and user experience – driving requirements for flexible, agile networking approaches, such as software-defined networking. Two-tier, leaf-and-spine architectures inside the datacentre will be ideal for optimized throughput and redundancy because of the vastly increased traffic between different services.

To understand the different types of datacentres required at the edge, it is useful to differentiate between edge and near edge.

Edge datacentre functions

The true edge is sometimes defined as where near-real-time response and action is needed, measured in sub-2 milliseconds (low or ultra-low latency). Ideally, there would be no – or very few – network paths or 'hops' and very little or no use of shared communications infrastructure between the user/data collection point and the true edge point of processing/aggregation. (When 451 Research has asked enterprise customers what they mean by edge, answers have varied: Some say at the device; others at the first point of aggregation; and others at the point where CIO control, in the form of performance or security, etc., and ownership begins.)

In some cases, telecom gateways such as 4G and 5G base stations and towers will require dedicated datacentre capacity to be collocated very close by, most likely in the form of micro-modular datacentres. These will meet the needs of east-west traffic at the edge where fibre is not an economic or otherwise practical option, such as, for example, between end-user devices and services near each other.

Some edge applications, including process manufacturing and the teleoperation of vehicles, generate data that requires rapid response and action. They need platforms that transform the data streams into formats that can be processed by applications that analyse and act on the data in real time. Wireless networks to support this with management software, protocols and processing/storage are known as ultra-reliable low-latency communications. In these environments, compute and storage capacity is required very close to the point of data generation – these are true edge computing functions.

This true edge processing capability is also referred to as 'fog computing,' which involves performing the required analysis and taking the resultant action as closely as possible to the data – or things of IoT. In other words, below the level of the centralised cloud.

To address this need, manufacturers such as Intel, HPE, Nokia, Dell EMC, Lenovo and Huawei, among others, sell local gateways that are essentially access routers with special interfaces (MODBUS, CANBUS, etc.) with varying degrees of compute and storage capability and that have the capability for VMs or containers to run applications. While this is a good and possibly the only viable solution for many environments, the scale of some potential IoT deployments will require hundreds or thousands of gateways, representing a considerable capital expense, in addition to the ongoing management and security of the gateways. In these cases, localized datacentre capacity will be needed that is one step back – the near edge.

Near-edge datacentre functions

The near edge can be broadly defined as the 'zone' where sub-millisecond latency, or low-single-digit latency, cannot (usually) be guaranteed across available networks, but performance and security has been architected to securely process, analyse, store and forward larger amounts of data – and possibly to connect to other applications and data sources – without going back to a centralized cloud service. Near-edge datacentres will likely be a mix of microdatacenters and much larger facilities, including enterprise, colocation and cloud datacentres that are sited deliberately or coincidentally near the user of the data.

Equinix, for example, positions its colocation and interconnection facilities as offering cost and management benefits over fully distributed edge environments (one or more aggregation points or devices at the interconnect versus hundreds or thousands of smaller devices to manage) and performance benefits over fully centralized cloud models. One of the notable moves by the company in this area is its Data Hub, which combines its interconnection and colocation service with tiered cloud-integrated storage to provide a potential aggregation layer for large amounts of IoT and other edge computing data. The service offers the opportunity for near-local storage, analysis and action of data, including data integration, as well as potential summarization of a large amount of data prior to backhaul to a centralized cloud.

Networking and datacentre topologies at the edge

Generally speaking, datacentre and network topologies to support low and medium latency and high traffic will vary. The deployment of 5G networks (likely to begin in 2019/2020 in pioneering countries such as South Korea and Japan) will reshape connectivity architectures for edge datacentres. In principle, 5G enables very good connectivity coverage from multiple gateways (cell towers) – if one gateway fails, the signal is picked up by another. With its 10Gbps design goal, one potential application of 5G is to replace the need for local high-bandwidth fibre to the edge, especially in some remote locations.

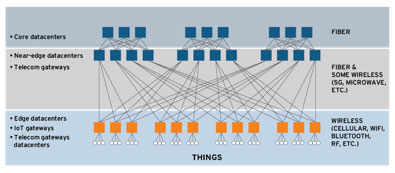

Ideally, 5G networking routes will be optimized, hopping intelligently between different 5G networks and cell towers. To optimize and utilize bandwidth more effectively, network management is increasingly being virtualized and separated from switches and devices. If visualized, these edge network and edge datacentre architectures may look something like the schema below, with true edge microdatacenters, and IoT and telecom gateways (at the very bottom) performing aggregation, control and analytics.

Potential Networking and Datacenter Topologies at the Edge

Source: 451 Research, Datacenters and Critical Infrastructure, 2017

Datacentres at the edge

Even within similar IoT use cases, there will be many different network architectures and datacentre types. It is likely that several IoT deployments will end up storing, integrating and moving data across a combination of public cloud and other commercial facilities, with both distributed micro and very large centralized datacentres playing a role. Some microdatacenters at the edge and near edge will incorporate software and network resiliency/capacity to enable failover to nearby similar facilities. This may mean that they will have relatively low physical resiliency/redundancy capabilities.

Key data and data needed by other applications and people will in some cases be made available at the near edge – including in large colocation and other metro datacentres. Cloud heavyweights are rapidly building hyperscale facilities with direct fibre links to leased colocation sites. These direct connects reduce latency and increase security and reliability, bringing hyperscale cloud capacity closer to the edge – effectively functioning as near-edge datacentre capacity.

Once consumed or integrated, data will then typically be moved or streamed into large or hyperscale, remote datacentres to be aggregated, analysed (including through integration with other data and applications) and archived. These large facilities represent the 'core layer.'

The figure below shows a broad schema of different types of datacentres and data paths for IoT and other types of edge computing, spanning the true and near edge and core layers.

Datacentres for IoT and Edge Computing

Source: 451 Research, Datacenters and Critical Infrastructure, 2017

Summary: Edge datacentre technologies

Although edge computing itself is not a specific technology, it will incorporate and attract a host of datacentre technologies and techniques that will make edge processing and storage a more effective deployment option. These include but are not limited to:

Suppliers are lining up behind the edge opportunity: there were 44 deals involving edge computing technologies in 2015 and 2016, according to 451 Research's M&A KnowledgeBase.

By Steve Hone, DCA CEO, Data Centre Trade Association

We always seem to get a great response from both members and academic partners for the summer edition of the DCA journal. It’s not surprising really when you consider the subject matter being discussed. It is a subject which will already be effecting most businesses as data demand continues to grow.

-nlhew5.jpg)

This month, the journal’s theme is “Education, Awareness, Skills and Training”. I consider all to be vitally important to the future health of our sector however I would like to focus this month on the one I feel is absolutely critical to our sectors future sustainability and that is ‘awareness’.

So, why do I put so much emphasis on ‘awareness’? Well, there are lots of reasons; one reason is that no one outside our little data centre fraternity seems truly aware that we exist or how instrumental the data centre sector is in supporting the global digital economy or the online services we all take for granted; (the recent issue with BA being a great example of this). It is for this very reason the Trade Association has a strong Public Affairs focus to help build bridges between DCA members, the sector and local Government.

The other reason is simply one of “supply and demand” - the demand is clear for all to see. It’s an undeniable fact that the demand for digital services is set for exponential growth over the next 10 years. That’s good and bad news. The good news is obvious, however the bad news is we seem unable to supply or find enough new talent to help swell our ranks to service this projected demand. The bottom line is it’s no good just concentrating on having great educational and training programmes if we can’t find ways to encourage fresh talent to enter the sector at ground level.

Funnily enough very few realise that household names such as Google, Amazon, eBay, Apple, WhatsApp, Snapchat and Uber all heavily rely on the data centre sector to deliver their services, and none of these organisations seem to have issues finding new talent, so what’s their secret?

Well, actually it’s not a secret at all - it’s because they’re not having to find new talent at all– they’re actually attracting it with relative ease. Like “bees to honey” the next generation of app-enabled (or in the case of my teenage son app-dependant), see these brands as sexy, cool and an integral part of their lives. These same teenagers just happen to also be the very consumers who are largely driving the data boom and remain blissfully unaware of what is going on behind the scenes to make these organisations shine. Like the proverbial iceberg they are only seeing what lies above the waterline.

There lies the challenge, it’s a problem we can only fix by pulling together and the trade association can only do this with your help. How do we make the next generation more aware of what the data centre sector is all about? Let’s face it not everyone can work for Google. Below the water line there are literally 1000’s of other career opportunities on offer and 10,000’s of jobs up for grabs in our sector. We just need to be doing a better job at helping the next generation see the bigger picture.

I would like to thank all those who contributed to this month’s DCA Journal and look forward to seeing you all at this year’s DCA Update Seminar and DTC Conference in Manchester on the 10th/11th in July, which is kindly organised for the DCA by Angel Communications (DCS).

By Sarah Parks PgDip MCIM, Director of Marketing and Communications, CNet Training - An Academia Group Company

The importance of education within the data centre sector is often overlooked and sometimes forgotten when it comes to planning budgets. This is in addition to the usual organisational distractions where personal development is an easy ‘pick’ to push aside and put off to the future. Yet, there is a strong connection between having a team of professionally educated and competent staff and organisational risk.

For many years now the outage figures attributed to human error have remained static at around 70%, costing companies thousands of pounds per minute. This lack of change proves that this significant issue is not being addressed. However, it’s not just lack of skills and experience that pose a threat to a data centre facility, even those who may look like the perfect employee on paper can pose a risk. It’s how competent and confident people are at applying their knowledge on an on-going basis within mission critical environments that is the key to risk mitigation.

It’s a common situation that many data centre professionals have naturally evolved into their roles as technology has evolved around them. Meaning that they most likely learnt on the job and naturally adopt the approach of ‘well, it’s worked for years so why change things’.

Data suggests that continuing to do the things the same way time and time again is risky, particularly within mission critical environments. Liken it to passing your driving test, when you first pass you are tuned into the rules of the road and consciously aware of what is going on around you and know the potential dangers. Yet, after a number of years of driving, who refreshes themselves by re-reading the highway code? Who goes out of their way to understand the latest technics that are being taught to the new drivers? Most people are guilty of not refreshing their knowledge in this area. This scenario in a data centre could have dramatic and potentially costly consequences.

Research shows that 29%* of data centre technicians pose a risk to the organisation, they have misunderstanding and misplaced confidence, and 50% have knowledge gaps in some areas where they demonstrate a lack of understanding within subjects.

Unconscious incompetent. This is the most dangerous, or risky. Usually it is new staff who are unaware of what they don’t know. However, as an organisation you know who they are and where they are, so it can be managed.

Conscious incompetence. Individuals are aware that they have a lack of understanding. This is less risky, as these people will not carry out a task as they realise they do not know how to do it.

Conscious competence. These are good people. They are capable and know that they are capable, an example being those that have recently passed their driving test.

Unconscious competent. Individuals that do stuff intuitively, they have been doing it for a long time and do it every day so don’t even think about it.

This hierarchy follows a cyclical process. It is a fact that when people perform the same role for a period of time, initially they are highly confident and highly competent (the conscious competent) but over time they sway into the intuitive (unconscious competent) zone. Usually these are people that have been in the role or function for a very long time, they have surpassed the conscious competence stage and because they are doing things intuitively they present a risk.

Today there are new tools that measure individuals’ competence and confidence levels and on a very detailed scale. This allows gaps to be identified and intervention tools put in place to rectify the problems, plug the gaps and significantly reduce the risk.

Effective Intervention Tools

OK, so some may think this is the new phrase for education, and maybe it is in a distant way, however the most important concept to grasp is that education can come in many different guises and do not necessarily have to affect the bottom line. Here are some examples:

Internal Tools

External Tools

However, investment in education needs to be put into perspective. Millions of pounds are spent on data centre equipment and on some that may never be used – yet the people who work in a data centre every day are not considered in the same way. It’s important that organisations understand that investment in professional development is hugely positive and beneficial to the organisation and does actually provide an ROI. A competent and confident team reduces business risk, increases productivity (employee contribution), helps with staff retention and loyalty as staff know they are doing things right and therefore gain more satisfaction from their job, adds brand value (against the brand damage that occurs when there is an outage) and, with a reputation for ongoing staff development, it can actually attract new talent directly to your organisation, after all it’s a big pond out there and if your organisation can shine above the rest, you have greater chance of catching the good ones.

Just think about the cost of just one minute of data centre outage and how this could significantly reduce the risks within your mission critical facility.

Footnotes:

*Sources: Data Centre Technician Competent & Confidence Evaluation May 2016-March 2017. Cognisco

Author:

Sarah Parks – Director of Marketing, CNet Training

Sarah Parks is the director of marketing and communications at CNet Training. Sarah is a seasoned marketing professional with a real passion for marketing and 20 plus years' experience within the technology, FMCG, retail and other sectors.

A study by Geist

The technology sector is one of the most demanding but rewarding sectors to work in – from the latest innovations to the generous salaries on offer in this industry, there are plenty of opportunities to expand the IT skillset; which in turn, leads to promising promotions and pay rises. Yet with a plethora of different disciplines to choose from, how do you determine which role best suits your experience and interests?

With numerous academic institutions offering specialised degrees as well as businesses featuring internships, there are plenty of prospective career paths to take. From taking into account which route to follow (starting out as an intern then undertaking cross-departmental duties) to recognising the relevant qualifications for your chosen job, you’re not limited in your options – with plenty of support and resources available to help you find the right position that complements your background.

• Cloud computing

• Big data analytics

• Mobility

• Social Business

Additionally, by anticipating any trends, this gives you leverage – as you can build up your knowledge and therefore, place you ahead of your competition. With more and more people vying for coveted roles within the technology industry, this has meant many employers have had to make the hiring process much harder – from assigning complex assessments to conducting interviews over several stages.

As a result, prospective employees have had to widen their expertise – not only having an excellent academic and vocational record, but possessing unique qualities in order to differentiate them from other candidates. While anyone can learn from educational materials and historic events, going that extra mile to do your research and predict industry trends will place you in good stead – as not only can your specialised knowledge benefit the company when developing future products, but also shows your most important asset: that you see a future with the company, and how you intend to carve your career within the data centre industry.

You may be required to demonstrate both quantitative and qualitative qualities – so it’s important to have a broad spectrum of understanding data as well as being able to carry out extensive research. For example, a data centre technician’s job often encompasses a number of different responsibilities, providing vital support to a team. Certain tasks include troubleshooting any failures, ensuring equipment is configured correctly as well as maintaining best practice guidelines (which at times may require customer communications). However, due to the non-stop nature of the data centre, it’s not unheard of to do shift work – so ensure your schedule is flexible, as you may need to work nights. Additionally, emergencies can take place any time (such as data recovery) – so be prepared to be called out at unsociable hours!

Above all, you should possess excellent communication skills – as communicating any type of issue is imperative to ensure the smooth running of a data centre. Supporting various team members as well as being in direct contact with your supervisors contributes to an excellent support network, and given the various elements involved in a data centre environment, makes it crucial that you can step in and help out with a duty normally maintained by another member of the team.

Here are the most popular data centre certifications undertaken by those starting out:

• Certified Data Centre Management Professional (CDCMP). The training covers key matters such as data centre management as well as basic design issues) while the professional unit advises on management topics (facilities, procedures) and business strategies. Provided through global training firm, CNet Training, other certifications available include Certified Data Centre Design Professional (CDCDP) and Certified Data Centre Energy Professional (CDCEP) plus many more.

• Cisco Certified Network Professional (CCNP) Data Centre. Considered by many in the data centre industry to be the gold standard, acquiring a Cisco Certified Network Professional (CCNP) Data Centre credential is one of the most highly-coveted certifications going. As one of the most respected credentials across the board, this is aimed specifically at technology architects and solutions – developed for candidates with three to five years’ experience with Cisco technologies.

• VMware Certified Professional 5 – Data Centre Virtualization (VCP5-DCV). Highly recognized for both its traditional data centre networking and cloud management aspects, candidates studying for this certification must fully understand Domain Name System (DNS), as well as have the know-how to install, manage and scale VMware vSphere environments (it’s recommended to have at least six months’ experience with VMware infrastructure technologies prior to taking the VCP5-DCV assessment).

While there are numerous other certifications to take – such as the BICSI Data Centre Design Consultant (DCDC) – the three mentioned are the best-known, renowned for their world-class reputation. However, this is only a starting point; and is completely dependent on your experience and which specialist area you choose to work in.

Below is a selection of job roles in the data centre industry that you can progress into:

• Data Centre Test Engineer. Tasked with setting up environments in order to test PC and network solutions within the data centre, while also exposing any issues and identifying major contributing factors.

• Data Centre Environmental and Safety Technician. Offering support and monitoring the various environmental, health and safety activities within the data centres, it’s your responsibility to investigate any safety issues while conducting environmental training.

• Infrastructure Architects. Responsible for the design of the data centre, infrastructure architects typically take care of any supporting services such as cooling and power – while project managers ensure any major installations are well maintained.

• Data Centre Manager. Overseeing the general running of the facilities, it’s imperative that data centre managers have a wide range of knowledge of all things data centre-related – from understanding about network and operating systems to knowing the correct protocols and processes.

• Electrical Engineer, Data Centre R&D. Working in a team that helps design and build the software, hardware and networking technologies, your role as a hardware engineer sees you develop small scale projects right through to high volume manufacturing.

• Data Centre Maintenance Planner/Scheduler. As a data centre maintenance planner/scheduler, this involves communicating both internally as well as maintaining regular contact with clients. Planning requirements for customers and managing entire project lifecycles, you ensure efficient execution of the various planning and scheduling processes – additionally, providing equipment-related knowledge and technical expertise on improving preventive maintenance tasks.

• Data Centre Control Systems Staff Engineer. This role usually entails at least 10 years’ experience as well as a relevant degree, with experience in critical infrastructure such as industrial automation, SCADA systems and PLCs. Providing technical support to the engineering and operations teams, your responsibilities encompass resolving any critical electrical controls related matters along with handling any procurement and vendor management issues.

Whether you choose to be a DCIM Specialist due to your interests lying in infrastructure, or prefer to be an in-house dedicated programmer, there is a number of academic and vocational qualifications to help you achieve your desired career goal. Typically, staff are separated into two categories – one team consists of implementation managers (meeting new customer requirements) with the backing of engineers (who look after installation and cabling). The operational team are tasked with equipment configuration and maintaining it once installed, consisting of senior employees specialising in areas such as storage and backup, database management, as well as customer service representatives.

A day in the life of a data centre employee is never routine, and at times, can be stressful – yet the breadth of skills learnt can be acquired extremely quickly. This allows you to attain a senior level relatively swiftly whilst developing a good understanding of specialist areas; and as no two customers are ever the same, only serves as the perfect breeding ground for data centre employees looking to expand both professionally as well as personally. As a sector that continues to grow in popularity and size each day, it’s a sector that boasts one of the lowest unemployment rates – so if you’re looking for a secure and highly gratifying job, then working in the data centre industry is the ideal career for you.

By Dr. Rabih Bashroush, University of East London

Many people confuse these terms or understandably assume they mean the same thing, but while the data centre sector and the DCA moves to address its needs, it’s critical to understand that there are very clear differences. The terms of training, education and awareness are often mixed, and the terms used interchangeably. Although choosing the right training or education program doesn’t need to be a laborious process; there is a need to clearly understand the very fundamental differences between them and the benefits associated with each.

Training provides candidates with skills and knowledge that are associated with state-of-the-art technologies and best practices. Training usually focuses on the ‘how’, and is often acknowledged through certification. Professional certification proves that an individual has completed the learning process and achieved the stated objectives. It can provide post nominal letters to use after the delegates name. Certification is unique; it shows a commitment to life-long learning, as re-certification is often required every few years due to ever evolving technologies and best practices.

Benefits for the employer

Benefits for the employee

Education, on the other hand, provides candidates with insights and understanding of theoretical underpinnings behind most technologies. Education focuses on the ‘why’, and is often acknowledged through qualifications that are valid for life. They also differ from certifications in that they are tightly controlled by professional bodies and only accredited providers can award qualifications. They are mapped to the International Qualification Framework and therefore recognisable across the world.

Benefits for the employer

Benefits for the employee

Finally, awareness provides target audiences with information about a topic to help them recognise its importance (e.g. Energy Efficiency, health and safety, etc.). Awareness is usually about the ‘why’ and largely delivered through videos, newsletters, posters, seminars and other types of campaigns (e.g. printouts on shirts, mugs, etc.).

Together, training, education and awareness programmes can provide a very strong framework to up-skill and retain your work force, increase your organisation’s capabilities, and drive professionalism.

Dr Theresa Simpkin, Senior Lecturer, Leadership and Corporate Education, Lord Ashcroft International Business School, Anglia Ruskin University

It’s no secret that the Data Centre continues to suffer from a deeply entrenched skills and capability crisis. Traditional talent pipelines are more reminiscent of leaky funnels as a reduced graduate population filter off into other, better known, industries.

Lack of gender balance is contributing to a diminished pool of qualified recruits and other non-traditional sources of talent (people from low socio-economic backgrounds for example) remain largely untapped.

The capability landscape, despite an emerging groundswell of activity, will remain somewhat barren without looking to emerging opportunities to fill vacancies now and to develop a pool of potential talent for the future.

One of the most opportune mechanisms available for the domestic Data Centre is the role of apprenticeships. Used elsewhere in the world to develop vocationally focused individuals with robust academic qualifications, apprenticeships offer an alternative to waiting for the current crop of university graduates to pop out of universities and filter into various industries; all of which are clamouring for the best and brightest of a relatively small supply. There’s simply not enough of them to go ‘round.

At the recent Datacloud Europe event in Monte Carlo, Christian Belady of Microsoft and Infrastructure Masons recently suggested “Availability of competency is being outgrown. There is a shortage of nonlinear thinkers.”

On the other hand apprenticeships, particularly degree level apprenticeships, are a common sense alternative to the reliance on the traditional labour pool. Unlike the image of the 16 year old male mechanic or painter in overalls and steel capped boots, the new apprenticeship offers the opportunity to skill, upskill or reskill new and existing employees of almost any age in technical and academic based programmes.

It makes good economic sense too. As from April this year, employers with an annual payroll of £3 million or more will need to make a contribution to the apprenticeship levy aimed at funding a target of three million apprentices. This is an initiative that recognises that industry needs to develop practical skills and capabilities quickly. As a blend of on and off the job training, apprenticeships can create job ready individuals much more quickly than traditional forms of higher education.

Degree apprenticeships (those that offer a bona fide undergraduate or post graduate university degree) in particular will, over time, provide a framework for employers to develop internal capability with a capacity to better fit the needs of the industry. As much of the training is on the job the development of competencies happens in real time, paying dividends almost immediately. For the Data Centre sector this is imperative. Simply keeping up with organisational change and technical advances requires a more nimble and responsive approach. A well-designed apprenticeship curriculum and skill development programme can deliver this.

Smart organisations will see this not as a tax but as a means to leverage the levy for business and industry capability development. Even smarter organisations will recognise how such an initiative could replace traditional graduate programmes and create an almost bespoke learning and development agenda that delivers benefits to a range of stakeholders;

It would be a travesty if the levy is seen as a tax instead of a golden opportunity to reinvigorate the capability development agenda across the sector. Whilst it will take some effort to maximise benefits, the potential return on investment is profound.

Get wise. Find out how the levy works and think creatively about how it could deliver benefits to a more responsive learning and development initiative.

Get involved. Trailblazer initiatives give industry the power to determine what’s needed by way of curriculum and end capabilities. Having a say creates a more bespoke approach for greater levels of apprentice employability and lays the foundation for a return on investment for the sector as a whole. End point assessments associated with apprenticeships, too, offer industry a means of ensuring what’s taught and assessed is consistent with the needs of the sector.

Join the dots. The current dearth of talent making its way into the sector is indicative of a complex and long standing suite of factors. The apprenticeship agenda can chip away at the talent shortage, gender imbalance and demographic shifts if approached in a holistic and sensible sector-led manner.

Overall, while there are different apprenticeship initiatives in different countries, the new English model offers an alternative way of looking at how the sector can address long standing and potentially disastrous capability shortages.

Find out more at https://www.gov.uk/government/publications/apprenticeship-levy/apprenticeship-levy

There is an overarching fear that Artificial Intelligence (AI) and Machine Learning are going to take over people’s jobs, but there is a counter argument that their main purpose is to support humans as enabling technologies.

By David Trossell, CEO and CTO of Bridgeworks.

In their proponents’ viewpoint, they aren’t disabling anyone. However, organisations that don’t train up their staff now to learn new skills may find themselves left behind. This includes IT, which is of increasingly strategic importance to most organisations today. Both technologies are becoming a fundamental part of our lives, and with the advent of semi-autonomous and autonomous vehicles they will become more so – both in consumer and enterprise applications.

SD-WANs are very good at the branch office level, but as technology moves forward data volumes are going increase and the time to intelligence will need to shrink. Whilst SD-WANs are great for low bandwidth applications, with high bandwidth applications a different approach is needed to move ever larger amounts of data.

Humans make mistakes – that’s part of our nature, and by using AI and machine learning the risks associated with human intervention can be removed, which could include unexpected network downtime due to the poor manual configuring of a wide-area network (WAN). Thankfully, the concepts of AI and machine learning in IT networking are not science fiction. Rather than making us weaker, they can make us stronger and enable us to increase our performance. They are no Armageddon; they are an enabler that can permit organisations to do more with fewer resources.

The science fiction of autonomous networking, which is spoken about by David Hughes, Founder and CEO of Silver Peak Systems, in his sponsored article for Network World, is already here today in solutions such as PORTrockIT and WANrockIT. They can correctly mitigate the effects of latency without your organisation having to unnecessarily spend money on ever increasingly large bandwidths, WAN Optimisation, SD-WAN and WAN optimisation solutions. With AI and machine learning much can be achieved with what you’ve already got, and an ever larger pipe won’t defeat the laws of physics no matter how much you spend. The problems created by latency will still remain.

Hughes says that many enterprises are using SD-WAN solutions to connect employees consistently and securely to cloud and datacentre applications, but by themselves they do not provide any form of optimisation to enhance the flow of data. You have to add WAN optimisation, which many of the SD-WAN providers do. However, with security concerns requiring encrypted data and rich media being an increasing part of the data mix, they provide little or no performance improvement. He’s nevertheless right to explain that automation is playing a role in SD-WANs to eliminate many of the repetitive and mundane manual steps, which are required to configure and connect remote offices.

He believes it has limitations though: “Automation has its limitations…[it] is not sufficient to translate high-level business goals or intent into specific actions across the network, and automation is not good at dealing with the many unanticipated situations across production WAN deployments.” In his view these are areas where machine learning and artificial intelligence can play a role. With machine learning, WANs can be directed to adapt to changing environments without human intervention.

AI and machine learning techniques permit us to better manage and to cope with the ever-growing data volumes too. Clint Boulton, Senior Writer at CIO magazine, talks about freight forwarding company JAS Global in his 12th May 2017 article, ‘How logistics firm leverages SD-WAN for competitive advantage’, and refers to it taking a gamble on an unknown technology.

The firm is using an SD-WAN to run cloud applications, but hopes to use it as the backbone of a predictive analytics strategy to grow its business. The claim is that JAS Global managed to cut millions of dollars from its bandwidth costs. That’s good.

Boulton also explains: “SD-WANs allow companies to set up and manage networking functionality, including VPNs, WAN optimisation, VoIP and firewalls, using software to program traffic routing typically conducted by routers and switches. Just as virtualisation software disrupted the server market, SD-WANs are shaking up the networking equipment market.”

He will, as many before him have found out once you start down the big data path, find that the volumes of data start to increase exponentially. The need to gather data from further afield at an increasing rate SD-WANs limitations start to bite. There will also be a a need to invest in larger bandwidth capabilities and data acceleration techniques. What’s certain is that data acceleration makes big data and predictive analytics increasingly viable. Machine learning can be used to help us humans to understand what story the data is telling us. Latency on the other hand can lead to inaccurate data analysis.

To me this just sounds like hype – particularly as WAN optimisation won’t necessarily increases WAN performance like it should do. On the other hand, data acceleration solutions can create performance increases. Your datacentres and disaster recovery sites don’t need to be situated within the same circles of disruption. Boosted by machine learning they can be placed thousands of miles apart, and as the transmitted data is encrypted it is very secure. The analysis of the network’s performance happens in real-time too, eliminating the risks of being reactive as opposed to being proactive.

Managing network performance, protecting your data, mitigating latency and reducing packet loss needn’t be the gamble that Boulton writes about. Mark Baker, CIO of JAS Global, felt he had to embrace SD-WANs because his company was already supporting global applications and email with MPLS networks and VPNs. The costs of running an enterprise resource planning (ERP) system over them worried him though. The ERP software required a sub-150 millisecond of latency. “Setting up and provisioning an MPLS system also takes several months”, says Boulton. Baker was therefore drawn to SD-WANs from Aryaka.

This is fine, but organisations should also look beyond SD-WAN to a data acceleration solution as it can do more for less. Many of Baker’s goals would probably have been achieved more quickly and more simply with one of them to address the latency challenge of having a global company “go from Atlanta to L.A. to London and Paris”. He adds: “But when you start talking about going across the pond or [to the northern and [southern] hemispheres there is a huge latency challenge to overcome when you’re lacking a traditional MPLS network”. With AI and machine learning, such a challenge is minimised - and that’s simply because machines can support humans effectively and sometimes outperform them. With machine learning behind data acceleration, you’ll always be a step ahead too.

Cloud computing is levelling the playing field for large and small businesses.

Netmetix’s Managing Director, Paul Blore outlines why.

Phil Simon’s 2010 book, The New Small, foretold the story of how an emerging breed of small businesses were poised to take on the big boys by harnessing the power of disruptive technology. Its central proposition has proved highly prophetic. Seven years later and we’ve all seen the PowerPoint; the world’s biggest cab company owns no taxis, the largest phone companies have no telecoms infrastructure and the biggest movie house on the planet doesn’t own a single cinema. You get the picture. Ambitious start-ups have, with the help of now-familiar technology, unseated the giants and established a new normal. David has slain Goliath, with the cloud rather than the catapult providing the metaphorical knock-out blow.

But you don’t have to aspire to be the next Netflix to exploit the value of technology. It’s there for all of us – and it’s changing the game. Traditionally, evaluating the technology infrastructures of large and small businesses was generally akin to comparing apple with pear. Now, thanks to cloud computing, every SME can compare with Apple. Independent research has found that almost half of SMEs believe technology ‘levels the playing field’ between small businesses and large corporations. Furthermore, the study suggests that the agility that comes with being smaller often gives SMEs the edge, enabling them to take advantage of digital innovation more quickly. The dynamic of modern business is changing. Small is becoming the new big – and cloud computing is helping to put it there.

However, despite the undoubted benefits, some SMEs are yet to move to the cloud. Many persist with legacy systems, or rely on server upgrades to solve their needs. Too often, technology is viewed as a tactical consideration rather than a strategic enabler. It’s a missed opportunity that’s holding companies back. Cloud computing is the transformative technology of our time. Fundamentally, it gives even the smallest businesses access to enterprise-grade IT infrastructure – the very same infrastructure, in fact, that the world’s biggest conglomerates are themselves deploying. It’s this transition that levels the playing field, giving SMEs a platform to future proof their businesses and flexibly align for growth.

The benefits of cloud computing are many but the most resonant boil down to advantages in four key components of the drive for digital transformation; flexibility, resilience, security and the development of the digital workplace. Primarily, the cloud gives small businesses the ability to scale their IT platforms based on the needs of their business today, rather than having to invest in infrastructure based on estimates of where it might be tomorrow. Instead of forking out significant up-front capital investment for functionality they may not need, the cloud allows companies to pay for services as and when they need them. IT becomes a utility – you simply turn the tap on when you need a little more, and turn it off when you don’t. This gives SMEs flexible access to transformative technologies such as Internet of Things, machine learning and artificial intelligence, as well as powerful tools and software that may have previously been out of reach. The ‘utility’ approach breeds an operational agility that helps small companies evolve infrastructure in line with business needs.

Another major consideration in the digital transformation journey is the need to maintain business continuity. Resilience is imperative. Typically, smaller businesses have relied on RAID storage, using multiple drives to protect their data in the event of hardware failure. The best cloud systems are more robust; data is stored not on drives but in industrial-strength data centres. In the rare event that an entire centre fails, businesses continue to function via remote data centres. This provides a level of resilience that’s unparalleled in most large organisations, let alone SMEs.

Perhaps the first question that’s asked about cloud computing is around security: how safe is my data? It’s a perceived barrier that often prevents businesses from considering it. But security is not a barrier to the cloud – it’s a reason to move there. Without doubt, a cloud deployment is more secure than any on-premise system – both physically and digitally.

Physically, most on-premise systems are ‘secured’ behind a locked door or in an alarm-protected room. Data centre environments are typically protected by CCTV, perimeter fencing and biometric access controls. Similarly, digital security for on-premise systems is often just a firewall. The major cloud providers invest hundreds of millions in data security each year – and their users reap the benefits simply by using their platforms. This means that small businesses can enjoy the same resilience as global giants like BP. Moreover, with a cloud deployment, organisations always benefits from the latest operating system, security updates and patches – further minimising risks. In an era where ransomware and data protection breaches present significant threats, companies need to do all they can to reduce vulnerability. The cloud, underpinned by good governance and good practice from a trusted IT partner, helps take care of it.

Across all industries, there’s much focus on the need to create a digital workplace that meets the needs of the modern workforce – providing mobility and connectivity and supporting collaboration. Cloud computing not only enables this, it can also unlock efficiency gains and help SMEs align for growth. For example, start-ups can facilitate remote working or open regional offices without the need for expensive infrastructure; with the cloud, everyone works off the same system and has access to the same tools and data. The benefits can be practical too. For instance, in companies that have migrated to the cloud, the removal of unnecessary hardware can free up office space and reduce the costs of outsourcing IT.

There’s little doubt that a move to the cloud can transform SMEs. It’s no surprise that many are making the journey. But it’s important to exercise caution: not all clouds are equal. Some ‘cloud providers’ offer little more than storage space on locally hosted servers. This provides no resilience and few of the scalable benefits associated with fully-managed services. It’s therefore essential you ask the right questions. Where will your data be hosted? Is it a credible data centre? What’s the physical and digital security of that facility? Where will data be replicated to if there’s an incident at the primary site? Can you actually operate from that back-up site, or will you need to restore your systems elsewhere to get up and running again? These are just the base considerations. The most effective partners will understand your business requirements and work with you to develop the best strategy.

In the era of digital transformation, having a flexible, robust and secure IT system is a clear business advantage. If you haven’t got the right infrastructure, whether that’s on-premise or cloud, you won’t be able to run your business effectively in the modern world. In truth, that world is marching relentlessly down a one-way street towards the cloud. Those that wait will get left behind.