The worldwide public cloud services market is projected to grow 18 percent in 2017 to total $246.8 billion, up from $209.2 billion in 2016, according to Gartner, Inc. The highest growth will come from cloud system infrastructure services (infrastructure as a service [IaaS]), which is projected to grow 36.8 percent in 2017 to reach $34.6 billion. Cloud application services (software as a service [SaaS]) is expected to grow 20.1 percent to reach $46.3 billion (see Table 1.)

"The overall global public cloud market is entering a period of stabilization, with its growth rate peaking at 18 percent in 2017 and then tapering off over the next few years," said Sid Nag, research director at Gartner. "While some organizations are still figuring out where cloud actually fits in their overall IT strategy, an effort to cost optimize and bring forth the path to transformation holds strong promise and results for IT outsourcing (ITO) buyers. Gartner predicts that through 2020, cloud adoption strategies will influence more than 50 percent of IT outsourcing deals."

Advertisement: Eltek

"Organizations are pursuing strategies because of the multidimensional value of cloud services, including values such as agility, scalability, cost benefits, innovation and business growth," said Mr. Nag. "While all external-sourcing decisions will not result in a virtually automatic move to the cloud, buyers are looking to the 'cloud first' in their decisions, in support of time-to-value impact via speed of implementation."

Table 1. Worldwide Public Cloud Services Forecast (Millions of Dollars)

| 2016 | 2017 | 2018 | 2019 | 2020 | |

| Cloud Business Process Services (BPaaS) | 40,812 | 43,772 | 47,556 | 51,652 | 56,176 |

| Cloud Application Infrastructure Services (PaaS) | 7,169 | 8,851 | 10,616 | 12,580 | 14,798 |

| Cloud Application Services (SaaS) | 38,567 | 46,331 | 55,143 | 64,870 | 75,734 |

| Cloud Management and Security Services | 7,150 | 8,768 | 10,427 | 12,159 | 14,004 |

| Cloud System Infrastructure Services (IaaS) | 25,290 | 34,603 | 45,559 | 57,897 | 71,552 |

| Cloud Advertising | 90,257 | 104,516 | 118,520 | 133,566 | 151,091 |

| Total Market | 209,244 | 246,841 | 287,820 | 332,723 | 383,355 |

Source: Gartner (February 2017)

The SaaS market is expected to see a slightly slower growth over the next few years with increasing maturity of SaaS offerings, namely human capital management (HCM) and customer relationship management (CRM) and the acceleration in the buying of financial applications. Nevertheless, SaaS will remain the second largest segment in the global cloud services market.

"As enterprise application buyers are moving toward a cloud-first mentality, we estimate that more than 50 percent of new 2017 large-enterprise North American application adoptions will be composed of SaaS or other forms of cloud-based solutions," said Mr. Nag. "Midmarket and small enterprises are even further along the adoption curve. By 2019, more than 30 percent of the 100 largest vendors' new software investments will have shifted from cloud-first to cloud-only."

Gartner predicts more cloud growth in the infrastructure compute service space as adoption becomes increasingly mainstream. Additional demand from the migration of infrastructure to the cloud and increased demand from increasingly compute-intensive workloads (such as artificial intelligence [AI], analytics and Internet of Things [IoT]) — both in the enterprise and startup spaces — are driving this growth. Furthermore, the growth of platform as a service (PaaS) is also driving the growth in adoption of IaaS.

From a regional perspective, China's IaaS cloud market forecast has been increased to account for anticipated higher buyer demand over the forecast period. In particular, the larger pure-play IaaS providers in China, as well as other telecom-related cloud providers driving this market, are reporting significant growth. While China's cloud service market is nascent and several years behind the U.S. and European markets, it is expected to maintain high levels of growth as digital transformation becomes more mainstream over the next five years.

There was a record 155MW of take-up across the four major European data centre markets of Frankfurt, London, Amsterdam and Paris during 2016 according to global real estate advisor, CBRE.

Amsterdam (54WM) became the first market in history to see more than 50MW of take-up in a single year, whilst London (49MW) and Frankfurt (34MW) recorded more take-up than any individual market had done in any year before 2016; the previous high was London with 29MW in 2010.

The Paris Market saw 17.6MW of take-up in the year, which is over seven times that of a particularly poor performance in 2015 (2.5MW). This was, in percentage terms, the largest increase from any market on the previous year.

Andrew Jay, Executive Director, Data Centre Solutions, at CBRE commented: “The record level of take-up in 2016 was totally unprecedented. Q4 alone saw almost as much activity as any other full year. Over the course of 2016 all four markets saw more take-up than they each did in the previous two years combined. The numbers are quite astounding.

“Cloud continues to dominate the landscape, with 70% of deals coming from this sector. These hyperscale cloud deals that once would have been unusual became the norm. We predict that cloud take-up will continue to increase in size as more hyperscale providers turn to large-scale build-to-suit facilities as an effective speed to-market option.”

Internet of Things (IoT) infrastructure spending is making inroads into enterprise IT budgets across a diverse set of industry verticals. IDC has just released the results of a U.S. survey, IoT IT Infrastructure Survey, which finds that improved business offerings, IoT data management, and new networking elements are key to a successful IoT initiative within an enterprise.

The new survey examines current and future plans of IT end users for IoT infrastructure and provides analysis on IT organizations' knowledge of IoT, their plans to deploy an IoT strategy in the next 24 months, and which vendors are best suited to influence end user IoT initiatives.

"Given the strong uptake in IoT based technology solutions, enterprise IT buyers are looking for vendors who can add IoT capabilities to the current networking and edge IT infrastructure," said Sathya Atreyam, research manager, Mobile and IoT Infrastructure. "Further, success of IoT initiatives will also depend on how IT buyers can effectively leverage newer frameworks of low power connectivity mechanisms, network virtualization, data analytics at the edge, and cloud-based platforms."

Advertisement: Vertiv

Additional findings from the survey include the following:

"The survey revealed that IoT will have significant impact on end users' decisions and strategies related to IT infrastructure across all three major technology domains: networking, software, and storage," said Natalya Yezhkova, research director, Storage. "Increase in budgets, broader adoption of public cloud, and open source solutions are the most anticipated results of IoT initiatives."

A new update to the Worldwide Digital Transformation Spending Guide from IDC forecasts worldwide spending on digital transformation (DX) technologies to be more than $1.2 trillion in 2017, an increase of 17.8% over 2016. IDC expects DX spending to maintain this pace with a compound annual growth rate (CAGR) of 17.9% over the 2015-2020 forecast period and reaching $2.0 trillion in 2020.

"Changing competitive landscapes and consumerism are disrupting businesses and creating an imperative to invest in digital transformation, unleashing the power of information across the enterprise and thereby improving the customer experience, operational efficiencies, and optimizing the workforce," said Eileen Smith, program director in IDC's Customer Insights & Analysis Group. "In 2017, global organizations will spend $1.2 trillion on digital transformation with discrete and process manufacturers contributing almost 30% of this spending, while the fastest growth will come from retail, healthcare providers, insurance, and banking."

The technology categories that will see the greatest amount of DX spending in 2017 are connectivity services, IT services, and application development & deployment (AD&D). Combined, these categories will account for nearly half of all DX spending this year. However, investments in these categories will vary considerably from industry to industry. The discrete and process manufacturing industries, for example, will invest roughly 20% of their DX budgets in AD&D and another 12-13% in IT services while the transportation industry will devote nearly half of its spending to connectivity services.

Advertisement: 8Solutions

The fastest growing technology categories associated with digital transformation over the five-year forecast are cloud infrastructure (29.4% CAGR), business services (22.0% CAGR), and applications (21.8% CAGR). And, despite a CAGR that is slower than the overall market (17.3%), AD&D spending will grow fast enough to overtake IT services as the second largest DX technology category by 2020.

More than half of all DX investments in 2017 will go toward technologies that support operating model innovations. These investments will focus on making business operations more responsive and effective by leveraging digitally-connected products/services, assets, people, and trading partners. Investments in operating model DX technologies help businesses redefine how work gets done by integrating external market connections with internal digital processes and projects. The second largest investment area will be technologies supporting omni-experience innovations that transform how customers, partners, employees, and things communicate with each other and the products and services created to meet unique and individualized demand.

On a geographic basis, Asia/Pacific (excluding Japan) will see the largest investments in DX technologies in 2017 with 37% of the worldwide total. DX spending in this region will be led by the discrete and process manufacturing industries as well as professional services firms. The United States will be the second largest region with 30% of the worldwide total, led by professional services, discrete manufacturing, and the transportation industries. Latin America and the Middle East and Africa will experience the fastest growth in DX spending with five-year CAGRs of 23.4% and 22.6%, respectively.

Worldwide revenues for information technology (IT) products and services are forecast to reach nearly $2.4 trillion in 2017, an increase of 3.5% over 2016. In a newly published update to the Worldwide Semiannual IT Spending Guide: Industry and Company Size , IDC estimates that global IT spending will grow to nearly $2.65 trillion in 2020. This represents a compound annual growth rate (CAGR) of 3.3% for the 2015-2020 forecast period.

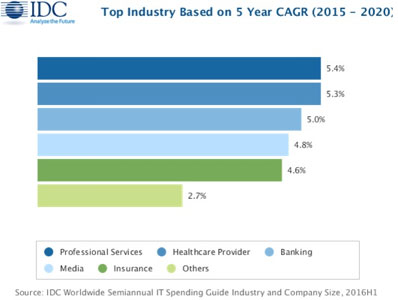

Industry spending on IT products and services will continue to be led by financial services (banking, insurance, and securities and investment services) and manufacturing (discrete and process). Together, these industries will generate around 30% of all IT revenues throughout the forecast period as they invest in technology to advance their digital transformation efforts. The telecommunications and professional services industries and the federal/central government are also forecast to be among the largest purchasers of IT products and services. The industries that will see the fastest spending growth over the forecast period will be professional services, healthcare, and banking, which will overtake discrete manufacturing in 2018 to become the second largest industry in terms of overall spending.

Advertisement: Hillstone

How can datacentre owners and operators rapidly understand availability, capacity and efficiency of their facility?

This video showcases the available datacentre services including datahall CFD calibration & server simulator load banks. Further details can be found on our website, or by getting in touch.

Meanwhile, more than 20% of all technology revenues will come from consumer purchases, but consumer spending will be nearly flat throughout the forecast (0.3% CAGR) as priorities shift from devices to software for things such as security, content management, and file sharing.

"Consumer spending on mobile devices and PCs continues to drag on the overall IT industry, but enterprise and public sector spending has shown signs of improvement. Strong pockets of growth have emerged, such as investments by financial services firms and utilities in data analytics software, or IT services spending by telcos and banks. Government spending has stabilized, and shipments of notebooks including Chromebooks posted strong growth in the education market. Double-digit increases in commercial tablet spending will drive a return to growth for the overall tablet market this year, despite ongoing declines in consumer sales. These industry-driven opportunities for IT vendors will continue to emerge, even as the global economy remains volatile," said Stephen Minton, vice president, Customer Insights and Analysis at IDC.

On a geographic basis, North America (the United States and Canada) will be the largest market for IT products and services, generating more than 40% of all revenues throughout the forecast. Elsewhere, Western Europe will account for slightly more than 20% of worldwide IT revenues followed by Asia/Pacific (excluding Japan) at slightly less than 20%. The fastest growing regions will be Latin America (5.3% CAGR) followed by Asia/Pacific (excluding Japan) and the United States (each with a 4.0% CAGR).

IT spending in the United States is forecast reach nearly $920 billion this year and top the $1 trillion mark in 2020. While IT services such as applications development and deployment and project-oriented services will be the largest category of spending in 2017 ($275 billion), software purchases will experience strong growth (7.9% CAGR) making it the largest category by 2020. Business services will also experience healthy growth over the forecast period (6.0% CAGR) while hardware purchases will be nearly flat (0.5% CAGR).

"While we are seeing a tempering in growth for U.S. healthcare provider IT spending as we enter the post-EHR era, the diverse and innovative professional services industry is expected to exhibit the fastest growth over the life of the forecast. Combine tech-savvy talent with an information-based business, and one can envision the multitude of possibilities for IT in this segment. IT investments will be used to achieve goals related to the differentiation of products and services, improving client satisfaction, and increasing revenue," said Jessica Goepfert, program director, Customer Insights and Analysis at IDC.

In terms of company size, more than 45% of all IT spending worldwide will come from very large businesses (more than 1,000 employees) while the small office category (businesses with 1-9 employees) will provide roughly one quarter of all IT spending throughout the forecast period. Spending growth will be evenly spread with the medium (100-499 employees), large (500-999 employees) and very large business categories each seeing a CAGR of 4.3%.

“Global SMB software spending will surpass that of hardware in 2018, upending traditional IT spending habits. More mature SMBs already recognize the value of linking software investments to business processes, and by the end of the forecast, we expect most midmarket firms will be on a path to embrace digital transformation," said Christopher Chute, vice president, Customer Insights and Analysis.

"Changing SMB attitudes regarding the importance of technology investment cut across company size and region categories. Small and midsize firms in developing geographies are just as interested in leveraging technology as those in developed regions. This sets the stage for spending growth everywhere, especially in midsize firms," added Ray mond Boggs, program vice president, SMB Research.

There are clear signs of maturity and still further evolution in the managed services market. Recent research continues to show high double digit growth in managed services for the next five years at least. The core reason for this is that it works, offering high effectiveness and performance, but to take full advantage, there is still a need for channels and MSPs themselves to keep up to speed on the latest developments, perhaps even more on the sales, management and development side than the technical aspects. The Managed Services and Hosting Summit 2017 (http://mshsummit.com/amsterdam) will focus on how the market has evolved and how the Managed Service Provider is helping organisations to succeed in this brave new digital world, but also on what MSPs need to know in 2017.

A 360Market research report in February 2017 - The Cloud-based Managed Services Market - predicts growth of 19.7% CAGR during the period 2016-2020. It says that the market drivers are the same as ever: the need to gain competitive edge, while the challenge to all players is the lack of integration expertise, both in customers’ organisations and the channels supplying them.

The research highlights a key trend in how demand for mobility services is spreading across industry verticals – something which MSPs themselves have noted, and have geared up to provide across diverse industries for improved data security, productivity, and privacy.

Cloud-based managed services consist of a wide range of services that help organizations to monitor, regulate, and improve the IT infrastructure of an organization and all these aspects are important. These services offer the advantages of cost containment and reduced inventory, which are attractive to customers, but the managed services industry still needs to arm itself with a clear sales message and the ability to convey all the implications of managed services through its sales message. These are required by organisations to develop an economical cost structure and minimise expenditure and to provide a solid foundation to build on in the future.

Until recently services were still unknown and some organisations and institutions were both reluctant to make any changes and rather sceptical about the security and privacy systems in the cloud services. However, now that larger companies are comfortable with the concept of the cloud services and are looking to move more and more of their IT requirements to this model, the advantages are being seen by smaller business. At the same time, new models of data management and control through the use of IoT are now appearing on customers’ lists of requirements.

So this model is not all-pervasive - many small and medium businesses lack the technical expertise that is required to make the conversion to the cloud, and some channels are still primarily following the traditional break-fix model. So the process of change is still accelerating. Reaching the SMB and IoT sectors is expected to provide lucrative opportunities to managed services providers. The segment of managed mobility services is anticipated to surge at a considerably high CAGR during the next couple of years. With the growing use of tablets, smart phones, and other different mobile devices, the growth opportunities in the managed mobility services market have also surged.

The Managed Services and Hosting Summit will examine the role of managed services in a digital world - The sessions will focus initially on the evolution of the digital marketplace, how Managed Services need to evolve in this digital era and how governments and the European Community are driving this marketplace. The event then breaks into two streams. The first, ‘Behind the Service’, will be a series of talks focused on the latest technologies and practices around the infrastructure and services vital to delivering the managed service offering. The second stream, ‘Delivering the Service’, will address some of the key issues around delivering a first class user experience.

The keynote talks following this will focus on how Managed Services can help organisations secure their financial future by the exploitation of the era of the ‘Industrial Internet’ as well as secure their own and customers’ digital information against a background of mounting international fraud and cyber-attacks. Speakers and panellists will share their knowledge and insight into the opportunities and threats that organisations now face and what they can expect as digitalisation spreads throughout all facets of business operations. For full details of the agenda see http://mshsummit.com/amsterdam/agenda.php

[optional extra para] The network management services segment is likely to hold the largest market share in the IoT Managed Services Market, says research, and this is another aspect to be considered by supply channels and MSPs. Network management deals with the entire network chain of an organisation. It is essential to enhance the network for optimum utilization of the available resources. Network management services assist in analysing the amount of data transferring over a network and automatically routes it, to avoid congestion that can result in crash of the network. Opting for managed services can help organisations with reduced downtime, better network connectivity, safety, security, automatic device discovery, scalability, and seamless operation of the business process, but the MSP, integrator and other channels need to understand the management issues and layers of responsibility.

A one-day end-user

conference on flash and SSD storage technologies and their benefits for IT

infrastructure design and application performance.

1st June 2017 – Munich / 15th June 2017 - London

Since the very early days of flash storage the industry has gathered pace at an increasingly rapid rate with over 1,000 product introductions and today there is one SSD drive sold for every three HDD equivalents. According to Trendfocus over 60 million flash drives shipped in the first half of 2016 alone compared to just over 100 million in the whole of 2015.

FLASH FORWARD brings together leading independent commentators from the UK, Germany and the USA, experienced European end-users and most of the key vendors to examine the current technologies and their uses and most importantly their impact on application time-to-market and business competitiveness.

Divided into four areas of focus the conference will carry out a review of the technologies and the applications to which they are bringing new life together with examining who is deploying flash and where are the current sweet spots in your data centre architecture. The conference will also examine what are the best practices that can be shared amongst users to gain the most advantage and avoid the pitfalls that some may have experienced and finally will discuss the future directions for these storage technologies.

In London the keynote speakers and moderators are confirmed as Chris Mellor of The Register, Ken Male from TechTarget, Randy Kerns of Evaluator Group and the widely read blogger Chris Evans while in Munich we have respected analyst Dr. Carlo Velten, Jens Leischner from the user community, Bertie Hoermannsdorfer of speicherguide.de and André M. Braun representing SNIA Europe delivering the main conference content.

Sponsors include Dell/EMC, Fujitsu, IBM, Pure Systems, Seagate, Tintri, Toshiba, and Virtual Instruments and both events are fully endorsed by SNIA Europe.

Pre-register today at www.flashforward.io

By Steve Hone CEO, DCA Trade Association

This month’s theme is Service Availability and Resilience. It’s only natural that every data centre wants to ensure they are as resilient as possible.

Data centre owners spend hundreds of thousands on technology trying to achieve this goal and then tens of thousands to keep it maintained both from a support and power perspective. The other holy-grail that everyone seems to chase is the lowest PUE figure possible.

These two objectives are actually diametrically opposed to one another as the more resilience you build into your data centre the more inefficient it becomes as you need more energy to maintain the infrastructure you have running. This often has the perverse effect of sending your PUE up, not down. It is worth noting at this point that the “E” in PUE stands for “Effectiveness” NOT “Efficiency” which is a mistake often made and this yet again puts a different prospective on it.

On the subject of effectiveness, it is also worth noting that just because you think the facility you run or use is resilient on paper (e.g. N+ this and N+ that) please don’t automatically assume these measures will actually be “effective” in ensuring your service remains ‘Available’. Making sure you have fully tested processes and that a business continuity plan is in place is just as important, and some would argue more important, than the hardware itself. After all, when things go wrong, and they will, it is normally human error which is ultimately to blame.

Advertisement: Riello UPS

One very real example of this happened recently to one of the largest cloud providers to the insurance world, having suffered a major service outage at its third party colocation data centre despite boasting a 99.99999% uptime record, all eyes quickly turned to the data centre provider as the guilty party. I am sure there will be lessons learnt by the colocation provider, however I can’t help wondering if the ultimate reasonability for this outage actually lies with the managed service cloud provider for not asking the right questions and not planning more effectively for the worst.

Having a regularly tested business continuity strategy with your suppliers is critical, in fact it is often referred to as an insurance policy to help protect your business and your clients. The irony of this story is that it involved one of the largest providers of cloud based services to some of the top global insurance companies – who all failed to recognise the risk or value in investing in an insurance policy of their own.

It would be unfair to single out this one incident, provider or sector as I’m sorry to say this story is not uncommon, there are clear lessons to be learnt here for all clients seeking cloud based services and for all cloud providers seeking a hosting provider to deliver and underpin their offering.

Problems do occur irrespective of how resilient the provider says their service is and when it happens the SLA won’t save you. It is vital you do your due diligence, ignorance is no defence in the eyes of the law and no defence in the eyes of very unforgiving clients.

I would like to thank all those that contributed articles this month. Next edition is an opportunity for your customers to speak for you in the form of client case studies so if you would like to submit then please forward them to:

Kieranh@datacentrealliance.org

Deadline for submissions is the 15th March.

Finally, The DCA has an update seminar the afternoon before DCW 2017 at the Excel on the 14th March, if you are a DCA member or someone interested in finding out more about the Trade Association and the value it can deliver, you are more than welcome to register and attend. Full details are available on the DCA website:

By Prof Ian F Bitterlin, CEng FIET, Consulting Engineer & Visiting Professor, University of Leeds

We’ve all seen the claims for 99.999% uptime in data centre SLAs (service level agreements) and adverts for UPS, promising the holy-grail of ‘five-nines’ Availability. But what does it mean? By itself, without any explanation or supporting statements, any claim for any percentage is meaningless along similar lines to Sam Goldwyn’s ‘a verbal contract isn’t worth the paper it’s printed on’.

To understand why I claim it to be ‘meaningless’ we simply have to consider how we calculate the percentage Availability in the first place. It could not be easier; you need just two numbers and the ability to add, divide and multiply by 100. The two numbers are usually number of hours, the MTBF (mean time between failures) and the MDT (mean down time). If you divide MTBF by (MTBF+MDT) and multiply the answer by 100 you have the percentage uptime. Simply put, it’s the ratio of the time between failures divided by the total elapsed time. So, 1 hour MDT every 25,000h (with one year being 8760h) results in 99.996% Availability.

Unfortunately, so does a 4 hour MDT every 100,000h, or 15 minutes every 6250h. Now we see the trick because, let’s face it, we are more interested in having a system that doesn’t fail for 11 years, but when it does takes 4 hours to fix than in a system that fails nearly every 9 months but only takes 15 minutes to fix. The load, the ICT system, can often take several hours to reboot and in the process much transient data can have been lost forever. So ‘Availability’ isn’t a good, or an informative, metric. We are, clearly, much more interested in MTBF and, we have to presume, the person doing the Availability calculation has used an MTBF and MDT figure to create the percentage – rather than just guess it?

Let’s look at the two simple examples I opened with. First the data centre uptime SLA. Now 99.999% will result in a break of once per year of approximately 5 minutes (a very poor data centre by any standards) or, more attractively, a break of 52.5 minutes once every 10 years. If you consider that the power system can fail for 10ms (10 thousandths of a second) and the load can be lost then it is vital that that the 52.5 minutes is in one single failure event and not an accumulation of 315,000 very short events!

However, a promised 99.999% presents problems for the M&E systems. If we consider that the ‘availability’ of a data centre depends upon mainly human error (which you can’t model) but on the product of power, cooling, communication and fire suppression and inadvertent action of the EPO (Emergency Power Off) button, then each system will have to provide close to 99.99999% uptime – a very ambitious and expensive target.

Secondly let’s consider the frequent claims for modular UPS systems with 99.999% uptime. Firstly, that rarely includes the power distribution between the UPS and load but we should ignore that for now. The point is that most UPS are limited to the MTBF of their output circuit breaker which is in the order of 250,000h and when you model the whole UPS all systems, largely regardless of technology or architecture, tend to 80,000-100,000h MTBF.

The only way to get 99.999% is to assume, without telling anybody, that the modular UPS is fixed within 15 minutes. This means fixed by the client himself, assuming he can, assuming he has the spares on site and not waiting 4-8 hours for the service engineer – all highly unlikely. If you use 8 hours for the ‘fix’ then the same MTBF produces an Availability of 99.99%, four not five ‘nines’. Herein lies a perception problem – 99.99% doesn’t look much worse than 99.999% but the difference could be disastrous for a data centre manager.

So, what ‘should’ we do? Well, that is also easy. A ‘proper’ SLA would read ‘an Availability of 99.999%, measured over a period of 10 years and defined as one failure event’. You will notice that you can now see the MTBF (87,600h) and you can (now) calculate the assumed MDT as 52.5 minutes. Mind you it isn’t as attractive for the marketing department as ’99.999%’ is much punchier!

By Wendy Torell, Senior Research Analyst, Schneider Electric’s Data Centre, Science Centre

The migration of critical applications from traditional data centres to the cloud has garnered much attention from analysts, industry observers and data centre stakeholders. However, as the great cloud migration transforms the data centre industry, a smaller, less noticed revolution has been taking place around the non-cloud applications that have been left behind. These “edge” applications have remained on-premise and because of the nature of the cloud, the criticality of these applications has increased significantly.

The centralised cloud was conceived for applications where timing wasn’t absolutely crucial. As critical applications shifted to the cloud, it became apparent that latency, bandwidth limitations, security and other regulatory requirements were placing limits on what could be placed in the cloud. It was deemed, on a case-by-case basis, that certain existing applications (e.g. factory floor processing), and indeed some new emerging applications (like self-driving cars, smart traffic lights and other “Internet of Things” high bandwidth apps), were more suited for remaining on the edge.

Considering the nature of these rapid changes, it is easy for some data centre planners to misinterpret the cloud trend and equate the decreased footprint and capacity of the on-premise data centre with a lower criticality. In fact, the opposite is true. Because of the need for a greater level of control, adherence to regulatory requirements, low latency and connectivity, these new edge data centres need to be designed with criticality and high availability in mind.

The issue is that many downsized on-premise data centres are not properly designed to assume their new role as critical data outposts. Most are organised as one or two servers housed within a wiring closet. As such, these sites, as currently configured, are prone to system downtime and physical security risks and therefore, require some rethinking.

Systems redundancy is also an issue. With most of the applications living in the cloud, when that access point is down, employees cannot be productive. The edge systems, when kept up and running during these downtime scenarios, help to bolster business continuity.

Advertisement: Schneider Electric

In order to enhance critical edge application availability, several best practices are recommended:

Enhanced security – When you enter some of these server rooms and closets, you typically see unsecured entry doors and open racks (no doors). To enhance security, equipment should be moved to a locked room or placed within a locked enclosure. Biometric access control should be considered. For harsh environments, equipment should be secured in an enclosure that protects against dust, water, humidity, and vandalism. Deploy video surveillance and 24 x 7 environmental monitoring.

Dedicated cooling – Traditional small rooms and closets often rely on the building’s comfort cooling system. This may no longer be enough to keep systems up and running. Reassess cooling to determine whether proper cooling and humidification requires a passive airflow, active airflow, or a dedicated cooling approach.

DCIM management – These rooms are often left alone with no dedicated staff or software to manage the assets and to ensure downtime is avoided. Take inventory of the existing management methods and systems. Consolidate to a centralised monitoring platform for all assets across these remote sites. Deploy remote monitoring when human resources are constrained.

Rack management – Cable management within racks in these remote locations is often an after-thought, causing cable clutter, obstructions to airflow within the racks, and increased human error during adds/moves/changes. Modern racks, equipped with easy cable management options can lower unanticipated downtime risks.

Redundancy – Power (UPS, distribution) systems are often 1N in traditional environments which decreases availability and eliminates the ability to keep systems up and running when maintenance is performed. Consider redundant power paths for concurrent maintainability in critical sites. Ensure critical circuits are on emergency generator. Consider adding a second network provider for critical sites. Organise network cables with network management cable devices (raceways, routing systems, and ties). Label and colour-code network lines to avoid human error.

A systematic approach to evaluating small remote data centres is necessary to ensure greatest return on edge investments. Schneider Electric White Paper 256, “Why Cloud Computing is Requiring us to Rethink Resiliency at the Edge” provides a simple method for organising a scorecard that allows IT managers to evaluate the resiliency of their edge environments.

Wendy Torell is a Senior Research Analyst at Schneider Electric’s Data Center Science Center. In this role, she researches best practices in data center design and operation, publishes white papers & articles, and develops TradeOff Tools to help clients optimize the availability, efficiency, and cost of their data center environments. She also consults with clients on availability science approaches and design practices to help them meet their data center performance objectives. She received her bachelor’s of Mechanical Engineering degree from Union College in Schenectady, NY and her MBA from University of Rhode Island. Wendy is an ASQ Certified Reliability Engineer.

By Michelle Reid, Board Director, Telehouse Europe

The hyper-connected economy - where people, places, organisations and objects are linked together as never before, presents data centre providers with both opportunities and challenges. The market for new facilities is on a sustained upward trajectory, and predicted to be worth $32.3 billion by 2020(1). The popularity of mobile video services, the emergence of new business models based around the Internet of Things, and the widespread use of cloud services is underpinning strong demand for data storage and transmission, leading to the construction of a string of new data centres around the world. These new facilities are tasked with meeting an ever-growing requirement for connectivity, resilience and scalability, across diverse platforms and partners.

The architecture of today’s data centres needs to be specifically designed to meet customer demands for connectivity, guaranteeing an environment that is resilient, secure, and provisioned with low-latency links to a wide range of business partners.

The different architectural models meeting this demand come in two forms. Large cash-rich organisations such as Facebook, Amazon, Microsoft and Apple have invested in gigantic new facilities costing hundreds of millions of dollars.

The market for colocation centres, meanwhile, where equipment, space and bandwidth are available for rent to retail customers, continues to thrive. These two strands of data centre provision combine to make a flourishing sector where investment levels remain robust.

Indeed, the data explosion shows no signs of abating, driven by several key factors. These include: insatiable demand for mobile video services delivered through social media platforms and Over the Top (OTT) players; the emergence of new business models based around the Internet of Things; the shift from locally managed hardware to cloud computing and the adoption of more complex data privacy legislation across the European Union.

While demand for data storage is likely to remain buoyant, rapidly-evolving communication technologies are likely to require new thinking around data centre architectures. Predicted trends include the emergence of so-called ‘edge’ data centres, with the potential to provide enterprises that have a highly distributed customer base faster access to applications and even more processing power.

Advertisement: 8Solutions

The growth of mobile content, cloud services, and the emergence of IoT-enabled networks in both consumer and industrial sectors, means the ‘edge’ of data centres needs to be closer to users in order to reduce latency and increase the cost efficiencies of data transfer.

First and foremost, it’s about visual networking - where video streaming, high-speed networks, and interactivity come together to allow consumers to communicate, share or receive information over the Internet, when, where and how the user wants it. Visual networking has become one of the most dominant trends of modern times, transforming in a very short space of time video content from long-form movies and broadcast television programming to a database of segments or ‘clips’ and social network annotations.

These days, individuals and businesses are actively pursuing new combinations of video and social networking across a wide range of entertainment and communications. This is resulting in the creation of unprecedented amounts of data that need to flow across networks reliably, predictably and with low-latency.

A Visual Networking Index, produced by networking equipment specialist Cisco, tracks and forecasts the impact of visual networking applications, revealing the data challenge that lies ahead. The latest version of the Visual Networking Index (See Figure 1), produced earlier this year, predicts annual global IP traffic will pass the zettabyte (ZB), equivalent to 1,000 exabytes (EB) or 1 billion terabytes (TB), threshold by the end of 2016, and will reach 2.3 ZB per year by 2020.

Overall, IP traffic will grow at a compound annual growth rate of 22 per cent from 2015 to 2020, while monthly IP traffic will reach 25 GB per capita by 2020, up from 10 GB per capita in 2015. The growth of global cloud traffic has sky rocketed over the course of the past five years and Cisco predicts that cloud traffic will be responsible for 92% of all data centre traffic by 2020(2).

Cloud service providers need to offer a service that is ‘always on’ and is hosted in a secure environment that enables low latency access to enterprise customers in the most efficient manner possible.

Data centre providers have been tasked with meeting this buoyant demand, particularly through the provision of highly connected data centre facilities which offer an eco-system of business partners and organisations.

The above market shifts are key drivers of the hyper-connected economy and are placing huge pressure on data centre infrastructure, with companies racing to add extra capacity, scalable bandwidth, scalable high density power options and true redundancy. This activity is illustrated by KDDI-owned Telehouse Europe, which in 2016, launched the first phase of Telehouse North Two, its new data centre in London, deliver clients 24,000 sq. m of gross area across an 11-storey building located in Telehouse’s existing Docklands campus. This expansion takes its overall footprint in London to more than 73,000 sq. m. North Two is fully integrated within the Docklands campus, enabling established customers and new clients to make the most of connectivity across the wider site and cross its interconnected network of 48 data centres worldwide.

Connectivity and High Density/Future proof power is key to data centre performance

In conclusion, it’s clear that the hyper-connected economy is underpinning a vibrant data centre sector, with plenty of scope for global growth. The rapid pace of technological advancement in areas such as mobile video services, cloud computing and the emergence of the Internet of Things means that the requirement for resilient data storage and transmission is being propelled forward at an unprecedented rate.

The challenge, for data centre providers, is to ensure that they have the capacity and the flexibility to meet customers’ needs, increasing the requirement for connectivity – both in terms of access to application platforms and partners as well as high density power options to meet ever growing, power demanding, virtualisation and cloud based services. It’s only through the continued investment in modern, efficient data centre infrastructure that the hyper-connected economy will reach its full potential.

Further reading

http://www.marketsandmarkets.com/PressReleases/data-center-construction.asp

http://www.datacenterdynamics.com/content-tracks/colo-cloud/research-cloud-to-be-responsible-for-92-percent-of-data-center-traffic-by-2020/97297.article

By Giordano Albertazzi, President EMEA, Vertiv

In 2016, global macro trends significantly impacted the industry, with new cloud innovations and social responsibility taking the spotlight. As cloud computing has integrated even further into IT operations, the focus will move to improving underlying critical infrastructure as businesses look to manage new data volumes. Vertiv believe that 2017 will be the year that IT professionals will invest in future-proofing their data centre facilities to ensure that they remain nimble and flexible in the years to come.

Here are the key infrastructure trends we see shaping the data centre ecosystem in 2017:

However, while energy efficiency remains a core concern, water consumption and refrigerant use are important considerations in select geographies. Data centre operators are tailoring thermal management based on location and resource availability, and there has been a global increase in the use of evaporative and adiabatic cooling technologies which deliver highly efficient, reliable and economical thermal management. Where water availability or costs are an issue, waterless cooling systems such as pumped-refrigerant economisers have gained traction.

Advertisement: Riello UPS

While data breaches continue to garner the majority of security-related headlines, security has become a data centre availability issue as well. As more devices get connected to enable simpler management and eventual automation, threat vectors also increase. Data centre professionals are adding security to their growing list of priorities and beginning to seek solutions that help them identify vulnerabilities and improve response to attacks. Management gateways that consolidate data from multiple devices to support DCIM are emerging as a potential solution. With some modifications, they can identify unsecured ports across the critical infrastructure and provide early warning of denial of service attacks.

Technology integration has been increasing in the data centre space for the last several years as operators seek modular, integrated solutions that can be deployed quickly, scaled easily and operated efficiently. Now, this same philosophy is being applied to data centre development. Speed-to-market is one of the key drivers of the companies developing the bulk of data centre capacity today, and they’ve found the traditional silos between the engineering and construction phases cumbersome and unproductive. As a result, they are embracing a turnkey approach to data centre design and deployment that leverages integrated, modular designs, off-site construction and disciplined project management.

For businesses looking to stay competitive and seamlessly transition to new, cloud based technologies, the strength of their IT infrastructure continues to be the cornerstone of success. With data volumes rapidly rising, IT infrastructures will continue to evolve throughout 2017 to offer faster, more secure and more efficient services needed to meet these new demands. Investment in the right infrastructure – not just a new infrastructure – is essential. It’s therefore vital that a partner with a strong history of data centre operations is involved throughout the system upgrade – from planning and design, to project management and ongoing maintenance and optimisation.

By Amanda McFarlane, Marketing & PR Executive, The DCA

Data Centres North 14 – 15 February, Manchester

The DCA team spent a productive two days at Data Centre North, Emirates Old Trafford. The show comprised of an exhibition, a conference programme and networking event. Our CEO, Steve Hone, chaired sessions on ‘Broadening the Data Centre Offering’ by Mike Kelly from Datacentred, ‘What Brexit means to the Data Centre Sector’ by Emma Fryer from Tech UK and ‘Data Sovereignty Update’ by Mark Bailey from Charles Russell Speechlys, the sessions were well attended and provoked some great questions from the audience. The atmosphere was buzzing after a superbly organised evening dinner with

the networking continuing into the small hours!

The DCA – Data Centre Sector Update Seminar 14 March, London, Excel

The day before Data Centre World (DCW) on 14 March, The DCA Trade Association will be hosting an update seminar. Registration opens at 12.00pm, the programme comprises of four 45 min sessions finishing at 4.30pm.

Sessions include an update on Standards, EU Projects, Public Affairs and a dedicated focus on Workforce Development to help address the growing skills gap in the sector. The update is followed by networking at The Fox pub. Please visit the DCA website for details and to register.

Data Centre World

15 – 16 March, London, Excel

Approximately 10,000 Data Centre professionals attended this event in 2016 and its predicted to be even bigger this year. Once again it is being held at the Excel in London’s Docklands. Data Centre World is co-located with Cloud Expo Europe which this year are in separate halls. These two shows combined make DCW one of the largest and best attended technology events on the world.

One of the highlights this year is sure to be the DCW Live Green Data Centre which was first introduced last year and it promises to be even bigger this year.

DCS Awards - 11 May 2017, London

The DCS Awards are designed to reward the product designers, manufacturers, suppliers and providers operating in data centre arena.

The Awards recognise the achievements of the vendors and their business partners and this year encompass a wider range of project, facilities and information technology award categories designed to address all of the main areas of the datacentre market in Europe.

The editorial staff at Angel Business Communications validate entries and announce the final short list to be forwarded for voting by the readership of the Digitalisation World stable of publications during March and April. The winners will be announced at a gala evening on 11 May at London’s Grange St Paul’s Hotel. Nomination is free of charge and all entries must feature a comprehensive set of supporting material in order to be considered for the voting short-list.

Advertisement: The Data Centre Alliance

EDIE Live 2017

23 – 24 May, NEC, Birmingham

EDIE 2017 is an annual two-day exhibition and conference attracting thousands of energy, sustainability and resource efficiency professionals. The DCA are excited to be sponsoring and attending this event for this first time this year. Our objective is to meet with our members end users, to help promote members and the data centre sector as a whole.

Data Centre World

24 – 25 May 2017, Hong Kong

The DCA are confirmed as event partners for DCW Hong Kong. Our Ambassadors (based in Hong Kong) are Andrew Green and Barry Lewington of PTS Consulting. Andrew is planning to speak on Energy Efficiency case study and to also talk about the value PTS has gained from its collaboration with the DCA.

Data Centre Transformation

11 July 2017, Manchester

The DCA will again be partnering with Data Centre Solutions and Leeds University to organise and host the 2017 Data Centre Transformation Conference on the 11th July 2017. In 2016 we introduced a completely new workshop format which was refreshingly different from the more traditional conference format. This was such a great success that the format will be repeated again this year. Workshops will have a theme that is currently of importance to the industry, each being designed and moderated to ensure they are vendor neutral.

The sessions attended last year proved to be very educational with a high level of delegate interaction. Everyone the DCA spoke with felt they had a voice and

could contribute to the overall discussion. The conference format also allows ample time between the workshop sessions providing a great opportunity for delegates to follow up on the points raised in the sessions and speak to the experts. This year there will be six workshops throughout the day.

DCA Golf Tournament

14 September, Oxfordshire

The DCA Golf Tournament is scheduled to take place on the 14th September 2017 at Heythrop Park, Oxfordshire. It is the first time we have had the tournament at this course so it should be fun. This is a popular event allowing our members and partners to enter a team to play at this picturesque 18 hole course. Look out for our mailers and on our website for information on how to register.

Credentialing equates to lower risk – and lower insurance costs

There are multiple areas of potential risk in a data center environment that can cause incidents that would result in an insurance claim. Risks for a data center include accidents that damage the facility, potential for workplace injuries, and business risks from downtime events that impact the data center’s or its customers’ business continuity. By R. Lee Kirby, President of Uptime Institute and Stephen F. Douglas, Risk Control Director for CNA – Technology.

When insurers and underwriters evaluate a data center organization for coverage, they want to be certain that the risk profile of the facility is as low as possible. Considerations such as fire resistance are a component of assessing data center risk, but focusing only on facility risks leaves out the most important part of the picture.

It would be like insuring an automobile based solely on the quality of the vehicle design and manufacture, but failing to ascertain if a licensed driver is operating it.

In today’s global economy, data centers are critical. Organizations depend on 24 x 7 x 365 IT infrastructure availability to ensure that services to customers/end-users are available whenever they are needed. To provide and maintain this availability is not only a matter of designing and building the right facility infrastructure—it’s about how that facility is managed and operated on a day-to-day basis to safeguard the business-critical infrastructure.

Owners and operators must do what they can to ensure that the risk of incidents and downtime has been minimized, and prove this low risk profile to their insurers. Industry-recognized credentials can help validate that operating risks are being managed effectively.

Relying solely on the physical characteristics of the data center such as the construction, type of fire protection system, and proximity to flood and earthquake-prone areas; although important, leaves out very important considerations in evaluating the effectiveness of a service provider’s risk management program. Typically, the redundant infrastructure of engineered data centers does present a low frequency of loss when compared to other types of operations. However, there is a significant increase in reliance on these data centers by end users as more companies outsource to the cloud or house their primary or backup networks offsite. This increasing dependency of end users on a centralized, outsourced infrastructure presents opportunities for technology service providers to set themselves apart from the competition and manage risks by formally addressing operational controls.

In framing the risks that service providers are exposed to—and that insurers will be concerned with—it is important to view the operation in terms of what part of the “data supply chain” the service provider occupies or is responsible for. Infrastructure providers, such as a co-location provider, have a specific but related set of exposures as compared to a software as a service (SaaS) provider at the other end of supply chain. The various entities in these increasingly complex supply chains must make decisions about the viability of accepting, avoiding, mitigating or transferring these risks. The risks to the data supply chain include not only first party direct losses, but third party liability losses as well. Even the first party losses will differ based on the services provided. The primary risks to the data supply chain can be categorized as:

Regulations – regulations create compliance risks at all levels of the data supply chain. Regulatory impact is greatly dependent on the types of services offered, industries served, and the complex shared responsibilities of infrastructure and service providers and their clients. Just a few examples of regulatory frameworks that may have impact even down to the infrastructure level include U.S. regulations such as HIPAA, GLBA, FISMA; international regulations such as the EU Data Protection Directive and industry standards such as the PCI DSS. In these complex regulatory environments, regulatory enforcement actions are common and the impact of fines and penalties is growing.

From 20 years of collecting incident data, Uptime Institute has determined that human error (i.e., bad operations) is responsible for approximately 70% of all data center incidents. Compared to this, the threat of “fire” (for example) as a root cause is dwarfed: data shows only 0.14% of data center losses are due to fire. In other words, bad operations practices are 500 times more likely to negatively impact a data center than fire. An outage at a mission critical facility can result in hundreds of thousands of dollars or more in losses for everything from equipment damage and worker injuries to lost business and penalties for failure to maintain contractual Service Level Agreements.

For both data center operators and insurers, there are some key questions to ask:

As discussed above, increased outsourcing, flexible cloud architecture, and resilient network infrastructures are creating increasing dependency on third party providers at all levels of the data supply chain. This increased dependency is creating greater liability risk for service providers.

Managing liability risks starts with contracts. A clear scope of work and allocation of risk between the contracting parties is essential. Clauses such as service level agreements, limitation of liability, force majeure, wavier of subrogation and indemnification wording reinforce the intended allocation of risk. Complex multiparty contract disputes are common particularly when significant losses are incurred. Claims of negligence are non-contractual, so even well executed contracts may not mitigate significant liability losses.

Data center operations credentials are another means of mitigating liability risks. In addition to reducing the probability of loss, clearly defined repeatable procedures and processes demonstrate adhering to a duty of care that is foundational to most standards of care. As with any human endeavor, residual risk will remain regardless of mitigation efforts. Insurance provides a means of risk transfer particularly effective on high severity risks.

Uptime Institute has provided data center expertise for more than 20 years to mission-critical and high-reliability data centers. It has identified a comprehensive set of evidence-based methods, processes, and procedures at both the management and operations level that have been proven to dramatically reduce data center risk, as outlined in the Tier Standard: Operational Sustainability.

Organizations that apply and maintain the Standard are taking the most effective actions available to protect their investment in infrastructure and systems and reduce the risk of costly incidents and downtime. The elements outlined in the Standard have been developed based on the industry’s most comprehensive database of information about real-world data center incidents, errors, and failures: Uptime Institutes’ Abnormal Incident Reporting System (AIRS). Many of the key Standards elements are based on analysis of 20 years of AIRS data collected on thousands of data center incidents, pinpointing causes and contributing factors.

To assess and validate whether a data center organization is meeting this operating Standard, Uptime Institute administers the industry’s leading operations certifications. These independent, third-party credentials signify that a data center is managed and operated in a manner that will reduce risk and support availability. There are two types of operations credentials: Tier Certification of Operational Sustainability (TCOS) and The Management & Operations (M&O) Stamp of Approval.

Both credentials are based on the same rigorous Standards for data center operations management, with detailed behaviors and factors that have been shown to impact availability and performance. The Standards encompass all aspects of data center planning, policies and procedures, staffing and organization, training, maintenance, operating conditions, and disaster preparedness.

The data center environment is never static; continuous review of performance metrics and vigilant attention to changing operating conditions is vital. The data center environment is so dynamic that if policies, procedures, and practices are not revisited on a regular basis, they can quickly become obsolete. Even the best procedures implemented by solid teams are subject to erosion. Staff may become complacent, or bad habits begin to creep in.

There is tremendous value for organizations that hold themselves to a consistent set of standards over time, evaluating, fine tuning, and retraining on a routine basis. This discipline creates resiliency, ensuring that maintenance and operations procedures are appropriate and effective, and that teams are prepared to respond to contingencies, prevent errors, and keep small issues from becoming large problems.

Insurance is priced competitively based on the insurers assessment of the exposure presented. Data center operations credentials provide the consistent benchmarking of an unbiased third party review that can be used by service providers at all levels of the data supply chain to demonstrate the quality of the organization’s risk management efforts. This demonstration of risk quality allows infrastructure and service providers to obtain more competitive terms and pricing across their insurance programs.

When data centers obtain the relevant Uptime Institute credential, it results in a level of expert scrutiny unmatched in the industry, giving insurance companies the risk management proof they need. Insurers can validate risk level to a consistent set of reliable Standards. As a result, facilities with good operations, as validated by TCOS or M&O Stamp of Approval, can benefit from reduced insurance costs.

Experience all the key technologies for the digital transformation in one place – at CeBIT in Hannover!

What was pure science fiction just a few years ago has become reality today and limitless business opportunities lie ahead.

CeBIT is the world’s foremost event on the wave of digitalization revolutionizing every aspect of business, government and society. Every year, the show features a lineup of around 3,000 exhibitors and attracts some 200,000 visitors to its home base in Hannover, Germany. The spotlight is on all the latest advances in fields such as artificial intelligence, autonomous systems, virtual and augmented reality, humanoid robots and drones.

Thanks to a rich array of application scenarios, CeBIT makes digitalization tangible in the truest sense of the word. “d!conomy – no limits”, the chosen lead theme for 2017, underscores the show’s emphasis on revealing the wealth of opportunities arising from the digital transformation. As a multifaceted exhibition/conference/networking event, CeBIT is a perennial must for everyone involved in the digital economy. The startup scene is also right at home at CeBIT and its dedicated SCALE 11 showcase, which sports more than 400 aspiring young enterprises.

The next CeBIT will be staged next month from 20 to 24 March 2017, with Japan as its official Partner Country and, on 19 March, Shinzo Abe, the Prime Minister of this year’s Partner Country, Japan, and German Chancellor Angela Merkel will officially open CeBIT 2017 in the presence of more than 2,000 VIP guests at the Welcome Night ceremony in Hall 9 of the Hannover Exhibition Center. The Partner Country, alone, is fielding around 120 companies to appear in every segment of the show.

Artificial intelligence, humanoid robots, virtual reality: new technologies are constantly pushing the boundaries of what is possible. What does this mean for society, the economy and concretely for your company? What new business models bring the most value? Dive into the fascinating digital future – at the world's biggest digitization showcase and experience all the big digital trends and highlights:

The integration of a trade show with a complementary conference program not only creates the ideal setting for generating new business, but also facilitates effective networking and a cross-industry knowledge transfer and dialogue between experts.

We have secured a limited number of complimentary exhibition tickets - Click here to get yours! (link: http://www.cebit.de/promo?qgpew

The CeBIT Global Conferences

#cgc17 will revolve around the slogan "Explore the Digital World!". The five day conference will delve deep into the realms of artificial intelligence, cyborgs & biohacking, robots, virtual worlds, the Darknet and cybercrime. The conferences in hall 8 bring together IT suppliers and users, Internet firms and investors, as well as creative and future-oriented thinkers.

This year’s conferences boast a truly stellar line-up of speakers. Among the big names are Google engineer and futurist Ray Kurzweil, the famed roboticist Hiroshi Ishiguro, and Stanford professor and social researcher Michal Kosinski. An expert in psychometrics, Kosinski has developed a mathematical method that analyzes Facebook likes and other publicly available data to determine people’s personality traits. The speaker list also includes someone who is arguably the world’s most famous whistleblower – Ed Snowden. Snowden will be joining the proceedings via live stream from Moscow.

Purchase your ticket to the CeBIT Global Conference http://www.cebit.de/en/conferences-events/cebit-global-conferences/cgc-tickets/

For more information visit www.cebit.de

However, there is something of a mystique surrounding these different data center components, as many people don’t realize just how they’re used and why. In this pod of the “Too Proud To Ask” series, we’re going to be demystifying this very important aspect of data center storage. You’ll learn:•What are buffers, caches, and queues, and why you should care about the differences?

•What’s the difference between a read cache and a write cache?

•What does “queue depth” mean?

•What’s a buffer, a ring buffer, and host memory buffer, and why does it matter?

•What happens when things go wrong?

These are just some of the topics we’ll be covering, and while it won’t be exhaustive look at buffers, caches and queues, you can be sure that you’ll get insight into this very important, and yet often overlooked, part of storage design.

Recorded Feb 14 2017 64 mins

Presented by: John Kim & Rob Davis, Mellanox, Mark Rogov, Dell EMC, Dave Minturn, Intel, Alex McDonald, NetApp

Advertisement: Cloud Expo Europe

Converged Infrastructure (CI), Hyperconverged Infrastructure (HCI) along with Cluster or Cloud In Box (CIB) are popular trend topics that have gained both industry and customer adoption. As part of data infrastructures, CI, CIB and HCI enable simplified deployment of resources (servers, storage, I/O networking, hardware, software) across different environments.

However, what do these approaches mean for a hyperconverged storage environment? What are the key concerns and considerations related specifically to storage? Most importantly, how do you know that you’re asking the right questions in order to get to the right answers?

Find out in this live SNIA-ESF webcast where expert Greg Schulz, founder and analyst of Server StorageIO, will move beyond the hype to discuss:

· What are the storage considerations for CI, CIB and HCI

· Fast applications and fast servers need fast server storage I/O

· Networking and server storage I/O considerations

· How to avoid aggravation-causing aggregation (bottlenecks)

· Aggregated vs. disaggregated vs. hybrid converged

· Planning, comparing, benchmarking and decision-making

· Data protection, management and east-west I/O traffic

· Application and server I/O north-south traffic

Live online Mar 15 10:00 am United States - Los Angeles or after on demand 75 mins

Presented by: Greg Schulz, founder and analyst of Server StorageIO, John Kim, SNIA-ESF Chair, Mellanox

The demand for digital data preservation has increased drastically in recent years. Maintaining a large amount of data for long periods of time (months, years, decades, or even forever) becomes even more important given government regulations such as HIPAA, Sarbanes-Oxley, OSHA, and many others that define specific preservation periods for critical records.

While the move from paper to digital information over the past decades has greatly improved information access, it complicates information preservation. This is due to many factors including digital format changes, media obsolescence, media failure, and loss of contextual metadata. The Self-contained Information Retention Format (SIRF) was created by SNIA to facilitate long-term data storage and preservation. SIRF can be used with disk, tape, and cloud based storage containers, and is extensible to any new storage technologies.

It provides an effective and efficient way to preserve and secure digital information for many decades, even with the ever-changing technology landscape.

Join this webcast to learn:

•Key challenges of long-term data retention

•How the SIRF format works and its key elements

•How SIRF supports different storage containers - disks, tapes, CDMI and the cloud

•Availability of Open SIRFSNIA experts that developed the SIRF standard will be on hand to answer your questions.

Recorded Feb 16 10:00 am United States - Los Angeles or after on demand 75 mins

Simona Rabinovici-Cohen, IBM, Phillip Viana, IBM, Sam Fineberg

SMB Direct makes use of RDMA networking, creates block transport system and provides reliable transport to zetabytes of unstructured data, worldwide. SMB3 forms the basis of hyper-converged and scale-out systems for virtualization and SQL Server. It is available for a variety of hardware devices, from printers, network-attached storage appliances, to Storage Area Networks (SANs). It is often the most prevalent protocol on a network, with high-performance data transfers as well as efficient end-user access over wide-area connections.

In this SNIA-ESF Webcast, Microsoft’s Ned Pyle, program manager of the SMB protocol, will discuss the current state of SMB, including:

•Brief background on SMB

•An overview of the SMB 3.x family, first released with Windows 8, Windows Server 2012, MacOS 10.10, Samba 4.1, and Linux CIFS 3.12

•What changed in SMB 3.1.1

•Understanding SMB security, scenarios, and workloads•The deprecation and removal of the legacy SMB1 protocol

•How SMB3 supports hyperconverged and scale-out storage

Live online Apr 5 10:00 am United States - Los Angeles or after on demand 75 mins

Ned Pyle, SMB Program Manager, Microsoft, John Kim, SNIA-ESF Chair, Mellanox, Alex McDonald, SNIA-ESF Vice Chair, NetApp

•Why latency is important in accessing solid state storage

•How to determine the appropriate use of networking in the context of a latency budget

•Do’s and don’ts for Load/Store access

Live online Apr 19 10:00 am United States - Los Angeles or after on demand 75 mins

Doug Voigt, Chair SNIA NVM Programming Model, HPE, J Metz, SNIA Board of Directors, Cisco

It had to come one day. After the initial and growing adoption of 10G Fibre Channel over Ethernet (FCoE) since the end of 2008, it was just a matter of time before the market introduction of the next speed level would materialize.

By Fausto Vaninetti, SNIA Europe Board Member.

The first switch capable of 40G FCoE appeared in the middle of 2013 but during 2016 a wider portfolio of 40G FCoE capable devices was brought to commercial fruition by some vendors. At first sight, 40G FCoE may seem just a speed bump as compared to its predecessor but a closer look shows a different story.

The original idea behind FCoE was network convergence and that is why FCoE technology started with 10G and not 1G bit rate. Having a single adapter, a single cable, a single switch for both LAN and SAN traffic seemed a good idea in terms of lowering cost and reducing administrative burden. When FCoE was first introduced, 8G Fibre Channel (FC) technology was already available but majority of deployments were still only using 4G FC. As a result, a 10G pipe offered a nice consolidation opportunity by bringing together native Ethernet traffic with Ethernet-encapsulated Fibre Channel traffic.

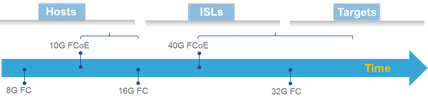

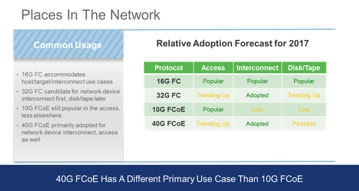

A few years after the availability of 10G FCoE capable adapters and switches, 16G FC became available. The convergence benefit provided by FCoE technology was still there, but the bandwidth advantage that FCoE could offer on top of 8G FC was now lost in favor of 16G FC. As a result, 10G FCoE saw a slowdown in adoption and remained confined to the place where it still makes a lot of sense: the access network. As a proof point of this, many blade chassis are nowadays sold with some type of embedded FCoE connectivity. This choice appears convenient since it reduces network adapter’s footprint on blade servers, minimizes cabling and shaves out overall cost. Rack mount servers, instead, are mostly sold with separate Ethernet and Fibre Channel adapters. As of now, market research indicates Fibre Channel traffic out of servers is using converged network adapters in approximately 30% of cases, leaving the rest to native FC host bus adapters.

Advertisement: Vertiv

The tangible savings when adopting FCoE are mostly coming from consolidation in the access, where server nodes connect to the network edge, but when you need to interconnect switches or connect to disk arrays, or simply when there is no interest in network convergence, 16G FC has won the majority of deployments. This is not to say 10G FCoE cannot be used for end-to-end multi-hop solutions, but not many organizations have embraced that deployment option.

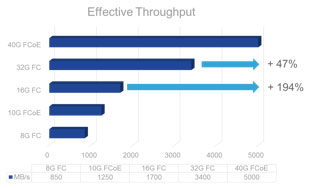

The introduction of 40G FCoE could change the scenario again. In fact, 40G FCoE is supported by both switches and modular platforms and could fit organizations of different sizes. It also offers three times the bandwidth of 16G FC for approximately twice the price, so that it captures attention in terms of cost/Gbps ratio. All in all, when cabling simplification is accounted for, the total cost of ownership of 40G FCoE becomes even more interesting.

This means the window of opportunity for 40G FCoE is not going to reach an end when 32G FC will grow in popularity and both protocols are expected to be seen in use within datacenters.

With the continuous increase in processing power within compute nodes, bandwidth needs on servers keeps growing. Between 2015 and 2017, cloud service providers are expected to double their virtual machine density per host in order to improve their profitability. At the same time, new applications within enterprises will leverage the newly available processing power to push network needs beyond 10G. When consolidation is a priority, 40G adapters are more than adequate for transporting modern Data Center traffic and can be a valid alternative to deploying separate 16/32G FC HBAs and 10/40G NICs. As a result, since their commercial introduction in 2015, 40G FCoE converged network adapters have experienced a slowly growing market penetration.

With the continuous increase in processing power within compute nodes, bandwidth needs on servers keeps growing. Between 2015 and 2017, cloud service providers are expected to double their virtual machine density per host in order to improve their profitability.

At the same time, new applications within enterprises will leverage the newly available processing power to push network needs beyond 10G. When consolidation is a priority, 40G adapters are more than adequate for transporting modern Data Center traffic and can be a valid alternative to deploying separate 16/32G FC HBAs and 10/40G NICs. As a result, since their commercial introduction in 2015, 40G FCoE converged network adapters have experienced a slowly growing market penetration.

However, the future is a bit uncertain. The combined effect of an increased price pressure, real aggregate bandwidth needs out of servers and newly introduced network speeds are possibly casting a shadow on 40G adoption. In fact, chances are that 25G will be the next speed on servers after 10G becomes insufficient. The consequence of this explains why the sweet spot for 40G FCoE seems to be as a technology to interconnect network devices more than anything else. If 10G FCoE became notorious for convergence in the access network, 40G FCoE could become popular to interconnect the edge of networks to the core, leveraging the high bandwidth it can deliver. This approach seems very reasonable for IT departments that already embraced FCoE in the access but could be viable also for organizations that are relying on a native FC access network.

As bit rate increases, the complexity of circuitry, modulated light transmitters and receivers will grow and consequently another important aspect will influence decisions: the cost of the transceiver itself as compared to the cost of a network port on a switch. A multimode transceiver at 16G FC can be approximately 20-40% of the cost of a 16G FC port on a switch. These pricing considerations will have an impact on the speed of adoption for 32G FC transceivers on both servers and switches, but for sure they will not impede their use on inter switch links even during the initial ramp up phase for the higher bit rate. Moreover, 32G FC is expected to enjoy a growing success on the storage side as well. In many cases, network devices and disk arrays are not refreshed at the same time and there is a need for the newly purchased equipment to be able to work with the existing installed base. From this point of view, the main feature that will drive 32G FC adoption in place of the 40G FCoE approach will be its backward compatibility with 8G and 16G FC ports.

It is never easy to make predictions, but do not be surprised if during 2017 the situation for new deployments will see 10G FCoE remaining popular in the access and 40G FCoE and 32G FC both gaining traction for networking devices interconnection and trending up elsewhere. Rather than competing solutions, they seem to be the two faces of the same coin, clear representation of reliable, scalable, deterministic storage networking options.