Data centre and, more generally, IT consolidation has been a constant theme over the past few years. Many organisations are realising the benefits of owning fewer buildings and fewer hardware and software assets, while using those that remain far more efficiently than before, thanks to virtualisation and, more recently, various software-defined technologies.

It all makes perfect sense. Even the most cynical of us, who have seen the constant ebb and flow between periods of centralised IT and moves towards distributed compute, storage and networks, might just have thought that this was the end game. Consolidation is here to stay, right? Whether it’s Public, Private or, favourite of all, Hybrid Cloud, with the addition of some more specialist managed services, everyone is trying (and generally succeeding) to do more with less.

And then along comes edge computing. Apparently, centralising everything is a bad idea, so it’s time to start sending the data much closer to where it is needed – distributing it to a local or regional data centre, so that the end user can access the data more quickly and, perhaps, more reliably. Well, for most organisations, where milliseconds of latency aren’t a major issue, the consolidation strategy still holds good, so long as the consolidated data centre(s) and IT infrastructure are sufficiently robust (reliable, fast and of sufficient capacity) to cope with the required workloads.

However, with the advent of IoT and the ongoing big data explosion, some kind of edge strategy is definitely going to make sense. As one vendor explained it to me recently, imagine an aeroplane flying across the US, where apparently there are several thousand planes in the sky at any one time. The plane hits some turbulence at a certain height and location and wants to share this information with all the other planes in the sky. Such data needs to be sent as quickly as possible, which almost certainly means using a local data centre/IT resource – not a centralised location/infrastructure. Sounds as if grid computing is making a major comeback…
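To put rough numbers on the latency argument, the sketch below estimates only the propagation delay over optical fibre, assuming light travels at about 200,000 km/s in fibre (roughly 5 microseconds per kilometre). The distances are hypothetical, and real paths add routing, queuing and processing time on top, so treat the output as a lower bound rather than a measurement.

```python
# Assumption: light in silica fibre travels at roughly 2/3 of its vacuum speed,
# i.e. about 200,000 km/s, or ~5 microseconds per kilometre.
FIBRE_SPEED_KM_PER_S = 200_000.0


def round_trip_ms(fibre_km: float) -> float:
    """Minimum round-trip propagation delay, in ms, over `fibre_km` of fibre each way.

    Ignores routing, queuing, serialisation and processing delays.
    """
    one_way_s = fibre_km / FIBRE_SPEED_KM_PER_S
    return 2 * one_way_s * 1000.0


# Hypothetical fibre distances: a regional edge site versus a distant central facility.
for label, km in [("regional edge site", 100), ("central data centre", 3000)]:
    print(f"{label}: {round_trip_ms(km):.1f} ms round trip (propagation only)")
```

Even this floor shows why a nearby site matters for time-critical data such as a turbulence alert, while for most workloads a few tens of milliseconds are unlikely to matter.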

Okay, so it’s early days, but I think it’s safe to say that edge (grid) computing is going to make sense in plenty of places and for plenty of applications. Dare I say it, just as most organisations are settling on a Hybrid Cloud model, it may well be that a Hybrid IT/infrastructure model will make sense as well. Data and applications, plus the necessary compute/network/storage resource, will be held and used centrally or locally, depending on what makes most sense. And here the idea of open technology and containers makes ever more sense, as the idea of sharing data without proprietary obstacles takes off. And orchestration, currently finding its way in this brave new world, holds the key to making it all happen.

What better way to celebrate the end of the 2017 sporting summer than by joining up with the 4th annual Data Centre Golf Tournament?

Co-organised by the Data Centre Alliance and Data Centre Solutions, the event brings together data centre owners, operators, and equipment and services vendors for a great day out at Heythrop Park in Oxfordshire. This year’s venue promises to build on our three previous successful events, raising money for the CLIC Sargent cancer charity, and providing an enjoyable and friendly atmosphere to mix with your industry colleagues while trying to get that little white ball into that very small white hole.

There might not be any green jackets or huge prize funds to tempt you, but if the following description of our 2017 venue doesn’t have you reaching for the application form right away, either you don’t have a pulse, or you really, really don’t like golf (or being pampered):

“Open 365 days of the year, the Bainbridge Championship Course at Heythrop Park was redesigned in 2009 by Tom MacKenzie, the golf course architect responsible for many Open Championship venues, including Turnberry, Royal St George’s and Royal Portrush. The 7,088-yard par-72 course weaves throughout the 440 acres of rambling estate and provides the perfect challenge for all golfers.

The 18-hole course meanders over ridges and through valleys that are studded with ancient woodland, lakes and streams. The course is quintessentially English and has several signature holes, notably the 6th hole, where the green nestles beside a fishing lake, the 14th, which sweeps leftwards around an ancient woodland, and the closing hole, which is straight as a die and has the impressive mansion house as its backdrop.

Located 12 miles north of Oxford just outside Chipping Norton in the Cotswolds, Heythrop Park is within 90 minutes’ drive of London and the Midlands.”

With the DCS and DCA teams already signed up, there’ll be plenty of meandering over ridges and through valleys, with plenty of diversions to inspect the woodlands, lakes and streams at close quarters. The fishing lake sounds particularly promising, if coming a little early in the round, while the 14th offers the opportunity to ignore the sweep left round the ancient woodland, and to head straight into the trees, where cries as ancient as civilisation itself will be heard. Needless to say, designing a hole to be as ‘straight as a die’ will be wasted on most of us, and it is to be hoped that the mansion house is set sufficiently far back from the 18th green to avoid the potential vandalism of thinned approach shots.

Full details of the Data Centre Golf Tournament, co-organised by the DCA and DCS, can be found here:

https://digitalisationworld.com/golf/

And if any of your colleagues think that a day on the golf course has nothing to do with data centres, tell them that it involves all of the following:

See you at Heythrop Park in September.

Asia-Pacific to lead market growth until 2020, finds Frost & Sullivan’s Energy & Environment team.

The mature global data center infrastructure solutions market will grow at a moderate pace despite data center consolidation, high capital expenditure, and end-user skepticism toward new or unfamiliar solutions. Increasing demand for data storage, security and speed is creating unprecedented growth in data traffic, ultimately driving data center infrastructure growth.

“While North America accounts for the highest revenues, emerging markets will grow at a faster rate, and China will lead the Asia-Pacific region,” said Energy Research Analyst Gautham Gnanajothi. “Edge computing and disaster recovery applications offer tremendous potential, indicating a shift toward bringing data centers closer to end users. This, too, will drive modularity in data centers in the Asia-Pacific region.”


Global Data Center Infrastructure Solutions Market is the new analysis from Frost & Sullivan’s Energy Storage Growth Partnership Service program. It highlights the growth opportunities and trends in the data center infrastructure solutions arena and provides a five-year forecast on the market metrics, covering data center cooling, genset, uninterruptible power supply (UPS), and rack segments. Cooling products accounted for a majority of the total market revenues in 2015-16 and remain the fastest growing segment, followed by rack and rack options.

“High user focus on traditional technologies and their tendency to underestimate the potential of modular solutions pose a severe challenge for the vendors,” noted Gnanajothi. “Further, the user base is contracting as data center owners turn to site consolidation to get the best value from IT investments; reducing the number of physical data center locations means fewer management issues and higher cost savings in terms of cooling and power needs.”

In addition to stepping up efforts to educate users on product innovations and technology advancements, market participants will increasingly form partnerships and alliances with other participants in the data center ecosystem to increase market reach. For instance, while rack manufacturers are collaborating with power distribution unit (PDU) manufacturers, UPS and cooling participants are forming alliances with modular data center providers.

Equinix and Digital Realty remain the market leaders among nearly 1,200 worldwide suppliers.

451 Research projects that global colocation and wholesale market revenue will top $48bn by 2021, according to the latest quarterly release of its Datacenter KnowledgeBase (DCKB), which tracks nearly 4,500 datacenters operated by 1,193 companies worldwide.

In Q3 2016, the datacenter colocation and wholesale market saw $28.9bn in annualized revenue. The majority of this revenue (42%) was generated in North America, with Asia-Pacific generating 31%.
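As a quick sanity check on the 451 Research figures, the growth rate implied by moving from $28.9bn of annualised revenue in Q3 2016 to $48bn by 2021 can be expressed as a compound annual growth rate. The five-year horizon used here is an assumption, since the release does not pin down the exact end point; on that basis the implied rate comes out at a little under 11% a year.

```python
def implied_cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1.0 / years) - 1.0


# 451 Research figures: $28.9bn annualised revenue (Q3 2016), projected to top $48bn by 2021.
# Assumption: a five-year horizon between the two figures.
rate = implied_cagr(28.9, 48.0, 5)
print(f"Implied growth: {rate:.1%} per year")

# Check: compounding the start value at that rate recovers the end value.
print(f"$28.9bn grown for 5 years: ${28.9 * (1 + rate) ** 5:.1f}bn")
```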

Following a year of significant M&A activity in 2015, the first three quarters of 2016 maintained the momentum with notable industry consolidation that included Equinix completing its acquisition of Telecity Group, TierPoint buying Windstream’s datacenter business and Digital Realty Trust taking on eight of Equinix’s European datacenters, among many other deals.

The datacenter market continues to grow, not only in many of the main markets, but increasingly in ‘edge’ markets outside of the global top 20. “Interest in edge markets is one of the factors driving consolidation,” notes Leika Kawasaki, Senior Analyst, Datacenter Initiatives at 451 Research. “Over the next one to two years, we expect to see growing interest from top providers and investors in markets outside of the top 20, particularly in Asia and Latin America.”


In terms of annualized colocation and wholesale revenue, as of Q3 2016 Equinix and Digital Realty remained the global leaders, with 9.5% and 5.7% share, respectively. Once the Equinix acquisition of Verizon's datacenter business closes in mid-2017, Equinix's market share is expected to expand to 11.4% of global annualized revenue, equivalent to double that of the second-largest provider, Digital Realty Trust.

New 2016 data from Synergy Research Group shows that revenue growth for the world’s three leading colocation operators far outstripped overall market growth in the year, thanks to a combination of major acquisitions and organic growth.

While the total colocation market grew 9% from the previous year, Equinix, Digital Realty and NTT in aggregate grew their colocation revenues by 28% and increased their share of the worldwide market. Beyond these three companies, the other top ten operators grew their colocation revenues by 12% while the companies ranked 11-20 grew by 8%. Apart from the three market leaders, other companies whose growth rates were well above average and who climbed the rankings included QTS, CyrusOne, CoreSite, China Telecom and KDDI-Telehouse. Consolidation will continue to be a major feature of the market, as evidenced by the recent announcement that Equinix is acquiring 29 data centers from Verizon, which is ranked second in the US retail colocation market.

The data, which covers both retail and wholesale colocation, shows that the market is expanding steadily across all regions, with APAC having the highest growth rate. The major countries with the highest growth rates were China, Hong Kong and India. Across the major regions, Equinix was the leader in EMEA, ranked second in North America and third in APAC. Digital Realty was the leader in North America and NTT the leader in APAC. Historically, wholesale colocation revenues have been growing more rapidly than retail colocation, though in 2016 the growth rates were broadly similar. Equinix continues to have a strong lead in retail colocation while Digital Realty holds a similar position in wholesale colocation. Notably, Digital Realty and NTT now have significant market shares in both the retail and wholesale sectors, while Equinix maintains a tight focus on retail operations.


“In some senses colocation is following the same path as the cloud with market power gradually being concentrated in the hands of a few focused and deep-pocketed operators,” said John Dinsdale, a Chief Analyst and Research Director at Synergy Research Group. “In both cases the ability to run large data center operations effectively and efficiently is vital to success and companies that are too diversified or unfocused will struggle. And the similarities don’t stop there – as cloud usage continues to explode, colocation growth opportunities are pulled along in the slipstream.”

According to the International Data Corporation (IDC) WW Quarterly Cloud Infrastructure Tracker, IT infrastructure spending (server, disk storage, and Ethernet switch) for public and private cloud in Europe, the Middle East, and Africa (EMEA) grew 19.5% year on year to reach $1.5 billion in revenue in the third quarter of 2016.

The cloud-related share of total EMEA infrastructure revenue from servers, disk storage, and Ethernet switches grew by 6 percentage points compared with last year to 24.9% in 3Q16. In terms of storage capacity, cloud represented around 44.8% of total EMEA capacity in 3Q16, with 8.6% growth over the same period a year before. Looking at the market in euros, EMEA in 3Q16 reported strong YoY user value growth (19.1%) in public and private cloud across servers, storage, and switches.

"Based on its five-year forecast, IDC expects this market to reach a value of $10.9 billion by 2020, or 35.4% of total market expenditure. Fueled by increasing maturity and adoption rates of many new cloud-dependent technologies such as the Internet of Things, cloud continues to represent an area of tremendous growth for the European infrastructure sector," said Kamil Gregor, research analyst, European Infrastructure Group, IDC.

For the scope of this tracker, IDC has tracked the following vendors: Cisco, DellEMC, Fujitsu, Hitachi, HPE, IBM, Lenovo, NetApp, Oracle, the major ODM vendors, and others.


Both fiber-optic data transmission and silicon-based microchips have been around for more than 40 years. But the integration of the two has proved to be an elusive challenge for material scientists in the semiconductor industry for many years – a challenge that has been overcome only recently with the development of silicon photonics (SiPh).

By Daniel Bizo, Senior Analyst, Datacenter Technologies, 451 Research.

451 Research believes SiPh, which creates fiber-optic links directly in semiconductor chips, could fundamentally change how IT systems are designed and operated. SiPh involves transmitting light using semiconductor chips, without the need for discrete optical devices, which leads to a step change in the economics of high-speed optical connections in datacentres. It can deliver much more bandwidth for every dollar and every watt than existing copper interconnects.

Once in volume production, SiPh is expected to make fiber-optic links affordable enough to be used in servers to interconnect subsystems (e.g., processor and storage, network ports, accelerators). This could radically change the way servers are designed and deployed. Because optical links can support much longer distances than copper, components can be spread out in a cabinet, or across cabinets even, into pools and dynamically assigned from software. This will promote unprecedented utilisation levels.

This in turn will also affect datacentre facilities' capacity and configuration. Higher IT utilisation will reduce and shape demand for datacentre capacity, and SiPh-based system interconnect links will promote datacentre designs with relaxed climatic settings for 100% free cooling year-round or even the adoption of direct liquid cooling.

Today's system architectures are largely defined by the electrical properties of copper: peak data rates drop off steeply, while power requirements grow with wiring length. For decades, this limitation has required subsystems (processor, memory, storage, networking interface, expansion cards) to be very closely coupled to the system printed circuit board (PCB).

An optical link, however, can span many meters while retaining very high data rates. If it can be made small, low power and affordable, which is exactly what SiPh promises, then server subsystems such as storage, networking, accelerators or even memory can be physically decoupled without loss of performance. This could lead to new system designs, such as Ericsson's HDS8000, that can be deployed across multiple cabinets and in which individual physical machines are defined from software. Among the promised benefits are more flexible configurations – such as a much better and more dynamic compute-to-storage balance in hyperconverged systems – as well as much higher utilisation of IT infrastructure, because unused resources will not be trapped inside individual systems.
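The idea of defining machines from software can be illustrated with a toy model: resources sit in shared pools rather than inside individual chassis, and a logical node is assembled by drawing from those pools and handed back when the workload finishes. The sketch below is hypothetical (the `Composer` class, resource names and quantities are invented for illustration) and is not based on Ericsson's HDS8000 or on any vendor's management API.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ComposedNode:
    """A logical server assembled from pooled parts (hypothetical model)."""
    name: str
    allocation: Dict[str, int] = field(default_factory=dict)


class Composer:
    """Assigns pooled resources to logical nodes from software.

    The pools stand in for components spread across one or more cabinets and
    reached over optical links; names and units are illustrative only.
    """

    def __init__(self, free: Dict[str, int]):
        self.free = dict(free)  # resource kind -> units currently unassigned

    def compose(self, name: str, request: Dict[str, int]) -> ComposedNode:
        # Check every pool first so a request that cannot be met changes nothing.
        for kind, units in request.items():
            have = self.free.get(kind, 0)
            if have < units:
                raise ValueError(f"not enough {kind}: want {units}, have {have}")
        for kind, units in request.items():
            self.free[kind] -= units
        return ComposedNode(name, dict(request))

    def release(self, node: ComposedNode) -> None:
        # Return the node's parts to the shared pools for the next workload.
        for kind, units in node.allocation.items():
            self.free[kind] += units
        node.allocation.clear()


pools = Composer({"cpu_sockets": 8, "memory_gb": 2048, "nvme_drives": 24, "accelerators": 4})
db = pools.compose("db-node", {"cpu_sockets": 2, "memory_gb": 1024, "nvme_drives": 16})
ml = pools.compose("ml-node", {"cpu_sockets": 1, "memory_gb": 256, "accelerators": 4})
print(pools.free)
pools.release(ml)
print(pools.free)

try:
    pools.compose("too-big", {"memory_gb": 4096})
except ValueError as err:
    print("rejected:", err)
```

Releasing `ml-node` returns its accelerators to the pool straight away, which is the sense in which unused resources are no longer trapped inside one box.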


The key to the breakthrough of SiPh is the integration of all the fiber-optic components on silicon chips using a largely standard high-volume semiconductor fabrication process. Chip makers have sunk billions of dollars into their existing technology and fabrication plants (fabs) that are churning out millions of processors and memory chips every day; they will seek to reuse most of their existing manufacturing tools to produce SiPh parts at a very low unit cost.

The first company to fully achieve that objective is Intel, which started shipping its first commercially available SiPh components in the third quarter of 2016. Although there are other companies, such as Luxtera and Kotura, that also describe their technology as SiPh, those use workarounds such as precision mounting and micro packaging. Research efforts similar to Intel's are also under way at Hewlett Packard Enterprise and IBM and are expected to yield commercial results in the next few years.

At 451 Research we expect that with each generation of SiPh-based systems this concept of resource decoupling and pooling will be pushed ever further. The first generation of SiPh-based systems still ties processors and memory together. Once (and if) SiPh links are integrated on the processor silicon or in its package, compute nodes themselves will be dynamically configurable from one processor to a high number of processors, from a little memory to an ocean of (then storage-class) memory. This could happen sometime in the first half of the 2020s.

There will likely be several major implications from SiPh-based infrastructure designs. Potentially the most significant will be much more balanced resource utilisation at infrastructure scale, which alone could deliver a 2-3x improvement in server and datacentre resource utilisation. Much of the waste today is a result of resource imbalances in IT systems and across the supporting datacentre infrastructure, which is generally inflexible (datacentre cooling and power backup systems, for example, are typically static). As a result, many IT systems are overprovisioned, yet some still struggle to handle peak load due to internal resource bottlenecks – with the processor, memory, storage, networking capacity or any combination thereof.

These imbalances create waste and result in underperforming servers. If server subsystems can be dynamically composed from software, administrators will have the means to push the whole infrastructure much closer to the upper limits of their performance. Decoupling of subsystems also means that technology refresh cycles will be more specific (whereby just the compute is refreshed, or just the memory, storage or other subsystems). This will likely promote faster technology upgrades for processors – Intel tends to introduce a new server chip generation every year.
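To show how fixed server configurations strand capacity, the sketch below compares first-fit placement of a mixed workload onto identical 32-core, 256 GB servers with a pooled model in which CPU and memory are provisioned separately in the same unit sizes. The job and unit sizes are invented for illustration, and the comparison ignores interconnect latency and bandwidth, so it shows the mechanism behind the utilisation argument rather than validating the 2-3x estimate.

```python
import math
from typing import List, Tuple

Job = Tuple[int, int]  # (cores, memory_gb) demanded by one workload

# Illustrative sizes only: one fixed server, or one pooled CPU / memory module.
CORES_PER_UNIT = 32
MEM_GB_PER_UNIT = 256


def fixed_boxes(jobs: List[Job]) -> int:
    """First-fit placement onto identical servers whose cores and memory are bolted together."""
    free: List[List[int]] = []  # remaining [cores, memory_gb] in each server opened so far
    for cores, mem in jobs:
        for box in free:
            if box[0] >= cores and box[1] >= mem:
                box[0] -= cores
                box[1] -= mem
                break
        else:  # no existing server has room: open a new one
            free.append([CORES_PER_UNIT - cores, MEM_GB_PER_UNIT - mem])
    return len(free)


def pooled_units(jobs: List[Job]) -> Tuple[int, int]:
    """CPU and memory modules needed when each resource is pooled and provisioned separately."""
    total_cores = sum(c for c, _ in jobs)
    total_mem = sum(m for _, m in jobs)
    return math.ceil(total_cores / CORES_PER_UNIT), math.ceil(total_mem / MEM_GB_PER_UNIT)


jobs: List[Job] = [(4, 160)] * 6 + [(16, 48)] * 4  # memory-heavy plus CPU-heavy workloads
used_cores = sum(c for c, _ in jobs)
used_mem = sum(m for _, m in jobs)

n = fixed_boxes(jobs)
cpu_units, mem_units = pooled_units(jobs)
print(f"fixed : {n} servers, cores {used_cores / (n * CORES_PER_UNIT):.0%} used, "
      f"memory {used_mem / (n * MEM_GB_PER_UNIT):.0%} used")
print(f"pooled: {cpu_units} CPU + {mem_units} memory modules, "
      f"cores {used_cores / (cpu_units * CORES_PER_UNIT):.0%} used, "
      f"memory {used_mem / (mem_units * MEM_GB_PER_UNIT):.0%} used")
```

With these made-up numbers the fixed servers leave more than half of their cores idle, while the pooled model provisions half as many cores for the same jobs.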

The net effect of SiPh on the overall datacentre footprint, however, remains unclear. If history is any guide, demand for technology grows faster than its cost and power efficiency gains, because the fast-dropping marginal cost of IT capacity attracts ever more business and consumer activity, and creates new activities that were previously too costly to computerise. For many slow-moving enterprises, however, the datacentre footprint will likely shrink as new SiPh-equipped systems with the latest generation processors and solid-state storage outperform legacy systems by an order of magnitude – a few cabinets will likely displace a complete data hall. This will allow many enterprises to decommission some of their datacentre facilities to save money.

Another interesting potential of SiPh-based systems is the ability to distance hard drives and tape libraries from all-silicon sections of the infrastructure to allow for vastly different climatic settings and operating styles. Because silicon is much less susceptible to high temperatures or high humidity, datacentre designers will likely be more amenable to using 100% free cooling year-round or even to introduce direct (to the chips) liquid cooling to eliminate fan power. Disks, tapes and legacy systems, on the other hand, can be placed in a tightly controlled data hall but connected via SiPh links.

Volume production of fully semiconductor technology-based SiPh is in its early stages at Intel, while HPE, IBM and others are still in the development phase. Manufacturing costs will largely depend on the maturity of the manufacturing process and sales volumes – both are unknown quantities today. It remains to be seen whether commercial SiPh components from Intel can deliver on the triple promise of low cost, low power and high performance and so achieve a breakthrough in fiber-optic interconnectivity. It will take at least another year to ramp up production, with meaningful adoption unlikely before 2018/2019. Even if Intel does deliver, it will take years for server designs to fully adopt and adapt.

First-generation disaggregated systems are available from Ericsson, Dell and other server vendors. However, they are typically offered only to select large customers that are willing to buy many fully populated cabinets of infrastructure. For general enterprises, they are not yet accessible, and for the small and midmarkets, they probably won't be a priority, initially at least, because their IT infrastructure requirements are much smaller.

Indeed, adoption will likely be skewed toward large service providers and enterprises, including hyperscale operators that are adding new capacity in massive increments. SiPh needs some scale and a considerable up-front commitment to show its true potential. As a result, the technical and economic benefits are unlikely to become evident for the mainstream buyer for some years to come.

As a company that’s made a number of acquisitions itself, Alternative is well experienced in how to amalgamate IT functions post acquisition. After all, we’ve acquired six IT and telco businesses in the past 10 years, so we know the complexity involved in the unification of existing structures at many different levels.

By Marion Stewart, Director of Operations, Alternative Networks.

And if you have a core proposition that positions your own IT operations function as “an extension of your customers’ IT team”, as Alternative’s does, then managing such amalgamations can add further layers of complication. Even if you don’t position your IT as an adjunct of the client’s, getting your tech in order pre-merger is still something you need to do well; otherwise you run the risk of disrupting, and possibly damaging, the benefits of the acquisition in the first place.

There are a number of facets to integration that clearly need discussing, but for the purposes of this blog I think we should focus on the area that consumes the most time and resources in M&A: tooling.

Clearly, change is a significant factor in any M&A, but we determined that we could reduce the impact of change on organisational teams by having the right technology already in place to collaborate effectively when we either made acquisitions or indeed became an acquisition target ourselves. We now dine out on the story of how we have “industrialised our operations” and how we are able to help our customers do the same.

Following any merger, there are clearly lots of unknowns. People and skills are more often than not stuck in silos and, surprisingly yet commonly, do not overlap. Communication methods are complex, usually with multiple and disparate domains, email addresses, file stores, etc., whilst processes are disjointed and unconnected, so much so that the effort of finding harmony, efficiencies and service improvements adds to the overall complexity.


Our recommendation and approach to resolving this, based on our own experience, is to have a clear and uncompromising view of the end game, in terms of service architecture, technology and tooling. This end game needs to be mandatory, simple and everyone needs to be bought in.

Over the past year, we have focussed our efforts on rationalising operational tooling into a single ServiceNow instance and a single Salesforce instance, with some more specialist tools and bespoke IP around the outside. It was important though that the overall tooling and service architecture could always be drawn on one side of A4; and equally important that everyone could draw it. Internally we called it the “Magic Doughnut”.

Armed with the doughnut, we set about going from eight different ERP platforms, four CRM, numerous spreadsheets and fag packets, to a clear consolidated approach. Satisfyingly, because we’d clearly set out the end game, we found that all stakeholders proactively considered the possible ramifications of the new tooling and process on their own teams, rather than expecting someone to have done it for them.

Generally speaking, the integrations went smoothly because we did not waver from this end game vision. Of course, people had to compromise in places, but provided the compromise did not adversely affect our services to our clients, we were happy to make it. The end result is that we have a simple, structured and universally understood operational structure, which runs from a centralised and universally visible tool. We have layered our ITIL processes over this ServiceNow instance, integrated ServiceNow with other systems to provide end-to-end workflow, and built some bespoke interfaces to maintain our services to our users.

We termed this as “industrialising” our operations, because we felt the legacy platforms could have been better, but by moving to a single industrial strength structure, we could now drive improved customer services and efficiency through consistent nomenclature and clarity of understanding. We now run tickets according to best practice, manage configurations according to best practice, provision new services according to best practice, and integrate our cloud platforms according to best practice.

And now this is in place, future acquisitions or mergers, or future product innovations, can be delivered from the same service architecture with minimum rework. Critically, though, a smoothly integrated, uncomplicated, consolidated approach not only enhances the ease and efficiency of service and boosts customer satisfaction, but also resonates positively with your workforce which, make no mistake, is the most valuable asset during any merger or acquisition, and also the most sensitive.

The Cloud Industry Forum (CIF) is a not-for-profit company limited by guarantee, and is an industry body that champions and advocates the adoption and use of Cloud-based services by businesses and individuals.

CIF aims to educate, inform and represent Cloud Service Users, Cloud Service Providers, Cloud Infrastructure Providers, Cloud Resellers, Cloud System Integrators and international Cloud Standards organisations.

Each issue of DCS Europe includes an article written by a CIF member company.

For developers and implementers of data storage solutions, it’s useful to understand the Serial Attached SCSI (SAS) connectivity documentation developed by various organizations within the SAS community and how they fit and work together.

By Jay Neer, board member, SCSI Trade Association, Molex.

The International Committee for Information Technology Standards (INCITS) is the central U.S. forum dedicated to creating technology standards for the next generation of innovation. INCITS members combine their expertise to create the building blocks for globally transformative technologies. The INCITS Technical Committee T10 creates the SCSI standards which include the SAS standards that apply to today’s most popular enterprise level storage interface.

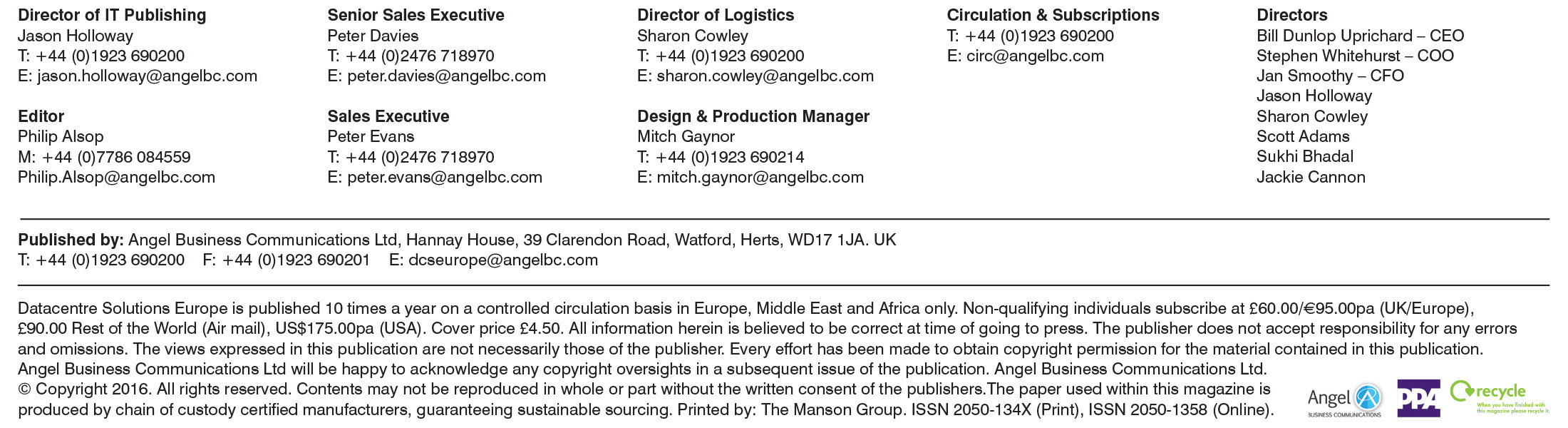

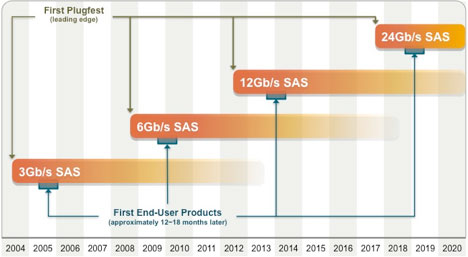

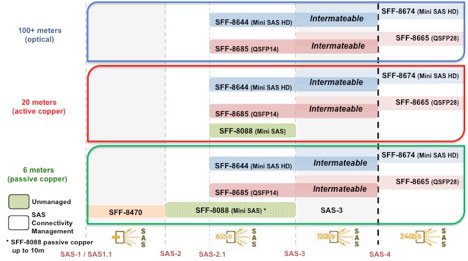

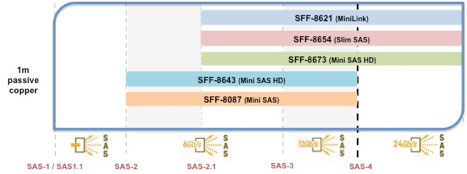

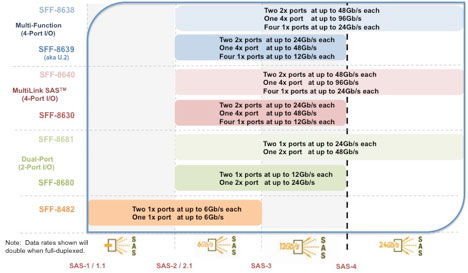

The SCSI Trade Association (STA) was formed to provide marketing support for the SAS standard and community. The roadmaps published by the STA show the progression of and the projections for SAS data rates as well as for the external I/O, internal I/O, and mid-plane connector interfaces that accompany each SAS revision. The latest revisions of the four components of the SAS Connectivity Roadmap follow and are available at www.scsita.org.

Figure 1 – SAS Advanced Connectivity Technology Roadmap

Figure 2 - SAS Advanced Connectivity External I/O Roadmap

SAS-3 interconnects have been woven into the SAS Advanced Connectivity Roadmap, which therefore highlights updates and shows when new physical connector interfaces have been incorporated into the SAS-3 standard.

- SFF-8644 (mechanical) plus SAS-3 (electrical performance)

The external I/O is provided in 4x granularity. As shown in Figure 3, 1x1, 1x2 and 1x4 fixed/host board shielded receptacles are specified.

Figure 3

Figure 4 - SAS Advanced Connectivity Internal I/O Roadmap

Figure 5


Mating/free cables are 4x (1x1) and 8x (1x2) with the 8x capable of plugging into any two adjacent 4x ganged host board receptacles. 4x and 8x passive copper, 4x active copper and 4x active optical cables are defined in SAS-3. Four 4x ports fit within the I/O connector space defined for the low-profile PCIe add-in cards.
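The lane counts above translate directly into bandwidth. The sketch below assumes the SAS-3 signalling rate of 12 Gb/s per lane and its 8b/10b line coding (properties of the SAS-3 generation generally, not figures stated in this article), and reports per-direction numbers before SAS frame and protocol overheads. The helper names are hypothetical.

```python
from typing import Tuple

SAS3_LANE_GBPS = 12.0         # SAS-3 signalling rate per lane, per direction (Gb/s)
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b line coding: 8 data bits carried per 10 line bits


def wide_port_bandwidth(lanes: int) -> Tuple[float, float]:
    """(raw Gb/s, payload GB/s) per direction for a port of `lanes` lanes,
    before SAS frame and protocol overheads."""
    raw_gbps = lanes * SAS3_LANE_GBPS
    payload_gbytes_per_s = raw_gbps * ENCODING_EFFICIENCY / 8  # 8 bits per byte
    return raw_gbps, payload_gbytes_per_s


def host_receptacles_needed(cable_lanes: int, receptacle_lanes: int = 4) -> int:
    """Adjacent ganged 4x host receptacles a cable occupies (a 4x cable uses one, 8x spans two)."""
    if cable_lanes % receptacle_lanes:
        raise ValueError("cable width must be a multiple of the receptacle width")
    return cable_lanes // receptacle_lanes


configs = [("4x cable (1x1)", 4), ("8x cable (1x2)", 8),
           ("four 4x ports on a low-profile PCIe card", 16)]
for label, lanes in configs:
    raw, payload = wide_port_bandwidth(lanes)
    print(f"{label}: {lanes} lanes, {raw:.0f} Gb/s raw, ~{payload:.1f} GB/s payload")

for lanes in (4, 8):
    print(f"{lanes}x cable occupies {host_receptacles_needed(lanes)} ganged 4x host receptacle(s)")
```

On these assumptions, the four 4x ports that fit a low-profile PCIe bracket give 16 lanes, or 192 Gb/s of raw signalling per direction.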

- SFF-8643 (mechanical) plus SAS-3 (electrical performance)

- SFF-9402 supersedes SFF-9401 and provides recommended pinouts

Any single port, dual port or quad port SAS-3 storage device can physically plug into any of these three storage device receptacles – the number of active ports depends on which of the three receptacles (the dual, quad or multi-protocol) has been designed onto the mid-plane, as well as how many ports are active on the storage device itself.
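One plausible reading of the rule above is that a drive can use only as many ports as both it and the mid-plane receptacle provide, i.e. the smaller of the two counts. The sketch below encodes that assumption. The port counts for the dual- and quad-port receptacles follow from their names, while the multi-protocol receptacle is left out because this article does not state how many SAS ports it carries; `active_ports` is a hypothetical helper.

```python
def active_ports(device_ports: int, receptacle_ports: int) -> int:
    """Ports usable when a drive is plugged into a mid-plane receptacle.

    Assumption: the usable count is the smaller of the ports active on the drive
    and the ports provided by the receptacle designed onto the mid-plane.
    """
    if device_ports < 1 or receptacle_ports < 1:
        raise ValueError("port counts must be at least 1")
    return min(device_ports, receptacle_ports)


# Port counts implied by the receptacle names; multi-protocol omitted (count not given here).
RECEPTACLE_PORTS = {"dual-port": 2, "quad-port": 4}

for receptacle, r_ports in RECEPTACLE_PORTS.items():
    for device in (1, 2, 4):
        print(f"{device}-port drive in {receptacle} receptacle: "
              f"{active_ports(device, r_ports)} active port(s)")
```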

Figure 6 - SAS Advanced Connectivity Mid-plane I/O Roadmap

Figure 7

The SAS standard includes a comprehensive table listing all of the approved interconnects for each revision level of the standard. The specification numbers do change based on the performance requirements for each succeeding revision level of the SAS standard.

The Small Form Factor (SFF) Committee, an ad hoc industry association, develops the mechanical and auxiliary specifications for the connectors and cables that are implemented by the T10 committee and listed within the SAS standards. These specifications are also integrated into the STA roadmaps as shown above. The latest revisions of these specifications are available at http://www.sffcommittee.com.

The electrical performance of all SAS interconnects and links is defined within the INCITS SAS-3 standard itself. Pin 1 and complete pin-out specifications are located within the SAS-3 standard. It is important to keep these kinds of interface specifications within the primary source document, which is the SAS-3 standard. Copies of the SAS-3 standard can be ordered from the INCITS website.

As we start 2017, organisations are forced to consider what the future holds for cloud computing. What balance of traditional infrastructure, private clouds, and public cloud services will IT departments hedge their bets on in the next three years? Even five years?

By Monica Brink, EMEA Director of Marketing, iland.

With cloud usage maturing and companies relying more and more on cloud computing to drive innovation and keep the business running, the focus is turning to how companies can implement the ideal cloud provider strategy. After all, there’s a lot of choice in cloud service providers out there. For many organisations, a dual-provider cloud strategy makes sense.

The IT department is not new to deploying dual vendor strategies. Indeed, avoiding vendor lock-in has been something that most IT organisations have been striving to do for years in order to ensure reliability, access new innovations and improve negotiating power. Now, as cloud providers such as AWS, Azure and Google reach saturation levels, there’s a good case for employing a dual-provider strategy for cloud services as well.

With the threat of cloud outages, organisations are increasingly opting for a dual-provider cloud strategy, with one provider for production cloud and another for Disaster Recovery.

But downtime concerns are not the only factor driving companies towards a dual-provider cloud strategy. When deploying new innovations in the form of new apps in the cloud, having a cloud provider that can work with you to customise the cloud hosting service required, offer flexible pricing options and even help you achieve the necessary compliance requirements for the service is of paramount importance. Choosing the right cloud strategy can be game-changing for many organisations, and the “hands off” approach of many cloud providers is often not sufficient.


Some organisations are using a variety of cloud providers for different use cases that require more flexibility and more visibility around billing and performance than they get from their existing provider. Furthermore, organisations are using a different cloud provider for disaster-recovery-as-a-service, to ensure failover in the event of an outage. With the changing landscape of cloud providers and the diverse IaaS use cases that many organisations have, it just makes sense to diversify and avoid having all your eggs in one cloud basket.

The cloud computing market is evolving fast and it’s difficult for anyone to predict how it will continue to evolve. What is becoming increasingly obvious, though, is that the cloud is becoming a vital part of a company’s overall IT infrastructure and there will always be value in having a true partnership with your cloud service provider. Furthermore, the decision to adopt a multi-cloud strategy deserves careful consideration, as it is important in driving the benefits of cloud computing throughout your organisation.

2016 was a rollercoaster of a year, with new technologies and upsets causing stirs within the market. The year saw virtual reality (VR) and augmented reality (AR) rise into the mainstream consumer and business market, Brexit and the knock-on impact it has had on business confidence, and the continued growth of the technologies underpinning the data centre. But what does 2017 hold, and how can data centre managers and technology businesses capitalise on these changes?

Colocation provider Aegis Data’s CEO, Greg McCulloch, takes a look.

Virtual and augmented reality really came into the mainstream in 2016 with the global success of Pokémon GO. Its rise wasn’t smooth: delays in the UK due to server overloads caused great angst amongst consumers. The hype surrounding the app opened a whole new market for data centre providers, who realised the vast opportunities behind the latest mobile craze. Operators with sufficient server capacity, adequate cooling and high enough power capabilities are in an ideal position to enable game users and developers to provide increasingly sophisticated, data-hungry games. Next year is likely to see a slew of new VR and AR games that will be just as data-hungry, or more so as the technology develops. Data centre operators that are able to offer substantial power capabilities are set to benefit the most, as they can reliably ensure consumers are not left with slow games.

“We saw VR and AR hit the mainstream in 2016 after years of being a fringe technology. 2017 will see the consolidation of this technology and the growth in its availability and detail. The data centre backing these up must be able to take high loads or it will fail, causing the games developers to seek support elsewhere,” says McCulloch.


This year is already seeing a continuation of the process of change which rattled many people in previous years. The IT industry is in the middle of one of the biggest upheavals it has ever faced. All parties agree that the change is here to stay; indicators are pointing to the continued rapid growth of managed services, with the world market predicted to grow at a compound annual growth rate of 12.5% for at least the next two years.
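As a quick sense-check of what that rate implies, compounding 12.5% a year over two years grows a market by just over a quarter. A minimal Python sketch of the compound growth formula; the starting value of 100 is a hypothetical placeholder, since no market size is given here:

```python
def compound_growth(start_value: float, annual_rate: float, years: int) -> float:
    """Value after `years` of growth at a constant compound annual rate."""
    return start_value * (1.0 + annual_rate) ** years


# Hypothetical starting size of 100 (any currency unit), two years at 12.5% CAGR
print(compound_growth(100.0, 0.125, 2))  # 126.5625
```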

Inside this new model, there are other indicators. Not least among them, and a factor which is prominent in most discussions with customers, is security. Security as a service is a part of the picture, but needs to be better understood, particularly when the rising need to secure devices through the Internet of Things (IoT) is examined. Industrial applications are set to be the core focus for IoT Managed Security Service Providers (MSSPs), with ABI Research forecasting overall market revenues to increase fivefold and top $11bn in 2021. Though OEM and aftermarket telematics, fleet management, and video surveillance use cases primarily drive IoT MSSP service revenues in 2017, continued innovation in industrial applications that include the connected car, smart cities, and utilities will be the future forces that IoT MSSPs need to target and understand in all their implications.

ABI Research stresses that there will not be one sole technology that addresses all IoT security challenges; in actuality, the fact that true end-to-end IoT security is near impossible for a single vendor to achieve is a primary reason for the rise in vendors offering managed security services to plug security gaps. But it will be something that customers are aware of, and where they will need answers. Security will, in the future, form part of the purchase choice, directly driven by improved security education and a broader demand to protect digital assets in the same sense as users protect their physical assets today, says the researcher. For this reason, security service providers, although largely invisible today, may become the household names of tomorrow as IoT security moves from a requirement to a product differentiator.

Security is not an afterthought in most environments, however, and the way it relates to an industry or enterprise is a critical aspect of the architecture and systems design. This plays to the strengths of the expert service provider, who understands both the solutions and the risk profile of the customer. Just as ABI has argued that the security provider brand will start to play more of a role, so the channels with more of the answers will reap more of the rewards as customers look for help in challenging times. Security, like hosting and recovery, network resilience and scaling, will become a part of an overall solution which offers the lower costs of repeatability as well as enhancements as a part of the package which the customer will look to build on for the future.

How to find the answers to customers’ questions and engage in a more holistic discussion on security will be a concern for many. The Managed Services and Hosting Summit, London, 20 September, is the perfect event at which to do this, with over twenty leading suppliers on hand as well as industry experts.

And now in its seventh year, the MSHS event offers multiple ways to get those answers: from plenary-style presentations from experts in the field to demonstrations; from more detailed technical pitches to wide-ranging round-table discussions with questions from the floor, there is a wealth of information on offer.

A key part of the event will look at how, having gained the knowledge needed to offer such managed services, complete with their security implications understood, the messages can then be conveyed to the customer in a meaningful way. Sales is no longer just a matter of faster technologies and brands; it is about being able to convey the whole picture of changed ways of working, as the race for productivity, competitive advantage and cost control drives customers to look for new and different answers. Get your questions answered, see what others are doing, and learn just what other resources are available to support your business at the key managed services event of 2017. New for 2017 is the Managed Services and Hosting Summit Europe, taking place at the Hilton Hotel, Apollolaan, Amsterdam.

For more details on both events visit:

London: http://www.mshsummit.com

Amsterdam: http://mshsummit.com/amsterdam

Angel Business Communications has announced the categories and entry criteria for the 2017 Datacentre Solutions Awards (DCS Awards).

The DCS Awards are designed to reward the product designers, manufacturers, suppliers and providers operating in the data centre arena, and are updated each year to reflect this fast-moving industry. The Awards recognise the achievements of the vendors and their business partners alike, and this year encompass a wider range of project, facilities and information technology award categories designed to address all of the main areas of the datacentre market in Europe.

DCS are pleased to announce the Headline Sponsor for the 2017 event. MPL Technology Group is a global leader in the development, production and integration of advanced software and hardware solutions to support the availability, agility and efficient operation of IT infrastructures and mission-critical environments worldwide. N-Gen by MPL provides a detailed yet intuitive interface, rich visualisations and easy access to data in real time.

Phil Maidment, Co-Founder and Owner of MPL Technology Group said: "We are very excited to be Headline Sponsor of the DCS Awards 2017, and have lots of good things planned for this year. We are looking forward to working with such a prestigious media company, and to getting to know some more great people in the industry."

The DCS Awards categories provide a comprehensive range of options for organisations involved in the IT industry to participate, so you are encouraged to get your nominations made as soon as possible for the categories where you think you have achieved something outstanding or where you have a product that stands out from the rest, to be in with a chance to win one of the coveted crystal trophies.

The editorial staff at Angel Business Communications will validate entries and announce the final short list to be forwarded for voting by the readership of the Digitalisation World stable of publications during March and April. The winners will be announced at a gala evening on 11 May at London’s Grange St Paul’s Hotel.

The 2017 DCS Awards feature 26 categories across four groups. The Project and Product categories are open to end-use implementations and services, and to products and solutions that have been available (i.e. shipping in Europe) before 31st December 2016, while the Company and Special awards nominees must have been present in the EMEA market prior to 1st June 2016. Nomination is free of charge, and all entries must feature a comprehensive set of supporting material in order to be considered for the voting short-list. The deadline for entries is 1st March 2017.

Please visit www.dcsawards.com for rules and entry criteria for each of the following categories:

By Steve Hone, CEO and Cofounder, DCA Trade Association

It’s all change at the DCA for the start of 2017. There are now two new members of staff at the DCA to help and support members. Kieran Howse joined the team at the start of the New Year as Member Engagement Executive, replacing Kelly Edmond; he is already up to speed and here to support you moving forward.

I am also pleased to announce that Amanda McFarlane has joined the team as Marketing Executive. Amanda has extensive marketing experience in the IT sector. This new role has been created to ensure information continues to be effectively disseminated to our members’ target audiences, be that government, end users, the general public or the supply chain. Amanda has already taken over responsibility for the delivery of the new DCA website and members’ portal, which is due to come on stream very soon.

Many of you will have had the pleasure of both working and spending time with Kelly Edmond over the past three years and I know you will join me in wishing her all the best for the future and extending a warm welcome to our new staff.


This month’s theme is ‘Industry Trends and Innovations’. I would like to thank all those who have taken time to contribute to this month’s edition allowing us to start the year with a good selection of articles.

During a recent visit to Amsterdam to attend the EU Annual COC meeting and the 5th EU Commission-funded EURECA event, I had the pleasure of getting a lift in the new Tesla X. Not only does it run on pure battery power and do 0-60 in 2.6 seconds (quicker than a Ferrari), it literally drove itself. When you think about all the innovative technology that must go into this car, and the computing power needed to ensure it remains both reliable and safe, it’s quite mind-blowing!

If this is a barometer of the sort of trends and innovations we can expect to see within the data centre sector, then we certainly have exciting times ahead. The DCA plans to dedicate a section of the new website to showcase our members’ ground-breaking ideas as they emerge. Please contact Kieran or myself if you wish to spread the word about something new; we’d be very keen to hear your news. The theme for the March edition is ‘Service Availability and Resilience’, and the deadline for copy is 23rd February. This will be closely followed by the April edition, which this year offers members the opportunity to submit customer case studies; the copy deadline is 21st March.

Looking forward to a productive year, thank you again for all your article contributions and support.

T: +44(0)845 873 4587

E: info@datacentrealliance.org

Amanda McFarlane

Marketing Executive

Kieran Howse

Member Engagement Executive

By Franek Sodzawiczny, CEO & Founder, Zenium

The benefit of hindsight is that it provides clarity and perspective. It is now clear that, mergers, acquisitions and ground-breaking technologies aside, there was an obvious shift in thinking last year towards regarding the data centre as a utility. Going forward, that most likely means a much greater emphasis than ever before on service excellence in the data centre in 2017.

The key driver behind this is that data centres are becoming increasingly core to business operation and success; they are no longer considered to be ‘simply running behind the scenes’, ensuring backup and storage are managed effectively. As information continues to be the lifeblood of global businesses, the mission-critical data centre is now deemed to be an asset that needs to be protected at all times.

As data centres play an increasingly important role in providing the essential infrastructure required to support ‘always-on’, content-rich, connectivity-hungry digital international businesses, we must perhaps now expect that the way in which they are procured will also change.


What is shifting first and foremost is that the major purchasers of data centre space are now more likely to be hyper-scale companies such as Amazon, Google and Alibaba than large corporates. The move to the Infrastructure-as-a-Service (IaaS) and Software-as-a-Service (SaaS) model, or any other such cloud services for that matter, will only continue to grow. The hyper-scale businesses know what they want and exactly when they need it. Their expertise in providing a hosted service to their customers means that they are 100 percent focused on efficiency, pricing, flexibility and reliability of service from the data centre operators that they ultimately select as business partners. They set the business agenda and the data centre operator is expected to meet it.

As a result, the requirement for consultancy and ‘old fashioned outsourcing’ from data centre operators is being replaced by a smart new approach, driven by an overall preference for finding a different way of working that focuses on taking a far more collaborative approach.

Data centre operators will without doubt continue to be selected on their ability to build a sophisticated facility, but added to future demands and expectations will be the underlying ability to form a trustworthy partnership with their clients. The focus will shift to demonstrating a detailed understanding of the specifications provided by the client’s highly knowledgeable and experienced engineering and IT teams, whilst delivering an agile yet cost-effective implementation and maintenance model in the longer term. As a service industry, data centre operators will therefore need to expand their knowledge to identify further efficiencies and continued cost savings. This will also require a responsive ‘can do’ attitude, complemented by advanced problem-solving skills and a heightened awareness of what guaranteeing a 24x7 operation actually means.

The impact of globalisation also suggests that data centre operators should be prepared to be asked to support client expansion into new territories, practically on demand, by harnessing their own experience of what it takes to enter new markets successfully, and then build quickly.

Extensive advance planning, combined with the ability to deliver the same infrastructure in more than one country against increasingly short deadlines in order to support ambitious go-to-market strategies, will become a critical requirement in the RFP.

Looking ahead, we fully expect data centre operators to have to work much harder to meet the needs of fewer but larger customers, and our flexible business model means we are ready. For those intending to deliver a new kind of data centre service in 2017, agility and adaptability will be what keeps you ahead of the game. Don’t underestimate the importance of this.

Why innovation should not just be about the ‘tech’

By Dr Theresa Simpkin, Head of Department, Leadership and Management, Course Leader Masters in Data Centre Leadership and Management, Anglia Ruskin University

When Time magazine named the personal computer the “Machine of the Year” in 1982 few would have accurately predicted the rampant technological advances that stitch together the fabric of our economies and underpin our personal interactions with the world.

The introduction of the internet and all that has come with it, the explosion of big data, and innovations in artificial intelligence and machine learning have brought with them profound change in an extraordinarily short span of time.

The tools available to us to be better at what we do (be it an IoT-connected golf club that improves one’s swing or making better medical diagnoses based on advice from AI applications) have evolved exponentially. However, the way in which we work is still largely based on post-war habits and leadership capabilities that have changed little in the last half century.

It is clear that despite sensational advances in technology and the applications to which they have been put, we are yet to apply similar attitudes to technical innovation and change to the human and organisational structures that form the spines of our businesses. It is ironic that the Data Centre sector is as much a victim of outmoded business thinking as many other traditional sectors.

There is a raft of evidence to suggest that the sector is facing some big-ticket issues; ones so large that individually they put business objectives at risk, and together they form a perfect storm that dampens growth and may limit the achievement of strategic objectives such as profitability and growth in market share.

One of the most pressing is the discussion regarding the capabilities inherent in the human aspects of this ‘second machine age’; the rampant advancement of technology bringing with it seismic shifts in the way we work, the skills we develop and the capabilities we need to take organisations and economies into a space where there is optimal capacity for efficiency, profitability and public good. For example, there is a raft of research from industry associations, vendors and business analysts that identifies skills shortages as a pressing and immediate risk to business and the sector as a whole.

Shortages in occupations such as engineering, IT and facilities management are leading to a rise in salaries as the ‘unicorns’ (those with a valuable suite of capabilities) are poached from one organisation to another, driving up wages and generating a domino effect of constant recruitment and selection activity. So too, the upsurge of contingent labour (contractors and short-term workers) to backfill vacancies gives rise to an enlarged risk profile for the DC organisation. The lack of a pipeline of diverse entrants to the sector diminishes the capacity for a varied landscape of thought and for the critical analysis that leads to innovation and creative application of technical and knowledge assets. Couple this with a generally ageing workforce and the picture for organisations that are chronically ‘unready’ is grim.

These few examples of ‘big ticket’ and often intractable problems exist in a business environment of shifting business models, in a sector that is swiftly consolidating and expanding operations in emerging markets. Place all this against a backdrop of more savvy but possibly shrinking operational budgets and a highly competitive landscape, and it becomes obvious that the business responses of yesterday are little match for the sectoral challenges of today, let alone tomorrow.

When we think of research and development, innovation and advancement we tend to think about the kit; the fancy new widget that will help us make better decisions, build better tools or store and utilise data more securely and efficiently. We rarely think of the innovations in the way we lead, manage, educate and engage our people within the organisations charged with building the next big thing.

Research currently being undertaken by Anglia Ruskin University suggests that, like many other sectors, the DC sector is hampered by traditional business thinking. Inflexible organisational silos are obstructing innovation in the ways companies are organised and traditional ‘back end’ structures provide incompatible support to a ‘front end’ that is dynamic and geared for change and challenge.

For example, the ways in which people are recruited, trained, retained and managed in general are still largely influenced by and designed around business practices of the mid-twentieth century. The attitudes and practices of recruitment and selection, for example, are still largely predicated on the workforce structures of that time too; but that time has passed and the workforce itself is remarkably different in structure, behaviour and expectation.

The Data Centre sector, of course, lies at the confluence of the ongoing digital revolution. The ‘second machine age’ demands a new managerial paradigm.

The intersection of rapid and discontinuous technological advances and latent demand for new business approaches is quite clearly the space where the Data Centre sector resides.

It is self-evident then that innovation should not just be thought of in terms of the tech or the gadgets. As the digital revolution marches on unconstrained, so should we see an organisational revolution of leadership capability, managerial expertise and business structure if the ‘perfect storm’ of sectoral challenges is to be diminished and overcome.

By Prof Ian F Bitterlin, CEng FIET, Consulting Engineer & Visiting Professor, University of Leeds

Whilst the principles of thermal management in the data centre (cold-aisle/hot-aisle layout, blanking plates, hole stopping and ‘tight’ aisle containment) are not exactly ‘new’, it is still surprising to see how many facilities don’t go the whole way and apply those principles. Many of those that do seem to be running into problems when they try to adopt a suitable control system for the supply of cooling air.

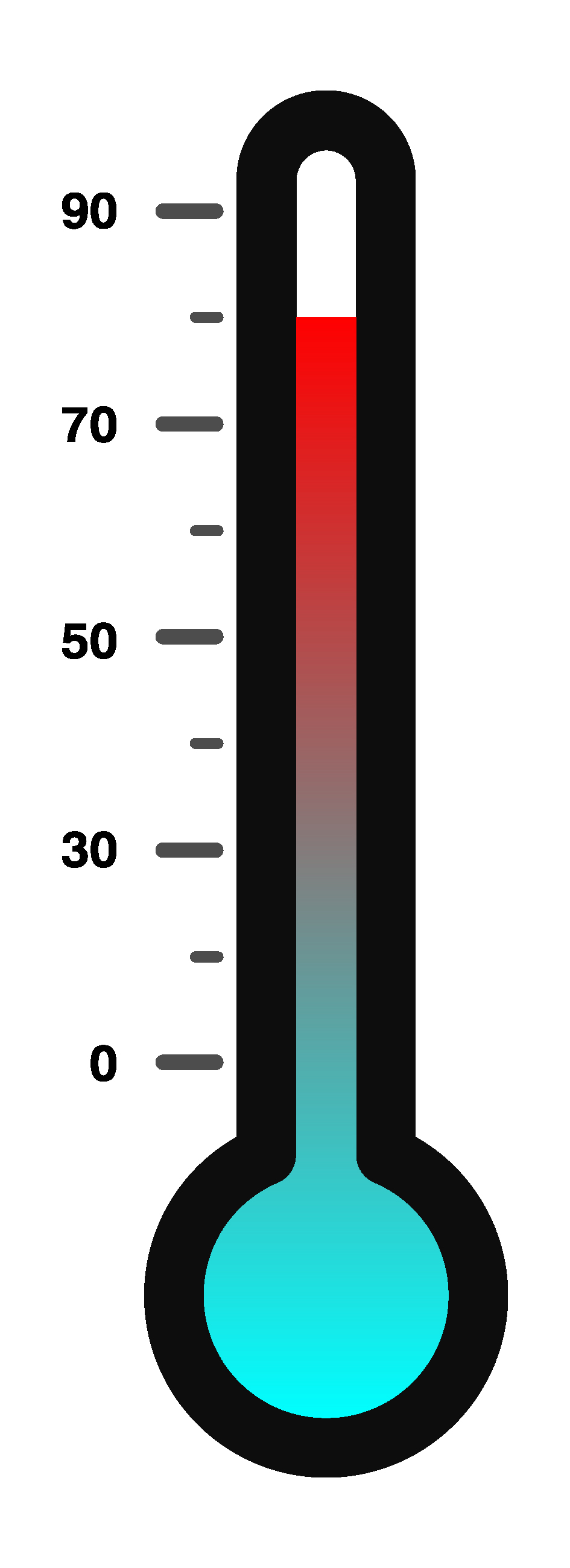

The principles are simple; if you separate the cold air supply from the hot air exhaust you can maximise the return air temperature and increase the capacity and the efficiency of the cooling plant. This is especially effective if you are located where the external air temperature is cool for much of the year and you can take advantage of that by installing free-cooling coils that cool the return air without a mechanical refrigeration cycle. In this context, the term ‘free-cooling’ simply translates into ‘compressor free’ – you still must move air (or water) by fans (or pumps) so it is certainly not ‘free’ but it does reduce the cooling energy. The hotter you make your cold-aisle and the colder it is outside (or you use adiabatic or evaporative technology to take advantage of the wet-bulb, rather than dry-bulb) the more free-cooling hours you will achieve per year.
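The relationship in the last sentence can be sketched as a simple hour count. This is a minimal Python illustration, not a design tool: it assumes an hourly series of outdoor temperatures (dry-bulb, or wet-bulb where adiabatic or evaporative cooling is used) and a hypothetical fixed ‘approach’ between the outdoor temperature and the coldest supply air the free-cooling coil can deliver. The sample temperatures are made up.

```python
def free_cooling_hours(outdoor_temps_c: list[float],
                       cold_aisle_setpoint_c: float,
                       approach_k: float = 5.0) -> int:
    """Count hours in which compressor-free cooling alone can meet the set-point.

    An hour qualifies when outdoor temperature + approach <= cold-aisle set-point.
    approach_k is a hypothetical heat-exchanger approach, not a measured value.
    """
    return sum(1 for t in outdoor_temps_c if t + approach_k <= cold_aisle_setpoint_c)


hours = [8.0, 14.0, 19.0, 22.0, 26.0]   # made-up hourly readings
print(free_cooling_hours(hours, 24.0))  # 3
print(free_cooling_hours(hours, 27.0))  # 4
```

Raising the cold-aisle set-point, or lowering the effective outdoor temperature by working to wet-bulb, can only keep the count the same or increase it, which is the trade-off the paragraph describes.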


Clearly this doesn’t work very effectively when the outside ambient is hot and humid, but for most of Europe from mid-France northwards 100% compressor-free operation is perfectly possible if the cold-aisle temperature is taken up into the ASHRAE ‘Allowable’ region. Of course, some users/operators don’t want to take such risks (real or perceived) with their ICT hardware, and so limit the energy-saving opportunities, and some will still fit compressor-based cooling as ‘standby’. For some users the consumption of water in data centre cooling systems, for adiabatic sprays or evaporative pads, is an issue, but it is simple to show that as water consumption increases your energy bill goes down, and the cost of water (in most locations in northern Europe) does not materially eat into those energy savings. In fact, if your utility is based on a thermal combustion cycle (such as coal, gas, municipal waste or nuclear), then the overall water consumption (utility plus data centre) goes down, as thermal power stations use more water per kWh of ICT load than an adiabatic system does. As a rule of thumb, you can calculate a full adiabatic system in the UK climate as consuming 1,000 m³ per MW of cooling load per year, equivalent to about 10 average UK homes.

So, what’s the problem? To see the problem with tight air management (say <5% leakage between cold and hot aisle, instead of 50%), we should look at the load that needs cooling, e.g. a server. The mechanical designer positions the cooling fans and all the heat-generating components.

The space is small and confined so the model can be highly accurate using CFD as a design tool. He places the 20-30 temperature sensors that are installed in the average server at the key points and someone writes an algorithm that takes all the data and calculates the fan speed to keep the hottest component below its operational limit.
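The server-level algorithm described above can be sketched as a proportional mapping from the smallest thermal headroom across all sensors to a fan duty cycle. This is a minimal Python sketch under stated assumptions: the minimum duty, the headroom band and the linear mapping are all hypothetical, and real server firmware is vendor-specific.

```python
from dataclasses import dataclass


@dataclass
class Sensor:
    reading_c: float  # current temperature at the sensor
    limit_c: float    # operational limit of the component it monitors


def fan_duty(sensors: list[Sensor], min_duty: float = 0.2, max_duty: float = 1.0,
             target_margin_k: float = 10.0) -> float:
    """Fan duty cycle driven by the hottest component relative to its limit.

    Headroom >= target_margin_k gives min_duty; zero or negative headroom gives
    max_duty; in between, duty rises linearly (a hypothetical control law).
    """
    if not sensors:
        raise ValueError("need at least one sensor")
    worst_margin = min(s.limit_c - s.reading_c for s in sensors)
    fraction = 1.0 - worst_margin / target_margin_k  # 0 with ample headroom, 1 at the limit
    fraction = max(0.0, min(1.0, fraction))
    return min_duty + fraction * (max_duty - min_duty)


cool = [Sensor(45.0, 90.0), Sensor(50.0, 85.0)]
warm = [Sensor(45.0, 90.0), Sensor(75.0, 80.0)]  # one component 5 K from its limit
print(fan_duty(cool))
print(fan_duty(warm))
```

Only the worst-placed sensor matters in this sketch, which mirrors the aim of keeping the hottest component below its operational limit.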

However, we should note the simple model environment: one source of input air at zero pressure differential to the exhaust air, and one small physical space with a route for the cooling air. So, the fan speed varies with IT load as the load causes the components to heat up. Then we can look at the cabinet level:

And what to load each server with? Any model is now incapable of representing reality. If the user enables the thermal management firmware on their hardware (admittedly not as common as it should be), the fan speed of each load is controlled by the IT load (or, more specifically, by the hottest component), and each server will be ramping its fans up and down on a continuous basis as the work flows in and out of the facility.

In a large enterprise facility, the variations of air-flow are totally random. The fan speed variation with ICT load is unique to each server make/model, and idle power differs widely: some servers draw (as good as) 23% of their full kW load at idle, others (as bad as) nearly 80%.
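The idle figures above can be put into a simple linear power model, a common first approximation (assumed here, not taken from this article) in which a server draws its idle power plus a share of the remaining headroom proportional to utilisation. The 500 W maximum is a hypothetical figure.

```python
def server_power_w(utilisation: float, max_power_w: float, idle_fraction: float) -> float:
    """Linear server power model: idle draw plus a utilisation-proportional share."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    idle_w = idle_fraction * max_power_w
    return idle_w + (max_power_w - idle_w) * utilisation


for idle_fraction in (0.23, 0.80):
    idle = server_power_w(0.0, 500.0, idle_fraction)
    full = server_power_w(1.0, 500.0, idle_fraction)
    print(f"idle fraction {idle_fraction:.0%}: {idle:.0f} W idle, {full:.0f} W at full load")
```

Under these assumptions the efficient server swings by 385 W between idle and full load while the poor one swings by only 100 W, so their heat output, and broadly their fan air-flow demand, responds to workload very differently.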

This leads us to consider ‘how?’ to control the air delivery. Cooling systems are often designed to control to a set-point supply or exhaust temperature, or to operate with a slight pressure differential between cold and hot side, although the latter is most undesirable: the ICT hardware dictates the air-flow demand, and its on-board fans are rated for zero pressure differential between inlet and exhaust. A positive differential results in air being ‘pushed’ through the hardware as the server fans slow down. Don’t forget that the cooling air will find the shortest path, not the most effective, and the clear majority of excess bypass air is through the load.

However, for air-cooled servers, the whole concept of a small set of very large supply fans (operating together) feeding a very large set of very small fans (servers all operating independently) with no control between them is a mechanical engineering problem. If we add to that a set of very large fans acting to remove/scavenge the exhaust air we have three fans in series and, without a degree of bypass-air, it becomes a mechanical engineering nightmare.

Then we might as well take the opportunity to look at the generally held view that server fans ramp up at 27°C and that any temperature higher than that only serves to cancel out any energy saving in the cooling system. The principle is true, but the knee-point is nearer 32°C. The main reason for the upper ‘Recommended’ limit of 27°C in the ASHRAE Thermal Guidelines is to limit the fan noise from multiple deployments, avoiding H&S issues that would require the facility to be classified as a noise hazard area.
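The knee-point argument can be illustrated with the fan affinity laws, under which fan power scales roughly with the cube of fan speed. The minimal Python sketch below assumes fan speed stays flat up to the ~32°C knee suggested above and then rises linearly; the 5%-per-kelvin slope is a hypothetical placeholder, not a measured server characteristic.

```python
KNEE_C = 32.0  # knee-point suggested in the text


def relative_fan_power(inlet_c: float, knee_c: float = KNEE_C,
                       speed_rise_per_k: float = 0.05) -> float:
    """Server fan power relative to baseline for a given inlet temperature.

    Assumes speed is flat (1.0) below the knee and rises linearly above it
    (hypothetical slope); power then follows the cube-law affinity relationship.
    """
    speed = 1.0 + max(0.0, inlet_c - knee_c) * speed_rise_per_k
    return speed ** 3


for t in (27.0, 32.0, 34.0, 36.0):
    print(t, round(relative_fan_power(t), 3))
```

Because of the cube law, even a modest speed increase above the knee adds fan power quickly, which is why where the knee sits matters for any cooling-system saving.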

We can now see that, somehow, the cooling system must:

Whatever you do, don’t pressurise the cold-aisle, as there will be risks of internal hot and cold spots inside your ICT hardware, with unknown consequences.

By Garry Connolly, Founder and President, Host in Ireland

The European data hosting industry is on an upswing as the region continues to attract impressive infrastructure investments from a number of content and technology behemoths, including Google, Apple, Microsoft and Facebook. This rapid growth is not only felt at the top, however, as a host of small and medium-sized enterprises (SMEs) are also recognising and capitalising on the growth of colocation opportunities and increased availability of transatlantic bandwidth capacity due to additional subsea cable deployments.

Against this backdrop, a major sea change looms over the EU data hosting community that will fundamentally impact all industry stakeholders, including service providers and data centres, as well as hyperscale operators and SMEs alike. Following four long years of debate and preparation, the EU General Data Protection Regulation (GDPR) was approved by the EU Parliament last spring and will go into effect on May 25, 2018.

The GDPR is making waves throughout the European data industry, as it is set to replace the Data Protection Directive 95/46/EC, a directive adopted in 1995. Since then, there hasn’t been any major change to the regulatory environment surrounding data storage and consumption, making this the most significant event in data privacy regulation in more than two decades. Unfortunately, this major shift leaves many IT professionals and organisational heads in a state of confusion as they attempt to understand and comply with the coming policies.

According to a report by Netskope, out of 500 businesses surveyed, only one in five IT professionals in medium and large businesses felt that they would comply with upcoming regulations, including the GDPR. In fact, 21 percent of respondents mistakenly assumed their cloud providers would handle all compliance obligations on their behalf.


It’s clear that there are many questions surrounding the coming changes to the regulatory landscape, among them the impact of Brexit and how the new regulation will affect UK-based organisations.

Following the June 2016 referendum in which the UK voted to leave the EU, the UK government stated its intention to give notice of withdrawal by the end of March 2017. Under Article 50 of the Treaty on European Union, giving notice starts an intense period of preparation and negotiation between the UK and the EU over the terms of withdrawal. Once these terms are agreed or, failing that, two years after notice is given (unless that period is extended), the UK will officially leave the European Union. This means the UK is likely to remain a member of the EU until around March 2019, roughly ten months after the GDPR takes effect.

This leaves British organisations uncertain about which data privacy rules they must follow. Given that the UK’s EU membership is likely to extend into 2019, UK-based organisations must take appropriate measures to comply with the GDPR from May 2018 until the UK’s official departure from the EU, after which they will need to conform to UK legislation, which may or may not differ.

Some of the more formidable obligations of the GDPR include increased territorial scope, stricter rules on consent for data processing, and heavy penalties for non-compliance. The GDPR also puts major emphasis on the privacy and rights of data subjects, the identifiable individuals whose personal data is processed. The new rules will apply not only to organisations established in the EU, but also to organisations anywhere in the world that offer goods or services to, or monitor the behaviour of, individuals in the EU. Fines for non-compliance are severe: organisations in breach of the GDPR can be fined up to four percent of total annual global turnover or €20 million, whichever is higher.
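To make the "whichever is higher" rule concrete, the short Python sketch below computes the maximum upper-tier fine for a given turnover. It assumes the cap is based on total worldwide annual turnover for the preceding financial year, as set out in Article 83(5) of the GDPR. The function name is illustrative, and the result is only a ceiling: actual fines are set case by case by supervisory authorities.

```python
# Sketch of the GDPR upper-tier fine ceiling (Article 83(5)): the higher of
# EUR 20 million or 4% of total worldwide annual turnover.
# This is a legal maximum, not a prediction of any actual fine.

FIXED_CAP_EUR = 20_000_000
TURNOVER_PERCENT = 4


def max_gdpr_fine(annual_global_turnover_eur: float) -> float:
    """Return the maximum possible upper-tier fine for a given annual turnover."""
    if annual_global_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    turnover_based_cap = annual_global_turnover_eur * TURNOVER_PERCENT / 100
    return float(max(FIXED_CAP_EUR, turnover_based_cap))


if __name__ == "__main__":
    # EUR 100m turnover: 4% is only EUR 4m, so the EUR 20m figure applies.
    print(max_gdpr_fine(100_000_000))
    # EUR 2bn turnover: 4% is EUR 80m, which exceeds EUR 20m.
    print(max_gdpr_fine(2_000_000_000))
```

The two limits cross at €500 million of turnover. Below that, the fixed €20 million figure sets the ceiling. Above it, the percentage takes over.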

May 2018 may seem a long way off, but it’s important to stay current and informed about the GDPR to ensure your company is prepared for what’s ahead. Data centres are arguably the community most significantly affected by this law. In line with its mission to promote awareness of industry best practices, the Data Centre Alliance will keep readers abreast of developments on this critical issue.

By John Taylor, Vigilent Managing Director, EMEA

In a perfect world, data centres operate exactly as designed. Cold air produced by cooling systems is delivered directly to the air inlets of the IT load, at the volume and temperature needed to meet the SLA.

In reality, this rarely happens. The missing link is airflow. It is invisible, dynamic and often counterintuitive, and it is highly susceptible to even minor changes in facility infrastructure.

Properly managed airflow can deliver significant energy reductions and a hotspot-free facility. Add dynamic control, and you can ensure that the optimum amount of cooling is delivered precisely where it’s needed, even as the facility evolves over time.

Why does airflow go awry, even within meticulously maintained facilities? The biggest factor is that you can’t see what is happening. Hotspots are notoriously difficult to diagnose, as their root cause is rarely obvious. Fixing one area can cause temperature problems to pop up in another. When a hot spot is identified, a common first instinct is to bring more air to the location, usually by adding or opening a perforated floor tile.

Temperatures may actually fall at that location. But what happens in other locations? As floor tiles proliferate, a greater volume of air, and so more fan energy, is required to meet SLA commitments at the inlets of all equipment.

Consider that fans don’t cool equipment. Fans distribute cold air, while adding the heat of their motors to the total heat load in the room. And as fan speed increases, fan power consumption grows with the cube of that speed. Opening holes in the floor to address hot spots is ultimately self-limiting.
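The cube relationship comes from the fan affinity laws. For a given fan, airflow scales roughly in proportion to speed, while power scales with the cube of speed. The Python sketch below uses illustrative function names to show why chasing hot spots with more airflow gets expensive quickly.

```python
# Fan affinity laws (sketch): for a given fan and air density,
# volume airflow scales linearly with speed and shaft power with the cube of speed.


def airflow_ratio(speed_ratio: float) -> float:
    """Ratio of new to old airflow when fan speed changes by speed_ratio."""
    if speed_ratio < 0:
        raise ValueError("speed ratio cannot be negative")
    return speed_ratio


def fan_power_ratio(speed_ratio: float) -> float:
    """Ratio of new to old fan power when fan speed changes by speed_ratio."""
    if speed_ratio < 0:
        raise ValueError("speed ratio cannot be negative")
    return speed_ratio ** 3


if __name__ == "__main__":
    for r in (0.8, 1.0, 1.1, 1.2):
        print(f"speed x{r:.1f}: airflow x{airflow_ratio(r):.2f}, "
              f"power x{fan_power_ratio(r):.3f}")
```

Running fans 20 percent faster delivers roughly 20 percent more air for about 73 percent more power. Running them 20 percent slower cuts fan power by almost half.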

Increased fan speed also increases air mixing, which disrupts the return of hot air to the cooling equipment and compromises efficiency. We often see examples of the Venturi effect, where fast-moving conditioned air blows past the inlets of IT equipment and leaves that equipment starved of cooling.

Ideally, conditioned air should move through IT equipment to remove heat before returning directly to the air conditioning unit. If air is going elsewhere, or never flows through IT equipment, efficiency is compromised.

Poorly functioning or improperly configured cooling equipment can also affect airflow. Equipment may be regularly maintained and appear on inspection to be working, yet in reality perform so poorly that it adds heat to the room rather than removing it. Facility managers often don’t realise when this occurs, because the data is typically not available.

Containment is often deployed to gain efficiency by separating hot and cold air. Unfortunately, most containment isn’t properly configured and can work against this objective. Even small openings in containment or non-uniformly distributed load can lead to hot spots. Where pressure control is used, small gaps lead to higher fan speeds in order to properly condition the contained space.

So how can airflow be better managed? First you need data. And not just a temperature sensor on the wall, or return air temperature sensors in your cooling equipment. Airflow is best managed at the point of delivery – where the conditioned air enters the IT equipment.

Since airflow distribution is uneven, sensing and presenting the temperatures at many locations within each technical room will provide the best visibility into airflow. And instrumentation of cooling equipment can reveal which units are working properly and which machines may require maintenance.
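As a minimal illustration of turning many inlet readings into visible hot spots, the Python sketch below flags sensors above a limit, hottest first, and reports the spread between the warmest and coolest inlets. The sensor IDs and readings are hypothetical. The 27 °C default is an assumption taken from the upper end of ASHRAE’s recommended inlet range (18 to 27 °C), and a site’s own SLA may set a different limit.

```python
from typing import Dict, List

# Assumed SLA limit: upper end of the ASHRAE recommended inlet range (C).
INLET_LIMIT_C = 27.0


def find_hot_spots(inlet_temps_c: Dict[str, float],
                   limit_c: float = INLET_LIMIT_C) -> List[str]:
    """Return the IDs of sensors reading above limit_c, hottest first."""
    hot = [sensor for sensor, temp in inlet_temps_c.items() if temp > limit_c]
    return sorted(hot, key=lambda sensor: inlet_temps_c[sensor], reverse=True)


def temperature_spread(inlet_temps_c: Dict[str, float]) -> float:
    """Warmest minus coolest inlet reading; a wide spread suggests uneven airflow."""
    return max(inlet_temps_c.values()) - min(inlet_temps_c.values())


if __name__ == "__main__":
    # Hypothetical readings from rack-inlet sensors in one technical room.
    readings = {"rack-A01": 22.5, "rack-A07": 28.1,
                "rack-B03": 29.4, "rack-B09": 24.0}
    print("Hot spots:", find_hot_spots(readings))
    print(f"Inlet spread: {temperature_spread(readings):.1f} C")
```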

Next, you need intelligent software that can measure how the output of each individual cooling unit influences temperatures at air inlets across the entire room. When a cooling unit turns on or off, temperatures change throughout the room. It’s possible to track and correlate changes in rack inlet temperatures with individual cooling units to create a real-time empirical model of how air moves through a particular facility at any moment.
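One simple way to build such an empirical model is to record how much each rack-inlet temperature rises when a single cooling unit is switched off. The Python sketch below takes that approach. It is an assumption for illustration and not necessarily how Vigilent’s software works. Unit and sensor names are hypothetical, and a real system would average many such events and correct for changes in IT load.

```python
from typing import Dict

Readings = Dict[str, float]              # sensor ID -> inlet temperature (C)
Influence = Dict[str, Dict[str, float]]  # unit ID -> sensor ID -> rise (C)


def estimate_influence(before: Readings, after_off: Dict[str, Readings]) -> Influence:
    """For each cooling unit switched off in turn, record the temperature
    rise at every sensor relative to the baseline readings."""
    model: Influence = {}
    for unit, after in after_off.items():
        model[unit] = {sensor: round(after[sensor] - before[sensor], 2)
                       for sensor in before}
    return model


def most_influential_unit(model: Influence, sensor: str) -> str:
    """Return the unit whose shutdown warmed the given sensor the most."""
    return max(model, key=lambda unit: model[unit][sensor])


if __name__ == "__main__":
    baseline = {"rack-A01": 22.0, "rack-B03": 23.0}
    observed = {
        "CRAH-1": {"rack-A01": 25.5, "rack-B03": 23.4},  # mainly cools row A
        "CRAH-2": {"rack-A01": 22.3, "rack-B03": 26.1},  # mainly cools row B
    }
    model = estimate_influence(baseline, observed)
    print(model)
    print(most_influential_unit(model, "rack-B03"))
```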

And finally, you need automatic control. Cooling equipment and fan speeds that are adjusted dynamically and in real time will deliver the right amount of conditioned air to each location. Machine learning techniques can keep the model of each cooling unit’s influence up to date as the facility changes over time.
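The Python sketch below performs one step of influence-based control. For each sensor above a setpoint, it raises the output of the cooling unit with the strongest influence on that sensor, and it eases back units that are not needed. The setpoint, gains, output limits and names are all assumptions. A production controller would also need to handle sensor failures, equipment constraints and loop stability.

```python
from typing import Dict

Influence = Dict[str, Dict[str, float]]  # unit ID -> sensor ID -> cooling effect (C)

SETPOINT_C = 25.0               # assumed inlet temperature target
GAIN_PCT_PER_C = 10.0           # assumed output increase per degree above setpoint
STEP_DOWN_PCT = 5.0             # assumed reduction for units not needed this step
MIN_PCT, MAX_PCT = 20.0, 100.0  # assumed output limits (keep some airflow on)


def control_step(influence: Influence, temps: Dict[str, float],
                 outputs: Dict[str, float],
                 setpoint: float = SETPOINT_C) -> Dict[str, float]:
    """Return new output percentages for each cooling unit after one step."""
    new_outputs = dict(outputs)
    needed = set()
    for sensor, temp in temps.items():
        error = temp - setpoint
        if error > 0:
            # Boost the unit whose output most affects this hot sensor.
            unit = max(influence, key=lambda u: influence[u].get(sensor, 0.0))
            needed.add(unit)
            new_outputs[unit] += GAIN_PCT_PER_C * error
    for unit in new_outputs:
        if unit not in needed:
            # Save fan energy where cooling isn't currently needed.
            new_outputs[unit] -= STEP_DOWN_PCT
        new_outputs[unit] = min(MAX_PCT, max(MIN_PCT, new_outputs[unit]))
    return new_outputs


if __name__ == "__main__":
    model = {"CRAH-1": {"rack-A01": 3.5, "rack-B03": 0.4},
             "CRAH-2": {"rack-A01": 0.3, "rack-B03": 3.1}}
    temps = {"rack-A01": 24.0, "rack-B03": 26.5}
    print(control_step(model, temps, {"CRAH-1": 60.0, "CRAH-2": 60.0}))
```

In this example, only rack-B03 is above the setpoint, so CRAH-2 is pushed up. CRAH-1 is eased back, which is where the fan-energy saving comes from.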

When you talk about data centres, it’s easy to ignore the physical structure and think only about what’s inside – the data itself, now the world’s most powerful and valuable commodity.

By Billy McHallum, Senior Manager – IBX Ops Engineering at global interconnection and data centre company Equinix.

Yet the buildings themselves are remarkable in their own right. Modern data centres are some of the most technologically advanced buildings in the world, with uninterruptible power supplies, redundant failsafe systems, and security measures that rival even the most advanced military facilities.

With global data traffic expected to grow by 22 per cent a year through 2020, and with more and more companies interconnecting within these vital digital ecosystems, ensuring that data centres are as secure, efficient and well managed as possible will be more important than ever. Getting the design right from the very beginning is crucial to making this happen.

A key challenge when it comes to data centre design is energy efficiency. Data centres, for obvious reasons, use significant amounts of power, so anything you can do to reduce energy dependence is vital to the long-term performance of the facility.

Last year Equinix opened its LD6 data centre in Slough, one of the most advanced of its kind in the world. It was the first data centre in the UK to achieve Leadership in Energy and Environmental Design (LEED) Gold certification.

This is an extremely difficult standard to achieve, and meeting it required considerable investment. We had to look at the architecture and structure of the building with energy efficiency in mind from the word go, incorporating significant changes to design and process management that went well beyond basic legal requirements. Every component required a clear audit trail so that its full carbon life cycle could be traced. The specifications covered everything right down to employee transport, with requirements to provide options such as shuttle buses, bike sheds and electric car charging points.

Because LD6 was designed and built from scratch, we were able to integrate these considerations at the design phase. Converted buildings are more challenging. The design expertise comes in knowing how to deploy solutions in the most effective way, using the infrastructure and physical footprint available to you.

At our AM3 facility in Amsterdam, we have deployed an aquifer system that draws cold water from an underground well in summer to cool the facility, while in winter the building supplies hot water to the local university’s central heating system. We have also installed solar panels on the AM3 office complex to further boost the facility’s energy neutrality.


It’s not just energy efficiency that proves challenging. You need to ensure that the technology being deployed, from cooling and power systems to security measures, will work just as effectively in the existing infrastructure as it would in a new build.

We employ extremely strict due diligence processes on every product and system used in our facilities, far beyond what is legally required. We also try to be as engaged with our supply chain as possible – providing detailed analysis to suppliers following every test so they can feed this into the development and improvement of their products.

Our London data centres house one of the world’s largest Internet Exchanges and more than 170 financial services companies. In total, a quarter of European equity trades flow through our International Business Exchange (IBX) data centres. Security, both physical and cyber, must always be one of our highest priorities.

The original security system for our LD6 IBX data centre in Slough was designed by the same person who masterminded the security system for the US Federal Reserve. It uses a patented multi-level system that tracks the whereabouts of everyone inside the facility at all times, using a combination of CCTV cameras, manned guarding and biometric hand geometry scanners at key access points.

When it comes to industry standards, Equinix is fully certified to ISO 27001, the most widely accepted certification for information security management, covering areas including physical security and business continuity.

However, the very nature of our interconnection offer – where customers can directly connect to each other through private connections, bypassing the public internet – means the threat of cyber attack is significantly reduced. And, thanks to the Equinix Cloud Exchange, one-to-many connections can be just as secure as one-to-one connections.

One of the major trends we’ve seen at Equinix over the past three years is a huge uplift in the number of companies embracing interconnection as a solution to the challenges of rising data demands, disparate customers and the need for ever-lower latency and higher performance. In this interconnected era, data centres will become increasingly vital to the global economy. They are the hubs through which the world’s most valuable information passes and on which the digital economy itself is built.

These facilities need to be built to meet not only the challenges of today but also those of the future, whether from security threats or the sheer volume of data being created. Robust design will be crucial to ensuring that they can, no matter what the future holds.

Making the most of your data means more than collecting, storing, and processing bits and bytes. A reliable flow of data across a secure, stable network will help to turn data into actionable information. By unlocking your organisation’s data, you can change the way it operates and accurately steer its transformation.

By Don Mac Millan, General Manager, Data Centre Business, Dimension Data UK&I.

There’s growing acceptance that technology is hybrid. And it’s not a future state. Hybrid IT has arrived. For CIOs, this brings the challenge of ensuring that the various elements of their hybrid environments come together to deliver a single integrated set of services.

If you were building a data centre a few years ago, your biggest concern would be uniting the different technologies within your facility. So you’d evaluate different tools to help you achieve this. Security would be a key consideration that guided many of the decisions made. Next, you’d turn your attention to middleware: here it was all about integrating tiers of applications to deliver a single, end-to-end application experience.

Fast-forward to today. In the world of hybrid IT, you’re acquiring capabilities from a variety of different sources. Some may be provided by your own IT department, and some might be software-as-a-service (SaaS) applications such as Salesforce. It’s likely that much of it will be infrastructure-as-a-service, delivered by cloud providers.

DevOps has also evolved. Today, developers typically use a variety of portals (some may work in Amazon Web Services, others in Azure), and they create applications using a combination of different toolsets before moving them into production.

One way to optimise your hybrid IT environment is to rationalise the number of participants within it, especially the number of clouds in use. If you’ve always required a dual-sourcing strategy, relying on a single cloud isn’t an option, but it’s unlikely that you need more than three or four at the infrastructure level, in addition to your SaaS choices.


Optimising the network is also critical. Failure to do so will impact your ability to provide a high-quality user experience. And it will introduce unwelcome costs that can negate the promised value of cloud.

Many organisations see value in enlisting the expertise of a managed services provider to help them integrate the disparate elements within their environments and to deliver the speed and user experience their business requires, while maintaining their security posture.