Welcome to the February 2017 edition of Digitalisation World, Angel Business Communications’ flagship digital publication. And, if I may say so myself, what an issue it is. If, like me, you’ve been struggling to understand just how the Internet of Things is going to have a really practical impact in the business world, then our lead article should help re-assure you that there’s more to IoT than seemingly endless consumer ‘gimmicks’. As the article introduction explains:

“The Gotthard Base Tunnel is one of the first major global engineering projects to incorporate advanced IoT technology to transform the delivery of essential services. It uses a web of IoT devices to manage passenger and vehicle safety 24x7 in one of the world's most challenging operational environments.”

And we also have a fascinating article focusing on the role that the data centre has to play in the IoT era:

“It’s clear that the automotive industry is leading the way in the progression of the Internet of Things (IoT). Yet, with this comes a growing number of challenges and pressures – not least a need to process, analyse and store all of this new information. Data centres are therefore fast becoming the solution to the automotive sector’s rapid data growth, but how is this driving the connected car revolution forward?”

The answer can be found in the article ‘The data centres driving the intelligent automotive revolution’.

Elsewhere in the magazine, there are articles focusing on the process of digitalisation itself, as well as some of the topics besides IoT that are important ingredients on the digital journey. These include hyperconvergence, open technology, the Cloud and, not least, security. During 2016, I made no apology for focusing heavily on security in several of my editorial comments, and I make no apology for flagging up security as the IT issue that will continue to dominate the digital landscape. In simple terms, digital data is rather more vulnerable than paper contained in folders, contained in filing cabinets, contained in physical offices.

At the present time, we all seem happy to accept the trade-off between a less secure information world and the huge opportunities, efficiencies and cost-savings that the march of digitalisation brings. Whether this will always remain the case will depend largely on a mixture of education – people and businesses understanding how best to protect their digital data – and the best efforts of those whose business it is to develop security measures that defeat the hackers.

The very real possibility, however slim, that the new US president owes his job in part to the industrial scale cybercriminal activities of the Russian government is as good an illustration as any of the complications of the digital world.

My age places me in what I like to think of as ‘border country’. Not so old as to have missed out entirely on the smart world as I grew up (although my first job did involve a typewriter and not a PC!), and not so young as to be called a digital native. I like to think this gives me a balanced perspective on the pros and cons of the process of digitalisation. And for any organisation heading down this road, it’s a good idea to take a step back every now and then to evaluate the actions your company has taken and to understand their likely impacts – both positive and, almost inevitably, negative. And try to think of these impacts in the widest possible terms and over the longest period of time.

Brexit and Trump may both be successful, however this might be defined, but they are unlikely to turn back time. Ironically, the best way to combat the ‘threat’ of globalisation and monopolisation is by groups of countries working together to regulate the activities of those who seek to benefit, at any cost, from these trends. So, leaving the EU, or pursuing a path of ‘splendid isolation’, is not the answer.

As anyone who knows their war movies and westerns knows, a town, region or country divided falls easy prey to the enemy. Bring in Clint Eastwood to get everyone working together, and the enemy is quickly shown the exit!

And what has all of the above to do with the digital world and the IT industry? Well, it is both a part of the globalisation ‘problem’ and a part of the potential solution. And it will be fascinating to see how the Digital Age is managed by governments across the globe, unable to resist market forces, but just maybe able to ensure that the right amount of taxes are paid (!) and that the overall impact of digitalisation is a positive one, whereby technology is used to bring people, communities and countries together, and not to turn us all into our own little, self-centred islands.

Angel Business Communications Ltd, 6 Bow Court, Fletchworth Gate, Burnsall Rd, Coventry CV5 6SP. T: +44(0)2476 718970. All information herein is believed to be correct at time of going to press. The publisher does not accept responsibility for any errors and omissions. The views expressed in Digitalisation World are not necessarily those of the publisher. Every effort has been made to obtain copyright permission for the material contained in this publication. Angel Business Communications Ltd will be happy to acknowledge any copyright oversights in a subsequent issue of the publication. Angel Business Communications Ltd © Copyright 2016. All rights reserved. Contents may not be reproduced in whole or part without the written consent of the publishers. ISSN 2396-9016

Worldwide IT spending is projected to total $3.5 trillion in 2017, a 2.7 percent increase from 2016, according to Gartner, Inc. However, this growth rate is down from earlier projections of 3 percent.

"2017 was poised to be a rebound year in IT spending. Some major trends have converged, including cloud, blockchain, digital business and artificial intelligence. Normally, this would have pushed IT spending much higher than 2.7 percent growth," said John-David Lovelock, research vice president at Gartner. "However, some of the political uncertainty in global markets has fostered a wait-and-see approach causing many enterprises to forestall IT investments."

The Gartner Worldwide IT Spending Forecast is the leading indicator of major technology trends across the hardware, software, IT services and telecom markets. For more than a decade, global IT and business executives have been using these highly anticipated quarterly reports to recognize market opportunities and challenges, and base their critical business decisions on proven methodologies rather than guesswork.

Worldwide devices spending (PCs, tablets, ultramobiles and mobile phones) is projected to remain flat in 2017 at $589 billion (see Table 1). A replacement cycle in the PC market and strong pricing and functionality of premium ultramobiles will help drive growth in 2018. Emerging markets will drive the replacement cycle for mobile phones as smartphones in these markets are used as a main computing device and replaced more regularly than in mature markets.

The worldwide IT services market is forecast to grow 4.2 percent in 2017. Buyer investments in digital business, intelligent automation, and services optimization and innovation continue to drive growth in the market, but buyer caution, fueled by broad economic challenges, remains a counter-balance to faster growth.

Table 1. Worldwide IT Spending Forecast (Billions of U.S. Dollars)

| Segment | 2016 Spending | 2016 Growth (%) | 2017 Spending | 2017 Growth (%) | 2018 Spending | 2018 Growth (%) |
|---|---|---|---|---|---|---|
| Data Center Systems | 170 | -0.6 | 175 | 2.6 | 176 | 1.0 |
| Enterprise Software | 333 | 5.9 | 355 | 6.8 | 380 | 7.0 |
| Devices | 588 | -8.9 | 589 | 0.1 | 589 | 0.0 |
| IT Services | 899 | 3.9 | 938 | 4.2 | 981 | 4.7 |
| Communications Services | 1,384 | -1.0 | 1,408 | 1.7 | 1,426 | 1.3 |
| Overall IT | 3,375 | -0.6 | 3,464 | 2.7 | 3,553 | 2.6 |

Source: Gartner (January 2017)
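For readers who want to sanity-check the forecast, the growth columns in Table 1 can be approximated from the spending columns. The short Python sketch below is illustrative only: the names `SPENDING`, `yoy_growth` and `growth_table` are our own, not Gartner's, and because the published spending figures are rounded to the nearest billion dollars, the recomputed percentages may differ from the published rates by a tenth of a point or so.

```python
# Spending figures (billions of U.S. dollars) copied from Table 1,
# in the order (2016, 2017, 2018).
SPENDING = {
    "Data Center Systems": (170, 175, 176),
    "Enterprise Software": (333, 355, 380),
    "Devices": (588, 589, 589),
    "IT Services": (899, 938, 981),
    "Communications Services": (1384, 1408, 1426),
    "Overall IT": (3375, 3464, 3553),
}


def yoy_growth(previous: float, current: float) -> float:
    """Return year-on-year growth as a percentage."""
    return (current - previous) / previous * 100.0


def growth_table(spending):
    """Map each segment to its (2017, 2018) growth, rounded to one decimal."""
    return {
        segment: (
            round(yoy_growth(y16, y17), 1),
            round(yoy_growth(y17, y18), 1),
        )
        for segment, (y16, y17, y18) in spending.items()
    }


if __name__ == "__main__":
    for segment, (g17, g18) in growth_table(SPENDING).items():
        print(f"{segment:<24} 2017: {g17:+.1f}%  2018: {g18:+.1f}%")
```

Working from the rounded figures is a deliberate simplification; the published rates are presumably calculated from unrounded data.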

"The range of spending growth from the high to low is much larger in 2017 than in past years. Normally, the economic environment causes some level of division, however, in 2017 this is compounded by the increased levels of uncertainty," said Mr. Lovelock. "The result of that uncertainty is a division between individuals and corporations that will spend more — due to opportunities arising — and those that will retract or pause IT spending."

For example, aggressive build-out of cloud computing platforms by companies such as Microsoft, Google and Amazon is pushing the global server forecast to reach 5.6 percent growth in 2017. This was revised up 3 percent from last quarter's forecast and is sufficient growth to overcome the expected 3 percent decline in external controller-based storage and allow the data center systems segment to grow 2.6 percent in 2017.

Equinix and Digital Realty remain the market leaders among nearly 1,200 worldwide suppliers.

451 Research projects that global colocation and wholesale market revenue will top $48bn by 2021 in the latest quarterly release of its Datacenter KnowledgeBase (DCKB), which tracks nearly 4,500 datacenters operated by 1,193 companies worldwide.

In Q3 2016, the datacenter colocation and wholesale market saw $28.9bn in annualized revenue. The largest share of this revenue (42%) was generated in North America, with Asia-Pacific generating 31%.

Following a year of significant M&A activity in 2015, the first three quarters of 2016 maintained the momentum with notable industry consolidation that included Equinix completing its acquisition of Telecity Group, Tierpoint buying Windstream and Digital Realty Trust taking on eight of Equinix’s European datacenters, among many other deals.

The datacenter market continues to grow, not only in many of the main markets, but increasingly in ‘edge’ markets outside of the global top 20. “Interest in edge markets is one of the factors driving consolidation,” notes Leika Kawasaki, Senior Analyst, Datacenter Initiatives at 451 Research. “Over the next one to two years, we expect to see growing interest from top providers and investors in markets outside of the top 20, particularly in Asia and Latin America.”

In terms of annualized colocation and wholesale revenue, as of Q3 2016 Equinix and Digital Realty remained the global leaders, with 9.5% and 5.7% share, respectively. Once the Equinix acquisition of Verizon's datacenter business closes in mid-2017, Equinix market share is expected to expand to 11.4% of global annualized revenue, equivalent to double that of the second-largest provider, Digital Realty Trust.

According to a new forecast from the International Data Corporation (IDC) Worldwide Quarterly Cloud IT Infrastructure Tracker, total spending on IT infrastructure products (server, enterprise storage, and Ethernet switches) for deployment in cloud environments will increase by 18.2% in 2017 to reach $44.2 billion.

Of this amount, the majority (61.2%) will be spent by public cloud datacenters, while off-premises private cloud environments will contribute 14.6% of spending. With increasing adoption of private and hybrid cloud strategies within corporate datacenters, spending on IT infrastructure for on-premises private cloud deployments will grow at 16.6%. In comparison, spending on traditional, non-cloud, IT infrastructure will decline by 3.3% in 2017 but will still account for the largest share (57.1%) of end user spending. (Note: All figures above exclude double counting between server and storage.)

In 2017, spending on IT infrastructure for off-premises cloud deployments will experience double-digit growth across all regions in a continued strong movement toward utilization of off-premises IT resources around the world. However, the majority of 2017 end user spending (57.9%) will still be done on on-premises IT infrastructure which combines on-premises private cloud and on-premises traditional IT. In on-premises settings, all regions expect to see sustained movement toward private cloud deployments with the share of traditional, non-cloud, IT shrinking across all regions.

Ethernet switches will be the fastest growing segment of cloud IT infrastructure spending, increasing 23.9% in 2017, while spending on servers and enterprise storage will grow 13.6% and 23.7%, respectively. In all three technology segments, spending on private cloud deployments will grow faster than public cloud while investments in non-cloud infrastructure will decline.

Long term, IDC expects that spending on off-premises cloud IT infrastructure will experience a five-year compound annual growth rate (CAGR) of 14.2%, reaching $48.1 billion in 2020. Public cloud datacenters will account for 80.8% of this amount. Combined with on-premises private cloud, overall spending on cloud IT infrastructure will grow at a 13.9% CAGR and will surpass spending on non-cloud IT infrastructure by 2020. Spending on on-premises private cloud IT infrastructure will grow at a 12.9% CAGR, while spending on non-cloud IT (on-premises and off-premises combined) will decline at a CAGR of 1.9% during the same period.

Meanwhile, according to the International Data Corporation (IDC) WW Quarterly Cloud Infrastructure Tracker, IT infrastructure spending (server, disk storage, and Ethernet switch) for public and private cloud in Europe, the Middle East, and Africa (EMEA) grew 19.5% year on year to reach $1.5 billion in revenue in the third quarter of 2016.

The cloud-related share of total EMEA infrastructure revenue from servers, disk storage, and Ethernet switches grew by 6 percentage points compared with last year to 24.9% in 3Q16. In terms of storage capacity, cloud represented around 44.8% of total EMEA capacity in 3Q16, with 8.6% growth over the same period a year before. Looking at the market in euros, EMEA in 3Q16 reported strong YoY user value growth (19.1%) in public and private cloud across servers, storage, and switches.

"IDC expects this market to reach a value of $10.9 billion by 2020, from the five-year forecast, or 35.4% of the total market expenditure. Fueled by increasing maturity and adoption rates of many new cloud-dependent technologies such as the Internet of Things, cloud continues to represent an area of tremendous growth for the European infrastructure sector," said Kamil Gregor, research analyst, European Infrastructure Group, IDC.

For the scope of this tracker, IDC has tracked the following vendors: Cisco, DellEMC, Fujitsu, Hitachi, HPE, IBM, Lenovo, NetApp, Oracle, the major ODM vendors, and others.

"In Western Europe, we are beginning to see not only specific solutions based on 3rd Platform and Innovation Accelerator technologies, but increasingly often innovative solutions that combine multiple technologies to harness unique value that none of the technologies could unlock alone," said Gregor. "For example, several emerging industry clouds in the region combine data from the Internet of Things edge devices with real-time and Big Data analytics in subverticals such as advanced building automation, manufacturing asset management, and predictive maintenance.

"Regulatory compliance is becoming an increasingly important inhibitor of cloud adoption in the region, mainly due to political volatility in the EU, both in 2016 and potentially continuing throughout 2017, and as we approach the end of a two-year transition period for the EU's General Data Protection Regulation. Enterprises at the bleeding edge of innovation are looking into ways of mitigating these issues, for example by taking blockchain technology from the world of financial transactions and applying it to automation of policy compliance in complex cloud environments."

Central and Eastern Europe, the Middle East, and Africa (CEMA) cloud infrastructure revenue grew by 17.8% year over year to $214.14 million in 3Q16, driven by investment in networking functionalities as Ethernet switches recorded the fastest growth. The Middle East and Africa (MEA) region saw the strongest growth in EMEA, with many organizations investing in private cloud to consolidate and optimize their resources as IT budgets come under pressure due to challenging economic conditions in the region.

"Private cloud deployments have been driving growth in the CEMA region as organizations that are consolidating their IT infrastructure seek greater flexibility, lower capex, and faster implementation over traditional IT infrastructure," said Jiri Helebrand, research manager, Systems and Infrastructure Solutions, IDC CEMA.

Cloud infrastructure spending in the CEMA region is estimated to be 19% of the total addressable server, storage, and networking hardware market, with public cloud accounting for about 47% of this share.

| Deployment Model | 3Q16 ($B) | 3Q16 Segment Share | 3Q15 ($B) | 3Q15 Segment Share | 3Q16 YoY Growth |
|---|---|---|---|---|---|
| Private cloud (on- and off-premise) | $0.9 | 14% | $0.7 | 10% | 24.8% |
| Public cloud | $0.7 | 11% | $0.6 | 9% | 13.9% |
| Traditional IT | $3.9 | 59% | $4.7 | 64% | -14.5% |
| Grand total | $6.7 | 100% | $7.3 | 100% | -8.0% |

Worldwide spending on the Internet of Things (IoT) is forecast to reach $737 billion in 2016 as organizations invest in the hardware, software, services, and connectivity that enable the IoT. According to a new update to the International Data Corporation (IDC) Worldwide Semiannual Internet of Things Spending Guide, global IoT spending will experience a compound annual growth rate (CAGR) of 15.6% over the 2015-2020 forecast period, reaching $1.29 trillion in 2020.
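For anyone unfamiliar with how a compound annual growth rate relates start and end values, the following Python sketch shows the standard formula. It is a minimal illustration, not IDC's methodology; the function names `cagr` and `implied_start` are our own, and the figures plugged in are simply those quoted above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def implied_start(end_value: float, rate: float, years: int) -> float:
    """Starting value implied by an end value and a constant annual rate."""
    return end_value / (1.0 + rate) ** years


if __name__ == "__main__":
    # Figures quoted in the text, in billions of U.S. dollars.
    spend_2016 = 737.0
    spend_2020 = 1290.0

    # Base-year (2015) spending implied by a 15.6% CAGR over 2015-2020.
    base_2015 = implied_start(spend_2020, 0.156, 5)
    print(f"Implied 2015 spending: ${base_2015:.0f} billion")

    # Average annual growth needed to get from the 2016 figure to 2020.
    rate = cagr(spend_2016, spend_2020, 4)
    print(f"Implied 2016-2020 annual growth: {rate:.1%}")
```

On these inputs the implied 2015 base comes out at roughly $625 billion, and the average growth needed from the 2016 figure is roughly 15% a year, which is broadly consistent with the quoted forecast.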

The industries forecast to make the largest IoT investments in 2016 are Manufacturing ($178 billion), Transportation ($78 billion), and Utilities ($69 billion). Consumer IoT purchases, the fourth largest market segment in 2016, will become the third largest segment by 2020. Meanwhile, Cross-Industry IoT investments, which represent use cases common to all industries, such as connected vehicles and smart buildings, will rank among the top segments throughout the five-year forecast. The industries that will see the fastest spending growth are Insurance, Consumer, Healthcare, and Retail.

Given Manufacturing's position as the leading IoT industry, it's no surprise that manufacturing operations is the IoT use case that will see the largest investment ($102.5 billion) in 2016. Other IoT use cases being deployed in Manufacturing include production asset management and maintenance and field service. The second largest use case, Freight Monitoring ($55.9 billion), will drive much of the IoT spending in the Transportation industry. In the Utilities industry, combined investments in Smart Grid for electricity and gas will total $57.8 billion in 2016. Smart Home investments by consumers will more than double over the forecast period, reaching more than $63 billion by 2020. In the Insurance industry, telematics will be the leading use case while remote health monitoring will see the greatest investment in the Healthcare industry. Retail firms are already investing in a variety of use cases, including omni-channel operations and digital signage.

"A fairly close relationship exists between high growth IoT use cases in consumer product and service oriented verticals like retail, insurance, and healthcare," said Marcus Torchia, research manager, IoT, with IDC's Customer Insights and Analysis team. "In some cases, these are green field opportunities with tremendous room to run. In other verticals, like manufacturing and transportation, large market size and more moderate growth rate use cases characterize these verticals. As a whole, the IoT opportunity is a diverse developing market place for vendors and end users alike."

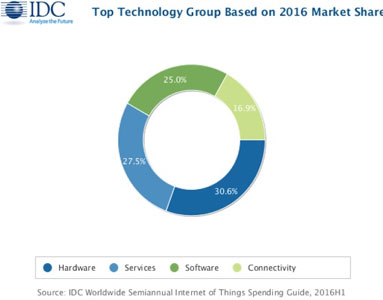

From a technology perspective, hardware will remain the largest spending category throughout the forecast, followed by services, software, and connectivity. And while hardware spending will nearly double over the five-year timeframe, it represents the slowest growing IoT technology group. Software and services spending will both grow faster than hardware and connectivity. Hardware spending will approach $400 billion by 2020. Modules and sensors that connect end points to networks will dominate hardware purchases, while application software will represent more than half of all IoT software investments.

Asia/Pacific (excluding Japan) (APeJ) is the geographic region that will see the greatest IoT spending throughout the forecast, followed by the United States, Western Europe, and Japan. Nearly a third of IoT purchases in APeJ will be made by the manufacturing industry, while Utilities and Transportation will change places as the second and third largest IoT industries by the end of the forecast. In the United States, Manufacturing will be the industry with the largest IoT investments throughout the forecast but with a lower share (roughly 15%) of total spending. In Western Europe, Consumer IoT spending will overtake Transportation and Utilities to become the second largest IoT industry in 2020.

"It is great to see that the Internet of Things will continue to fuel both business transformation and innovation acceleration markets such as robotics, cognitive computing, and virtual reality," said IoT Research Fellow and Senior Vice President Vernon Turner. "The investments by China and the United States in IoT solutions is driving these two countries to account for double-digit annual growth rates and over half of the IoT spending."

By Erik Peeters, Managing Director IoT, KPN.

‘Too many cooks spoil the broth’ is a common phrase that we’ve all used from time to time. And it’s frustrating. Especially when all of those ‘cooks’ are the equivalent of Michelin Starred experts. But as the vision of the Internet of Things becomes a more ubiquitous reality, many needs and interests have to be unified. It will be a challenge to work through connectivity and usage standards for connected devices, but it is worth the effort.

It is obvious that IoT offers many competitive advantages. A great number of companies are restructuring themselves so they can actually use the wealth of data they possess, and are using innovative connectivity options to create efficient processes and, subsequently, profitable revenue streams for the business. Gartner predicts that half of new business processes will have an IoT element by 2020.

The IoT will improve our quality of life in many areas. To make sure that the development of the infrastructure remains on track, it’s important for leaders in the industry to provide a support network. Examples include workshops and brainstorming sessions that help define and execute strategies to improve IoT standards, connectivity or usage, such as those laid on by the IoT Academy.

The goal of this collaboration is to make it possible for users to always access the best networks in whichever country they are in. This is most effectively achieved using unsteered roaming. This allows all devices to connect to the strongest signal, no matter the provider operating it. It makes roaming more effective and goes a long way to ensuring that devices have the bandwidth they need available.

The LoRa Alliance has also been established by companies including KPN to support international use of Long Range Low Frequency technology. The M2M World Alliance, meanwhile, enables these leaders to give companies around the globe the connectivity they need for connecting their M2M devices.

The technology necessary to run the Internet of Things exists; it’s now down to the creative people to maximise its potential. Cooperation between industries, companies, departments and people is essential. Not only when it comes to software and hardware standards, but also when it’s about finding ways to securely manage data and clarify responsibilities.

It’s clear that when cooperating, there are many paths to success; there’s no single best way of doing things. It’s about making compromises. Then the broth will stay unspoiled.

By Kevin Linsell, Director of Strategy & Architecture at Adapt, a Datapipe company.

Over the course of the last year, there have been several well-regarded data centre facilities that have struggled with service issues. From the Global Switch 2 outage in September, to the more recent outage for UK government cloud provider Memset in November, service issues at data centres have been as prevalent as ever.

I believe that this underlines the fact that, unfortunately, the traditional high availability/redundancy approach to infrastructure services is not sufficient to deliver the 24/7 availability that is demanded by the modern business world. However, I believe very little will change with regard to the evolution of data centres in the future. In the short term, data centres are going to continue being architected at a facility line level and will comply with the Uptime Institute’s guidelines for generators, power feeds and cooling etc. However, the key change that we will start to see in the near future is that the infrastructure and applications we integrate into those data centre facilities will begin to change.

The current applications and infrastructures that are usually implemented in data centres need to become much more tolerant of failure, and much better at “self-healing”. By this, I mean that an application will be able to detect when it is not working properly, and then automatically adjust itself to restore normal operation, all without help from a human engineer. Not only would this reduce the amount of time lost to downtime, but it would reduce the amount of time needed to manage the system as well, as resources can be reallocated quickly, while upgrades can be implemented as soon as they are required.
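To make the self-healing idea concrete, here is a minimal Python sketch of a supervision pass that probes each component and triggers a recovery action without human intervention. It is an illustration under stated assumptions rather than a description of any particular product: `ManagedService`, `heal`, the health probe and the restart hook are all hypothetical names, and a real system would add back-off, logging and escalation.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class ManagedService:
    """Hypothetical wrapper around one application component."""
    name: str
    is_healthy: Callable[[], bool]  # health probe supplied by the application
    restart: Callable[[], None]     # recovery action supplied by the application
    max_restarts: int = 3           # assumed limit before escalating to a human


def heal(services: Iterable[ManagedService]) -> List[str]:
    """Probe each service and restart it while it is unhealthy.

    Returns the names of services that are still unhealthy after
    max_restarts attempts, so that they can be escalated.
    """
    still_failing = []
    for service in services:
        attempts = 0
        while not service.is_healthy() and attempts < service.max_restarts:
            service.restart()
            attempts += 1
        if not service.is_healthy():
            still_failing.append(service.name)
    return still_failing


if __name__ == "__main__":
    # Simulated component that recovers after a single restart.
    state = {"up": False}
    web = ManagedService(
        name="web",
        is_healthy=lambda: state["up"],
        restart=lambda: state.update(up=True),
    )
    print("Needs escalation:", heal([web]))
```

The design choice worth noting is that the supervisor only calls two generic hooks, which keeps the application loosely coupled to whatever management tooling runs the loop.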

This is important, because hyperscale cloud vendors have built their platforms on the premise that facilities and infrastructure might fail. In some cases, though, problems are caused by adding all that resilience to an application, because too much complexity is added along with it. This makes the application more difficult to manage when implementing changes, such as patching or upgrades to the software.

Of course, not all applications are designed to be self-healing anyway, and it will probably be many years before applications are refactored or replaced in a system. This means that organisations need to understand the risk, mitigate it, and then prioritise the refactoring of those applications which are of the greatest criticality.

Despite the concept of self-healing systems being attractive to IT directors, there are several difficulties that users may encounter when trying to implement self-healing applications. For example, one issue is that the application would need to be closely linked to the system management software, but if it is linked too closely, then the application would need to support proprietary interfaces for the system management, rather than having open standards. This would just add complexity to the system and would incur additional expense, neither of which is desirable for the organisation using the application.

There are managed service providers that have mastered handling these types of applications though, and over the last five years, I have seen an increasing market shift to using such managed service providers. With data centres unlikely to adopt self-healing applications in the near future, managed service providers will become more crucial to organisations that want to refactor their critical applications in the next few years. This will continue into 2017 and beyond, in parallel with the application shift to self-healing.

In summary, until all applications are developed and created to be self-healing, our current data centres will need to continue to provide an environment designed around redundancy and resiliency. This is because the levels of duplication and complexity related to self-healing are expensive to procure, maintain and operate. The only solution in the short term is to enlist a managed service provider to help devise a solution to minimise the possibilities of downtime and outages. Self-healing applications are most certainly the future of data centres, and the focus will be on building application-aware data centres, rather than on those that are focussed on infrastructure and facilities.

The Gotthard Base Tunnel is pioneering cutting-edge IoT technology.

To mark the start of operations on the 11th December, Laurent Moureu, General Manager at ALE Switzerland GmbH, points out that the Gotthard Base Tunnel is not just a major engineering feat. It is also one of the first major global engineering projects to incorporate advanced IoT technology to transform the delivery of essential services. It uses a web of IoT devices to manage passenger and vehicle safety 24x7 in one of the world's most challenging operational environments.

The Gotthard Base Tunnel will transport some 9,000 passengers every day through the Alps at 250km per hour, and up to 260 freight trains – which are much longer and heavier than in the past.

When you include areas such as access tunnels and cross passages, we are actually talking about an area of approximately 152km that needs to be connected and secured. This area must be served by immensely reliable IP network connectivity throughout. Even minimal network disruption through inefficient data transfer or bottlenecking will have the potential to cause delays and even impact worker and passenger safety.

A lot of the technology is automated. This means that an extremely stable and reliable data network is necessary to transmit essential operational data to and from the tunnel. An IoT environment relies on real-time communication between IP-enabled devices – the ‘things’ – to collect up-to-the-second operational data and provide tunnel operators with the information they need to ensure all systems run smoothly and safely within the tunnel.

The doors are just one example. If any of the doors leading to service areas or access tunnels are left open, the pressure caused by a high-speed passenger train is enough to cause considerable physical damage to systems within the tunnel. The IoT-connected tunnel monitors all the doors 24/7, automatically sending alerts to the control room if the doors are not secured when they need to be.
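The door-monitoring logic described above can be pictured with a few lines of Python. This is purely a hedged sketch: the article does not describe the tunnel's actual software, so `DoorStatus`, `door_alerts`, the door identifiers and the `train_approaching` flag (standing in for "when they need to be" secured) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class DoorStatus:
    """One reading from a hypothetical door sensor."""
    door_id: str   # hypothetical identifier for a service or access door
    secured: bool  # True when the sensor reports the door as secured


def door_alerts(statuses: Iterable[DoorStatus], train_approaching: bool) -> List[str]:
    """Return control-room alert messages for doors that are not secured.

    Assumption: doors only need to be secured while a train is approaching.
    """
    if not train_approaching:
        return []
    return [
        f"ALERT: door {status.door_id} is not secured"
        for status in statuses
        if not status.secured
    ]


if __name__ == "__main__":
    readings = [
        DoorStatus("cross-passage-017", secured=True),
        DoorStatus("access-tunnel-03", secured=False),
    ]
    for message in door_alerts(readings, train_approaching=True):
        print(message)
```

In practice such a check would run continuously against live sensor data, which is why the reliability of the underlying network matters so much.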

But when you also consider the sensors, surveillance cameras, ventilation and drainage infrastructure, communications, control and monitoring systems across the entire site, which are all sending or receiving real-time data, you can begin to see why reliable connectivity is so important.

It is up to the data network - or rather data networks, as there are separate networks in each of the two parallel tunnels - to bring all these IP-enabled end points of IoT together and transmit the data to the operators in the tunnel’s control centres. It must be resilient and able to operate at all hours and in all temperatures and environments. This means having data switches which go beyond the ordinary - designed to operate with uninterrupted traffic and zero communication errors in some of the harshest working environments.

The size of the tunnel complex and remoteness of some of the service areas means that many of the networking components must work in the middle of the tunnel for extended periods – far outside the safety of climate controlled data centres. But it’s not just the location. Parts of the tunnel can reach temperatures of up to 40°C and hit humidity levels of 70%, a far cry from the usual home of a data switch.

Then there is the dust. Even in normal enterprise environments dust and airborne particles can cause problems eventually, but inside the railway tunnel they can cause serious problems for network components, as metal dust is kicked out from the trains’ brakes. Add to this the electromagnetic interference and vibrations caused by everyday operations and you have an environment that would severely limit the lifespan of standard switching equipment and cause mechanical failure.

The Gotthard Tunnel required a specialised hardened network with rugged components to ensure a reliable and secure network.

The Gotthard Tunnel showcases a hardened network. What do we mean by this? Firstly, it uses switches, access points and routers that offer embedded security, dynamic network performance tuning for real-time application delivery and reliable broadband IP connectivity as standard.

Then all this needs to be built using network hardware with industrial grade form-factors.

The job of designing and implementing the data network fell to the engineers at Alpiq InTec, who used over 450 ruggedized Alcatel-Lucent OmniSwitch® 6855 hardened LAN switches to create the backbone of the data network running through the tunnel. Low levels of preventative and corrective maintenance are an absolute must when installing a network up to 2.3km under a mountain. These switches use convection cooling which relies on a heatsink rather than a fan to keep cool, minimising the danger of metallic particles entering the unit and damaging the internal electrical components.

This specialised hardened network enables the Gotthard Tunnel to take IoT where standard networking cannot – guaranteeing the level of service required for the longest, safest and best connected tunnels in the world - transporting those 9,000 passengers every day in safety and comfort.

The first few start-ups in the hyperconverged infrastructure space enjoyed a captive market for a short period of time. But over the last 12 to 18 months, much larger legacy IT companies have entered the playing field.

By John Lucey.

This is hardly surprising when you consider the fact that hyperconverged infrastructure is seeing tremendous growth and adoption and shows no signs of slowing down. Indeed, IDC has noted a 154% annual growth in spending within this space while demand for traditional enterprise storage is in decline.

However, legacy providers entering the space have to cope with two tough dynamics:

1. How to quickly catch up with the pure hyperconverged players that have built their solutions from the ground up – Legacy companies need to decide whether they want to spend the significant R&D investment required to build an adequate hyperconverged solution and risk losing time-to-market, or if they want to cobble together existing solutions that will come to market quicker, but may leave a lot to be desired in terms of functionality.

2. How to develop a solution that may eliminate or largely reduce traditional revenue streams

Despite these challenges, hyperconverged infrastructure still presents a huge opportunity for legacy vendors. A recent Gartner report noted the hyperconverged market is becoming ‘mainstream’ and is expected to reach a valuation of almost $5 billion by 2019. Moreover, the report went on to predict that other segments will suffer as hyperconvergence continues to be adopted.

So why did it take legacy providers so long to enter the hyperconverged market?

Legacy IT vendors spent a long time ignoring hyperconverged technology in favour of reference architectures and converged infrastructures that leveraged existing technologies in their portfolios. Hyperconvergence consolidates and naturally replaces traditional data center components, such as servers, storage, and storage networking – the same components legacy players currently sell – while reference architectures and converged infrastructure piece together disparate parts. Some hyperconverged players go beyond this level of convergence to include additional data services, such as data deduplication, compression, backup, cloning, and replication, which all remove the need for standalone solutions for WAN optimisation, data protection, and data efficiency. Overall, legacy providers that pursue hyperconverged infrastructure may risk cannibalising their own profitable product lines.

Many legacy vendors also argued that hyperconverged infrastructure only served “niche” and “greenfield” use cases, such as VDI. But that is not the case. Enterprises are purchasing hyperconverged infrastructure for tier-1 applications as well as entire data center modernization projects.

Regardless, since these large incumbent vendors are still primarily selling legacy server and storage infrastructure, the transition to hyperconverged infrastructure may be a rocky one for them. Given legacy infrastructure providers’ well-documented track records, it is likely they will take the path of least resistance and choose to partner with or acquire an existing company rather than investing in innovation internally.

It’s clear that the battle between hyperconverged innovators and legacy IT providers will heat up in the near term as more IT organisations opt to modernise their data centers. This can only result in an increasingly confused market as more solutions claim to be hyperconverged. The fact is, legacy infrastructure companies that offer “just good enough” hyperconverged solutions won’t deliver the efficiency, scalability, elasticity, global management, and resiliency required if on-premises IT infrastructure is to compete with cloud alternatives.

This is a lot of innovation and technology for the old guard to catch up with.

In addition to improved IT agility, the results of the data-center-in-a-box approach are a significantly reduced data center footprint, an increasingly operationally efficient IT team, and significant total cost of ownership savings.

Companies of all sizes are turning to hyperconverged infrastructure for their entire IT infrastructure with the goal of driving out millions in data center costs while making their data centers more efficient. With this type of momentum and analyst outlook, it’s no surprise that the legacy IT providers have finally jumped on the bandwagon. However, legacy IT providers need to decide if they can responsibly enter this market and still do right by the customer. And customers need to carefully choose the infrastructure that’s right for them and that will fit all their needs.

Organisations are looking to digitally transform – at pace, writes Duncan Hughes, Systems Engineering Director, EMEA, A10 Networks.

The drive for agility is fuelling key transformations, including the move to cloud, in IT processes and organisations in the enterprise. Application development teams demand it, which is putting immense pressure on infrastructure teams to deliver.

At the same time, the shift to DevOps processes is empowering teams to deploy applications much more quickly, often several times a day; and they’re looking to leverage new architectures based on microservices and containers to deploy and deliver those apps even faster. As IT and enterprises embrace these new app-centric operations, they strive to find ways to leverage their traditional data centres and existing investments while taking advantage of the cloud to achieve these new levels of agility and speed.

This makes it even more of an imperative to optimise application delivery and security, regardless of where applications run, whether that’s in public, private or hybrid clouds. And these next-generation application architectures must elegantly integrate not only with microservice and container based architectures, but with increasingly popular DevOps tools and processes, like Ansible, Chef, Jenkins and Puppet.

Traditionally, application architectures leveraged monolithic development processes, were deployed in a physical data centre, relied on IT-led operations and were built around hardware appliances. This new method embraces development and deployment of cloud-native applications and multi-cloud environments while taking advantage of agile and self-service practices built on a consumption model.

Look no further than household names like Netflix, Airbnb and Uber, and you’ll see companies that have taken application agility to the next level, and are now reaping its benefits. These new methods are a major departure from the current state of application delivery, which tends to rely solely on either hardware, open source solutions or load balancing offered by cloud providers, each of which, when used individually, often falls short of delivering the level of agility modern application teams desire.

So, what are organisations supposed to do?

Business-led application teams crave agility, and IT teams want to deliver that agility through infrastructure that’s secure and easy to manage and control, while also laying the foundations to eventually move more of their applications to the cloud. Yet traditional models fall short in their ability to deliver the auto-scale, visibility and analytics, centralised management, self-service, security and multi-cloud capabilities that modern application teams are now demanding.

This is where a cloud-native application delivery service that is designed to boost security and delivery of applications across both traditional on-premises and cloud environments can come in. In fact, here at A10 Networks we have just launched our new Lightning Application Delivery Service (ADS), which does exactly this and provides the agility of the cloud while protecting existing investments in on-premises hardware and data centre architecture.

Lightning ADS is a cloud-native, software-defined solution that can work in multi-cloud environments (public, private and hybrid) and can deliver integrated load-balancing, performance optimisation, application security and per-application analysis to increase operational agility and application performance. All of this combines to improve responsiveness and simplicity while lowering costs.

Now our customers can enjoy the benefits of cloud-native secure application delivery services. For example, a major US-based financial services company sought to de-risk migration from an on-premises data centre to a public cloud IaaS infrastructure. With our cloud native solution, this financial organisation was able to implement centralised per-app policies and visibility across clouds while reducing its CapEx and OpEx costs compared to legacy data centre ADCs. The IT team was able to provide the app team increased agility through the per-tenant policy controls.

A purpose-built application solution for the cloud, like Lightning ADS, can support traditional applications and modern architectures like microservices and containers. And it accelerates and secures applications to support the advanced delivery, security, orchestration, management and control that modern application teams crave. So, now as IT teams look to embrace new app-centric operations, this provides a way of leveraging their traditional infrastructure while taking advantage of the cloud to achieve these new levels of agility and speed.

While the short answer is no, it’s worth considering what you can learn from others who have already found better ways to do business through transforming digitally.

By Matthew Smith, CTO at Software AG.

Attendees of Software AG’s recent Innovation Day event in London were given a unique opportunity to take an inside look at some of the biggest innovators in their respective industries, exploring what they see as innovation and learning how all organizations can achieve it.

Innovation is happening all around us as businesses that we interact with every day, as well as those working away in the background, pursue a digital future. In many ways, however, we are already living in the digital future.

Eric Duffaut, Chief Customer Officer at Software AG, kicked off the event with an insightful look at how digital transformation is allowing companies from all industries to increase customer intimacy, improve operational efficiency and gain competitive advantage.

Digitalization, Duffaut explained to attendees, is an incredibly disruptive force that is lowering the barrier to entry, increasing competition and shifting the balance of power towards the consumer.

“Nobody better than you can invent your digital future,” said Duffaut.

A highlight of this year’s Innovation Day was “Modernising the British Army,” a keynote presented by Major General R J Semple CBE, CIO and director of information for the British Army. Digital transformation now plays a role in nearly every aspect of our military, from shaping how our forces are prepared and deployed to improving the management capability needed for effective decision making across the whole organisation.

Semple explained how acquiring real-time knowledge in order to make actionable decisions is at the heart of the British Army’s journey into the 21st Century, while sharing a number of key lessons, experiences and advice applicable to any industry.

The Internet of Things (IoT) is a vital component of digital transformation and a major talking point for innovators across Europe. Giles Nelson, SVP of product strategy and marketing at Software AG, and Dr Christos Emmanouilidis, senior lecturer in IoT and visual analytics at Cranfield University, joined forces to explain how organizations can get the most value from their existing enterprise systems and data working together with the new data streams provided by IoT.

Matt Harris, retail client partner at Cognizant, outlined how retailers can keep the customer at the core of the business amidst the explosion of data currently taking place in the retail sector. His keynote, “Retail and the Internet,” took an in-depth look at the flexibility of the customer journey and how retailers can leverage the power of their data to provide the ideal experience.

Having gained over 20 years of experience in creating and developing successful businesses in Africa and Europe, Pavlo Phitidis, CEO of Aurik Business Accelerator, provided attendees with a wealth of insights into the entrepreneurial side of digitalization. In his keynote, he discussed how helping businesses to digitalize is a full time job. But first they have to figure out what they want to achieve.

“The only reason a business exists is to solve a problem,” said Phitidis.

With such a diverse range of presenters, Innovation Day provided every member of the audience with a wealth of information that will provide some insight and guidance into digitalization and what it means to be truly innovative.

Moving services to the cloud is an interesting proposition.

By Mike Puglia, Chief Product Officer, Kaseya.

With server virtualisation, doing so is made simple, as current infrastructure can be tweaked to act more similarly to the cloud. But as demand grows, one must scale up by adding more resources, even if they are not often needed. However, particular services will benefit from being located in a public cloud, though they must be connected to local applications. This brings us to the hybrid cloud, which combines local and disparate resources.

The hybrid cloud market is expected to grow almost threefold to $91.74 billion by 2021, according to the Hybrid Cloud Market report released by Markets and Markets. Although this architecture can be complex, any challenges can be overcome.

A hybrid cloud generally starts with the creation of a private cloud. Measuring performance is relatively easy in this environment. You know the speed of your servers, disks and LAN connections. You also understand the maximum capacity of your resources, and can see which IT services are consuming these. On top of that, you can have performance monitoring and management tools that look for problems.

The integration of the public cloud adds more concerns – you have the cloud service itself plus your WAN connections that impact actual performance. That’s why apps distributed via a hybrid cloud can be slower than when run solely on a private cloud. The same is true for private applications that ‘burst’ to the public in times of stress – here the public portion can be slower than the in-house operation.

Due to these intricacies, there is a need for 360° visibility into the performance across the whole cloud infrastructure. With hybrid environments, IT has to deal with many variable factors, including your network, internal servers, virtual machines and applications that need to be monitored to keep track of cloud services. Performance issues can come from several sources, and interdependencies can result in even larger headaches. It could be a router issue, a VM using too many resources, or another infrastructure component causing the degradation. You don’t get the requisite visibility using traditional siloed monitoring tools, making it difficult to pinpoint the root cause of performance issues.

However, with holistic visibility, coupled with the right remediation tools, you can spot problems and rectify them quickly. Visibility is also instructive – by understanding overall performance you know whether you need to upgrade the underlying infrastructure.

You need to keep a close eye on security in the hybrid world, of course. While your service provider should help secure your cloud data, you need strong authentication and you must ensure your data is encrypted when in transit.

Finding the cause of problems, though, is often the most complex issue. Part of this requires your cloud provider to monitor from their multi-tenant system. The part you need to control is the network that supports private cloud connections to the service provider. Here monitoring the connections for performance, doing root-cause analysis and fixing problems is critical.

When it comes to true performance monitoring, however, you need tools that support virtual technologies such as VMware, Microsoft Hyper-V, Xen and KVM. To have a holistic view, the same monitoring tools must support key cloud infrastructure offerings such as Amazon AWS and Microsoft Azure, as well as CloudStack and OpenStack-based services.

Deep, unified monitoring lets IT professionals see where performance problems lie and delivers the root-cause analysis needed to support remediation. The same understanding of performance allows IT to predict future needs and plan upgrades, ensuring that business-critical hybrid networks always work at peak efficiency.

In today’s complex hybrid cloud environments, businesses require systems that help reduce IT services downtime. The days of monitoring servers and routers in an isolated silo are gone. Businesses today require tools that offer real-time tracking and correlation of the business impact these devices have on overall IT services.

They also need systems that maintain mappings of relationships between hosts, guests, applications and services, to support service-centric monitoring of virtualised environments.
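As a rough illustration of what such a relationship mapping makes possible, the Python sketch below walks a small dependency map to find every application and service affected when one host has a problem. The component names and the `DEPENDS_ON` structure are hypothetical and are not taken from any particular monitoring product.

```python
# Hypothetical dependency map: each item lists what it runs on.
DEPENDS_ON = {
    "payroll-service": ["payroll-app"],
    "payroll-app": ["vm-12"],
    "vm-12": ["host-a"],
    "email-service": ["mail-app"],
    "mail-app": ["vm-20"],
    "vm-20": ["host-b"],
}


def affected_by(component, depends_on):
    """Return every item that directly or indirectly depends on component."""
    affected = set()
    frontier = [component]
    while frontier:
        current = frontier.pop()
        for item, deps in depends_on.items():
            if current in deps and item not in affected:
                affected.add(item)
                frontier.append(item)
    return affected


print(sorted(affected_by("host-a", DEPENDS_ON)))
# ['payroll-app', 'payroll-service', 'vm-12']
```

With a map like this, a fault on a single host can be reported in terms of the business services it touches rather than as an isolated device alert.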

With hybrid clouds, you have two complex environments that both need access control and password management. A combination of Multi-Factor Authentication, secure remote access and Single Sign-On (SSO) that works both on-premises and in the cloud can solve this problem.

At the same time, the chosen technology needs to enforce strong password policies, not only keeping your hybrid environment secure, but helping you stay compliant at all times.

The rapid adoption of cloud services and the recent ramp up in hybrid cloud implementations, in particular, has led to a growing need for performance measurement and monitoring systems that can work across complex IT environments, incorporating different flavours of cloud. The good news is the latest technology has the capability to navigate this complexity and enable businesses of all types to face their future in the cloud with complete confidence.

Open source software has been at the core of the DevOps movement from the beginning. Mark Hinkle, Vice President at the Linux Foundation, explains why it will play a crucial role in the professionalisation of the field as well.

Open source was there from the start. The core idea behind DevOps – to bring together software developers and operations personnel, and make integrated, continuous deployment a reality – was only possible with new tools, and those tools were created by the very people who needed them. It was a very organic process: the moment professionals noticed something was missing, the community started to build it. Open source was, and is, the movement’s natural choice.

It was this – the clever use of open source development in order to stay flexible and responsive – that allowed the DevOps community to break down the barriers between developers and operations personnel, and enable IT professionals to make better judgements about how to deploy and integrate software continuously.

As a result, what started as a small cultural movement quickly grew into a central element of the modern IT landscape – and a rapidly growing career field.

DevOps professionals do not specialise in a single technology as so many IT professionals have in the past; they are generalists who understand how to incorporate new technologies as they arise. Doing so, they are not only helping organisations to create more agile projects; they also assure that there’s a much tighter integration of those projects.

Organisations that implement DevOps best practices have been demonstrated to be more flexible and effective in designing and implementing IT practices and tools, and this often results in higher revenue generation – at lower cost.

The Puppet Labs State of DevOps Report showed how high-performing IT organisations experience 60 times fewer failures and recover from failure 168 times faster than their lower-performing peers. They also deploy 30 times more frequently with 200 times shorter lead times. And one of the top measures of how an organisation can move towards higher performance is organisational investment in DevOps.

As a career field, DevOps provides strong job security, highly competitive compensation, and enormous opportunities for growth. And yet: there’s still a lack of experience and talent. One reason for this is a degree of confusion around the definition: do we all mean the same thing when we talk about DevOps? Another is a professional structure that is still only developing.

This is where support materials such as the “DevOps Handbook” and easily accessible online courses can offer assistance. The idea behind those initiatives is to further educate the DevOps talent pool, support and encourage projects, and professionalise DevOps to the same standards as other, more established fields.

Open source will play a key role in this effort: there’s widespread agreement in the community that a collaborative approach is the best way to create the kind of framework that will enable individuals to better understand the concepts involved in DevOps.

Organisations such as the Linux Foundation are helping to develop new technology for DevOps professionals – through open source projects.

Training opportunities – such as The Linux Foundation’s edX-based “Introduction to DevOps: Transforming and Improving Operations” – will help to explain the need for DevOps, its values, and its guiding philosophies. Professionals will be able to better understand DevOps foundations, principles and practices. Monitoring and business metrics will establish themselves more widely. And a new safety culture might take hold.

In the wake of this, networking forums of all kinds are developing into places and opportunities where members of the DevOps community can expand their skillsets and learn from one another. Holding on to open source principles, the DevOps movement looks set to take a big step towards creating the kind of structure that will embed its enormous achievements in a way that encourages even further advancement.

The IT foundation that enables the coveted driverless and connected car technologies that will radically change the way we travel into the future.

By Jorge Balcells, Director of Technical Services at Verne Global.

When I started driving my first car, vehicles were generating virtually no data. But with many vehicles now considered computers connected to the internet, data is flooding in. In fact, it is estimated that one connected vehicle uploads 25 gigabytes of data to the cloud per hour. Combine this with the prediction that a quarter of a billion connected cars will be on the road by 2020, and that’s a massive 6.25 exabytes (or 6.25 billion gigabytes) every 60 minutes.
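The arithmetic behind that figure can be checked in a few lines of Python. This is only a back-of-the-envelope sketch: it assumes decimal units (one exabyte equals one billion gigabytes) and that every connected car uploads at the estimated rate at the same time.

```python
GB_PER_CAR_PER_HOUR = 25          # estimate quoted in the article
CONNECTED_CARS = 250_000_000      # a quarter of a billion cars
GB_PER_EXABYTE = 1_000_000_000    # decimal units: 1 EB = 10^9 GB

total_gb_per_hour = GB_PER_CAR_PER_HOUR * CONNECTED_CARS
total_eb_per_hour = total_gb_per_hour / GB_PER_EXABYTE

print(f"{total_gb_per_hour:,} GB per hour = {total_eb_per_hour} EB per hour")
# 6,250,000,000 GB per hour = 6.25 EB per hour
```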

With such vast amounts of data expected to continue growing in coming years, it’s clear that the automotive industry is leading the way in the progression of the Internet of Things (IoT). Yet, with this comes a growing number of challenges and pressures – not least a need to process, analyse and store all of this new information.

Data centres are therefore fast becoming the solution to the automotive sector’s rapid data growth, but how is this driving the connected car revolution forward?

Big Data is often described as a driver for innovation and remains at the forefront of CIOs’ minds.

The automotive industry’s application of Big Data is certainly testament to that, leading the way when it comes to machine to machine (M2M) and machine to human (M2H) communication – how we connect ourselves to cars, cars to other cars, and cars to manufacturers.

Data analytics and processing in this space is a growing phenomenon but it is certainly not a new one. Whether it’s calculating drag coefficients or running simulations for every driving eventuality, automotive manufacturers are increasingly dependent on fast, reliable and complex computing to deal with their data needs.

This has been present in the motorsport industry for some time. Take Formula One, for example. F1 cars generate terabytes of data during races – information that pit engineers must quickly interrogate in order to make vital adjustments in between laps, ultimately affecting whether the driver will race to victory (or to a painful loss). As a result, the F1 industry is fast becoming the expert that suppliers and manufacturers are turning to for advice.

But it’s not just manufacturers turning to F1 data experts for advice. As more and more automotive giants recognise the need for complex computing to drive their businesses (and vehicles) forward, there has been exponential growth in the number of customers from the automotive industry turning to external data centre providers to meet their Big Data and High Performance Computing (HPC) demands.

The wider data centre industry is consequently looking to experts in the motoring space to gauge the requirements for handling automotive data, and ways to apply it to the compute solutions it presents the wider consumer vehicle market.

The complex nature and overall size of automotive data sets has sparked another trend – the need for scalable, secure HPC data centre solutions. For many auto-companies, these kinds of data hubs are not necessarily those on their doorstep, and IT decision makers are looking to external data centre providers to support their HPC operations, by supplementing compute capacity and improving operational costs.

These colocation data centre providers must present a solution: campuses that are ‘HPC-ready’, with the expertise to support the management of information loads as quickly, efficiently and successfully as the F1 experts.

More often than not, these are remote facilities with the power infrastructure, resiliency levels and computing resources needed to process HPC loads cost-effectively. Moving automotive HPC workloads to campuses with inherent HPC-ready capability gives automotive manufacturers the medium and high power computing density required at significantly lower energy costs. Ultimately that enables the ability to gain more insight from more data, and moves us closer to the benefits of autonomous driving.

The Nordics – home to campuses like Verne Global – are ideally designed to facilitate the needs of HPC workloads for automotive progress, thanks to 100% renewable power resources. Iceland in particular has abundant, low-cost power, and is powered by geothermal and hydropower only. There are also no fossil fuels in Iceland, which translates to a cost effective supply of energy to customers who house data in this location.

A number of automotive leaders have recognised these benefits, and are already reaping the rewards. One such manufacturer is Volkswagen, which recently announced the migration of one megawatt of compute-intensive data applications to Verne’s Icelandic campus in order to support on-going vehicle and automotive tech developments.

Likewise, BMW is a well-established forward-thinker in this area, having run portions of its HPC operations – those responsible for the iconic i-series (i3/i8) vehicles, and for conducting simulations and computer-aided design (CAD) – from the same campus since 2012.

These automotive leaders consider Iceland an optimal location for their HPC clusters – not only for the energy and cost efficiencies it delivers, but the opportunity it allows them to shift their focus from time-intensive management of the technical compute requirements of their day-to-day work to what’s really important: continued automotive innovation.

That said, wherever automotive data is stored, analysed and understood one thing is for sure: HPC – and the data centre industry as a whole – lies at the heart of the intelligent automotive revolution. It will advance our understanding of auto-tech, smarten our driving behaviours and ultimately carve a path to the coveted driverless and connected car technologies that will radically change the way we travel into the future.

The SIEM market is stagnating, writes Michael Fimin, CEO and co-founder of Netwrix.

Just one year ago the picture looked quite healthy. Gartner’s 2015 Magic Quadrant for Security Information and Event Management (SIEM) showed 11% growth during 2014 and predicted 12.4% growth for 2015.

But the corresponding survey for this year paints a different story with growth down to just 3.5%, from $1.67 billion in 2014 to $1.73 billion in 2015.
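As a quick sanity check, the growth rate can be recomputed from the two market-size figures quoted above. The short Python sketch below uses only those rounded numbers, which give roughly 3.6%, slightly above the 3.5% quoted. The gap presumably comes from the quoted rate being based on unrounded market figures, though that is an assumption.

```python
def growth_percent(previous, current):
    """Year-on-year growth as a percentage of the previous value."""
    return (current - previous) / previous * 100


# Market size in billions of US dollars, as quoted in the article.
growth = growth_percent(1.67, 1.73)
print(f"{growth:.1f}%")  # 3.6%
```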

So what’s happened?

Despite its early promise SIEM has not proved very effective at preventing real cyber threats. Enterprises with complex IT infrastructures and hundreds of users generate logs in huge volumes.

While SIEM does aggregate these logs, it is unable to provide administrators with readily actionable data – simple, easy-to-interpret reports alongside contextual information for example – to help organizations to identify steps in the kill chain.

From our own research - the 2016 SIEM Efficiency Survey Report - we know that 81% of SIEM users complain about too much noise in reports while others state that reports are either incomplete (68%) or hard to understand (63%).

What often happens is this: the SIEM issues numerous alerts, creating the impression that data may be in jeopardy. After many hours of investigation it turns out all the alerts were false alarms. Meanwhile some real threats may have slipped under the radar and before you know it some law enforcement or other agency is on the phone to tell you that you have been breached.

The sheer amount of effort it takes to make sense out of SIEM data and alerts is enough to exhaust any IT professional. Respondents in the Netwrix survey repeatedly encountered issues such as trouble finding necessary audit data (65%) or having to rewrite reports for non-technical colleagues (57%).

After all the false positive alerts and time spent trawling through logs by the bucket-load it should come as no surprise that only about 10% of all breaches are discovered by internal administrators. Most are reported by customers, law enforcement or auditors.

Moreover, SIEM solutions do not scale easily, need constant fine-tuning and tie up expensive management time.

In short, SIEM is failing to meet today’s cyber security challenges. The results simply do not justify the costs and that’s why investment in SIEM systems is falling.

Verizon’s 2016 Data Breach Investigations Report talks about most breaches taking several days to complete.

It seems to me that the incorporation of smart security analytics, with its ability to help IT staff focus on the real threats, could make all the difference here.

When considering what cyber security technology to invest in, it’s critical to identify something that can deliver real value now, and that can continue to deliver value even as the IT environment you operate in and the cyber threat landscape evolve.

Look for a cyber security solution with the following functionality:

You can get all these features with User and Entity Behaviour Analytics (UEBA).

UEBA may have emerged only recently, but already it has shown much clearer evidence of detecting threats and providing administrators with refined insight than SIEM, according to Gartner customers.

Unlike SIEM, UEBA solutions analyze entity, user and privileged user behaviour to proactively spot anomalous activity.

This enables UEBA to address one of the most challenging security issues: identifying malware, compromised accounts, malicious insiders and other threats masquerading as authorized activity.

Furthermore, and crucially, advanced UEBA can defend against rapidly evolving cyber threats. Instead of relying on predefined rules like its predecessor, UEBA has the ability to learn, which gives it the flexibility to respond to the ever-changing threat landscape.
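To make the contrast between predefined rules and learned behaviour concrete, here is a minimal sketch in Python. It is an illustration only, not how any particular UEBA product works: the function names, the fixed threshold of 50, the z-score cutoff of 3.0 and the daily file-access counts are all hypothetical assumptions chosen for the example.

```python
from statistics import mean, stdev


def static_rule_alert(count_today: int, threshold: int = 50) -> bool:
    """Predefined rule: one fixed threshold applied to every account (hypothetical value)."""
    return count_today > threshold


def baseline_alert(history: list[int], count_today: int, z_cutoff: float = 3.0) -> bool:
    """Learned baseline: flag activity that deviates strongly from this account's own history."""
    if len(history) < 2:
        return False  # not enough history to estimate a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return count_today != mu  # perfectly constant history: any change is unusual
    return abs(count_today - mu) / sigma > z_cutoff


# Hypothetical daily file-access counts for two accounts.
clerk_history = [4, 5, 6, 5, 4, 6, 5]
batch_history = [900, 950, 1000, 980, 940, 960, 970]

# A clerk who suddenly opens 40 files stays under the fixed threshold,
# but is far outside that clerk's own normal range.
clerk_rule = static_rule_alert(40)
clerk_baseline = baseline_alert(clerk_history, 40)

# A batch service account trips the fixed threshold every day,
# yet 955 accesses is entirely normal for it.
batch_rule = static_rule_alert(955)
batch_baseline = baseline_alert(batch_history, 955)

print(clerk_rule, clerk_baseline, batch_rule, batch_baseline)
```

In this toy data the fixed rule misses the clerk's unusual day and raises a false alarm on the batch account, while the per-account baseline does the opposite. Real systems would use far richer behavioural features than a single daily count.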

Together, the ability to identify threats disguised as authorized activity and the ability to learn allow UEBA to address every stage of the kill chain, enabling early detection of security incidents.

The best news is, if you already have a SIEM in place, there’s no need to rip and replace.

You simply integrate UEBA technology with your SIEM to get the best of both worlds: full system coverage and actionable intelligence.

To conclude, although some SIEM vendors are looking to acquire UEBA solutions and integrate the functionality into their products, it’s not clear how well they will support or extend the UEBA technology to combat future threats.

A better strategy is to supplement your SIEM with a best-in-class UEBA solution from a vendor focused exclusively on providing deep visibility into user behaviour and the governance needed to reduce your attack surface.

With that integration, you can protect your organization against today’s and tomorrow’s cyber threats while maximizing the value of your SIEM investment.

About Michael Fimin

Michael Fimin is an expert in information security and the CEO and co-founder of Netwrix, the first company to introduce a visibility and governance platform that supports hybrid cloud IT environments.

Digital transformation requires more than just a new hardware stack or software programme; it needs a shift within the entire business culture.

By Justin Anderson, GM EMEA at Appirio.

A strong culture permeates all aspects of business, irrespective of whether it is an SME or a large enterprise. Get the company culture right, with happy employees, and what follows will be an atmosphere of efficiency, collaboration and performance. With a consistently good Worker Experience and high employee retention, a successful digital transformation journey is easy. However, get it wrong and it’s a path to underperformance, high turnover and weak growth.

There are many ways to ensure successful digital transformation. Senior management can influence company culture through the top-down ethos set out by the CEO and through the benefits and perks rolled out by HR.

From an operational perspective, corporate IT must deliver and enable staff to do their job well, but now more than ever the technology choices a business makes will impact the way the company is perceived. They can define the underlying culture which runs throughout the organisation under every line of business. Below are some of the cultural challenges businesses face when transitioning to a digital business model, and ways to address these obstacles:

C-suite adoption: C-level executives must be as much a part of the technology adoption process as possible, and be seen as the leaders in executing this change. Their investment in fostering innovation and pursuing change that benefits the company will, in turn, foster positive sentiment amongst their employees when it comes to changing the way they work.

Plug the generation gap: Influenced by some of the biggest changes in technology, including cloud, mobile and social media, the new breed of digitally proficient and mobile-dependent employee craves information, be it access to it or the ability to share it. This attitude poses a problem for more seasoned executives who don’t share the same enthusiasm around collaboration or mobile working.

Organisations need to think about how they attract and maintain the best employees and talent. Taking advantage of innovative ideas such as crowdsourcing should be considered as an option for organisations looking to find the right talent for their projects. Mobile ways of working are fast becoming a way for younger talent and other workers to hone their skills. Equally they’re helping organisations manage talent from all generations, as it allows them to move quickly and maintain their competitive edge.

Engaging employees: It’s all well and good adopting new technologies, but a lack of understanding of how the technology will be used to make a difference can sink the proverbial digital transformation ship. Technology may solve a problem, but it shouldn’t be viewed as a solution in isolation. How does it align with the business’ digital goals?

Big data and analytics are part of most digital business models now. However, the strategy has to go beyond collection. When a business embraces a data culture it empowers its skilled workers to draw conclusions from the data in order to make fact-based decisions and collect important insights.

Lagging customer experience: This can be down to one thing: unsatisfied and unmotivated employees. A business may not have the right technology solution mix, or may not have invested in providing employees with the tools they need to provide a better service to their customers.

Tools such as Google Docs make a statement about the type of company you want to be. However, it’s crucial not to just ‘push out’ change to an organisation with little regard for training and employee involvement. Workers don’t appreciate being forced to use a new system for which they have had no training, and many, while excited about a new technology, have no idea how to use it correctly. It might also be wise to think of alternative training methods that don’t involve sitting in a room for hours trying to learn a new system all at once, such as a vetting assessment process that identifies a genuine need for a solution.

A robust learning calendar, established goals and key performance indicators for employees are the keys to a successful Worker Experience and improved customer service. The ability to access training through contextual learning, short videos, user adoption sites, and quick reference guides can also make a huge impact.

As businesses shift towards digital business models, their current infrastructure, employees and processes will have to adapt with them. Disregarding the power of existing cultural business values will have serious consequences for all involved, from the CEO down to the baseline employee, eventually reaching the customer. Finding the balance between improving the Worker Experience and improving the business model while maintaining a personally invested culture of employees is paramount. The effects that will have on the business include boosting efficiency, fostering innovation and streamlining the customer experience.

In recent years, IT departments have invested in a range of technology tools to support them in managing and resolving users’ issues. Many businesses are unaware that facilities management (FM) departments can benefit from the same tools to manage and deliver business services. In particular, the self-service portals and interfaces that IT departments are well used to can be easily adapted for FM service users.

By Grant Macdonald, Managing Partner, Fruition Partners UK.

In the last ten years, IT have developed their role from simply building systems to brokering services. As the complexity of the business world has increased, many facilities functions have also been required to respond to change. Despite some progress, there are still many facilities teams managing requests and work orders using a series of disconnected tools, such as email and spreadsheets, that are often supported by outdated processes.

The potential inefficiencies can impact the business, but to change this scenario, facilities can take a leaf from IT’s book and improve operations by embracing service management and self-service technology. This can have huge benefits, not least improving bottom-line profitability. Indeed, research conducted by independent research company Vanson Bourne for Fruition Partners found that organisations could be saving around £600,000 by extending IT service management technology to other functions, such as facilities, rather than purchasing new stand-alone technology for each department.

As providers of services, facilities and IT have quite a bit in common. Typically, when a staff member contacts the IT department, it’s because they want one of three things: something fixed, some help or something new. In other words, exactly the sort of reasons why they contact FM, whether it’s fixing broken furniture, booking a room, or adding a new employee to the gym membership.

The IT Service Management (ITSM) discipline has become adept at putting in place processes to address these questions with efficiency and accountability. These are just as relevant to facilities, and with the advent of cloud, technologies can be easily customised and implemented by other business areas. Combined with self-service tools, service management can be simplified across the entire business. Compare, for example, a request to IT for a new printer and a request to FM for a new chair: the workflows behind the requests follow the same process. If we disregard the IT ‘content’ of the request, it’s not too big a leap of imagination to apply the same technology to ‘process-heavy’ functions such as FM and IT.
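The printer-versus-chair comparison can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the data model of any real service management product: the `ServiceRequest` class, the four workflow stages and the requester name are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    """Hypothetical workflow stages shared by every department."""
    SUBMITTED = "submitted"
    APPROVED = "approved"
    FULFILLED = "fulfilled"
    CLOSED = "closed"


# Allowed transitions; the same table serves IT and FM requests alike.
NEXT_STATUS = {
    Status.SUBMITTED: Status.APPROVED,
    Status.APPROVED: Status.FULFILLED,
    Status.FULFILLED: Status.CLOSED,
}


@dataclass
class ServiceRequest:
    department: str  # "IT" or "FM": the only thing that differs is the content
    item: str
    requester: str
    status: Status = Status.SUBMITTED
    history: list = field(default_factory=list)

    def advance(self) -> Status:
        """Move the request to its next stage and record the stage it left."""
        if self.status not in NEXT_STATUS:
            raise ValueError("request is already closed")
        self.history.append(self.status)
        self.status = NEXT_STATUS[self.status]
        return self.status


printer = ServiceRequest("IT", "new printer", "alice")
chair = ServiceRequest("FM", "new chair", "alice")

# Both requests pass through exactly the same stages.
for request in (printer, chair):
    while request.status is not Status.CLOSED:
        request.advance()

print(printer.history == chair.history)
```

Because the department and item are just data on the request, one workflow engine and one self-service portal could in principle serve both teams, which is the point the comparison makes.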

A growing number of organisations are using service management solutions to create a single ‘point of contact’ for all staff to access facilities advice and support across the business. Particularly advanced businesses have integrated this set-up into a complete ‘concierge-style’ portal which provides access to other business services such as HR, legal, marketing and IT, all from one place. Employees can then use the self-service portal to do anything from making catering requests to reporting equipment failures, ordering new assets or booking their holidays. The service management technology behind self-service can also automate the systems used for managing projects such as property developments and upgrades, and provide management teams with advanced reporting on key indicators, including resource utilisation and response times to users.

By easing the workload and improving efficiency, and giving employees a means to liaise with facilities other than by lengthy and resource-heavy phone calls, or via an unstructured email trail, the facilities function can focus its staff on more ‘value-added’ work, rather than day-to-day admin. Moreover, the implementation of self-service technology also significantly boosts the approval ratings of the facilities function within an organisation. Users like the self-service elements, the fact that information is available 24 hours a day, and their ability to track queries and requests online. And because the facilities team has more readily-available information, they are able to respond more quickly to colleagues when personal interaction is needed.

The starting point for an FM team keen to pursue this approach and implement self-service technology is to talk to their IT function and find out if they are using service management software which can be adapted for FM. The next stage is to build a convincing business case that demonstrates the value of this kind of technology. This should be based on the cost savings that can be delivered, and the increased productivity that can be achieved by automating manual processes and reducing reliance on unstructured technology such as email.

By reducing repetitive admin tasks across the whole business, it’s been calculated that a large corporation of 5,000 employees could save the equivalent of 2,000 employees or 4 million hours. For some organisations that will mean a reduction in employee overheads; for others it’s a huge opportunity to reinvest precious human resource in generating value for the business, and getting more out of the investment in facilities across the board.

With the lower infrastructure cost, improved security and greater agility on offer compared to traditional client-server architectures and public clouds, it’s no great surprise that an increasing number of organisations are now looking to virtualised, automated data centres and hybrid clouds.

By Dr Malcolm Murphy, Technology Director, Western Europe, Infoblox.

In fact, a recent Forrester survey of enterprise infrastructure technology decision makers revealed that 59 per cent of businesses plan to adopt a hybrid cloud model in the next year.